Alleged Price and TGP of RTX 4070 Revealed by Leak

Nvidia allegedly compared GeForce RTX 4070 to RTX 3070 Ti in its marketing materials.

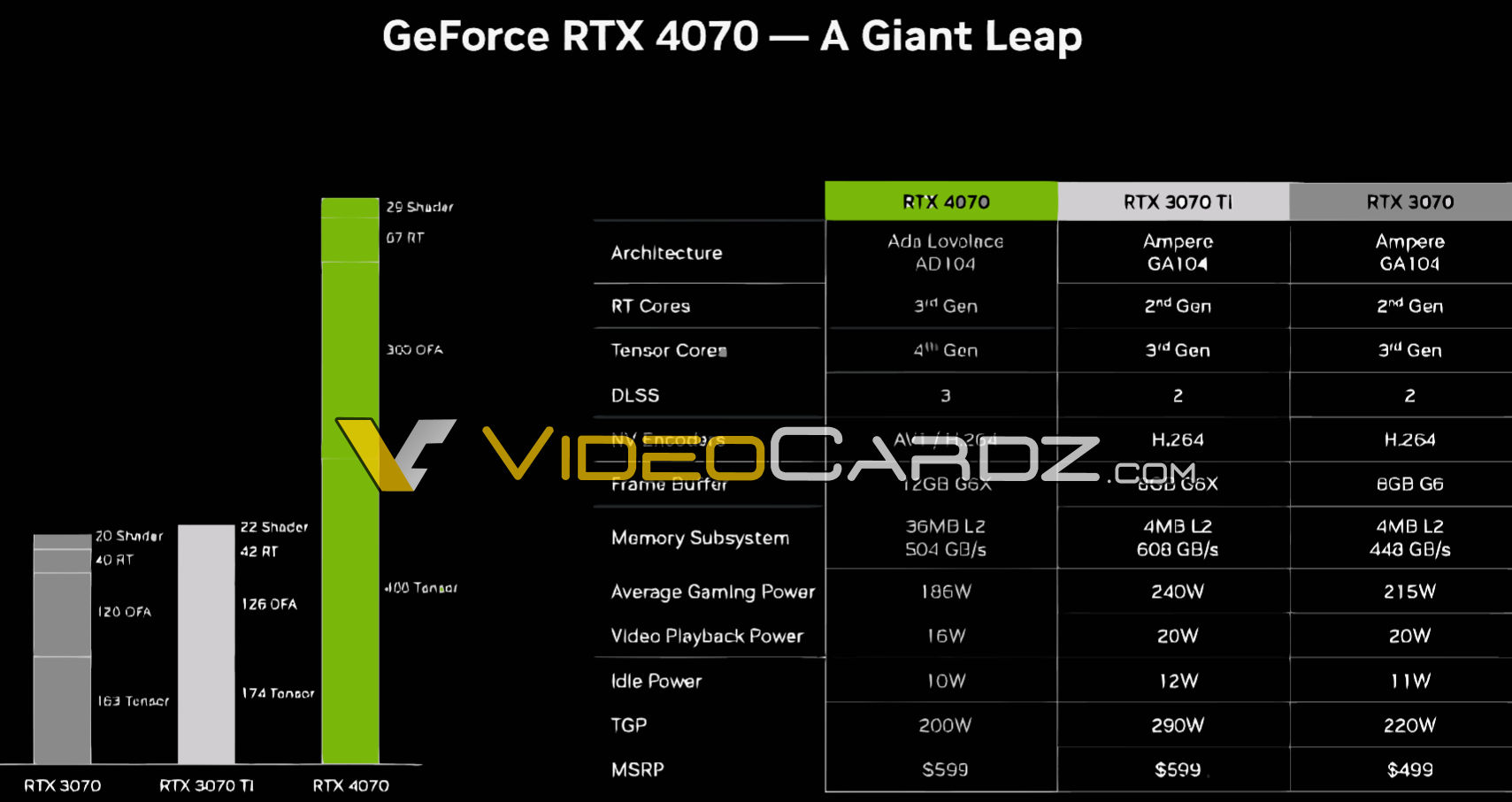

VideoCardz has published a leaked slide, presumably from Nvidia's final presentation of its GeForce RTX 4070 graphics card. The slide reveals the final price of the add-in board as well as some of its specifications. In particular, it says that the product will cost $599 and will have a total graphics power (TGP) of 200W.

Nvidia is expected to release its GeForce RTX 4070 graphics card in mid-April, but most of its specifications have been known for some time, albeit not from the company itself. The upcoming vanilla version of Nvidia's GeForce RTX 4070 shares the AD104 graphics processor with the RTX 4070 Ti, but has only 5888 CUDA cores operating at 1920 MHz – 2475 MHz. Just like the 'Titanium' version, the GeForce RTX 4070 features a 12GB GDDR6X memory subsystem with a 192-bit interface.

The new graphics card is said to have a total graphics power of 200W, roughly 30% lower than the TGP of Nvidia's GeForce RTX 4070 Ti (285W) and GeForce RTX 3070 Ti (290W). This would enable graphics card makers to shrink the cooling systems of the GeForce RTX 4070 and therefore improve compatibility with more compact computer cases, which is another reason why this board will likely join the ranks of the best graphics cards available. Idle power and video playback power of the new board will also be lower than those of its predecessors, according to the slide.

Despite having a heavily cut-down AD104 GPU, the GeForce RTX 4070 is expected to produce approximately 29 FP32 TFLOPS of computational power, which is comparable to that of the GeForce RTX 3080. However, the RTX 3080 possesses a 320-bit memory bus and an impressive peak bandwidth of 760 GB/s, which is substantially greater than the 504 GB/s bandwidth provided by AD104's 21 GT/s GDDR6X memory. In fact, even a GeForce RTX 3070 Ti has higher memory bandwidth (608 GB/s) than the newcomer. Meanwhile, the new graphics card is supposed to have a large L2 cache, which will likely compensate for a slower memory subsystem.
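The bandwidth and compute figures above follow from simple arithmetic, which the minimal sketch below reproduces. It assumes peak bandwidth equals bus width in bytes multiplied by the per-pin data rate, and that each CUDA core retires one fused multiply-add (two FP32 operations) per clock at the boost frequency. The function names are illustrative, and the 19 GT/s data rates used for the RTX 3080 and RTX 3070 Ti are an assumption that does not come from the article or the slide.

```python
def memory_bandwidth_gbs(bus_width_bits: int, data_rate_gtps: float) -> float:
    """Peak memory bandwidth in GB/s: bytes per transfer times transfers per second."""
    return bus_width_bits / 8 * data_rate_gtps


def fp32_tflops(cuda_cores: int, boost_clock_mhz: float) -> float:
    """Peak FP32 throughput in TFLOPS, assuming 2 FLOPs (one FMA) per core per clock."""
    return cuda_cores * 2 * boost_clock_mhz * 1e6 / 1e12


# (bus width in bits, data rate in GT/s)
# The 19 GT/s values are assumed here, not taken from the article.
cards = {
    "GeForce RTX 4070": (192, 21.0),
    "GeForce RTX 3080": (320, 19.0),
    "GeForce RTX 3070 Ti": (256, 19.0),
}

for name, (bus_bits, rate) in cards.items():
    print(f"{name}: {memory_bandwidth_gbs(bus_bits, rate):.0f} GB/s")

# Rumored RTX 4070 configuration: 5888 CUDA cores at a 2475 MHz boost clock
print(f"GeForce RTX 4070: {fp32_tflops(5888, 2475):.1f} FP32 TFLOPS")
```

Under these assumptions the sketch yields 504, 760, and 608 GB/s respectively, and about 29.1 TFLOPS for the RTX 4070, matching the figures quoted above. These are theoretical peaks and say nothing about how much a larger L2 cache offsets the narrower bus in practice.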

| Graphics Card | GPU | FP32 CUDA Cores | Memory Configuration | TBP | MSRP |
|---|---|---|---|---|---|
| GeForce RTX 4090 Ti | AD102 | 18176 (?) | 24GB 384-bit 24 GT/s GDDR6X (?) | 600W (?) | ? |
| GeForce RTX 4090 | AD102 | 16384 | 24GB 384-bit 21 GT/s GDDR6X | 450W | $1,599 |
| GeForce RTX 4080 | AD103 | 9728 | 16GB 256-bit 22.4 GT/s GDDR6X | 320W | $1,199 |
| GeForce RTX 4070 Ti | AD104 | 7680 | 12GB 192-bit 21 GT/s GDDR6X | 285W | $799 |
| GeForce RTX 4070* | AD104 | 5888 (?) | 12GB 192-bit 21 GT/s GDDR6X | 250W (?) | ? |
| GeForce RTX 4060 Ti* | AD106 | 4352 (?) | 8GB 128-bit 18 GT/s GDDR6 | 160W (?) | <$500? |
| GeForce RTX 3070 | GA104 | 5888 | 8GB 256-bit 14 GT/s GDDR6 | 220W | $499 |

One interesting wrinkle of the slide is that Nvidia compares its GeForce RTX 4070 graphics card to its previous-generation GeForce RTX 3070 and RTX 3070 Ti offerings. With its Ada Lovelace GPUs, Nvidia has been emphasizing that its latest GPUs can rival or surpass higher-class previous-generation offerings at a lower price. With its GeForce RTX 4070, it looks like Nvidia is betting on higher performance and lower power at the same launch price.

In any case, Nvidia has not officially announced its GeForce RTX 4070, and we cannot verify the authenticity of the slide. Therefore, take the information with a grain of salt for now.


Anton Shilov is a contributing writer at Tom’s Hardware. Over the past couple of decades, he has covered everything from CPUs and GPUs to supercomputers and from modern process technologies and latest fab tools to high-tech industry trends.


Why_Me

(Pending reviews of course) this card should be a decent one imo for those peeps looking to break into 1440P gaming.

InvalidError

> Why_Me said: (Pending reviews of course) this card should be a decent one imo for those peeps looking to break into 1440P gaming.

Plenty of people already do 1440p on much slower GPUs, just got to bump details down a peg or two from RT-Ultra-Nightmare, which still looks almost as good without the massively inflated performance and VRAM requirements.

atomicWAR

IF the 599 msrp is correct, the RTX 4070 will have ended up 50 dollars cheaper than I guessed it would cost, up to a 100 dollars cheaper than I was worried it could cost if I was wrong and still 100 dollars more expensive than it should actually be. At the end of the day I am not impressed BUT it's more sane pricing compared to the 80/70 TI series cards, sadly still leaving the 4090 feeling like the best bang for the buck this gen due to its large performance uplift to cost increase, which is still just weird to me for a high end card to slot in like that.

Edit: As noted below, in sheer terms of cost per frame the RTX 4070 Ti does indeed hold the "best bang for buck" title among the available 4000 series cards. By saying the 4090 feels like it has better bang for the buck I meant that for 100 dollars more on each you get 3090->4090 = 65% performance increase VS 3070 Ti->4070 Ti = 50% performance increase. Thus 100 dollars more for 65% increase feels more worth it compared to 100 dollars more for 50%. But the steep performance drop off for Nvidia cards as you go down the stack does predate the 4000 series by a fair number of generations.

KyaraM

> atomicWAR said: IF the 599 msrp is correct, the RTX 4070 will have ended up 50 dollars cheaper than I guessed it would cost, up to a 100 dollars cheaper than I was worried it could cost if I was wrong and still 100 dollars more expensive than it should actually be. At the end of the day I am not impressed BUT it's more sane pricing compared to the 80/70 TI series cards sadly still leaving the 4090 feeling like the best bang for the buck this gen which is still just weird to me for a high end card to slot in like that.

It might feel that way, but actually the 4070Ti leads in performance/dollar and comes close enough in performance/watt while having the overall lowest consumption of all the previous gen cards plus all relevant last gen cards, and coming very close to the other Ada cards:

https://www.techpowerup.com/review/palit-geforce-rtx-4070-ti-gamingpro-oc/33.html

https://www.techpowerup.com/review/palit-geforce-rtx-4070-ti-gamingpro-oc/40.html

So while it might feel like the 4090 has the better price/performance, that isn't actually correct. Especially since more and more 4070Ti crop up for below MSRP here where I live. The 4070 will most likely be even better price/performance and possibly better performance/watt as well. But we will have to wait for the 3rd party testing to be sure.

thisisaname

> atomicWAR said: IF the 599 msrp is correct, the RTX 4070 will have ended up 50 dollars cheaper than I guessed it would cost, up to a 100 dollars cheaper than I was worried it could cost if I was wrong and still 100 dollars more expensive than it should actually be. At the end of the day I am not impressed BUT it's more sane pricing compared to the 80/70 TI series cards sadly still leaving the 4090 feeling like the best bang for the buck this gen which is still just weird to me for a high end card to slot in like that.

Even if the MSRP is $599 I bet most cards are going to be priced higher than that.

Ar558

An MSRP of $599 will make the Ti irrelevant. I don't believe this number till I see it, $699 makes much more sense given nVidia's strategy.

atomicWAR

> KyaraM said: It might feel that way, but actually the 4070Ti leads in performance/dollar ....

I am aware, thus why I said feels like and not is (ie overall performance uplift compared to cost increase for each, 65% vs 50% for 100 US), though I could have been more clear on my why. I will edit my post... and until the RTX 4070Ti dropped the 4090 did indeed have a better price performance compared to the 4080, which was insane. Something I have not seen happen before in a flagship card. And despite beating the 4090 in bang for buck...the 4070Ti is still woefully overpriced, which was generally my point for all Nvidia GPUs this gen. Not to mention the performance of both the 4080 and 4070 Ti are also underpowered by about 10-15% each. IMHO at least...All in all I have been less than satisfied with this gen. But I also think Nvidia and AMD will course correct in time with prices, which could change the conversation considerably.

Giroro

If this is true, then I definitely won't buy it.

What's with Nvidia forcing every single product into the "no man's land" pricing between $500 and their halo product?

If you have $600 for something as completely unnecessary as "slightly prettier" gaming graphics (but only when viewed on a high end monitor), then you have $1200. If you have $1200, then price is no object.

If you want to sell us absurdly overpriced GPUs, then leather granddaddy should at least even pretend to give us a reason. Where are the games?

Everybody has already figured out that you can't make money with these GPUs. Streaming is rigged and mining is dead, so what does GPU performance even matter, at this point?

KyaraM

> atomicWAR said: I am aware thus why I said feels like and not is (ie overall performance uplift compared to cost increase for each 65% vs 50% for 100 US) though I could have been more clear on my why I will edit my post... and until the RTX 4070Ti dropped the 4090 did indeed have a better price performance compared to the 4080 which was insane. Something I have not seen happen before in a flagship card. And despite beating the 4090 in bang for buck...the 4070Ti is still woefully overpriced, which was generally my point for all Nvidia GPUs this gen. Not to mention the performance of both the 4080 and 4070 Ti are also underpowered by about 10-15% each. IMHO at least...All in all I have been less than satisfied with this gen. But I also think Nvidia and AMD will course correct in time with prices which could change the conversation considerably.

I won't say anything about it being overpriced or anything. I don't think a 3090/3090Ti level card is underpowered in any way, though, especially not for 800 USD new. However, the overpriced argument also applies to all AMD cards no matter what certain people might claim.

> oofdragon said: Did any time in history the 70 series not beat its 80Ti predecessor?

The 2070 didn't even get close to beating the 1080Ti. It barely beat the regular 1080 by a single digit percentage.

https://www.techspot.com/review/1727-nvidia-geforce-rtx-2070/

From what I dug up online, the 1070 was less than 5% better than the 980Ti and the 3070 again didn't quite reach the 2080Ti. So yes, plenty of times.

> Giroro said: If this is true, then I definitely won't buy it.
>
> What's with Nvidia forcing every single product into the "no man's land" pricing between $500 and their halo product?
>
> If you have $600 for something as completely unnecessary as "slightly prettier" gaming graphics (but only when viewed on a high end monitor), then you have $1200. If you have $1200, then price is no object.
>
> If you want to sell us absurdly overpriced GPUs, then leather granddaddy should at least even pretend to give us a reason. Where are the games?
>
> Everybody has already figured out that you can't make money with these GPUs. Streaming is rigged and mining is dead, so what does GPU performance even matter, at this point?

Your argument makes no sense. Someone willing or able to pay 800 USD might not be able or willing to pay 900, 1000, or especially 1200. That is utter bs. Also, with the current trend, with that stance you will never buy a GPU again, be it Nvidia or AMD. Who, I want to point out, is just as bad with their pricing. Biiiiiig surprise (not).