AMD Radeon R9 380X Nitro Launch Review

Tonga’s been around for more than a year now, and it’s taken all this time for it to finally be available for regular desktop PCs. Before now, this configuration was exclusively offered for a different platform. But does it still make sense today?

FHD (1920x1080) Gaming Results

AMD positions the Radeon R9 380X as a QHD/1440p graphics card. However, if we’re honest, most buyers will probably use it to drive the still-popular FHD resolution and happily max out their detail settings.

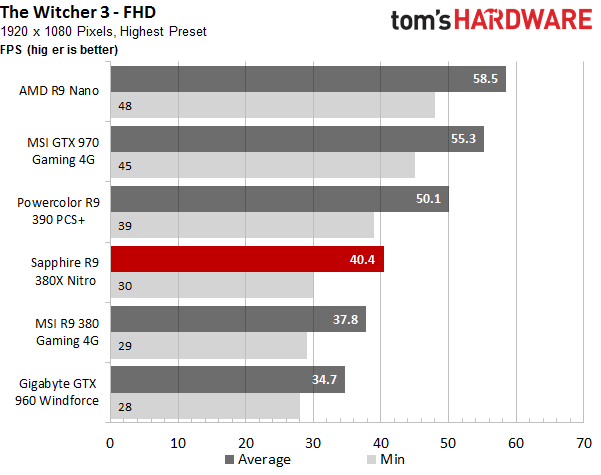

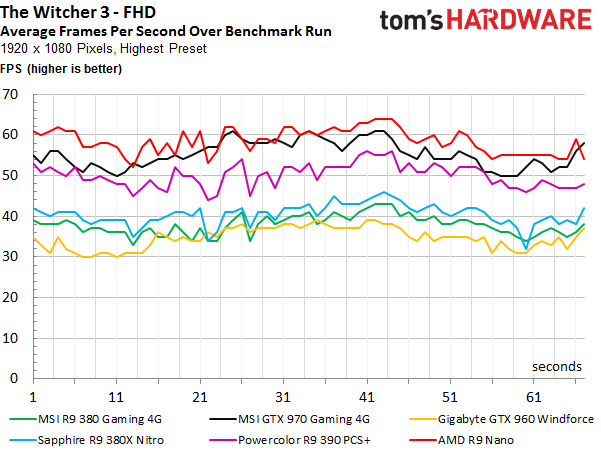

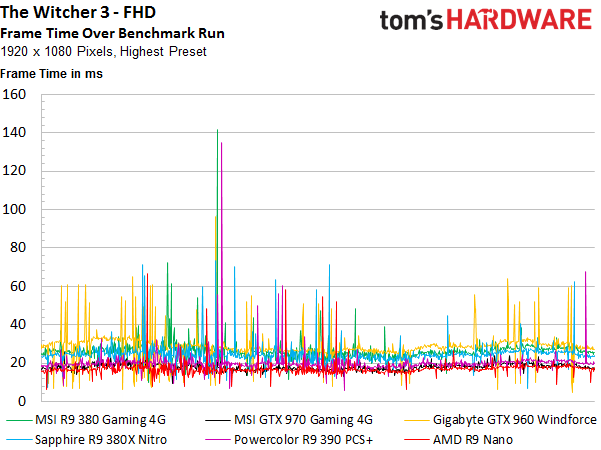

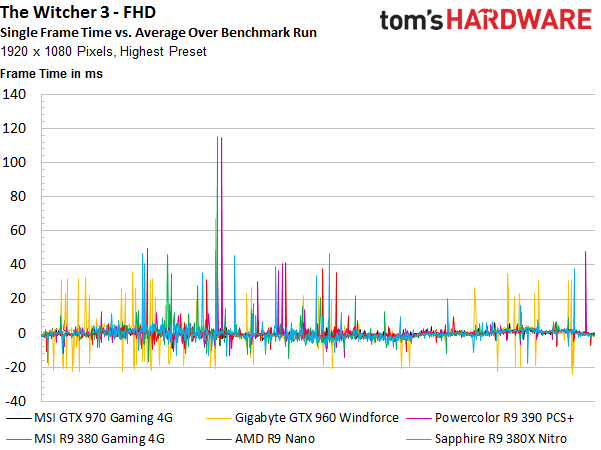

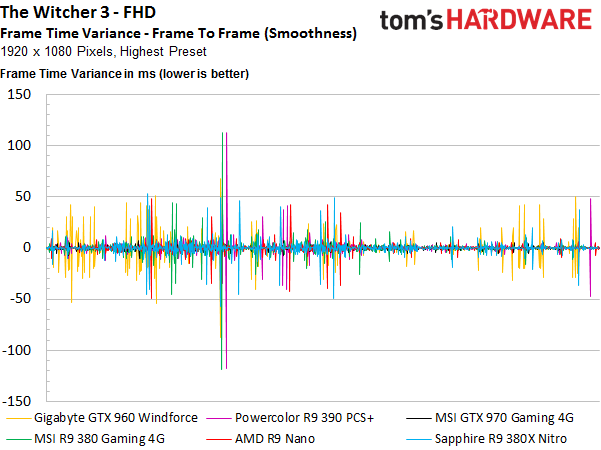

The Witcher 3: Wild Hunt

The difference between AMD's Radeon R9 380 and 380X is only about seven percent, which really isn’t that much. Both graphics cards average close to 40 FPS, and it's really hard to tell them apart subjectively in the real world. Both cards would, however, benefit from slightly lower detail settings.
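For readers who want to reproduce the percentage leads quoted on this page, the arithmetic can be sketched as follows. This is a minimal illustration, not our benchmark tooling: the function names are made up for the example, and the two FPS values are hypothetical placeholders chosen to land near the figures mentioned above, not numbers read from the charts.

```python
def relative_lead_percent(faster_fps: float, slower_fps: float) -> float:
    """Lead of the faster card, as a percentage of the slower card's average FPS."""
    if slower_fps <= 0:
        raise ValueError("slower_fps must be positive")
    return (faster_fps - slower_fps) / slower_fps * 100.0


def frame_time_ms(fps: float) -> float:
    """Average time per frame in milliseconds for a given average FPS."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return 1000.0 / fps


# Hypothetical averages (assumptions for illustration only).
r9_380_fps = 40.0
r9_380x_fps = 42.8

lead = relative_lead_percent(r9_380x_fps, r9_380_fps)
saved_ms = frame_time_ms(r9_380_fps) - frame_time_ms(r9_380x_fps)

print(f"lead: {lead:.1f} percent, frame-time difference: {saved_ms:.2f} ms")
```

Expressed as frame time, a single-digit percentage lead at roughly 40 FPS amounts to a millisecond or two per frame, which helps explain why the two cards are hard to tell apart subjectively.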

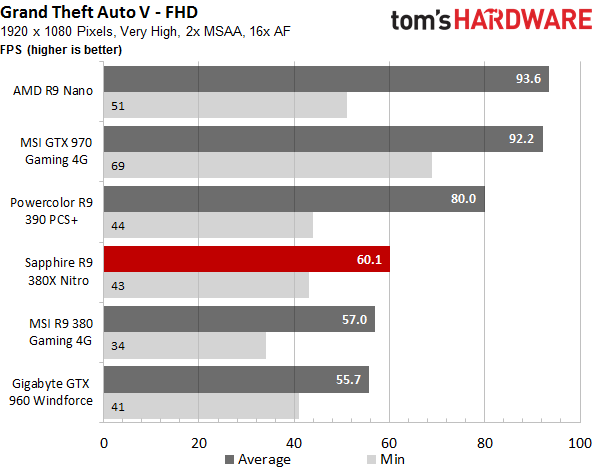

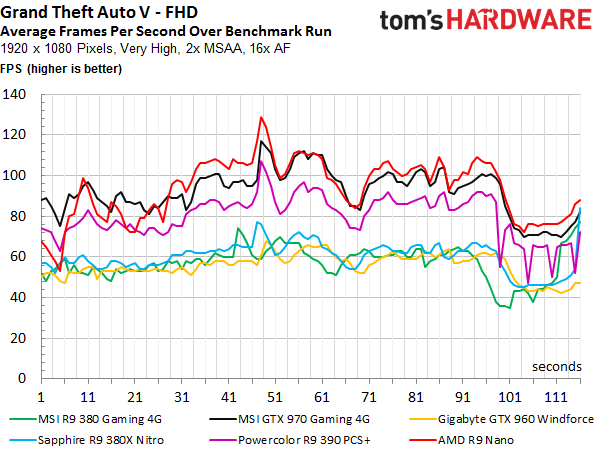

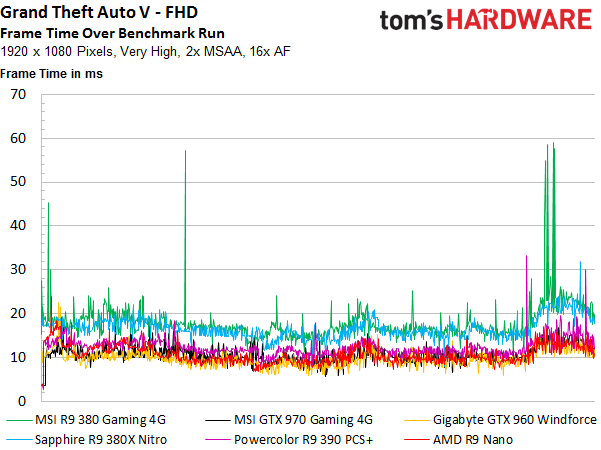

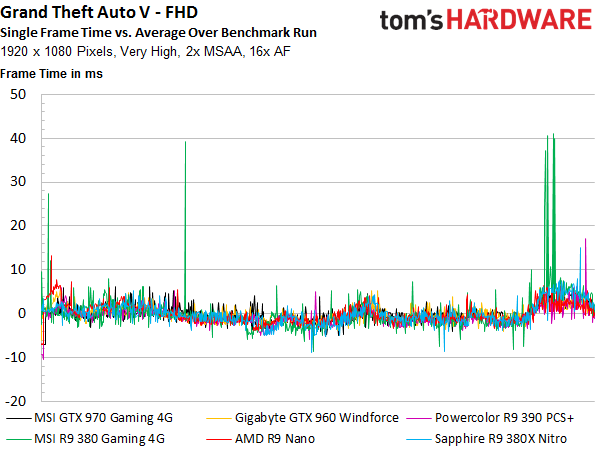

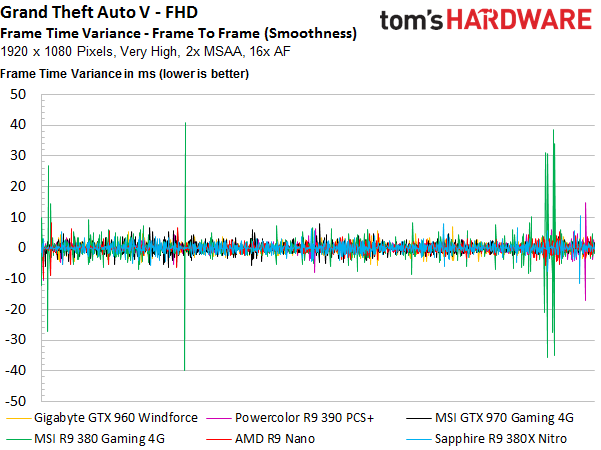

Grand Theft Auto V

New game, same results? Almost, but not quite; the new graphics card’s lead shrinks to five percent. Both cards do manage to produce playable frame rates, even though most enthusiasts would prefer significantly better performance. You'd want to dial back graphics quality to achieve this.


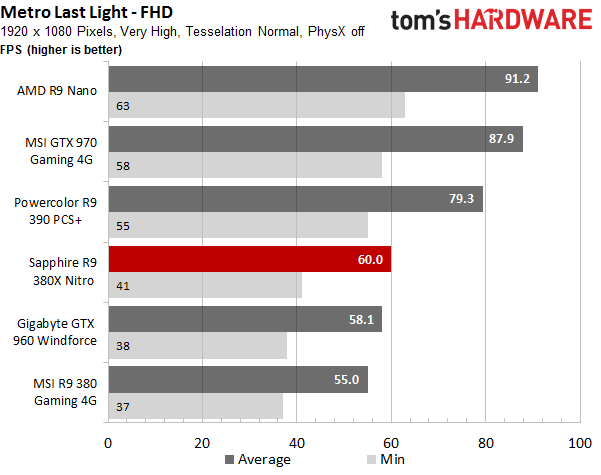

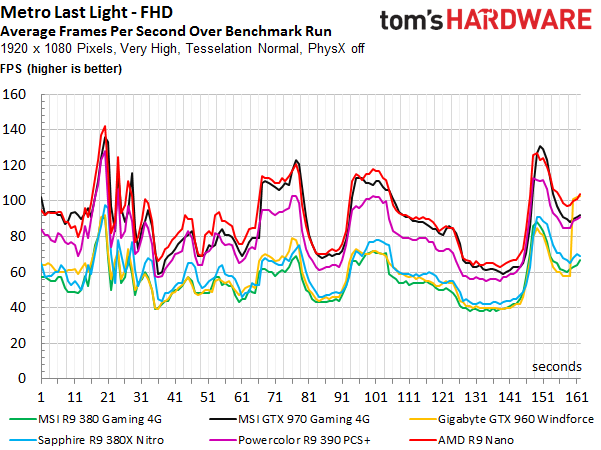

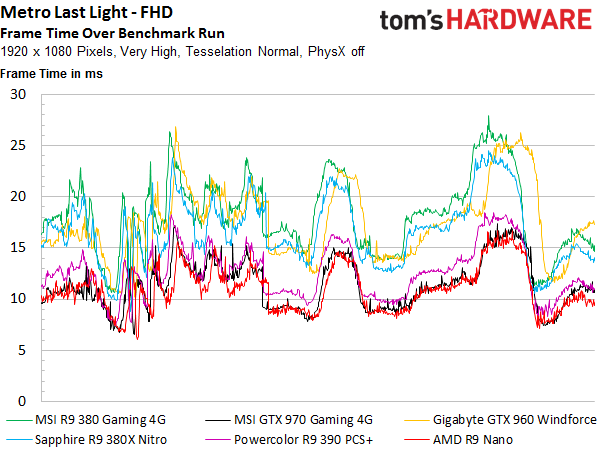

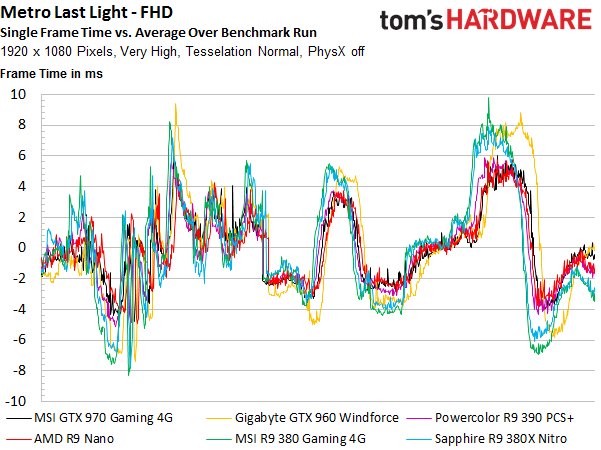

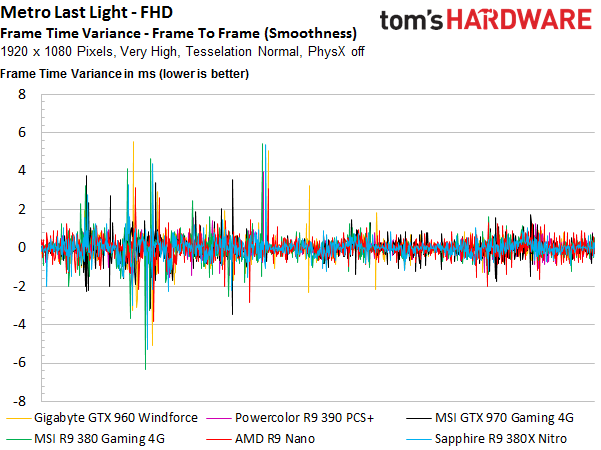

Metro: Last Light

This title is also a classic hardware benchmark, since it heavily features tessellation. AMD’s latest pulls ahead, managing a more comfortable nine percent lead. It’s interesting to see that the GeForce GTX 960, purportedly the weakest card on paper, manages to secure a position in between AMD’s two contenders.

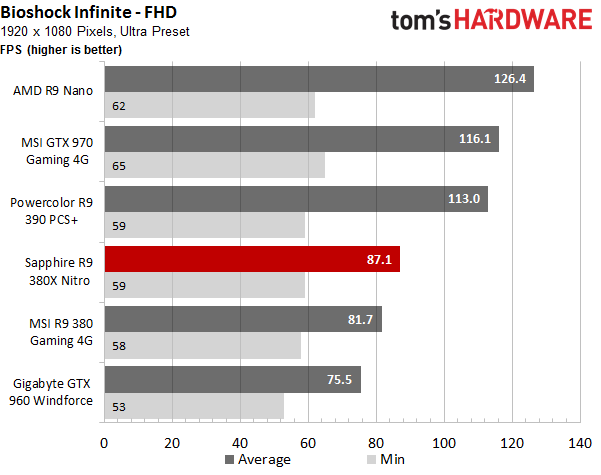

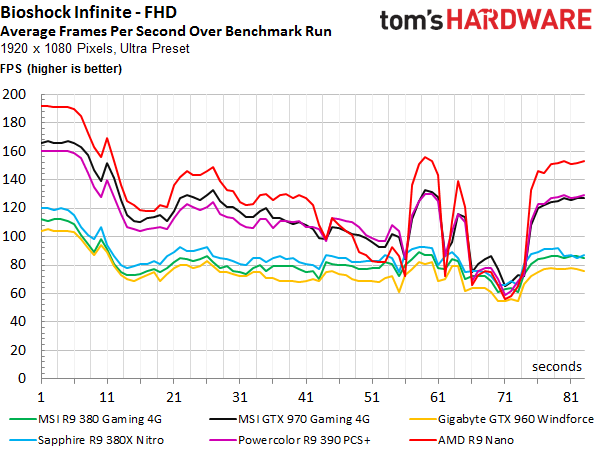

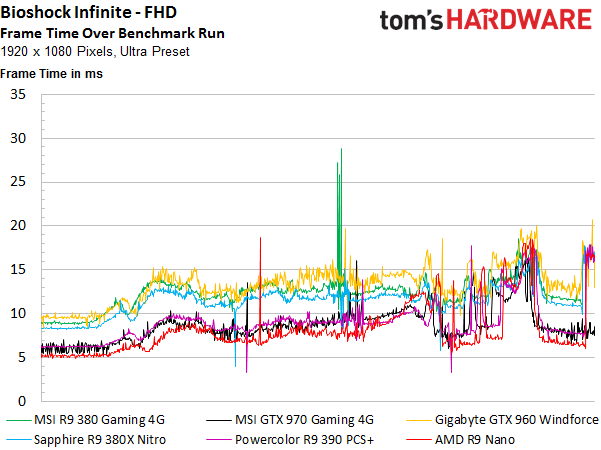

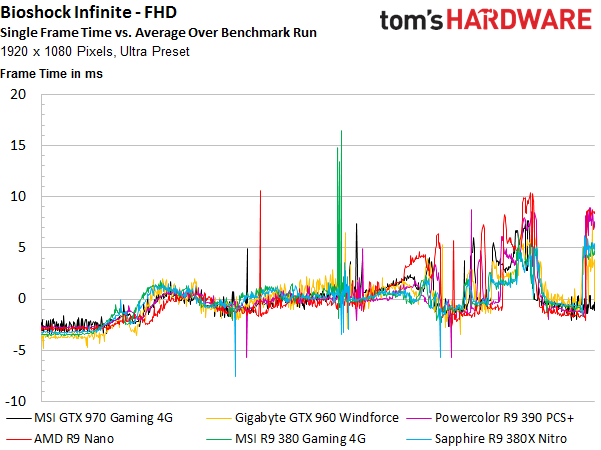

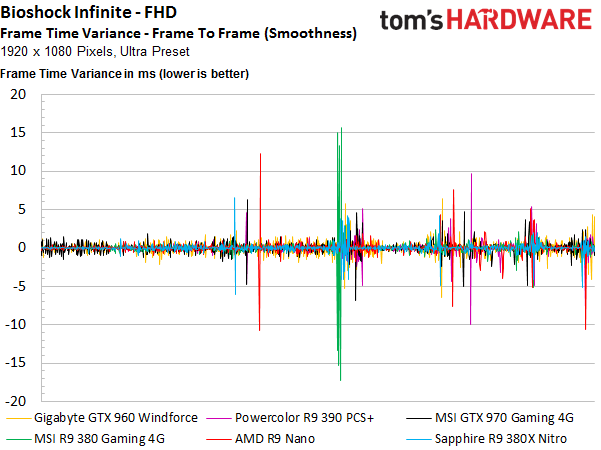

Bioshock Infinite

Now what? AMD’s Radeon R9 380X beats its smaller sibling by six percent. If you think that's subtle, just wait until you actually play the games we're testing. You won’t notice the difference at all.

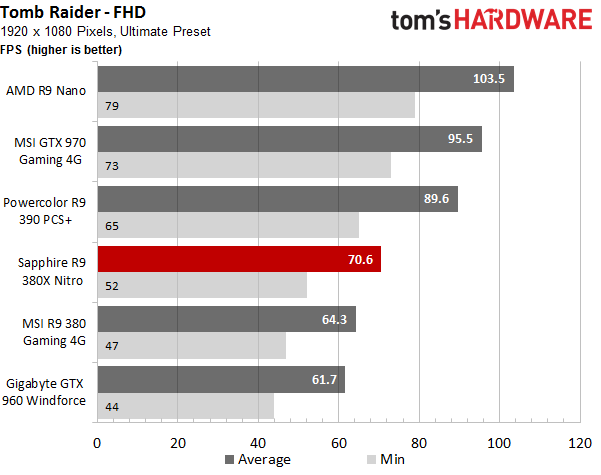

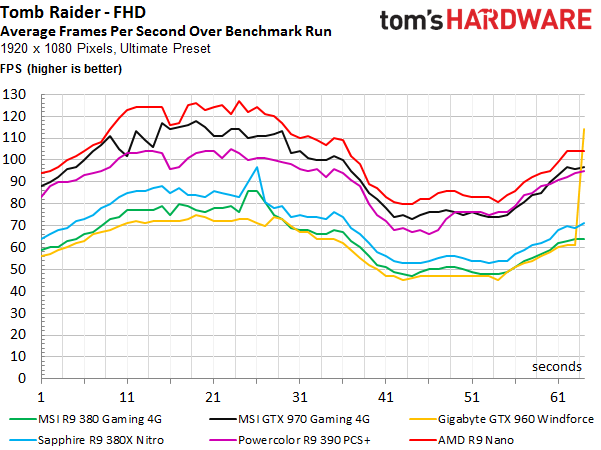

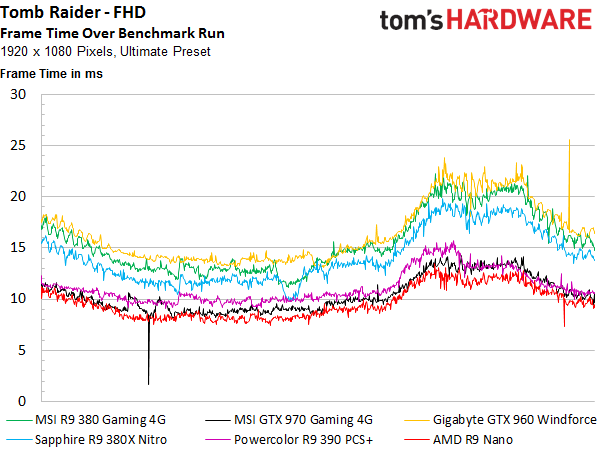

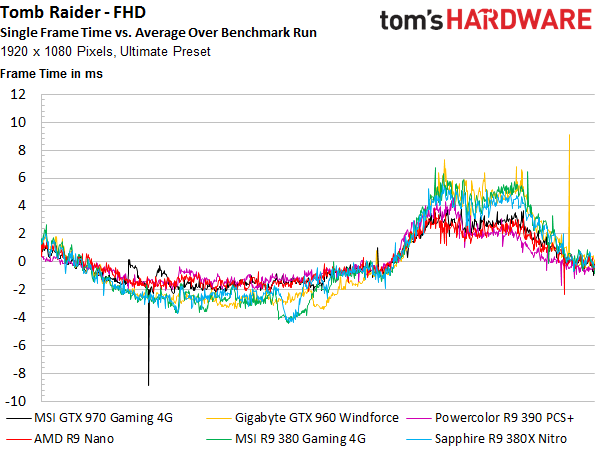

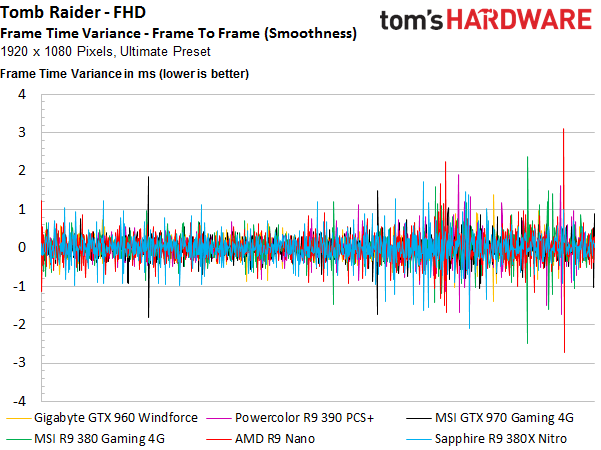

Tomb Raider

Tomb Raider is one of AMD’s flagship titles. Both of the company's primary contenders fare well in it. For the first time, the Radeon R9 380X manages a double-digit lead over the X-less 380 (a full 10 percent). However, this difference is still barely noticeable when you sit down to play. Elsewhere, AMD's Radeon R9 390 plays in a league of its own, and Nvidia’s GeForce GTX 960 is left in the other cards’ dust.

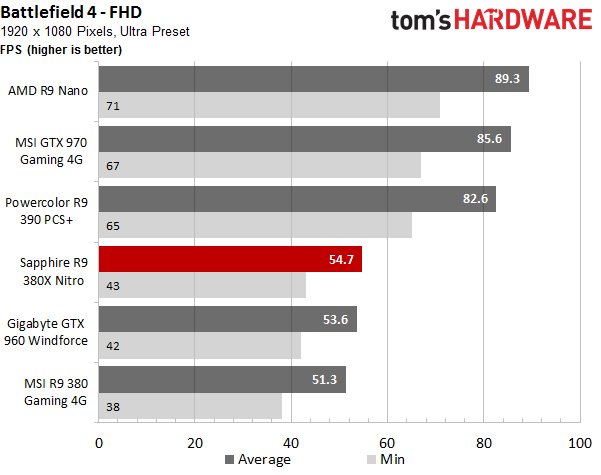

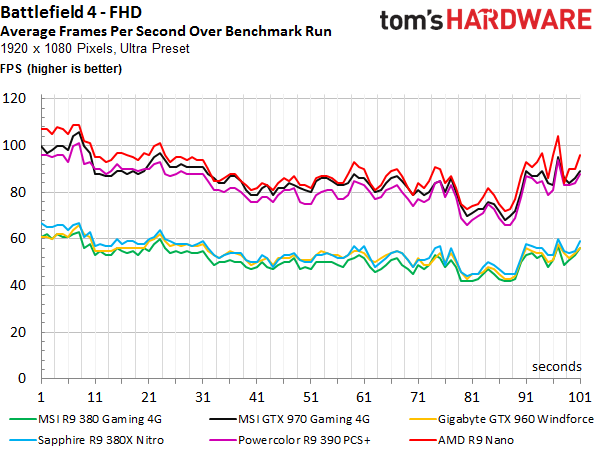

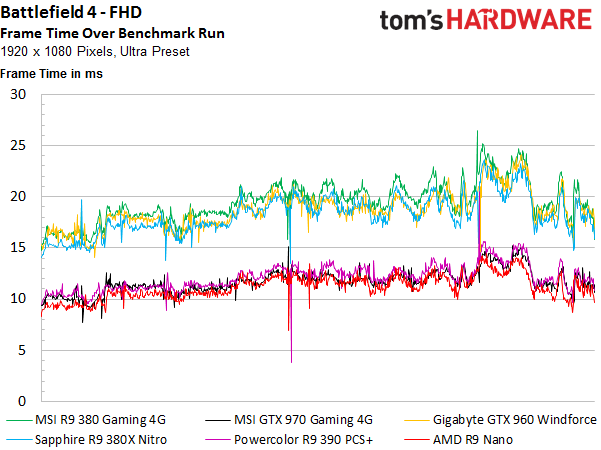

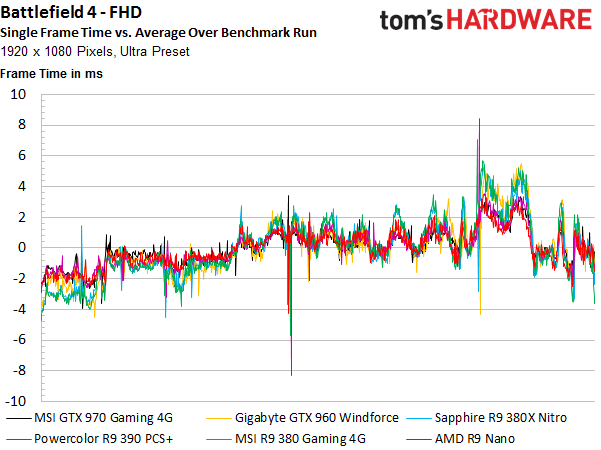

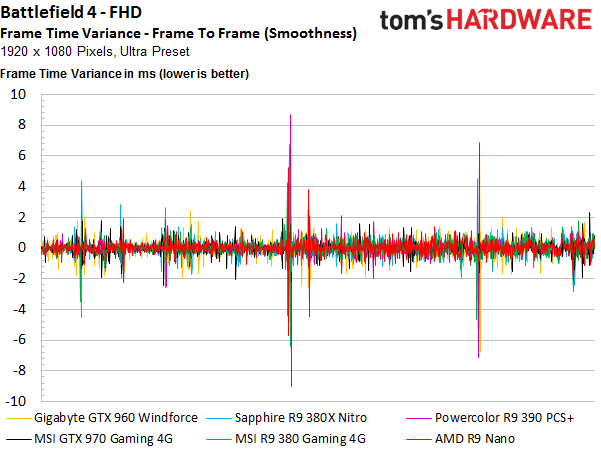

Battlefield 4 (Campaign)

Battlefield 4 has seen many patches, and the drivers should be perfectly optimized for it. Consequently, this game is still worth a look. Nvidia’s GeForce GTX 960 manages to beat AMD’s Radeon R9 380 and is, in turn, beaten by the 380X. Once again, though, a six percent difference is of no consequence in a subjective gaming comparison.


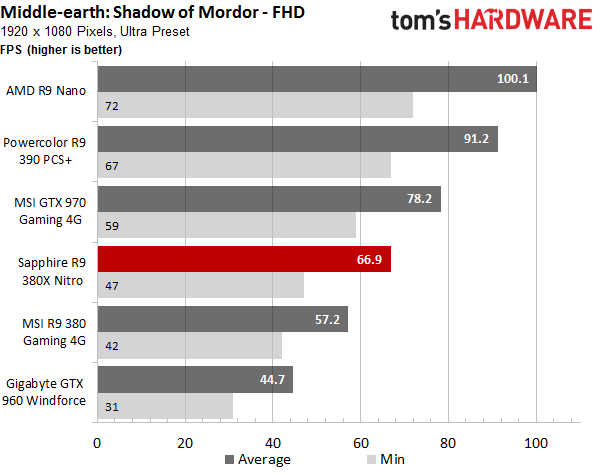

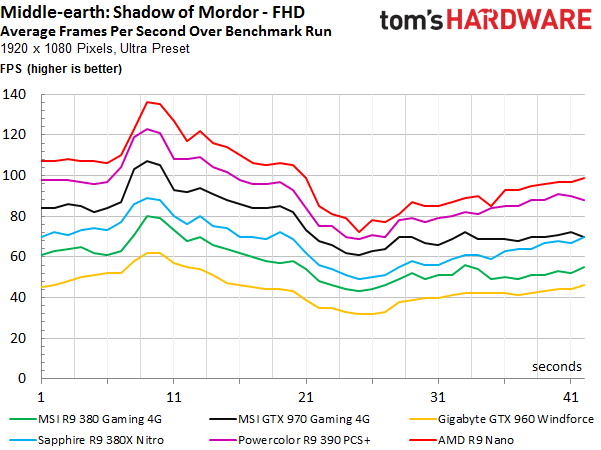

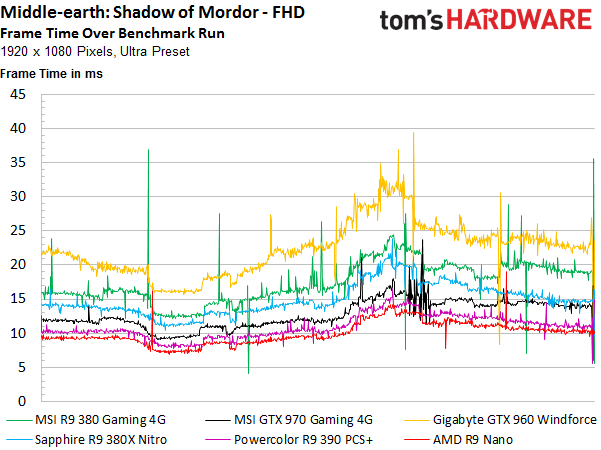

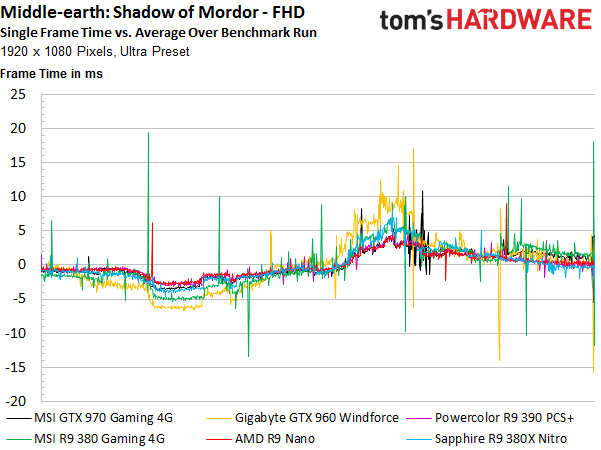

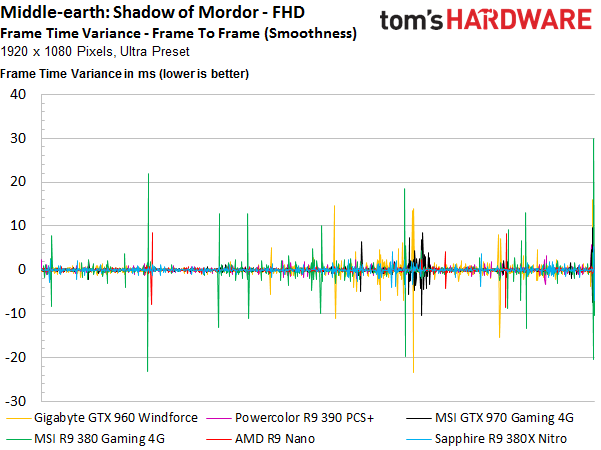

Middle-Earth: Shadow of Mordor

We finally get a look at what happens when Tonga faces a real challenge and the driver does its part. AMD’s newest card pulls ahead of its stablemate by 16 percent, yielding our first impressive result. Nvidia’s GeForce GTX 960 can’t keep up with either of its main competitors.

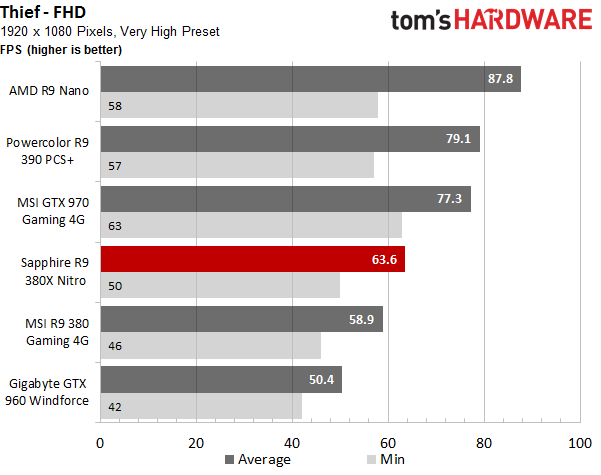

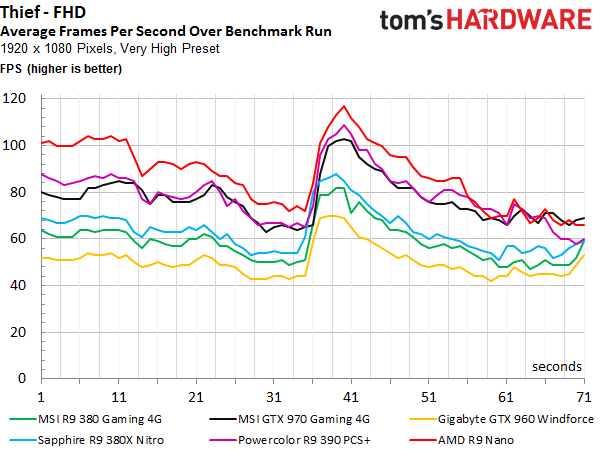

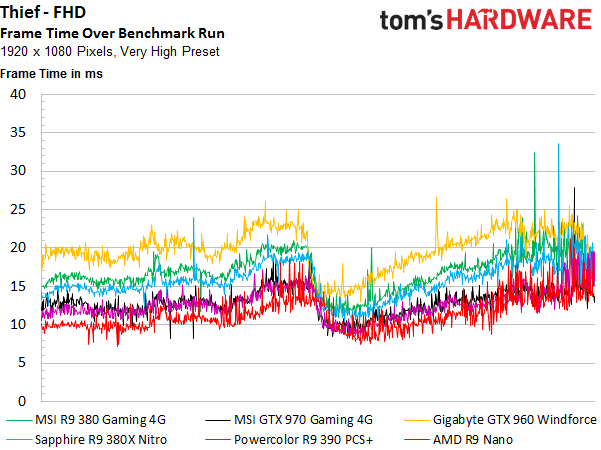

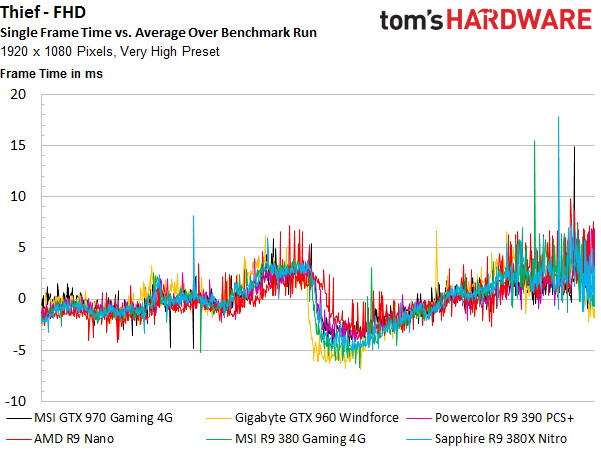

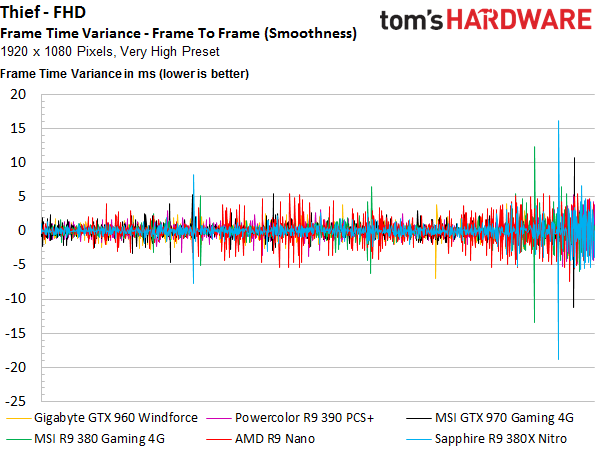

Thief

Things get more challenging again, with power consumption going up to the level we saw running Metro: Last Light. When the dust settles, AMD’s Radeon R9 380X comes out ahead by eight percent. Once again, this is barely noticeable in a real-world situation. The frame-time curves are very similar, after all.

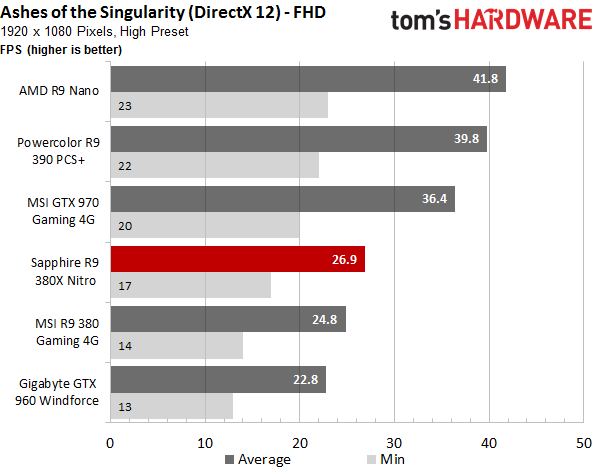

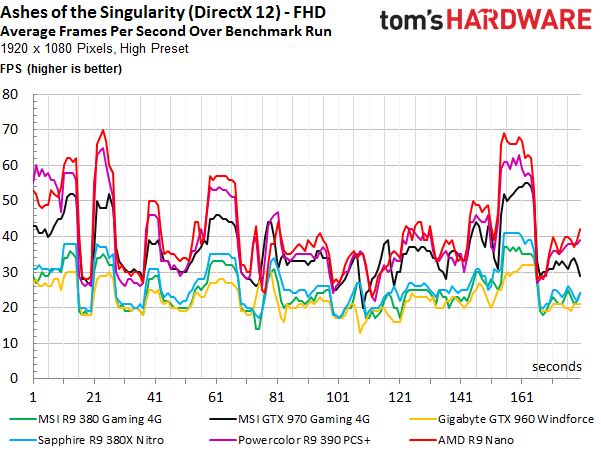

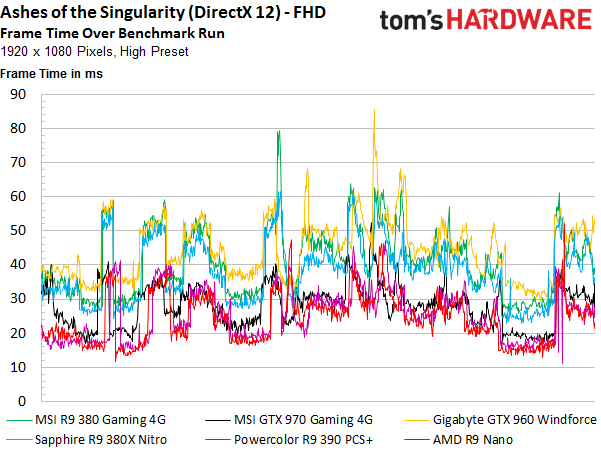

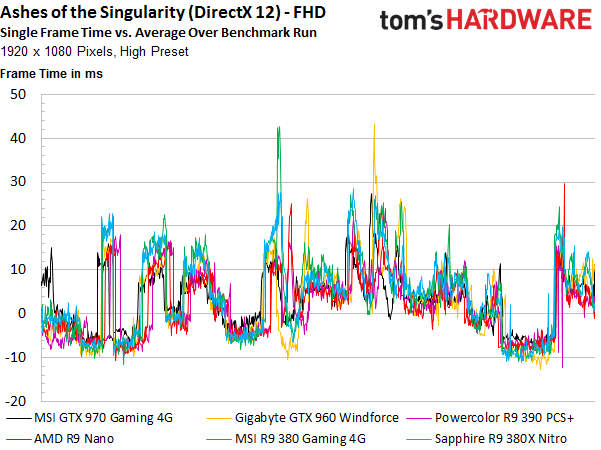

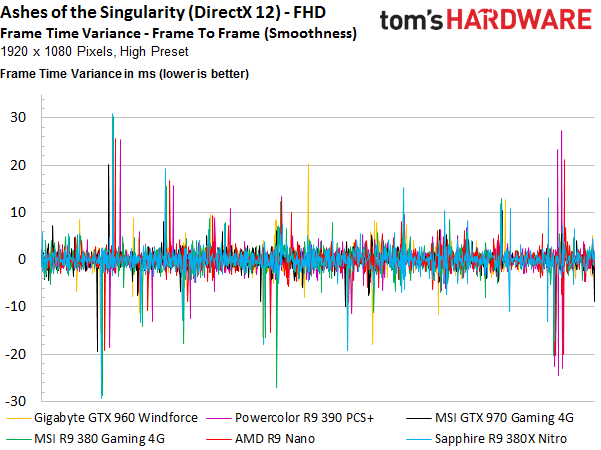

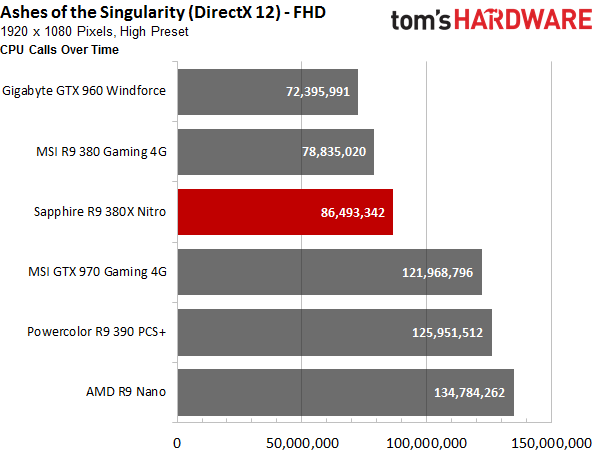

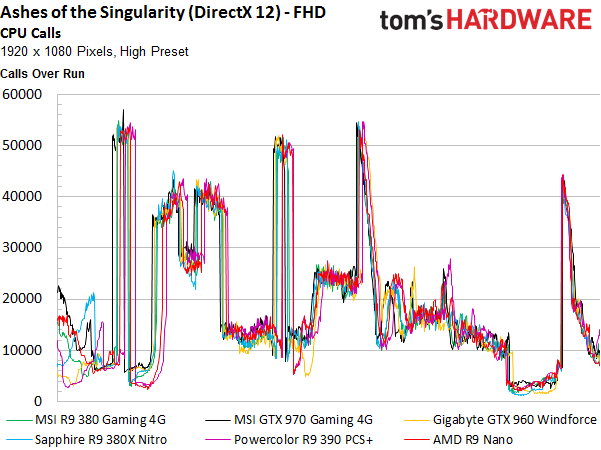

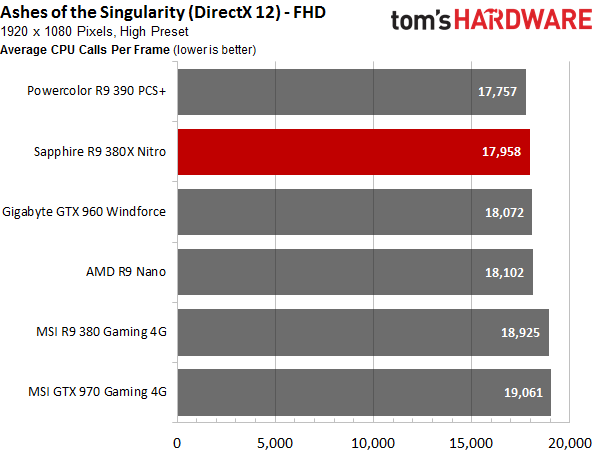

Ashes of the Singularity

Since there are really no mature, or even finished, DirectX 12 games on the market, we had to go with the pre-beta build of Ashes of the Singularity. Consequently, consider the results subject to change. There's just not enough optimization in place yet. At least we'll get some idea of where performance may stand in the future. Ark: Survival Evolved would have been nice to test, but because its DirectX 12 patch kept getting pushed back, we had to skip it.

The individual frames' render times from the different views are interesting. The total rendering time is consistent with how demanding the benchmark scenes are.

We programmed our own interpreter that automatically analyses the log files and gives us the number of CPU calls and a ratio of the frames that were actually rendered.
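The kind of summary described above could be sketched roughly like this. It is only an illustration under stated assumptions, not the tool we actually used: it assumes the log has already been parsed into one record per frame, and the `FrameRecord` type, its field names, and the `summarize` function are all hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable, Tuple


@dataclass
class FrameRecord:
    """One frame from an already-parsed log (hypothetical fields)."""
    cpu_calls: int   # CPU calls issued for this frame
    rendered: bool   # True if the frame was actually rendered


def summarize(records: Iterable[FrameRecord]) -> Tuple[int, float]:
    """Return (total CPU calls, share of frames that were actually rendered)."""
    frames = list(records)
    total_calls = sum(frame.cpu_calls for frame in frames)
    rendered = sum(1 for frame in frames if frame.rendered)
    ratio = rendered / len(frames) if frames else 0.0
    return total_calls, ratio


# Made-up sample data, purely to show the call.
sample = [
    FrameRecord(cpu_calls=1200, rendered=True),
    FrameRecord(cpu_calls=1150, rendered=True),
    FrameRecord(cpu_calls=1300, rendered=False),
    FrameRecord(cpu_calls=1250, rendered=True),
]

total_calls, rendered_ratio = summarize(sample)
print(f"CPU calls: {total_calls}, rendered frames: {rendered_ratio:.0%}")
```

Keeping the parsing step separate from the summary means the same function would work regardless of how a given benchmark build formats its log.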

Bottom Line

With few exceptions, the R9 380X Nitro provides a good gaming experience at FHD using the highest settings. Enthusiasts who prefer high frame rates at or above their monitor’s native refresh rate need to dial down the quality settings, though.

Really, there's not much difference between AMD’s Radeon R9 380X and the older X-less version when it comes to real-world gaming. Both of the cards we tested came overclocked from the factory and didn’t offer much room for further tuning, so this is really all of the performance you'll get from them.

Fortunately for AMD, Nvidia doesn’t really have a competing product in this category. The GeForce GTX 960 is just too slow, and the 970 is significantly more expensive. AMD’s new Radeon R9 380X is positioned right in the middle of that gap, whereas the 380 is a bit closer to Nvidia’s GeForce GTX 960. Its price is competitive with the GeForce as well, though.


Igor Wallossek wrote a wide variety of hardware articles for Tom's Hardware, with a strong focus on technical analysis and in-depth reviews. His contributions have spanned a broad spectrum of PC components, including GPUs, CPUs, workstations, and PC builds. His insightful articles provide readers with detailed knowledge to make informed decisions in the ever-evolving tech landscape.

-

ingtar33 so full tonga, release date 2015; matches full tahiti, release date 2011.

so why did they retire tahiti 7970/280x for this? 3 generations of gpus with the same rough number scheme and same performance is sorta sad. -

Eggz Seems underwhelming until you read the price. Pretty good for only $230! It's not that much slower than the 970, but it's still about $60 cheaper. Well placed. -

chaosmassive been waiting for this card review, I saw photographer fingers on silicon reflection btw ! -

Onus Once again, it appears that the relevance of a card is determined by its price (i.e. price/performance, not just performance). There are no bad cards, only bad prices. That it needs two 6-pin PCIe power connections rather than the 8-pin plus 6-pin needed by the HD7970 is, however, a step in the right direction.

-

FormatC "I saw photographer fingers on silicon"

I know, this are my fingers and my wedding ring. :P

Call it a unique watermark. ;) -

psycher1 Honestly I'm getting a bit tired of people getting so over-enthusiastic about which resolutions their cards can handle. I barely think the 970 is good enough for 1080p.

With my 2560*1080 (to be fair, 33% more pixels) panel and a 970, I can almost never pull off ultimate graphic settings out of modern games, with the Witcher 3 only averaging about 35fps while at medium-high according to GeForce Experience.

If this is already the case, give it a year or two. Future proofing does not mean you should need to consider sli after only 6 months and a minor display upgrade. -

Eggz 16976217 said: Honestly I'm getting a bit tired of people getting so over-enthusiastic about which resolutions their cards can handle. I barely think the 970 is good enough for 1080p.

With my 2560*1080 (to be fair, 33% more pixels) panel and a 970, I can almost never pull off ultimate graphic settings out of modern games, with the Witcher 3 only averaging about 35fps while at medium-high according to GeForce Experience.

If this is already the case, give it a year or two. Future proofing does not mean you should need to consider sli after only 6 months and a minor display upgrade.

Yeah, I definitely think that the 980 ti, Titan X, FuryX, and Fury Nano are the first cards that adequately exceed 1080p. No cards before those really allow the user to forget about graphics bottlenecks at a higher standard resolution. But even with those, 1440p is about the most you can do when your standards are that high. I consider my 780 ti almost perfect for 1080p, though it does bottleneck here and there in 1080p games. Using 4K without graphics bottlenecks is a lot further out than people realize.

-

ByteManiak everyone is playing GTA V and Witcher 3 in 4K at 30 fps and i'm just sitting here struggling to get a TNT2 to run Descent 3 at 60 fps in 800x600 on a Pentium 3 machine