Battlefield 4 Beta Performance: 16 Graphics Cards, Benchmarked

We spent our weekend playing the Battlefield 4 multiplayer beta and captured a ton of performance data across lots of PC hardware. Does your system have what it takes to handle this title? The game comes out this month, so read on and find out!

Game Engine, Image Quality, And Settings

Again, Battlefield 4 employs Frostbite 3, the newest version of Digital Illusions CE's (DICE's) game engine for next-generation consoles and the PC. While it makes its commercial debut with Battlefield 4, Frostbite 3 will also power Need for Speed: Rivals later this year, in addition to the next installments of the Dragon Age, Mass Effect, and Star Wars: Battlefront franchises.

Compared to its predecessor, Frostbite 3 features higher-resolution textures, particle effects, and changes to tessellation, according to the company's feature video. A new networked water feature ensures that all players see the same waves at the same time, allowing small naval craft to hide behind swells in rough seas.

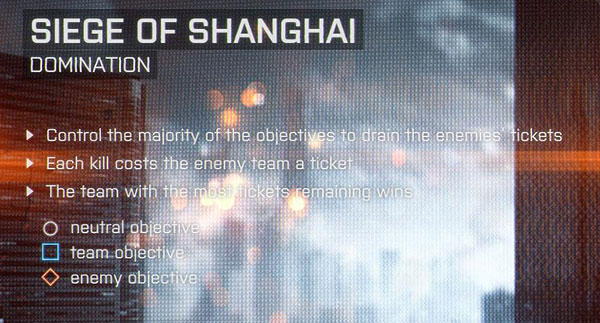

First, we tested the Low, Medium, High, and Ultra detail presets and found that texture detail on Low appeared to depend on the game type. We noticed higher-resolution textures on the Low setting in domination mode than in conquest, even on the same Siege of Shanghai map. It's not clear whether this is a beta glitch or the result of the game dynamically allocating resources based on the number and size of models in the map.

We chose to benchmark domination mode because of its higher texture resolution on the Low setting. We didn't notice a significant performance hit when shifting between the Medium and High presets, so we tested only the Low (MSAA off, AA Deferred off, Ambient Occlusion off), High (MSAA off, AA Deferred High, HBAO enabled), and Ultra (4x MSAA, AA Deferred High, HBAO enabled) presets.

The challenge of benchmarking a multiplayer game is that every run is potentially different, altering the load from one test to the next. For this reason, we performed our measurements on servers with latencies of 40 ms or less and no other players. Populated servers are likely to impose heavier CPU loads, but we needed to eliminate this variable from our testing. The good news is that when we compared our numbers to runs on servers with more people on them, frame rates didn't change noticeably.



Don Woligroski is a former senior hardware editor for Tom's Hardware. He has covered a wide range of PC hardware topics, including CPUs, GPUs, system building, and emerging technologies.

-

corvetteguy1994 My system is good to go!

****EDIT BY TOM'S HARDWARE****

Sorry, corvetteguy, you're the first, so I'm going to hijack your post to answer some common questions:

- Why didn't you mention Mantle? I probably *should* have mentioned it, but at this point it seems a little early. We don't know much about it, and we don't even know exactly when it arrives. Rest assured, when Mantle rolls out we will cover it!

- Why did you use a Titan in the CPU tests instead of the dual-GPU 690 or 7990? Dual-GPU performance can be tricky, and without FCAT working, I didn't want to report potentially pie-in-the-sky FRAPS numbers that are difficult to verify. Titan is the fastest single-GPU card we have.

- Why no FX-6000 CPU? We benched the FX-4170 and FX-8350. The FX-6000 will land in between, and there wasn't a colossal spread, so it seems pretty straightforward.

- For the love of everything good and pure, why did you use IE? Haha! Lots of comments on this. I used it because it was there. Remember, we clean install for our benchmarks, so unless the test involves browsers, we don't bother spending time installing anything else. For the record, I feel dirty and violated having opened the software, but you should all know that my personal PC has both Firefox and Chrome installed. :)

Hope that clarifies things!

- Don Woligroski

****END OF EDIT BY TOM'S HARDWARE****

itzsnypah Why did you use a Titan for the CPU benchmarks when you have the GTX 690 / HD7990 delivering ~30% more FPS?

slomo4sho Any particular reason why only the 2500K was overclocked in the CPU benchmarks and why the FX-4170 was benchmarked in place of the 4300 or 6300?

BigMack70 Would love to see some more detailed CPU benchmarks on a full 64-man conquest server once the game comes out... from some other data out there, it looks possible that BF4 multiplayer is the first game to actually benefit from Hyper-Threaded i7s over their i5 counterparts.

In 64-man conquest games, doing a FRAPS benchmark of an entire 30-minute round, I got a minimum frame rate of 42, an average of 74, and a max of 118 on my rig (4.8 GHz 2600K || 780 SLI @ 1100/1500 || 16GB DDR3-2133 CL11) at 1440p with all settings maxed and 120 FOV.

Also interesting to see 2GB cards struggling at high res in this game. I really didn't think we'd see that so soon, given that the 780/Titan/7950/7970 are the only cards yet released with >2GB standard memory.

loops I have a 2500K and a 7870 XT (7930). As long as I don't max out AA, I tend to be able to play at 45-50 fps with a mix of High/Ultra at 1080p on a 24" screen.

But no matter what, each time that main building is blown up, I lose at least 5 fps for the rest of the round and get big fps/lag spikes.

IMO you want a 7970/280X and a quad-core to be able to play smoothly.

Also, I hear a lot about VRAM... what is the feedback on 2 GB vs. 3 GB?

smeezekitty I think they focused too much on the bottom-end cards (6450, 210). I think anybody with less than a 6670 probably won't be buying BF4.

I also wish they had tested a Radeon card and a GeForce card considered equivalent, to see how performance compares by brand.

nevilence I have a 7770 and an i5, and it runs pretty cleanly on High. I wouldn't want to bump it up to Ultra, though; that would likely suck.

slomo4sho (quoting another reader): "Weird that there is absolutely no mention of Mantle when BF4 is going to be the first game to implement it."

Considering that Mantle won't be available until December, why would it be mentioned? Especially since none of the "new" AMD GPUs were included in the benchmarks...