Nvidia GeForce GTX 1070 Ti 8GB Review: Vega In The Crosshairs

A Closer Look At MSI's GeForce GTX 1070 Ti Titanium 8G

All of the hardware in MSI's Titanium line-up follows the same general design principles. Most obviously, it's dominated by matte silver surfaces. Aesthetically similar motherboards are already available, and now we have a matching graphics card to go with them.

We have to wonder: does the GeForce GTX 1080 Gaming X's cooler work just as well for the lower-end 1070 Ti, or is its thermal performance overkill for a 180W TDP?

Specifications

Exterior

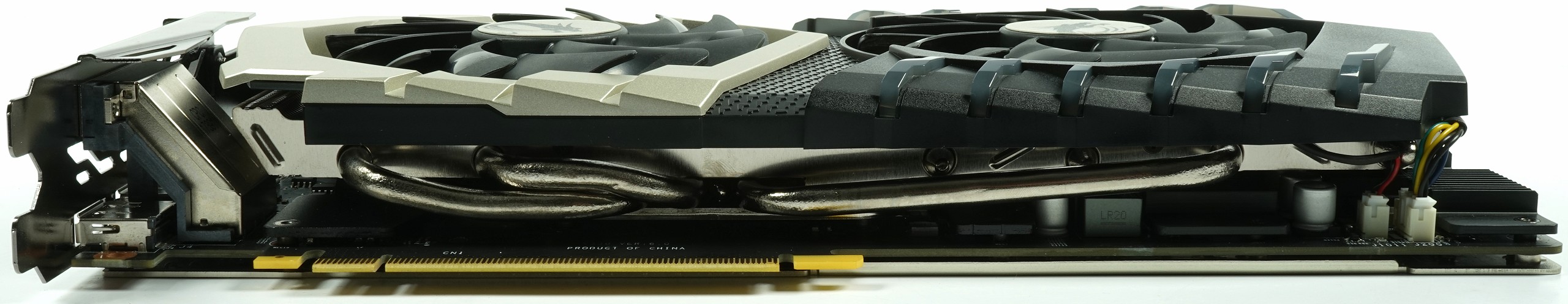

As you can see, the GeForce GTX 1070 Ti Titanium 8G's exterior is smattered with silver metallic accents instead of MSI's signature red splashes. Otherwise, the TwinFrozr VI cooler’s cover might as well be part of the Gaming X family. Two 10cm fans with 14 blades dominate the card’s front shroud.

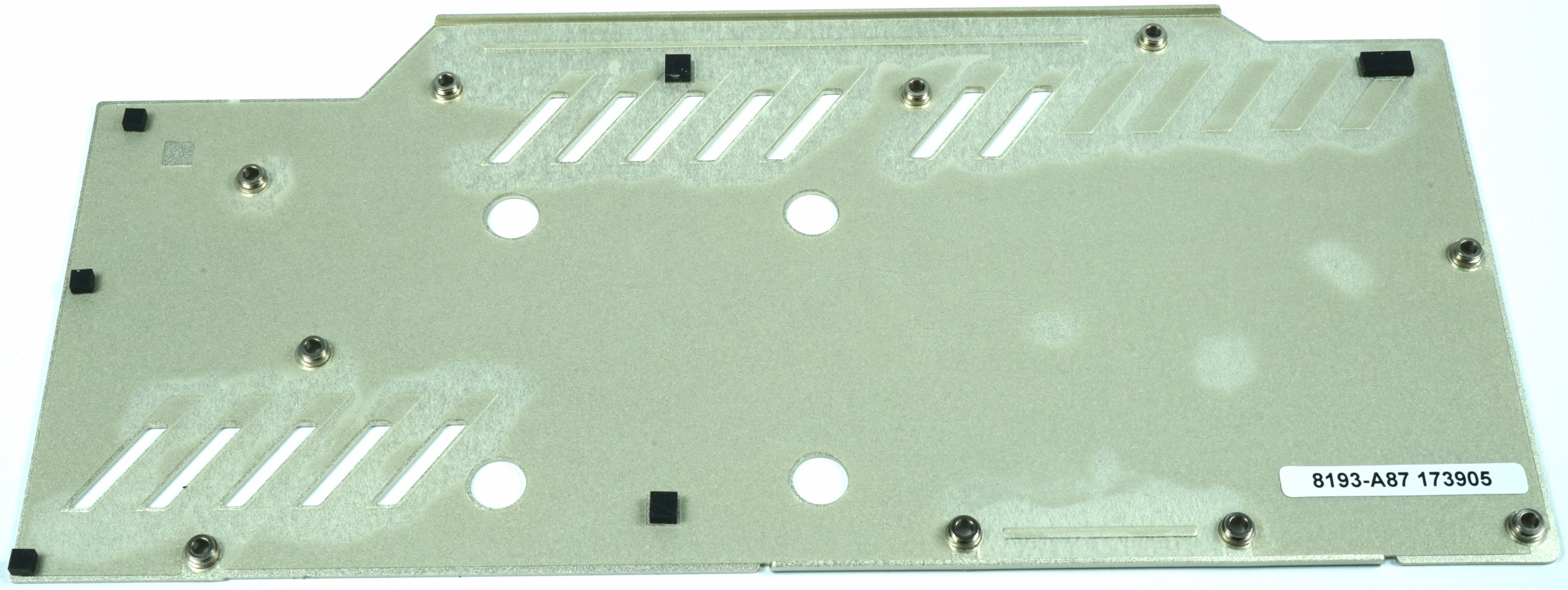

The backplate’s only function is to look good. That printed dragon logo is sure to catch eyes inside a windowed chassis.

MSI arms its Titanium 8G board with eight- and six-pin auxiliary power connectors. They're rotated 180° to avoid interfering with the cooler. Also visible from the top, MSI's logo is back-lit and configurable through bundled software.
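To put that connector pairing in perspective, here is a minimal Python sketch of the nominal power budget. The per-source limits (75W from the slot, 75W per six-pin, 150W per eight-pin) are the standard PCI Express ratings, not figures supplied by MSI, and the 180W TDP is the number quoted earlier in this review. The function name is hypothetical.

```python
# Nominal power budget for a card with auxiliary PCIe power connectors.
# Assumption: limits are the standard PCI Express ratings, not MSI's own data.
SLOT_W = 75        # PCIe x16 slot
SIX_PIN_W = 75     # per six-pin auxiliary connector
EIGHT_PIN_W = 150  # per eight-pin auxiliary connector
TDP_W = 180        # TDP quoted in this review


def max_board_power(six_pins: int, eight_pins: int) -> int:
    """Return the nominal in-spec power available to the card, in watts."""
    return SLOT_W + six_pins * SIX_PIN_W + eight_pins * EIGHT_PIN_W


budget = max_board_power(six_pins=1, eight_pins=1)
headroom = budget - TDP_W
print(f"Nominal budget: {budget} W, headroom over TDP: {headroom} W")
```

On paper, the eight- plus six-pin arrangement leaves a comfortable margin above the 180W TDP. Actual draw depends on the firmware's power target, which this sketch does not model.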

Fortunately, the cooling fins are oriented horizontally, which helps direct some exhaust out the back of your case instead of blowing it down across your motherboard.

Axial fan-based coolers typically achieve better thermal performance and make less noise than radial designs. But they recirculate hot air inside of your case, and that's a problem in small form factor PCs.

MSI exposes five display outputs through its I/O bracket, four of which can be used simultaneously in multi-monitor configurations. There's a dual-link DVI-D port, an HDMI 2.0 interface, and three DisplayPort 1.4-capable connectors. Although the remaining space back there is cut up into openings for exhausting hot air, this card doesn't rely on them to the same extent as Nvidia's GeForce GTX 1070 Ti Founders Edition.

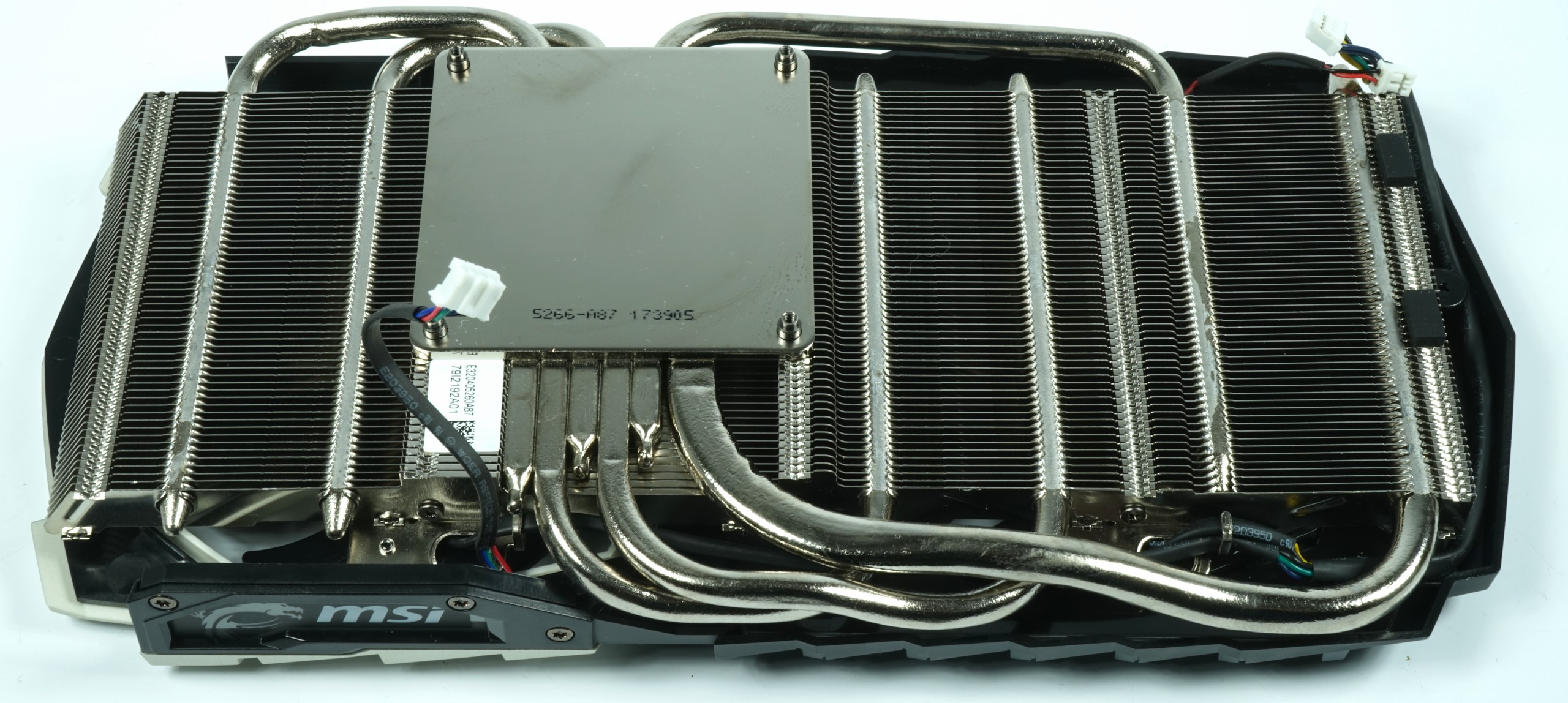

Cooling Solution & Backplate

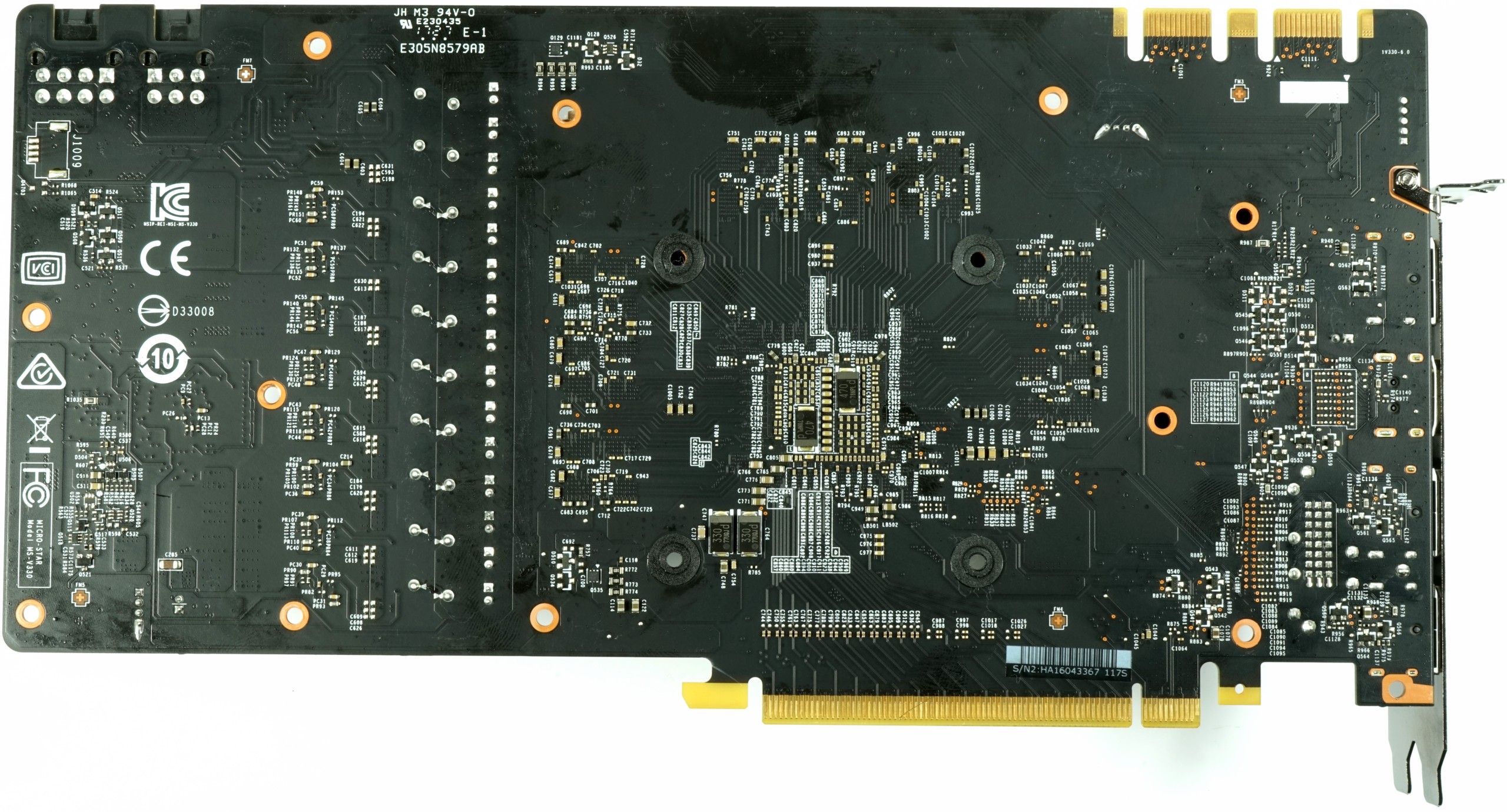

The backplate sure looks nice. It also plays a part in securing the two cooling elements up front. However, the backplate doesn't help with passive cooling.

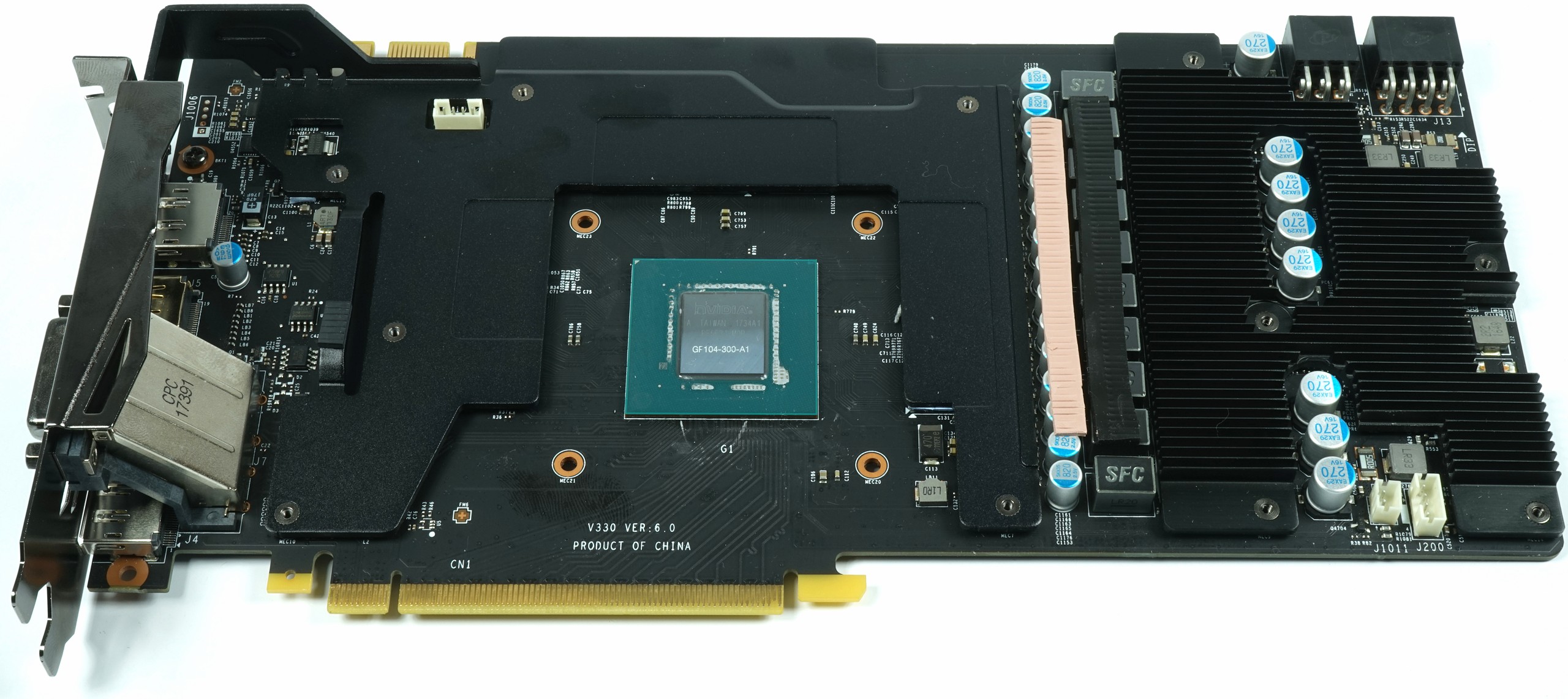

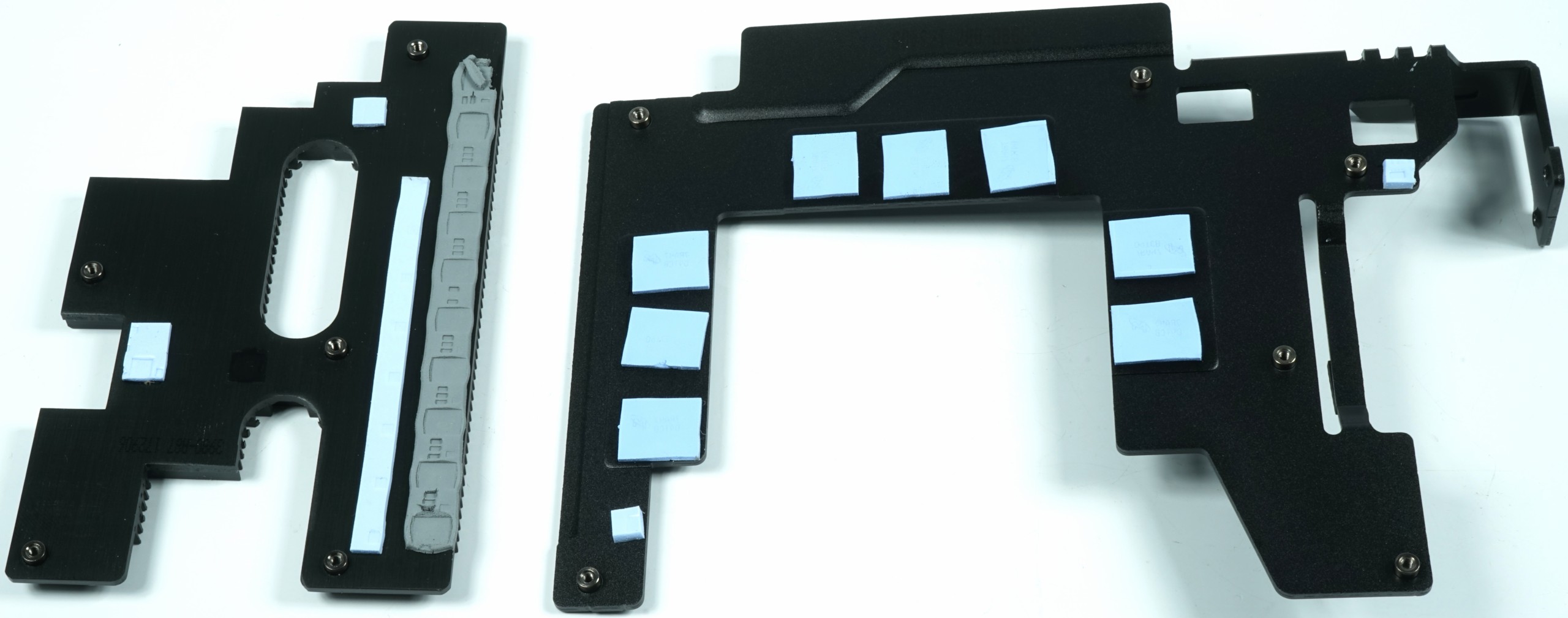

What makes this thermal solution special is its use of a shortened sandwich-like design. A cooling and retention frame sits between the PCB and heat sink's body. In addition, GPU and memory VRMs have their own separate sink.

The power circuitry's cooler is made more potent through the use of fins across the frame, adding quite a bit of surface area. These fins definitely benefit from the horizontal airflow moving through the main heat sink right above them.

A large number of thermal pads between the chokes/caps and the main cooler's fins helps draw heat away from those components. Speaking of the main sink, it delivers the same great performance we've seen from other MSI cards. A nickel-plated heat sink covers the GP104 processor, while five 6mm heat pipes and a single 8mm pipe spread heat through the cooler's body.

| Thermal Solution | |
|---|---|
| Type of Cooler | Air Cooling |
| Heat Sink | Nickel-plated GPU Heat Sink |
| Cooling Fins | Aluminum, Horizontally-Oriented, Tight Grouping |
| Heat Pipes | 1x 8mm, 5x 6mm, Nickel-plated |
| VRM Cooling | Separate VRM Cooler with Cooling Fins; Only MOSFETs are Cooled |
| RAM Cooling | Via Retention Frame |
| Fan | 2x 10cm Fan (9.7cm Fan Diameter); 14 Blades; Semi-Passive Control |
| Backplate | Aluminum, Painted Silver-Metallic; No Cooling Function, Foil on the Inside |

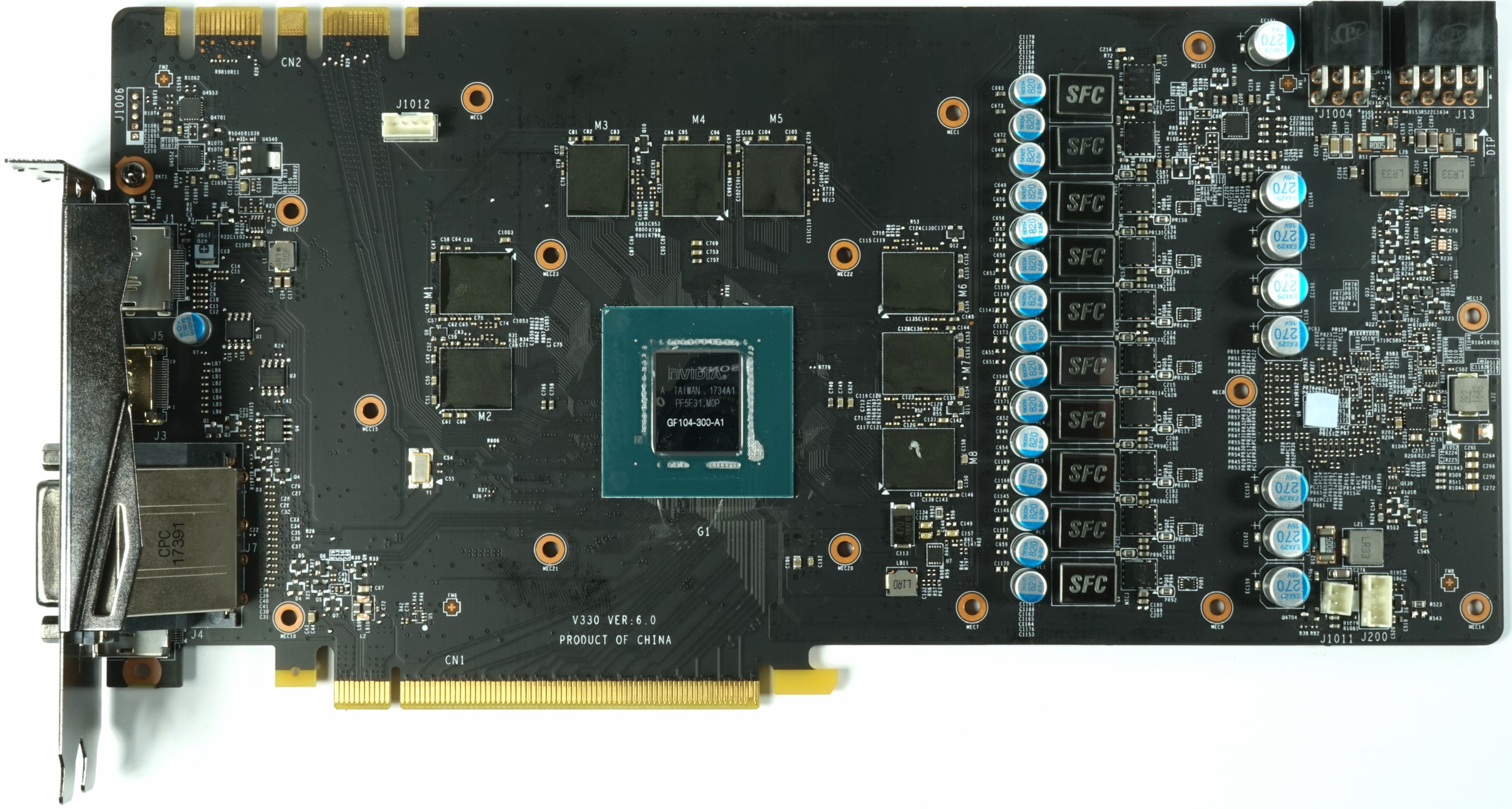

Power Supply & Components

MSI places its two memory voltage converters above eight for the GPU (giving the processor four real power phases, doubled). Because the VRMs are all lined up, the memory's VRMs end up fairly far away from the modules themselves. As a result, the hot-spot we complained about in our MSI GeForce GTX 1080 Ti Gaming X 11G Review is no longer an issue.

Like Nvidia's GeForce GTX 1070 Ti FE, this 4+2-phase design leans on uPI Semiconductor's uP9511 eight-phase buck controller. It cannot address the voltage converter phases directly, so F6A4 gate drivers are used to control the UBIQ M3816Ns. These are durable dual N-channel MOSFETs with ample reserves for DC/DC voltage conversion.

| GPU Power Supply | | |
|---|---|---|
| PWM Controller | uP9511 | uPI Semiconductor; Eight-Phase PWM Controller |
| Gate Driver | F6A4 | Phase Doubler & Gate Driver |
| VRM | M3816N | UBIQ (uPI Semiconductor); Power MOSFET; Dual N-Channel; High- and Low-Side |
| Coils | SFC | Super Ferrite Choke; Lianzhen Electronics |

| Memory & Memory Power Supply | | |
|---|---|---|
| Modules | MT51J256M32HF-80 | Micron; GDDR5, 8 Gb/s; 8 Gigabit (32x 256Mb); Eight Modules |
| PWM Controller | uP1641 | uPI Semiconductor; Two-Phase Buck Converter |
| VRM | BSC0923NDI | Infineon Clone; Dual N-Channel MOSFET; High- and Low-Side |
| Coils | SFC | Super Ferrite Choke; Lianzhen Electronics |

| Other Components | | |
|---|---|---|
| Monitoring | INA3221 | Monitoring Chip; Currents, Voltages |
| BIOS | Winbond 25Q40 | Kynix Semiconductor; EEPROM; BIOS |
| Shunts & Filter | | 1x Coil (Smoothing) & Shunt per PCIe Connector (12V Input Voltage) |

| Other Features | | |
|---|---|---|
| Special Features | | 1x Eight-Pin + 1x Six-Pin Auxiliary Power Connector; Filter Chokes at Entry |
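The memory figures listed above (eight Micron GDDR5 modules, 8 Gigabit each, 8 Gb/s) can be cross-checked with a little arithmetic. The sketch below is illustrative only. It assumes each module exposes a 32-bit interface, which is how we read the "256M32" portion of the part number; that width is not stated explicitly in the component list.

```python
# Back-of-the-envelope memory math from the component list.
MODULES = 8            # eight GDDR5 packages
DENSITY_GBIT = 8       # 8 Gigabit per module (32x 256Mb)
DATA_RATE_GBPS = 8     # 8 Gb/s per pin
IO_WIDTH_BITS = 32     # assumption: x32 interface per module (from "256M32")

capacity_gbyte = MODULES * DENSITY_GBIT // 8               # gigabits -> gigabytes
bus_width_bits = MODULES * IO_WIDTH_BITS                   # aggregate bus width
bandwidth_gbyte_s = bus_width_bits * DATA_RATE_GBPS // 8   # Gb/s -> GB/s

print(f"Capacity:  {capacity_gbyte} GB")
print(f"Bus width: {bus_width_bits}-bit")
print(f"Bandwidth: {bandwidth_gbyte_s} GB/s")
```

Under that assumption, the result lines up with the card's 8GB capacity and a 256-bit aggregate memory bus.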


10tacle Yaaayyy! The NDA prison has freed everyone to release their reviews! Outstanding review, Chris. This card landed exactly where it was expected to, between the 1070 and 1080. In some games it gets real close to the 1080, where in other games, the 1080 is significantly ahead. Same with comparison to the RX 56 - close in some, not so close in others. Ashes and Destiny 2 clearly favor AMD's Vega GPUs. Can we get Project Cars 2 in the mix soon?

It's a shame the overclocking results were too inconsistent to report, but I guess that will have to wait for vendor versions to test. Also, a hat tip for using 1440p where this GPU is targeted. Now the question is what will the real world selling prices be vs. the 1080. There are $520 1080s available out there (https://www.newegg.com/Product/Product.aspx?Item=N82E16814127945), so if AIB partners get closer to the $500 pricing threshold, that will be way too close to the 1080 in pricing. -

samer.forums Vega Still wins , If you take in consideration $200 Cheaper Freesync 1440p wide/nonwide monitors , AMD is still a winner. -

SinxarKnights So why did MSI call it the GTX 1070 Ti Titanium? Do they not know what Ti means?

ed: Lol at least one other person doesn't know what Ti means either : If you don't know Ti stands for "titanium" effectively they named the card GTX 1070 Titanium Titanium. -

10tacle (quoting samer.forums):

> Vega Still wins , If you take in consideration $200 Cheaper Freesync 1440p wide/nonwide monitors , AMD is still a winner.

Well that is true and always goes without saying. You pay more for G-sync than Freesync which needs to be taken into consideration when deciding on GPUs. However, if you already own a 1440p 60Hz monitor, the choice becomes not so easy to make, especially considering how hard it is to find Vegas. -

10tacle For those interested, Guru3D overclocked their Founder's Edition sample successfully. As expected, it gains 9-10% which puts it square into reference 1080 territory. Excellent for the lame blower cooler. The AIB vendor dual-triple fan cards will exceed that overclocking capability.

http://www.guru3d.com/articles_pages/nvidia_geforce_gtx_1070_ti_review,42.html -

mapesdhs Chris, what is it that pummels the minimums for the 1080 Ti and Vega 64 in BF1 at 1440p? And why, when moving up to UHD, does this effect persist for the 1080 Ti but not for Vega 64?

Also, wrt the testing of Division, and comparing to your 1080 Ti review back in March, I notice the results for the 1070 are identical at 1440p (58.7), but completely different at UHD (42.7 in March, 32.7 now); what has changed? This new test states it's using Medium detail at UHD, so was the March testing using Ultra or something? The other cards are affected in the same way.

Not sure if it's significant, but I also see 1080 and 1080 Ti performance at 1440p being a bit better back in March.

Re pricing, Scan here in the UK has the Vega 56 a bit cheaper than a reference 1070 Ti, but not by much. One thing which is kinda nuts though, the AIB versions of the 1070 Ti are using the same branding names as they do for what are normally overclocked models, eg. SC for EVGA, AMP for Zotac, etc., but of course they're all 1607MHz base. Maybe they'll vary in steady state for boost clocks, but it kinda wrecks the purpose of their marketing names. :D

Ian.

PS. When I follow the Forums link, the UK site looks different, then reverts to its more usual layout when one logs in (weird). Also, the UK site is failing to retain the login credentials from the US transfer as it used to.

-

mapesdhs (quoting 10tacle):

> Well that is true and always goes without saying. You pay more for G-sync than Freesync which needs to be taken into consideration when deciding on GPUs. ...

It's a bit odd that people are citing the monitor cost advantage of Freesync, while article reviews are not showing games actually running at frame rates which would be relevant to that technology. Or are all these Freesync buyers just using 1080p? Or much lower detail levels? I'd rather stick to 60Hz and higher quality visuals.

Ian.

-

FormatC @Ian:

The typical Freesync-Buddy is playing in Wireframe-Mode at 720p ;)

All these sync options can help smooth the output if you are especially sensitive to it. That's a fact, but not everybody gives it the same priority. -

TJ Hooker (quoting mapesdhs):

> Chris, what is it that pummels the minimums for the 1080 Ti and Vega 64 in BF1 at 1440p? And why, when moving up to UHD, does this effect persist for the 1080 Ti but not for Vega 64?

From other benchmarks I've seen, DX12 performance in BF1 is poor. Average FPS is a bit lower than in DX11, and minimum FPS far worse in some cases. If you're looking for BF1 performance info, I'd recommend looking for benchmarks on other sites that test in DX11. -

10tacle (quoting mapesdhs):

> It's a bit odd that people are citing the monitor cost advantage of Freesync, while article reviews are not showing games actually running at frame rates which would be relevant to that technology. Or are all these Freesync buyers just using 1080p? Or much lower detail levels? I'd rather stick to 60Hz and higher quality visuals.

Well I'm not sure I understand your point. The benchmarks show FPS exceeding 60FPS, meaning maximum GPU performance. It's about matching monitor refresh rate (Hz) to FPS for smooth gameplay, not just raw FPS. But regarding the Freesync argument, that's usually what is brought up in price comparisons between AMD and Nvidia. If someone is looking to upgrade from both a 60Hz monitor and a GPU, then it's a valid point.

However, as I stated, if someone already has a 60Hz 2560x1440 or one of those ultrawide monitors, then the argument for Vega gets much weaker. Especially considering their limited availability. As I posted in a link above, you can buy a nice dual fan MSI GTX 1080 for $520 on NewEgg right now. I have not seen a dual fan MSI Vega for sale anywhere (every Vega for sale I've seen is the reference blower design).