Tom's Hardware Verdict

The Nvidia RTX 4060 costs just $299, and that low price covers a multitude of compromises. Even with less memory than the previous generation RTX 3060, performance is still good, especially for 1080p gaming, plus it only needs 115W of power.

Pros

- Very efficient architecture and design
- Lower price than the previous generation 3060
- Built for mainstream 1080p gaming

Cons

- DLSS 3 benefits are debatable
- 8GB and 128-bit bus are worse than the 3060
- Big drop in core counts relative to 4060 Ti


The Nvidia GeForce RTX 4060 drops the entry price for the Ada Lovelace architecture and RTX 40-series GPUs to $299. It lands between the former RTX 3060 and RTX 3050 in pricing, and while there are always compromises as you move down the price and performance ladder, it represents a potentially great value proposition. For those on a budget, it could be one of the best graphics cards, assuming performance is up to snuff.

There are deserved complaints about limiting the AD107 GPU at the heart of this card to a 128-bit memory interface, though the lower price compared to the RTX 4060 Ti takes out some of the sting. Still, the previous generation RTX 3060 came with a 192-bit interface and 12GB of memory, so this represents a clear step back in that area. We'll discuss this more on the next page, as it's an important topic.

We'll update the GPU benchmarks hierarchy later today, now that the embargo is over. The bottom line shouldn't be too surprising: In the vast majority of games, the new RTX 4060 easily outperforms the previous generation RTX 3060, and it almost catches the RTX 3060 Ti. Toss in DLSS 3's Frame Generation and the dramatically improved efficiency and you can make a reasonable case for buying an RTX 4060 over a previous gen card. You won't tap into new levels of performance, but you'll get all the latest Nvidia features and upgrades.

The chief competition from AMD comes from two places. The latest generation Radeon RX 7600 undercuts Nvidia's price by up to $50 now, while the previous generation RX 6700 XT starts at $309, basically matching the RTX 4060 on price but providing 50% more memory and potentially better overall performance. Depending on pricing and availability, the RTX 3060 Ti (and other 30-series GPUs) may also be an interesting option, but we don't expect new stock of those cards to stick around for long, after which buying one will mean going the used route.

Let's check the specifications, which were revealed over a month ago with the RTX 4060 Ti announcement. Nvidia allows reviews of the $299 MSRP cards today, while more expensive models are under embargo until tomorrow. We received an Asus RTX 4060 Dual OC model from Nvidia, which comes with a modest factory overclock, but it's still priced at $299.

| Graphics Card | RTX 4060 | RTX 4060 Asus Dual OC | RTX 4060 Ti | RTX 4070 | RTX 3050 | RTX 3060 | RTX 3060 Ti | RTX 3070 | RX 7600 | RX 6700 XT | Arc A770 16GB | Arc A750 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Architecture | AD107 | AD107 | AD106 | AD104 | GA106 | GA106 | GA104 | GA104 | Navi 33 | Navi 22 | ACM-G10 | ACM-G10 |
| Process Technology | TSMC 4N | TSMC 4N | TSMC 4N | TSMC 4N | Samsung 8N | Samsung 8N | Samsung 8N | Samsung 8N | TSMC N6 | TSMC N7 | TSMC N6 | TSMC N6 |
| Transistors (Billion) | 18.9 | 18.9 | 22.9 | 32 | 12 | 12 | 17.4 | 17.4 | 13.3 | 17.2 | 21.7 | 21.7 |
| Die Size (mm²) | 158.7 | 158.7 | 187.8 | 294.5 | 276 | 276 | 392.5 | 392.5 | 204 | 336 | 406 | 406 |
| SMs / CUs / Xe-Cores | 24 | 24 | 34 | 46 | 20 | 28 | 38 | 46 | 32 | 40 | 32 | 28 |
| GPU Cores (Shaders) | 3072 | 3072 | 4352 | 5888 | 2560 | 3584 | 4864 | 5888 | 2048 | 2560 | 4096 | 3584 |
| Tensor / AI Cores | 96 | 96 | 136 | 184 | 80 | 112 | 152 | 184 | 64 | N/A | 512 | 448 |
| Ray Tracing "Cores" | 24 | 24 | 34 | 46 | 20 | 28 | 38 | 46 | 32 | 40 | 32 | 28 |
| Boost Clock (MHz) | 2460 | 2505 | 2535 | 2475 | 1777 | 1777 | 1665 | 1725 | 2625 | 2581 | 2100 | 2050 |
| VRAM Speed (Gbps) | 17 | 17 | 18 | 21 | 14 | 15 | 14 | 14 | 18 | 16 | 17.5 | 16 |
| VRAM (GB) | 8 | 8 | 8 | 12 | 8 | 12 | 8 | 8 | 8 | 12 | 16 | 8 |
| VRAM Bus Width (bits) | 128 | 128 | 128 | 192 | 128 | 192 | 256 | 256 | 128 | 192 | 256 | 256 |
| L2 / Infinity Cache (MB) | 24 | 24 | 32 | 36 | 2 | 3 | 4 | 4 | 32 | 96 | 16 | 16 |
| ROPs | 48 | 48 | 48 | 64 | 48 | 48 | 80 | 96 | 64 | 64 | 128 | 128 |
| TMUs | 96 | 96 | 136 | 184 | 80 | 112 | 152 | 184 | 128 | 160 | 256 | 224 |
| TFLOPS FP32 (Boost) | 15.1 | 15.4 | 22.1 | 29.1 | 9.1 | 12.7 | 16.2 | 20.3 | 21.5 | 13.2 | 17.2 | 14.7 |
| TFLOPS FP16 (FP8) | 121 (242) | 123 (246) | 177 (353) | 233 (466) | 36 (73) | 51 (102) | 65 (130) | 81 (163) | 43 | 26.4 | 138 | 118 |
| Bandwidth (GB/s) | 272 | 272 | 288 | 504 | 224 | 360 | 448 | 448 | 288 | 384 | 560 | 512 |
| TDP (watts) | 115 | 115 | 160 | 200 | 130 | 170 | 200 | 220 | 165 | 230 | 225 | 225 |
| Launch Date | Jul 2023 | Jul 2023 | May 2023 | Apr 2023 | Jan 2022 | Feb 2021 | Dec 2020 | Oct 2020 | May 2023 | Mar 2021 | Sep 2022 | Sep 2022 |
| Launch Price | $299 | $299 | $399 | $599 | $249 | $329 | $399 | $499 | $269 | $479 | $349 | $289 |
| Online Price | $300 | $300 | $380 | $585 | $220 | $260 | $275 | $400 | $250 | $310 | $340 | $240 |

There are twelve GPUs listed in the above table, representing the most useful comparisons for the RTX 4060, but the first column is the most pertinent. The new GeForce RTX 4060 uses Nvidia's AD107 GPU, the same chip found in the RTX 4060 and RTX 4050 Laptop GPUs.

The RTX 4060 uses the full AD107 chip, with 24 streaming multiprocessors (SMs), each with 128 CUDA cores. That gives the total shader count of 3,072. Astute mathematicians will note that this is less than the previous generation RTX 3060's 3,584 shaders. However, as with the rest of the RTX 40-series lineup, clock speeds are significantly higher at 2460 MHz, compared to 1777 MHz on the 3060. The result is that peak compute performance ends up being 19% higher.
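The FP32 figures in the table for the Nvidia cards can be reproduced with the usual peak-throughput convention of two floating-point operations (one fused multiply-add) per shader per clock. The sketch below assumes that convention; it yields theoretical peaks only, not measured game performance, and the helper name `fp32_tflops` is our own.

```python
def fp32_tflops(shaders: int, boost_mhz: float) -> float:
    """Theoretical peak FP32 TFLOPS, assuming 2 FLOPS (one FMA) per shader per clock."""
    flops = shaders * 2 * boost_mhz * 1e6  # MHz -> Hz
    return flops / 1e12

rtx_4060 = fp32_tflops(3072, 2460)  # 24 SMs x 128 cores at 2460 MHz
rtx_3060 = fp32_tflops(3584, 1777)
uplift = rtx_4060 / rtx_3060 - 1

print(f"RTX 4060: {rtx_4060:.1f} TFLOPS")  # 15.1
print(f"RTX 3060: {rtx_3060:.1f} TFLOPS")  # 12.7
print(f"Uplift: {uplift:.0%}")             # 19%
```

Plugging in the Asus Dual OC's 2505 MHz boost clock gives 15.4 TFLOPS, matching that column of the table.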

Raw memory bandwidth is lower, at 272 GB/s compared to the RTX 3060's 360 GB/s. But the L2 cache has ballooned from 3MB on the 3060 to 24MB on the 4060, and Nvidia says that improves the effective bandwidth by 67%, to 453 GB/s. We'll discuss the memory subsystem and its ramifications more on the next page.
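Raw bandwidth follows directly from the bus width and per-pin data rate listed in the table. The 453 GB/s "effective" figure is Nvidia's own claim and can't be derived from the spec sheet, so this sketch only checks that it lines up with the quoted 67% uplift over the raw number. The function and constant names are illustrative.

```python
def raw_bandwidth_gbs(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Raw memory bandwidth in GB/s: bytes per transfer times per-pin data rate."""
    return bus_width_bits / 8 * data_rate_gbps

rtx_4060_raw = raw_bandwidth_gbs(128, 17)  # 16 bytes x 17 Gbps = 272.0 GB/s
rtx_3060_raw = raw_bandwidth_gbs(192, 15)  # 24 bytes x 15 Gbps = 360.0 GB/s

# Vendor-supplied figure (Nvidia), not something computed from the specs.
NVIDIA_EFFECTIVE_4060_GBS = 453
implied_uplift = NVIDIA_EFFECTIVE_4060_GBS / rtx_4060_raw - 1

print(rtx_4060_raw, rtx_3060_raw)  # 272.0 360.0
print(f"{implied_uplift:.0%}")     # 67%
```

Note that the comparison only holds to the extent the larger cache actually absorbs memory traffic, which varies by game and resolution.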

One thing to note is that the RTX 4060 comes with an x8 PCIe interface, just like the RTX 4060 Ti, where the RTX 4070 and above use an x16 link width. This is similar to the RX 7600 and previous generation RTX 3050, where cutting the additional PCIe lanes helps to keep the die size smaller. This shouldn't matter much with most modern PCs, but if you're planning to upgrade an older PC that only supports PCIe 3.0 with an RTX 4060, you may lose a bit of performance compared to what we'll show in our benchmarks.
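To put the x8 link in perspective, here's a rough calculation of one-direction PCIe link bandwidth. It assumes the standard per-lane rates of 8 GT/s for PCIe 3.0 and 16 GT/s for PCIe 4.0, both with 128b/130b encoding, and ignores packet and protocol overhead, so real throughput is somewhat lower. It shows only that an x8 card in a PCIe 3.0 slot gets half the host bandwidth it would on PCIe 4.0; how much that costs in frame rates depends on the game.

```python
# Per-lane transfer rates in GT/s; PCIe 3.0 and 4.0 both use 128b/130b encoding.
TRANSFER_RATE_GTS = {3: 8.0, 4: 16.0}
ENCODING_EFFICIENCY = 128 / 130

def link_bandwidth_gbs(gen: int, lanes: int) -> float:
    """Approximate one-direction bandwidth in GB/s, ignoring protocol overhead."""
    per_lane = TRANSFER_RATE_GTS[gen] * ENCODING_EFFICIENCY / 8  # Gbit/s -> GB/s
    return per_lane * lanes

for gen, lanes in [(4, 8), (3, 8), (3, 16)]:
    print(f"PCIe {gen}.0 x{lanes}: {link_bandwidth_gbs(gen, lanes):.1f} GB/s")
# PCIe 4.0 x8: 15.8 GB/s
# PCIe 3.0 x8: 7.9 GB/s
# PCIe 3.0 x16: 15.8 GB/s
```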

Looking at the competition based on relatively similar pricing, we have a lot of options. AMD has the new RX 7600 8GB card, along with the previous generation RX 6700 XT 12GB and RX 6700 10GB. From Intel, there's the Arc A770 8GB and Arc A750. Then Nvidia also has to contend with existing cards like the RTX 3060, RTX 3060 Ti, and RTX 3070. It's a safe bet that Nvidia can match or exceed cards from AMD and Intel when it comes to ray tracing performance and AI workloads, but it will likely lose out to the 3060 Ti and above in those same tasks. Rasterization performance should prove to be a tougher battle for the newcomer.

We'll also include results from the RTX 2060, which launched in early 2019. Many gamers skip a generation or two on hardware, and Nvidia (like AMD and the RX 7600) is pitching the RTX 4060 as a great upgrade path for people still using cards like the GTX 1060, RTX 2060, or RX 570/580/590. For all the complaining about the RTX 40-series and its higher generational pricing, it's also nice to see Nvidia match or beat the pricing on the two previous generation GPUs. The RTX 2060 launched at $349 and later dropped to $299, while the RTX 3060 launched at $329 but rarely saw that price until the last few months.

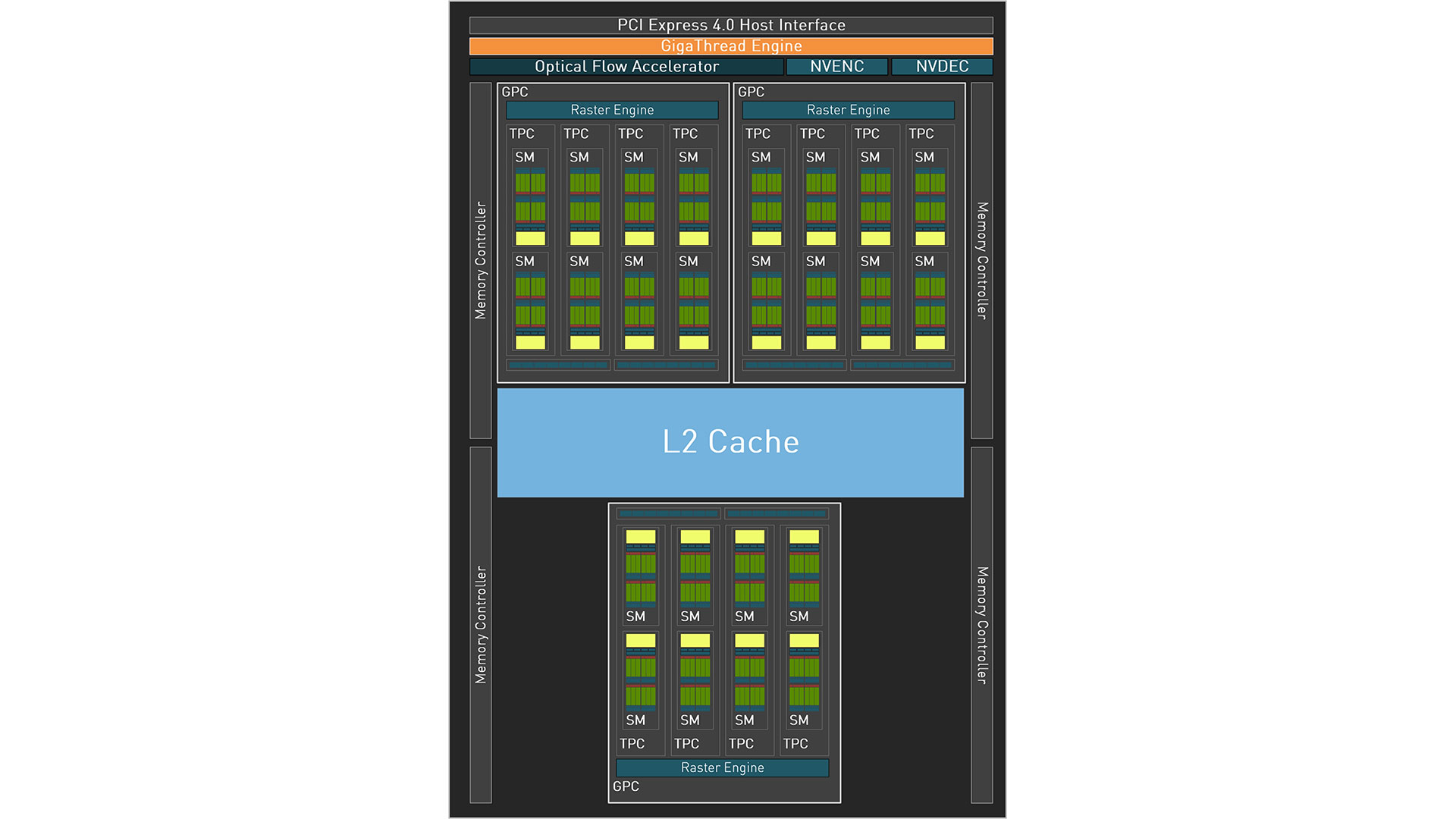

The block diagram for the RTX 4060 / AD107 shows just how much Nvidia has trimmed things down in order to hit mainstream pricing. Most of the other Ada chips have multiple NVDEC / NVENC blocks, but AD107 has just one of each. As noted above, there are also just 24 total SMs, spread among three GPCs (Graphics Processing Clusters). Finally, Nvidia provides up to 8MB of L2 cache per 32-bit memory channel, but the RTX 4060 only has 6MB enabled for a total of 24MB. (The mobile RTX 4060 gets the full 32MB, if you're wondering.)
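The cache and SM figures above reduce to simple arithmetic, sketched below. It uses the 8MB-per-channel maximum and 6MB-enabled figures from the text, the 128 CUDA cores per SM mentioned earlier, and assumes the 24 SMs are split evenly across the three GPCs.

```python
BUS_WIDTH_BITS = 128
CHANNEL_WIDTH_BITS = 32
MAX_L2_PER_CHANNEL_MB = 8       # Ada maximum per 32-bit memory channel
ENABLED_L2_PER_CHANNEL_MB = 6   # enabled on the desktop RTX 4060

channels = BUS_WIDTH_BITS // CHANNEL_WIDTH_BITS    # 4 channels
full_l2 = channels * MAX_L2_PER_CHANNEL_MB         # 32 MB (mobile RTX 4060)
desktop_l2 = channels * ENABLED_L2_PER_CHANNEL_MB  # 24 MB (desktop RTX 4060)

SMS, GPCS, CORES_PER_SM = 24, 3, 128
sms_per_gpc = SMS // GPCS        # assumes an even split: 8 SMs per GPC
shaders = SMS * CORES_PER_SM     # 3072 CUDA cores

print(channels, full_l2, desktop_l2)  # 4 32 24
print(sms_per_gpc, shaders)           # 8 3072
```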

As with other Ada Lovelace chips, the RTX 4060 includes Nvidia's 4th-gen Tensor cores, 3rd-gen RT cores, new and improved NVENC/NVDEC units for video encoding and decoding with AV1 support, and a significantly more powerful Optical Flow Accelerator (OFA). The latter is used for DLSS 3, and all indications are that Nvidia has no intention of trying to enable Frame Generation on Ampere and earlier RTX GPUs.

The tensor cores now support FP8 with sparsity. It's not clear how useful that is for various workloads, but AI and deep learning have certainly leveraged lower precision number formats to boost performance without significantly altering the quality of the results — at least in some cases. It will ultimately depend on the work being done, and figuring out just what uses FP8 versus FP16, plus sparsity, can be tricky.

Of course, running AI models on a budget mainstream card like the RTX 4060 isn't really the primary goal. Yes, Stable Diffusion will work, and we'll show test results later. Other AI models that can fit in the 8GB VRAM will run as well. However, anyone who's serious about AI and machine learning will almost certainly want a GPU with more processing muscle and more VRAM.
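As a very rough guide to what fitting in 8GB means, the sketch below checks whether a model's weights alone fit in VRAM at a given precision (bytes per parameter). The parameter counts and the 80% headroom factor are hypothetical placeholders, and the check ignores activations, caches, and framework overhead, all of which can add substantially to memory use. A False is a reliable no; a True only means the model might fit.

```python
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "fp8": 1}
VRAM_BYTES = 8 * 1024**3  # 8GB card, treated as 8 GiB

def weights_fit(params: float, dtype: str, headroom: float = 0.8) -> bool:
    """True if raw weights fit in `headroom` (a hypothetical margin) of VRAM.

    Weights only: activations and framework overhead are not counted."""
    return params * BYTES_PER_PARAM[dtype] <= VRAM_BYTES * headroom

# Hypothetical model sizes, for illustration only.
for params in (1e9, 3e9, 7e9):
    fits = {dtype: weights_fit(params, dtype) for dtype in BYTES_PER_PARAM}
    print(f"{params / 1e9:.0f}B params:", fits)
```

Halving the bytes per parameter (FP16 to FP8) doubles the model size that passes this check, which is one reason lower-precision formats matter on 8GB cards.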


Jarred Walton is a senior editor at Tom's Hardware focusing on everything GPU. He has been working as a tech journalist since 2004, writing for AnandTech, Maximum PC, and PC Gamer. From the first S3 Virge '3D decelerators' to today's GPUs, Jarred keeps up with all the latest graphics trends and is the one to ask about game performance.
