Tom's Hardware Verdict

The Zotac GTX 1650 Super can reach 60 fps or more in many titles at 1080p and is a good value at $160. It’s SFF-friendly at just over six inches long. We just wish its fans were quieter under load.

Pros

- Small size
- Good performance per dollar

Cons

- No ‘fan off’ capability
- Fans get loud under load
- 4GB VRAM


Nvidia’s Turing-based lineup has matured over recent months, with ‘Super’-branded cards filling performance gaps in the mid-range and high-end segments. The new GPUs (RTX 2080 Super/RTX 2070 Super/RTX 2060 Super) hit the market offering better performance for the money than the cards they replaced. More recently, the budget end has received the same attention with the release of the GTX 1660 Super and the GTX 1650 Super we have in our hands today.

The GTX 1650 Super slots into Nvidia’s product stack above the GTX 1650 and below the GTX 1660. With a large performance gap and a price difference of about $70 between those two cards, there was ample room to add a model without blurring the lines between products. And for consumers, a notably faster card for just $10 more than the GTX 1650’s launch price feels like a worthwhile upgrade.

The GTX 1650 was released in April 2019 for $150, while today the Zotac GTX 1650 Super hits the shelves at $160. For that meager price difference you get a card that performs much closer to the GTX 1660. Where the GTX 1650 can struggle in some games at 1080p with ‘Ultra’ settings, the Super variant can run many more AAA titles (but not all) at 60 fps, thanks to its higher shader count and the move to GDDR6 memory.

Like the GTX 1660 Super, the 1650 Super has no reference or Founders Edition model from Nvidia, so we’re testing a partner card from Zotac. The Zotac version sports a dual-fan cooling solution, requires a single 6-pin power connector, and is compact enough for small-form-factor builds.

Features

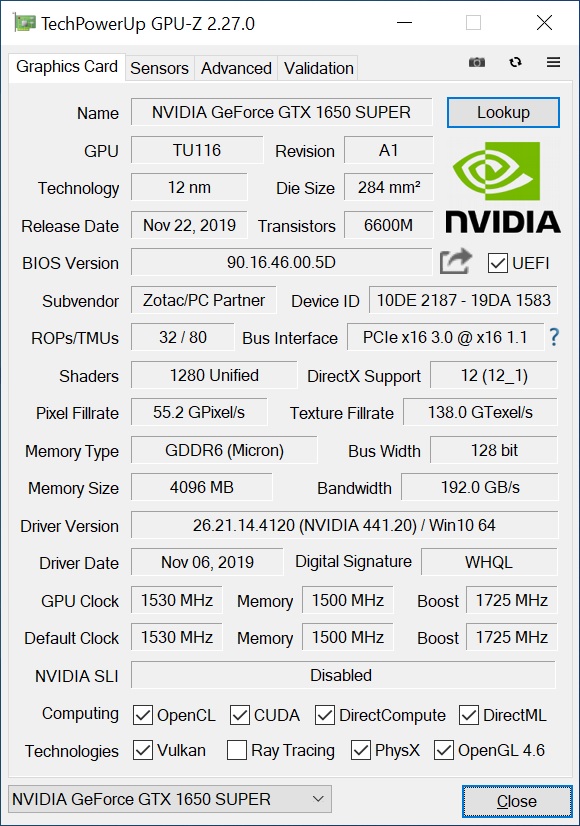

The GTX 1650 Super is based on the Turing architecture and, like the 1660 Super, uses a variant of the TU116 silicon found in the GTX 1660 and 1660 Ti. As with other GTX-branded cards, there are no RT cores here for hardware-accelerated ray tracing. The TU116 die is manufactured on TSMC’s 12nm FFN (FinFET Nvidia) process, contains 6.6 billion transistors and measures 284 mm². The 1650 Super has 1,280 cores, 80 TMUs and 32 ROPs. That’s down from 1,408/88/48 on the 1660 and 1660 Super, but up from the GTX 1650’s 896/56/32.

The GTX 1650 Super brings GDDR6 to the table, versus GDDR5 on the non-Super card. The new memory sits on the same 128-bit bus, driven by four 32-bit memory controllers, and raises memory bandwidth significantly, from 128 GBps to 192 GBps. Memory capacity stays at 4GB, which can already be a limitation in some titles at ultra settings. Running out of VRAM can significantly hurt performance, so users will have to be careful with memory-heavy games, especially as VRAM requirements increase over time.
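The bandwidth figures follow from simple arithmetic: peak bandwidth is the bus width in bits multiplied by the per-pin data rate, divided by eight bits per byte. The sketch below assumes per-pin rates of 8 Gbps for the GTX 1650’s GDDR5 and 12 Gbps for the 1650 Super’s GDDR6 (consistent with the 12,000 MHz effective memory clock Zotac lists); the function name is purely illustrative.

```python
def peak_memory_bandwidth_gbs(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak bandwidth in GB/s: each bus pin moves data_rate_gbps gigabits per second."""
    return bus_width_bits * data_rate_gbps / 8  # 8 bits per byte


# Assumed per-pin data rates (Gbps) for each memory configuration.
gtx_1650 = peak_memory_bandwidth_gbs(128, 8.0)         # 128-bit GDDR5
gtx_1650_super = peak_memory_bandwidth_gbs(128, 12.0)  # 128-bit GDDR6

print(f"GTX 1650:       {gtx_1650:.0f} GB/s")          # 128 GB/s
print(f"GTX 1650 Super: {gtx_1650_super:.0f} GB/s")    # 192 GB/s
print(f"Increase:       {gtx_1650_super / gtx_1650 - 1:.0%}")  # 50%
```

The same formula reproduces the GTX 1660 Super’s 336 GB/s if you assume 14 Gbps GDDR6 on its 192-bit bus.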

The Zotac GTX 1650 Super has a 1,530 MHz base clock and a 1,500 MHz (12,000 MHz effective) memory clock. Its 1,725 MHz boost clock matches Nvidia’s reference spec, but compared with the GTX 1650, the faster memory delivers 50% more bandwidth. The larger TU116 core and higher clocks raise the TDP from 75W to 100W. Most card partners recommend pairing the 1650 Super with a 350W or larger power supply, which shouldn’t be an issue for most standard ATX power supplies.
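The jump from 75W to 100W also lines up with the card’s auxiliary power connector. Under the PCI Express spec, an x16 slot supplies at most 75W to a graphics card and each 6-pin PCIe connector adds up to another 75W, so a 75W card can run from the slot alone while a 100W card needs extra power. The sketch below checks that budget, assuming for simplicity that board power equals the listed TDP; the helper names are hypothetical.

```python
SLOT_LIMIT_W = 75     # PCIe x16 slot limit for a graphics card
SIX_PIN_LIMIT_W = 75  # one 6-pin PCIe auxiliary connector


def board_power_budget_w(six_pin_connectors: int) -> int:
    """Maximum in-spec power available from the slot plus any 6-pin connectors."""
    return SLOT_LIMIT_W + six_pin_connectors * SIX_PIN_LIMIT_W


def min_six_pin_connectors(tdp_w: int) -> int:
    """Fewest 6-pin connectors that keep a card with this TDP within spec."""
    connectors = 0
    while board_power_budget_w(connectors) < tdp_w:
        connectors += 1
    return connectors


for name, tdp in [("GTX 1650", 75), ("GTX 1650 Super", 100)]:
    n = min_six_pin_connectors(tdp)
    print(f"{name}: {tdp} W TDP -> {n} x 6-pin, {board_power_budget_w(n)} W budget")
```

With one 6-pin connector the 1650 Super has 150W available, leaving headroom above its 100W TDP.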


Additionally, the GTX 1650 Super includes Turing’s NVENC encoder rather than the older Volta-generation encoder used in the original GTX 1650 and its TU117 chip. Turing’s NVENC reduces CPU utilization and improves performance, especially when encoding at higher resolutions.

Below are the specifications of the GTX 1650 Super alongside its closest siblings in Nvidia’s budget lineup.

Specifications

| Specification | GeForce GTX 1650 | GeForce GTX 1650 Super | GeForce GTX 1660 | GeForce GTX 1660 Super |
|---|---|---|---|---|
| Architecture (GPU) | Turing (TU117) | Turing (TU116) | Turing (TU116) | Turing (TU116) |
| ALUs | 896 | 1280 | 1408 | 1408 |
| Peak FP32 Compute (Based on Typical Boost) | 2.9 TFLOPS | 4.4 TFLOPS | 5 TFLOPS | 5 TFLOPS |
| Tensor Cores | N/A | N/A | N/A | N/A |
| RT Cores | N/A | N/A | N/A | N/A |
| Texture Units | 56 | 80 | 88 | 88 |
| ROPs | 32 | 32 | 48 | 48 |
| Base Clock Rate | 1485 MHz | 1530 MHz | 1530 MHz | 1530 MHz |
| Boost Clock Rate | 1665 MHz | 1725 MHz | 1785 MHz | 1785 MHz |
| Memory Capacity | 4GB GDDR5 | 4GB GDDR6 | 6GB GDDR5 | 6GB GDDR6 |
| Memory Bus | 128-bit | 128-bit | 192-bit | 192-bit |
| Memory Bandwidth | 128 GB/s | 192 GB/s | 192.1 GB/s | 336 GB/s |
| L2 Cache | 1MB | 1.5MB | 1.5MB | 1.5MB |
| TDP | 75W | 100W | 120W | 125W |
| Transistor Count | 4.7 billion | 6.6 billion | 6.6 billion | 6.6 billion |
| Die Size | 200 mm² | 284 mm² | 284 mm² | 284 mm² |
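The Peak FP32 row can be approximated from the ALU count and boost clock, assuming each FP32 ALU completes one fused multiply-add (counted as two floating-point operations) per clock. This is a back-of-the-envelope sketch; real throughput depends on the clocks a card actually sustains.

```python
def peak_fp32_tflops(alus: int, boost_mhz: int) -> float:
    """Peak FP32 TFLOPS, counting one FMA (2 FLOPs) per ALU per clock."""
    return alus * 2 * boost_mhz * 1e6 / 1e12


# ALU counts and boost clocks taken from the specification table above.
cards = {
    "GeForce GTX 1650": (896, 1665),
    "GeForce GTX 1650 Super": (1280, 1725),
    "GeForce GTX 1660": (1408, 1785),
    "GeForce GTX 1660 Super": (1408, 1785),
}

for name, (alus, boost_mhz) in cards.items():
    print(f"{name}: {peak_fp32_tflops(alus, boost_mhz):.2f} TFLOPS")
```

This gives about 2.98, 4.42 and 5.03 TFLOPS, closely tracking the table’s rounded figures and putting the 1650 Super roughly 48% ahead of the GTX 1650 on paper.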

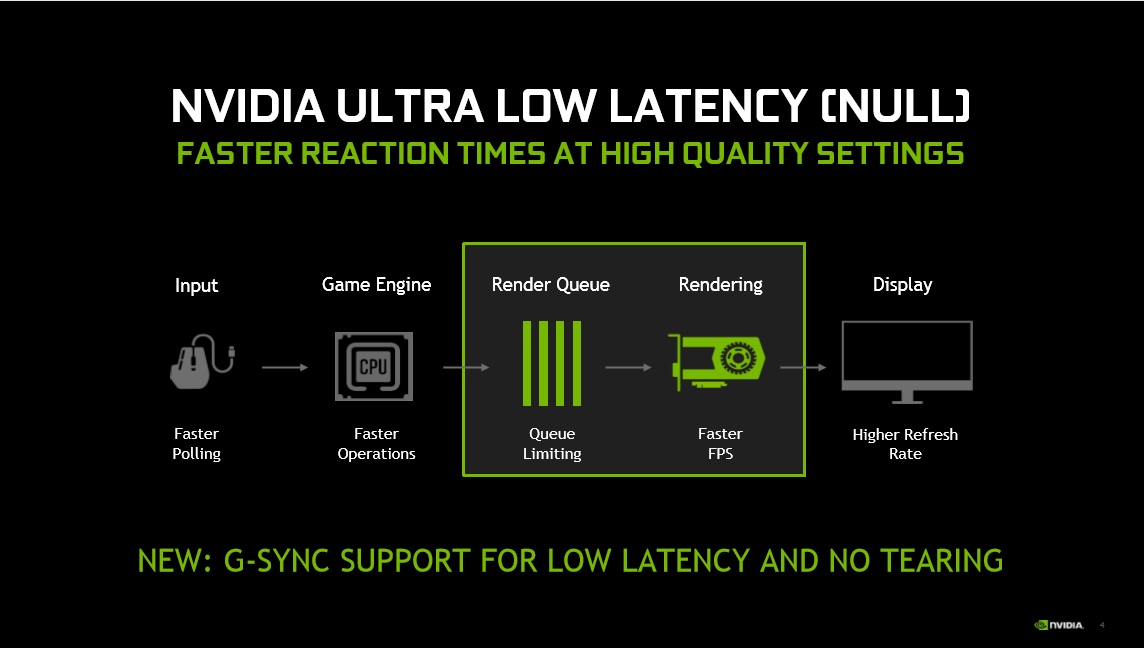

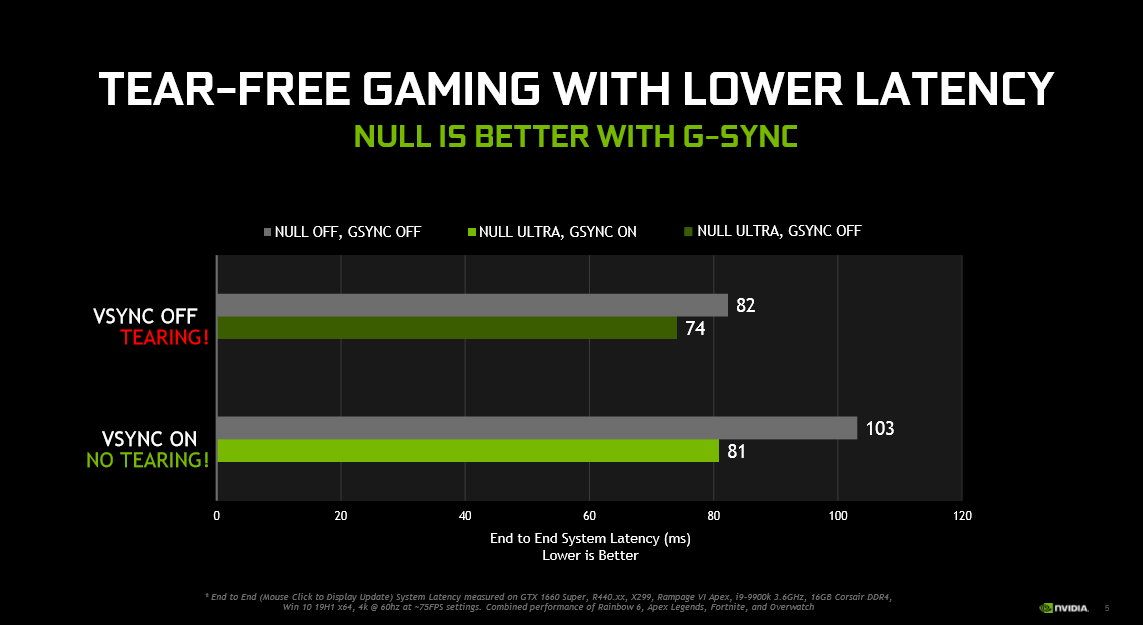

Alongside the hardware changes, Nvidia also made some existing features more readily available when it announced the GTX 1650 Super and GTX 1660 Super. The first example is Nvidia’s Ultra Low Latency (NULL) mode. In a nutshell, NULL mode kicks in when the frame rate exceeds the monitor’s refresh rate and is said to prevent screen tearing without adding latency. Nvidia states that when NULL is used together with G-Sync (the combination is the new part), latency improves significantly compared with using V-Sync alone, and the company feels the feature strikes a good balance between image quality (no screen tearing) and latency.

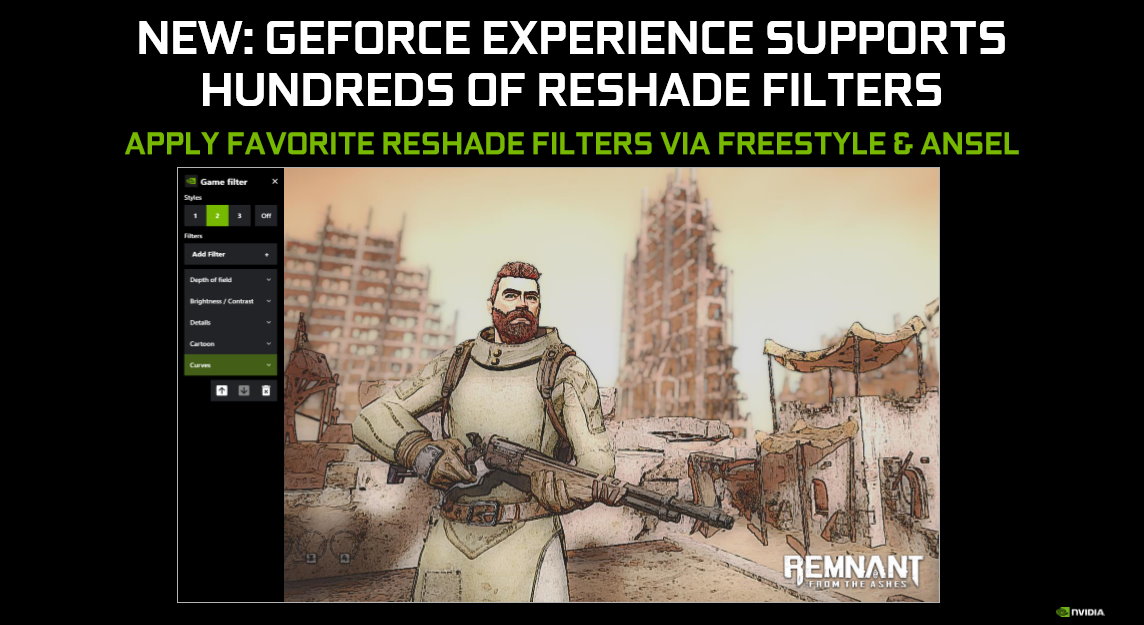

ReShade, a third-party modding and post-processing tool, lets users create post-processing filters for games and apply them in real time while gaming, as well as to still screenshots. The update here is that ReShade filters are now supported in GeForce Experience through Freestyle or Ansel. To use a filter, extract it into the proper folder, activate Ansel (Alt+F2) or Freestyle (Alt+F3), and apply the ReShade filters you like, bringing an Instagram-like experience to your gaming.

One specific filter, image sharpening, has been available through Freestyle for a while, but you can now also access it through the Nvidia Control Panel. This gives the feature another access point, improves performance and broadens game support. Image sharpening is supported in all DX9, DX11 and DX12 games.

Design

Zotac’s GTX 1650 Super measures 6.2 x 4.5 x 1.4 inches (158.5 x 115.2 x 35.3mm) and is a 2-slot card that’s slim enough to let you actually use the slot below. Its diminutive size means it should fit in most systems without issue. Zotac says it should fit in “99%” of chassis, though you should always check your case’s specs before making a purchase.
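A quick conversion confirms the inch figures and shows how much of a second slot the card’s thickness uses. This sketch assumes the standard 0.8-inch (20.32mm) expansion-slot pitch and treats the 35.3mm figure as the card’s full thickness, so the slot count is only a rough estimate.

```python
import math

MM_PER_INCH = 25.4
SLOT_PITCH_MM = 0.8 * MM_PER_INCH  # standard 0.8-inch expansion-slot spacing (20.32 mm)

# Card dimensions from the review, in millimeters.
length_mm, height_mm, thickness_mm = 158.5, 115.2, 35.3

inches = [round(mm / MM_PER_INCH, 1) for mm in (length_mm, height_mm, thickness_mm)]
print(" x ".join(f"{v:.1f}" for v in inches), "inches")  # 6.2 x 4.5 x 1.4 inches

slots = thickness_mm / SLOT_PITCH_MM
print(f"{slots:.2f} slot widths -> occupies {math.ceil(slots)} slots")
```

At roughly 1.74 slot widths, the card stays within a standard dual-slot footprint.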

At the budget level, video cards tend to forgo fancy shrouds and backplates to keep costs down. The Zotac card doesn’t stray from this theme, with a simple black plastic shroud and grey accents above and below the two fans. The black PCB is visible, sticking up about half an inch above the shroud. Overall, it’s an unassuming-looking card that will blend into most build themes. There’s no RGB lighting here either, and we wouldn’t expect it at this price point.

Connectivity consists of three ports: DisplayPort 1.4, HDMI 2.0b and dual-link DVI-D, which together can drive up to three displays. Multi-monitor setups may need adapters, depending on the monitors’ inputs. While this is a minor issue, I still like to see dual HDMI ports at this level, since HDMI is the most common connector on mainstream monitors and TVs.

Cooling the card are the two fans and a large but simple aluminum heatsink attached to the TU116 core. The GDDR6 memory is also cooled by this monolithic heatsink, making contact through thick thermal pads, while the 3+1-phase VRM has its own small heatsink covering all of the MOSFETs. We can’t say this is the best solution, but at this price point we don’t expect direct-contact heatpipe coolers either, and setups like this are typical. The cooler keeps the card running within spec, though under load the pair of 70mm fans can easily be heard.

How We Tested Zotac’s GTX 1650 Super

Recently, we updated our GPU test system to a new platform, swapping the six-core Core i7-8086K for an eight-core Core i9-9900K. The CPU sits in an MSI MEG Z390 Ace motherboard, along with 2x16GB of Corsair DDR4-3200 CL16 RAM (CMK32GX4M2B3200C16). Keeping the CPU cool is a Corsair H150i Pro RGB AIO, while a 120mm Sharkoon fan provides general airflow across the test system. Our OS and game suite are stored on a single 2TB Kingston KC2000 NVMe PCIe 3.0 x4 drive.

The motherboard was updated to the latest BIOS available at the time (version 7B12v16, from August 2019). We set up the system with optimized defaults, then enabled the memory’s XMP profile to run it at its rated 3200 MHz CL16 specification. No other changes or performance enhancements were enabled. We used the latest version of Windows 10 (1903).

As time goes on, we will rebuild our results database on this test system. For now, we include GPUs that are close in performance to the card being reviewed: a Gigabyte GTX 1650 Gaming OC, a Zotac GTX 1660 Amp and an EVGA GTX 1660 Super SC Ultra, with an XFX RX 590 Fat Boy representing AMD. We’ve been waiting for weeks to test AMD’s new Navi-based RX 5500, but have only seen OEM versions so far.

Our current test suite consists of Tom Clancy’s The Division 2, Ghost Recon: Breakpoint, Borderlands 3, Gears 5, Strange Brigade, Shadow of the Tomb Raider, Far Cry 5, Metro Exodus, Final Fantasy XIV: Shadowbringers, Forza Horizon 4 and Battlefield V. These titles span a broad range of genres and APIs, which gives us a good idea of the relative performance difference between the cards. We’re using driver build 441.20 for the Nvidia cards and Adrenalin 2019 Edition 19.11.3 for AMD.

We capture frames per second (fps) and frame-time data by running OCAT during our benchmarks, and we use GPU-Z’s logging to record clock speed, fan speed, temperature and power. We’ll resume using the Powenetics-based system from previous reviews as soon as the equipment is ready.
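Frame-time capture tools like OCAT log one time per frame, so each run has to be reduced to summary numbers. A common approach is an average frame rate plus a 99th-percentile figure that reflects the slowest frames. The sketch below is illustrative only, not necessarily the exact processing used for this review: it takes per-frame times in milliseconds (CSV parsing is left out), uses the nearest-rank percentile method, and the function name and sample data are hypothetical.

```python
from statistics import mean
from typing import Sequence, Tuple


def summarize_frame_times(frame_times_ms: Sequence[float]) -> Tuple[float, float]:
    """Return (average fps, 99th-percentile fps) for per-frame times in ms."""
    # Average fps = frames rendered / seconds elapsed = 1000 / mean frame time (ms).
    avg_fps = 1000.0 / mean(frame_times_ms)

    # Nearest-rank 99th percentile: the frame time 99% of frames are at or below.
    ordered = sorted(frame_times_ms)
    rank = (99 * len(ordered) + 99) // 100  # integer ceil(0.99 * n)
    p99_fps = 1000.0 / ordered[rank - 1]
    return avg_fps, p99_fps


# Hypothetical run: 95 smooth 10 ms frames plus 5 slower 25 ms frames.
avg, p99 = summarize_frame_times([10.0] * 95 + [25.0] * 5)
print(f"average: {avg:.1f} fps, 99th percentile: {p99:.1f} fps")
# average: 93.0 fps, 99th percentile: 40.0 fps
```

The example shows why both numbers matter: a handful of slow frames barely moves the average but drags the 99th-percentile figure well below it.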


Joe Shields is a staff writer at Tom’s Hardware. He reviews motherboards and PC components.


Mileta Cekovic

It would be interesting to see how the Radeon RX 5500 and especially the RX 5500 XT fare against the GTX 1650 Super, and what their prices will be.

With the GTX 1650 Super at $160, Nvidia has set AMD a serious challenge to beat this price/performance level.

King_V

This, I think, has definitely put AMD on notice. A little hard to say for certain, and I wish the RX 570 4GB and RX 580 8GB results were on the charts as well, but, depending on the game, it looks like the 1650 Super is at least equal to the RX 570, and sometimes matches the RX 590.

Given the $160 price point, it seems the RX 570, 580, and 590 are going to have to adjust prices downward.

Further, I'm thinking that AMD is not going to be able to get by with only matching the RX 580's performance with the RX 5500. Or, if they do, they will have to definitely undercut the 1650 Super's price, which will put even further downward pressure on the Polaris cards.

On the other hand, I don't know what to think about the RX 5500 - at one point it was stated to be a 150W card, then it was 110W. What little performance data we have, and it's precious little data, pegs it at around RX 580 performance. I'm hoping this is all a case of AMD holding their cards close to their chest, but, as it stands, that's not overly promising, given what the 1650 Super offers in performance... and AMD should worry.