AMD Radeon RX Vega 56 8GB Review

Overclocking, Underclocking, Efficiency & Temperature

Frequencies & Corresponding Game Performance

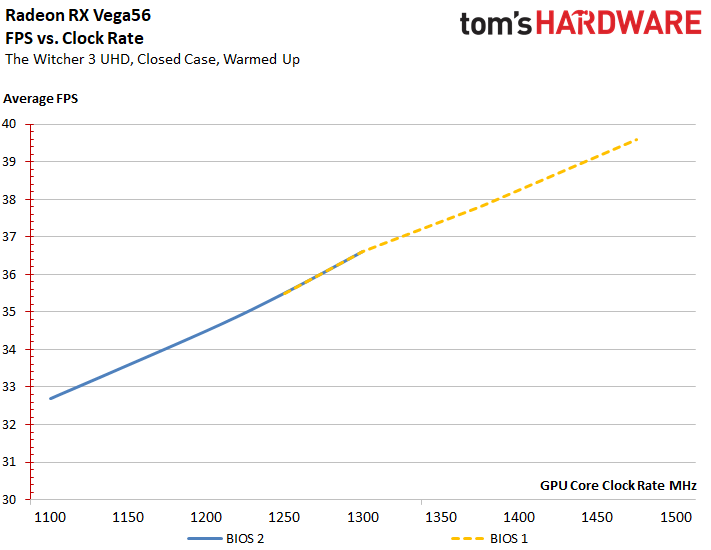

Again, we're using The Witcher 3 at 4K for our worst-case scenario. This yields an average of 36.6 FPS at 1305 MHz using the Balanced mode's stock settings, giving us a good 100% baseline.

Switching to the secondary BIOS and dropping the power limit by 25% results in a 1101 MHz clock rate and a surprisingly high 32.7 FPS average. For roughly 84% of the default GPU frequency, we measure 89% of the default configuration's performance. Not bad.

Conversely, pushing the card to its limit via overclocking gets us almost 1500 MHz and 39.6 FPS. So, a frequency increase of almost 15% yields just 8% more gaming performance.

From the lowest to the highest average clock rate, we observe an increase of 36.4%. However, gaming performance goes up by just 21.1%. That's not great scaling, but it's not terrible either. What's more important is the power consumption accompanying those numbers. Unfortunately, this is where the data gets ugly.
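For readers who want to check the scaling arithmetic, here is a minimal Python sketch using only the figures quoted above. The overclocked clock rate is given only as "almost 1500 MHz", so the 1500 used here is an approximation, and the helper names are ours. Expect the clock percentages to differ slightly from the rounded values in the text.

```python
# Clock rates (MHz) and average frame rates (FPS) quoted in the text.
# Assumption: "almost 1500 MHz" is approximated as 1500 for the overclock.
measurements = {
    "underclocked": (1101, 32.7),
    "stock": (1305, 36.6),
    "overclocked": (1500, 39.6),
}


def percent_change(new, old):
    """Relative change from old to new, in percent."""
    return (new / old - 1.0) * 100.0


def scaling(from_name, to_name):
    """Return (clock change %, FPS change %) between two configurations."""
    clock_a, fps_a = measurements[from_name]
    clock_b, fps_b = measurements[to_name]
    return percent_change(clock_b, clock_a), percent_change(fps_b, fps_a)


for a, b in [
    ("stock", "underclocked"),
    ("stock", "overclocked"),
    ("underclocked", "overclocked"),
]:
    clock_pct, fps_pct = scaling(a, b)
    print(f"{a} -> {b}: clock {clock_pct:+.1f}%, FPS {fps_pct:+.1f}%")
```

In every comparison, the frame rate changes by a smaller percentage than the clock rate does, which is the sub-linear scaling described above.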

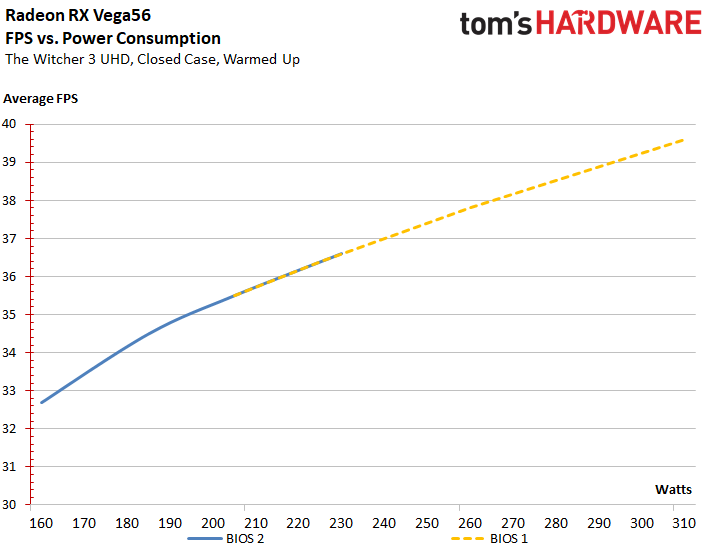

Using the secondary BIOS with a power limit reduced by 25% gets us 159.4W and 32.7 FPS. Compared to the stock settings, just 71.6% of the power consumption serves up 89% of the gaming performance.

Going back to a worst-case scenario, the overclocked card averages 39.6 FPS, but consumes 310.6W doing so. Trading 39.5% more power consumption for an 8% higher frame rate isn’t acceptable. The efficiency curve drops off rapidly with increasing clock rates and the additional power those frequencies necessitate.

The spread between the lowest and the highest power consumption is a massive 95% or so (159.4W versus 310.6W), while gaming performance increases by only 21.1%. Almost double the power consumption for one-fifth more gaming performance is what we'd call catastrophic.
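The same comparison can be expressed as frames per second per watt. The sketch below is illustrative only. The stock power draw is not quoted directly in this section, so it is derived here from the statement that 159.4W is 71.6% of stock consumption. Treat that value, and the variable names, as assumptions.

```python
# Power (W) and average FPS quoted in the text.
UNDERCLOCKED_W = 159.4
OVERCLOCKED_W = 310.6
# Assumption: stock power is back-calculated from "71.6% of the power
# consumption"; the section does not state it explicitly.
STOCK_W = UNDERCLOCKED_W / 0.716

configs = [
    ("underclocked", UNDERCLOCKED_W, 32.7),
    ("stock", STOCK_W, 36.6),
    ("overclocked", OVERCLOCKED_W, 39.6),
]


def fps_per_watt(power_w, fps):
    """Frames per second delivered per watt consumed."""
    return fps / power_w


efficiency = {name: fps_per_watt(p, f) for name, p, f in configs}

for name, value in efficiency.items():
    print(f"{name}: {value:.3f} FPS/W")

# Ratio of highest to lowest power draw versus the matching FPS ratio.
print(f"power ratio: {OVERCLOCKED_W / UNDERCLOCKED_W:.2f}x")
print(f"FPS ratio:   {39.6 / 32.7:.2f}x")
```

The FPS/W figure falls at each step up in power, which is another way of seeing how steeply efficiency drops off.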

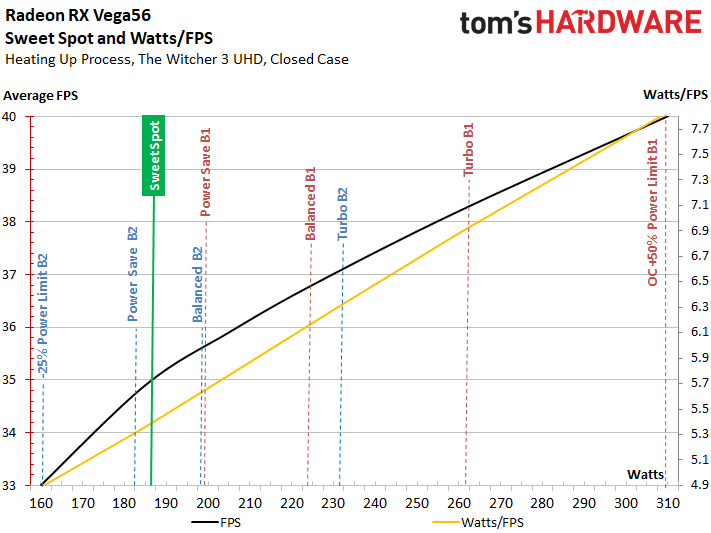

Efficiency & Sweet Spot

If all of the results we generated are combined, and the curves' start and end points are given a common basis, then we get an FPS/watt ratio illustrating the relationship between gaming performance and power consumption. The point at which the distance between both curves is at its greatest represents the so-called sweet spot. This is where the card operates at peak efficiency, right before efficiency starts to decline.

Radeon RX Vega 56's sweet spot seems to be right around 188W to 190W, according to our measurements. Strangely, AMD placed three settings close to it, but didn’t place a single one right on it.
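The sweet-spot idea can be sketched in a few lines of Python. This is a rough illustration of the approach as described, not the exact procedure behind the chart. It rescales both curves to a common 0-to-1 range and picks the point with the largest gap between them. Only the three data points quoted in the text are used, and the stock power is derived from the 71.6% figure, so the result is necessarily coarse. The 188W to 190W estimate above presumably relies on the finer-grained measurements shown in the charts.

```python
def normalize(values):
    """Rescale values so the first maps to 0.0 and the last to 1.0."""
    lo, hi = values[0], values[-1]
    return [(v - lo) / (hi - lo) for v in values]


def sweet_spot(points):
    """Find the point with the largest gap between normalized FPS and power.

    points: list of (power_w, fps) tuples, sorted by ascending power.
    Returns (power_w, gap) for the best point.
    """
    powers = [p for p, _ in points]
    fps_norm = normalize([f for _, f in points])
    power_norm = normalize(powers)
    gaps = [f - p for f, p in zip(fps_norm, power_norm)]
    best = max(range(len(points)), key=lambda i: gaps[i])
    return powers[best], gaps[best]


# Assumption: only three (power, FPS) pairs are available from the text;
# the middle power value is back-calculated as 159.4 W / 0.716.
points = [
    (159.4, 32.7),
    (159.4 / 0.716, 36.6),
    (310.6, 39.6),
]

power_w, gap = sweet_spot(points)
print(f"largest gap {gap:.3f} at about {power_w:.1f} W")
```

With only three points, the end points have a gap of zero by construction, so the function can only ever select the stock configuration. Feeding it a denser set of measurements would narrow the estimate.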

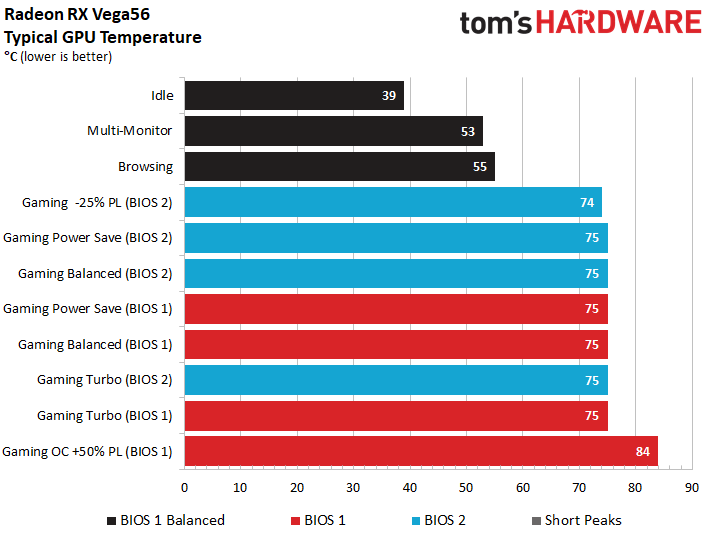

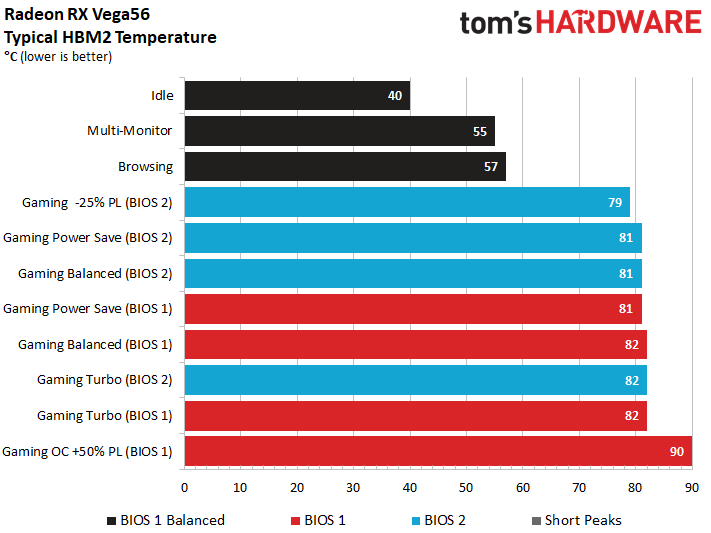

GPU & Memory Temperatures

The temperatures are very similar across all six power profiles. This is due to the fan's aggressive controller, which responds quickly when power consumption increases. The combination of overclocking and a power limit adjustment of +50% proves to be too much, though. AMD's thermal solution can't keep up, and our measurements show an additional 10°C compared to the predefined settings.

At maximum load, the HBM2 runs up to 6°C hotter than the GPU on average. Our overclocking efforts pushed it beyond the 90°C mark, leaving us a little nervous.

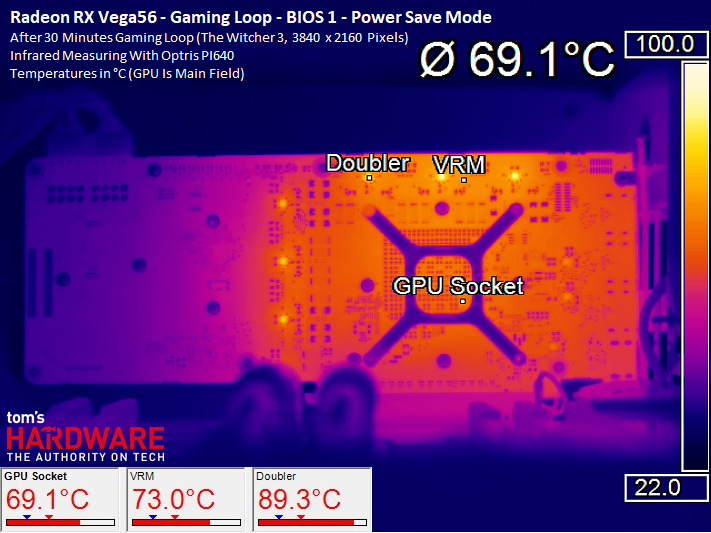

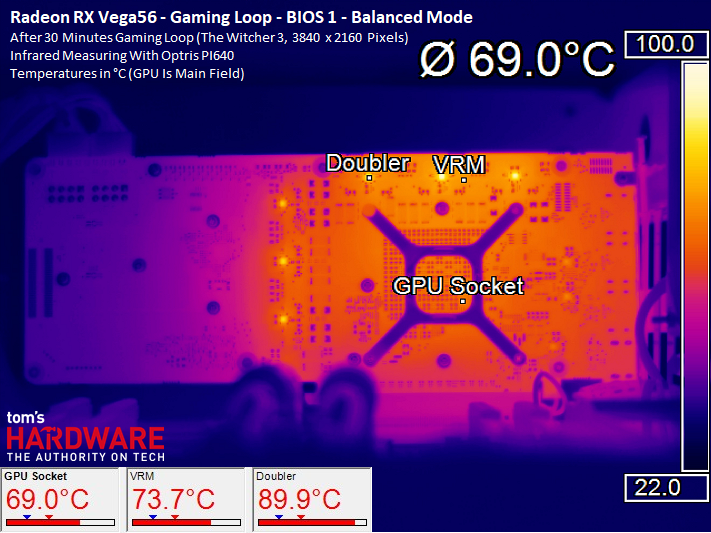

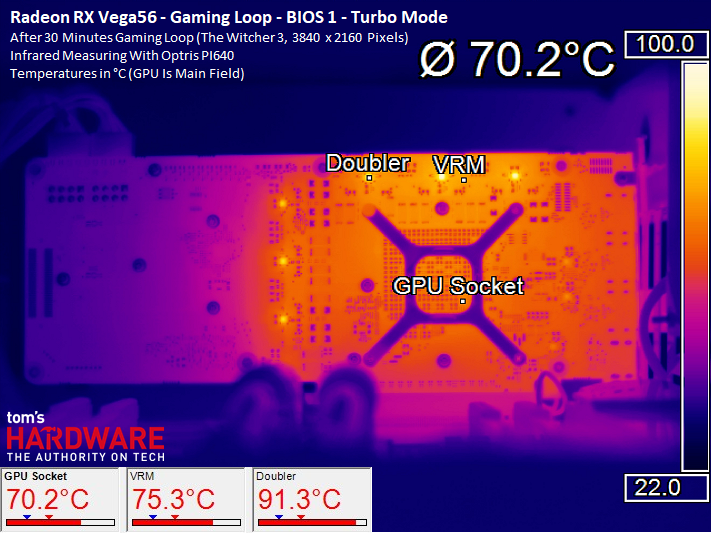

Primary BIOS Thermal Images

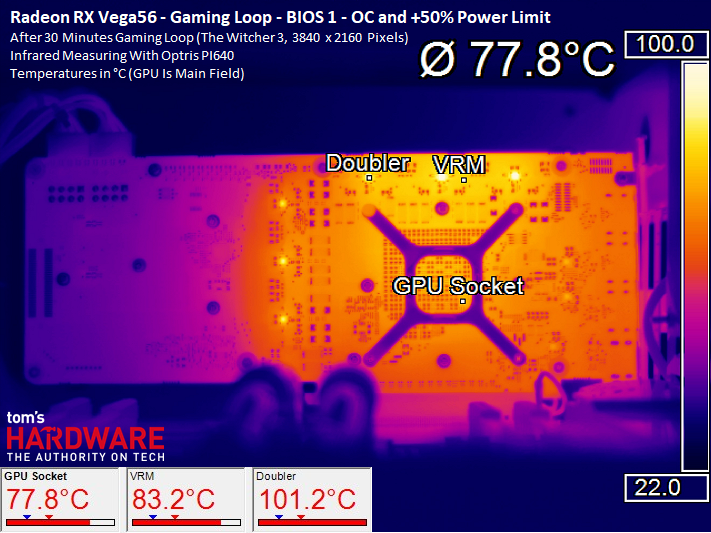

For each of the two BIOSes, we provide thermal images for all three driver-based profile settings. These show how the card’s power consumption and fan speed affect the temperatures of various on-board components.

As mentioned, though, all of the temperatures are fairly similar until we get to the overclocked configuration. This is due to the fan controller’s ability to maintain a target temperature between 74 and 75°C, regardless of what that means to our ears.

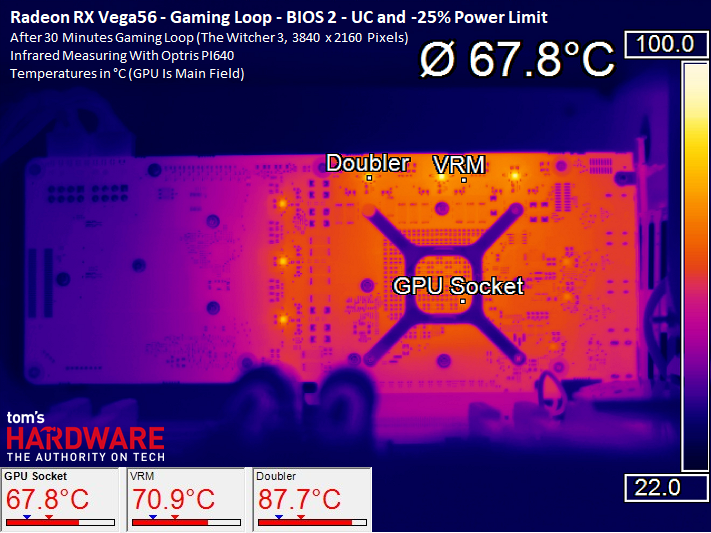

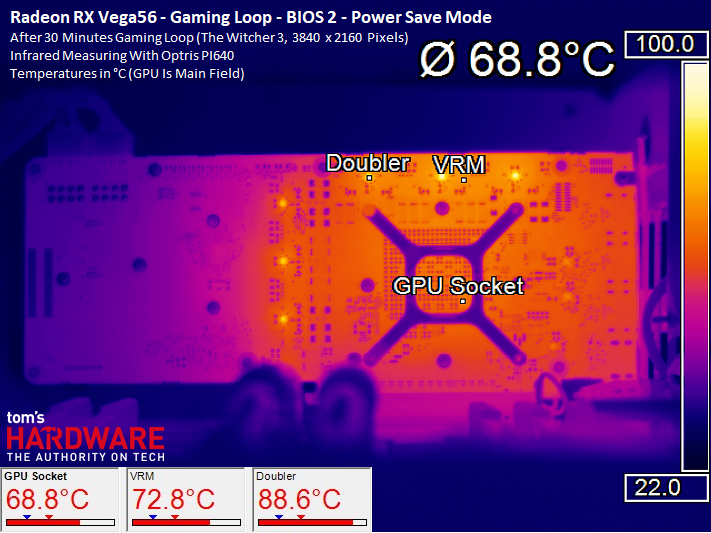

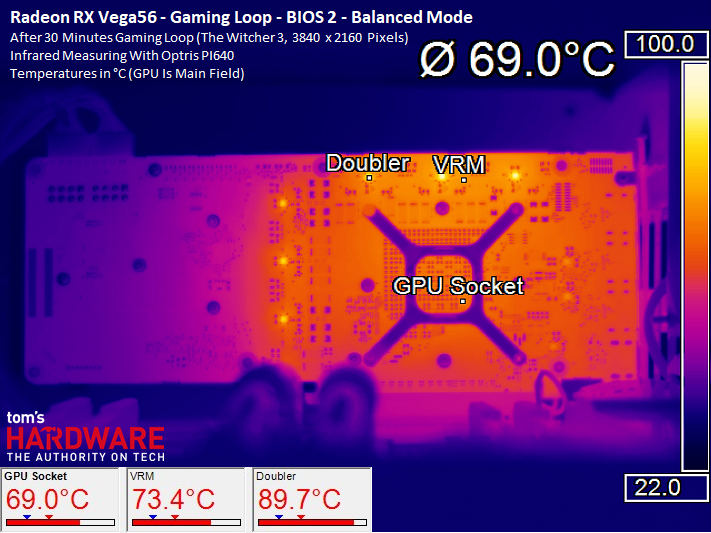

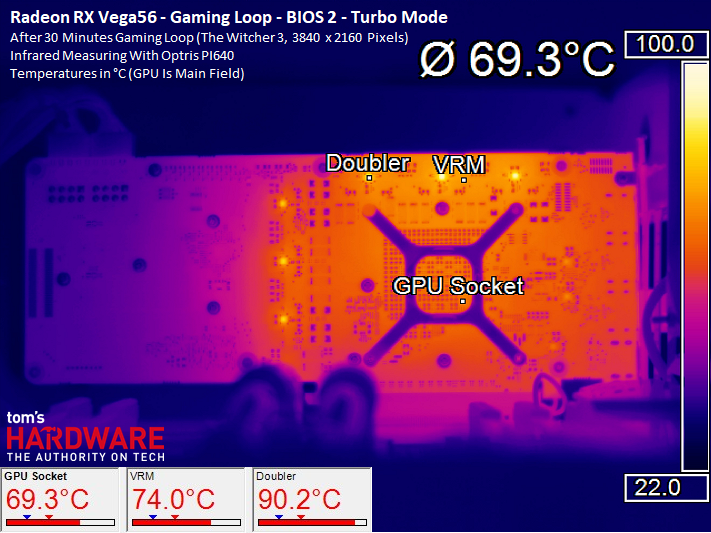

Secondary BIOS Thermal Images

We start with the underclocked settings, resulting in 160W of power consumption. At that level, we notice a deviation from the six driver profile-based average temperatures as well. The difference isn’t as pronounced as it was for the overclocked setting, though.

Similar to what we observed from the primary BIOS, the other temperatures increase only slightly as power consumption rises; they're kept in check by an especially responsive fan.

kjurden What a crock! I didn't realize that Tom's hardware pandered to the iNvidiot's. AMD VEGA GPU's have rightfully taken the performance crown!

Martell1977 Vega 56 vs GTX 1070, Vega goes 6-2-2 = Winner Vega!

Good job AMD, hopefully next gen you can make more headway in power efficiency. But this is a good card, even beats the factory OC 1070.

Wisecracker Thanks for the hard work and in-depth review -- any word on Vega Nano?

Some 'Other Guys' (Namer Gexus?) were experimenting on under-volting and clock-boosting with interesting results. It's not like you guys don't have enough to do, already, but an Under-Volt-Off Smack Down between AMD and nVidia might be fun for readers ...

pavel.mateja No undervolting tests?

https://translate.google.de/translate?sl=de&tl=en&js=y&prev=_t&hl=de&ie=UTF-8&u=https://www.hardwareluxx.de/index.php/artikel/hardware/grafikkarten/44084-amd-radeon-rx-vega-56-und-vega-64-im-undervolting-test.html&edit-text=

10tacle Quoting kjurden: "What a crock! I didn't realize that Tom's hardware pandered to the iNvidiot's. AMD VEGA GPU's have rightfully taken the performance crown!"

Yeah Tom's Hardware does objective reviewing. If there are faults with something, they will call them out like the inferior VR performance over the 1070. This is not the National Inquirer of tech review sites like WCCTF. There are more things to consider than raw FPS performance and that's what we expect to see in an honest objective review.

Guru3D's conclusion with caveats:

"For PC gaming I can certainly recommend Radeon RX Vega 56. It is a proper and good performance level that it offers, priced right. It's a bit above average wattage compared to the competitions product in the same performance bracket. However much more decent compared to Vega 64."

Tom's conclusion with caveats:

"Even when we compare it to EVGA’s overclocked GeForce GTX 1070 SC Gaming 8GB (there are no Founders Edition cards left to buy), Vega 56 consistently matches or beats it. But until we see some of those forward-looking features exposed for gamers to enjoy, Vega 56’s success will largely depend on its price relative to GeForce GTX 1070."

^^And that's the truth. If prices of the AIB cards coming are closer to the GTX 1080, then it can't be considered a better value. This is not AMD's fault of course, but that's just the reality of the situation. You can't sugar coat it, you can't hide it, and you can't spin it. Real money is real money. We've already seen this with the RX 64 prices getting close to GTX 1080 Ti territory.

With that said, I am glad to see Nvidia get direct competition from AMD again in the high end segment since Fury even though it's a year and four months late to the party. In this case, the reference RX 56 even bests an AIB Strix GTX 1070 variant in most non-VR games. That's promising for what's going to come with their AIB variants. The question now is what's looming on the horizon in an Nvidia response with Volta. We'll find out in the coming months.

shrapnel_indie We've seen what they can do in a factory blower configuration. Are board manufacturers allowed to take 64 and 56 and do their own designs and cooling solutions, where they can potentially coax more out of it (power usage aside)? Or are they stuck with this configuration as Fury X and Fury Nano were stuck?

10tacle No, there will be card vendors like ASUS, Gigabyte, and MSI who will have their own cooling. Here's a review of an ASUS RX 64 Strix Gaming:

http://hexus.net/tech/reviews/graphics/109078-asus-radeon-rx-vega-64-strix-gaming/

pepar0 Quoting the article teaser: "Radeon RX Vega 56 should be hitting store shelves with 3584 Stream processors and 8GB of HBM2. Should you scramble to snag yours or shop for something else?"

Will any gamers buy this card ... will any gamers GET to buy this card? Hot, hungry, noisy and expensive due to the crypto currency mining craze was not what this happy R290 owner had in mind.