The Week In CPUs And Storage: Intel Shipping Purley (Skylake-EP) Xeons, Just Not To You

Intel professed its love for all things AI at its inaugural AI Day. Intel chose to christen the event with an unexpected announcement that it is already shipping the Purley generation, otherwise known as the Skylake-EP Xeons, to its besties and no one else. The company also announced its new Lake Crest and Knights Crest products. We'll get down into the Purley and AI weeds after the recap.

We've been on a gaming laptop review binge of late, with the ASUS G752VS, the MSI GP62MVR Leopard Pro, and the MSI GT73VR Titan Pro-201 all having made the trip through our extensive testing regimen. If you choose to go the more traditional desktop route, we have also updated our Best CPUs article with the latest deals. We may be on the cusp of Intel's Kaby Lake and AMD's Zen releases, but with Black Friday looming, there are sure to be plenty of deals on current, or even previous-generation, CPUs that will get the job done at a great price.

If desktop PCs and gaming laptops don't provide enough horsepower, perhaps a bleeding-edge multi-billion dollar supercomputer cluster will do the trick. Supercomputing 2016 (SC16) provided plenty of news on the latest and literally the greatest computers on the planet. Even the fastest clusters fall prey to the perils of slow storage, so HPC systems often use burst buffers to address the problem. Seagate contends that it can fix its slow HDD storage with some of its fast flash whilst eliminating costly and complex buffers, but if you have to ask how much it costs...well, you know the drill.

Google is lighting up neural networks to improve its translation service for nine languages as it plods its way toward all 103 supported languages. Google may not have the Babel Fish yet, but it's getting there.

The OpenAI group aims to keep the world free from the evils of proprietary AI, some of which may be used for nefarious purposes. The OpenAI nonprofit joined forces with Microsoft Azure to leverage the power of Azure's vast phalanx of Nvidia K80 GPUs to train its AI algorithms. Here's hoping OpenAI can stop Skynet before it gains consciousness.

Several companies decided this is the week of the external storage device, so Seagate, ADATA, Plextor, and Buffalo all released new products. Speed or capacity, take your pick, but you can't have both. We learned that the Seagate 5TB HDD is actually an SMR device, so it's slow even by HDD standards. For some reason, Seagate continues to avoid labeling its consumer SMR HDDs for what they are: Shingled Magnetic Recording drives. Hopefully, it will rectify that soon. The Seagate 5TB HDD takes the capacity crown, while the smaller, speedier external SSDs run circles around it.

If all of that talk of slow storage has got you down, never fear, Samsung's 960 EVO is here. The M.2 NVMe SSD brings enough speed to set hearts aflutter, with up to 3.2GB/s of sequential read speed and 380,000 random read IOPS. Unfortunately, there is a profound performance differential between the 250GB and 1TB models. Thank goodness for reviewers. We set the record straight in the Samsung 960 EVO review, and also updated the Best SSDs list just in time for the holidays.


Intel Holds First Artificial Intelligence Day, Breaks Out The Wet Blanket

Intel had a big presence at SC16, as expected, and made a slew of announcements. These included a new and overpriced Xeon E5-2699A (a 4.8% Linpack gain for a 20% price increase), an FPGA-powered Deep Learning Inference Accelerator, and word that the Omni-Path-infused Knights Landing models are shipping, which will come in handy as its silicon photonics products evolve.
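Taken at face value, those two percentages mean the E5-2699A delivers less Linpack performance per dollar than the part it is compared against. A minimal sketch of that arithmetic, assuming both figures are measured against the same (unnamed) baseline Xeon and using only the relative numbers quoted above, not any absolute prices or scores:

```python
def relative_perf_per_dollar(perf_gain: float, price_increase: float) -> float:
    """Ratio of new to old performance-per-dollar, given fractional changes.

    Assumes both changes are relative to the same baseline part.
    """
    return (1 + perf_gain) / (1 + price_increase)


# 4.8% more Linpack performance for a 20% higher price
ratio = relative_perf_per_dollar(0.048, 0.20)
print(f"{ratio:.3f}")  # 0.873, i.e. roughly 12.7% less Linpack per dollar
```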

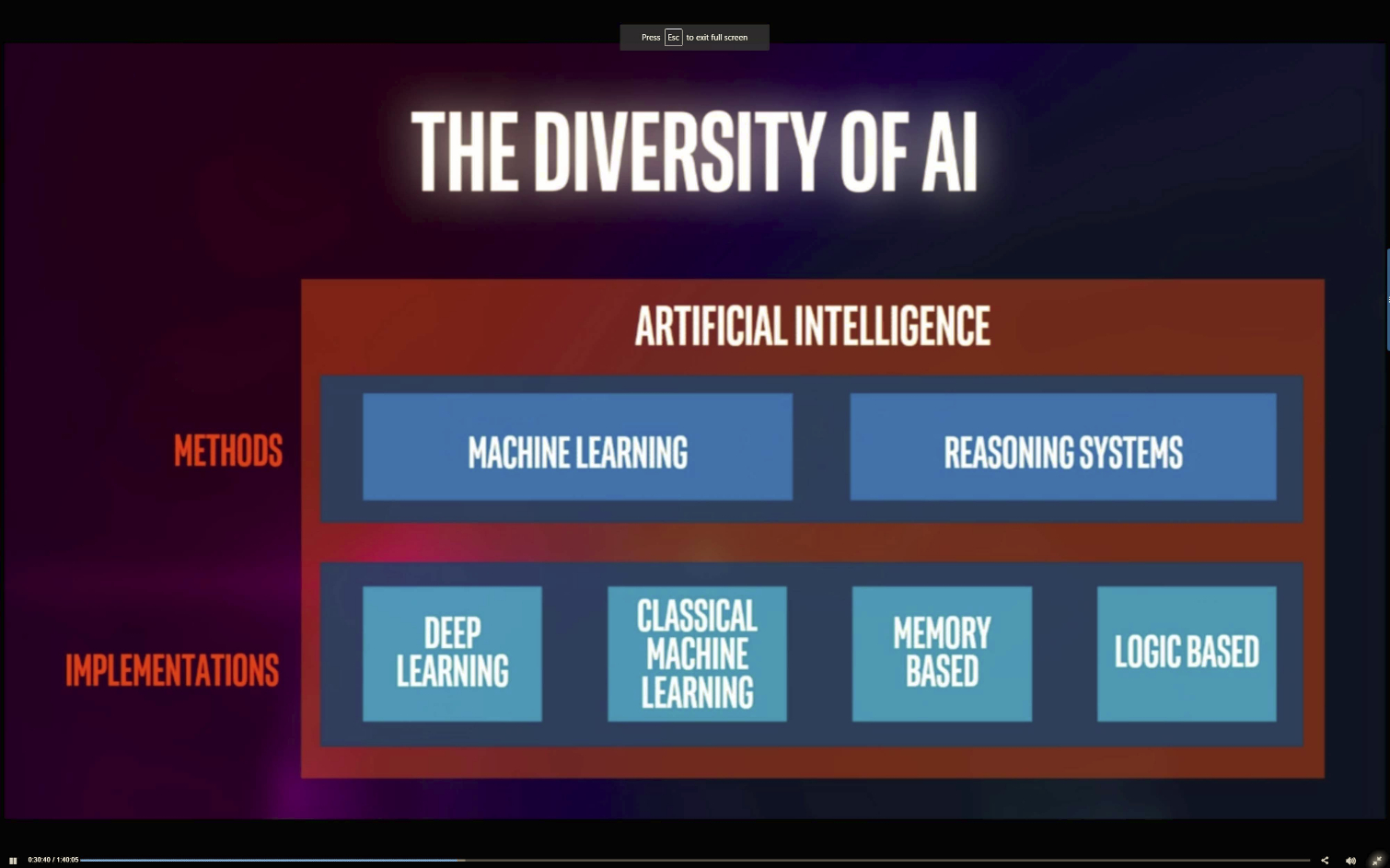

Intel piggybacked on SC16 with its first Artificial Intelligence Day, or "AI Day" for short, on November 17. The move to interrelated artificial intelligence, machine learning, and deep learning workloads is happening rapidly on devices that range from the smartphone in our hand to the data center. The shift to the new workloads is profound, and Intel predicts that the number of compute cycles dedicated to AI will expand 12x by 2020. Unfortunately for Intel, the normal CPU isn't the preferred platform for AI workloads; ASICs, GPUs, and FPGAs step into these roles quite nicely. Intel has a strategy to compete in all of these segments, which we'll come back to shortly.

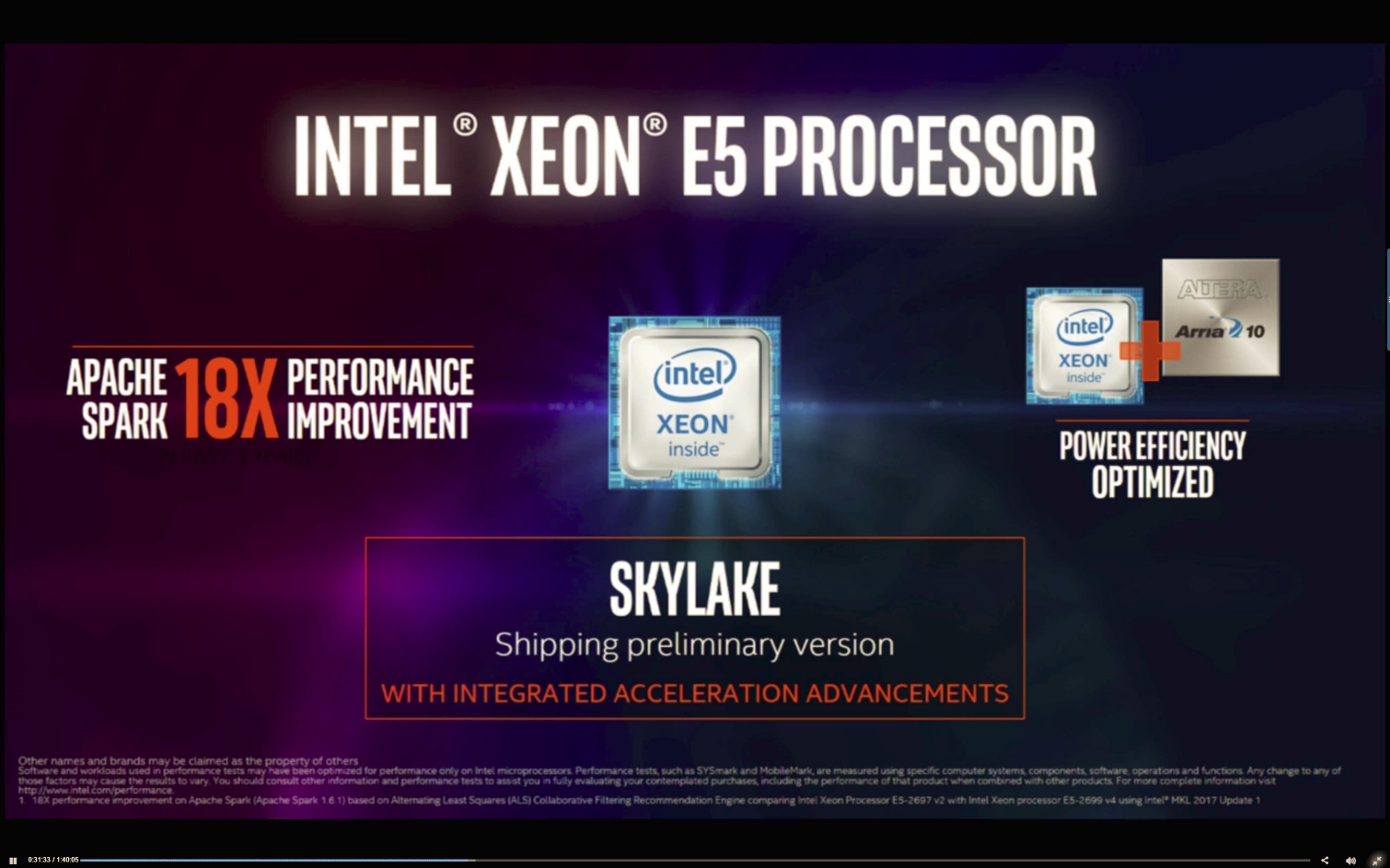

Diane Bryant, Executive Vice President and General Manager of Intel's Data Center Group, dropped a bombshell announcement that the company is already shipping Xeon E5 Skylake-EP, also known as the Purley platform, to several unnamed customers. Intel is delivering these early "preliminary production versions," with a targeted feature set, to its largest cloud service providers and HPC customers, none of which it identified. The Purley processors won't become available to the public until the middle of 2017, and Bryant noted that all major OEMs are working on full-featured systems that they will release at that time.

We've known for several years that Intel bakes specialized features into the existing Xeon lines for hyperscale customers, such as Facebook, Google, Amazon, Tencent, and Baidu, that Intel doesn't activate in the normal SKUs. Bryant noted that the preliminary Skylake versions come with integrated acceleration advancements, and she also spoke about pairing FPGAs with the processors to lower latency and increase performance. This admission isn't entirely shocking; Intel began displaying working CPU silicon with integrated FPGAs in 2014 and also had Skylake-EP Xeon demos with integrated FPGAs at SC16. We've also seen Purley motherboards in the wild.

Shipping some versions of the pending Xeons to customers in volume ahead of schedule, and on an exclusive basis, raises questions about whether Intel is giving some customers an unfair advantage over others. I think the argument has merit. Google, for instance, announced an enhanced partnership with Intel at the AI Day event, and it likely has access to the new more efficient and faster Purley for its search and cloud services. Theoretically, if Intel then denied that same access to Microsoft, which is a "competitor" to Google with Bing and Azure, you could view that as preferential treatment.

The same theory also holds true with Chinese hyperscalers, such as Baidu and Tencent, which have operations that rival the best the U.S. has to offer. It would raise ethical questions if Intel were to favor one Chinese hyperscaler over another, or even more alarming, perhaps prioritize one region or country over another.

Questions abound over Intel's release strategy, and most of them aren't good. Intel is unquestionably in the position to be a kingmaker, but which king does it make, and should it make one in the first place?

Xeon Phi Knights Mill

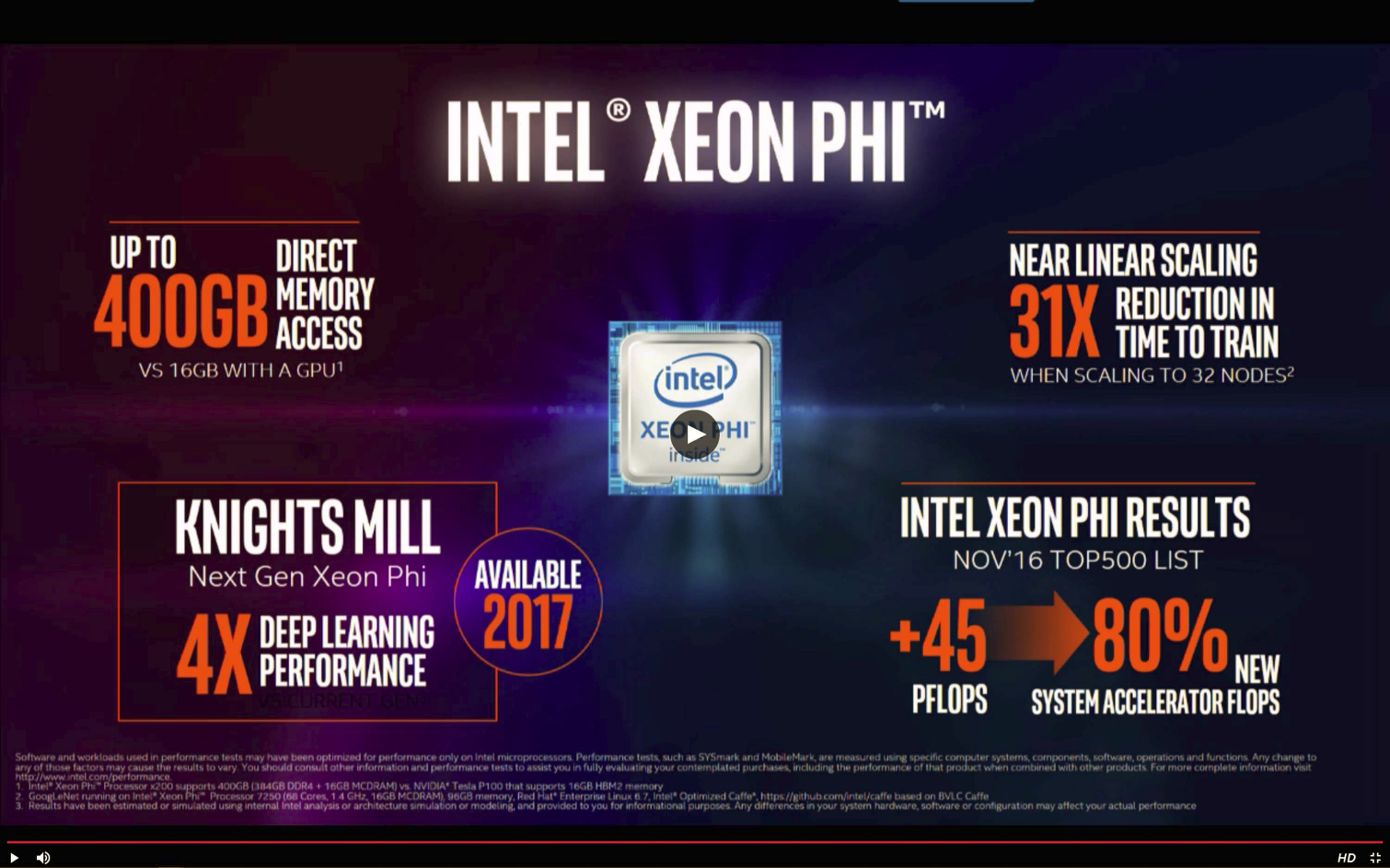

At IDF 2016, Bryant pre-announced the Xeon Phi Knights Mill, which is the next iteration of the Knights Landing platform, but provided very little context.

Intel opened up a bit more at AI Day and claimed that Knights Mill's single- and half-precision support helps power up to 4x the deep learning performance of its predecessor. Knights Mill will be available in 2017.

Intel also noted that Xeon Phi provides direct access to up to 400GB of memory, which is a tangible advantage over the current GPU limitation of 16GB. The current Knights Landing products feature 16GB of on-package MCDRAM (Multi-Channel DRAM), a high-bandwidth memory developed with Micron, but we can imagine that Intel will arm future Xeon Phi products (possibly Knights Mill) with denser 3D XPoint. We already know the company is infusing the second-generation Purley products with 3D XPoint, so adding it to Xeon Phi products would be a natural progression of the technology.

Intel claimed that Xeon Phi offers a 31x reduction in time to train when scaling to 32 nodes, which is near-linear scaling (a rare feat with any workload). Of course, there are far more parameters, such as power efficiency and cost, that come into play, but scalability is a plus.
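To put "near-linear" in numbers: parallel efficiency is commonly defined as the achieved speedup divided by the number of nodes, where 1.0 (100%) is perfect linear scaling. A minimal sketch, assuming Intel's 31x figure is measured against a single-node baseline (Intel did not detail its methodology):

```python
def scaling_efficiency(speedup: float, nodes: int) -> float:
    """Parallel efficiency: achieved speedup divided by ideal (linear) speedup."""
    if nodes <= 0:
        raise ValueError("nodes must be positive")
    return speedup / nodes


# Intel's claim: 31x shorter time to train on 32 nodes (single-node baseline assumed)
eff = scaling_efficiency(31, 32)
print(f"{eff:.1%}")  # prints 96.9%
```

An efficiency of about 97% means only around 3% of the ideal 32x speedup is lost to communication and synchronization overhead, which is why the claim counts as near-linear.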

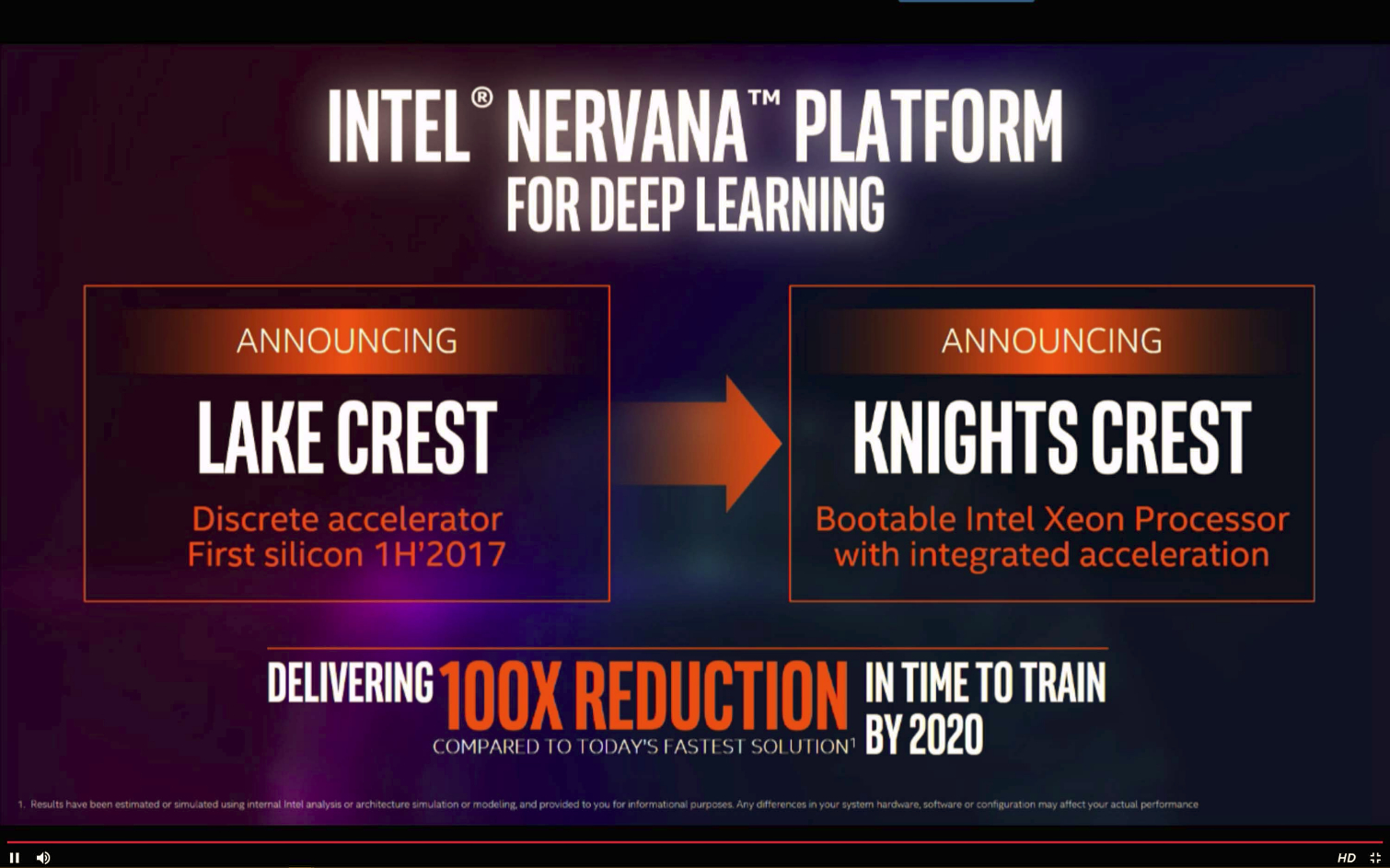

And Now...Drumroll Please...Lake Crest And Knights Crest

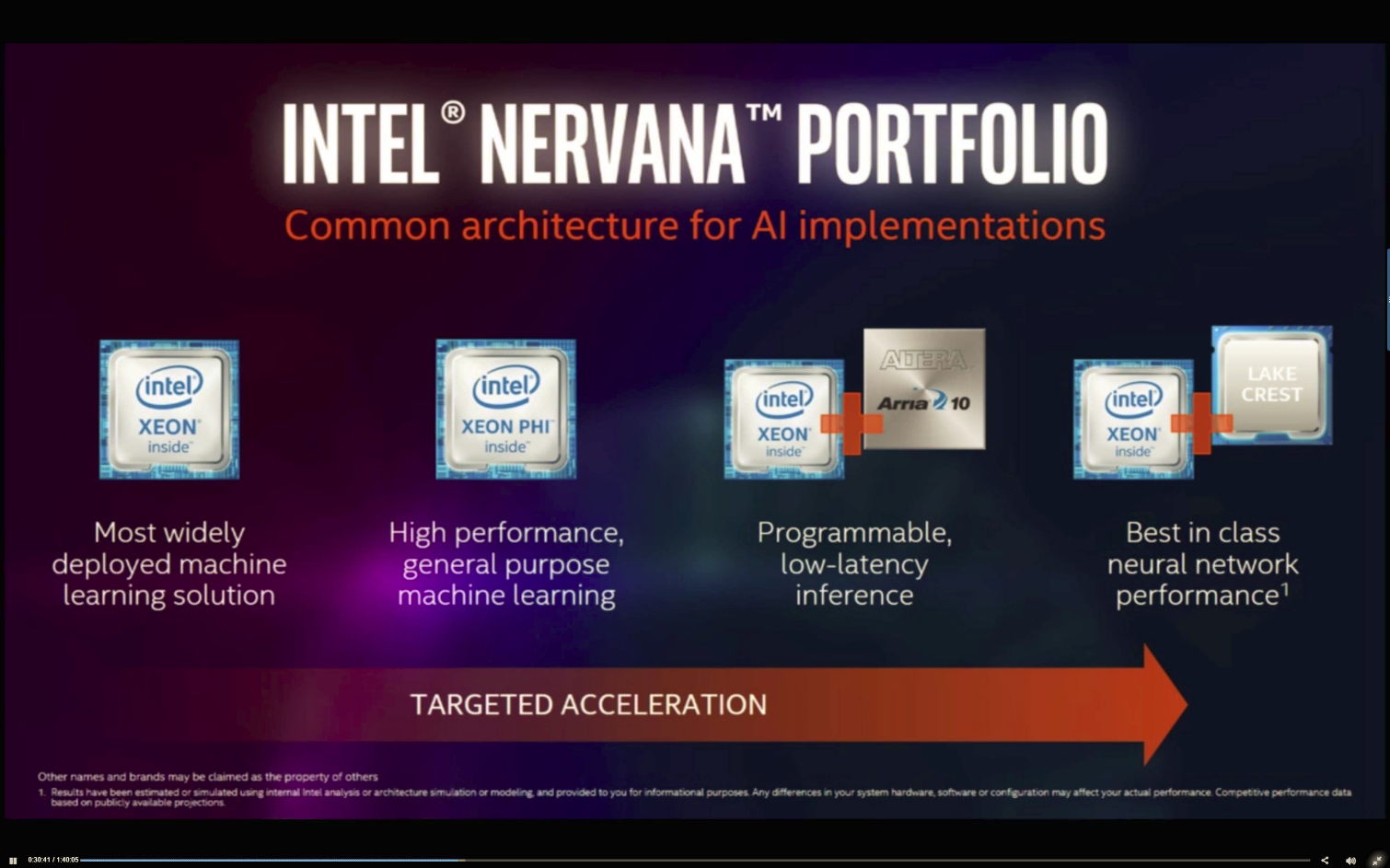

Intel announced that its first Lake Crest silicon would come to market in the first half of 2017. The Lake Crest accelerator is born of Intel's Nervana acquisition, and will provide "best in class neural networking performance at launch." Intel didn't provide any further details, though we assume this is the Intel-branded Nervana Engine.

Intel also announced Knights Crest, which merges a bootable Xeon with Intel's "Best-in-class deep learning engine" in one solution. Intel provided no further details, but this could be a merging of a Nervana ASIC and Xeon CPUs. Speculation is sure to abound, but we know Intel has a blended Xeon/FPGA in the hopper. FPGAs are great for rapid re-programmability, but ASICs are more efficient, and a Xeon/ASIC combination covers the remainder of the bases.

AI on IA

Intel's "Artificial Intelligence on Intel Architecture" (AI on IA) messaging is indicative of the company's goals. The world of computing, at all levels, is shifting to AI and all of the various components that entails. AI is a rapidly evolving space, so it is best to have a solution to address every aspect, and that includes both hardware and software. This thought obviously isn't lost on Intel.

Intel already has the CPU portion well under control, which goes without saying, but CPUs aren't the primary engine behind most AI workloads. Intel's $16.7 billion Altera acquisition brings FPGAs under its umbrella, while its $400 million Nervana acquisition brings ASICs and software into the equation. Intel also has its Movidius acquisition pending for an undisclosed sum, and it purchased Saffron Technology for an undisclosed sum in October 2015. Intel's roster is set.

Intel faces intense competition from Nvidia, AMD, and IBM, among others, in the vast AI market. The company is clearly lining up its options to keep its stranglehold on the data center alive and well. Intel is also pushing its AI strategy down into smaller devices at the edge, which meshes very well with its newfound love for everything IoT. One can see the big picture emerging already, and if Intel executes, it's a Mona Lisa for shareholders.

This year's inaugural Intel AI Day was a small affair, but my money says next year's event will be much larger.

Paul Alcorn is the Editor-in-Chief for Tom's Hardware US. He also writes news and reviews on CPUs, storage, and enterprise hardware.

-

bit_user I can understand if general release of Skylake-EP got held back because some features aren't yet ready for prime time. I just hope they had an open & fair bidding process for their customers who were interested in the specialized SKUs.

I still think the delay sucks almost as much as the rumors that Kaby Lake-X will have only 16 PCIe lanes, even though it'll share the same socket 2066 as the 28/44-lane Skylake-X. So, if you're only going to get 16 lanes and 4 cores, why would you even put a Kaby Lake in that socket?

Cerunnos This talk of "preferred customers" is quite absurd. There have been and always will be "customized" SKUs for certain customers. These are simply customized chips. With FPGAs built into the CPU, each chip can then be modified to suit a particular demand. It isn't as if Intel isn't selling them to customers; it's that what it sells to each customer is most likely tailored to favor a certain type of workload (most likely defined by the customer). Sometimes the customer may actually work with Intel on implementing a specific design. These designs will most likely never be made available to other parties, because they are joint projects with a ton of legal restrictions.

For example, Microsoft and Sony both set the specifications for AMD to carry out for their console SoCs. AMD customized the chip for each party according to what it wanted. Using the same logic here, you could say that AMD shafted Microsoft with the reduced GPU horsepower, even though it clearly was Microsoft's decision.

Paul Alcorn, replying to Cerunnos:

The point is not that they sell customized versions; that is well known and has gone on for a few years (as noted in the article).

The concern is the length of time that they are offering exclusivity on the new architecture (six months or more, from the outward appearance of it) and the limited number of customers that can obtain it. Remember, Intel controls anywhere from 94% to 99.5% of the data center, depending upon which analyst firm you believe. That gives it a considerable amount of power, actually an unprecedented amount of power.


Cerunnos, replying to Paul Alcorn:

Skylake-EP is most likely available to anyone that is already an Intel partner (as in, they buy from Intel in medium to large quantities). This type of seeding is also not unique to the Purley platform, but what is special is that there are more customized versions tailored to each order.

We've been working on the Purley platform for some time already, and the quantities we purchase from Intel are very low compared to those of any of the firms listed in the article.

samopa Article says:

Theoretically, if Intel then denied that same access to Microsoft, which is a "competitor" to Google with Bing and Azure, you could view that as preferential treatment.

Question:

Is the USA denying the same access to its technological advances (such as the F-22 Raptor) to other countries (such as North Korea) also preferential treatment? (It allows access to its "ally" countries; does that also make it "unfair" competition?)

Paul Alcorn, replying to samopa:

Well, matters of national security, or things under the guise of such (I imagine at times), are a whole 'nother ballpark. However, lawsuits have been filed over an operating system vendor with a near-monopoly pushing its own web browser. It's a bit different when it falls into that category, though I am no law professor and cannot untangle that for you with any authority :)


bit_user, replying to samopa's question:

...where to start. Well, the reason this is even a cause for concern is that the US has laws designed to prevent unfair business practices, since such practices could disadvantage consumers.

Now, when you're talking about selling weapons to foreign countries, there's the opposite concern. We don't want to sell them to anyone who might use them against us or our allies.

It's like comparing apples and oranges. They're different situations, with different laws and incentives.


Paul Alcorn, replying to a reader who quoted "multi-billion dollar supercomputer cluster" and asked "...is where?":

Actually quite a few in China now, as well, but a bunch here in the US. The Sunway TaihuLight is actually the fastest in the world now, with 10,649,600 CPU cores!!! It was built with China-developed RISC chips, largely because of US export restrictions from CFIUS.

It's so odd: Intel can't sell some chips in China, but IBM and AMD can license their tech, and both of those are now being built in China. I still can't figure out how that is allowed, or how it makes sense.

bit_user, replying to Paul Alcorn:

On "quite a few in China now, as well, but a bunch here in the US": Specifically multi-$B?

On the Sunway TaihuLight: They just accelerated an existing project. It's only 28 nm, and those cores are really more akin to GPU cores, as well. They're not general-purpose programmable, like a Xeon would be. Still, impressive.

On Intel being unable to sell some chips in China: That restriction was merely punitive. China did something we didn't like, such as taking out GitHub with their Great Firehose or hacking Google, and we responded by banning the sale of a few CPU SKUs to them. Whatever the reason, it wasn't publicly disclosed.

We have almost no leverage over China anymore. There's pretty much nothing short-term we could do that wouldn't hurt ourselves worse. That's what happens when you pit a country run by politicians who just think about getting re-elected in 2, 4, or 6 years against one that has a unified party and a unified, regular planning process. I'm not saying I'd rather live in China, but their system does have certain advantages.