Improving Nvidia RTX 4090 Efficiency Through Power Limiting

We tested the 4090 Founders Edition at 50% to 120% power limits

The Nvidia GeForce RTX 4090 delivers new levels of performance, lands at the top of our GPU benchmarks hierarchy, and is the fastest of the best graphics cards. For all that, it's not the most efficient of GPUs, but that's mostly due to design decisions. We can improve efficiency — and potentially reduce the risk of melting 4090 power adapters — through power limiting. This is basically a quick and easy alternative to underclocking and undervolting, and the opposite of overclocking.

For our testing, we've taken Nvidia's RTX 4090 Founders Edition and run it through eight of our most demanding tests to see what happens to performance, clock speeds, temperatures, and power requirements at the various settings. We tested in 10% increments, starting at 120% and dropping down to just 50% to provide the full range of options.

Before we get to the results of our testing, note that without increasing the GPU clock speed, the higher power limits don't really do much for performance. We've tested RTX 4090 overclocking elsewhere and found that in our standard gaming test suite, even at 4K ultra settings, overclocking only increased performance on the RTX 4090 by about 4%. In our more demanding DXR (DirectX Raytracing) test suite, however, overclocking proved a bit more helpful and increased performance by about 9% overall.

In short, the more demanding a game happens to be at whatever settings we test with, the more impact we'll see from changing the power limit. Conversely, there are a lot of games that don't tax a GPU like the RTX 4090 much at all, even at 4K and maxed-out settings — Microsoft Flight Simulator is a perfect example of this, given its CPU-limited nature. Such games may run just as fast at lower power limits, but that's because the GPU wasn't hitting its 450W TBP (Total Board Power) limit to begin with.

RTX 4090 Power Limiting Test Setup

We're using our existing Core i9-12900K test PC. Upgrading to the new Core i9-13900K would likely increase the graphics card's power consumption, but probably not by much. We're using our DXR test suite for the power-limited testing, with the addition of A Plague Tale: Requiem, a recent release that also happens to support DLSS 3. We wanted to include at least one set of tests using DLSS 2 and DLSS 3 (we tested both) to see what that did for performance and efficiency.

The other games we use for testing are Bright Memory Infinite Benchmark (far more impressive looking and demanding than the actual game), Control Ultimate Edition, Cyberpunk 2077, Fortnite, Metro Exodus Enhanced Edition, and Minecraft. We mostly use the maximum quality setting with all ray tracing options enabled, without DLSS of any form, though we didn't enable "Psycho" lighting in Cyberpunk. Note also that we enabled HairWorks and Advanced PhysX in Metro Exodus Enhanced, which is slightly more demanding than the previous testing we've conducted using that game. In other words, these results should only be compared within this article, as other reviews may have used slightly different settings.

Again, we've run all eight of the above gaming tests at eight different power limits on the RTX 4090 Founders Edition. Besides the default 100% limit, we tested at 110% and 120% to see if simply increasing the power limit would help performance. Then we also tested at 90%, 80%, 70%, 60%, and 50% to try and improve efficiency.

We capture all performance data using Nvidia's FrameView utility, which also collects GPU clock speeds, temperatures, and power consumption data. The power consumption reported by the software isn't exactly the same as real-world power use measured with external tools like Powenetics, but Nvidia's figures land within about 10W of what we measured using Powenetics. The added benefit is that we can collect far more data, far more quickly.

With that preamble out of the way, let's hit the test results.

RTX 4090 Overall Power Efficiency

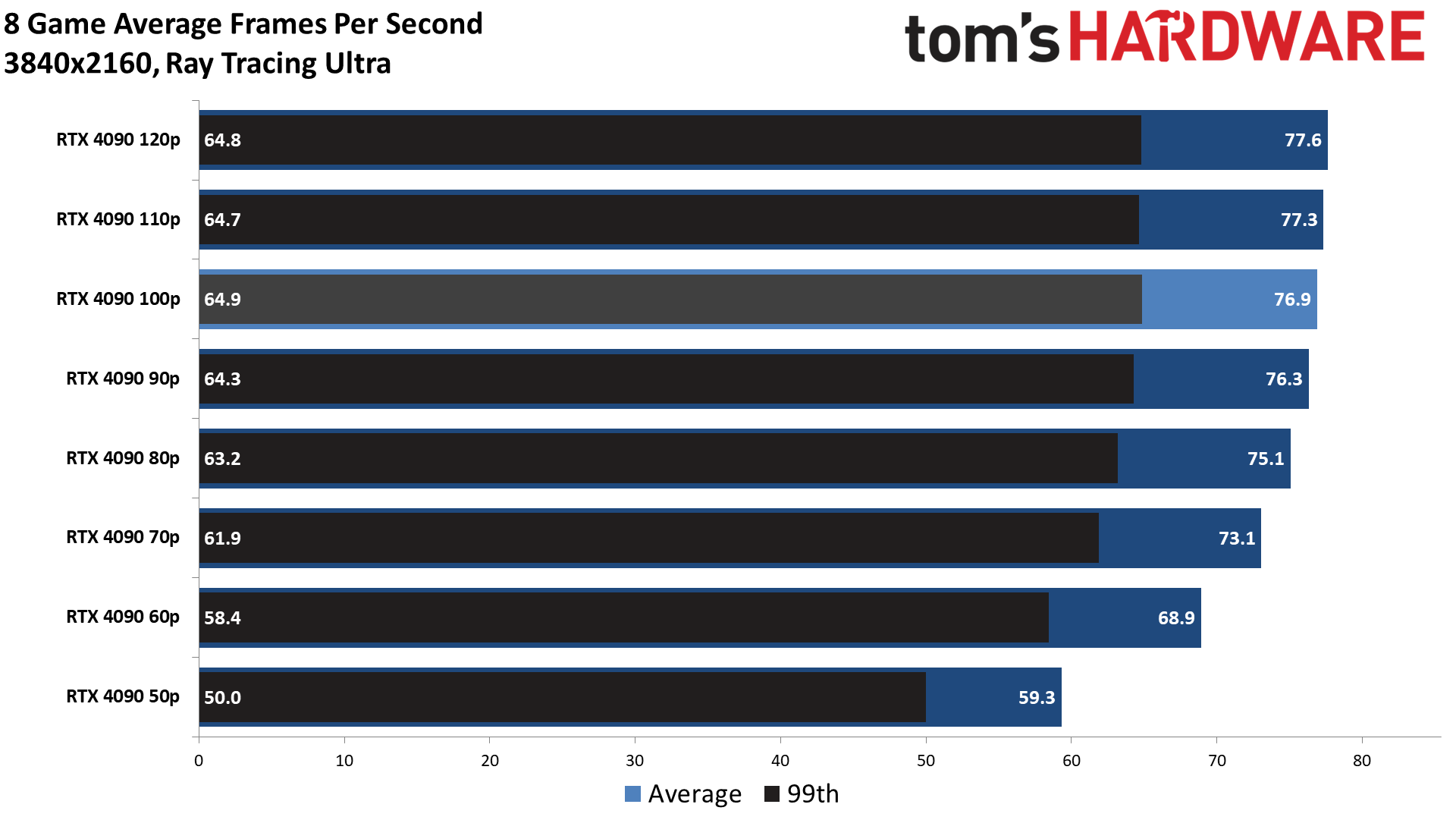

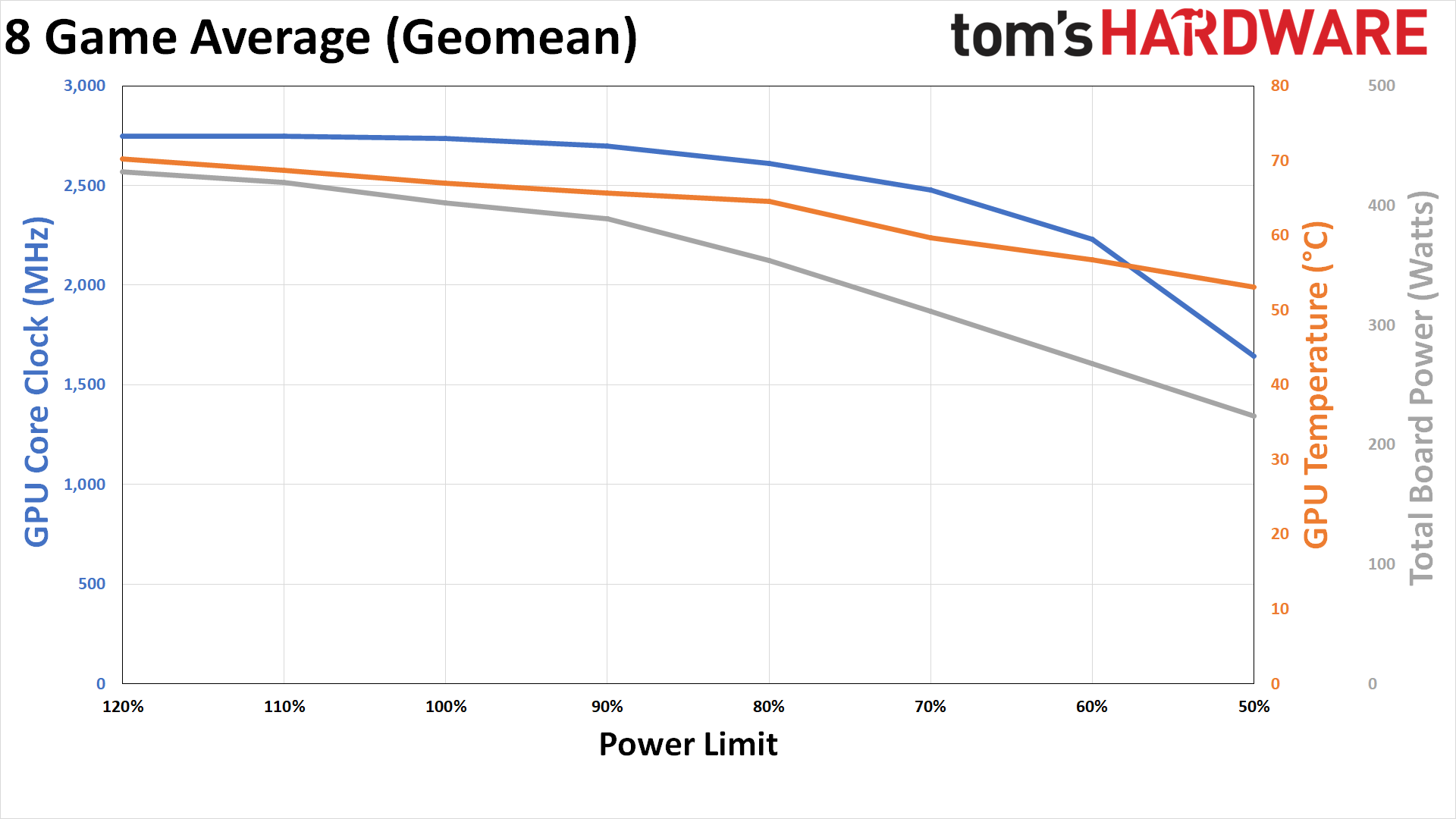

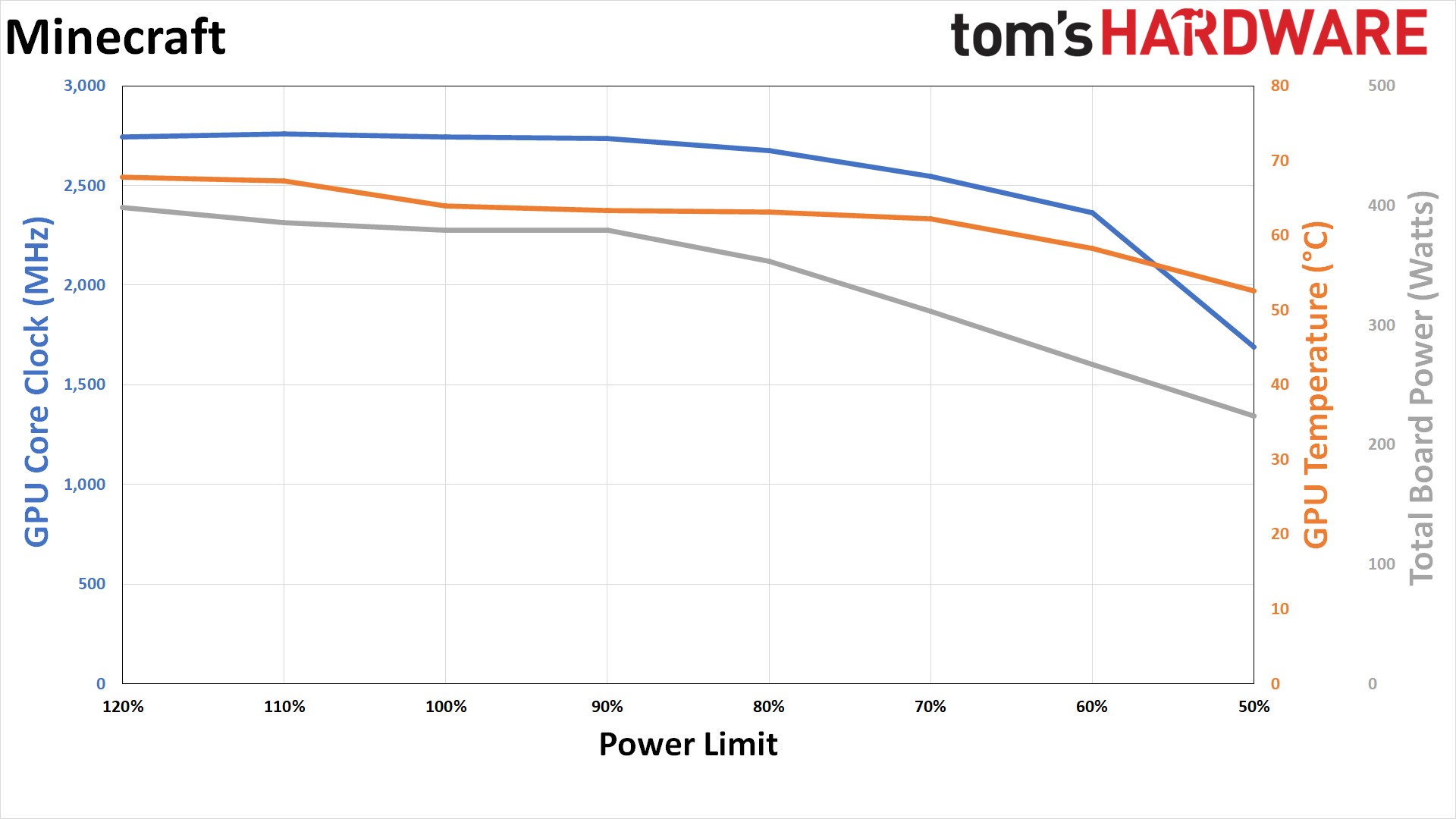

We have two separate charts, one showing our usual performance data (average and 99th percentile FPS) and the second showing power, temperature, and GPU clocks. Note that we didn't attempt to adjust the GDDR6X clocks, so potentially, as we drop the power limit, the memory will consume a slightly larger portion of the overall power budget. We'll start with the overall results, showing the geometric mean (equal weighting to all scores) of our eight tests.
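For readers unfamiliar with the geometric mean, here's a minimal sketch of how such an overall score can be computed. The function name and the sample FPS values are hypothetical placeholders, not our measured data.

```python
import math

def geometric_mean(values):
    """Return the geometric mean of a sequence of positive numbers."""
    if not values or any(v <= 0 for v in values):
        raise ValueError("need at least one value, and all must be positive")
    # Averaging the logarithms keeps one very high-FPS game from dominating.
    return math.exp(sum(math.log(v) for v in values) / len(values))

# Hypothetical per-game average FPS (illustrative only, not the article's data).
sample_fps = [40.0, 60.0, 90.0, 150.0]

overall = geometric_mean(sample_fps)
arithmetic = sum(sample_fps) / len(sample_fps)
print(f"geometric mean: {overall:.1f} fps, arithmetic mean: {arithmetic:.1f} fps")
```

Because it works on ratios, a 10% gain in a 40 fps game moves the geometric mean exactly as much as a 10% gain in a 150 fps game, which is what "equal weighting" means here.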

In terms of performance, it's immediately clear that there's almost no change either by raising the power limit by up to 20% or by dropping it by 10%. There's also only a very slight 2.3% drop in frame rates with an 80% power limit, which is measurable but not particularly meaningful. Even with a 70% power limit, performance is just 5% slower than at stock; the deficit grows to 10% at 60% and 23% at 50%.

In terms of pure efficiency, every power-limited configuration ends up being better than the stock settings. Performance per watt, at 4K and in the most demanding games, measured 0.175 FPS/W at 100%. Efficiency drops at 110% and 120%, to 0.169 FPS/W and 0.166 FPS/W, respectively. Going in the other direction, it improves to 0.179 at 90%, 0.194 at 80%, 0.214 at 70%, 0.234 at 60%, and finally 0.237 FPS/W at 50%.
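To put those figures on a common scale, the sketch below expresses each setting's efficiency as a percentage change relative to stock. The FPS/W values are the ones quoted above; the dictionary and function names are just illustrative.

```python
# FPS per watt at each power limit (percent), as quoted in the text above.
FPS_PER_WATT = {
    120: 0.166, 110: 0.169, 100: 0.175, 90: 0.179,
    80: 0.194, 70: 0.214, 60: 0.234, 50: 0.237,
}

def efficiency_gain_vs_stock(table, stock=100):
    """Return the percent change in FPS/W relative to the stock power limit."""
    base = table[stock]
    return {limit: (value / base - 1.0) * 100.0 for limit, value in table.items()}

gains = efficiency_gain_vs_stock(FPS_PER_WATT)
for limit in sorted(gains, reverse=True):
    print(f"{limit:>3}% limit: {gains[limit]:+.1f}% efficiency vs. stock")
```

Run this way, the jump from 60% to 50% adds less than two percentage points of efficiency, while each of the steps from 80% down to 60% adds more than ten.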

Again, that's "better" across the suite of power limits. However, gains are clearly tapering off at 50%, and pure performance still matters — most people don't buy a $1,600 graphics card just to limit performance while improving efficiency! But if you're worried about the adapter melting and are waiting for a replacement to arrive, cutting power use should certainly reduce the risk.

The average power use across our eight game tests was 402.3W at stock, which increased to 428.3W with the 120% power limit — so a theoretical 20% increase in power limit only caused a real-world increase of 6.5% due to other system bottlenecks. More interesting is the drop in power use, clocks, and temperature as we limit the power. At 80%, which seems to be the sweet spot on the efficiency curve, the average power use is 353.8W, about the same as what you'd see from an RTX 3090 or 3080 Ti. GPU temperature also dropped a couple of degrees, and clock speed still averaged 2,611 MHz.

With the 50% power limit, the average power draw was only 224W and GPU temperature was just 53°C, but the core clocks dropped to 1,644 MHz. Performance would probably still be competitive with an RTX 3090 Ti, but we'd be more inclined to raise the power limit to 60% or 70%.
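The gap between the nominal power limit and the measured draw is simple arithmetic. As a rough sketch, using only the average wattages quoted above (the names are illustrative):

```python
# Average measured power (watts) across the eight tests, from the text above.
MEASURED_WATTS = {120: 428.3, 100: 402.3, 80: 353.8, 50: 224.0}

def percent_change(new, old):
    """Return the percent change going from old to new."""
    return (new - old) / old * 100.0

stock = MEASURED_WATTS[100]
for limit in sorted(MEASURED_WATTS, reverse=True):
    change = percent_change(MEASURED_WATTS[limit], stock)
    print(f"{limit:>3}% limit: {MEASURED_WATTS[limit]:.1f}W ({change:+.1f}% vs. stock)")
```

The nominal limit moves by 20 points in either direction, but the measured draw only rises about 6.5% at 120% and falls about 12% at 80%, because the card wasn't pinned at its 450W cap at stock.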

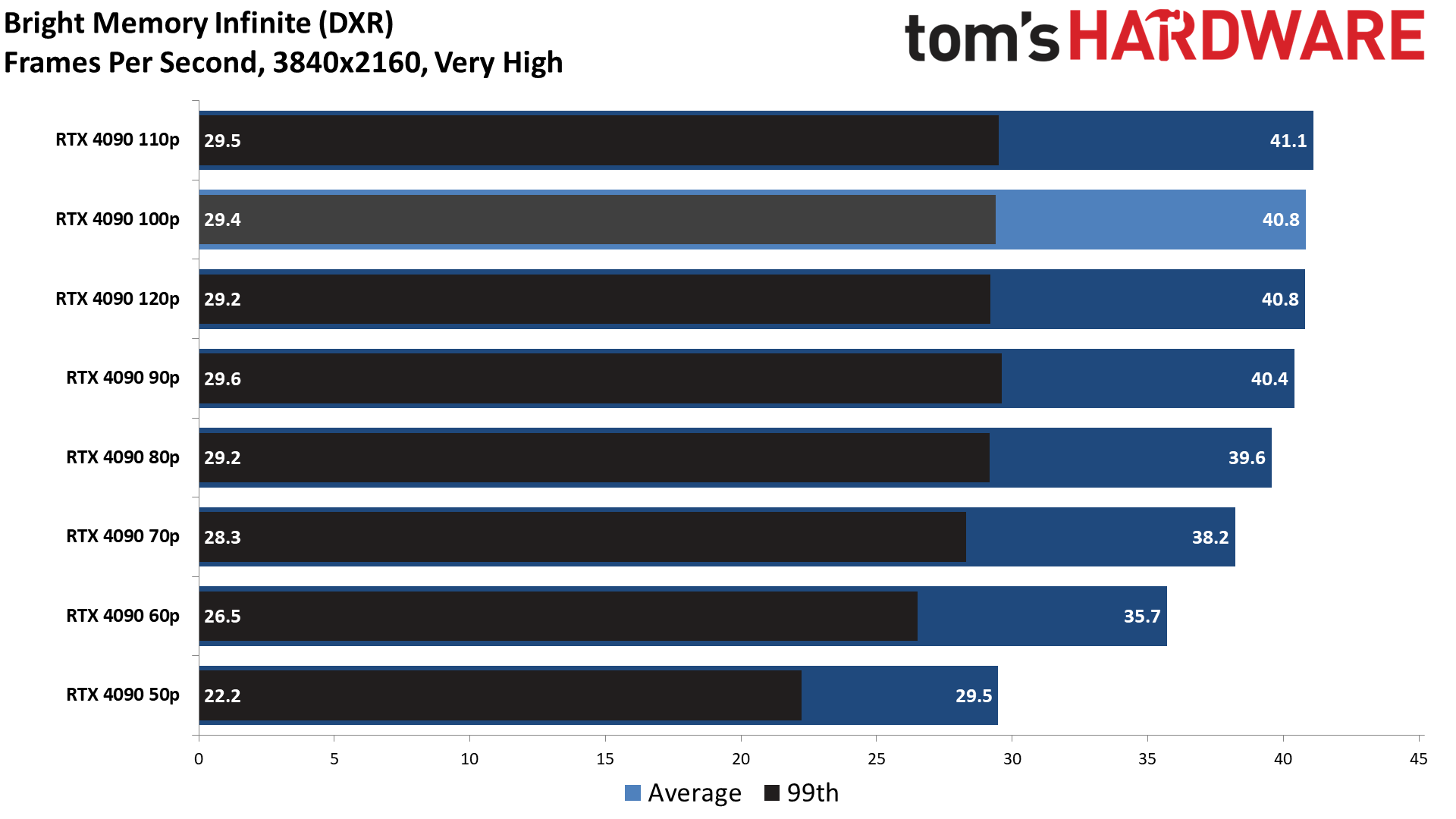

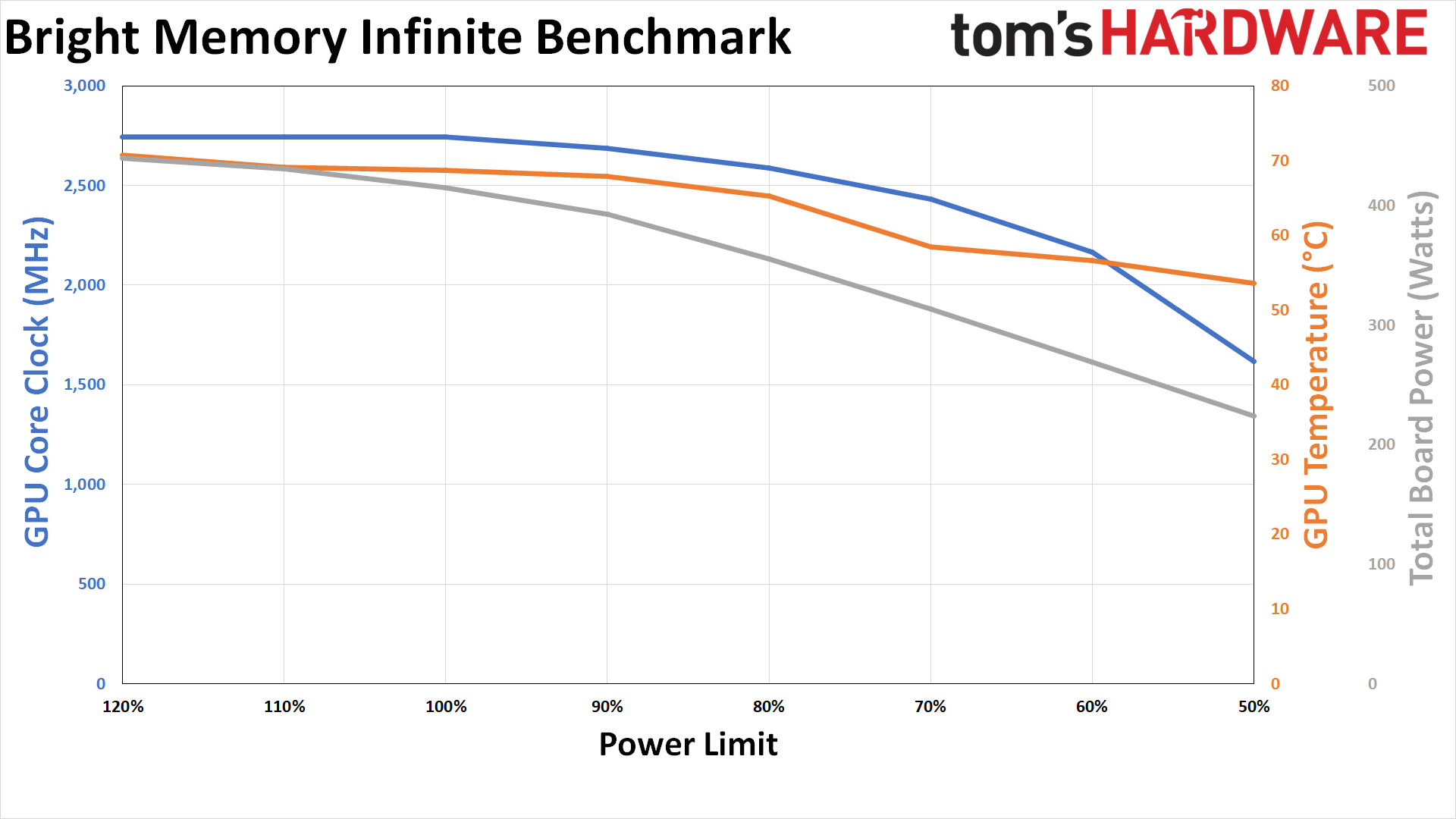

Bright Memory Infinite Benchmark Power Efficiency

The Bright Memory Infinite testing results are very similar to the overall chart. Performance barely changes with up to 20% more power available, because clock speeds have already hit their limit. Dropping the power limit to 80% decreases the power use by 14% (355W instead of 415W) while only reducing performance by 3%.

The power draw at stock on the reference RTX 4090 Founders Edition is still well over 400W, though it's nowhere near the 450W TBP maximum. There have been reports that the RTX 4090 "only uses about 350W while gaming," but those are clearly not universal truths. In CPU-limited scenarios, GPU power use will drop significantly, but in the most demanding games — particularly ray tracing games — you'll regularly see more than 400W at 4K ultra settings.

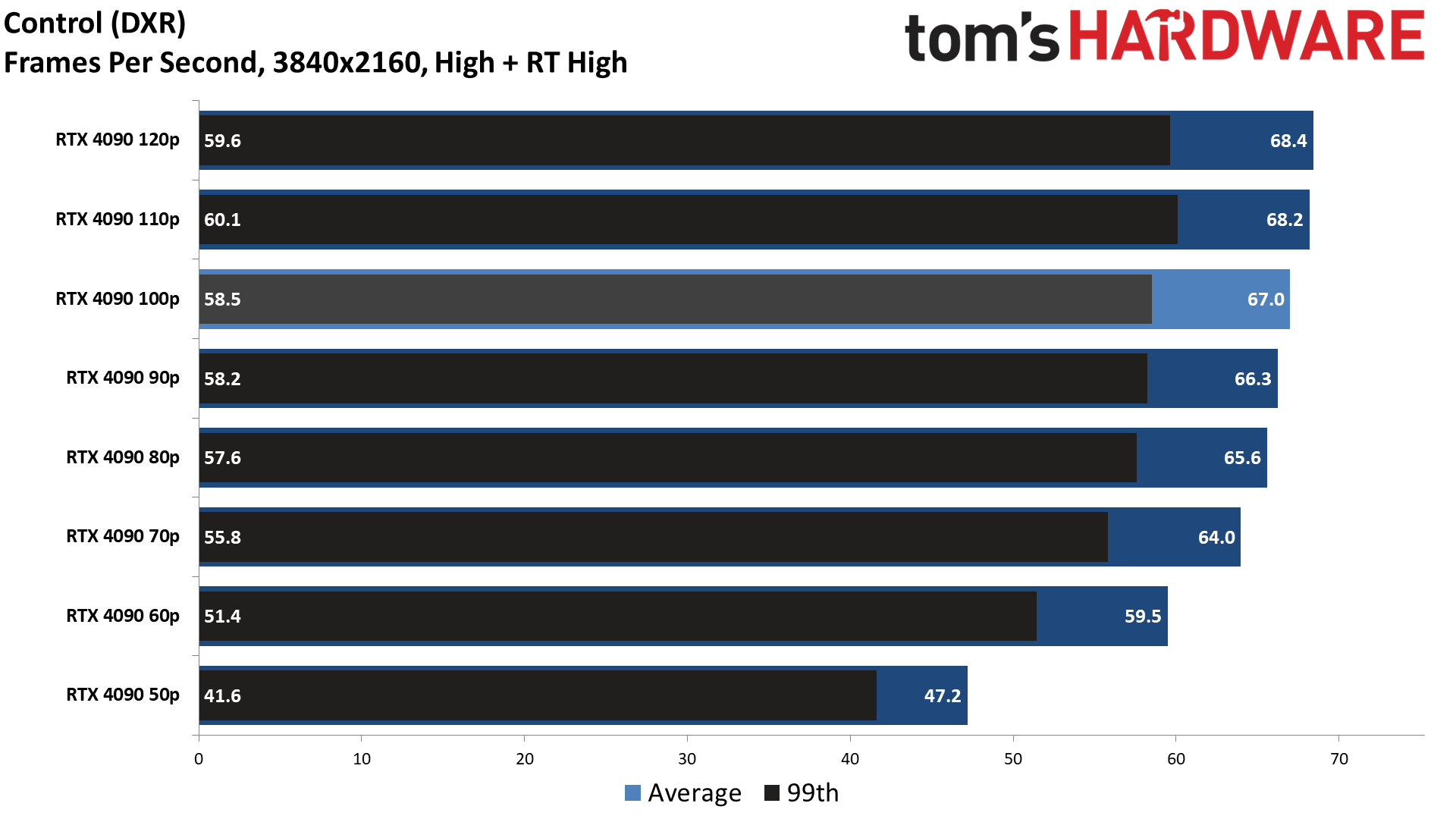

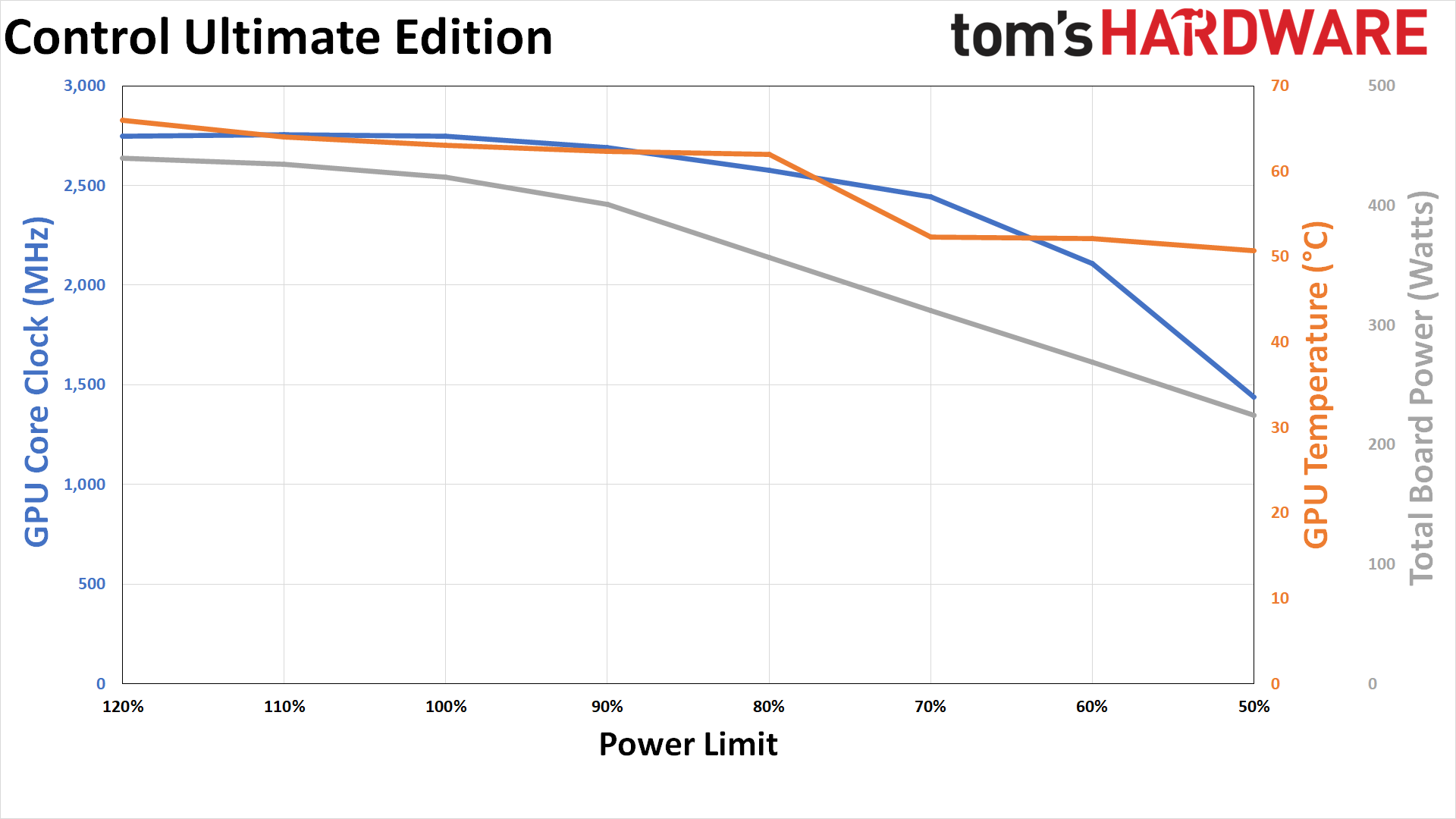

Control Ultimate Edition Power Efficiency

Control Ultimate Edition is one of the more demanding games in our test suite, at least as far as power consumption is concerned. At stock, the 4090 FE used 424W of power, so only 26W below the TBP. That should mean it will be affected more by power limit changes than some of the other games.

In practice, things aren't all that different from Bright Memory Infinite. Power use at 80% drops to 357W, a 16% reduction, while performance only drops by 2.1%. It's possible lengthier gaming sessions might show more of a difference, as time constraints limited our testing to a few minutes per game, but clearly the 4090 at stock settings isn't targeting maximum efficiency.

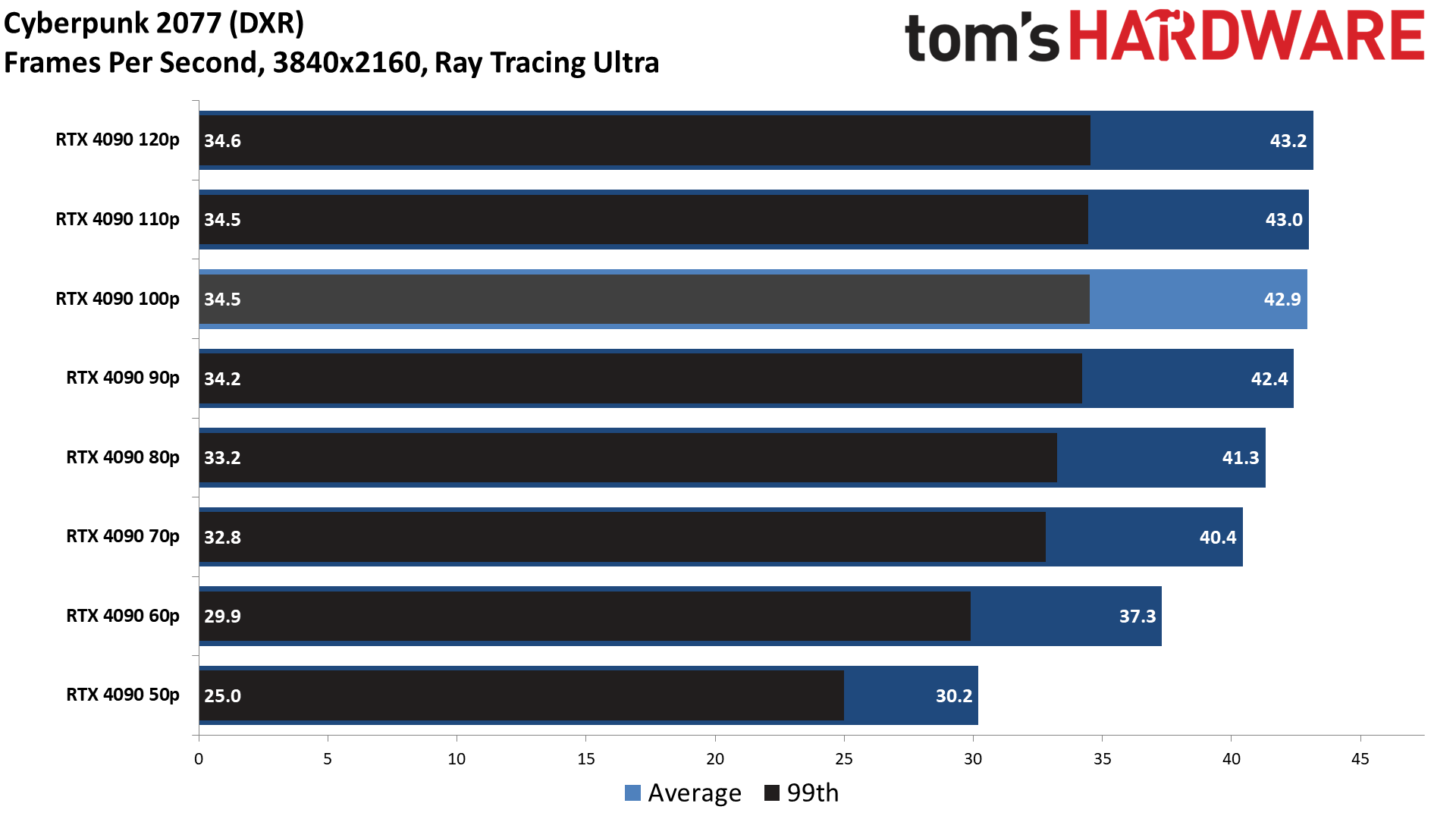

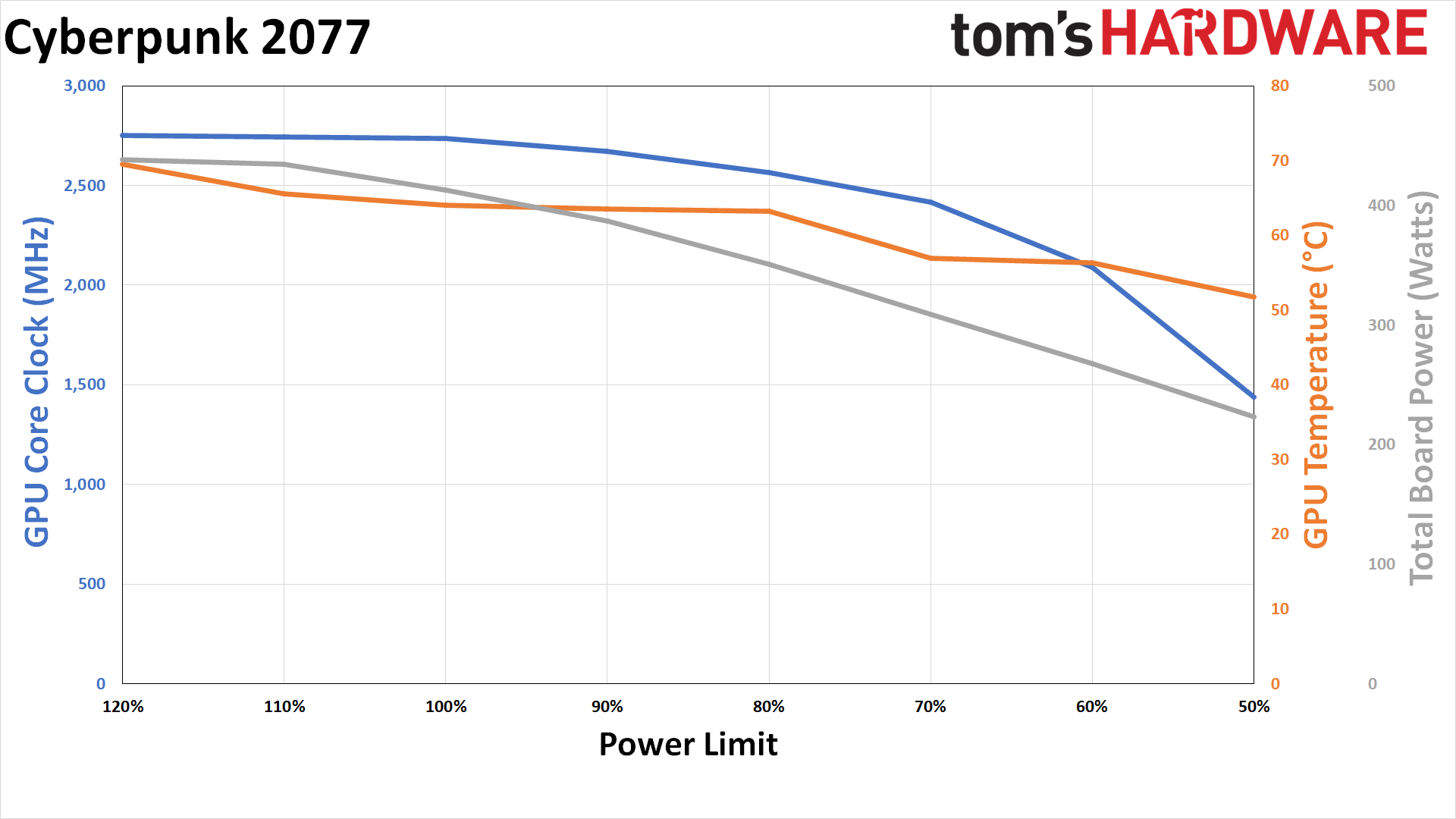

Cyberpunk 2077 Power Efficiency

Cyberpunk 2077 has a reputation as being one of the most demanding games around. Interestingly, it used less power at stock in our tests than several of the other games. We measured 413W of power use at 100%, which dropped to 351W at 80%.

The ideal balance between power and performance once again seems to be 70% or 80%. At 80%, you get 96% of the performance with 85% of the power use, while the 70% limit gives you 94% of the performance at 75% of the power use. (Power use doesn't fall to exactly 70% or 80% of the stock figure because the limit is a percentage of the 450W TBP, not of the 413W the card actually drew at stock.)
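A short worked example may help here. The sketch below converts a percentage power limit into a wattage cap based on the 450W TBP, then compares the measured Cyberpunk 2077 draw against the stock measurement. The function names are illustrative.

```python
TBP_WATTS = 450.0  # RTX 4090 Founders Edition Total Board Power

def cap_watts(limit_percent, tbp=TBP_WATTS):
    """Wattage ceiling implied by a percentage power limit."""
    return tbp * limit_percent / 100.0

def percent_of_stock(measured_watts, stock_watts):
    """Measured draw as a percentage of the stock measurement."""
    return measured_watts / stock_watts * 100.0

# Cyberpunk 2077 figures from the text: 413W at stock, 351W at the 80% limit.
print(cap_watts(80))                       # 360.0
print(cap_watts(70))                       # 315.0
print(round(percent_of_stock(351, 413)))   # 85
```

An 80% limit caps the card at 360W, and since stock draw was 413W rather than 450W, the actual savings work out to roughly 15% instead of 20%.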


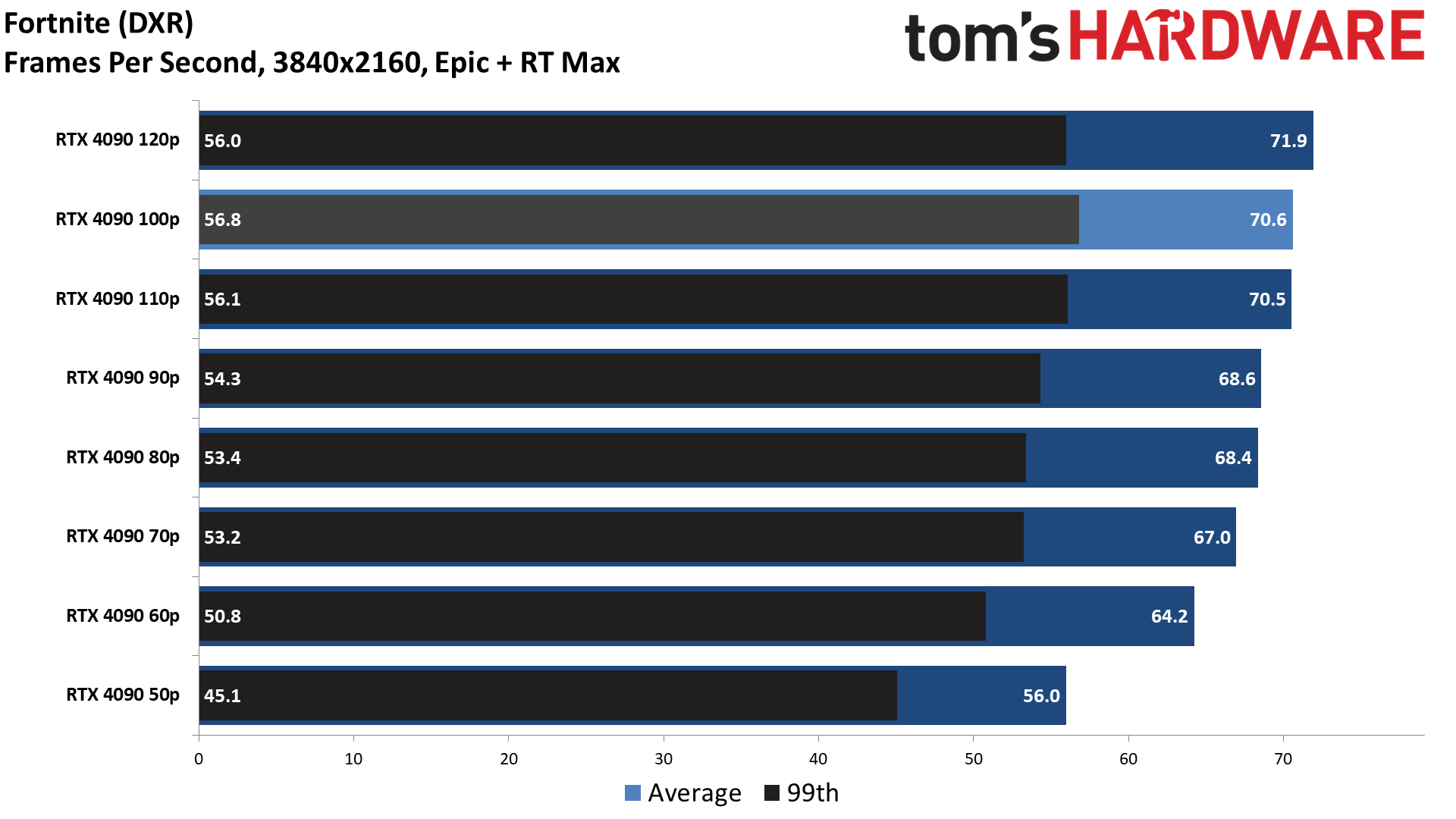

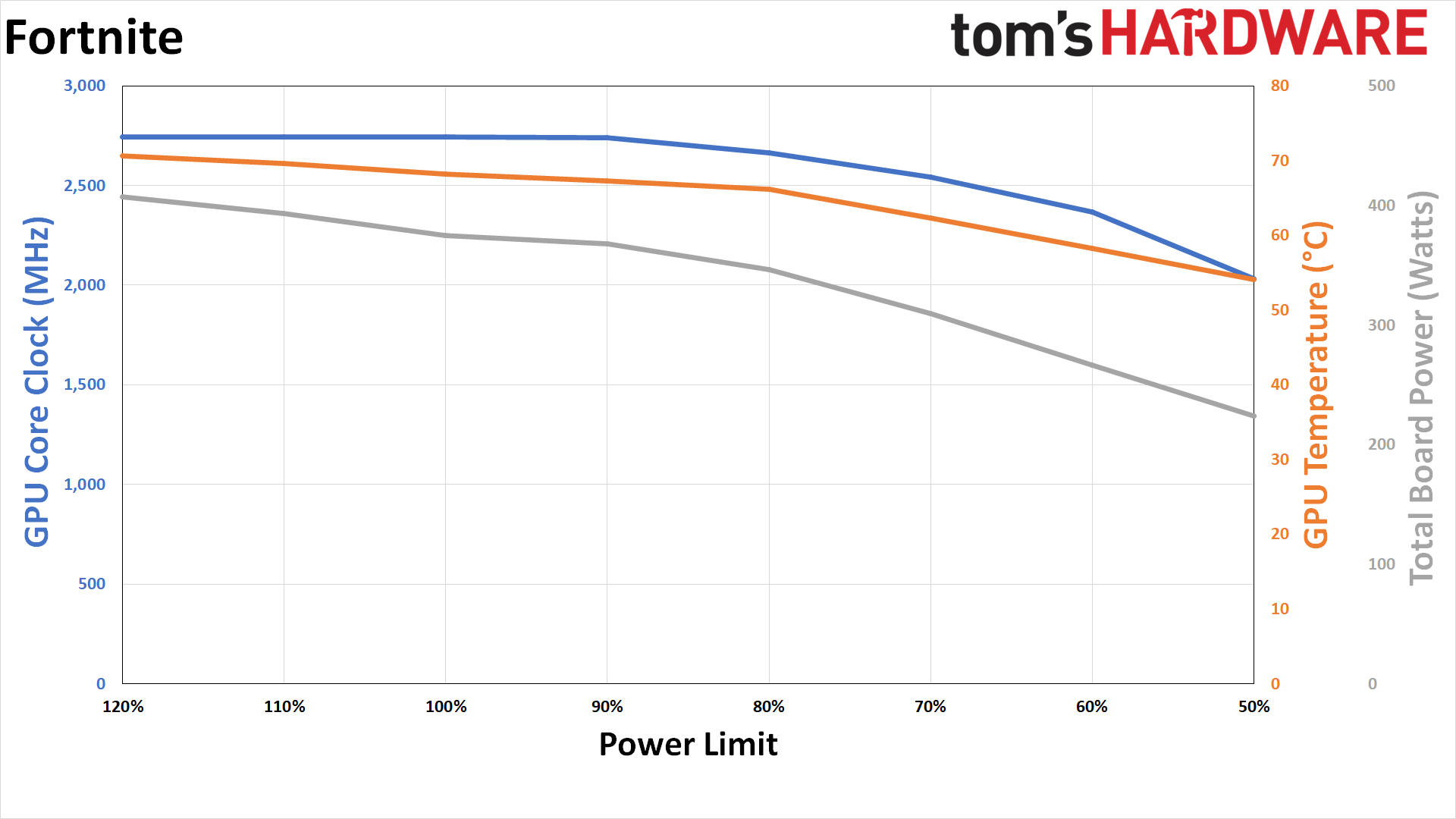

Fortnite (DXR) Power Efficiency

Fortnite, even with maxed-out ray tracing settings and without DLSS, ends up being one of the least demanding games in our test suite — and if you're running without ray tracing effects, it would have even lower power requirements. At stock, the RTX 4090 only consumes 375W of power, and even at a 120% power limit, it only hits 407W. Performance losses from reducing the power limit should be even lower, though actual power savings may not be as significant either.

The 90% and 80% power limits both deliver similar performance, at least within the margin of error: 3% slower than stock, with power use of 368W and 346W, respectively. The 70% limit drops power use to just 309W, an 18% reduction versus stock, while performance is down 9%. The best setting for Fortnite, based on our testing, seems to be 60%: you get 91% of the base performance while using 29% less power.
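To see why 60% comes out ahead, here's a rough sketch that turns the percentages quoted above into a relative performance-per-watt figure (stock = 1.0). The helper name is illustrative, and the inputs are the rounded figures from the text, so treat the output as approximate.

```python
def relative_efficiency(perf_fraction, power_fraction):
    """Performance per watt relative to stock, where stock equals 1.0."""
    if power_fraction <= 0:
        raise ValueError("power fraction must be positive")
    return perf_fraction / power_fraction

# Fortnite, from the text: the 70% limit is 9% slower with 18% less power,
# and the 60% limit delivers 91% of stock performance with 29% less power.
at_70 = relative_efficiency(1 - 0.09, 1 - 0.18)
at_60 = relative_efficiency(0.91, 1 - 0.29)

print(f"70% limit: {at_70:.2f}x stock efficiency")
print(f"60% limit: {at_60:.2f}x stock efficiency")
```

With nearly identical performance at both settings, the extra power savings at 60% translate directly into better efficiency.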

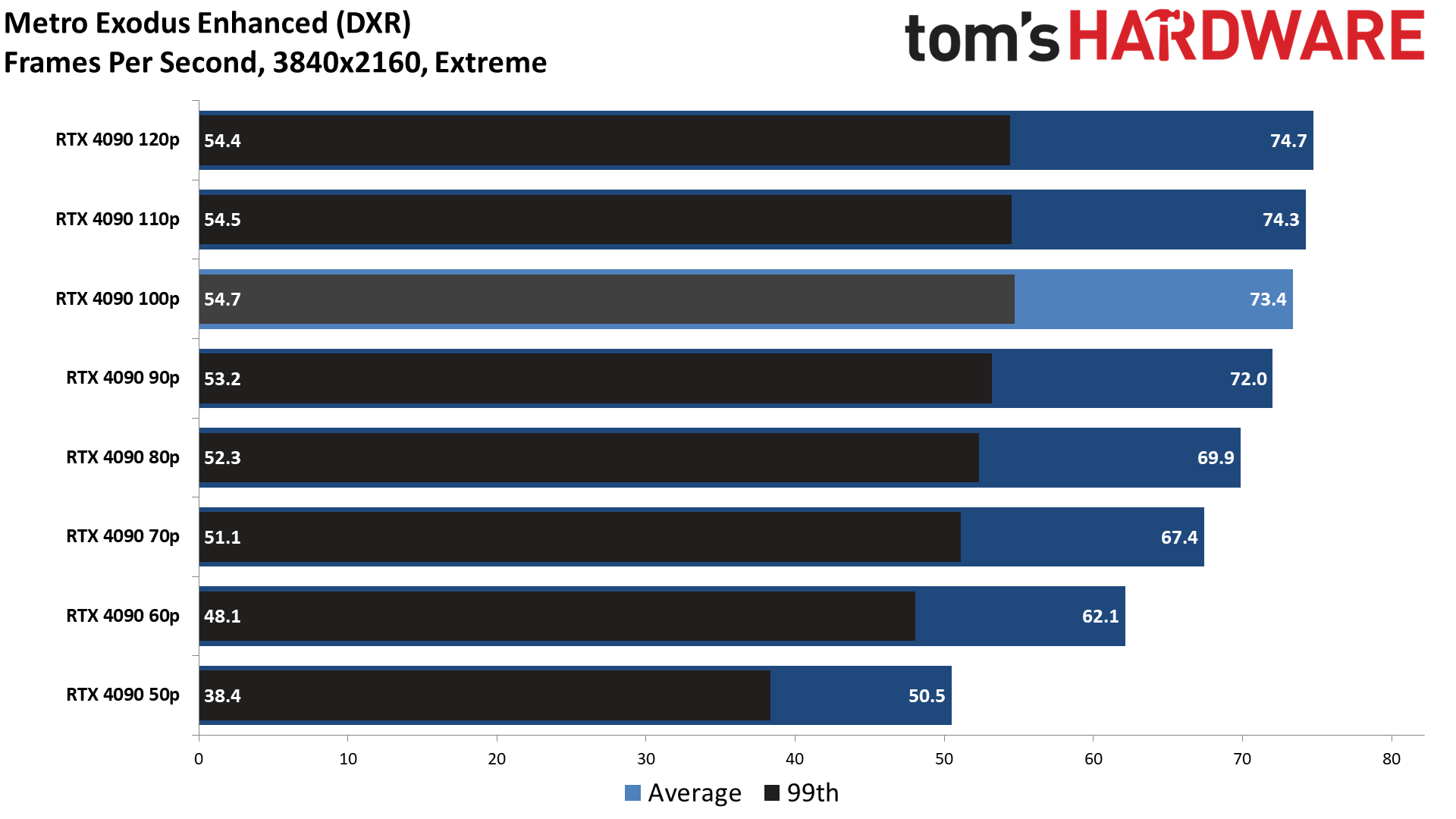

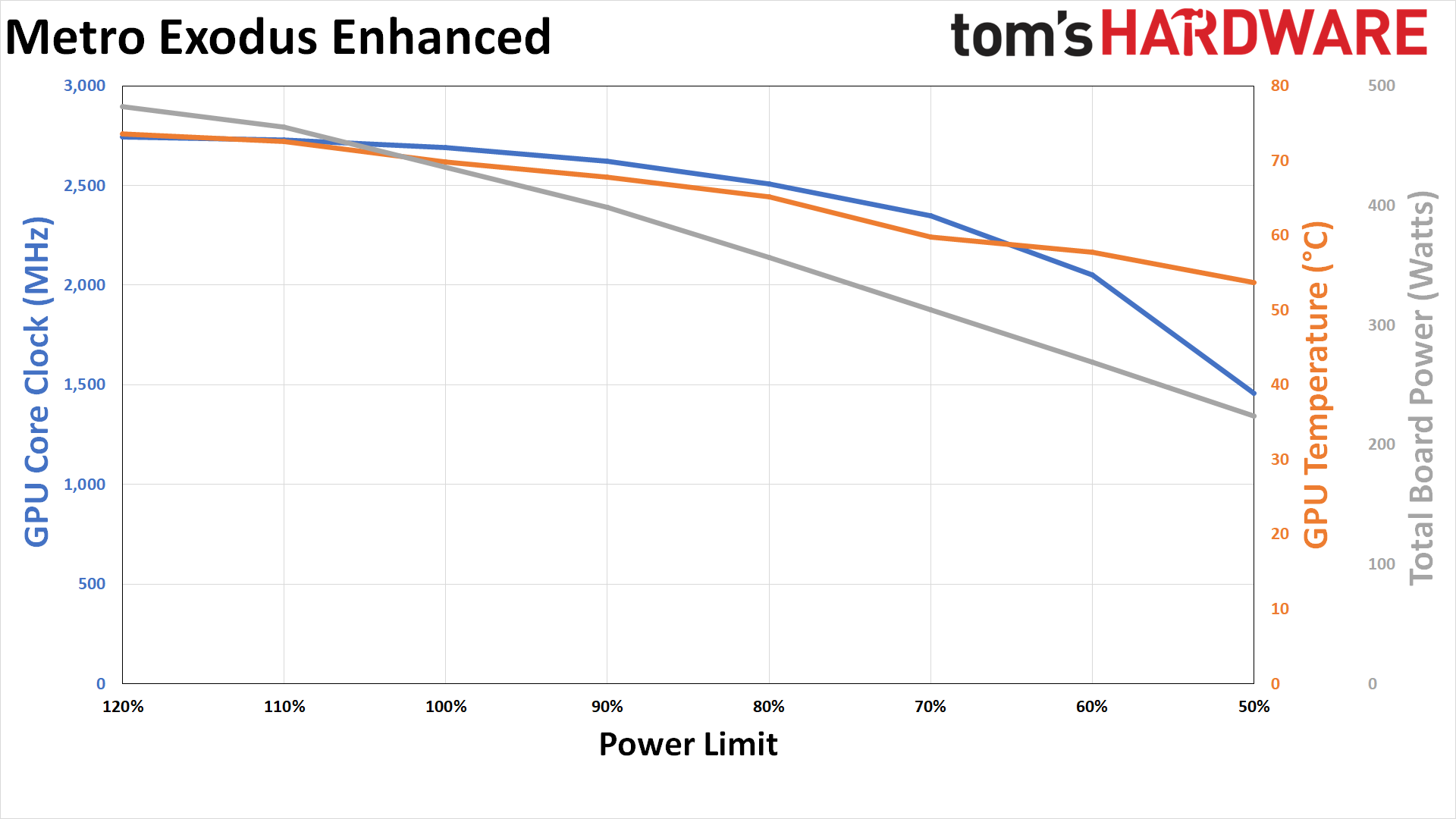

Metro Exodus Enhanced Edition Power Efficiency

Somewhat surprisingly, Metro Exodus Enhanced Edition ended up as the most demanding game from our test suite. We're using the Extreme preset, but we also enable HairWorks and Advanced PhysX. Even at stock settings, the RTX 4090 uses 432W, which jumps up to 465W with a 110% power limit and 483W with a 120% limit. That's interesting, considering none of the other games even managed to break 450W at the 120% setting. Unfortunately, the performance gains from the higher power limit are still marginal, at 1.2% and 1.8%.

Given the baseline measurements, reducing the power limit causes a slightly larger drop in performance, as expected. At an 80% power limit, actual power use drops by 18% while performance drops by 5%. Each additional 10% drop in power reduces performance further, though 70% still yields 92% of the base FPS. The final two power limits create a larger dip in performance, and efficiency at the 50% setting is actually worse than at 60%.

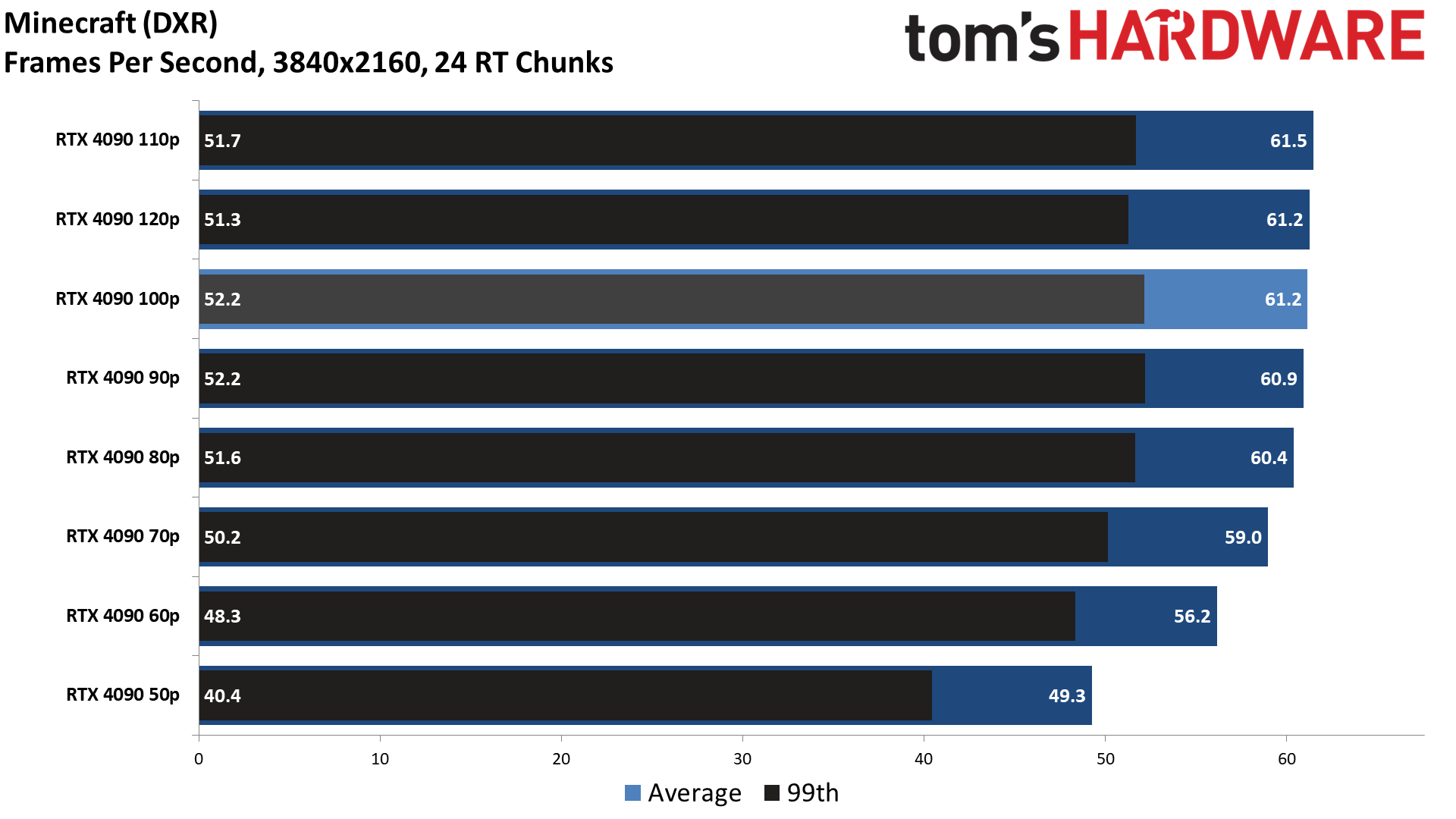

Minecraft Power Efficiency

Minecraft without ray tracing can run on a potato, but with DXR enabled, it's often quite demanding. It uses "full path tracing" to provide extensive lighting, reflection, and shadow effects, but it seems we've hit the limits of what it needs with the RTX 4090, as the base power use is only 380W. Like Fortnite, that means we see less of a performance impact by limiting power than in some of the other games.

The optimal setting on the RTX 4090 Founders Edition looks to be 60%, which reduces power use by 30% but only drops performance by 8%. Technically the 50% setting still improves performance per watt, but the additional 10% reduction in power use also causes an 11% decrease in performance.

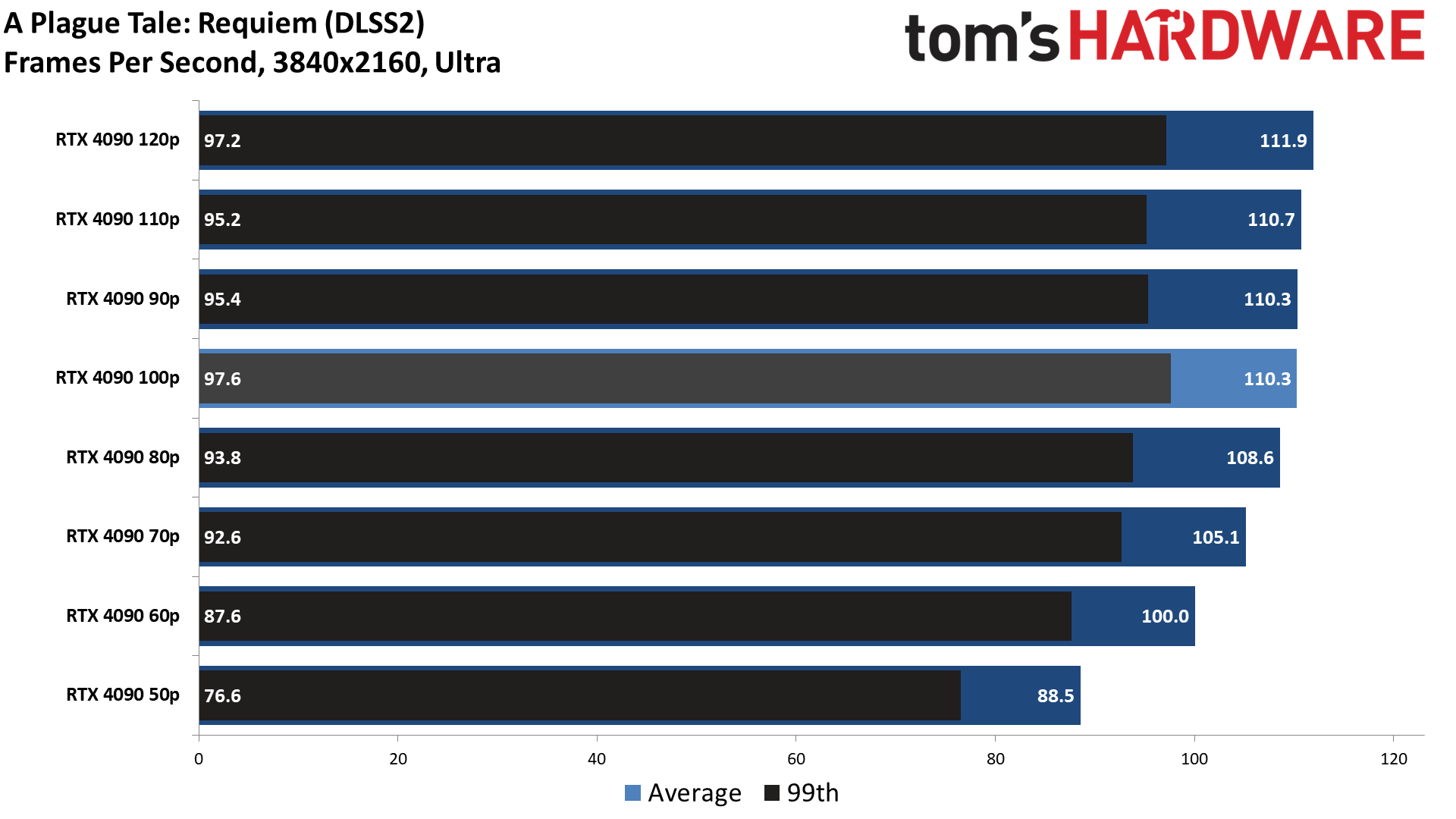

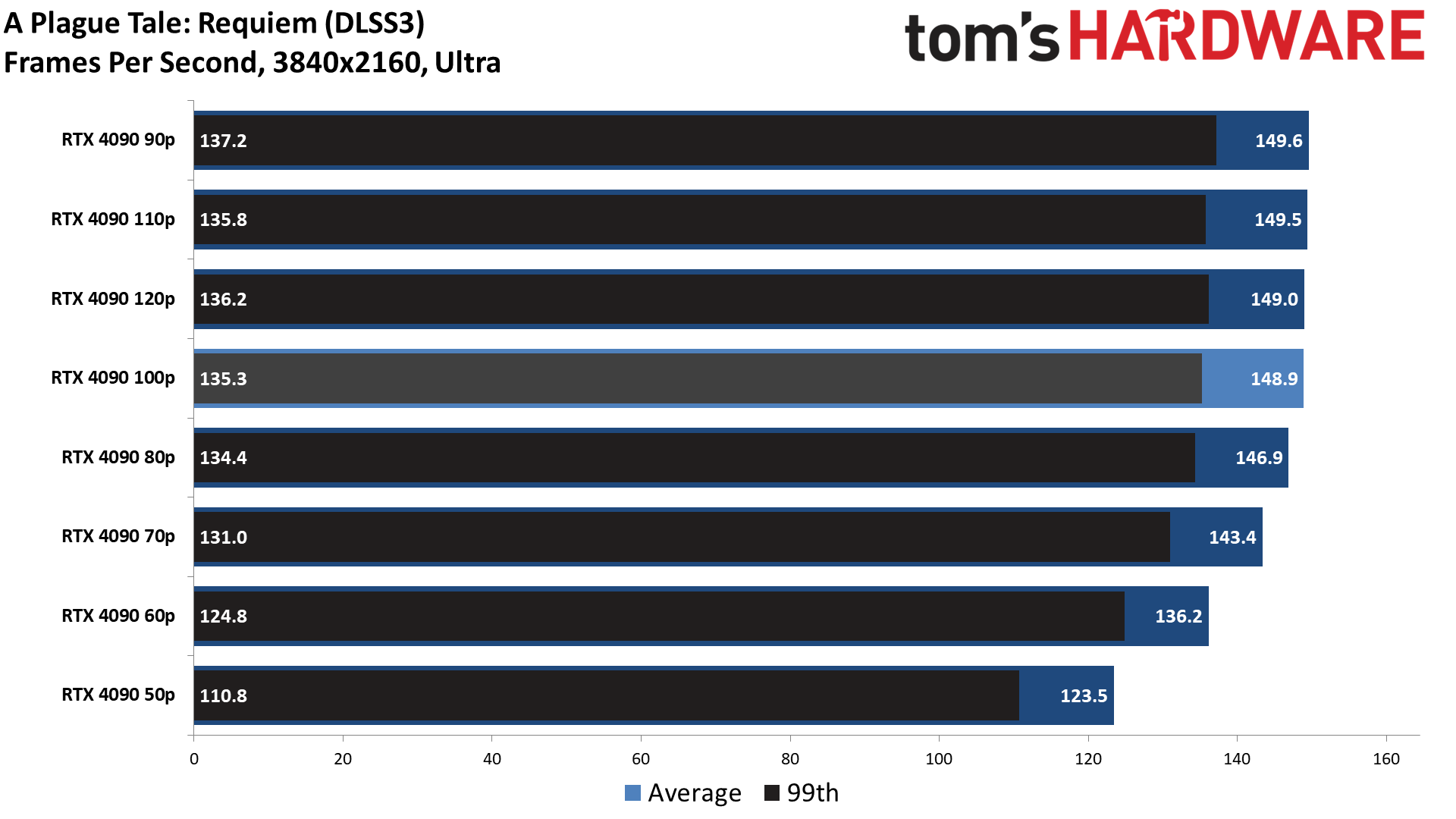

A Plague Tale: Requiem Power Efficiency

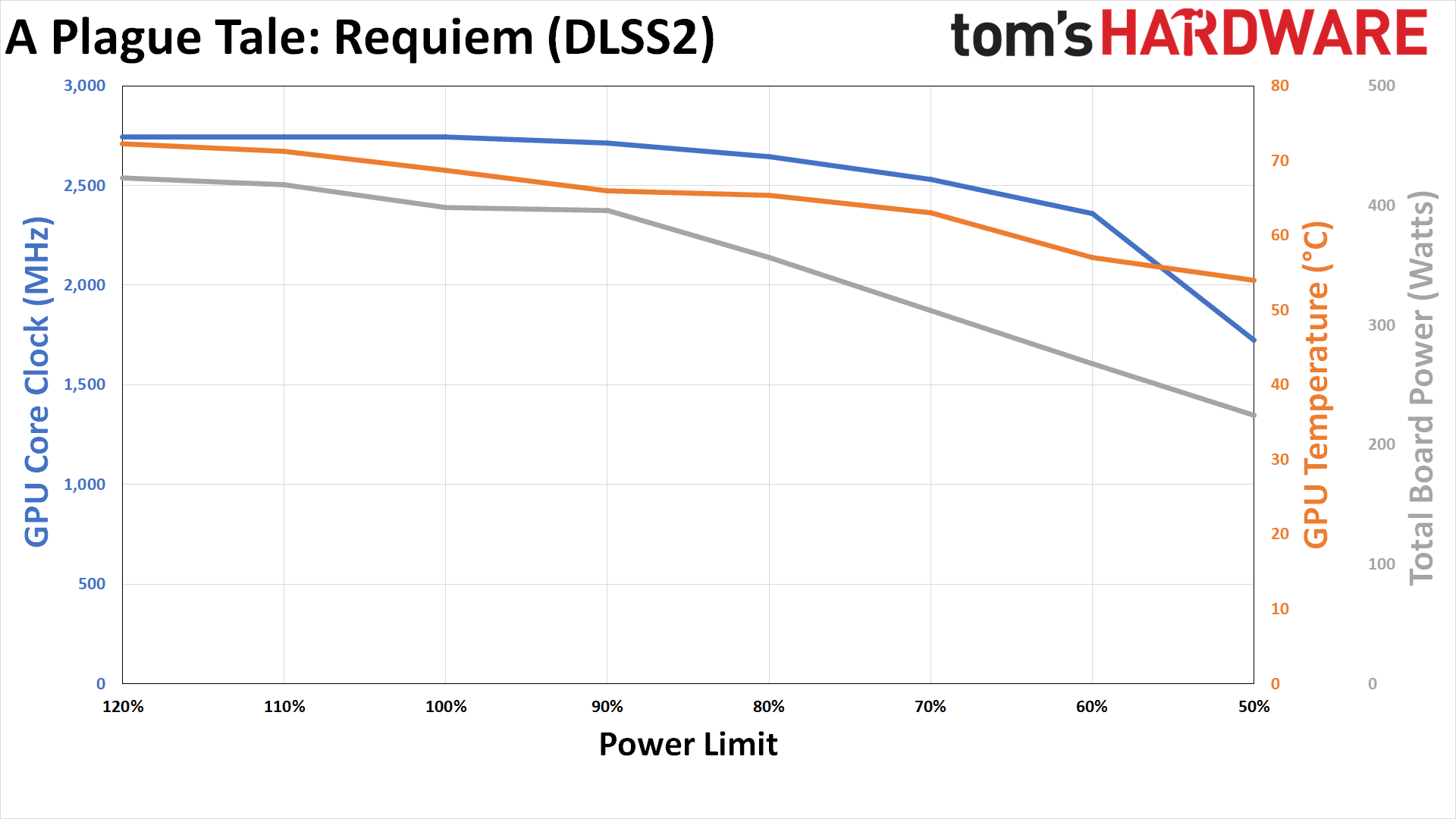

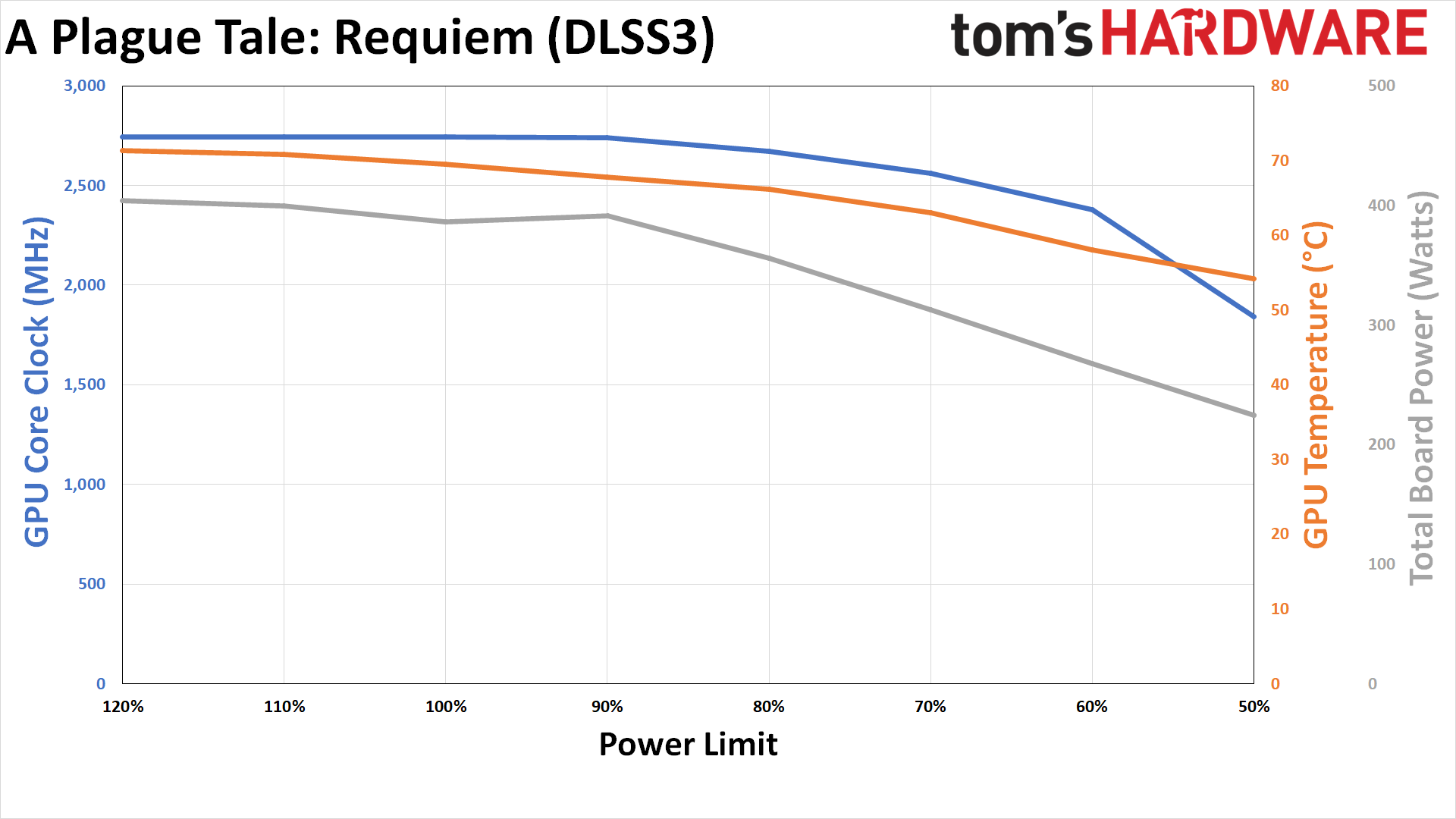

A Plague Tale: Requiem is the only game where we tested with DLSS 2 and DLSS 3 (Frame Generation) just to see how much that affects power use. In theory, Frame Generation should reduce the GPU load while increasing performance — though it also increases latency. And that's precisely what we see in our testing.

With DLSS 2 Super Resolution enabled, in Quality mode, power consumption at stock settings is 398W. That's slightly lower than several other games but not the lowest we tested. DLSS 3 drops that to 387W, so there's not a huge difference in power overall, and performance also breaks the 144 fps mark.

With DLSS 3 enabled, framerates stay above 144 fps at the 80% power limit and fall just below that mark at 70%, so you only lose 1% and 4% of the base performance. However, power use doesn't drop as much because of the lower starting point: it's down just 8% on the 80% setting and 19% on the 70% setting. With DLSS 2, the performance and power impact was slightly larger but hardly worthy of discussion.

Nvidia RTX 4090 Power Efficiency: Closing Thoughts

There's an ideal spot on the voltage and frequency curve for maximum efficiency, which is what Nvidia likes to target with its laptop GPUs. For desktops, however, chasing maximum performance at the cost of efficiency is often the name of the game. For example, with the RTX 4090, the final ~5% in performance basically requires 15–20% more power.

Given the issues users have encountered with the apparently faulty 16-pin power adapter, anyone who already purchased an RTX 4090 and who still uses the adapter might want to look into reducing power consumption — along with removing the side panel on the case and doing their best to avoid putting any stress on the cable connector. While lower power use doesn't guarantee the connector won't fail and melt, it should at least make it less likely to happen while we wait for what will almost certainly be a manufacturer recall of the adapter.

On the bright side (for Nvidia), the actual cost of the adapter cable should be relatively trivial compared to the cost of an RTX 4090 graphics card. Another bright side, this time for users, is that you can improve overall efficiency by 20–30% if you run a 4090 with a 70% or 60% power limit… but you'll also reduce performance on your shiny new and not yet melted extreme GPU.

Results with other RTX 4090 cards will also likely differ from what we've shown here. Factory overclocked models often run at higher voltages and don't necessarily work as well at reduced power limits, at least in our experience. Still, what we've done here was simple enough. All you need to do is install a utility like MSI Afterburner and set an appropriate power limit.
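If you'd rather script the change than use a GUI tool, Nvidia's `nvidia-smi` command-line utility accepts a power limit in watts via its `-pl` option. This is an alternative we did not use for the testing above, so treat the following as a minimal sketch: it only builds and prints the command, assumes the reference 450W TBP and GPU index 0, and the helper name is hypothetical. Applying the limit requires administrator or root privileges, and the driver will reject values outside the range your particular card supports.

```python
import shlex

TBP_WATTS = 450  # reference RTX 4090 TBP; factory overclocked cards may differ

def power_limit_command(limit_percent, tbp_watts=TBP_WATTS, gpu_index=0):
    """Build an nvidia-smi command that sets the power limit in watts."""
    if limit_percent <= 0:
        raise ValueError("power limit percentage must be positive")
    watts = round(tbp_watts * limit_percent / 100)
    # -i selects the GPU by index, -pl sets the power limit in watts.
    return ["nvidia-smi", "-i", str(gpu_index), "-pl", str(watts)]

cmd = power_limit_command(70)
print(shlex.join(cmd))  # nvidia-smi -i 0 -pl 315
```

To actually apply the setting, the list could be passed to `subprocess.run(cmd, check=True)` from an elevated shell. Check the supported range first with `nvidia-smi -q -d POWER`.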

We're certainly curious to see how AMD responds to the 4090 melting adapter debacle. Of course, we know AMD has no intention of using the 16-pin 12VHPWR connector on its upcoming RDNA 3 GPUs, but AMD has been beating the efficiency drum for the past few generations. So RDNA 3 might end up being a lot more efficient than Ada Lovelace, at least if you stick with Nvidia's default power settings. Will it be faster, though? That remains to be seen.


Jarred Walton is a senior editor at Tom's Hardware focusing on everything GPU. He has been working as a tech journalist since 2004, writing for AnandTech, Maximum PC, and PC Gamer. From the first S3 Virge '3D decelerators' to today's GPUs, Jarred keeps up with all the latest graphics trends and is the one to ask about game performance.

Neilbob

That looks pretty much conclusive to me. Except for those people who insist they can actually tell the difference between 100FPS and 102FPS, there's no reason not to run this monstrosity in a more power efficient manner.

I really wish the manufacturers would default to 75-80% (or less) power and let users decide via software/drivers if the extra less-than-5% performance is worth the bother, rather than chasing the performance-at-all-costs-because-benchmarks-are-all-important thing that seems to be going on lately.

bit_user

Thanks for testing this, @JarredWaltonGPU! If I ever got such a beast of a card, I had already planned to put it on a leash. Articles like this one are key for showing us the consequent tradeoffs.

bit_user

Neilbob said: I really wish the manufacturers would default to 75-80% (or less) power and let users decide via software/drivers if the extra less-than-5% performance is worth the bother, rather than chasing the performance-at-all-costs-because-benchmarks-are-all-important thing that seems to be going on lately.

In the era of cut-throat benchmark competition, it's unrealistic to expect manufacturers to do this of their own accord.

I think a good first move would be some kind of energy labeling regulation that requires the energy usage of the default config to be accurately characterized. Similar to how we insist automobiles advertise their fuel-efficiency, according to a prescribed measurement methodology.

AgentBirdnest

Wow! Those are really interesting results. I'm not gonna get a 4090, but it's still fascinating to know that I could lower the power by 30% or even 40% in the summer so I don't get cooked in my gaming room, and would only miss out on ~10% performance that I probably wouldn't notice.

Awesome work, Jarred. I've really wanted to see some tests exactly like this.

JarredWaltonGPU

AgentBirdnest said: Wow! Those are really interesting results. I'm not gonna get a 4090, but it's still fascinating to know that I could lower the power by 30% or even 40% in the summer so I don't get cooked in my gaming room, and would only miss out on ~10% performance that I probably wouldn't notice. Awesome work, Jarred. I've really wanted to see some tests exactly like this.

There are going to be cases where the losses are larger (i.e., Metro Exodus Enhanced), but yeah, Nvidia really stomped on the performance pedal. Screw efficiency! Which is interesting as other Ada Lovelace parts (like the RTX 6000 48GB) aren't pushing nearly as hard.

-Fran-

Yes, let's keep reducing the power of the most expensive hardware we buy going forward to get less performance so we can manage the heat and power outputs. Makes perfect buying sense!

Why are we allowing Companies to pass that burden to us, again?

Regards.

bit_user

-Fran- said: Why are we allowing Companies to pass that burden to us, again?

Benchmarks, benchmarks, benchmarks! Being the top-performer translates into premium prices and greater sales volume. The temptation to turn up clock speeds just a little more has proven too great for companies to resist.

For efficiency to be prioritized, the market needs to value it. As I've been saying, a step towards that would be some standardization of metrics that can be used to compare products on that basis.

_dawn_chorus_

Great article. I'd love to see a similar one for Raptor Lake's 13900K/13700K/13600K.

The power draw and heat are the two main factors making me hesitant to buy.