AMD Radeon RX 560 4GB Review: 1080p Gaming On The Cheap


Temperatures, Clock Rates & Overclocking

Overclocking

Manual overclocking got us all the way to 1460 MHz under The Witcher 3, a respectable 134 MHz boost over the 1326 MHz stock clock. We also raised the memory clock rate by 200 MHz without any stability problems over an hour-long session in the same game.

At a glance, that doesn't seem like a lot of headroom. But depending on the game, it could mean 7 to 10% higher frame rates. The temperatures don't climb out of control, either.
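For a rough sense of scale, the core overclock can be expressed as a percentage of the stock clock. The short sketch below is illustrative only: the 1326 MHz baseline is simply 1460 MHz minus the quoted 134 MHz boost, and the helper name `percent_gain` is our own.

```python
# Core clocks in MHz. The baseline is 1460 - 134, per the figures quoted above.
STOCK_MHZ = 1326
OC_MHZ = 1460


def percent_gain(base: float, new: float) -> float:
    """Return the relative increase from base to new, in percent."""
    return (new - base) / base * 100.0


delta_mhz = OC_MHZ - STOCK_MHZ
gain_pct = percent_gain(STOCK_MHZ, OC_MHZ)

print(f"Core clock: +{delta_mhz} MHz ({gain_pct:.1f}%)")
```

The result is a core clock increase of roughly 10%, which lines up with the top of the 7 to 10% frame rate range.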

Temperatures & Frequencies

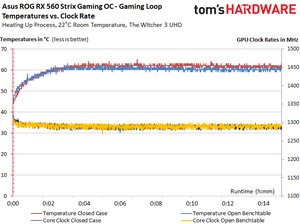

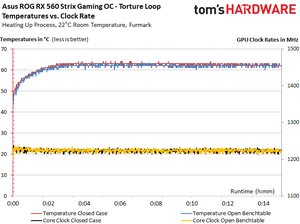

First, we compare temperatures and clock rates before and after overclocking.

| Measurement | Initial Value | Final Value |
|---|---|---|
| Open Benchtable | | |
| GPU Temperature | 42°C | 61°C |
| GPU Frequency | 1326 MHz | 1298 - 1301 MHz |
| Ambient Temperature | 22°C | 22°C |
| Closed Case | | |
| GPU Temperature | 43°C | 62°C |
| GPU Frequency | 1326 MHz | 1281 - 1294 MHz |
| Ambient Temperature | 25°C | 31°C |
| OC (Open Benchtable) | | |
| GPU Temperature (3550 RPM) | 43°C | 69°C |
| GPU Frequency | 1460 MHz | 1451 - 1460 MHz |
| Ambient Temperature | 22°C | 36°C |
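To make the table easier to compare across scenarios, the sketch below derives the temperature rise and the worst-case clock drop for each one. The numbers are copied from the table above; the dictionary layout and the `summarize` helper are our own illustration, not part of the original test methodology.

```python
# Values copied from the table: (initial, final) GPU temperature in °C,
# initial clock in MHz, and the (low, high) range of the final clock in MHz.
ROWS = {
    "Open Benchtable": {"temp_c": (42, 61), "initial_mhz": 1326, "final_mhz": (1298, 1301)},
    "Closed Case": {"temp_c": (43, 62), "initial_mhz": 1326, "final_mhz": (1281, 1294)},
    "OC (Open Benchtable)": {"temp_c": (43, 69), "initial_mhz": 1460, "final_mhz": (1451, 1460)},
}


def summarize(row: dict) -> dict:
    """Compute the temperature rise and the worst-case clock drop for one scenario."""
    temp_start, temp_end = row["temp_c"]
    final_low, _final_high = row["final_mhz"]
    worst_drop = row["initial_mhz"] - final_low
    return {
        "temp_rise_c": temp_end - temp_start,
        "worst_drop_mhz": worst_drop,
        "worst_drop_pct": worst_drop / row["initial_mhz"] * 100.0,
    }


summary = {name: summarize(row) for name, row in ROWS.items()}

for name, s in summary.items():
    print(
        f"{name}: +{s['temp_rise_c']}°C, "
        f"clock drop up to {s['worst_drop_mhz']} MHz ({s['worst_drop_pct']:.1f}%)"
    )
```

Read this way, the overclocked run shows the largest temperature rise and the smallest drop from its starting clock.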

In Depth: Temperature vs. Clock Frequency

To illustrate our results, we're presenting the data for 15 minutes of our warm-up phase:

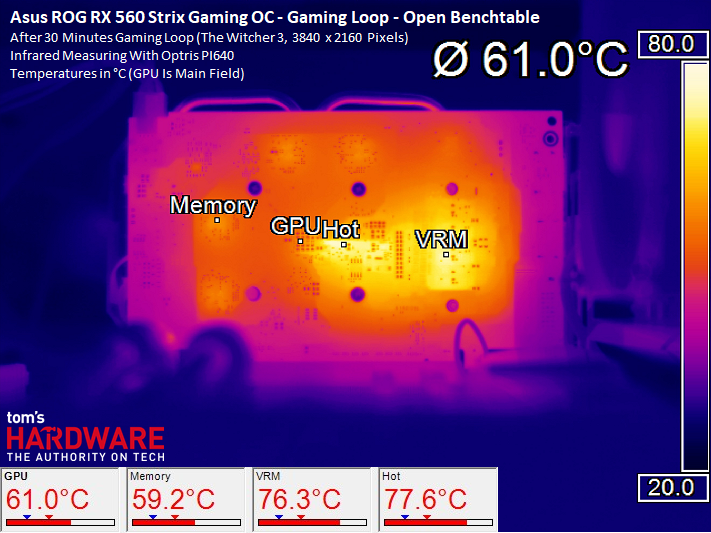

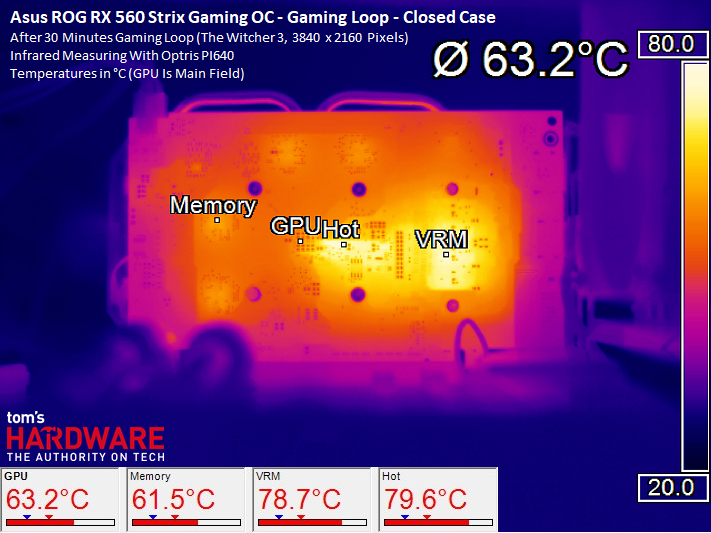

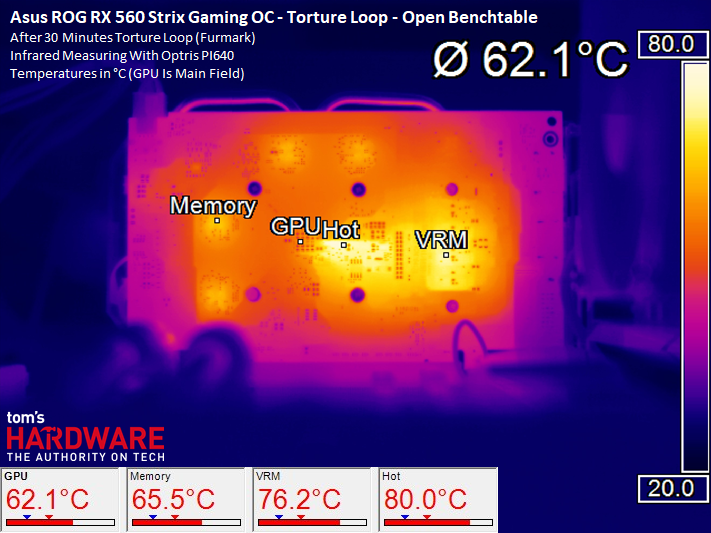

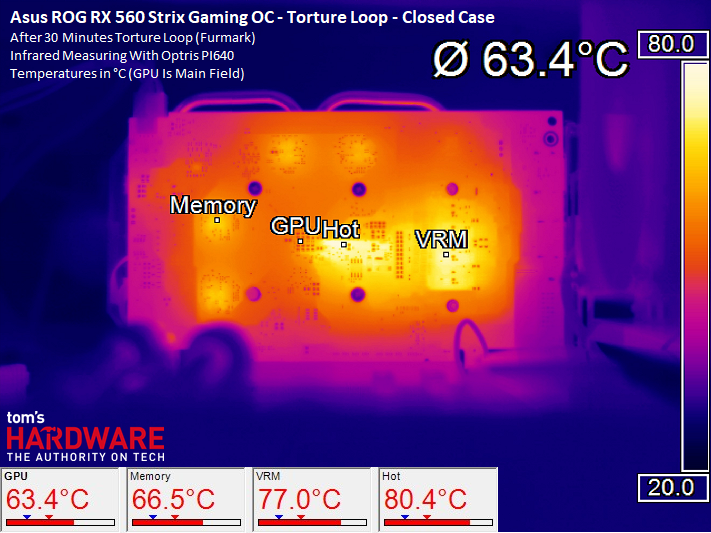

Infrared Temperature Analysis

Our infrared temperature analysis shows that the VRM area and a spot close to the GPU package get very hot. All of these values are still in an acceptable range, though. Ultimately, the 100W consumed by this card doesn't pose much of a challenge for its thermal solution.


RomeoReject: Cutting it by $20 would make it a $100 card. They'd likely be losing money at that price point.

firerod1 (quoting RomeoReject): "Cutting it by $20 would make it a $100 card. They'd likely be losing money at that price point."

I meant this card, since it's at the 1050 Ti's price while offering 1050 performance.

cryoburner (quoting the review): "...we couldn’t wait to see how Radeon RX 560 improved upon it."

Is that why you waited almost half a year to review the card? :3

shrapnel_indie (quoting cryoburner): "...we couldn’t wait to see how Radeon RX 560 improved upon it. Is that why you waited almost half a year to review the card? :3"

Did you read the review?

At the beginning of the conclusion:

"The pace at which new hardware hit our lab this summer meant we couldn’t review all of AMD’s Radeon RX 500-series cards consecutively."

Wisecracker: 4GB on the Radeon RX 560 = "Mining Card"

The minimal arch (even with the extra CUs) can't use 4GB for gaming like the big brother 570. The 2GB RX 560 even trades blows with its 4GB twin, along with the 2GB GTX 1050, at the $110-$120 price point for the gamer bunch.

Leave the RX 560 4GB for the "Entrepreneurial Capitalist" crowd ...

bit_user: I think your power dissipation for the 1050 Ti is wrong. While I'm sure some OC'd models use more, there are 1050 Tis with a 75 W TDP.

Also, I wish the RX 560 came in a low-profile version, like the RX 460 did (and the GTX 1050 Ti does). This excludes it from certain applications. It's the most raw compute available at that price & power dissipation.

senzffm123: Correct, I've got one of those 1050 Tis with a 75 W TDP in my rig. It doesn't have a power connector, either. Hell of a card!

turkey3_scratch: My RX 460 I bought for $120 back in the day (well, not that far back). There were some for $90 I remember, too. Seems like just an RX 460. Well, it is basically an RX 460.

jdwii: Man, AMD, what is up with your GPU division? For the first time ever, you're letting Nvidia walk all over you in performance per dollar, performance per watt, and overall performance. This is very sad.

Whatever AMD is doing with their architecture and leadership in the GPU division needs to change. I can't even think of a time 2 years ago and before where Nvidia ever offered a better value.