Nvidia GeForce GTX 1080 Graphics Card Roundup

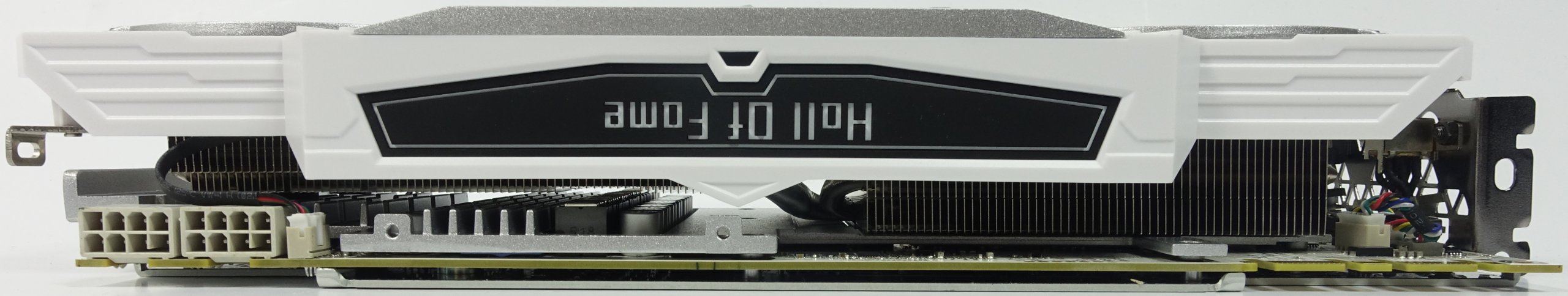

Galax/KFA² GTX 1080 Hall of Fame


This card, which is sold in Germany under the name KFA² GeForce GTX 1080 Hall of Fame, is marketed internationally using the Galax brand. Both are exactly the same, though. They come from Galaxy Microsystems, which also refers to itself as Galaxy and Galaxytech. You won't find the company's cards on Newegg or Amazon. Rather, the only way we've found to buy the 1080 HoF is through galax.com.

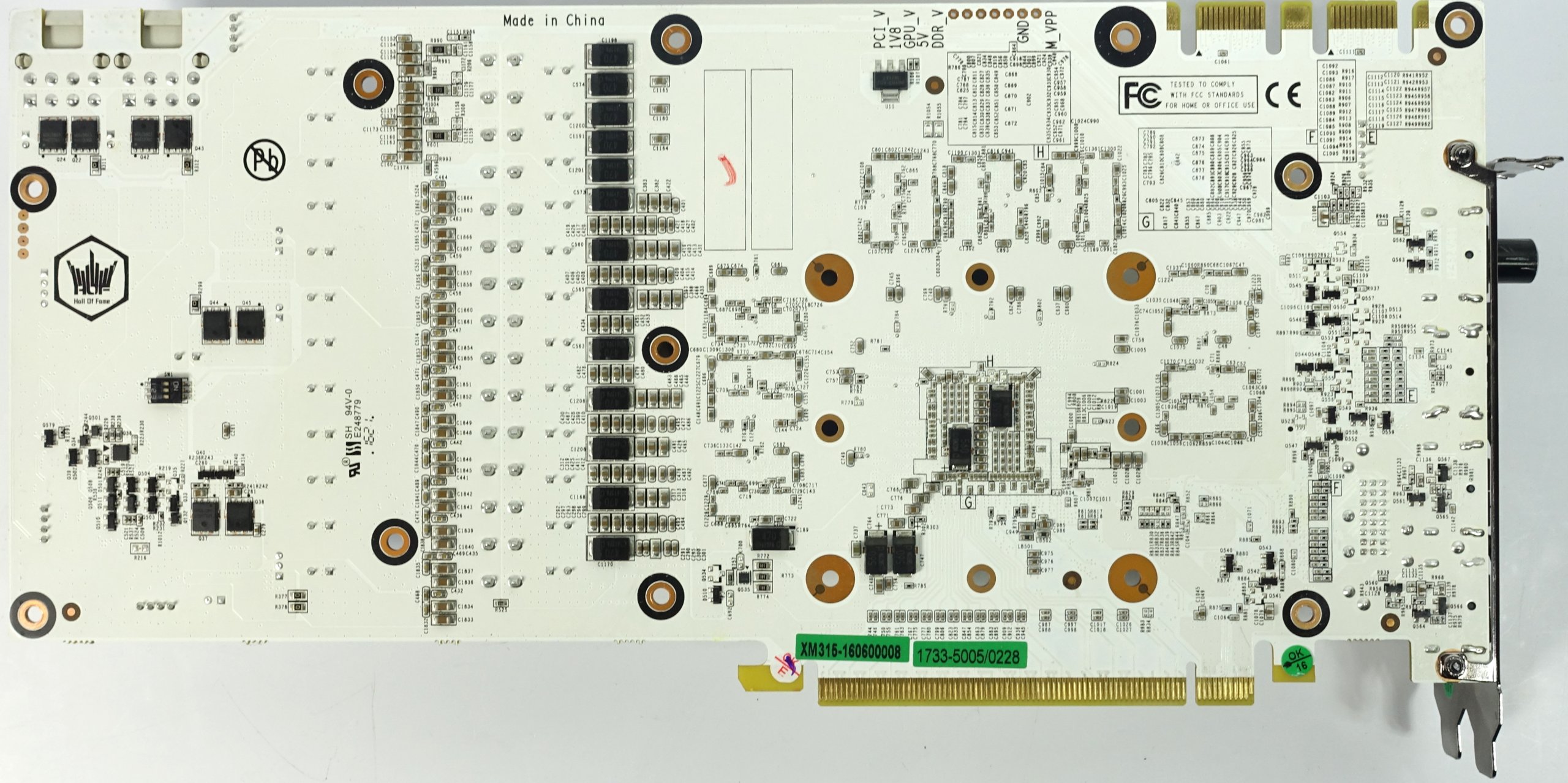

Why might you go to the trouble of ordering from the company's site? For starters, the 1080 Hall of Fame looks very different from its competitors. It sports a white shroud, white body panels, a white backplate, and even a white PCB. Anyone with an MSI Titanium-series motherboard (Z170A or X99) has a match made in heaven.

Beyond the premium paint scheme, we're also impressed by Galax's bundled extras. Alongside the more gimmicky items, you also get a structural support that keeps the heavy card from flexing in its slot.

Technical Specifications


Exterior & Interfaces

The cooler shroud is made of blindingly white plastic with aluminum highlights for additional eye candy. Overall, Galax went for a mechanical appearance with lots of bold edges and corners.

Weighing 46oz (1315g), this card is very heavy, which explains the support bracket in the box. At 12.5in (31.7cm) long, the 1080 Hall of Fame may be hard to fit in smaller cases. Its height of five and one-third inches (13.5cm), measured from the top edge of the motherboard slot, isn't modest either. Although this is a dual-slot card on paper, some smaller parts protrude an extra 2mm, resulting in a total width of 1.46in (3.7cm).
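The dimensions above are easier to compare against a case's spec sheet with both unit systems at hand. The following Python sketch converts the measured metric figures and checks them against GPU clearance limits; the `fits()` helper and the case limits in the example are hypothetical, not taken from any real chassis.

```python
# Sketch: convert the card's measured dimensions (from the review) to imperial
# units and check them against hypothetical case clearances.

MM_PER_INCH = 25.4
GRAMS_PER_OUNCE = 28.349523125

# Measured values quoted in the article (metric)
CARD_LENGTH_MM = 317
CARD_HEIGHT_MM = 135  # measured from the top edge of the motherboard slot
CARD_WIDTH_MM = 37
CARD_WEIGHT_G = 1315


def mm_to_in(mm: float) -> float:
    return mm / MM_PER_INCH


def g_to_oz(g: float) -> float:
    return g / GRAMS_PER_OUNCE


def fits(max_length_mm: float, max_height_mm: float, max_width_mm: float) -> bool:
    """Hypothetical helper: True if the card fits within the given clearances."""
    return (CARD_LENGTH_MM <= max_length_mm
            and CARD_HEIGHT_MM <= max_height_mm
            and CARD_WIDTH_MM <= max_width_mm)


if __name__ == "__main__":
    print(f"length {mm_to_in(CARD_LENGTH_MM):.2f} in")
    print(f"height {mm_to_in(CARD_HEIGHT_MM):.2f} in")
    print(f"width  {mm_to_in(CARD_WIDTH_MM):.2f} in")
    print(f"weight {g_to_oz(CARD_WEIGHT_G):.1f} oz")
    # Hypothetical compact case with a 300 mm GPU length limit
    print(fits(300, 150, 40))  # the 317 mm card is too long for this case
```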

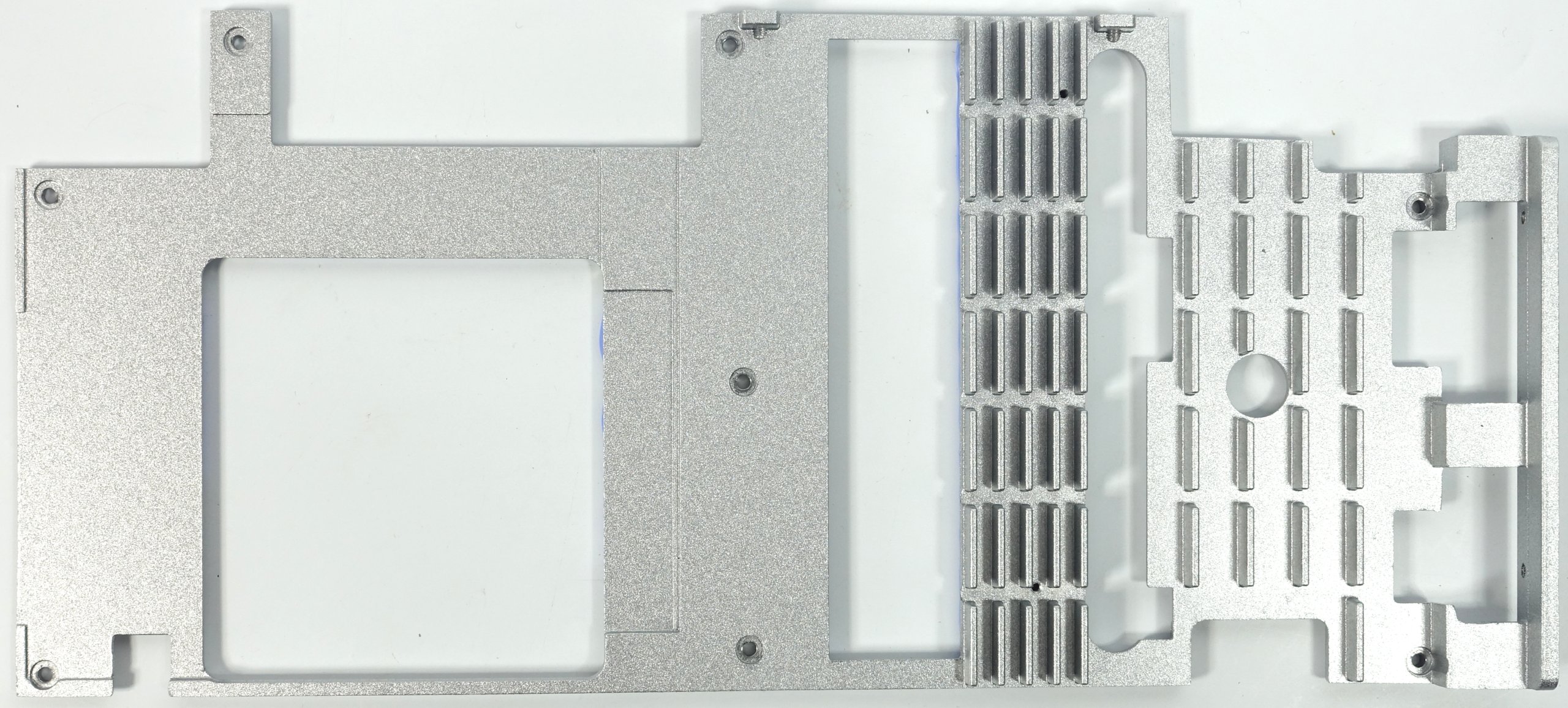

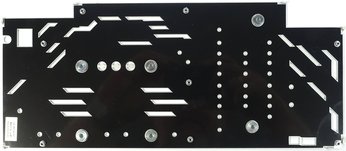

The back of the board is covered by a single-piece aluminum plate with ventilation holes cut into it. Plan for an additional one-fifth of an inch (5mm) in depth beyond the plate, which may become relevant in multi-GPU configurations, particularly if your motherboard's PCIe slots are two spaces apart.

We strongly discourage using this heavy card without its backplate. Even with the front-side mounting and bundled cooling frame, structural stability would suffer significantly without that support.

The top of the card features a back-lit "Hall of Fame" logo and two white auxiliary power connectors.

Vertically oriented fins prevent air from exiting through the front or back of the card. A button on the output bracket sets the fans to run at maximum speed; it serves no other purpose and definitely isn't a BIOS switch.

The rear bracket features five outputs, of which a maximum of four can be used simultaneously in a multi-monitor setup. In addition to one dual-link DVI-D connector (be aware that there is no analog signal), the bracket also exposes one HDMI 2.0b and three DisplayPort 1.4-ready outputs. The rest of the plate is mostly solid, with several openings cut into it that look like they're supposed to improve airflow, but don't actually do anything.

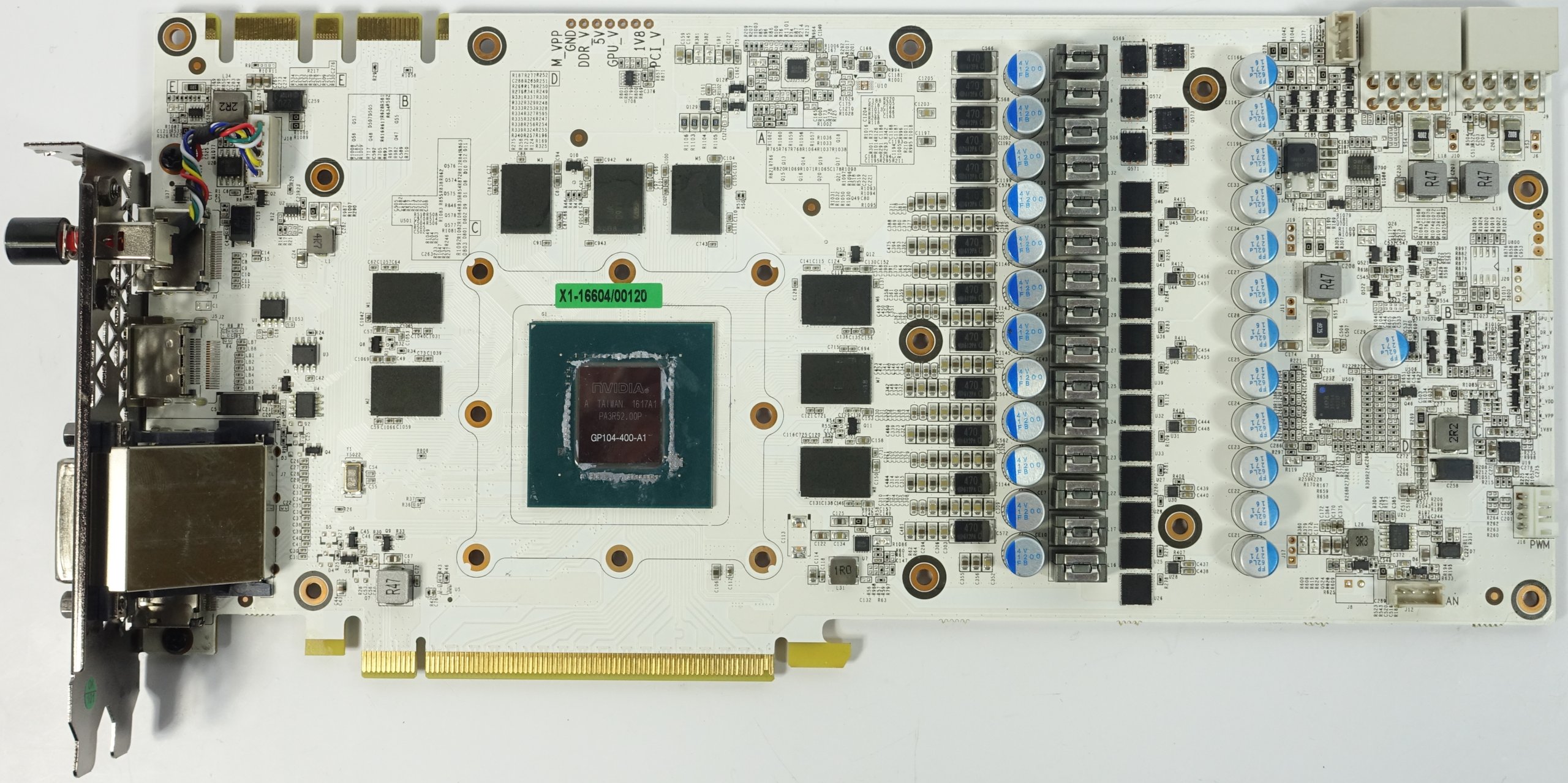

Board & Components

As far as memory is concerned, the 1080 HoF is the same as its competition. It uses GDDR5X memory modules from Micron, which Nvidia sells to board partners along with its GPU. Eight memory chips (MT58K256M32JA-100) transferring at 10 GT/s are attached to a 256-bit interface, allowing for a theoretical bandwidth of 320 GB/s.
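As a quick check on the 320 GB/s figure: peak bandwidth is the bus width in bits times the per-pin data rate, divided by eight bits per byte. This sketch assumes each MT58K256M32 device contributes a 32-bit slice of the bus (the "M32" in the part number) and a per-pin rate of 10 Gb/s, which is what a 256-bit GDDR5X interface needs to reach 320 GB/s; the function name is our own.

```python
# Theoretical peak memory bandwidth = bus width (bits) x per-pin rate (Gb/s) / 8.

def peak_bandwidth_gb_s(bus_width_bits: int, data_rate_gbps_per_pin: float) -> float:
    """Return theoretical bandwidth in GB/s (decimal gigabytes)."""
    return bus_width_bits * data_rate_gbps_per_pin / 8


# Eight 32-bit GDDR5X devices form the 256-bit interface (assumed x32 parts).
CHIPS = 8
BITS_PER_CHIP = 32
bus_width = CHIPS * BITS_PER_CHIP

print(bus_width, peak_bandwidth_gb_s(bus_width, 10.0))
```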

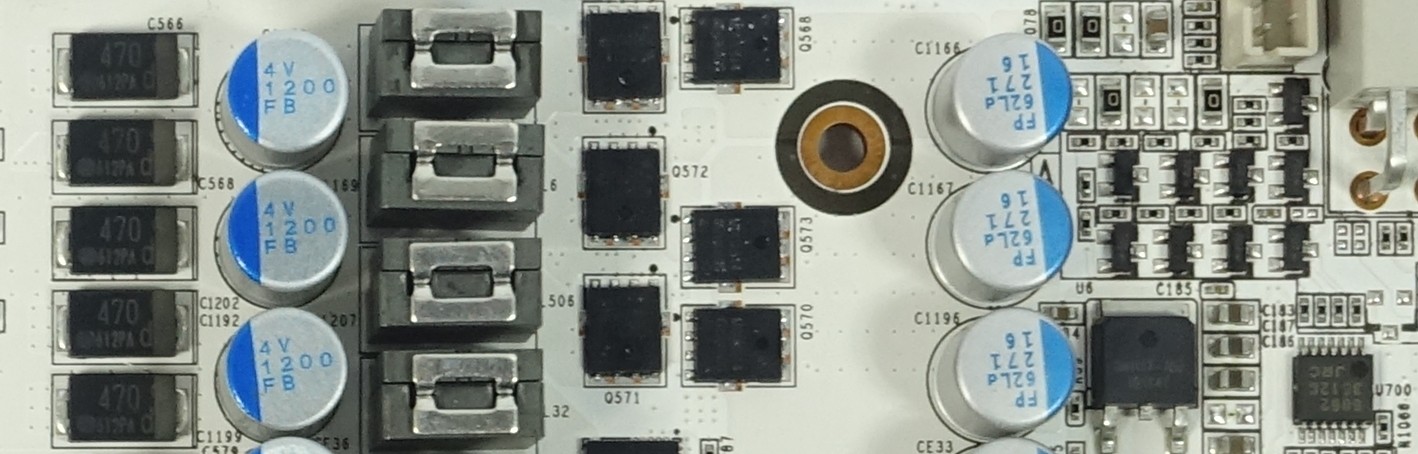

The rest of the PCB is considerably more interesting, though. KFA²/Galax proudly advertises a 12+3-phase layout. That's not technically true, so how does the company arrive at the number on its marketing material?

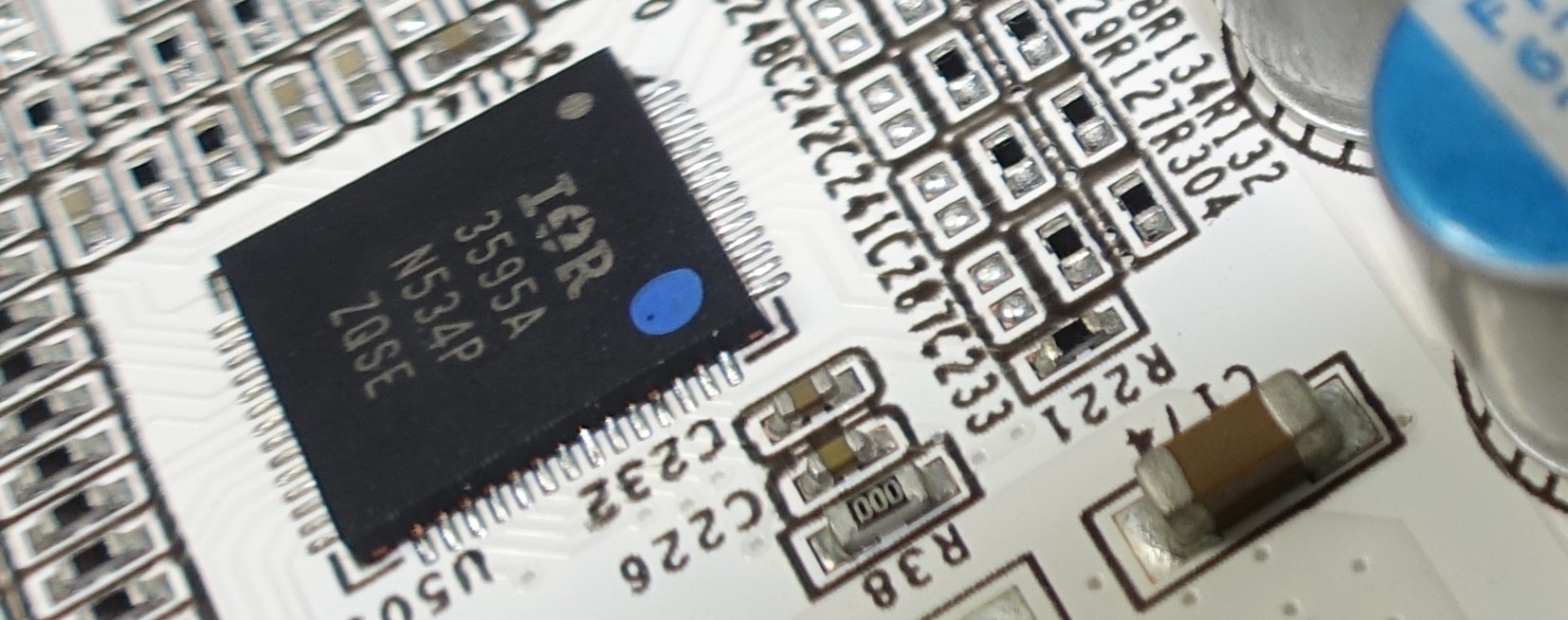

Let's start with a look at the GPU's voltage regulation. Unlike other manufacturers, KFA²/Galax went with International Rectifier's good old IR3595A, which we used to find on a lot of AMD graphics cards. This chip can be used as either a single-loop or dual-loop PWM controller. All six phases provided in loop one are used for the GPU. The rest are regulated by other means.

KFA² likely chose the IR3595A, even though it still uses VRM11 rather than OVR4, because it gives firmware programmers more customization options and complete control over voltage regulation. It also offers distinct advantages for extreme overclocking, since the chip supports additional extensions. On the other hand, it may conflict with the sensor polling of third-party tools like GPU-Z if monitoring is enabled.

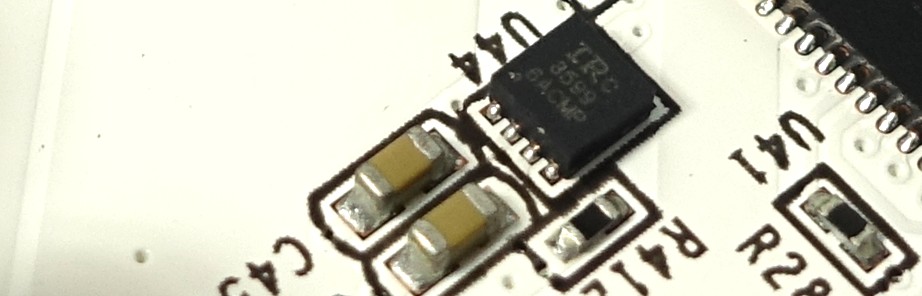

But where does the 12-phase marketing claim come from? The 1080 HoF uses a small IR3599, a so-called doubler that splits each phase into two individual converter circuits. Thus, there are six true phases and 12 converter circuits.
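The doubling scheme can be pictured as a simple mapping from PWM phases to power stages. This is a hypothetical model, assuming the doubler steers alternate pulses of its input phase to its two outputs so that each stage fires every other cycle; it illustrates the six-phases-to-12-circuits arithmetic, not the IR3599's actual internal logic.

```python
# Hypothetical model: a 6-phase PWM controller feeding phase doublers.
# Assumption: each doubler alternates successive pulses of one phase between
# its two outputs, so every power stage switches on every other cycle.

PWM_PHASES = 6
STAGES_PER_PHASE = 2


def stage_for_pulse(phase: int, pulse_index: int) -> int:
    """Return the converter (power stage) index that handles a given pulse."""
    if not 0 <= phase < PWM_PHASES:
        raise ValueError("phase out of range")
    return phase * STAGES_PER_PHASE + (pulse_index % STAGES_PER_PHASE)


total_stages = PWM_PHASES * STAGES_PER_PHASE
# Over four consecutive pulses on phase 0, stages 0 and 1 take turns.
sequence = [stage_for_pulse(0, n) for n in range(4)]
print(total_stages, sequence)
```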

This isn't a new trick, but it does improve the distribution of current, creating a larger cooling area for the MOSFETs. Furthermore, connecting the converters in parallel reduces the circuit's overall internal resistance.
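Why does spreading the current help? Conduction loss in a resistive path is P = I²R, so dividing a current evenly across n identical paths cuts the total loss to I²R/n. The sketch below uses illustrative placeholder values for the rail current and per-path resistance, not measurements from this card, purely to show the effect of going from six to 12 paths.

```python
# Conduction loss with n identical parallel paths:
# each path carries I/n, so total loss = n * (I/n)^2 * R = I^2 * R / n.

def conduction_loss_w(total_current_a: float, path_resistance_ohm: float, paths: int) -> float:
    per_path_current = total_current_a / paths
    return paths * per_path_current ** 2 * path_resistance_ohm


I_TOTAL = 120.0  # hypothetical GPU rail current, amps
R_PATH = 0.001   # hypothetical resistance of one converter path, ohms

for n in (6, 12):
    print(n, round(conduction_loss_w(I_TOTAL, R_PATH, n), 2))
```

Doubling the number of paths halves the conduction loss, which is the benefit the doubler design is after.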

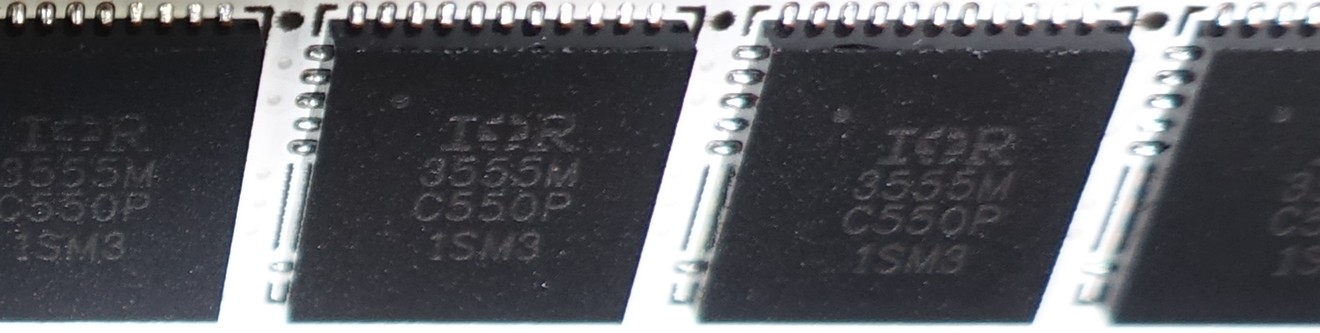

Since such a high number of converter circuits also leads to a shortage of space, this card uses the highly integrated IR3555, which combines high- and low-side MOSFETs, a gate driver, and a Schottky diode on a single chip, freeing up a little bit of board real estate. Spoiler alert: compared to EVGA's GTX 1080 FTW, this concept works very well when it comes to cooling the card.

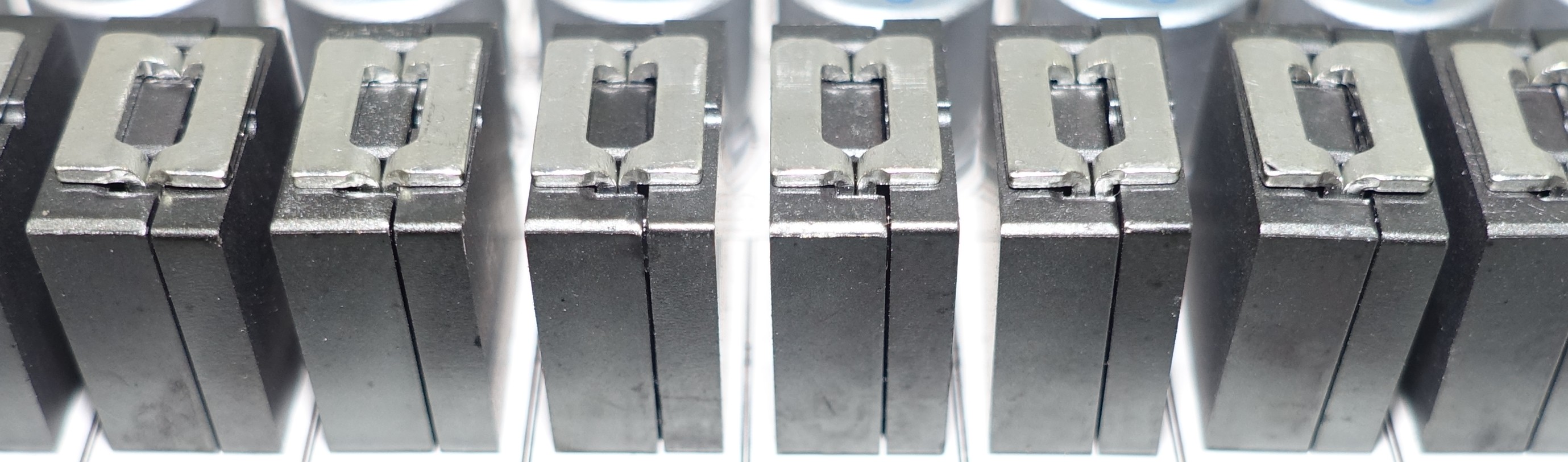

To fit all of the requisite components on its circuit board (12 coils for the GPU and three for the memory/memory controller), Galax relies on vertically stacked coils encapsulated in a ferrite frame. They're more susceptible to vibration, though, and tend to buzz. We'll explore their impact on acoustics shortly.

Three phases are reserved for the GDDR5X and memory controller, employing conventional N-channel MOSFETs on the high side (MDU 1514) and low side (MDU 1511), along with an external gate driver. A uP9509 serves as the PWM controller. The coils are the same ones used for the GPU.

Current monitoring is handled by an INA3221. Two familiar capacitors are installed right below the GPU to absorb and equalize peaks in voltage.
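The INA3221 is a three-channel shunt and bus voltage monitor: current is derived from the small voltage drop across a shunt resistor. This Python sketch decodes raw register values into volts, amps, and watts. It assumes the register format as we understand TI's datasheet (16-bit left-justified two's complement with three unused LSBs, a 40 µV shunt LSB, and an 8 mV bus LSB) and a hypothetical 5 mΩ shunt, since the card's actual shunt values aren't given. It does not talk to the chip over I²C.

```python
# Sketch: convert raw INA3221 register values to volts, amps and watts.
# Assumed format: 16-bit, left-justified, two's complement, 3 LSBs unused.

SHUNT_LSB_V = 40e-6          # volts per count (shunt voltage register)
BUS_LSB_V = 8e-3             # volts per count (bus voltage register)
SHUNT_RESISTOR_OHM = 0.005   # hypothetical shunt value


def _to_signed16(raw: int) -> int:
    raw &= 0xFFFF
    return raw - 0x10000 if raw & 0x8000 else raw


def decode(raw: int, lsb_v: float) -> float:
    """Drop the three unused LSBs (arithmetic shift keeps the sign) and scale."""
    return (_to_signed16(raw) >> 3) * lsb_v


def channel_power_w(raw_shunt: int, raw_bus: int) -> float:
    current_a = decode(raw_shunt, SHUNT_LSB_V) / SHUNT_RESISTOR_OHM
    bus_v = decode(raw_bus, BUS_LSB_V)
    return bus_v * current_a


# Example raw values: 625 counts << 3 (25 mV across the shunt, i.e. 5 A)
# and 1500 counts << 3 (12.0 V on the bus).
print(round(channel_power_w(625 << 3, 1500 << 3), 3))
```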

Power Results

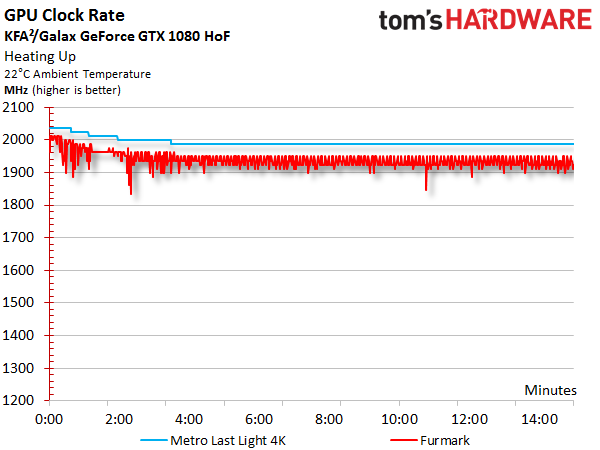

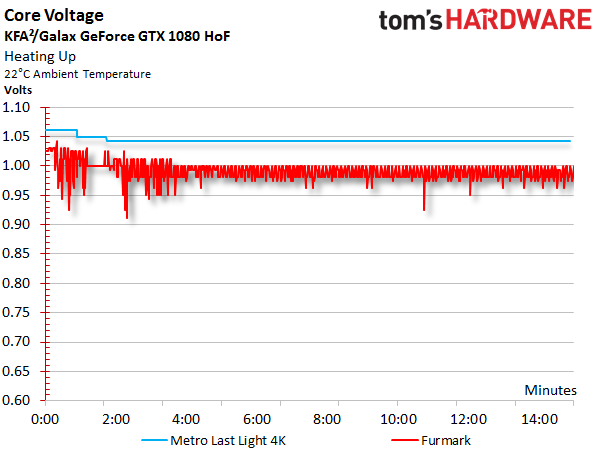

Before we look at power consumption, we should talk about the correlation between GPU Boost frequency and core voltage, which show such striking similarity that we deliberately put their graphs one after the other. KFA²/Galax sets a very high power target that facilitates a relatively constant GPU Boost frequency. It only drops slightly as the temperature increases, and our voltage readings mirror that behavior.

After warming up in a variable-load gaming scenario, the GPU Boost clock rate that started at 2025 MHz stabilizes at 1987 MHz, and then slides to 1923 MHz under constant load. While we measure up to 1.062V at first, voltage later drops to an average of 1.043V.

Summing up measured voltages and currents, we arrive at a total consumption figure we can easily confirm with our test equipment by monitoring the card's power connectors.
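Conceptually, that total is the sum of voltage times current across every supply rail feeding the card: the PCIe slot's 3.3V and 12V lines plus the auxiliary power connectors. A minimal sketch follows; the readings are hypothetical placeholders, not measured data from this review.

```python
# Board power = sum of (voltage x current) over all supply rails.
from dataclasses import dataclass


@dataclass
class RailSample:
    name: str
    volts: float
    amps: float

    @property
    def watts(self) -> float:
        return self.volts * self.amps


def total_power_w(samples: list[RailSample]) -> float:
    return sum(s.watts for s in samples)


# Hypothetical readings for illustration only
readings = [
    RailSample("PCIe slot 3.3V", 3.3, 0.4),
    RailSample("PCIe slot 12V", 12.0, 3.5),
    RailSample("Aux connector 1 (12V)", 12.0, 5.0),
    RailSample("Aux connector 2 (12V)", 12.0, 5.0),
]
print(round(total_power_w(readings), 2))
```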

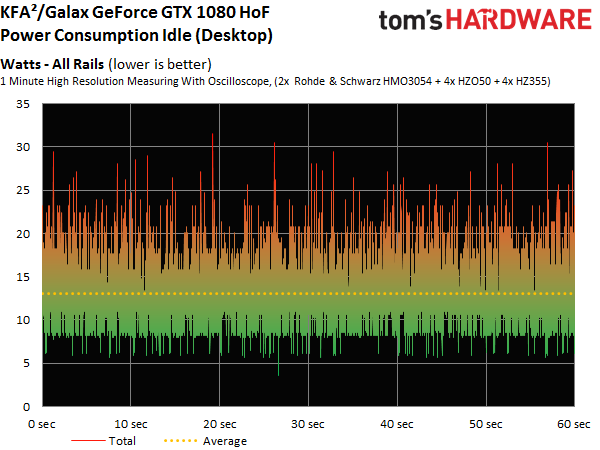

Because of Nvidia's restrictions, manufacturers sacrifice the lowest frequency bin to gain an extra GPU Boost step. As a result, the GTX 1080 HoF idles at 278 MHz, and its idle power consumption is disproportionately high.

| Scenario | Power Consumption |
|---|---|
| Idle | 13W |
| Idle Multi-Monitor | 16W |
| Blu-ray | 15W |
| Browser Games | 118-139W |
| Gaming (Metro Last Light at 4K) | 193W |
| Torture (FurMark) | 239W |

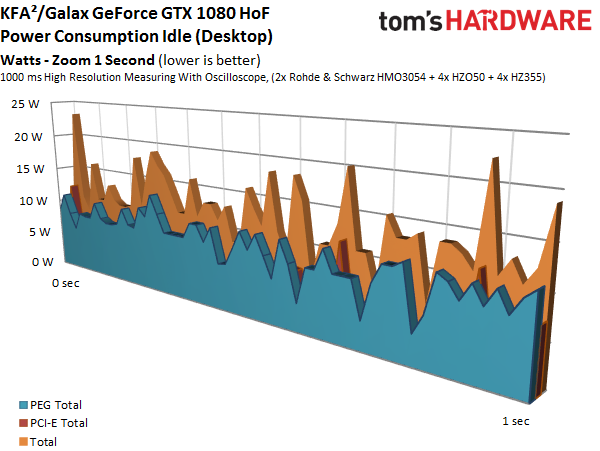

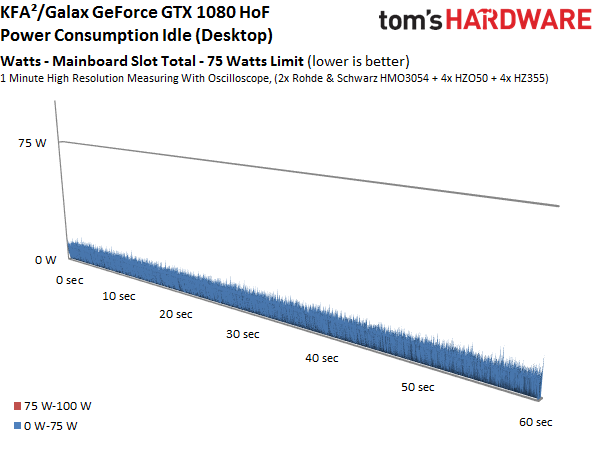

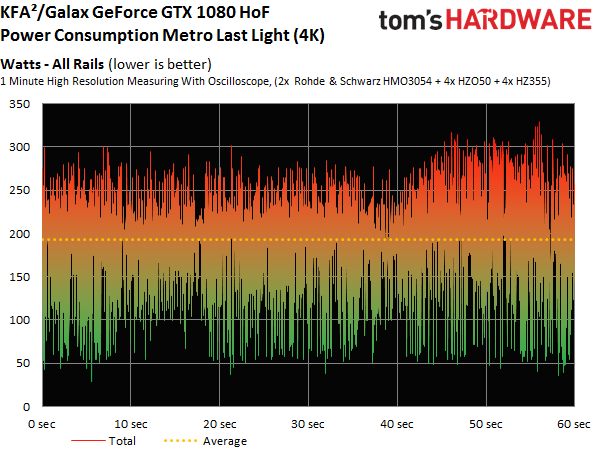

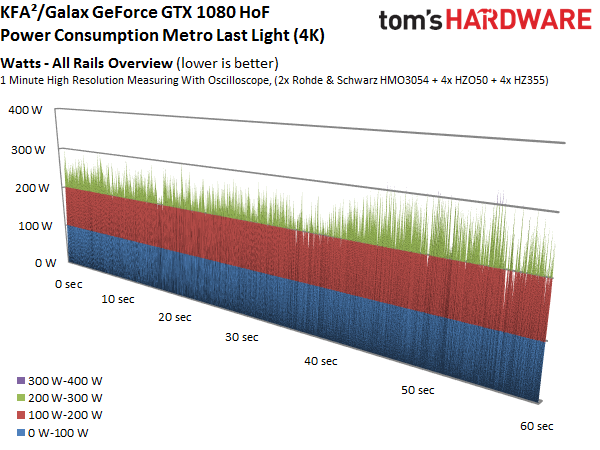

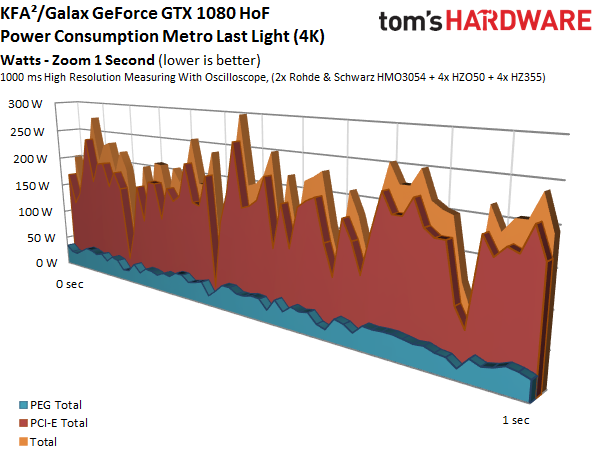

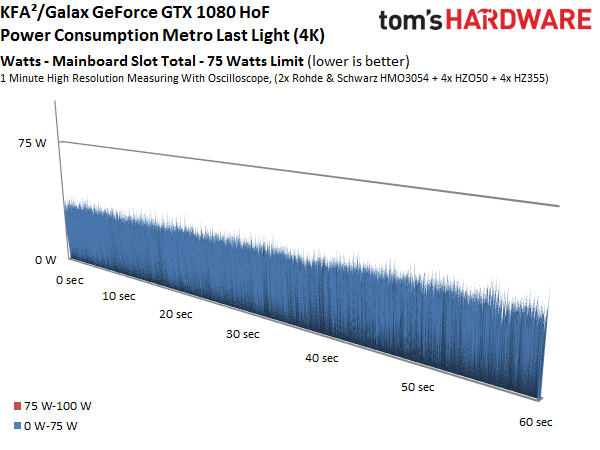

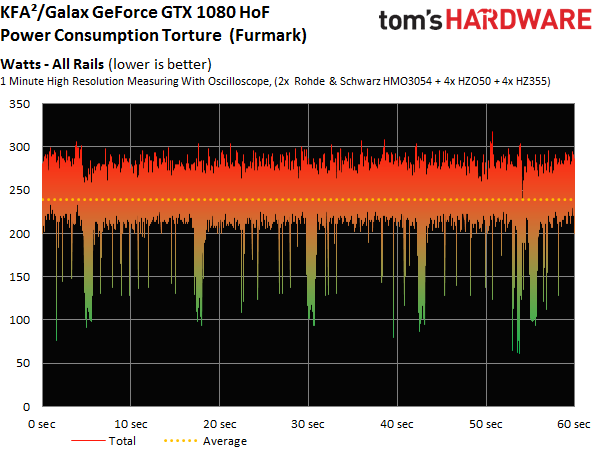

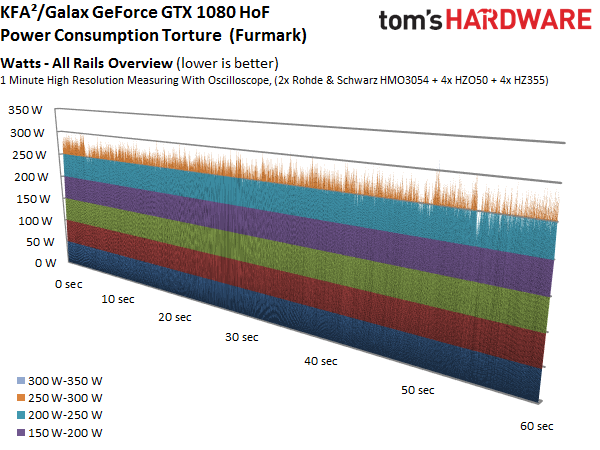

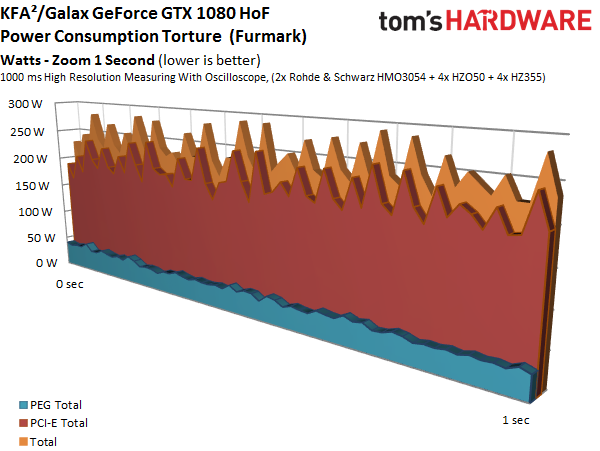

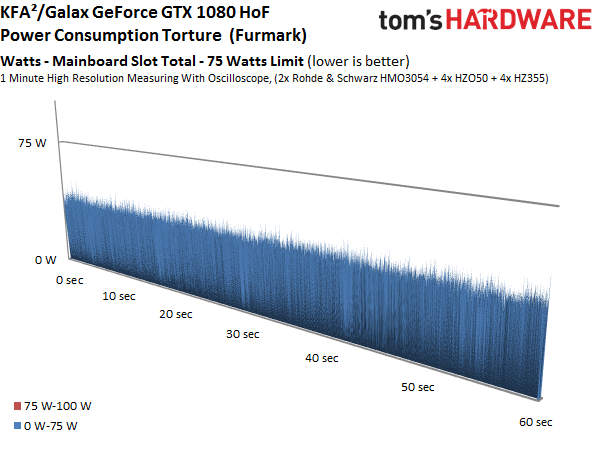

Now let's take a more detailed look at power consumption when the card is idle, when it's gaming at 4K, and during our stress test. The graphs show the distribution of load across each voltage and supply rail, providing a bird's-eye view of variations and peaks:

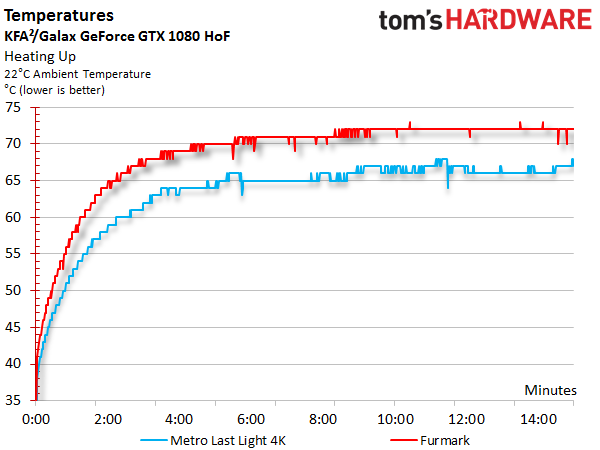

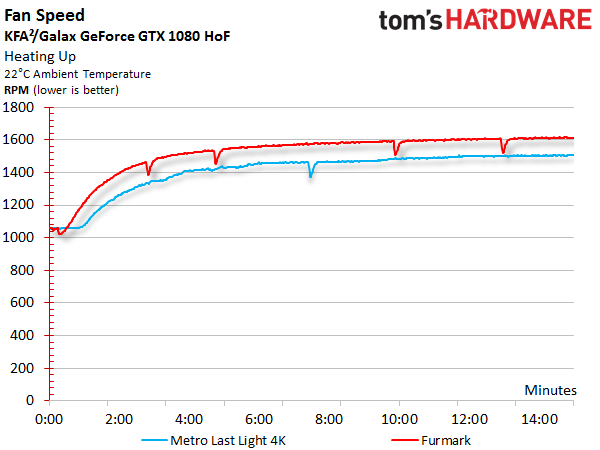

Temperature Results

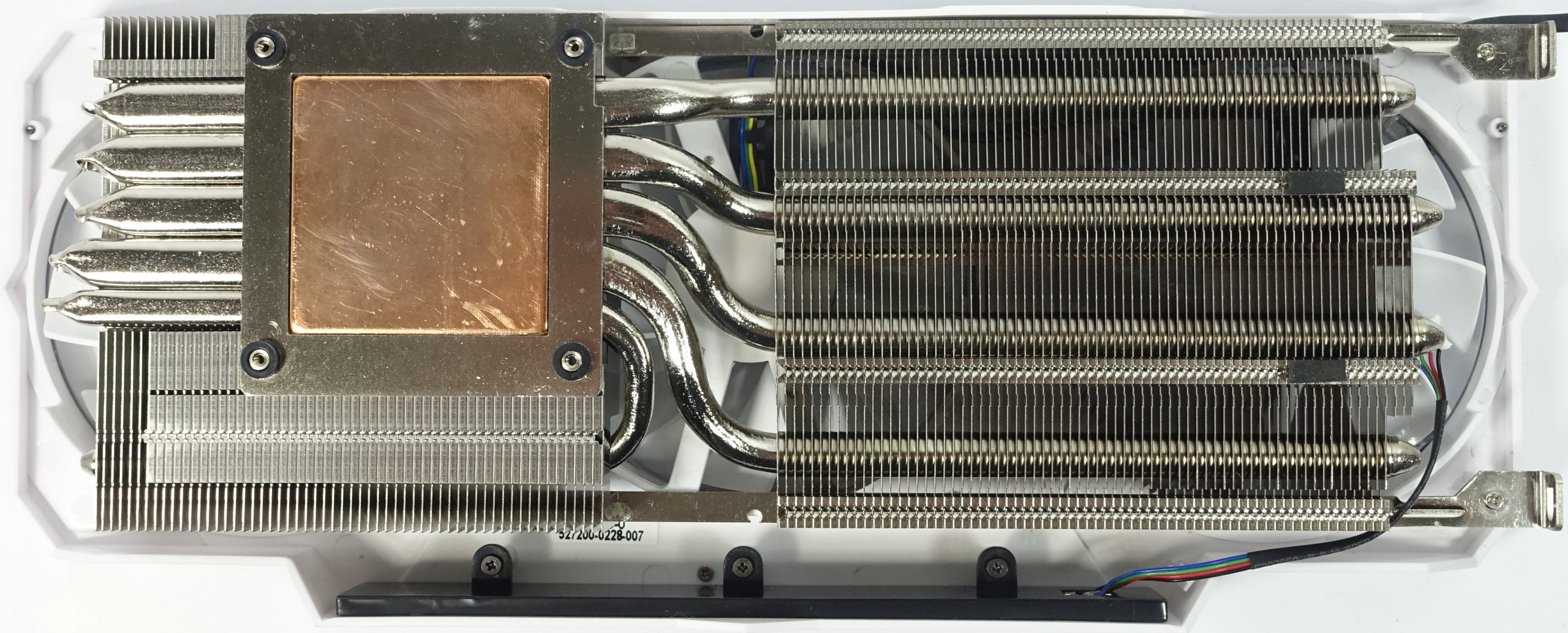

Naturally, heat output is directly related to power consumption, and the 1080 HoF's ability to dissipate that thermal energy can only be understood by looking at its cooling solution.

As with the 1080 Founders Edition, the backplate is mostly aesthetic; it doesn't serve much practical purpose. At best, it helps with the card's structural stability. But the thermal solution is completely sufficient anyway, as our benchmarks show.

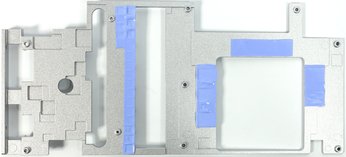

KFA² applies a black coating to the back of its plate, improving the absorption of heat. The mounting and cooling frame is made from injection-molded aluminum and placed on top of the PCB. Then it's attached to the backplate with several screws. This structure, which sandwiches the board, also cools the voltage regulators and memory modules through thermal pads.

Why does this concept work so well on the GTX 1080 HoF and not on EVGA's GTX 1080 FTW? Looking at the top of the frame, we see a number of fins that not only increase the cooler's surface area but also take better advantage of available airflow.

The heat sink itself is one of the largest and heaviest you'll find, aside from the solutions that Palit and Gainward use. But KFA²/Galax doesn't stop there.

The cooler relies on a copper block topped with one quarter-inch (6mm) pipe and three more measuring one-third of an inch (8mm) in diameter. During our gaming test, we observed temperatures of only 151°F (66°C) on an open bench, and 154°F (68°C) to 156°F (69°C) in a closed case. Clearly this card's thermal performance is good.

But the high power target does take its toll during our stress test. Power consumption as high as 240W heats the GPU up to 162°F (72°C) on an open bench and 165°F (74°C) in a closed case. Then again, none of us actually play FurMark.
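The Fahrenheit figures in these two paragraphs follow from °F = °C × 9/5 + 32, rounded to the nearest degree. A trivial check that reproduces them:

```python
# Convert Celsius readings to Fahrenheit, rounded to the nearest degree.

def c_to_f(celsius: float) -> int:
    return round(celsius * 9 / 5 + 32)


for c in (66, 68, 69, 72, 74):
    print(f"{c}°C = {c_to_f(c)}°F")
```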

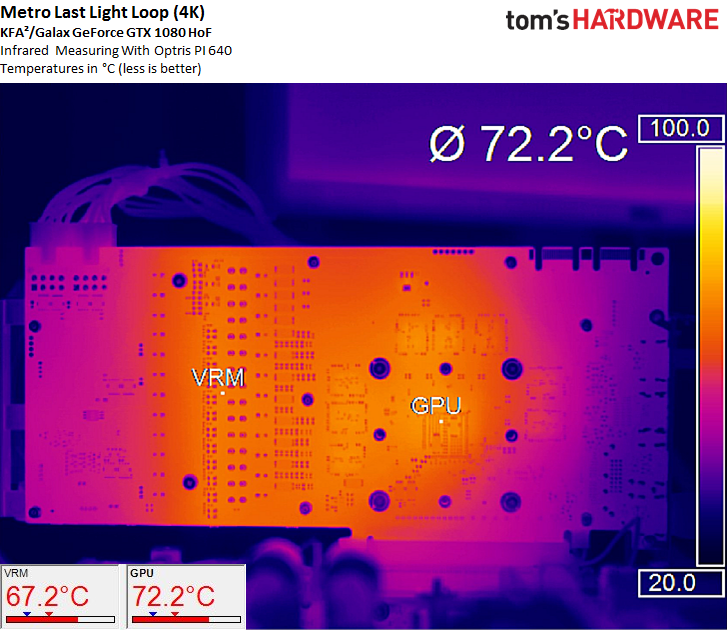

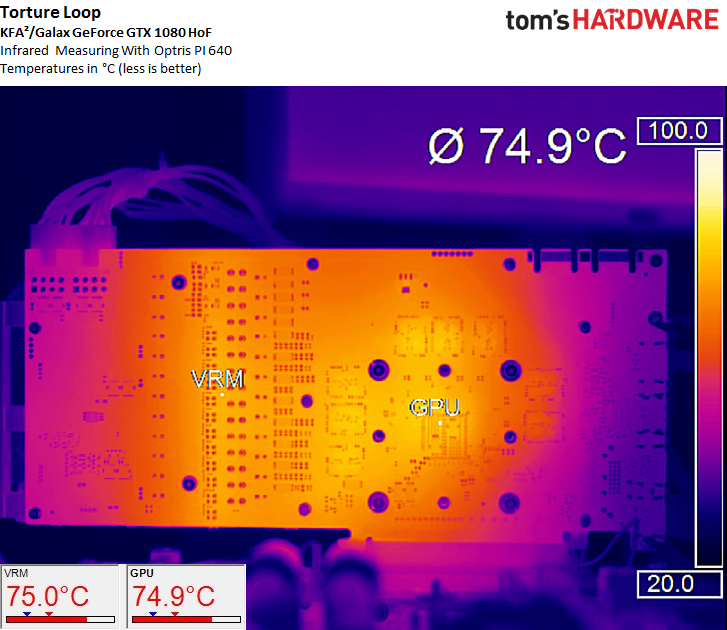

One look at the infrared images reveals the effectiveness of well-planned VRM and memory cooling, even without the need for additional built-in heat sinks. On the other hand, KFA²/Galax's massive mounting/cooling frame wouldn't fit on smaller cards.

A reading of 154°F (68°C) below the armada of VRMs sets a new record, pleasantly surprising us in the process. The GPU enjoys competent cooling as well. In the end, the package is hotter than its graphics processor.

During our stress test, the cooler has to handle an additional 46W, driving temperatures higher. Yet even a reading of 167°F (75°C) is still mild compared to some of the other cards we're benchmarking.

Sound Results

We started looking at Galax's 1080 HoF back in July, and the initial results identified some acoustic issues. Our German team contacted the manufacturer directly and, together with its R&D department, solved the problems we wrote about. The newest cards have been modified according to our guidance, and as of August 2016, they're in mass production. If you buy today, you'll get one of the improved boards.

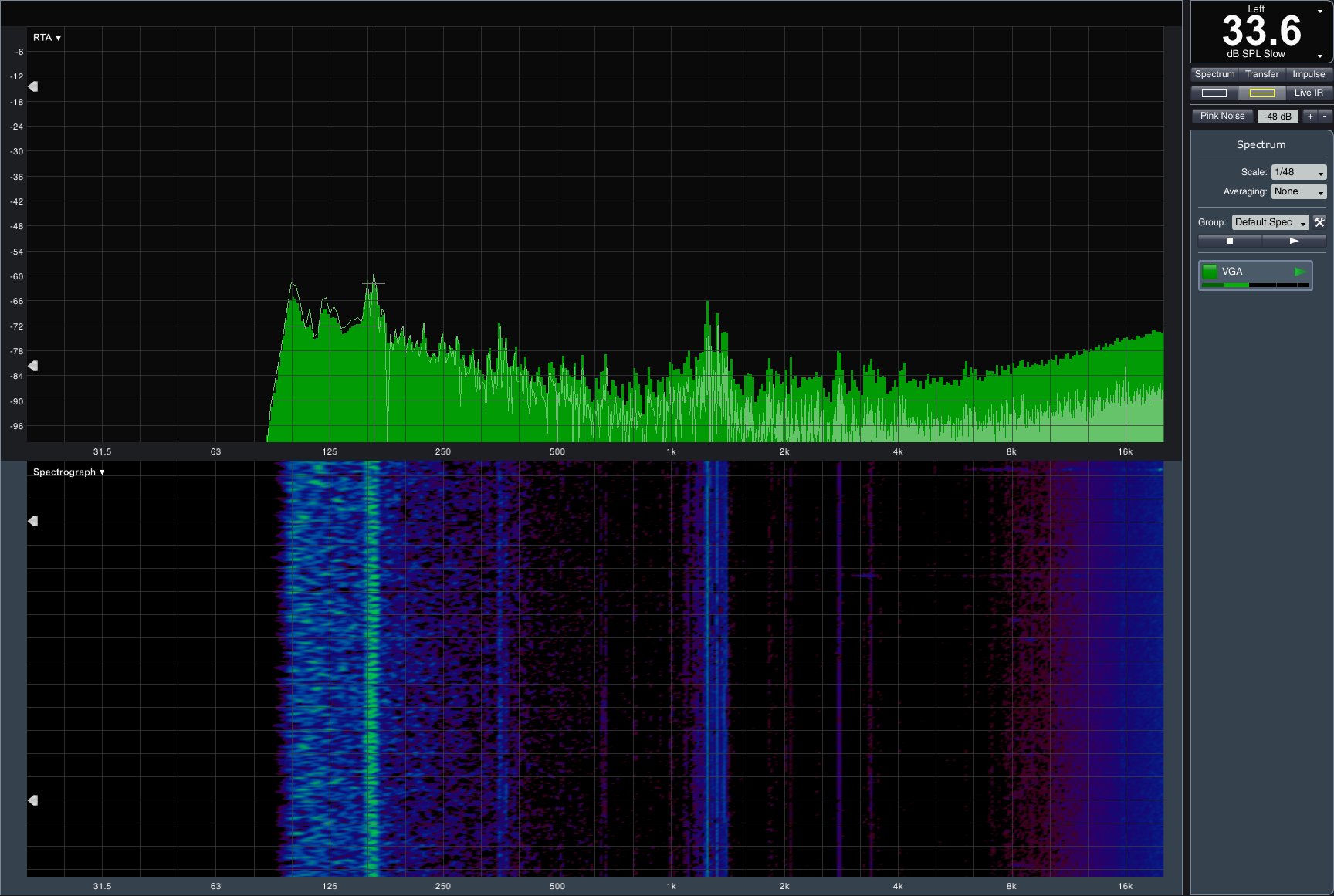

Let's start with the fan curve, which shows that KFA²/Galax made a conscious decision to eschew semi-passive operation, even at idle. Our original complaint was the relatively high 33 percent minimum duty cycle. This is what we saw:

A 33.6 dB(A) result is hardly what you'd call quiet. Our first attempt to tweak the fan controls down to 28 percent duty cycle still left us at 32.6 dB(A). Low-frequency peaks were particularly obtrusive, and the sandwiched PCB caused those sounds to resonate in our chassis.

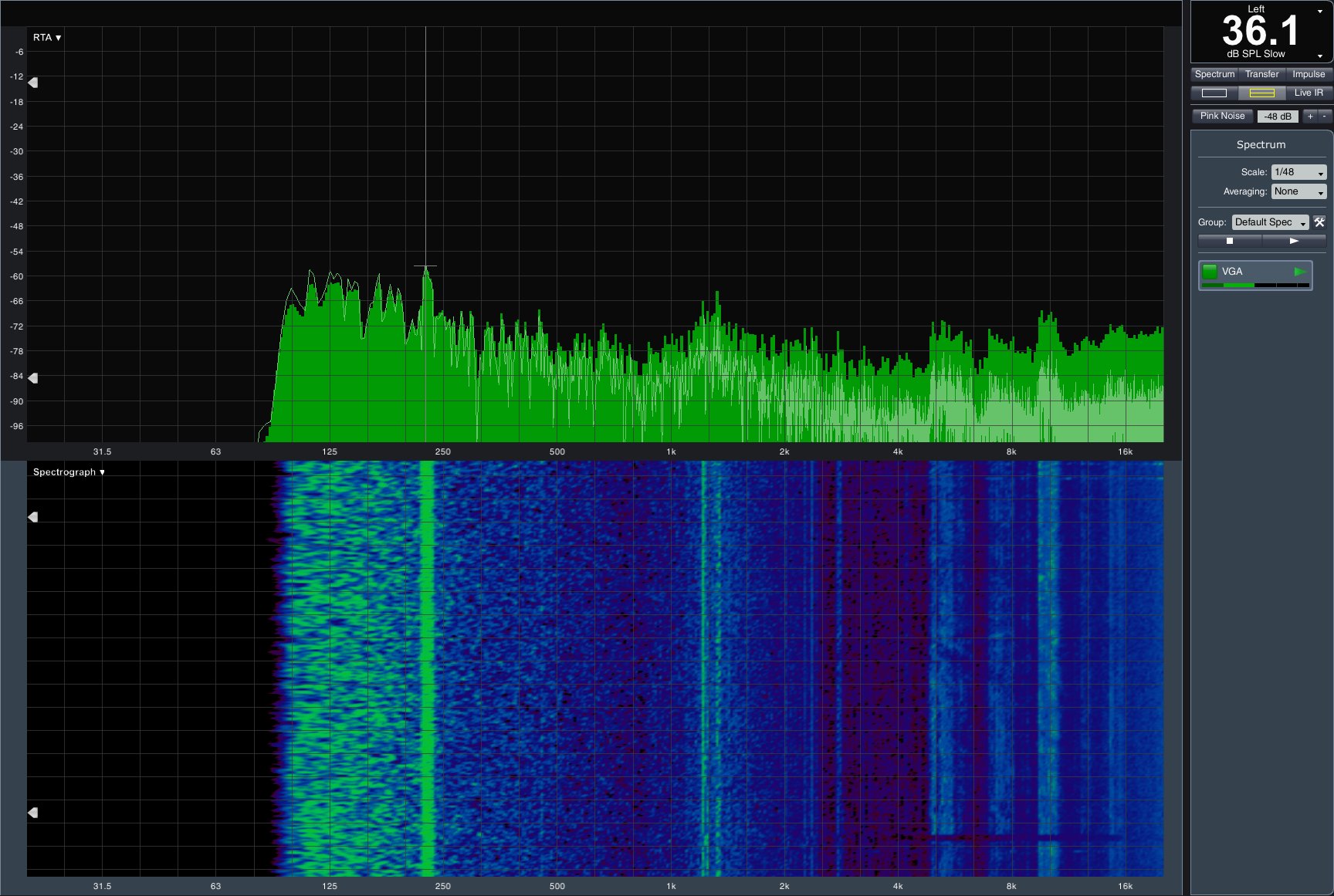

Under full load, the fans wound up to maximum performance and became even more noticeable. During our stress test they peaked near 38 dB(A). We could have lived with 36.1 dB(A) during our gaming loop, but those low frequencies just dominated the otherwise tolerable sounds.

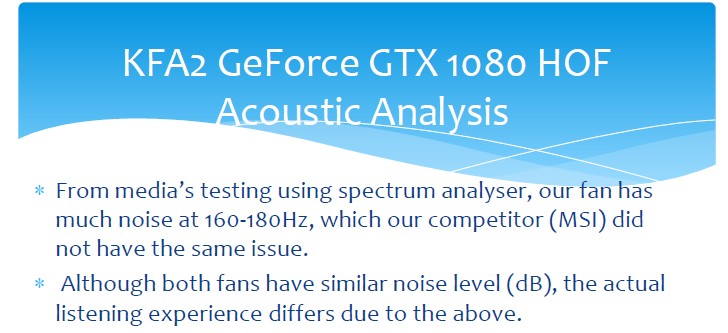

After the testing we published in July, KFA²/Galax passed our measurements on to its fan supplier with a request for comment and a demand for improvement. When the dust cleared, the manufacturer provided a history of the issue.

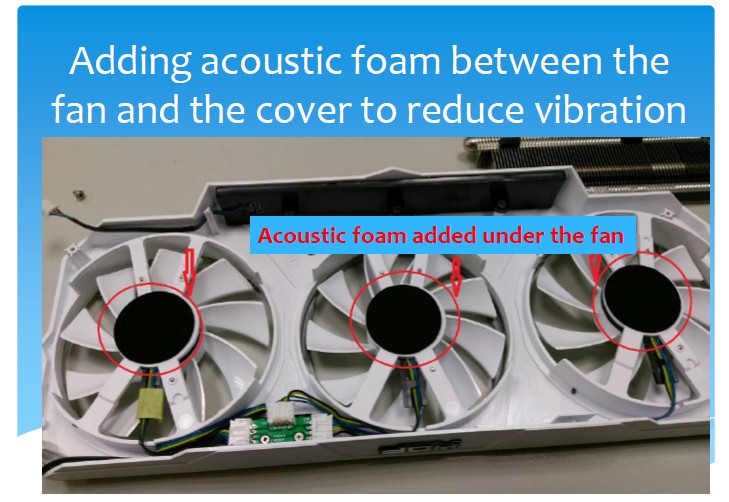

In almost every card, regardless of vendor, the fan modules are screwed directly to the cover. Depending on the resonance frequency of this structure, there's a chance it'll match the fan noise and form an acoustically disastrous alliance. To prevent that, Galax installs those modules without any direct contact with the cover.

The fan vendor's measurements not only confirmed our objections, but also demonstrated the effectiveness of its solution. This validation included a blind test carried out with employees and third parties.

As the saying goes, trust but verify. That's why we want to get our hands on current versions of the KFA²/Galax GeForce GTX 1080 Hall of Fame and GeForce GTX 1070 Hall of Fame so we can retake our measurements. We'll publish the results in an update when this happens.


Igor Wallossek wrote a wide variety of hardware articles for Tom's Hardware, with a strong focus on technical analysis and in-depth reviews. His contributions have spanned a broad spectrum of PC components, including GPUs, CPUs, workstations, and PC builds. His insightful articles provide readers with detailed knowledge to make informed decisions in the ever-evolving tech landscape.


ledhead11: Love the article!

I'm really happy with my two Xtremes. Last month I cranked our A/C to 64°F, closed all the vents in the house except the one over my case, and set the fans to 100%. I was able to game at 2-2.1GHz all day at 4K. It was interesting to see GPU usage drop a couple of percent while fps gained a few at 4K, and I was able to keep temps below 60°C.

After it was all said and done, though, the noise wasn't really worth it. Stock settings are just barely louder than my case fans, and I only lose 1-3 fps at 4K compared to that experiment. Temps almost never go above 60°C in a room around 70-74°F. My mobo has three-slot spacing, which I believe gives the cards a little more breathing room.

The Zotacs were actually my first choice, but Gigabyte made it so easy on Amazon, and all the extra stuff was pretty cool.

I ended up recycling one of the SLI bridges for my old 970s, since my board needed the longer one from Nvidia. All in all, a great value in my opinion.

One bad thing I forgot to mention, and it's in many customer reviews, videos, and a fair number of images: bent fins on a corner of the card. The foam packaging slightly bends one of the corners on the cards. You see it right when you open the box. It's very easily fixed and happened on both of mine. To me, not a big deal, but again worth mentioning.

redgarl: The EVGA FTW is a piece of garbage! The video signal drops randomly and makes my PC crash on Windows 10. Not only that, but my first card blew up after 40 days. I am on my second one, and I am getting rid of it as soon as Vega is released. EVGA dropped the ball hard on this card. Their engineering design and quality assurance are as bad as Gigabyte's. This card's VRAM literally burns over time. My only hope is waiting a year and RMAing the damn thing so I can get another model. The only good thing is the customer support... they take care of you.

Nuckles_56: What I would have liked to see was a list of the maximum core and memory overclocks each card achieved, along with the temperatures reached by each cooler.

Hupiscratch: It would be good if they got rid of the DVI connector. It blocks a lot of airflow on a card that's already critical on cooling. Almost nobody buying this card will use DVI anyway.

Nuckles_56, quoting the comment above: "It would be good if they get rid of the DVI connector. It blocks a lot of airflow on a card that's already critical on cooling. Almost nobody that's buying this card will use the DVI anyway."

Two things here: most of the cards don't vent air out through the rear bracket anyway, due to the direction of their cooling fins. Plus, there are going to be plenty of people using these cards who bought the cheap Korean 1440p monitors that only have DVI inputs.

ern88: I have the Gigabyte GTX 1080 G1, and I think it's a really good card. You can't go wrong buying it.

The best card out of the box is the EVGA FTW. I am running two of them in SLI under Windows 7, and they run freaking cool. No heat issues whatsoever.

Mike_297: I agree with 'THESILVERSKY'; why no Asus cards? According to various reviews, their Strix line includes some of the quietest cards going!

trinori: LOL, you didn't include the ASUS STRIX OC?!?

Well, you just voided the legitimacy of your own comparison/breakdown post, didn't you...

"Hey guys, here's a cool comparison of all the best 1080s by price and performance so that you can see which is the best card, except for some reason we didn't include arguably the best-performing card available, have fun!"

lol please..