GPU vs. CPU Upgrade: Extensive Tests

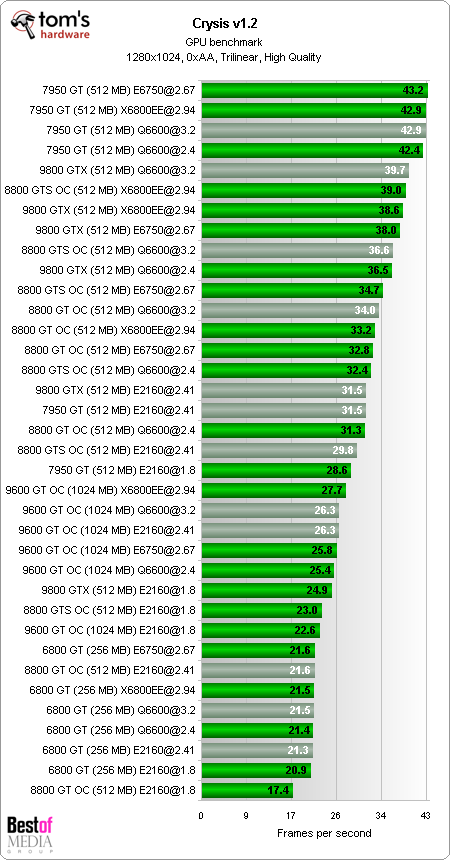

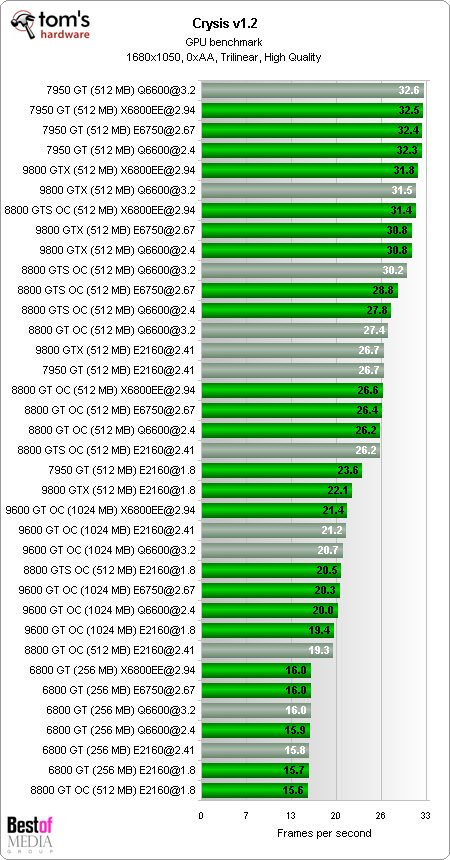

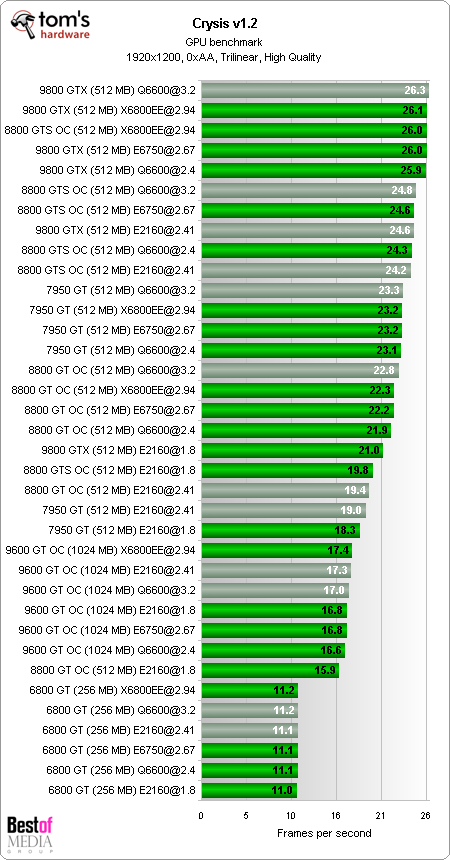

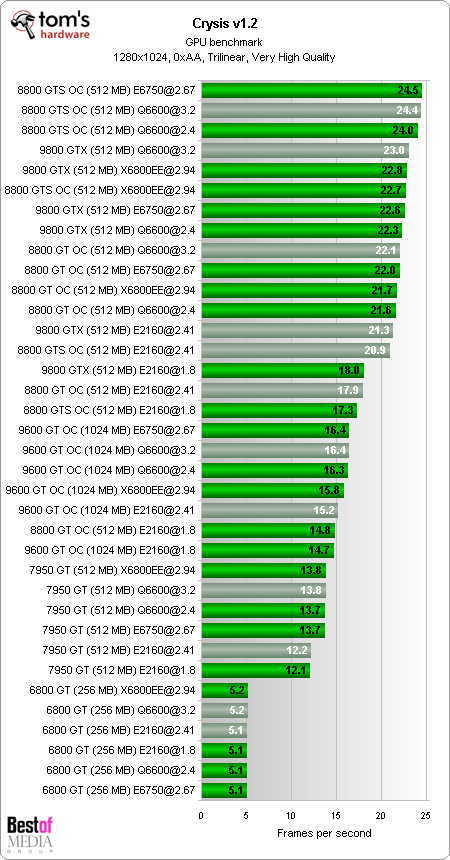

Crysis v1.2

Note that the GeForce 6800 GT and GeForce 7950 GT were run with the image quality set to Low (at 1280, 1680, and 1920 pixels) and Medium (at 1280 pixels), whereas the GeForce 8 and 9 cards were tested at High (at 1280, 1680, and 1920 pixels) and Very High (at 1280 pixels). The lower settings are necessary for the older graphics cards to deliver a playable frame rate. Because of these differences, a weaker graphics chip can sometimes rank above a more powerful GeForce 8 or 9 card. In those cases, the better frame rate is achieved only by reducing the image quality.
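To make the caveat about mixed quality settings concrete, here is a minimal Python sketch of the test matrix described above. It only encodes the presets and resolutions named in the text. The group label "GeForce 8/9" and the helper names `settings` and `comparable` are assumptions introduced for illustration, not part of the original test setup.

```python
# Test matrix as described in the text:
# card -> image-quality preset -> horizontal resolutions tested (pixels).
# "GeForce 8/9" is a shorthand group label assumed here for illustration.
TEST_MATRIX = {
    "GeForce 6800 GT": {"Low": [1280, 1680, 1920], "Medium": [1280]},
    "GeForce 7950 GT": {"Low": [1280, 1680, 1920], "Medium": [1280]},
    "GeForce 8/9": {"High": [1280, 1680, 1920], "Very High": [1280]},
}


def settings(card):
    """Return the set of (preset, width) pairs a card was tested at."""
    return {
        (preset, width)
        for preset, widths in TEST_MATRIX[card].items()
        for width in widths
    }


def comparable(card_a, card_b):
    """Return the settings both cards share.

    An empty set means there is no like-for-like comparison, so a
    frame-rate ranking between the two cards mixes image-quality levels.
    """
    return settings(card_a) & settings(card_b)


if __name__ == "__main__":
    print(comparable("GeForce 6800 GT", "GeForce 7950 GT"))
    print(comparable("GeForce 7950 GT", "GeForce 8/9"))
```

The two older cards share every setting, so their frame rates can be compared directly. The older cards and the GeForce 8/9 group share none, which is why a higher chart position for an older card does not mean it is the faster chip.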

The GeForce 6800 GT does not respond to increased CPU power. The GeForce 7950 GT needs a CPU clocked at 2.67 to 3.0 GHz or higher, or one with more cache, before its graphics performance is fully exploited. The GeForce 6 and 7 only run in DirectX 9 mode, which doesn't make much difference here: with the graphics quality set to Low, there are few effects where you would actually see a difference.

The GeForce 9600 GT compares favorably with the more powerful GeForce 8 and 9 cards. As a baseline, a CPU clocked at 2.67 GHz or a quad-core processor should be used. The new G92 graphics cards perform much better when sufficient CPU clock speed is available. Overclocking the CPU ekes out a couple of extra frames for the GeForce 8800 and GeForce 9800 GTX.

randomizer That would simply consume more time without really proving much. I think sticking with a single manufacturer is fine, because you see the generation differences of cards and the performance gains compared to getting a new processor. You will see the same thing with ATI cards. Pop in an X800 and watch it crumble in the wake of a HD3870. There is no need to include ATI cards for the sake of this article.

randomizer This has been a long needed article IMO. Now we can post links instead of coming up with simple explanations :D

yadge I didn't realize the new GPUs were actually that powerful. According to Tom's charts, there is no GPU that can give me double the performance over my X1950 Pro. But here, the 9600GT was getting 3 times the frames as the 7950GT (which is better than mine) on Call of Duty 4.

Maybe there's something wrong with the charts. I don't know. But this makes me even more excited for when I upgrade in the near future.

scy This article is biased from the beginning by pairing a reference graphics card from 2004 (6800GT) with a reference CPU from 2007 (E2140).

Go back and use a Pentium 4 Prescott (2004) and then the basis of these percentage values on page 3 will actually mean something.

randomizer (quoting yadge): "I didn't realize the new GPUs were actually that powerful. According to Tom's charts, there is no GPU that can give me double the performance over my X1950 Pro. But here, the 9600GT was getting 3 times the frames as the 7950GT (which is better than mine) on Call of Duty 4. Maybe there's something wrong with the charts. I don't know. But this makes me even more excited for when I upgrade in the near future."

I upgraded my X1950 Pro to a 9600GT. It was a fantastic upgrade.

wh3resmycar (quoting scy): "This article is biased from the beginning by pairing a reference graphics card from 2004 (6800GT) with a reference CPU from 2007 (E2140)."

Maybe it is, but it's relevant, especially for those people who are stuck with those Prescotts/6800GT. This article reveals an upgrade path nonetheless.

randomizer If they had used P4s there would be so many variables in this article that there would be no direction, and that would make it pointless.

JAYDEEJOHN Great article!!! It clears up many things. It finally shows proof that the best upgrade a gamer can make is a newer card. About the P4's, just take the clock rate and cut it in half, then compare (ok add 10%) heheh

justjc I know randomizer thinks we would get the same results, but would it be possible to see just a small article showing whether the same result is true for AMD processors and ATi graphics?

Firstly, we know that ATi and nVidia graphics don't calculate graphics in the same way. Who knows, perhaps an ATi card requires more or less processor power to work at full load. And if you look at Can You Run It? for Crysis (the only one I recall using), you will see that the minimum required AMD processor is slower than the minimum required Core 2, even in clock speed.

So any chance of a small, or full scale, article throwing some ATi and AMD power into the mix?