Tom's Hardware Verdict

Gigabyte’s Windforce 3X delivers practical benefits in the GeForce GTX 1660 Gaming OC 6G, facilitating lower operating temperatures, slower-spinning fans, and more aggressive clock rates than competing cards. While we’re typically not proponents of spending more money than necessary on mainstream GPUs, this is one model worth its modest premium.

Pros

- Windforce 3X cooler is great for low temps without much noise
- Slight factory overclock
- RGB lighting
- Fair price compared to base-level 1660s

Cons

- Overclock doesn’t do much for real-world performance
- Extra-long heat sink takes up more room in your chassis

Gigabyte GeForce GTX 1660 Gaming OC 6G Review

If you play your favorite games at 1920 x 1080, Nvidia’s GeForce GTX 1660 graphics card offers some of the best performance per dollar out there, along with excellent performance per watt. In our launch review, "Nvidia GeForce GTX 1660 Review: The Turing Onslaught Continues," we found Gigabyte’s GeForce GTX 1660 OC 6G to be more than 16% faster than a previous-gen GeForce GTX 1060 6GB at a similar 120W TDP (and a lower price).

It takes a much more expensive Radeon RX Vega 56 to outperform the GeForce GTX 1660, and a slower Radeon RX 580 to rival its value. That’s why we were quick to praise the 1660 for its combination of playable frame rates, conservative power consumption, and a $220 starting price.

Gigabyte’s GeForce GTX 1660 Gaming OC 6G is the company’s flagship implementation of the 1660, offering higher core clock rates, more aggressive cooling, and a bit of RGB flair. Typically, we’d expect those extras to impose a hefty premium. But Gigabyte only asks for an extra $10/£10 compared to its base-level models. That seems like a small price to pay for the benefits of a truly beefed-up board.

Meet Gigabyte’s GeForce GTX 1660 Gaming OC 6G

We don’t often get our hands on multiple models from the same vendor in a given product class. The 1660 Gaming OC 6G is one step up from the 1660 OC 6G we already reviewed, though, giving us a nice foundation for quantifying the reasons you’d want to spend a few dollars more.

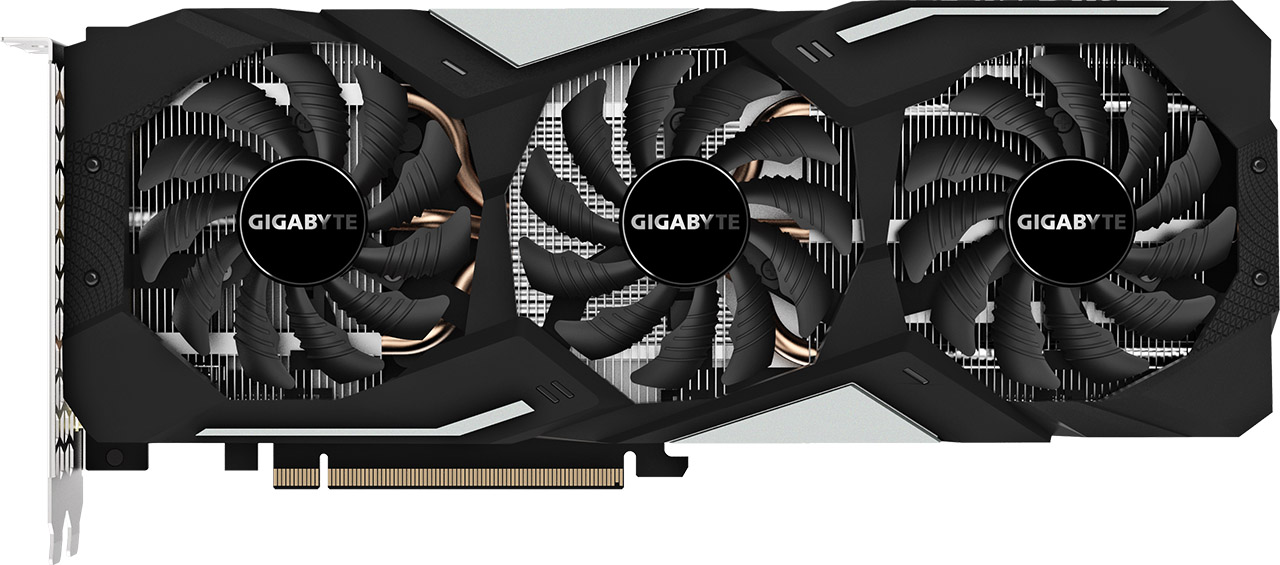

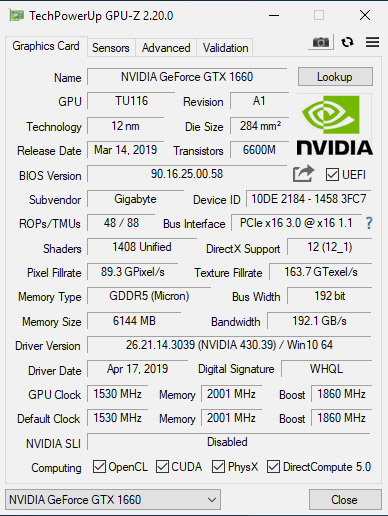

Right out of the gate, we know that the 1660 Gaming OC 6G sports a GPU Boost rating of 1,860 MHz, which is 75 MHz higher than Nvidia’s reference specification. Its TU116 processor is topped with a larger three-piece sink cooled by a trio of 80mm fans. We expect the big Windforce 3X thermal solution to yield measurable advantages when it comes time to take temperature readings, though it’ll be interesting to see how the 1660 OC 6G’s two 90mm fans stack up in comparison.

You will, of course, need more room in your case to accommodate the 1660 Gaming OC 6G. It’s similar in size to the premium GeForce RTX 2060 Gaming OC Pro 6G and GeForce RTX 2070 Gaming OC 8G, which are more than 11 inches (28cm) long, 4.4 inches (11.3cm) tall, and 1.5 inches (3.9cm) thick.

The aforementioned Windforce 3X cooler hangs off the PCB’s back edge by more than 2”. As you might imagine, a thermal solution designed to cope with RTX 2070’s 175W TDP and RTX 2060’s 160W power limit would be more than ample for GTX 1660’s 120W ceiling. Under the fan shroud, however, Gigabyte does cut costs with a less dense sink that weighs 1.49lb. (677g) instead of the RTX 2060 card’s 1.79lb. (811g). And although both designs employ a multi-part configuration, the 1660 Gaming OC 6G utilizes three heat pipes between them, whereas the higher-end RTX 2060 employs four. Those pipes are flattened in the middle and placed directly over Nvidia’s GPU, where they draw heat away before dissipating it through the fin arrays.

On the cooler’s left side, closest to the display outputs, one part of the sink contacts voltage regulation circuitry underneath through thermal pads. On the right side, another piece of the sink doesn’t touch the board at all. It simply helps the middle section keep TU116 and its surrounding memory modules running at the lowest temperature possible.

Three 80mm fans sit on top of the thermal solution. The outside fans spin counter-clockwise, while the middle fan rotates clockwise. This alternating arrangement purportedly keeps turbulence to a minimum, since adjacent fans generate less competing airflow. Then, at idle, the fans stop spinning altogether by virtue of a feature that Gigabyte calls 3D Active Fan. Enthusiasts who prefer to maintain lower idle temperatures can disable 3D Active Fan using downloadable Aorus Engine software. Frankly, the fans make so little noise that we prefer to keep them spinning (even though the semi-passive mode is one of this card’s competitive advantages).

Around the fans, Gigabyte uses plastic gratuitously to form a shroud. Sharp angles and silver accents do look nice, though folds over the card’s top and bottom block air moving through the vertically-oriented fins. A freer-flowing arrangement, particularly above the heat sink, might have helped the already-impressive thermal performance. Instead, that’s where we find this card’s RGB-backlit Gigabyte logo.

Still, the company’s relatively light cooler performs admirably. In Metro: Last Light, we observed the Gaming OC 6G consuming about 129W. Although that’s about 3W more than the 1660 OC 6G, Gigabyte’s flagship model topped out 3°C lower. Moreover, it maintained 1,980 MHz through our run, whereas the base-level card demonstrated a range of 1,920 to 1,935 MHz.

Gigabyte’s 1660 Gaming OC 6G sports a plastic backplate. This plate doesn’t do anything for rigidity, and it certainly doesn’t improve the card’s cooling. The plate may, however, help protect the PCB in case something gets dropped on it.

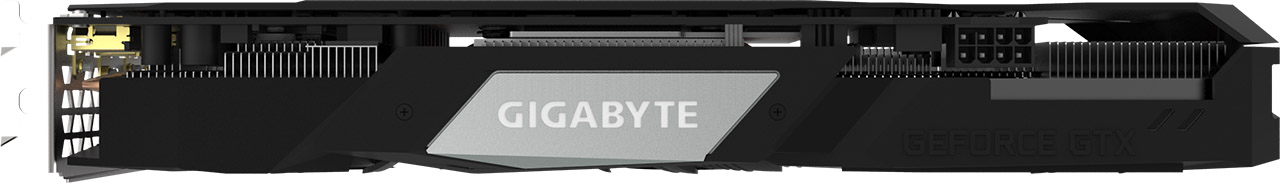

We approve of Gigabyte’s display output choices. Three DisplayPort connectors and a single HDMI interface cover the most common multi-monitor configurations. Down at this level, VirtualLink support isn’t really necessary. And doing away with DVI yields a freer-flowing grille. Unfortunately, the potential benefit of additional airflow is lost since the cooler’s fins move air perpendicular to the bracket.

| Specification | Gigabyte GeForce GTX 1660 Gaming OC 6G | Gigabyte GeForce GTX 1660 OC 6G | GeForce GTX 1660 Ti | GeForce GTX 1060 FE | GeForce GTX 1070 FE |
|---|---|---|---|---|---|
| Architecture (GPU) | Turing (TU116) | Turing (TU116) | Turing (TU116) | Pascal (GP106) | Pascal (GP104) |
| CUDA Cores | 1408 | 1408 | 1536 | 1280 | 1920 |
| Peak FP32 Compute | 5.2 TFLOPS | 5 TFLOPS | 5.4 TFLOPS | 4.4 TFLOPS | 6.5 TFLOPS |
| Tensor Cores | N/A | N/A | N/A | N/A | N/A |
| RT Cores | N/A | N/A | N/A | N/A | N/A |
| Texture Units | 88 | 88 | 96 | 80 | 120 |
| Base Clock Rate | 1530 MHz | 1530 MHz | 1500 MHz | 1506 MHz | 1506 MHz |
| GPU Boost Rate | 1860 MHz | 1785 MHz | 1770 MHz | 1708 MHz | 1683 MHz |
| Memory Capacity | 6GB GDDR5 | 6GB GDDR5 | 6GB GDDR6 | 6GB GDDR5 | 8GB GDDR5 |
| Memory Bus | 192-bit | 192-bit | 192-bit | 192-bit | 256-bit |
| Memory Bandwidth | 192 GB/s | 192 GB/s | 288 GB/s | 192 GB/s | 256 GB/s |
| ROPs | 48 | 48 | 48 | 48 | 64 |
| L2 Cache | 1.5MB | 1.5MB | 1.5MB | 1.5MB | 2MB |
| TDP | 120W | 120W | 120W | 120W | 150W |
| Transistor Count | 6.6 billion | 6.6 billion | 6.6 billion | 4.4 billion | 7.2 billion |
| Die Size | 284 mm² | 284 mm² | 284 mm² | 200 mm² | 314 mm² |
| SLI Support | No | No | No | No | Yes (MIO) |
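For readers curious where the Peak FP32 Compute row comes from, the figures line up with a simple rule of thumb: CUDA cores, times two floating-point operations per clock (one fused multiply-add), times the rated GPU Boost frequency. The sketch below is our own illustration using the table's core counts and boost clocks. The function name and the `CARDS` dictionary are hypothetical, and real-world throughput depends on the clock rates a card actually sustains.

```python
# Core counts and GPU Boost ratings (MHz) copied from the spec table.
CARDS = {
    "Gigabyte GTX 1660 Gaming OC 6G": (1408, 1860),
    "Gigabyte GTX 1660 OC 6G": (1408, 1785),
    "GeForce GTX 1660 Ti": (1536, 1770),
    "GeForce GTX 1060 FE": (1280, 1708),
    "GeForce GTX 1070 FE": (1920, 1683),
}


def peak_fp32_tflops(cuda_cores: int, boost_mhz: int) -> float:
    """Theoretical peak, assuming one FMA (2 FLOPs) per core per clock."""
    flops_per_second = cuda_cores * 2 * boost_mhz * 1e6
    return flops_per_second / 1e12


for name, (cores, boost) in CARDS.items():
    # Rounded to one decimal place, as the table does.
    print(f"{name}: {peak_fp32_tflops(cores, boost):.1f} TFLOPS")
```

By this math, the Gaming OC 6G's 75 MHz boost advantage is worth roughly 0.2 TFLOPS on paper over the 1660 OC 6G.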

The GPU at the heart of GeForce GTX 1660 is specifically named TU116-300-A1. It’s a close relative of the GeForce GTX 1660 Ti’s TU116-400-A1, trimmed from 24 Streaming Multiprocessors to 22. We’re obviously still dealing with a processor devoid of Nvidia’s future-looking RT and Tensor cores, measuring 284mm² and composed of 6.6 billion transistors manufactured using TSMC’s 12nm FinFET process.

Gigabyte takes this chip, with 1,408 CUDA cores, and bumps the reference 1,785 MHz GPU Boost frequency up to 1,860 MHz—a 4% increase. Six 32-bit memory controllers give TU116 an aggregate 192-bit bus, which is populated by 8 Gb/s GDDR5 modules pushing up to 192 GB/s. That’s comparable to GeForce GTX 1060 6GB, and a 33% reduction compared to GeForce GTX 1660 Ti. Combined with the loss of two SMs, dropping from GDDR6 to GDDR5 memory accounts for GeForce GTX 1660’s lower performance versus 1660 Ti.
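The percentages above are easy to verify. The following is a minimal sketch with hypothetical helper names. It assumes peak bandwidth is simply bus width times per-pin data rate, divided by eight bits per byte. The 12 Gb/s rate used for the GeForce GTX 1660 Ti's GDDR6 is our inference from the table's 288 GB/s figure on a 192-bit bus.

```python
def boost_uplift_pct(reference_mhz: float, factory_mhz: float) -> float:
    """Factory overclock expressed as a percentage of the reference boost."""
    return (factory_mhz - reference_mhz) / reference_mhz * 100


def memory_bandwidth_gbs(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak bandwidth in GB/s: bits per transfer * Gb/s per pin / 8."""
    return bus_width_bits * data_rate_gbps / 8


uplift = boost_uplift_pct(1785, 1860)          # 75 MHz over reference
gtx_1660_bw = memory_bandwidth_gbs(192, 8)     # 8 Gb/s GDDR5
gtx_1660_ti_bw = memory_bandwidth_gbs(192, 12) # 12 Gb/s GDDR6 (inferred)
reduction = (gtx_1660_ti_bw - gtx_1660_bw) / gtx_1660_ti_bw * 100

print(f"Boost uplift: {uplift:.1f}%")
print(f"GTX 1660 bandwidth: {gtx_1660_bw:.0f} GB/s")
print(f"Reduction vs. GTX 1660 Ti: {reduction:.0f}%")
```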

Each memory controller is associated with eight ROPs and a 256KB slice of L2 cache. In total, TU116 exposes 48 ROPs and 1.5MB of L2. GeForce GTX 1660’s ROP count compares favorably to RTX 2060, which also utilizes 48 render outputs. But TU116’s L2 cache slices are half as large compared to TU106.
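Since TU116's back-end resources scale with its memory controllers, the totals fall out of a few multiplications. This short sketch simply restates the per-controller figures quoted above, and the constant names are our own.

```python
# Per-controller resources for TU116, as described in the text.
MEMORY_CONTROLLERS = 6
BUS_BITS_PER_CONTROLLER = 32
ROPS_PER_CONTROLLER = 8
L2_KB_PER_CONTROLLER = 256

total_bus_bits = MEMORY_CONTROLLERS * BUS_BITS_PER_CONTROLLER
total_rops = MEMORY_CONTROLLERS * ROPS_PER_CONTROLLER
total_l2_mb = MEMORY_CONTROLLERS * L2_KB_PER_CONTROLLER / 1024

print(f"{total_bus_bits}-bit bus, {total_rops} ROPs, {total_l2_mb} MB of L2")
```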

All of Gigabyte's graphics cards include three years of warranty coverage, and some of them add a fourth year when you register on the company's website. The GeForce GTX 1660 Gaming OC 6G is not one of those special models. In its segment, however, three years of coverage is fairly standard.

How We Tested Gigabyte’s GeForce GTX 1660 Gaming OC 6G

Obviously, GeForce GTX 1660 is more mainstream than the other Turing-based cards we’ve reviewed. As such, our graphics workstation, based on an MSI Z170 Gaming M7 motherboard and Intel Core i7-7700K CPU at 4.2 GHz, is apropos. The processor is complemented by G.Skill’s F4-3000C15Q-16GRR memory kit. Crucial’s MX200 SSD is included, joined by a 1.6TB Intel DC P3700 loaded down with games.

As far as competition goes, the 1660 mostly goes up against GeForce GTX 1060 6GB, though our line-up also includes GeForce GTX 1070 and 1070 Ti, along with GeForce RTX 2060 and GeForce RTX 2070. On the AMD side, we’re mostly interested in Radeon RX 590, although Radeon RX Vega 64 and Radeon RX Vega 56 make for interesting additions, too.

Our benchmark selection includes Ashes of the Singularity: Escalation, Battlefield V, Destiny 2, Far Cry 5, Forza Horizon 4, Grand Theft Auto V, Metro: Last Light Redux, Shadow of the Tomb Raider, Tom Clancy’s The Division 2, Tom Clancy’s Ghost Recon Wildlands, The Witcher 3 and Wolfenstein II: The New Colossus.

The testing methodology we're using comes from "PresentMon: Performance In DirectX, OpenGL, And Vulkan." In short, these games are evaluated using a combination of OCAT and our own in-house GUI for PresentMon, with logging via GPU-Z.
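To give a sense of how a frame-time capture turns into the numbers that end up in charts, here is a minimal sketch of one way to summarize a log. It is not our in-house tool. It assumes a CSV with a `MsBetweenPresents` column, as PresentMon 1.x-style captures provide, and the function name, the nearest-rank percentile method, and the four-frame sample are all illustrative assumptions rather than measured data.

```python
import csv
import io
import math


def summarize_frame_times(csv_text: str,
                          column: str = "MsBetweenPresents") -> dict:
    """Average FPS and 99th-percentile frame time from a capture log."""
    reader = csv.DictReader(io.StringIO(csv_text))
    frame_times = sorted(float(row[column]) for row in reader)
    if not frame_times:
        raise ValueError("capture contains no frames")

    avg_ms = sum(frame_times) / len(frame_times)
    # Nearest-rank percentile: the smallest sample that is at least
    # as large as 99% of all samples.
    rank = math.ceil(0.99 * len(frame_times))
    p99_ms = frame_times[rank - 1]

    return {
        "avg_fps": 1000.0 / avg_ms,
        "p99_frame_time_ms": p99_ms,
    }


# Made-up capture for illustration only; not data from this review.
SAMPLE = """Application,MsBetweenPresents
game.exe,10.0
game.exe,10.0
game.exe,20.0
game.exe,10.0
"""

result = summarize_frame_times(SAMPLE)
print(result)
```

Averaging frame times first and then converting to frames per second weights every frame by how long it was on screen, which is why a single slow frame drags the average down more than a naive mean of instantaneous FPS values would suggest.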

We're using driver version 430.39 to test Gigabyte’s GeForce GTX 1660 and build 417.54 for the other GeForce cards. AMD’s cards utilize Crimson Adrenalin 2019 Edition 18.12.3.
