Intel Xeon E5-2600 v4 Broadwell-EP Review


Power Consumption

There is no way to overstate the impact of power consumption and efficiency on the modern datacenter. Power use is the killer of all things bottom-line. Datacenters worldwide consumed an estimated 416.2 terawatt-hours (416.2 trillion watt-hours) of electricity in 2014, which is more than each of 182 countries (out of 192 globally) consumed. Even more alarming, datacenter power use is quadrupling every two years, and a recent Japanese study concluded that at today's rate, its datacenters will consume all of the country's electricity output by 2030.

The pressure to reduce power consumption is an overbearing burden, but at the same time, the demands for more processing power are increasing every year. Deploying more efficient CPUs simultaneously increases performance per watt, frees up floor space and relaxes cooling requirements. All three of those variables function as multipliers that can either reduce or inflate datacenter expenditures dramatically.

Intel took several important steps to reduce power consumption with its previous-generation Haswell-EP products, including the addition of an on-package power delivery system. It also added per-core P-state control and moved to DDR4 memory, which helped reduce platform power use, further improving efficiency.

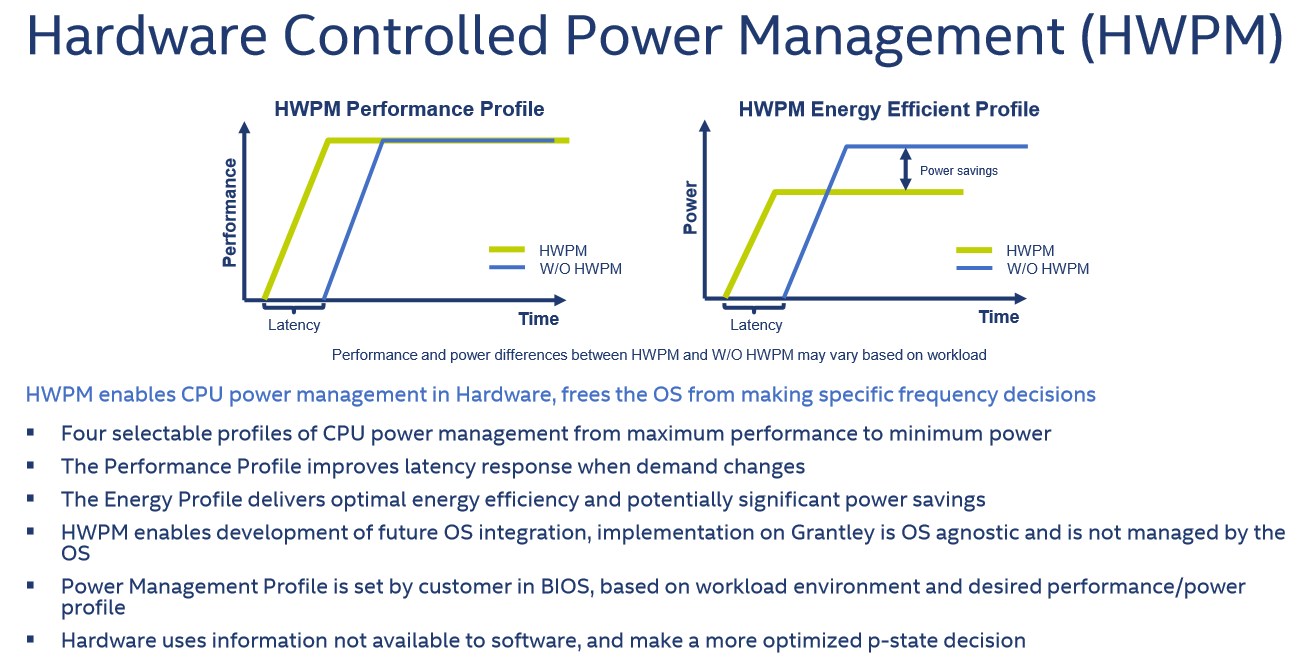

The Xeon E5-2600 v4 family offers these same advantages, but also brings about the introduction of Hardware Controlled Power Management (HWPM). Under normal circumstances, most servers accept hints from the operating system that indicate when it is appropriate to adjust the power state. Unfortunately, this process is slow in relation to how fast a CPU can make decisions internally, and not all software developers are utilizing the feature.

HWPM turns power management over to the CPU, offering up to four power profiles that optimize the server for each use case, which you can specify in the BIOS. The CPU then adjusts power state settings dynamically, depending on the chosen profile. Not only is this process faster, but it's also compatible with all operating systems (the CPU simply ignores the OS hints). Intel is in the early stages of HWPM development, but expects the feature to evolve rapidly.

Linux-Bench Power Consumption

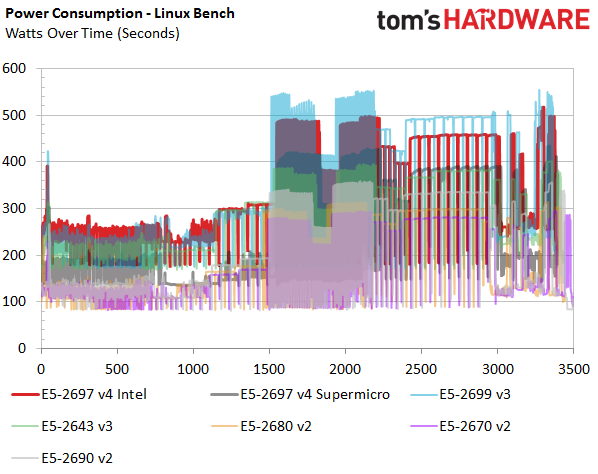

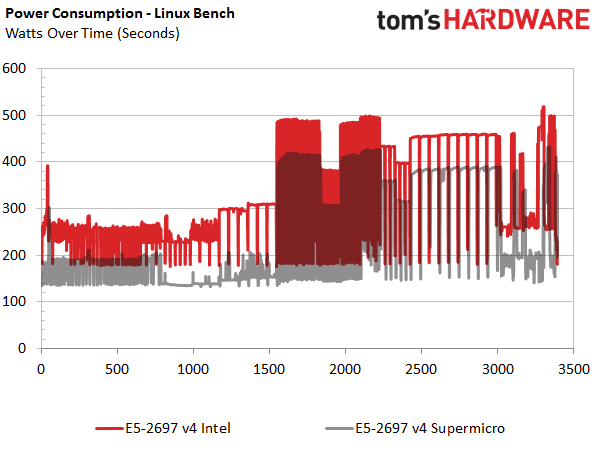

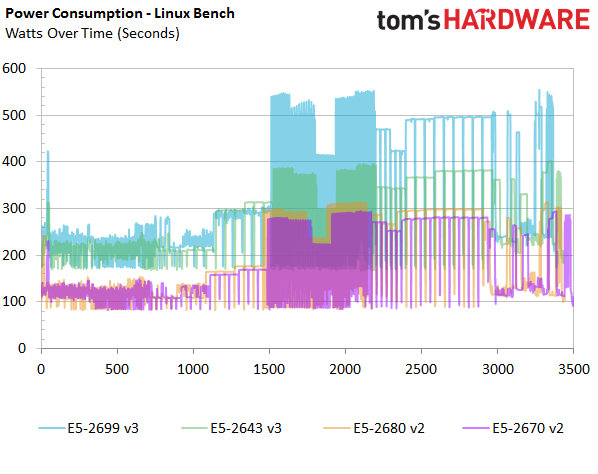

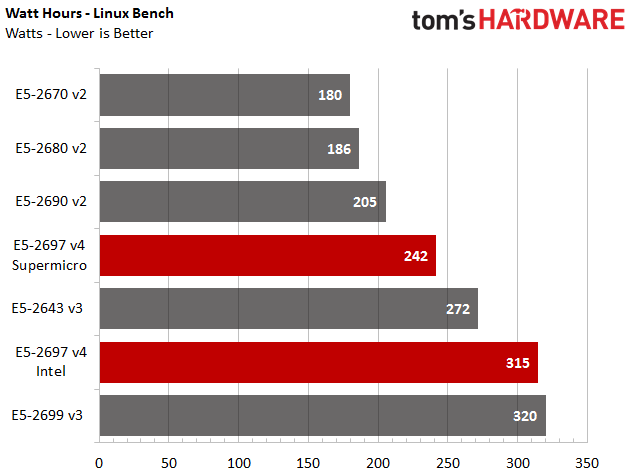

We measured power consumption during the Linux-Bench script, which provides a nice comparison point for each system as the test progresses. The first slide provides a view of the test servers in one image. Then, we provide two additional slides that paint a clearer picture.

The Supermicro platform is unsurprisingly more efficient than Intel's software development platform. After all, it's a production-class system with 80 PLUS Titanium-rated PSUs.


The Xeon E5-2699 v3 CPUs consume more power than our Broadwell-EP samples in the same Intel development platform, which speaks volumes given their similar core count. The -2643 v3 consumes less power by virtue of its lower core count. This is an important consideration; it is not wise to provision excess CPU resources that exceed the requirements of the workload. It's better to pick the right CPU for your application.

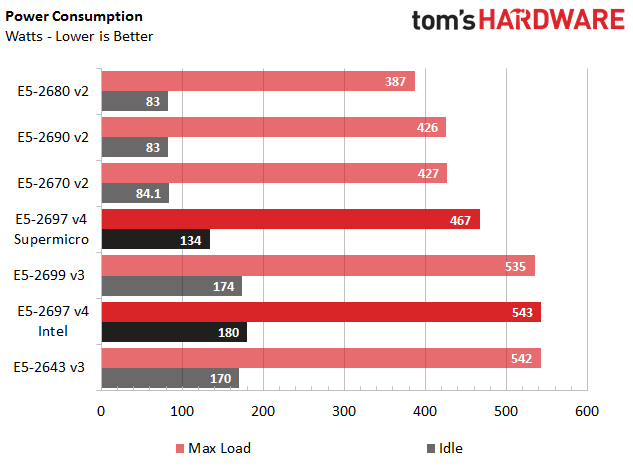

Power Load And Idle

We measured peak power consumption during a Linpack run to characterize each platform's use. Again, Supermicro's configuration is more efficient than Intel's in both maximum and idle consumption. We also recorded the watt-hours consumed during the entire Linux-Bench script, and found the Supermicro platform offering a more refined efficiency story. The second-gen Xeon E5s do use less power, but they're also a lot slower.
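For readers who want to reproduce a watt-hour total from their own power logs, the arithmetic is a time integral of the sampled wattage. The following is a minimal sketch, not the tooling used for this review: the function name `watt_hours` and the sample values are hypothetical, and it assumes the power meter exports timestamps in seconds alongside readings in watts.

```python
from typing import Sequence


def watt_hours(timestamps_s: Sequence[float], power_w: Sequence[float]) -> float:
    """Integrate power samples (watts) over time (seconds) using the
    trapezoidal rule and return the energy in watt-hours."""
    if len(timestamps_s) != len(power_w):
        raise ValueError("timestamps and power samples must have the same length")
    if len(timestamps_s) < 2:
        return 0.0  # a single sample spans no time, so no energy is accumulated

    joules = 0.0
    for i in range(1, len(timestamps_s)):
        dt = timestamps_s[i] - timestamps_s[i - 1]
        if dt < 0:
            raise ValueError("timestamps must be non-decreasing")
        # Average the two neighbouring readings across the interval.
        joules += 0.5 * (power_w[i] + power_w[i - 1]) * dt

    return joules / 3600.0  # 1 Wh = 3600 J


# Hypothetical log: a 30-minute run sampled every 10 minutes.
sample_times = [0, 600, 1200, 1800]
sample_power = [120.0, 300.0, 300.0, 120.0]
print(watt_hours(sample_times, sample_power))
```

Because the result depends on run time as well as draw, a slower system with lower peak power can still consume more watt-hours to finish the same script, which is why total energy is a useful complement to the peak and idle figures.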

Paul Alcorn is the Editor-in-Chief for Tom's Hardware US. He also writes news and reviews on CPUs, storage, and enterprise hardware.

-

utroz Hmm well we know that Broadwell-E chips must be coming very very soon if Intel let this info out.

-

bit_user Wasn't there supposed to be a 4-core 5.0 GHz SKU? Single-thread performance still matters, in many cases.

-

turkey3_scratch, quoting bit_user: "Wasn't there supposed to be a 4-core 5.0 GHz SKU? Single-thread performance still matters, in many cases."

In most server applications it doesn't matter as much as multithreaded performance. If you need single-core strength, getting a consumer chip is actually better, but you probably aren't running a server if single-threaded is your focus.

-

PaulyAlcorn, quoting bit_user: "Wasn't there supposed to be a 4-core 5.0 GHz SKU? Single-thread performance still matters, in many cases."

I read the rumors on that as well, but nothing official has surfaced as of yet to my knowledge.

-

bit_user, quoting turkey3_scratch: "In most server applications it doesn't matter as much as multithreaded performance. If you need single-core strength, getting a consumer chip is actually better, but you probably aren't running a server if single-threaded is your focus."

Try telling that to high-frequency traders. I'm sure they want the reliability features of Xeons (ECC, for example), but the highest clock speed available.

And the fact that Intel even released low-core high-clock SKUs is an acknowledgement of this continuing need. Clock just not as high as I'd read. With the other specs basically matching the Haswell version, the only difference is ~5% IPC improvement. Seems pretty poor improvement, for a die-shrink.

-

firefoxx04 Would be nice to have a quad core xeon that turbos at 4.4ghz just like the 4790k. I had to go with a 4690k when building an autocad system because it only uses one core and needs that core to be fast... this means i have to sacrifice ecc support.

-

bit_user, quoting PaulyAlcorn: "I read the rumors on that as well, but nothing official has surfaced as of yet to my knowledge."

On wccftech (not the most reliable source, I know), they claimed:

Model: Intel Xeon E5-2602 V4

Cores/threads: 4/8

Base clock: 5.1 GHz

Turbo clock: TBD

L3 Cache: 5 MB

TDP: 165W

Given what we know about 2.5 MB/core of L3 Cache, the 5 MB figure sounds suspicious. It's conceivable they could disable some to hit the target TDP, I guess.

-

firefoxx04 We cant get skylake to consistently hit 5ghz... why would a xeon chip suddenly hit 5ghz?

-

JamesSneed, quoting firefoxx04: "We cant get skylake to consistently hit 5ghz... why would a xeon chip suddenly hit 5ghz?"

I'm not saying the 5Ghz rumor is true but Intel has always known which chips can hit higher clocks during certification if the chip is a top end or low end chip cores disabled etc. I'm sure they could cherry pick a few to sell for $$$ if they wanted. Now are they I have no real idea.

-

bit_user, quoting firefoxx04: "We cant get skylake to consistently hit 5ghz... why would a xeon chip suddenly hit 5ghz?"

Well, I was surprised, too.

There are obviously things you can do in chip design that allow one to reach different timing targets. And I was hoping they might've refined their 14 nm process, since the time the first Broadwells launched. So, I thought, with more TDP headroom afforded by this socket (roughly double what Skylake has to work with), maybe they could do it.

I thought maybe Intel was addressing some pent-up demand for high clockspeed applications. That said, it seemed particularly odd in Broadwell, given that it generally seems oriented towards lower clockspeed / lower power applications.

But maybe it was a typo, or even a blatant lie, in order to track down leakers.