MSI Optix MAG341CQ Curved Ultra-Wide Gaming Monitor Review: A Price Breakthrough


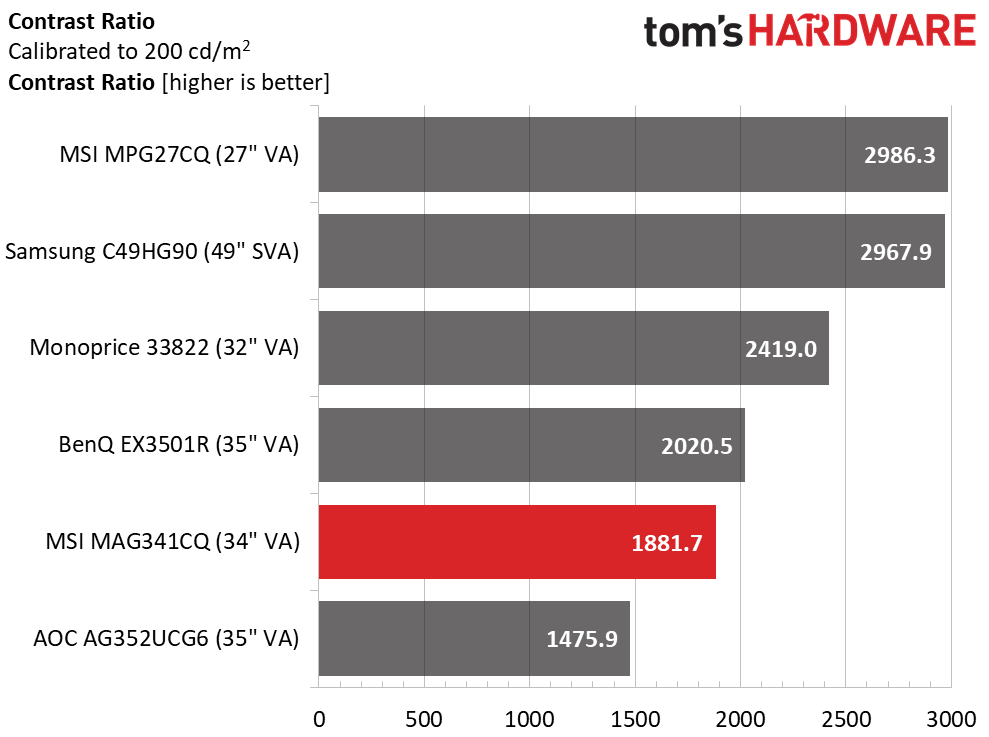

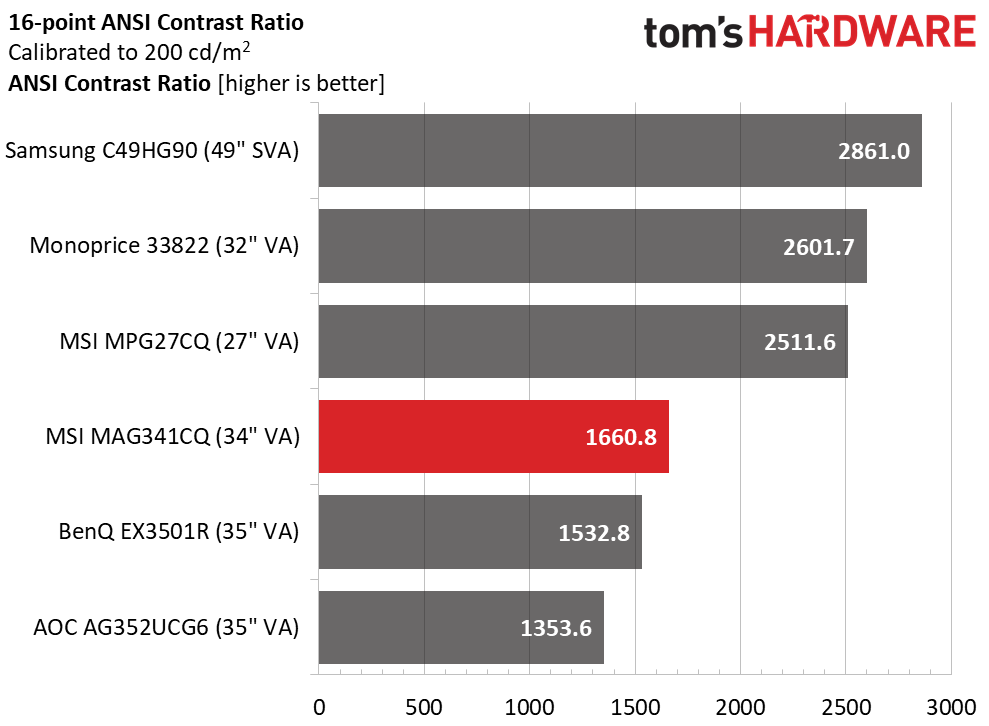

Brightness and Contrast

To read about our monitor tests in depth, check out Display Testing Explained: How We Test Monitors and TVs. We cover Brightness and Contrast testing on page two.

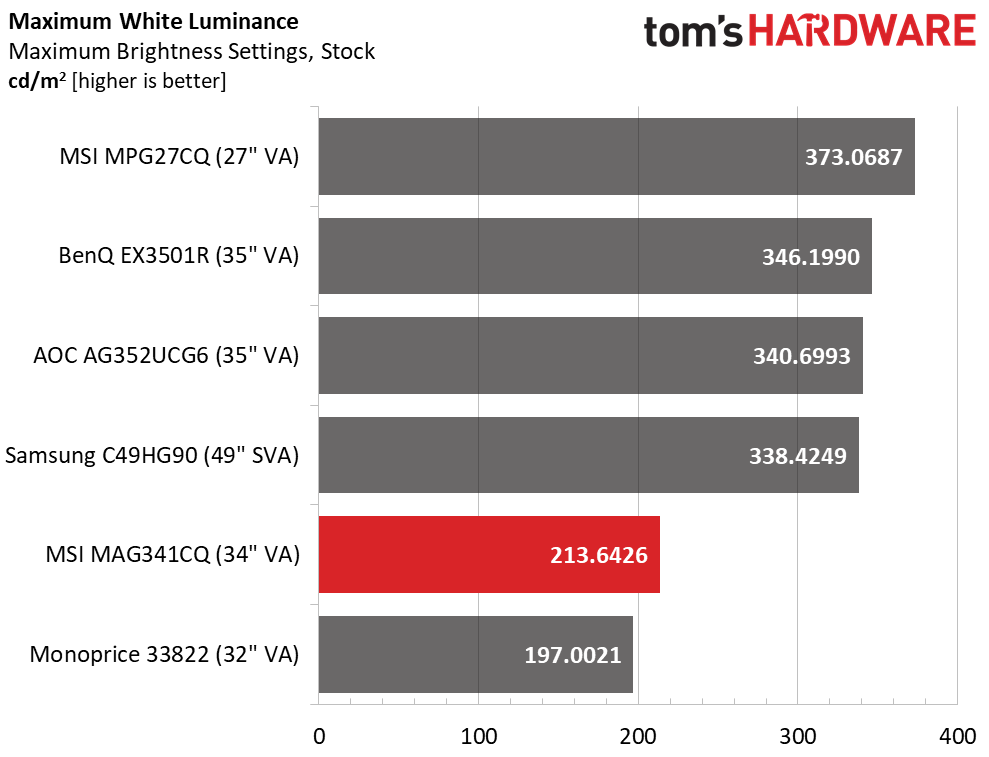

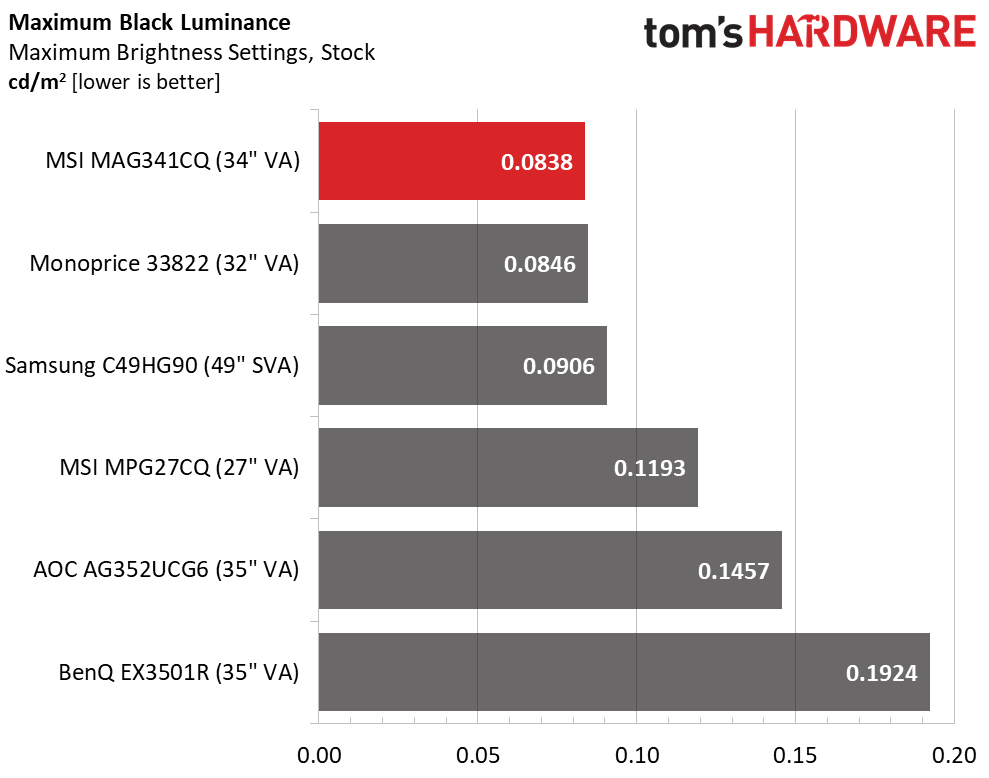

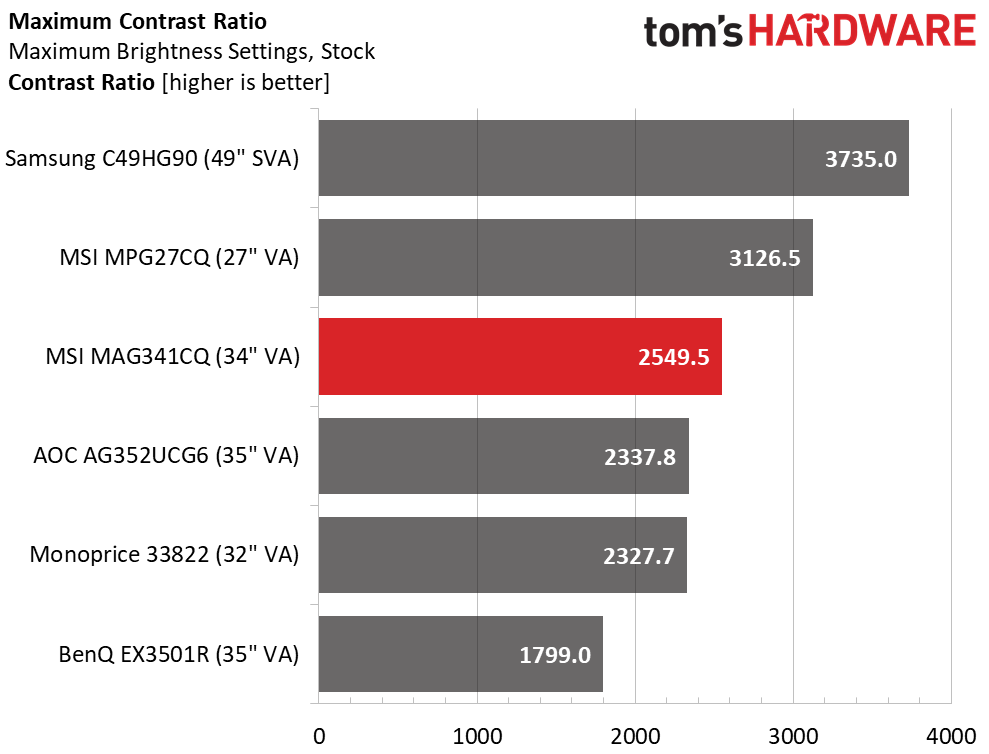

Uncalibrated – Maximum Backlight Level

For comparison, we brought in a group of VA panels. On the 16:9 side are MSI’s Optix MPG27CQ and Monoprice’s 33822. The ultra-wides include BenQ’s EX3501R, AOC’s Agon AG352UCG6 and the mega-wide Samsung C49HG90.

The MAG341CQ is rated at 250 nits, but our sample managed only 213.6 nits at maximum brightness. That’s not a deal-breaker, since it is more than enough light for the average indoor space, though you should avoid placing the monitor near bright, sunny windows. We’d like to see at least 300 nits to provide some headroom for calibration, which can reduce peak output.
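To put that shortfall in numbers, here is a minimal Python sketch. The function name `shortfall_percent` is hypothetical and exists only for illustration; the two luminance figures are the rated and measured values quoted above.

```python
def shortfall_percent(rated_nits: float, measured_nits: float) -> float:
    """Return how far the measured luminance falls below the rated value, in percent."""
    if rated_nits <= 0:
        raise ValueError("rated_nits must be positive")
    return (rated_nits - measured_nits) / rated_nits * 100.0


# Figures from the review: rated peak and measured peak at maximum backlight.
RATED_NITS = 250.0
MEASURED_NITS = 213.6

shortfall = shortfall_percent(RATED_NITS, MEASURED_NITS)
print(f"{shortfall:.1f}% below the rated peak")  # prints "14.6% below the rated peak"
```

In other words, the sample delivered roughly 85 percent of its rated output before any calibration.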

Black levels were VA-dark, with our review monitor earning first place. This is precisely the reason for buying a VA panel and why they’re our favorite for entertainment. That extra depth is easy to see and really enhances gaming and video content. The resulting contrast is mid-pack in our group and about average for VA screens as a whole.

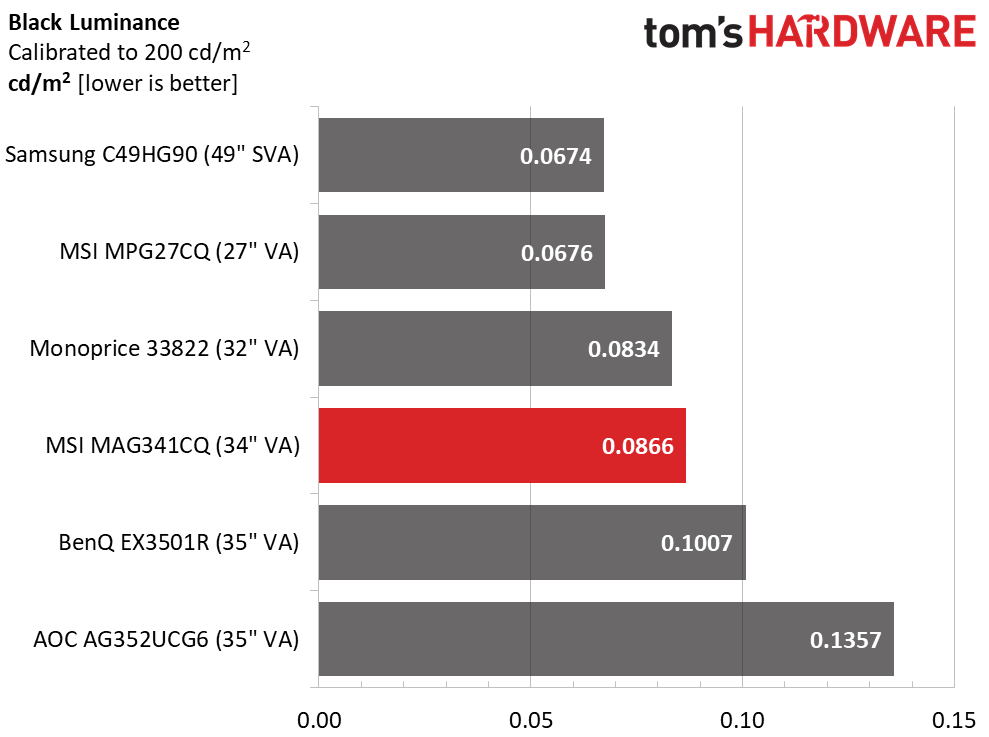

After Calibration to 200 nits

After calibration, the MAG341CQ’s black level didn’t notably change, but peak white dropped to 163 nits with the brightness slider maxed. Again, this was enough light for most indoor environments, but there was no headroom left.

We had to reduce contrast a bit to fix a gamma issue, and our changes to the RGB sliders cost us some dynamic range. 1,881.7:1 is still a respectable contrast ratio, but all the other screens here fared better, except for the AOC. In the ANSI contrast test, the MAG341CQ moved up a spot with a solid 1,660.8:1 score. While that wasn’t enough to win in this group, it’s significantly better than any IPS monitor short of a full-array backlight model.
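For readers who want to relate these figures, contrast ratio is simply white luminance divided by black luminance. The Python sketch below works backward from the calibrated numbers above to the black level they imply. The helper names are hypothetical, and the result is an inferred value that assumes the 163-nit peak white is the white level behind the 1,881.7:1 figure; it is not a measurement reported in this review.

```python
def contrast_ratio(white_nits: float, black_nits: float) -> float:
    """Contrast ratio as white luminance divided by black luminance."""
    if black_nits <= 0:
        raise ValueError("black_nits must be positive")
    return white_nits / black_nits


def implied_black_level(white_nits: float, ratio: float) -> float:
    """Black luminance implied by a given white level and contrast ratio."""
    if ratio <= 0:
        raise ValueError("ratio must be positive")
    return white_nits / ratio


# Calibrated figures quoted in the review.
CALIBRATED_WHITE_NITS = 163.0
CALIBRATED_CONTRAST = 1881.7

# Inferred, not measured: assumes the ratio was taken against the 163-nit white.
black = implied_black_level(CALIBRATED_WHITE_NITS, CALIBRATED_CONTRAST)
print(f"Implied black level: {black:.4f} nits")
```

Note that the ANSI result uses a checkerboard pattern rather than full-field white and black, so its black level cannot be derived from the same peak-white figure.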


Christian Eberle is a Contributing Editor for Tom's Hardware US. He's a veteran reviewer of A/V equipment, specializing in monitors. Christian began his obsession with tech when he built his first PC in 1991, a 286 running DOS 3.0 at a blazing 12MHz. In 2006, he undertook training from the Imaging Science Foundation in video calibration and testing, which started a passion for precise imaging that persists to this day. He is also a professional musician with a degree from the New England Conservatory as a classical bassoonist, which he used to good effect as a performer with the West Point Army Band from 1987 to 2013. He enjoys watching movies and listening to high-end audio in his custom-built home theater and can be seen riding trails near his home on a race-ready ICE VTX recumbent trike. Christian enjoys the endless summer in Florida, where he lives with his wife and Chihuahua and plays with orchestras around the state.


Energy96

Why do these “high end” gaming monitors always seem to come with free sync instead of the Nvidia G-sync? Most people willing to shell out $450 and up on a monitor are going to be running Nvidia cards, which makes the feature useless. With an Nvidia card it isn’t even worth considering a monitor that doesn’t support G-sync.

mitch074

@energy96: because including G-sync requires a proprietary Nvidia scaler, which is very expensive, while Freesync is based off a standard and thus much cheaper. So, someone owning an Nvidia card would have to pay 600 bucks for a similarly featured screen.

Energy96

This is completely false. Free sync does not work with Nvidia cards, only Radeon. There is a sort of hack workaround, but it’s worse than just buying a g-sync monitor.

Energy96

mitch074 said: because including G-sync requires a proprietary Nvidia scaler, which is very expensive, while Freesync is based off a standard and thus much cheaper. So, someone owning an Nvidia card would have to pay 600 bucks for a similarly featured screen.

I know this. My point was most people who buy a Radeon card are doing it because they are on a budget. It’s unlikely they will have the funds for a high end gaming monitor that is $450+. That’s more than they likely would have spent on the Radeon card.

The majority of people dropping that much or more on a gaming monitor will be running Nvidia cards. I know it adds cost, but if you are running Nvidia a free sync monitor is out of the question. Free sync seems pointless in any monitor that is much over $300.

cryoburner

21646897 said: Why do these “high end” gaming monitors always seem to come with free sync instead of the Nvidia G-sync. Most people willing to shell out $450 and up on a monitor are going to be running Nvidia cards which makes the feature useless. With an Nvidia card it isn’t even worth considering a monitor that doesn’t support G-sync.

The real question you should be asking is why a supposedly "high end" graphics card doesn't support a standard like VESA Adaptive-Sync, otherwise branded as FreeSync. You should be complaining on Nvidia's graphics card reviews that they still don't support the open standard for adaptive sync, not that a monitor doesn't support Nvidia's proprietary version of the technology that requires special hardware from Nvidia to do pretty much the same thing. It's not just AMD that will be supporting VESA Adaptive-Sync either, as Intel representatives have stated on at least a couple occasions that they intend to include support for it in the future, likely with their upcoming graphics cards. Microsoft's Xbox consoles also support FreeSync, albeit a less-standard implementation over HDMI.

Nvidia doesn't support it because they want to sell you an overpriced chipset as a part of your monitor, and they want you arbitrarily locked into their hardware ecosystem once competition heats up at the high-end. I suspect that even they may support it eventually though. They're just holding out so that they can price-gouge their customers as long as they can.


Energy96

I can agree with this, but currently it’s the only choice we have. I’m not downgrading to a Radeon card. It would be nice if some of these monitors at least offered versions with it. I know a few do but the selection is very limited.

mitch074

21651950 said: I can agree with this, but currently it’s the only choice we have. I’m not downgrading to a Radeon card. It would be nice if some of these monitors at least offered versions with it. I know a few do but the selection is very limited.

A 1440p monitor and a Vega 56 go well together - if you have a 1080/1080ti/2070/2080/2080ti then yes it's a "downgrade", but if you run a triple screen off a Radeon card already, then it's not.

I think my RX480 could run Dirt Rally on that thing quite well, for example - not everybody buys these things for a 144fps+ shooter.

Dosflores

21650670 said: Free sync seems pointless in any monitor that is much over $300.

FreeSync isn't expensive to implement, unlike G-Sync, so why should companies not implement it? You seem to think that the only reason there can ever be to buy a new monitor is adaptive sync, which isn't true. People may want a bigger screen, higher resolution, higher refresh rate, better contrast… If they want G-Sync, they can spend extra on it. If they don't think it is worth it, they can save money. FreeSync doesn't harm anyone.

Buying a $450 monitor seems pointless to me after having spent $1200+ on a graphics card. The kind of monitor that Nvidia thinks is a good match for it is something like this:

https://www.tomshardware.com/reviews/asus-rog-swift-pg27u,5804.html