ATI Radeon HD 5870: DirectX 11, Eyefinity, And Serious Speed

Eyefinity: A Tangible Benefit, Today

When you run down the specifications of the world’s fastest single-GPU graphics card, display outputs just don’t jump out at you as the reason to sink nearly $400 into a high-end board. If you only use one monitor, that’s understandable. But I’ll tell you right now that you’re missing out. For several years I worked across four LCDs and always had at least one window up on each. I’ve since stepped back to three, but even as I type, I have 10 Firefox windows open, plus this story in Word, Outlook, two emails, Excel, Adobe Acrobat, and a Trillian conversation: more content than will even fit on three displays. To drive them, I have an embarrassing combination of Radeon HD 4850 and Radeon X1650 cards plugged in (hey, I need everything else for benchmarking, and my Quadro NVS 440 doesn’t game).

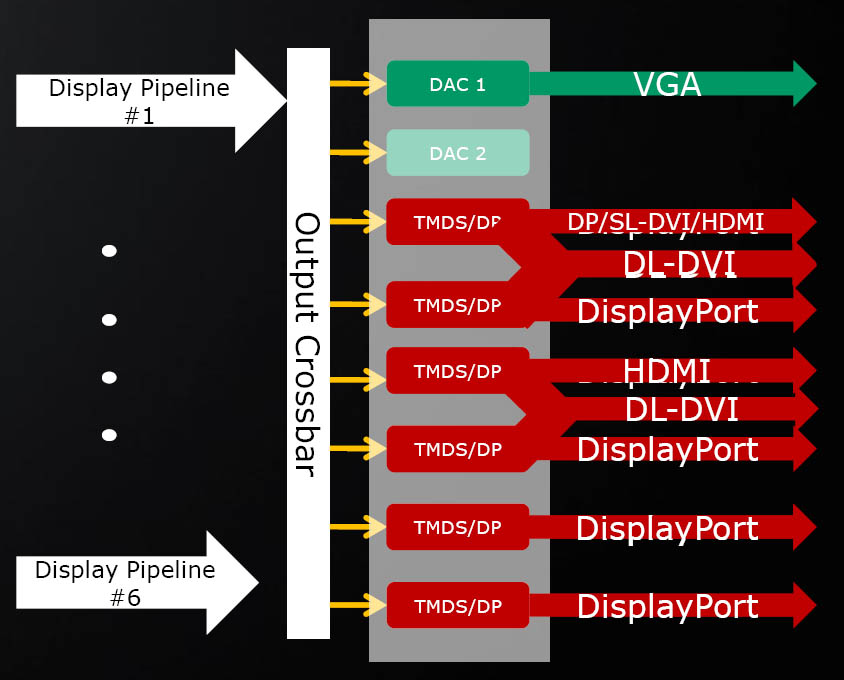

ATI’s Eyefinity technology is the answer. The days of two DACs and a pair of TMDS transmitters making up the entire display pipeline are gone. The Radeon HD 5870 supports three independent displays, spread across four connectors: two dual-link DVI ports, an HDMI output, and a DisplayPort connector. In a standard desktop setup, you’d use the two DVI ports and the DisplayPort output.

DisplayPort is actually the enabler behind all of this. Unlike DVI, DisplayPort doesn’t require a dedicated clock source for each output. As a result, every chip in ATI’s Evergreen family except the lowest-end models has six on-die display controllers and can drive as many as six DisplayPort outputs. The Radeon HD 5870 specifically taps Cypress’ two internal clock sources, plus one of its DisplayPort pipelines, to yield three independent display outputs. The diagram above shows the output configurations Eyefinity supports.

That’s cool and all, but it’s nothing compared to the Radeon HD 5870 Eyefinity⁶ Edition, which takes full advantage of the GPU’s DisplayPort pipelines. With six mini-DisplayPort outputs, the card can drive six monitors, up to and including the largest 30” LCDs. In theory, Eyefinity supports a maximum aggregate resolution of 8192x8192. In practice, six 30-inch panels running 2560x1600 in a 3x2 grid total only 7680x3200. Expect the Eyefinity⁶ card to launch sometime between now and the end of the year. By the time it does, you should also see Samsung’s ultra-thin-bezel LCDs, which make the break between monitors in a multi-display configuration less jarring.
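The resolution arithmetic above is easy to check. The Python sketch below computes the aggregate resolution of a grid of identical panels and compares it against the 8192x8192 ceiling quoted for Eyefinity. The helper names are hypothetical, and it assumes identical panels, treats the ceiling as a per-axis limit on width and height, and ignores the bezels between panels entirely.

```python
# Hypothetical helpers for checking a multi-monitor grid against the
# 8192x8192 maximum aggregate resolution quoted for Eyefinity.
# Assumes identical panels and ignores the bezels between them.
MAX_AGGREGATE = 8192  # assumed to apply to width and height independently


def aggregate_resolution(panel_w, panel_h, cols, rows):
    """Return (width, height) of a cols x rows grid of identical panels."""
    return panel_w * cols, panel_h * rows


def fits_limit(width, height, limit=MAX_AGGREGATE):
    """True if both dimensions stay within the per-axis limit."""
    return width <= limit and height <= limit


layouts = {
    "six 30-inch panels, 3x2": (2560, 1600, 3, 2),
    "three 24-inch panels, 3x1": (1920, 1200, 3, 1),
    "six 30-inch panels, 6x1": (2560, 1600, 6, 1),
}

for name, (pw, ph, cols, rows) in layouts.items():
    w, h = aggregate_resolution(pw, ph, cols, rows)
    print(f"{name}: {w}x{h}, within limit: {fits_limit(w, h)}")
```

The 3x2 case reproduces the 7680x3200 figure; a single row of six 30-inch panels would need 15360 pixels across, well past the 8192 ceiling.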

Using Eyefinity

All right, I feel silly typing Eyefinity over and over, so it’s Radeon HD 5870 from here on out. Hopefully by now you know the card gives you three display outputs.

ATI was generous enough to lend me a trio of gorgeous Dell U2410s, which I set up in the one place certain to get me hit in the head by my wife: the dining room table. It was so worth it, though, and the three-output thing isn’t even new to me. I saw ATI’s display technology at the press briefing for the 5870, but it’s entirely different to sit down in front of three 24” LCDs in your own home and play games, run benchmarks, and mess with settings.


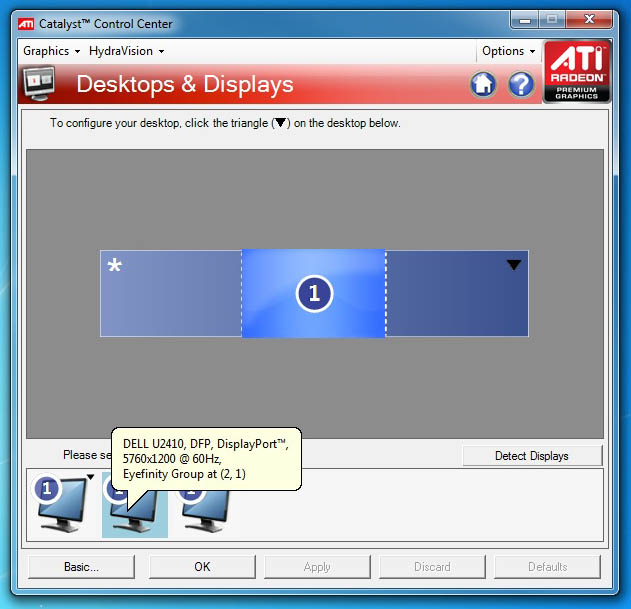

Configuring three displays to run in single large surface (SLS) mode is intuitive under Windows 7 (it also works under Windows Vista and Linux). I set my desktop to 5760x1200 and tiled the background to keep things familiar. This is the way you’d want to game, but it’s not the way you’d want to use a computer. In SLS mode, you literally have the equivalent of one huge desktop: maximize Outlook and the app spans the whole surface. ATI says you can set hotkeys to toggle between SLS and independent display modes, but that feature evidently isn’t as intuitive, because I couldn’t make it work. Criticism number one: there needs to be a smoother way to swap between an independent-display “productivity” mode and gaming across the single large surface.
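The 5760x1200 desktop also hints at why games slow down in SLS mode. As a rough, first-order sketch (assuming each U2410 runs at its native 1920x1200), the GPU has three times as many pixels to render per frame. Actual frame rates depend on much more than pixel count, so treat the ratio as an illustration, not a prediction.

```python
# Rough pixel-load comparison: one Dell U2410 (1920x1200) versus three
# in single large surface mode (5760x1200). Pixel count is only one
# factor in frame rate, so the ratio is a first-order estimate.


def pixel_count(width, height):
    """Number of pixels rendered per frame at this resolution."""
    return width * height


single = pixel_count(1920, 1200)  # one 24-inch panel
sls = pixel_count(5760, 1200)     # three panels side by side

print(f"{single / 1e6:.2f} MP vs {sls / 1e6:.2f} MP, {sls / single:.1f}x the pixels")
# prints: 2.30 MP vs 6.91 MP, 3.0x the pixels
```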

My first order of business after configuring the desktop was firing up H.A.W.X. The relatively fluid flight sim tolerates the lower frame rates you get at 5760x1200: with High detail settings and anti-aliasing turned off, I was still getting 44 frames per second. If you’ve had a hard time getting into the game up until now, this is the way to do it. The visual experience is truly stunning. I immediately started running through the other games in our benchmark suite:

| Game (single Radeon HD 5870 @ 5760x1200, no AA / no AF) | Frame rate (FPS) |
|---|---|
| H.A.W.X. | 44 |
| Far Cry 2 | 54.12 |
| Left 4 Dead | 81.04 |
| S.T.A.L.K.E.R.: Clear Sky | 28.8 |
| World in Conflict | Crash |
| Resident Evil 5 | Not Compatible |
| Grand Theft Auto IV | 29.61 |

Originally I had planned for that to be a three-column chart. When I saw S.T.A.L.K.E.R. at 28.8 frames per second and Grand Theft Auto IV at 29.61, my first thought was: now here’s a reason to buy two Radeon HD 5870s. But CrossFire doesn’t yet work in this configuration. ATI says its driver team is looking at the issue, but there is no ETA on when a pair of 5870s might be used to bolster performance further. Criticism number two: no CrossFire? Really?

For the time being, games like H.A.W.X., Far Cry 2, and Left 4 Dead are at least playable on a single Radeon HD 5870. That’s still amazing when you consider that the last triple-head graphics card to cross our test bench was Matrox’s Parhelia, which paired ambitious triple-output gaming with an underpowered graphics processor. But you do have to turn off extras like anti-aliasing to maintain reasonable frame rates with the 5870.

Here’s my last nit-pick: the 1” of bezel between each pair of displays isn’t really an issue when you’re working on the Windows desktop, but it’s certainly more distracting in gaming environments. Samsung’s ultra-thin bezels can’t come soon enough. When they do (in their single, 3x1, and 3x2 configurations), Eyefinity will become that much sexier.


crosko42: So it looks like one is enough for me. Don't plan on getting a 30-inch monitor any time soon.

jezza333: Looks like the NDA lifted at 11:00 PM, as there's a load of reviews just out now. Once again it shows that AMD can produce a seriously killer card...

Crysis 2 on an X2 of this is exactly what I'm waiting for.

LORD_ORION: Err... I thought I was going to see more for the price. Regardless, I think ATI missed the mark here. I am interested in playing games on my HDTV since me and my monitor don't care about these higher resolutions. Fail cakes... Nvidia is undoubtedly going to rape ATI in performance with the 300 series. This is good news for mainstream prices, however... you can probably upgrade to a current DX10 board soon for a very good price, and then buy a 5850 for $100 a year from now. Result? Don't buy a 5000 series card until the price comes down? Heh, I bet the cards will be $100 less in December if the 300 series launches.

This is not to say I am an Nvidia fan, just that you would undoubtedly do well for yourself to hold off for a bit if you want to buy a 5000 series card... as the price will come down to a good price/performance ratio soon enough.

cangelini: viper666 wrote: "Why didn't they test it against a GTX 295 rather than 280??? It's far superior..."

Ran it against a GTX 295 and a 285, and 285s in SLI :)

Annisman: I refuse to buy until the 2GB versions come out, not to mention Newegg letting you buy more than one at a time. Paper launch FTL.