Intel Xeon E5-2600 v2: More Cores, Cache, And Better Efficiency

Intel recently launched its Xeon E5-2600 v2 CPUs, based on the Ivy Bridge-EP architecture. We got a couple of workstation-specific -2687W v2 processors with eight cores and 25 MB of L3 cache each, and are comparing them to previous-generation -2687Ws.

Power Consumption And Efficiency

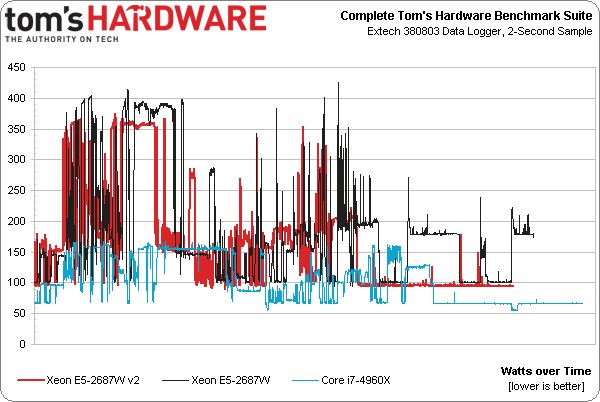

Our benchmark suite is automated so that tests run in the same order each time, with the same delays between commands. We even inject a period of idle time at the end to reflect the reality that high-end workstations aren’t under load 24x7. At the end of that idle period, the workstation shuts itself down automatically.

As that’s happening, we log power consumption. The above chart represents power use through the run. We also get a sense for how long each configuration takes to finish the batch file and turn itself off, given the length of each line. Right away it’s clear that two Xeon E5-2687W v2s complete our battery of benchmarks faster than first-gen -2687Ws, and they do it using less energy.

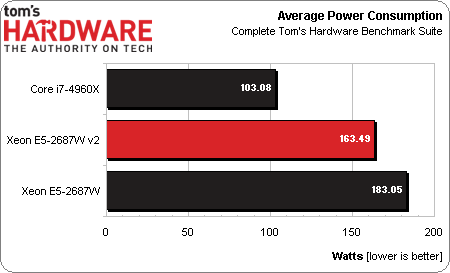

Averaging the data points together shows that, indeed, the newer Xeons use 20 W less through our suite. That’s pretty remarkable considering:

- The new Xeons operate at higher clock rates under load and in lightly-threaded apps.

- The new Xeons have 5 MB more of shared L3 cache each.

- The average results have a ton of single-threaded work and idle time factored in; considering only threaded workloads would widen the difference.

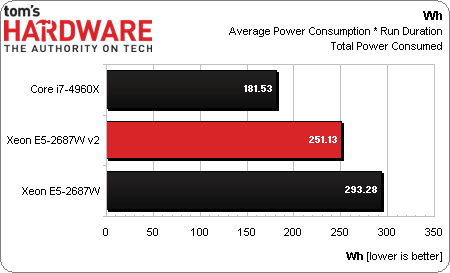

Of course, the averages themselves don’t take into account how quickly a given platform got its job done, dropped to idle, and stopped using power. For that, we need a measure of energy, which we get by multiplying average wattage by the time it takes to finish our workload, giving a result in watt-hours.
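To make that arithmetic concrete, here is a minimal Python sketch of the calculation: average the logged power samples, then multiply by the run time in hours to get watt-hours. The function names, the one-second logging interval, and the sample values are all assumptions made up for illustration; they are not our logging format or our measurements.

```python
def average_watts(samples_w):
    """Mean of a list of power readings, in watts."""
    return sum(samples_w) / len(samples_w)


def energy_wh(samples_w, interval_s):
    """Energy in watt-hours: average power multiplied by run time in hours.

    Assumes the samples were logged at a fixed interval (interval_s seconds).
    """
    hours = len(samples_w) * interval_s / 3600.0
    return average_watts(samples_w) * hours


# Hypothetical logs, NOT data from this review:
# a slower platform drawing 300 W for 2 hours,
# and a faster platform drawing 280 W for 1.5 hours.
slower_w = [300.0] * 7200   # 7200 one-second samples
faster_w = [280.0] * 5400   # 5400 one-second samples

slower_wh = energy_wh(slower_w, interval_s=1.0)
faster_wh = energy_wh(faster_w, interval_s=1.0)

print(f"average power gap: {average_watts(slower_w) - average_watts(faster_w):.1f} W")
print(f"energy gap:        {slower_wh - faster_wh:.1f} Wh")
```

In this made-up case, the energy gap is proportionally larger than the average power gap, because the faster platform also stops drawing power sooner. That is why average power alone understates an efficiency difference.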

Those single-threaded tasks and that idle time give Intel’s Core i7 a big advantage when it comes to average power consumption. However, because the two Xeon E5-2687W v2s are so much faster, they gain quite a bit of ground when we factor performance into the equation.

Compared to first-gen E5s, the new -2687W v2s use less power and are faster. That’s a recipe for an efficiency sweep, reflected in a 42 Wh advantage in our benchmark suite.


GL1zdA1 Does this mean that the 12-core variant with 2 memory controllers will be a NUMA CPU, with cores having different latencies when accessing memory depending on which MC is near them?

Draven35 The Maya playblast test, as far as I can tell, is very single-threaded, just like the other 3D application preview tests I (we) use. This means it favors clock speed over memory bandwidth.

The Maya render test seems to be missing O.o

Cryio Thank you Tom's for this Intel Server CPU. I sure hope you'll make a review of AMD's upcoming 16-core Steamroller server CPU.

voltagetoe If you've got 3ds Max, why don't you use something more serious/advanced like Mental Ray? The default renderer tech represents the distant past, like the year 1995.

lockhrt999 "Our playblast animation in Maya 2014 confounds us." @canjelini: Apart from rendering, most of the tools in Maya are single-threaded (most of the functionality has stayed the same in this two-decade-old software). So benchmarking a Maya playblast is much the same as benchmarking an iTunes encode.

daglesj I love Xeon machines. As they are not mainstream you can usually pick up crazy spec Xeon workstations for next to nothing just a few years after they were going for $3000. They make damn good workhorses.

InvalidError @GL1zdA1: the ring-bus already means every core has different latency accessing any given memory controller. Memory controller latency is not as much of a problem with massively threaded applications on a multi-threaded CPU since there is still plenty of other work that can be done while a few threads are stalled on IO/data. Games and most mainstream applications have 1-2 performance-critical threads and the remainder of their 30-150 other threads are mostly non-critical automatic threading from libraries, application frameworks and various background or housekeeping stuff.

mapesdhs Small note, one can of course manually add the Quadro FX 1800 to the relevant file (raytracer_supported_cards.txt) in the appropriate Adobe folder and it will work just fine for CUDA, though of course it's not a card anyone who wants decent CUDA performance with Adobe apps should use (one or more GTX 580 3GB or 780Ti is best).

Also, hate to say it but showing results for using the card with OpenCL but not showing what happens to the relevant test times when the 1800 is used for CUDA is a bit odd...

Ian.

PS. I see the messed-up forum posting problems are back again (text all squashed up, have to edit on the UK site to fix the layout). Really, it's been months now, is anyone working on it?