Stalker: Clear Sky - Is Your System Ready?

Test--The Benefit Of Changing Resolutions?

We tested at the maximum graphics quality and the highest illumination model. Extended dynamic illumination of objects (DirectX 10), anti-aliasing, and anisotropic filtering were deactivated and the range of view was set to maximum. The test system consists of a Core 2 Duo E6750 CPU at its standard frequency of 2.66 GHz, 4 GB of memory, and a GeForce 8800 GTS 512.

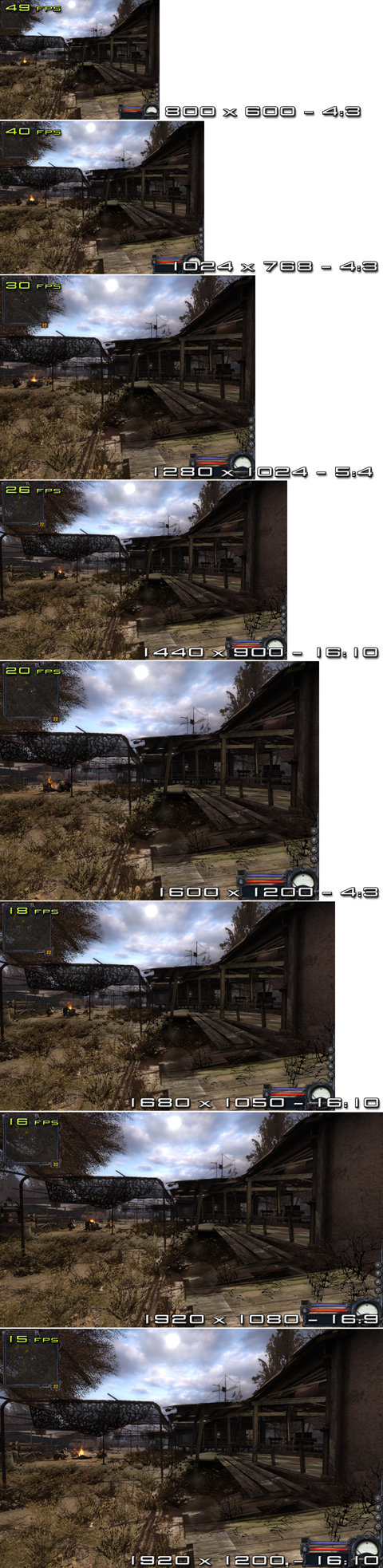

A question that keeps coming up: what is a widescreen monitor good for? If a game supports the monitor's widescreen resolution, the image is neither stretched horizontally nor framed by black borders. The pictures show the visible area at each resolution, with the aspect ratio listed next to it and the achievable frame rate in the top left corner. All 16:10 formats produce a wide image and show more of the scene. The 16:9 format has the widest image, which is a significant advantage because you see even more of the environment.
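To see which of the tested resolutions share an aspect ratio, the width and height can be reduced by their greatest common divisor. The following is a minimal sketch; the function name `aspect_ratio` is our own, and the mapping of 8:5 to the customary "16:10" label is a naming convention we assume, not something the game reports.

```python
from math import gcd

def aspect_ratio(width, height):
    """Reduce a resolution to its aspect ratio, e.g. 1920x1080 -> '16:9'."""
    divisor = gcd(width, height)
    w, h = width // divisor, height // divisor
    # 16:10 reduces mathematically to 8:5; use the customary label instead.
    if (w, h) == (8, 5):
        w, h = 16, 10
    return f"{w}:{h}"

# Resolutions taken from the test table in this article.
for width, height in [(800, 600), (1280, 1024), (1440, 900), (1920, 1080)]:
    print(f"{width}x{height} is {aspect_ratio(width, height)}")
```

Only resolutions with the same ratio as the monitor's native resolution avoid stretching or black borders.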

In the table you’ll see two percentages. The first uses 800x600 pixels as its baseline and shows how much of that frame rate remains as you move to higher resolutions. The second uses 1920x1200 pixels as its baseline and shows the frame rate relative to that resolution, which illustrates the gain when you decrease resolution.
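As a rough illustration of how the two percentage columns are derived, the sketch below recomputes them from the measured frame rates. The fps values are the ones in the results table; the names `FPS_BY_RESOLUTION`, `percent_of`, and `percent_columns` are ours, and rounding to whole percent is an assumption that happens to match the published figures.

```python
# Frame rates (fps) as reported in this article's results table.
FPS_BY_RESOLUTION = {
    "800x600": 49,
    "1024x768": 40,
    "1280x1024": 30,
    "1440x900": 26,
    "1600x1200": 20,
    "1680x1050": 18,
    "1920x1080": 16,
    "1920x1200": 15,
}

def percent_of(fps, baseline_fps):
    """Return fps as a whole-number percentage of baseline_fps."""
    return round(100 * fps / baseline_fps)

def percent_columns(results, low="800x600", high="1920x1200"):
    """Map each resolution to (percent of lowest-res fps, percent of highest-res fps)."""
    return {
        resolution: (percent_of(fps, results[low]), percent_of(fps, results[high]))
        for resolution, fps in results.items()
    }

for resolution, (vs_low, vs_high) in percent_columns(FPS_BY_RESOLUTION).items():
    print(f"{resolution:>10}: {vs_low:3d}% of 800x600, {vs_high:3d}% of 1920x1200")
```

Read this way, the first column falls from 100 as resolution rises, while the second rises from 100 as resolution drops.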

The test scene we chose is challenging for the graphics card. At maximum graphics quality, 1280x1024 pixels is the highest resolution that still reaches 30 fps. If you own a monitor with a resolution of 1920x1200 pixels, you will have to set the resolution to 1440x900 pixels to stay within your aspect ratio and still have a smooth frame rate at maximum graphics quality. Stepping down only to 1680x1050 gains just 20 percent: starting from the baseline of 15 fps, that is 18 fps, which is not enough to exceed 25 fps.

| Resolution (pixels) | Frame rate (fps) | Percent of 800x600 frame rate | Percent of 1920x1200 frame rate |
|---|---|---|---|
| 800x600 | 49 | 100 | 327 |
| 1024x768 | 40 | 82 | 267 |
| 1280x1024 | 30 | 61 | 200 |
| 1440x900 | 26 | 53 | 173 |
| 1600x1200 | 20 | 41 | 133 |
| 1680x1050 | 18 | 37 | 120 |
| 1920x1080 | 16 | 33 | 107 |
| 1920x1200 | 15 | 31 | 100 |
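The recommendation for owners of a 1920x1200 monitor can be checked against the table with a short script. This is only a sketch under stated assumptions: it treats "smooth" as exceeding 25 fps (the threshold mentioned in the text), it considers only the eight resolutions measured here, and the names `RESULTS`, `same_aspect`, and `best_resolution` are hypothetical.

```python
# (width, height, fps) rows from the results table above.
RESULTS = [
    (800, 600, 49),
    (1024, 768, 40),
    (1280, 1024, 30),
    (1440, 900, 26),
    (1600, 1200, 20),
    (1680, 1050, 18),
    (1920, 1080, 16),
    (1920, 1200, 15),
]

def same_aspect(w1, h1, w2, h2):
    """True if both resolutions have the same aspect ratio (cross-multiplication)."""
    return w1 * h2 == w2 * h1

def best_resolution(native, results, min_fps=25):
    """Pick the largest tested resolution that matches the native aspect ratio
    and exceeds min_fps; return None if no tested resolution qualifies."""
    native_w, native_h = native
    candidates = [
        (w, h, fps)
        for w, h, fps in results
        if same_aspect(w, h, native_w, native_h) and fps > min_fps
    ]
    if not candidates:
        return None
    return max(candidates, key=lambda row: row[0] * row[1])

print(best_resolution((1920, 1200), RESULTS))
```

For a 1920x1200 monitor this selects 1440x900 at 26 fps, because 1680x1050 reaches only 18 fps (15 fps plus 20 percent).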


magicandy: Aside from the numerous pages that don't work, was a 24-page analysis of STALKER, a fairly mediocre shooter, really necessary? Is a 24-page analysis of any game necessary? Sigh anything to please devs and grab ad dollars -_-

haplo602: hmm ... I loled when I saw the graphics error in the water tests. the camo-net disappeared everywhere it obscured water in all modes other than static. but you get a nice looking water effect :-)

I was wondering if I can get this game to run on reasonable terms on a HD4670 or HD3850. Based on the HD3870 results, it looks like it would be playable ...

cangelini, replying to V3ctor ("Page 7 is not available... It gives an error, as if the page doesn't exist."): Not sure what you're seeing (or not, in this case), but everything is working over here. Any more detail?

randomizer, replying to magicandy ("Sigh anything to please devs and grab ad dollars -_-"): Dude, the GSC can't even afford to hire a dozen half-decent developers, let alone pay for ads.

xx12amanxx: I like the article ive been playing this on a 4870 for about a week now and absolutly love it!