The Myths Of Graphics Card Performance: Debunked, Part 1

Did you know that Windows 8 can gobble as much as 25% of your graphics memory? That your graphics card slows down as it gets warmer? That you react quicker to PC sounds than images? That overclocking your card may not really work? Prepare to be surprised!

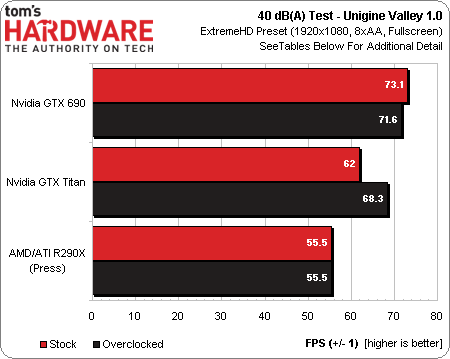

Can Overclocking Hurt Performance At 40 dB(A)?

Myth: Overclocking always yields performance benefits

Setting a specific fan profile, and letting cards throttle until they reach stability, yields an interesting and repeatable test.

| Card | Ambient (°C) | Fan Setting | Fan RPM | dB(A) ±0.5 | GPU1 Clock (MHz) | GPU2 Clock (MHz) | Memory Clock (MHz) | FPS |
|---|---|---|---|---|---|---|---|---|
| Radeon R9 290X | 30 | 41% | 2160 | 40.0 | 870-890 | n/a | 1250 | 55.5 |
| Radeon R9 290X Overclocked | 28 | 41% | 2160 | 40.0 | 831-895 | n/a | 1375 | 55.5 |
| GeForce GTX 690 | 42 | 61% | 2160 | 40.0 | 967-1006 | 1032 | 1503 | 73.1 |
| GeForce GTX 690 Overclocked | 43 | 61% | 2160 | 40.0 | 575-1150 | 1124 | 1801 | 71.6 |
| GeForce GTX Titan | 30 | 65% | 2780 | 40.0 | 915-941 | n/a | 1503 | 62 |
| GeForce GTX Titan Overclocked | 29 | 65% | 2780 | 40.0 | 980-1019 | n/a | 1801 | 68.3 |

Only the GeForce GTX Titan performs better when it's overclocked. The Radeon R9 290X gets absolutely no benefit, while the GeForce GTX 690 actually loses performance at our 40 dB(A) test point, cutting clock rate as low as 575 MHz when we overclock.
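To put numbers on those differences, here is a minimal Python sketch that computes the relative frame-rate change from overclocking, using only the FPS column of the table. The `results` dictionary and the `overclock_gain` helper are illustrative names chosen for this example, not part of our test tooling.

```python
# FPS figures copied from the table: (stock, overclocked) at 40 dB(A).
results = {
    "Radeon R9 290X": (55.5, 55.5),
    "GeForce GTX 690": (73.1, 71.6),
    "GeForce GTX Titan": (62.0, 68.3),
}


def overclock_gain(stock_fps, oc_fps):
    """Return the percent change in frame rate from stock to overclocked."""
    return (oc_fps - stock_fps) / stock_fps * 100.0


# Hypothetical summary structure: card name -> percent change.
gains = {card: overclock_gain(stock, oc) for card, (stock, oc) in results.items()}

for card, gain in gains.items():
    print(f"{card}: {gain:+.1f}%")
```

Run against the table, this works out to roughly +10% for the Titan, no change for the Radeon R9 290X, and about -2% for the GeForce GTX 690.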

This test shows how much more performance headroom the Titan has compared to the other cards. Although it doesn't match the GeForce GTX 690, the overclocked Titan gets close, leaving the Radeon R9 290X further behind than more typical benchmarks might suggest.

Another interesting point is how much higher the ambient temperature inside my case gets with a GeForce GTX 690 installed (12-14 °C higher than with the other cards). That's the effect of its center-mounted axial fan, which blows hot air back into the chassis, limiting thermal headroom. In most real-world cases, we'd expect a similar scenario. So, the trade-off between noise and performance needs to be weighed according to your own tastes.

Now, with V-sync, input lag, graphics memory, and benchmarking at a specific acoustic footprint explored in depth, we'll get back to work on part two, which is already slated to cover PCIe transfer rates, display sizes, deep dives on proprietary vendor technologies, and value for your dollar. Of course, if there are other topics you'd like to see us broach, please let us know in the comments section!


manwell999 The info on V-Sync causing frame rate halving is out of date by about a decade. With multithreading, the game can work on the next frame while the previous frame is waiting for V-Sync. Just look at BF3: with V-Sync on, you get a continuous range of FPS under 60, not just integer fractions of it. DirectX doesn't support triple buffering.

hansrotec With overclocking, are you going to cover water cooling? It would seem disingenuous to dismiss overclocking based on a generation of cards designed to boost up to a given speed only if there is headroom, and not include water cooling, which reduces noise and temperature. My 7970 (pre-GHz Edition) is a whole different card water-cooled vs. air-cooled: 1150 MHz without having to mess with the voltage on water, with temps in the 50 °C range without the fans or pumps ever kicking up, whereas on air that would be in the upper 70s to lower 80s and really loud. On top of that, tweaking memory incorrectly can lower frame rate.

hansrotec I thought my last comment might have seemed too negative, and I did not mean it in that light. I did enjoy the read, and look forward to more!


noobzilla771 Nice article! I would like to know more about overclocking, specifically core clock and memory clock ratio. Does it matter to keep a certain ratio between the two or can I overclock either as much as I want? Thanks!

chimera201 I can never win over input latency no matter what hardware I buy because of my shitty ISP

immanuel_aj I'd just like to mention that the dB(A) scale is attempting to correct for perceived human hearing. While it is true that 20 dB is 10 times louder than 10 dB, because of the way our ears work, it would seem that it is only twice as loud. At least, that's the way the A-weighting is supposed to work. Apparently there are a few kinks...