CPU vs GPU: We tested 16 hardware combinations to show which upgrade will boost your gaming performance the most

Four CPUs paired with four GPUs to show how the hardware combinations stack up.

Your once-great PC is feeling a bit long in the tooth and can no longer handle the games you want to play — we've all been there. It's time for an upgrade, but you're not sure if you need a completely new PC build or if you can make it another couple of years with a few judicious component upgrades. Many users will upgrade their GPU with one of the best graphics cards and leave everything else in place. But would they get more for the money if they spent it all on a CPU instead? And what about some combination of hardware upgrades?

Executive Summary (TLDR)

- Use a balanced approach when selecting a CPU and GPU, with midrange CPUs generally working well with midrange GPUs.

- Pairing top-end graphics cards with an older/slower CPU can result in a significant loss of performance, particularly at lower resolutions.

- RTX 4080 with an older/slower 8700K CPU loses up to 40% of its performance at 1080p, 33% at 1440p, and 10% at 4K.

- RTX 3080 loses up to 25% of its performance at 1080p, but only 10% at 1440p and 4% at 4K.

- Using an RTX 2080 with an 8700K only drops performance 10% at 1080p, and less than 5% at 1440p and 4K.

In a perfect world, you'd have a massive database of performance testing at your fingertips and you could simply plug in any combination of CPU and GPU to see how it would perform. Tools like 3DMark try to provide that sort of service, but given the myriad combinations of hardware, not to mention the fact that 3DMark isn't actually a game engine, you end up with many gaps in the knowledge you seek.

Today, we're going to fill in some of those gaps by putting four different CPUs and GPUs to the test — mixed and matched so we'll test every GPU with every CPU. This is by no means an exhaustive selection of hardware, but we'll use our full current GPU test suite of 19 games at four different settings/resolution combinations. That gives us 16 total reference points showing how different hardware combinations stack up. That should be sufficient to help you plan for your next PC upgrade.

2023 PC

AMD Ryzen 7 7800X3D

ASRock X670E Taichi

G.Skill Trident Z5 Neo 2x16GB DDR5-6000 CL30

Crucial T700 4TB SSD

be quiet! 1600W Dark Power Pro 13

Cooler Master ML280 Mirror

Windows 11 Pro 64-bit

2022 PC

Intel Core i9-13900K

MSI MEG Z790 Ace DDR5

G.Skill Trident Z5 2x16GB DDR5-5600 CL28

Crucial T700 4TB SSD

be quiet! 1600W Dark Power Pro 13

Cooler Master PL360 Flux

Windows 11 Pro 64-bit

2021 PC

Intel Core i9-11900K

MSI MEG Z490 Godlike

CORSAIR Vengeance RGB 2x16GB DDR4-3600 C16

Crucial T700 4TB SSD

be quiet! 1600W Dark Power Pro 13

Cooler Master PL360 Flux

Windows 11 Pro 64-bit

2017 PC

Intel Core i7-8700K

MSI MEG Z390 Ace

CORSAIR Vengeance RGB 2x16GB DDR4-3600 C16

Crucial T700 4TB SSD

be quiet! 1600W Dark Power Pro 13

NZXT Kraken Z73

Windows 11 Pro 64-bit

GRAPHICS CARDS

Nvidia RTX 2080 (2018)

Nvidia RTX 3050 8GB (2022)

Nvidia RTX 3080 10GB (2020)

Nvidia RTX 4080 (2022)

There's an elephant over in the CPU corner, of course: You can't just upgrade your processor, at least in most cases. If you're on a socket AM4 motherboard, running a first generation Ryzen chip, you could upgrade to a Zen 3 Ryzen 5000-series CPU. AMD's AM4 is one of the longest-lived platforms of all time, though it's been replaced by socket AM5 now. Intel platforms, meanwhile, typically only support two generations of processors. So, if you have an 8th Gen Core Intel CPU from 2017–2018, the best you can do without replacing at least the motherboard along with the processor would be a 9th Gen Core chip from 2018–2019.

Depending on your upgrade path, you may also need to replace your system RAM. Most reasonably recent systems use at least DDR4 memory — Intel made the switch to DDR4 in 2015 and AMD's socket AM4 platform also requires DDR4. Intel started supporting DDR5 on its socket LGA1700 platform with Alder Lake 12th Gen Core in late 2021, though the platform still supports DDR4 as well. AMD's AM5 platform made a wholesale switch to DDR5, marking a clean break from the past.

For our look at CPU and GPU upgrade options, we have four different test platforms. We drew the line with Windows 11 (23H2), which requires TPM support and some other bits and bobs. While it's possible to work around some of the limitations, we opted to go with the officially supported Intel 8th Gen Core i7-8700K as our oldest CPU. Then we have the Core i9-11900K from early 2021 and the Core i9-13900K from late 2022. Rounding things out, AMD's Ryzen 7 7800X3D from 2023 reigns as the current king of the best CPUs for gaming.

All the specs for our test PCs can be seen in the boxout. We have 32GB (2x16GB) of memory for all four PCs, with DDR4-3600 RAM in the 8700K and 11900K, DDR5-5600 XMP for the 13900K, and DDR5-6000 EXPO for the 7800X3D. We used a Crucial 4TB T700 drive, since that's large enough to comfortably hold our gaming test suite, though the oldest platform we tested only ran the drive at PCIe 3.0 speeds, and the 11900K has a PCIe 4.0 interface.

Our test GPUs consist of Nvidia cards, to keep things consistent: all support DLSS and use the same 555.85 drivers (except for The Last of Us, Part 1, which was tested with 552.44 drivers due to a bug in the 555 drivers that prevented the game from running on 8GB cards). All four GPUs have been among the best graphics cards at some point in the past six years, though only the RTX 4080 Super (for which our 4080 serves as a proxy) currently ranks on our list.

Note that we're using the original RTX 4080, but if you're actually in the market for such a GPU, you're better off getting the 4080 Super variant simply because it's more financially viable — it costs $200 less than the vanilla RTX 4080 that launched in 2022, and it's basically the same performance (within 3%). The RTX 3080 (10GB) was Nvidia's second-fastest 30-series card when it launched, and the same goes for the RTX 2080. Both were later displaced by the 2080 Super and 3080 Ti, but we're sticking to the initial GPUs rather than the mid-cycle refreshes. We also added the RTX 3050 8GB (not the new 3050 6GB variant) to show how things change with what is basically the slowest desktop RTX card — it's slightly slower than even the RTX 2060, though some games now prefer the 8GB of VRAM of the 3050.

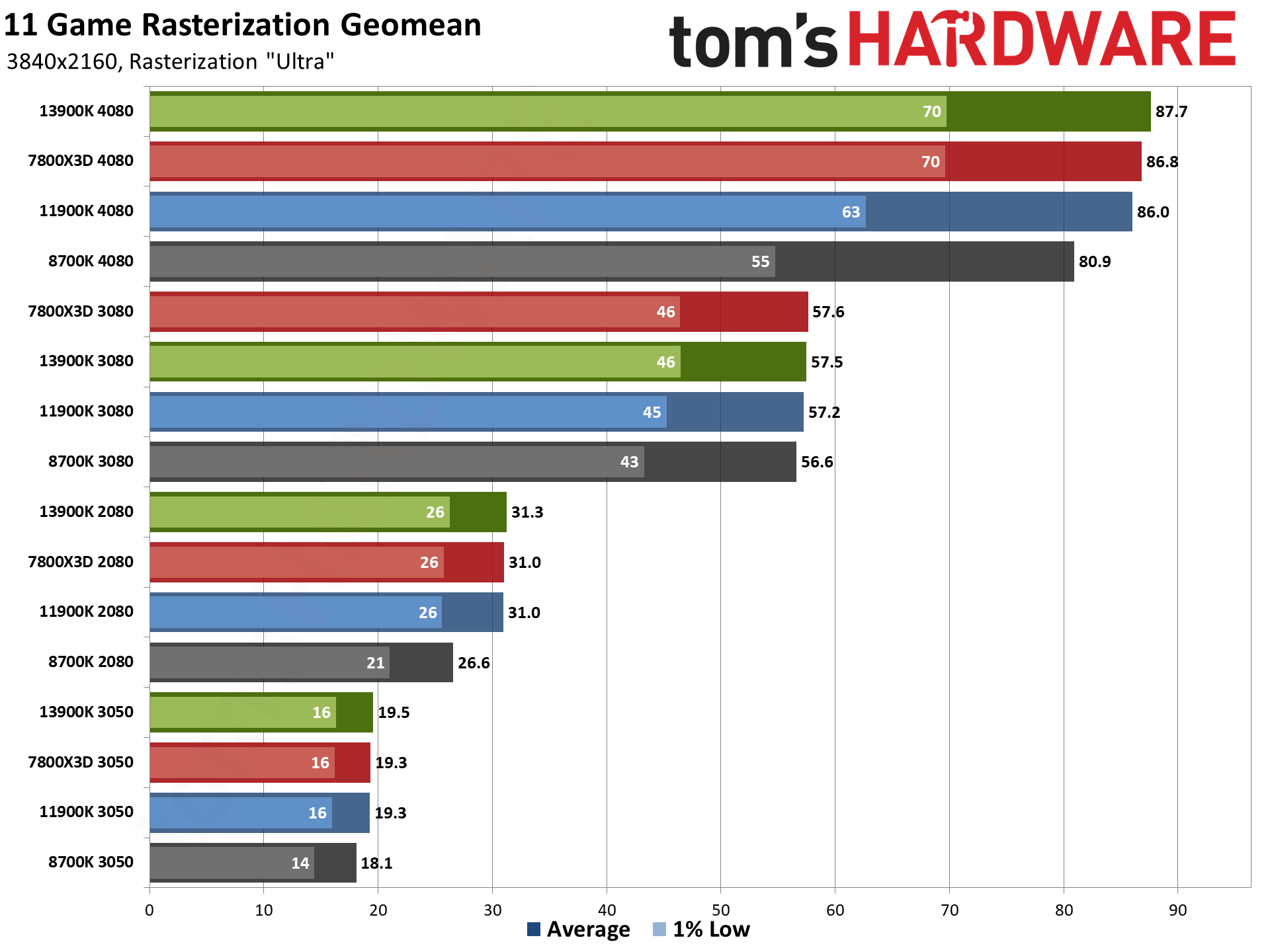

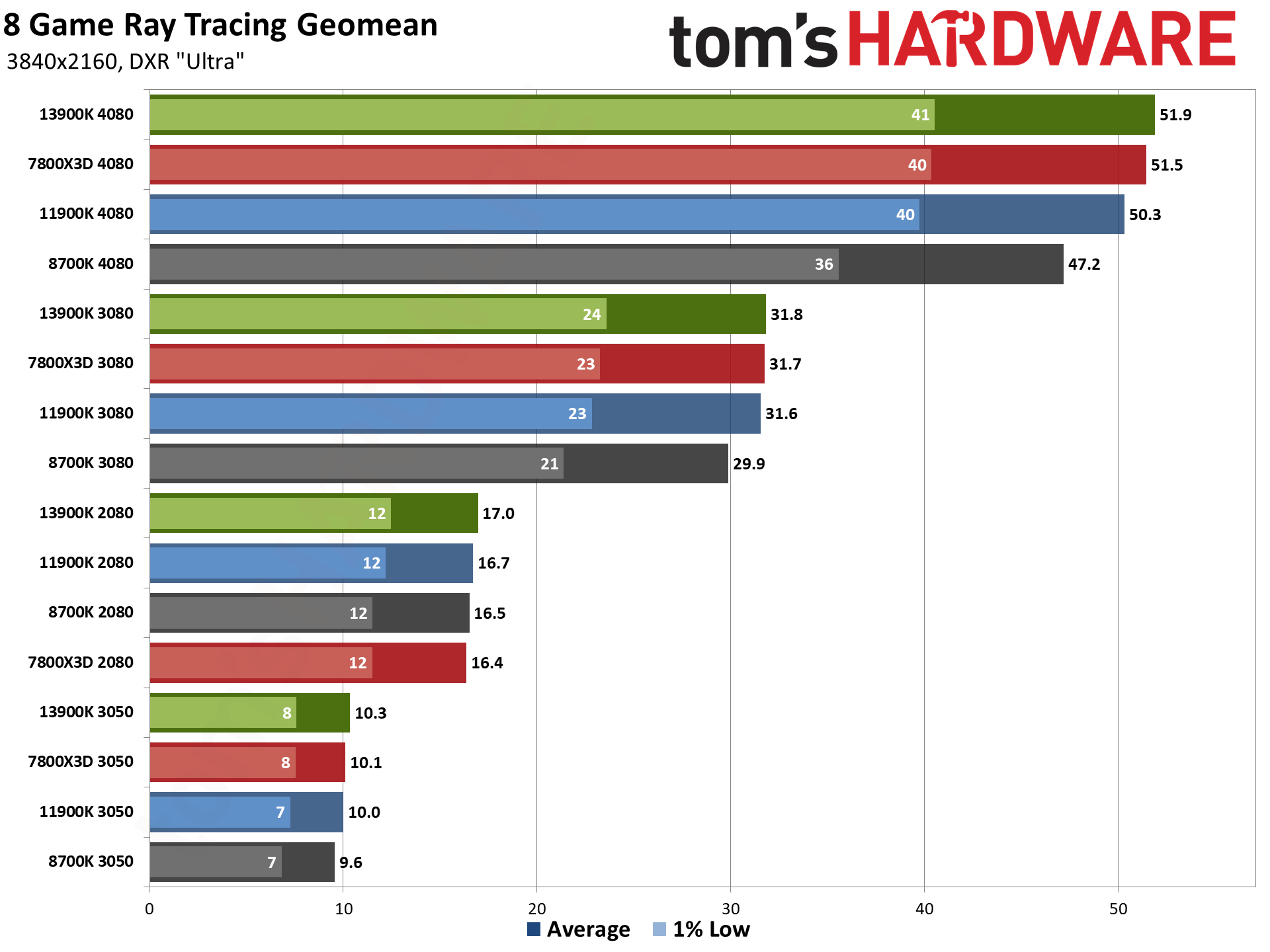

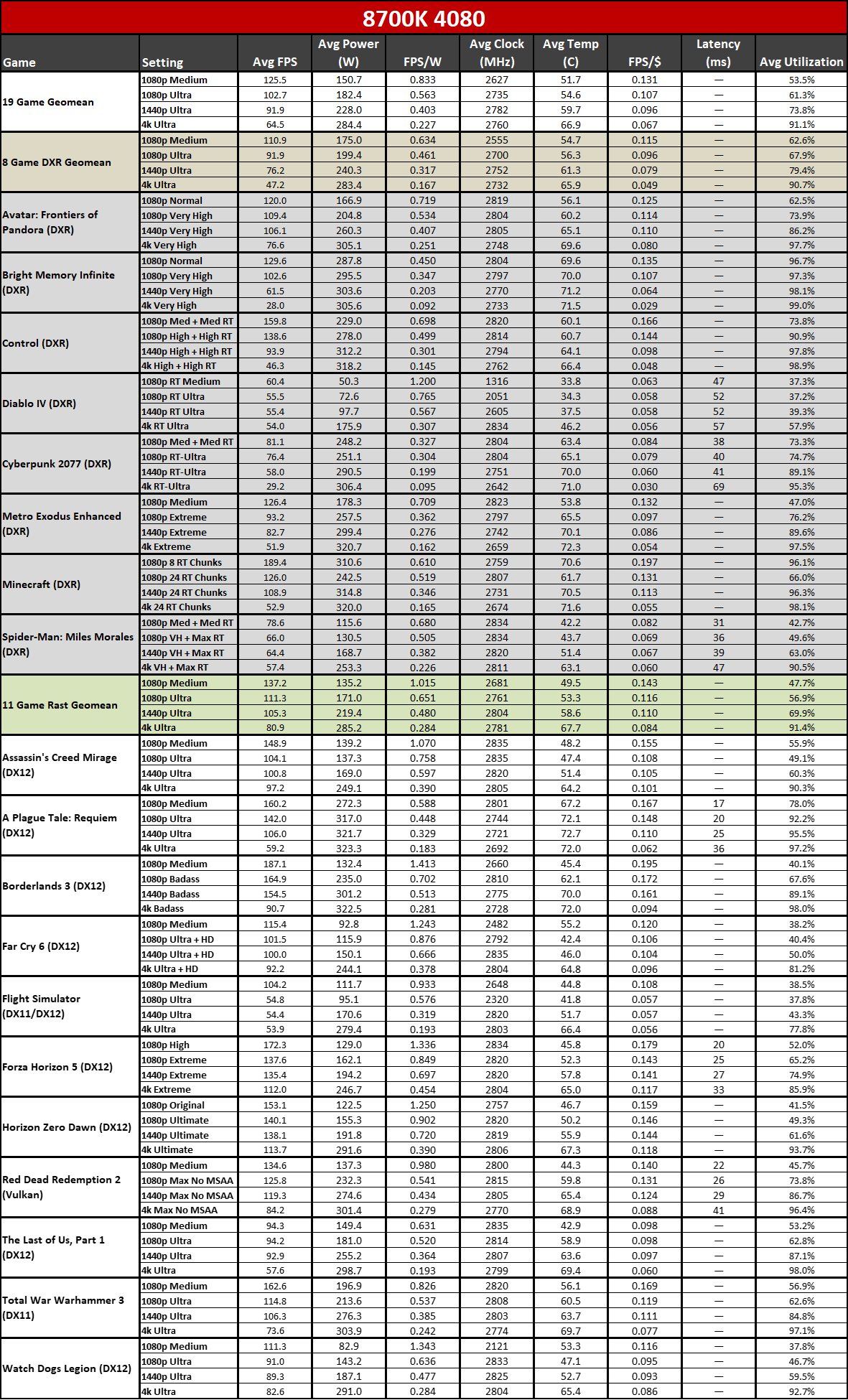

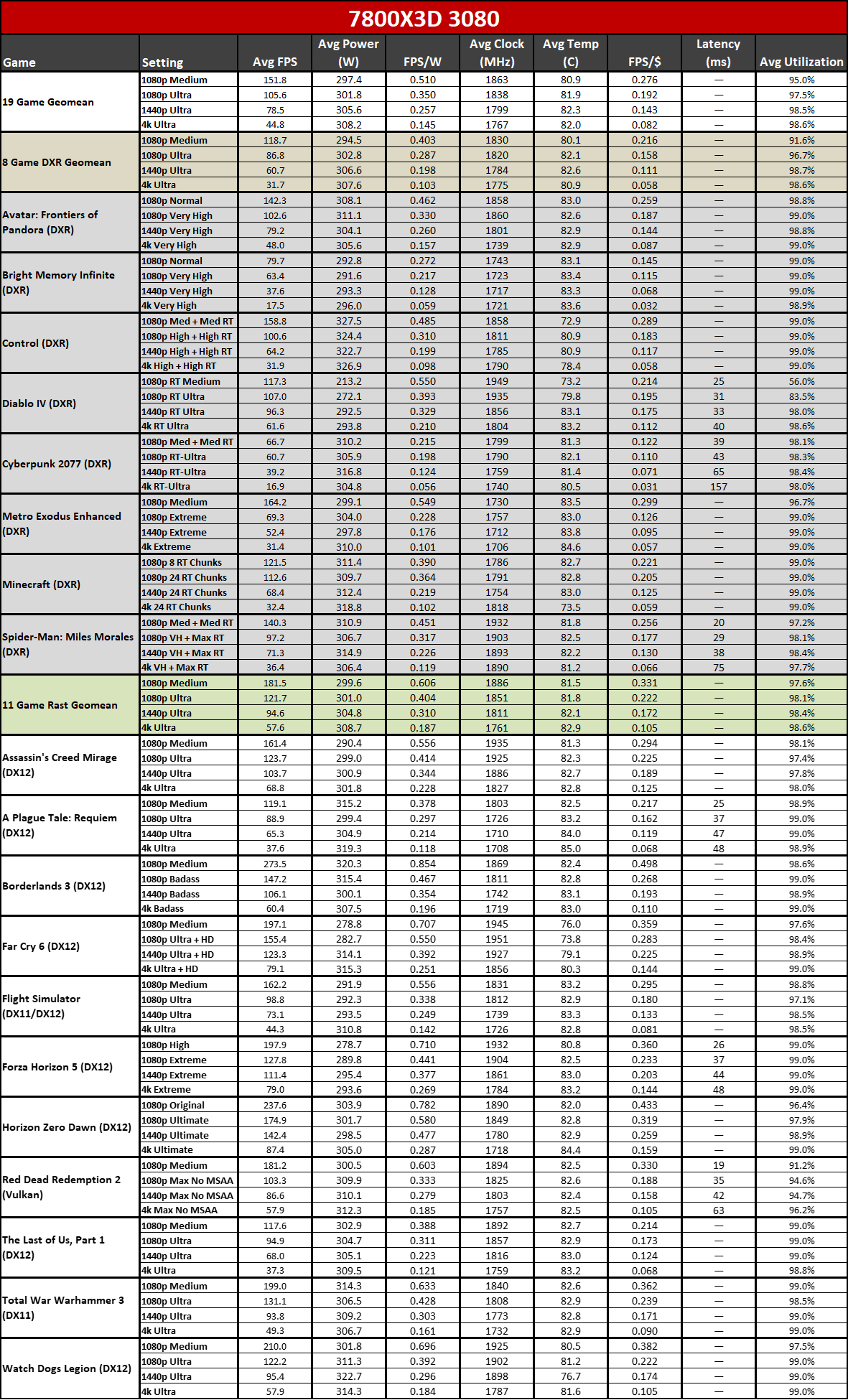

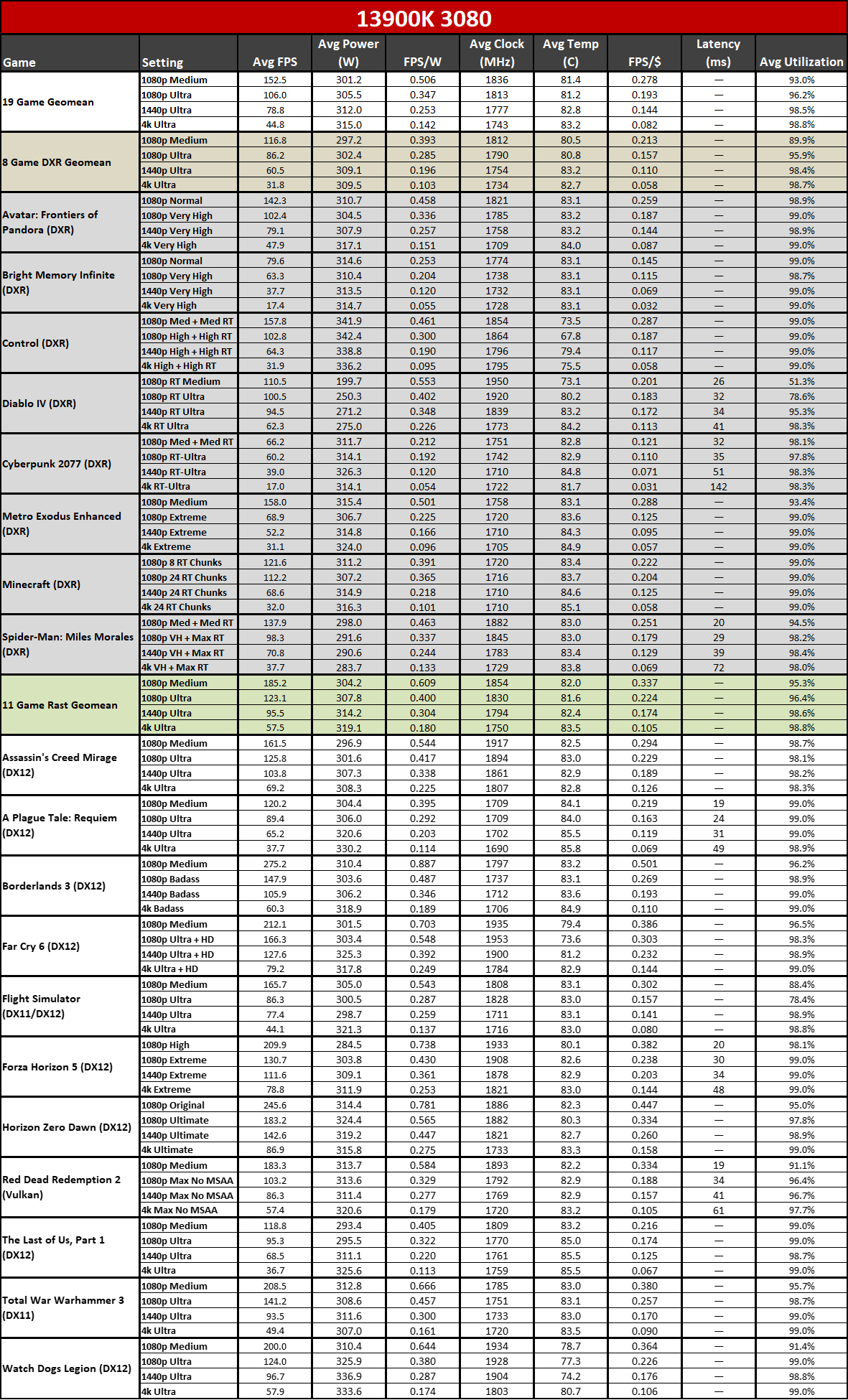

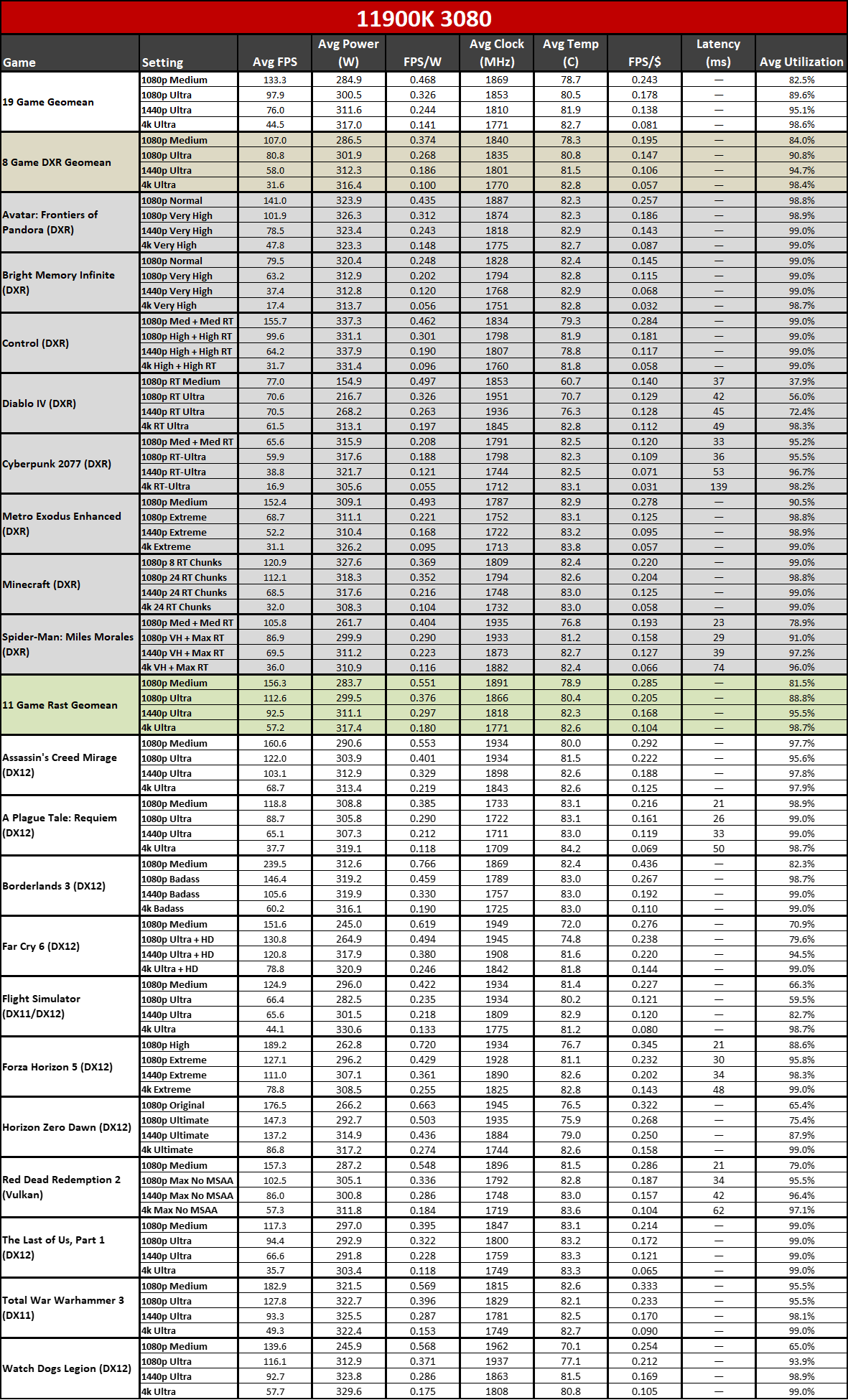

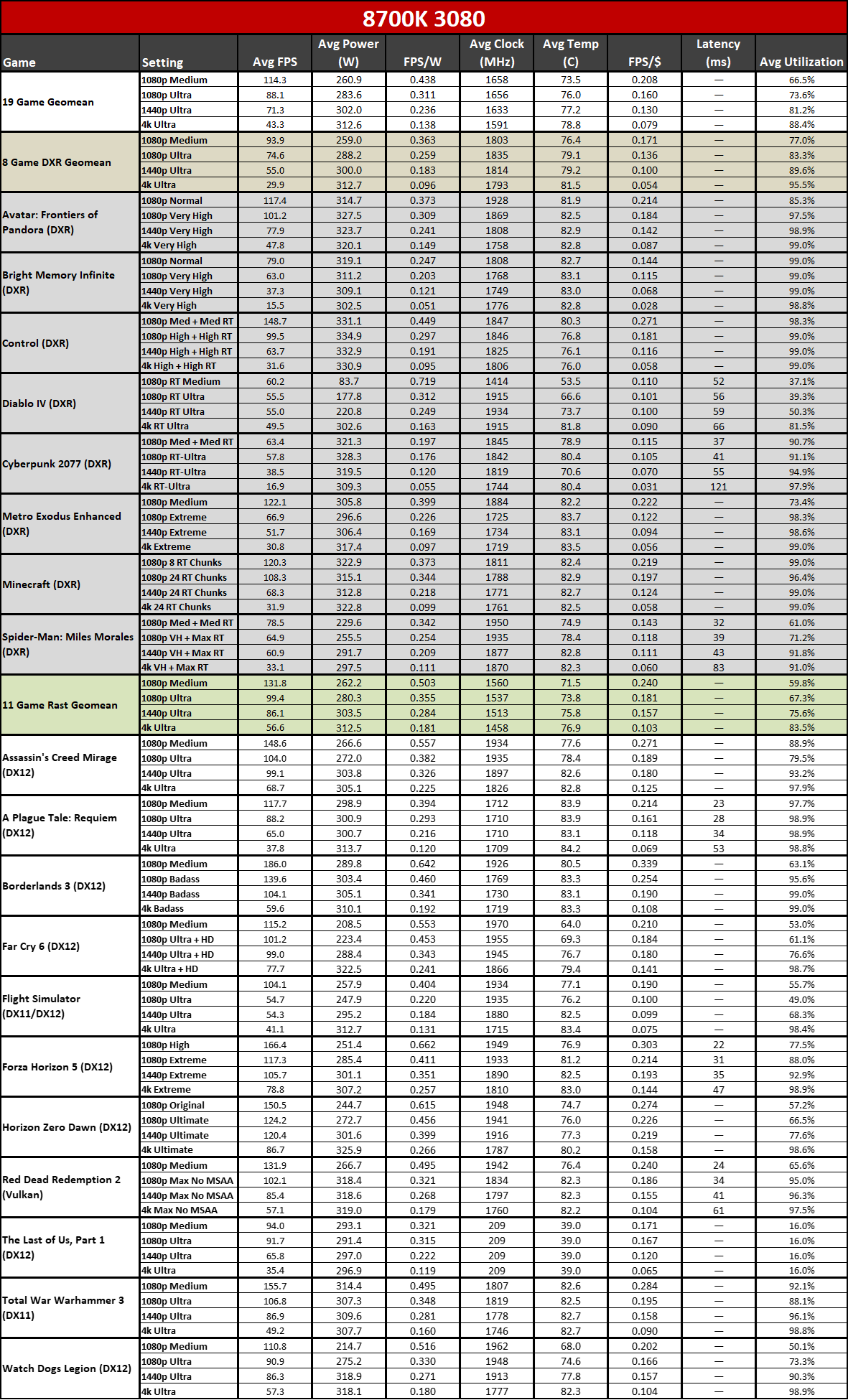

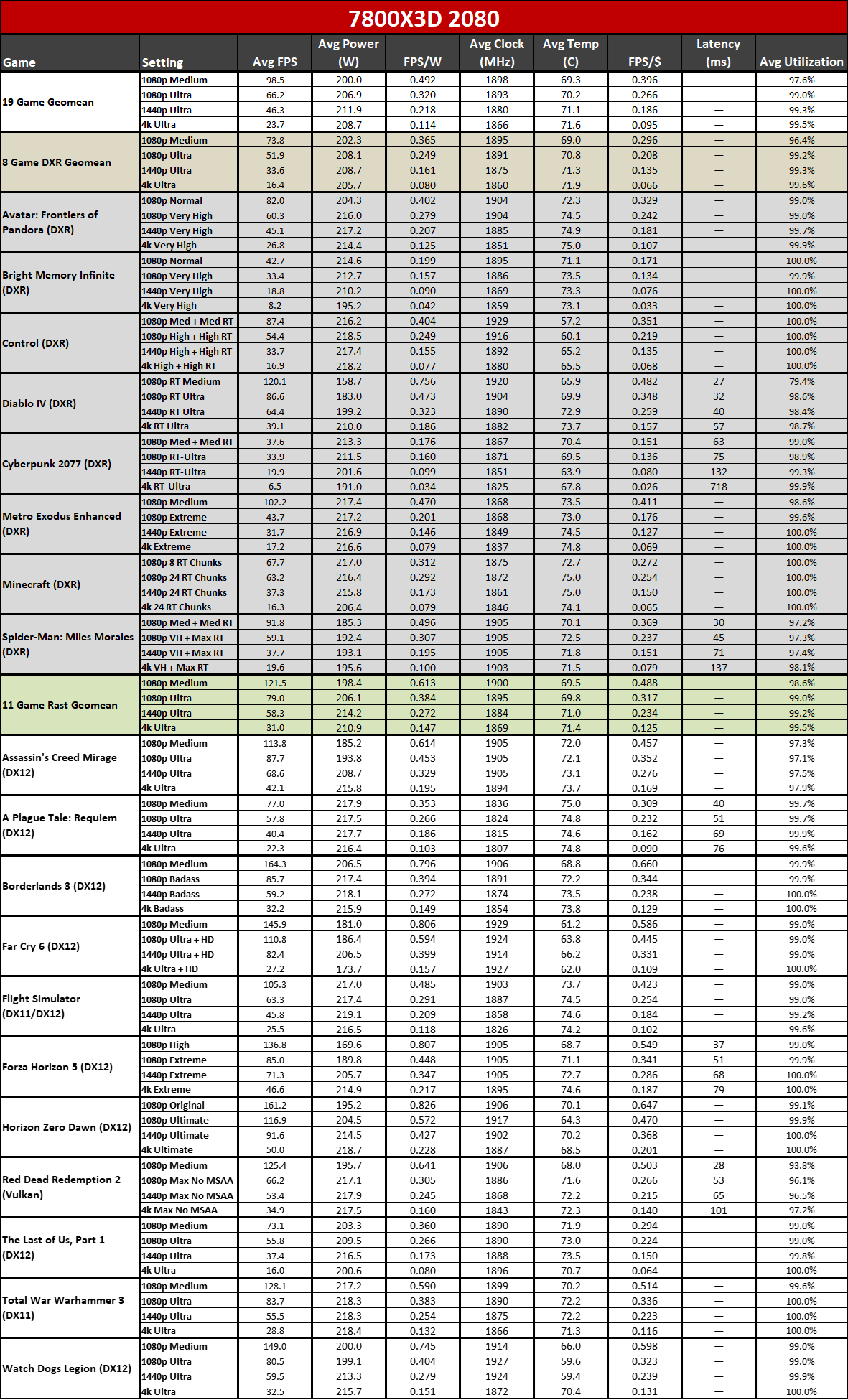

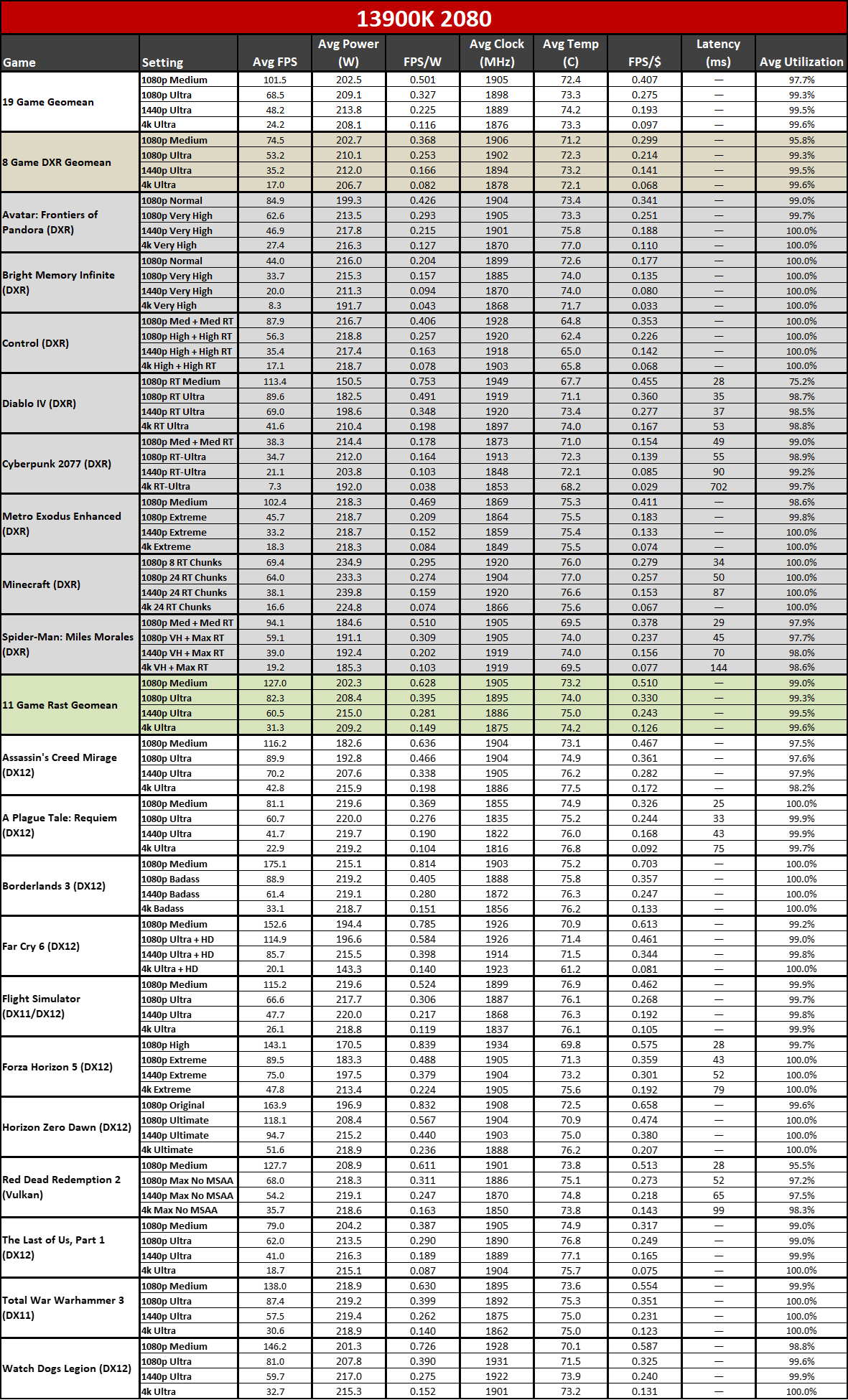

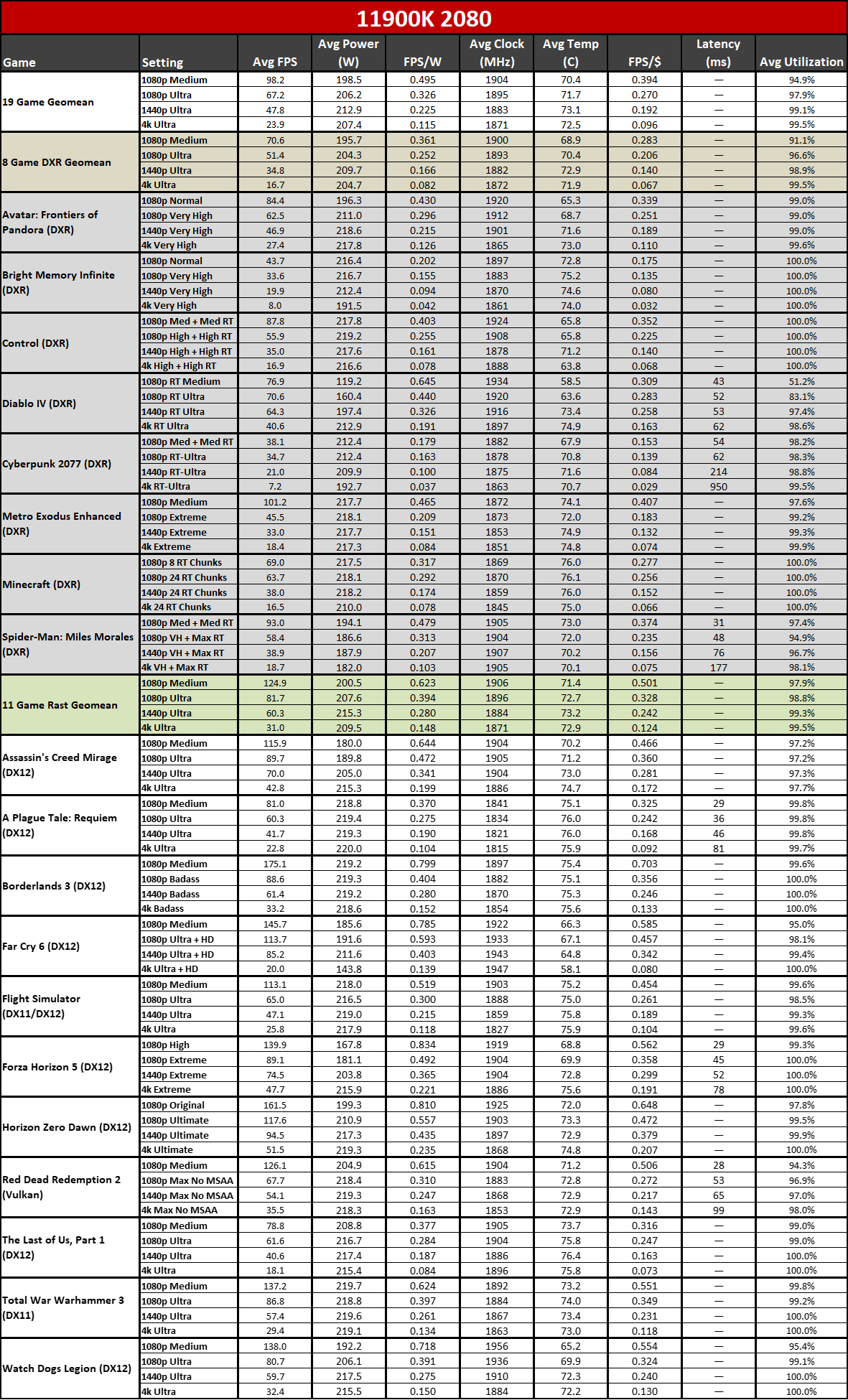

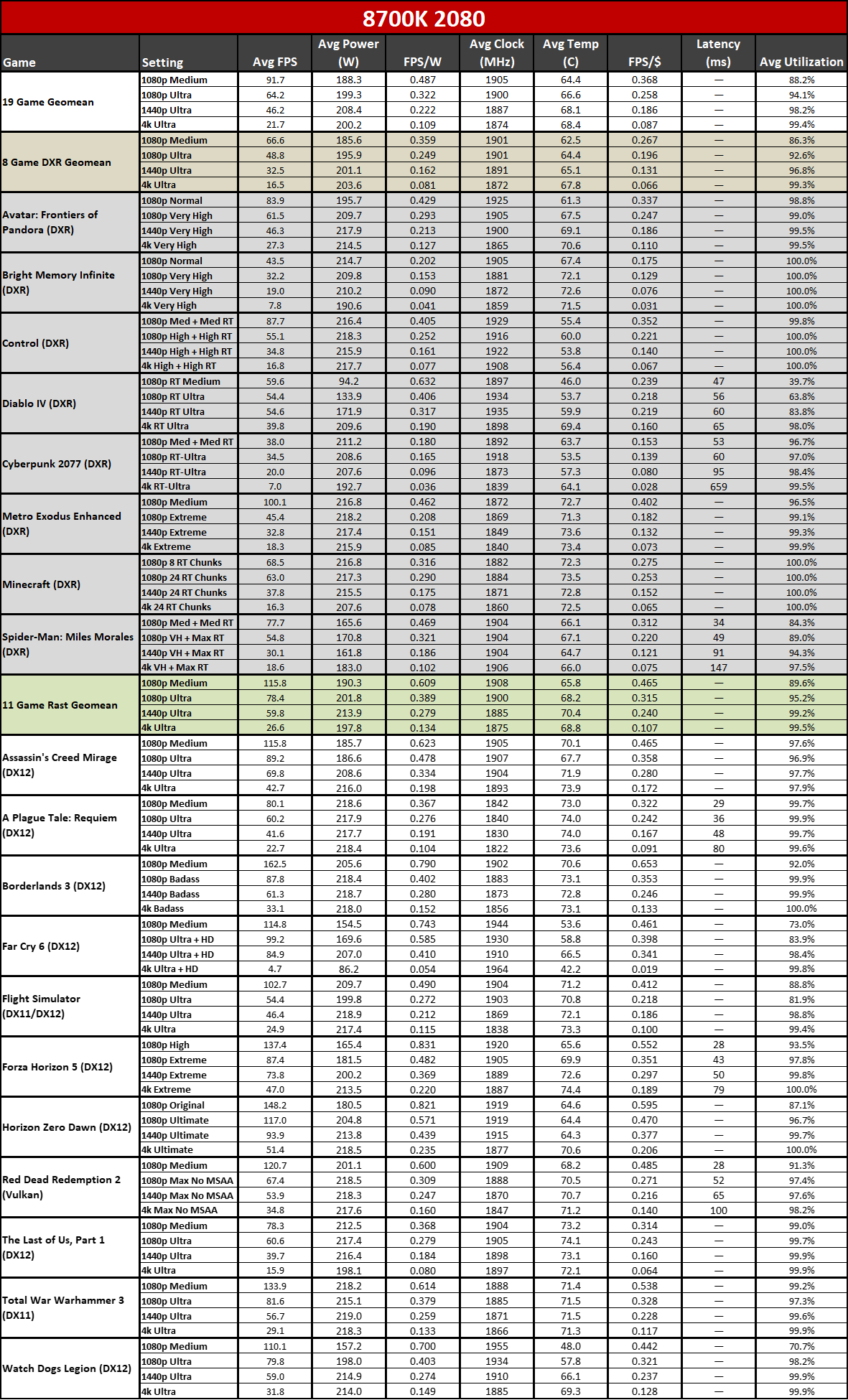

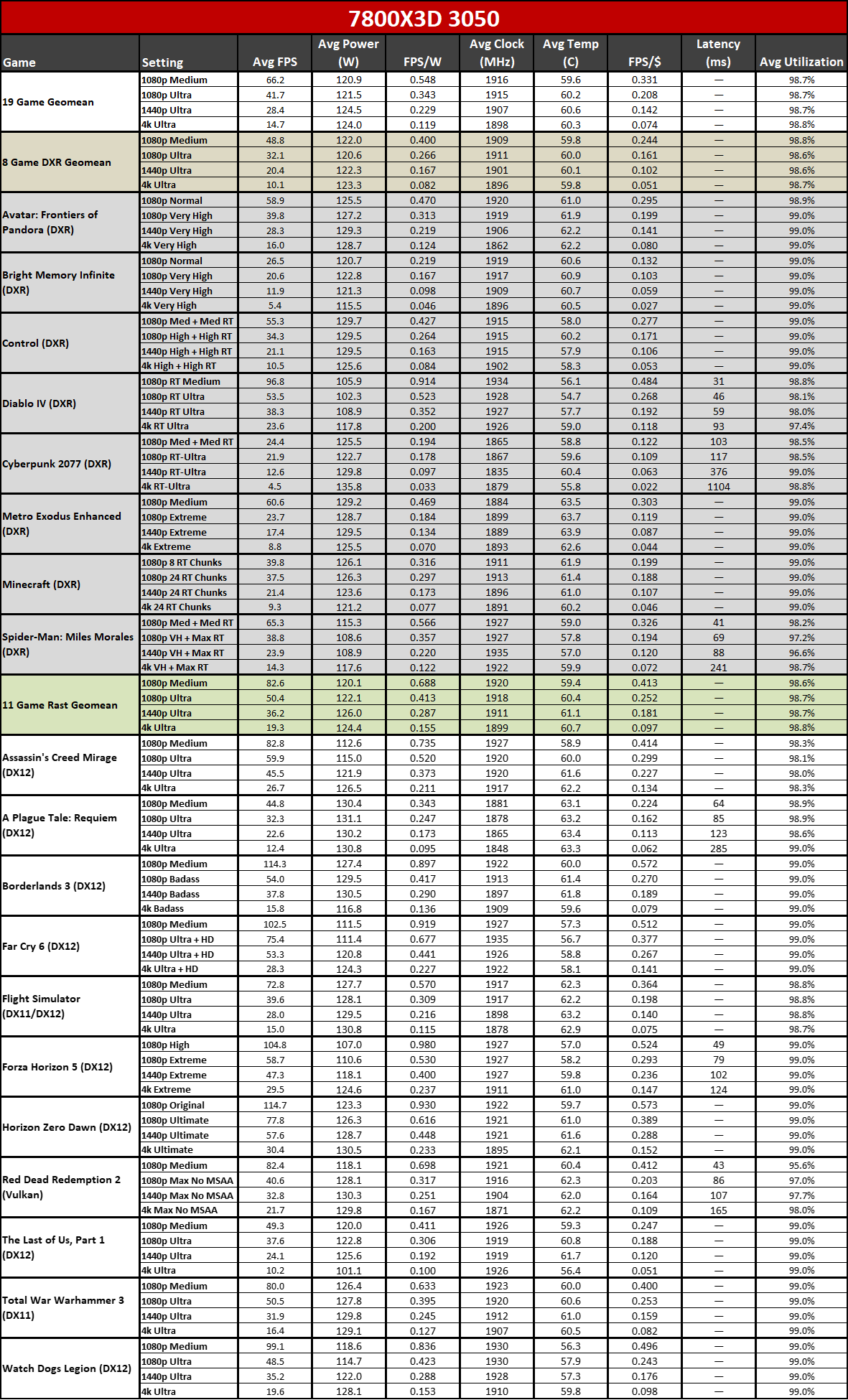

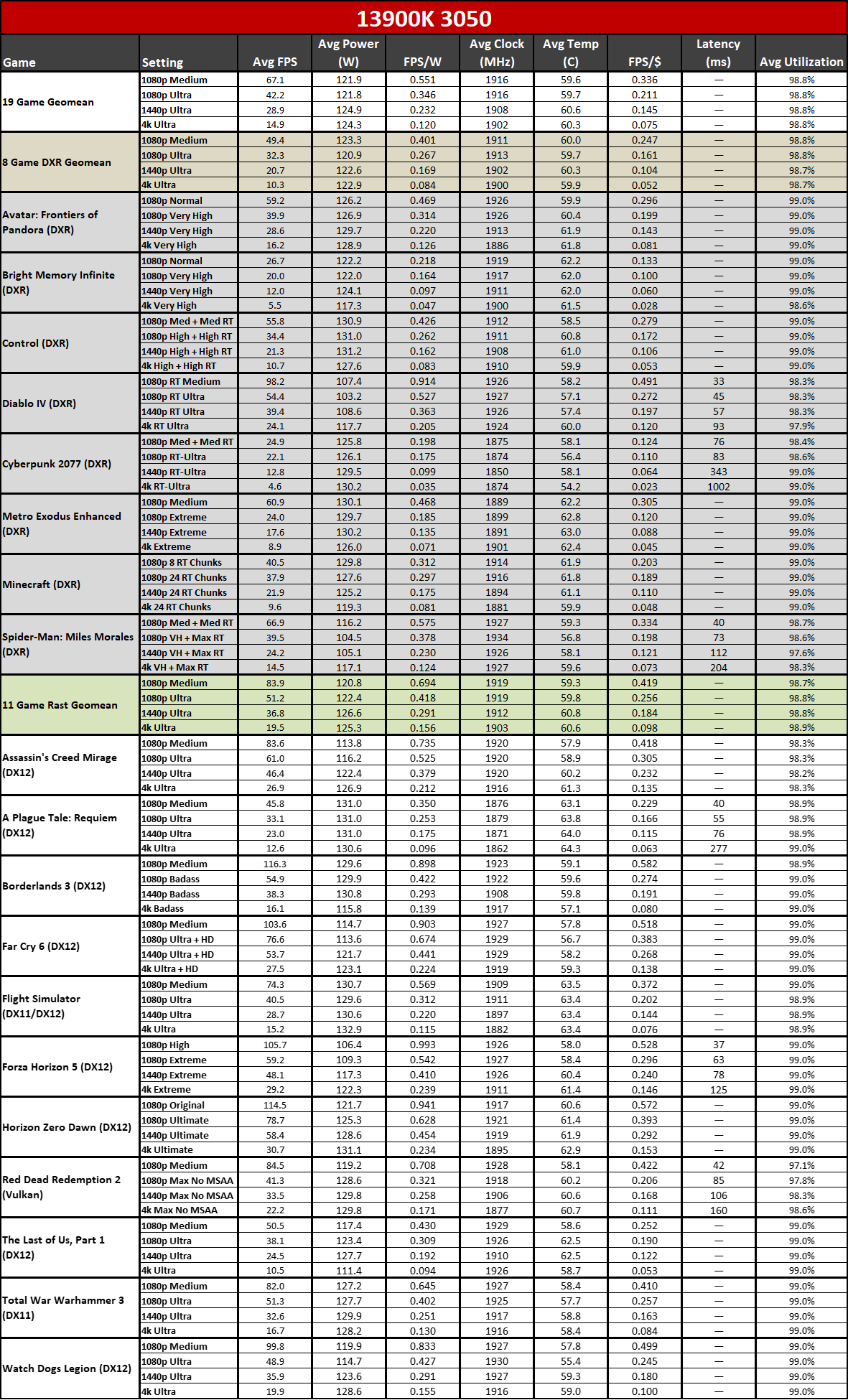

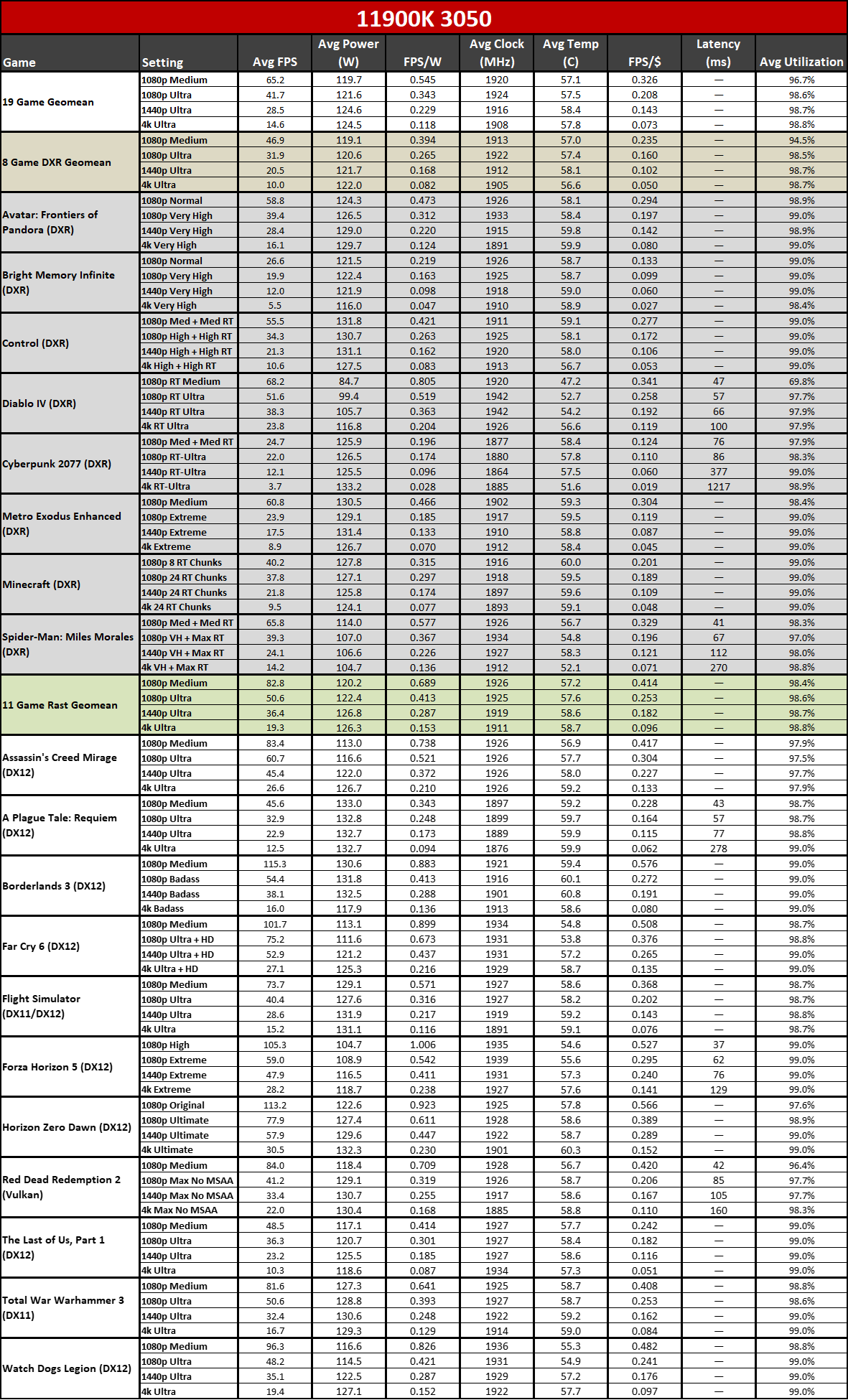

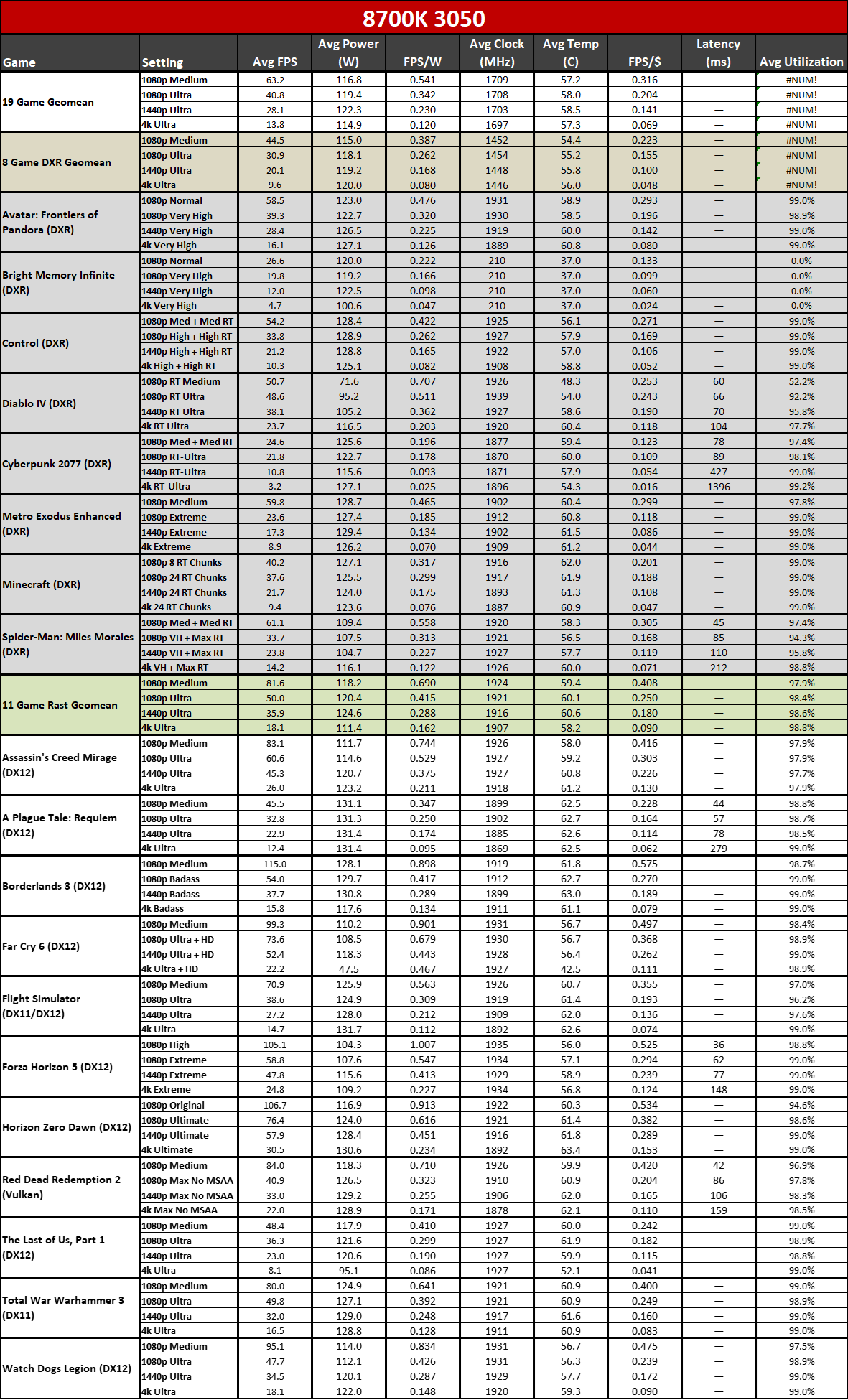

We're using our full 19-game GPU test suite, with eight ray tracing (DXR, or DirectX Raytracing) enabled games and eleven rasterization games — we left ray tracing disabled in these, even if a game supports it. Each setting gets tested at least three times, and we discard the first run and then take the better result of the remaining two runs. We break things down into an overall geometric mean (equal weighting to every game), separate geomeans of just the rasterization and ray tracing games, and then the individual game results.
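A minimal Python sketch of that scoring approach might look like the following. The function names and the sample numbers are hypothetical placeholders for illustration, not the scripts or results behind the charts.

```python
from statistics import geometric_mean


def score_from_runs(runs_fps):
    """Discard the first run, then keep the better of the remaining runs."""
    if len(runs_fps) < 3:
        raise ValueError("need at least three runs per setting")
    return max(runs_fps[1:])


def suite_geomean(per_game_fps):
    """Geometric mean across games, so every game carries equal weight."""
    return geometric_mean(list(per_game_fps.values()))


# Placeholder numbers for illustration only, not measured results.
runs = {
    "Game A": [95.0, 100.0, 98.0],
    "Game B": [390.0, 400.0, 396.0],
}
scores = {game: score_from_runs(fps) for game, fps in runs.items()}
overall = suite_geomean(scores)
print(scores, round(overall, 1))
```

With these placeholder values the geometric mean lands at 200 fps, whereas a plain arithmetic average would be 250 fps and would be dominated by the high-framerate game. That is the practical reason for using a geomean when the games in a suite run at very different framerates.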

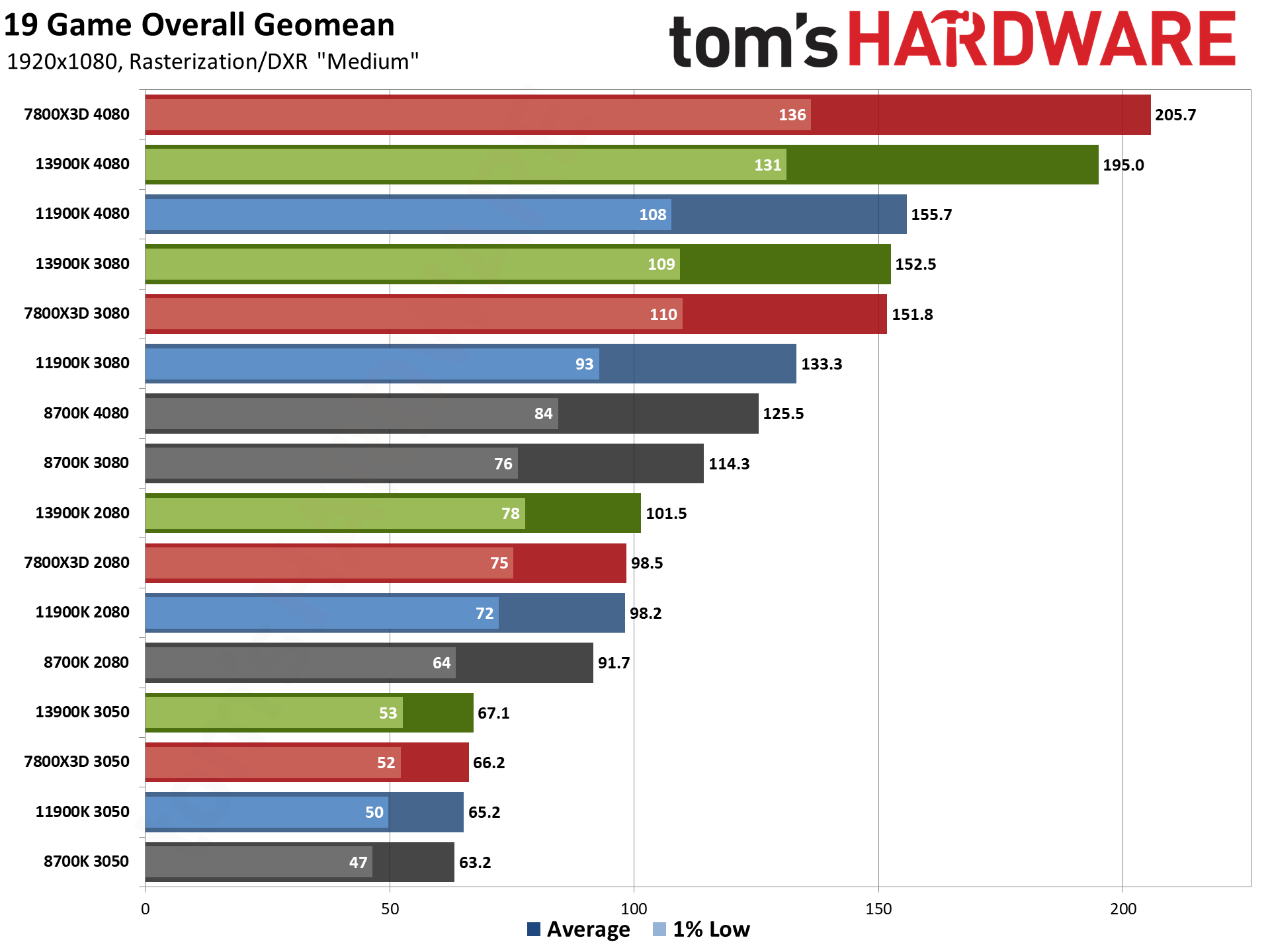

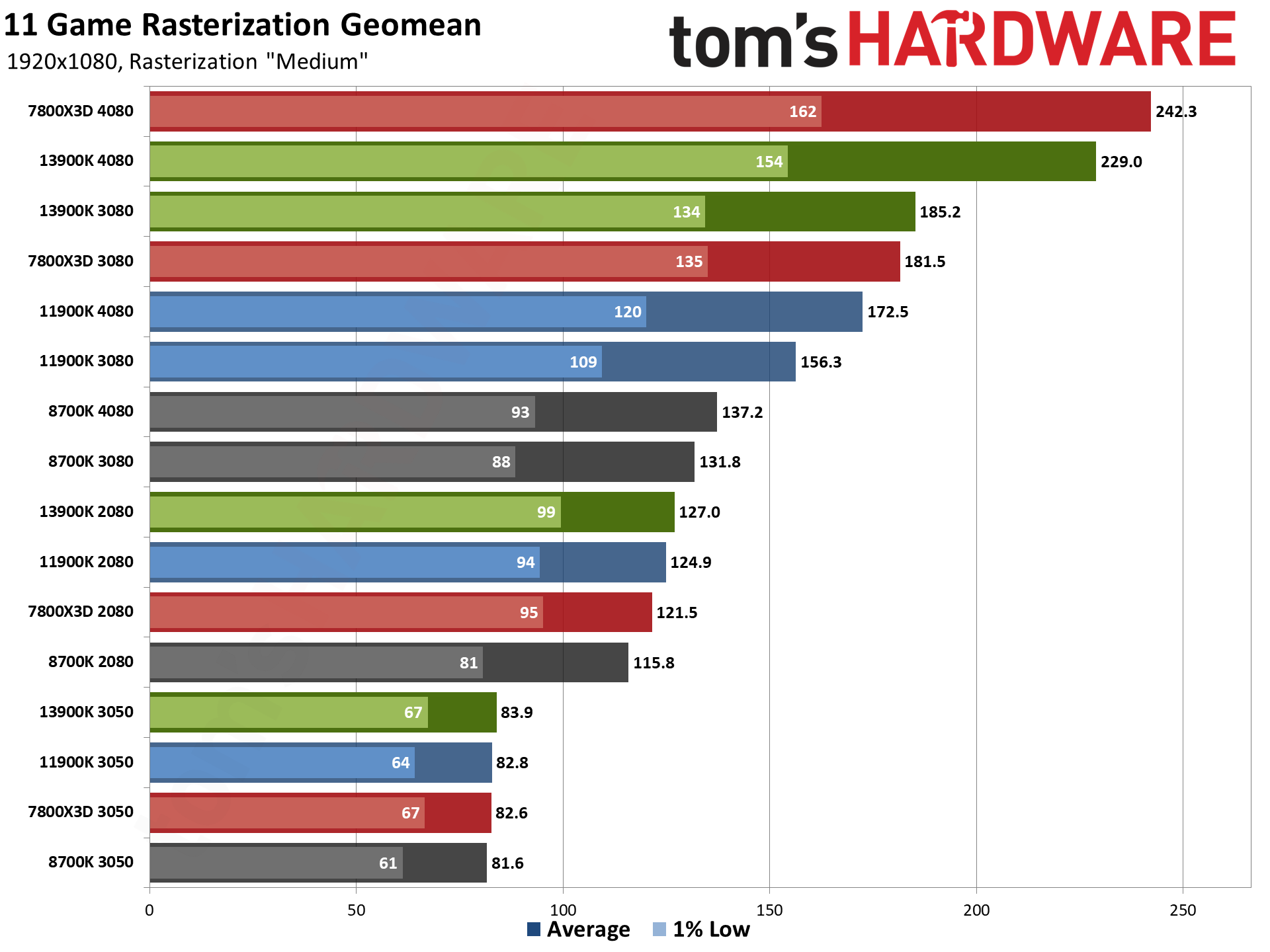

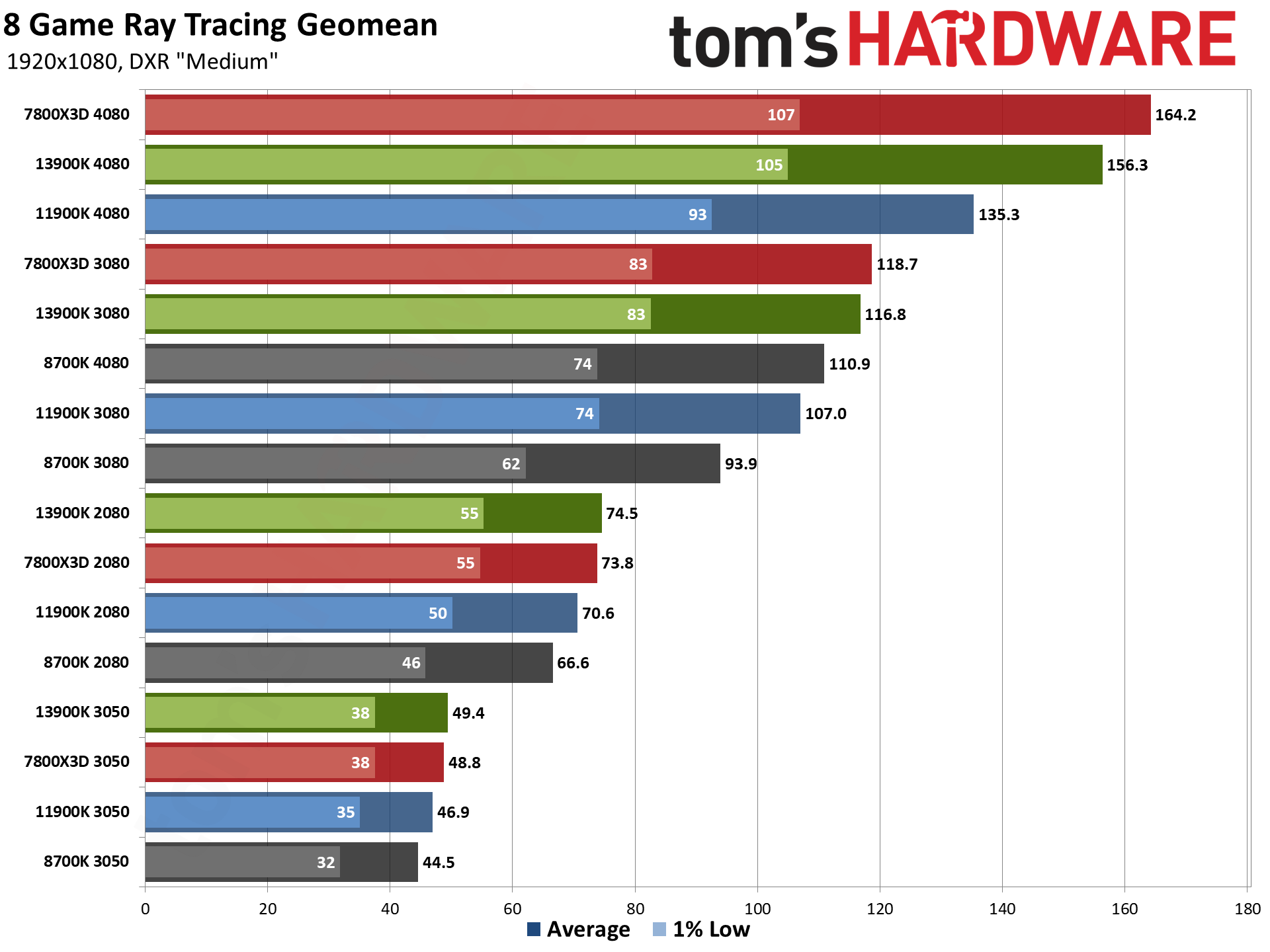

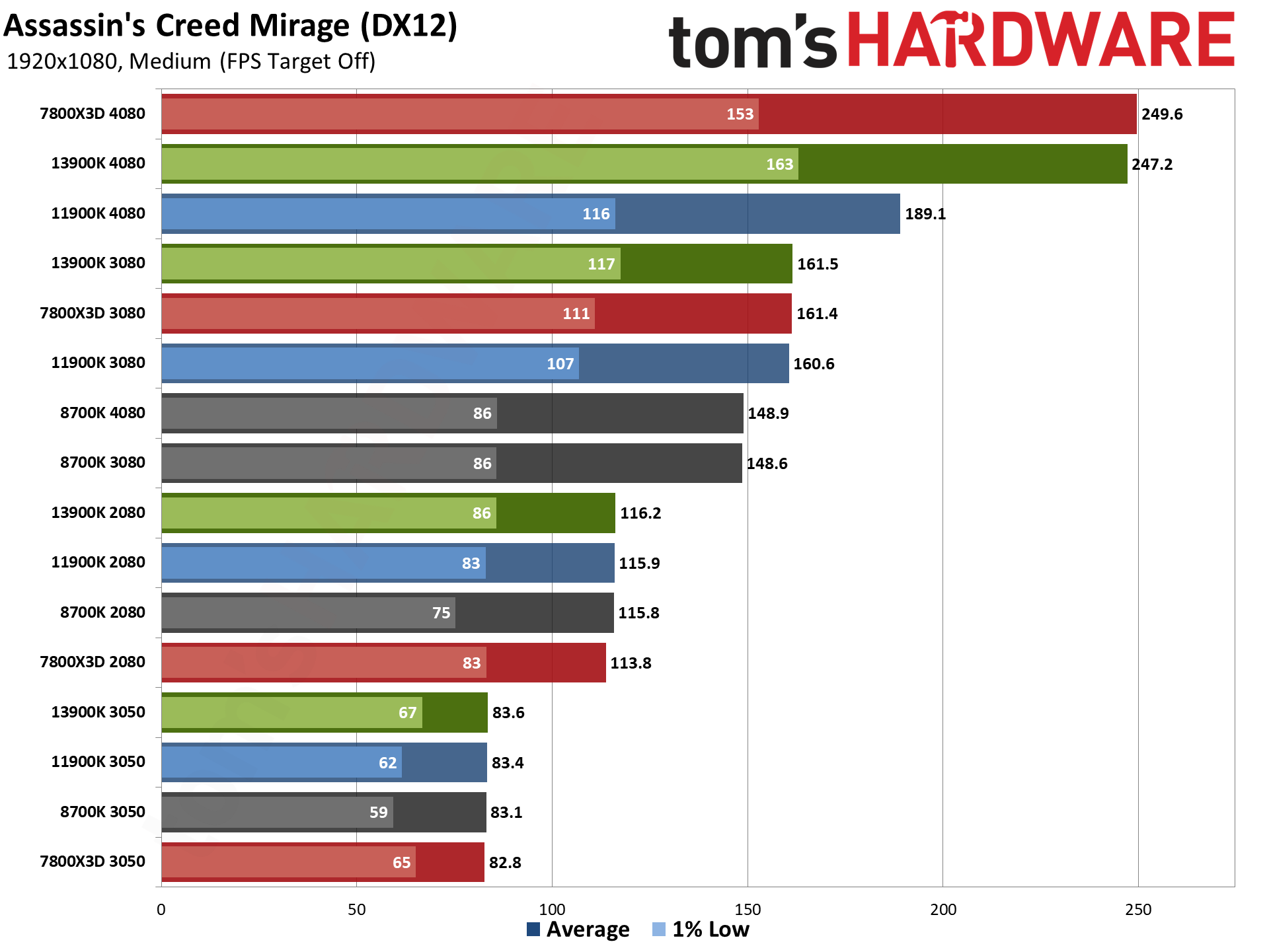

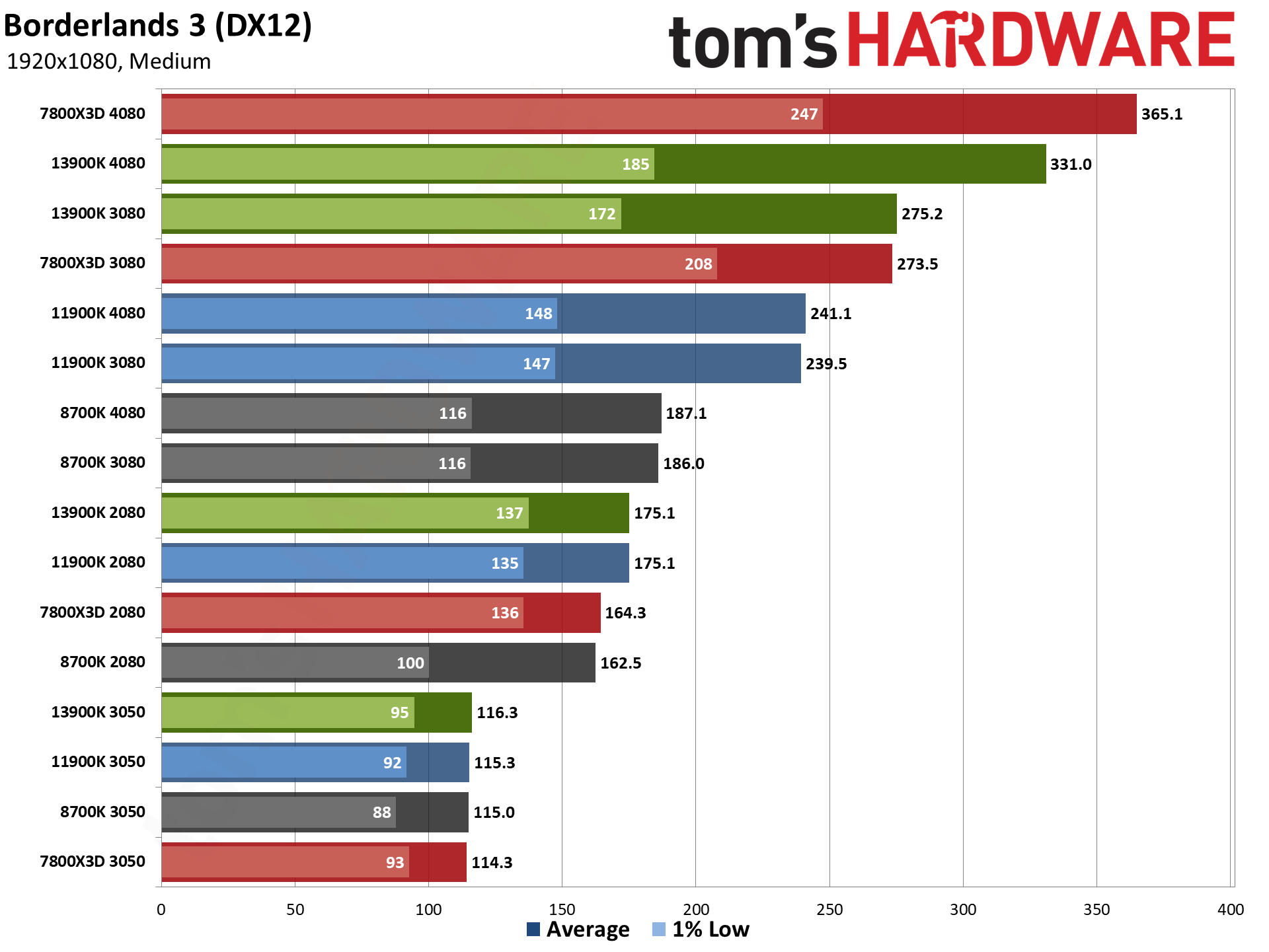

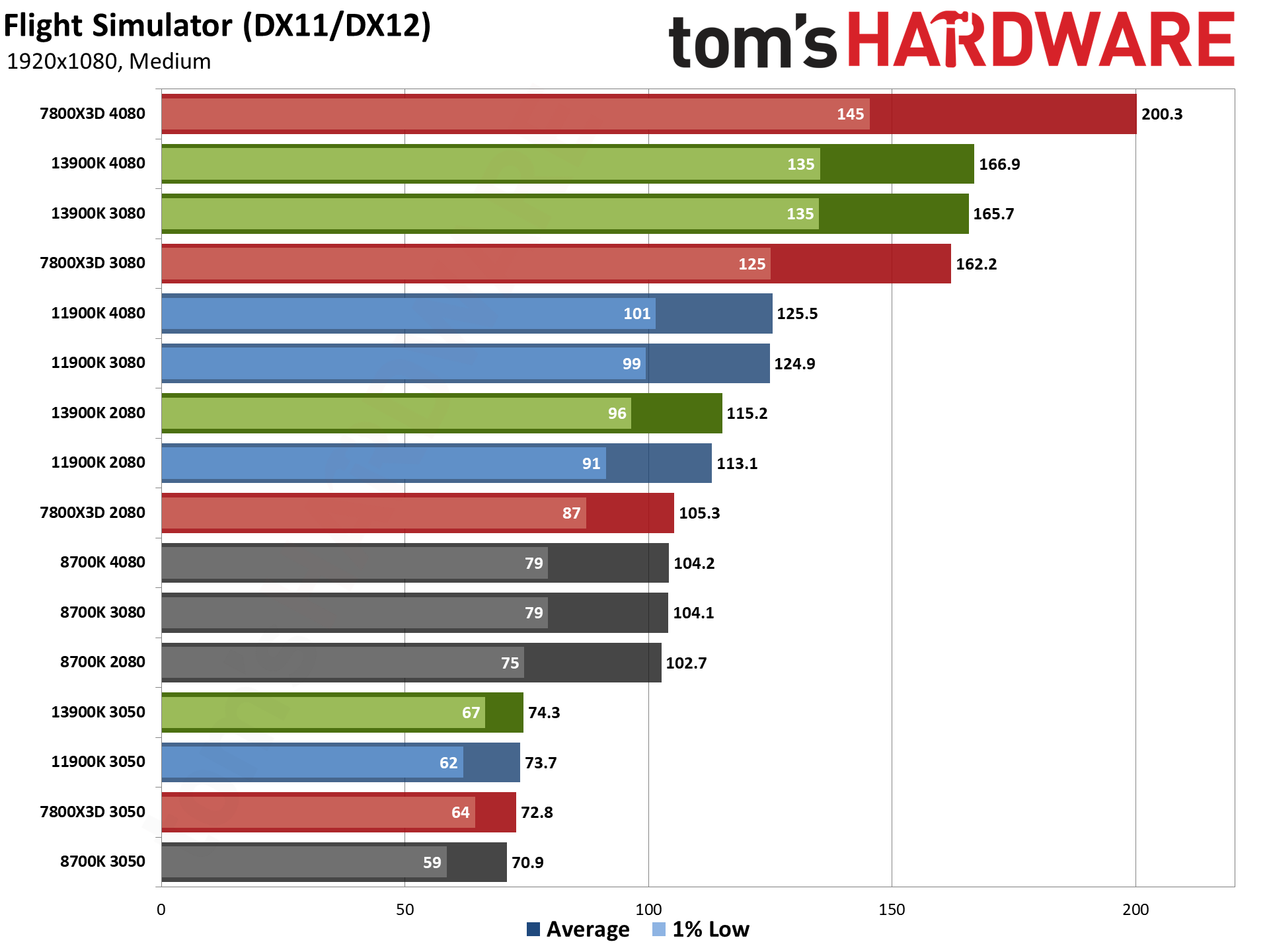

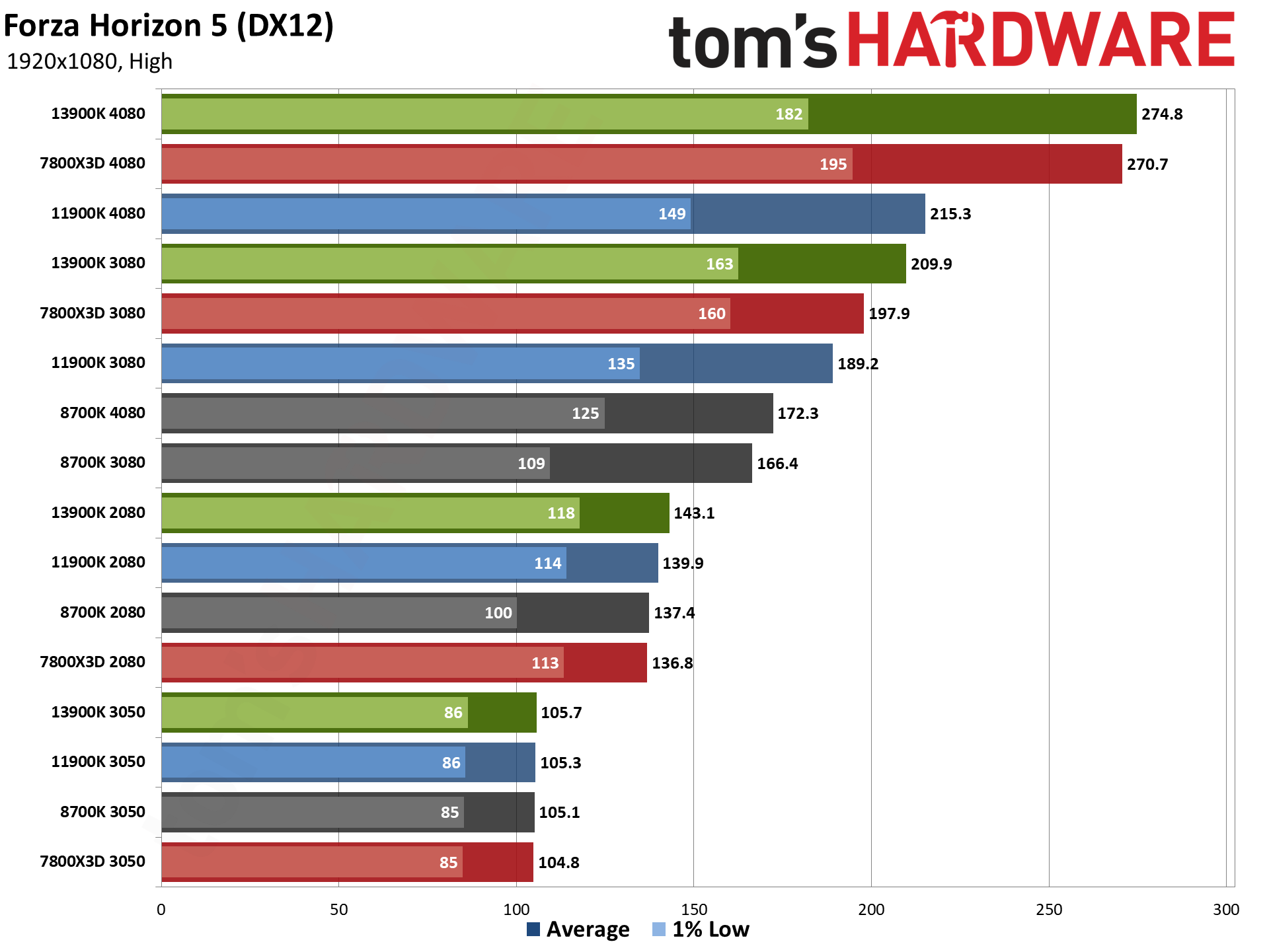

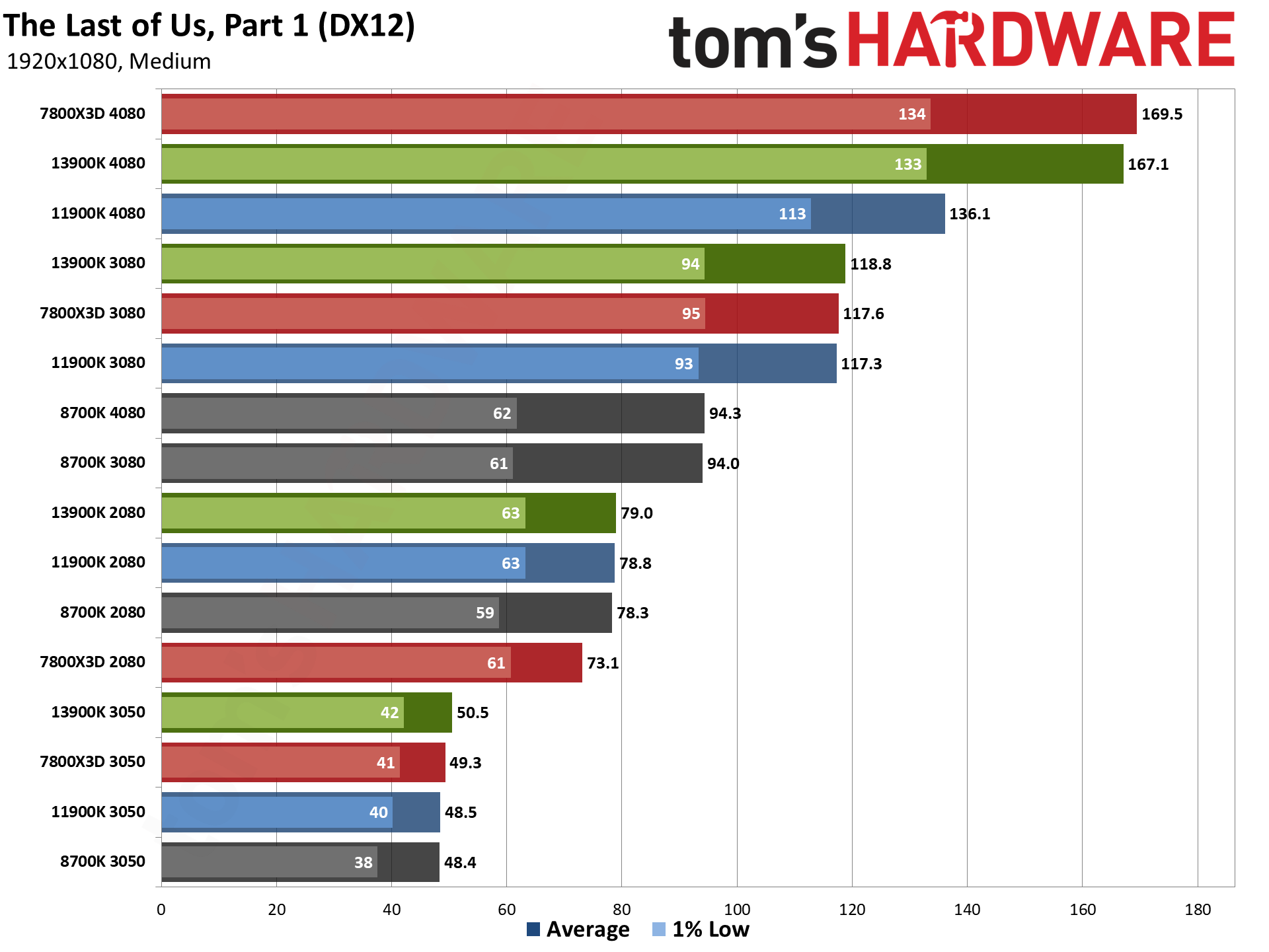

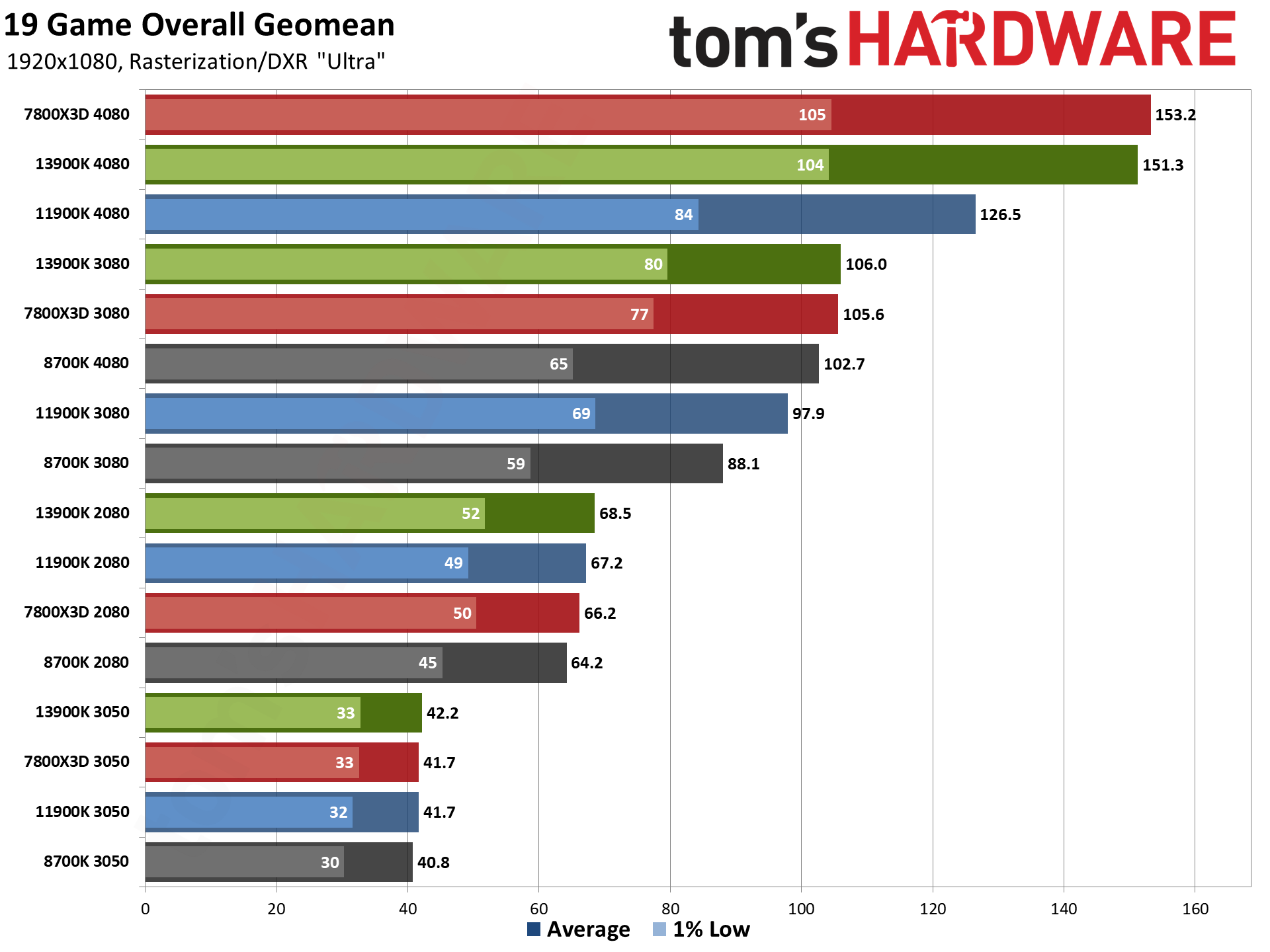

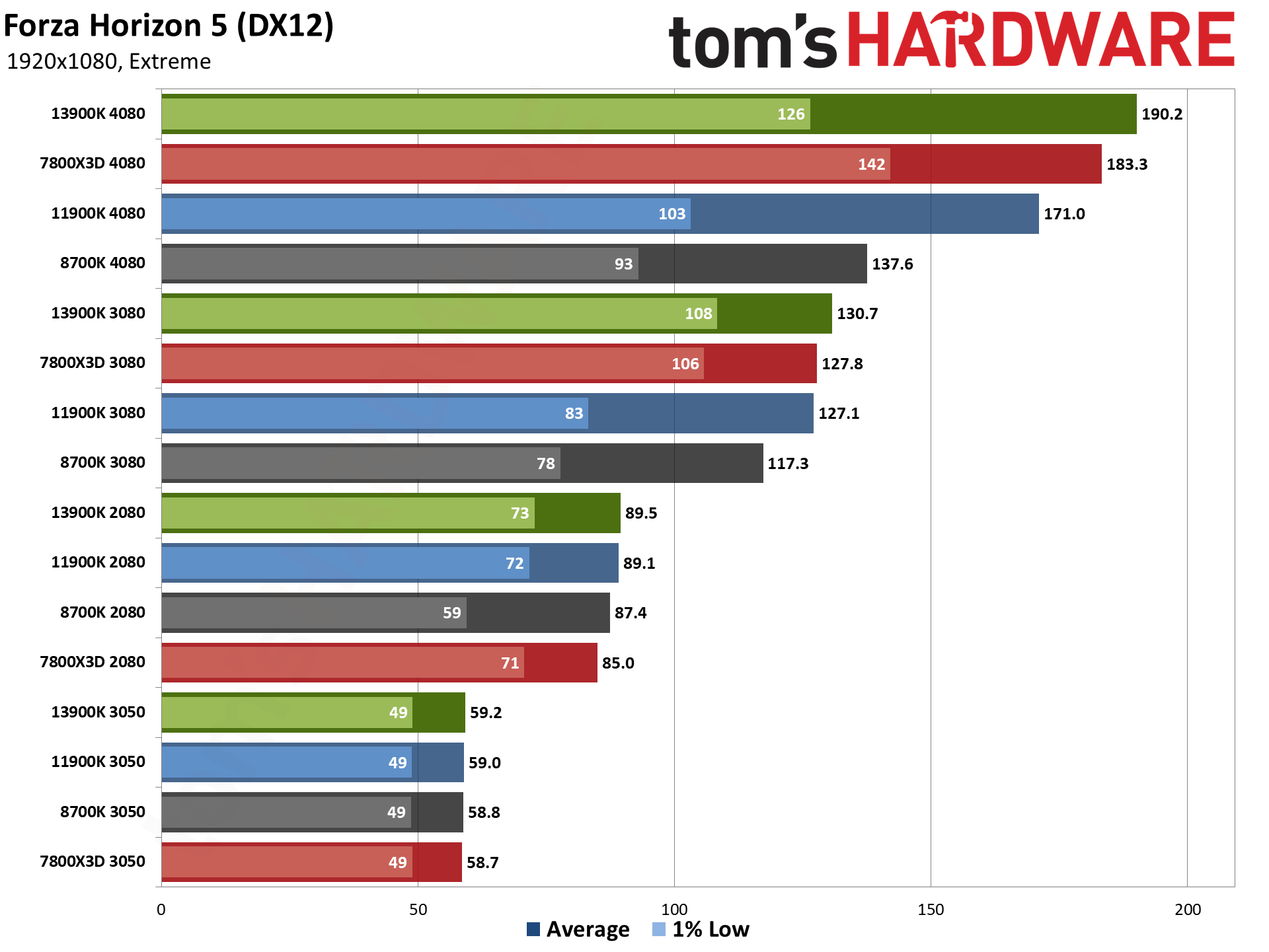

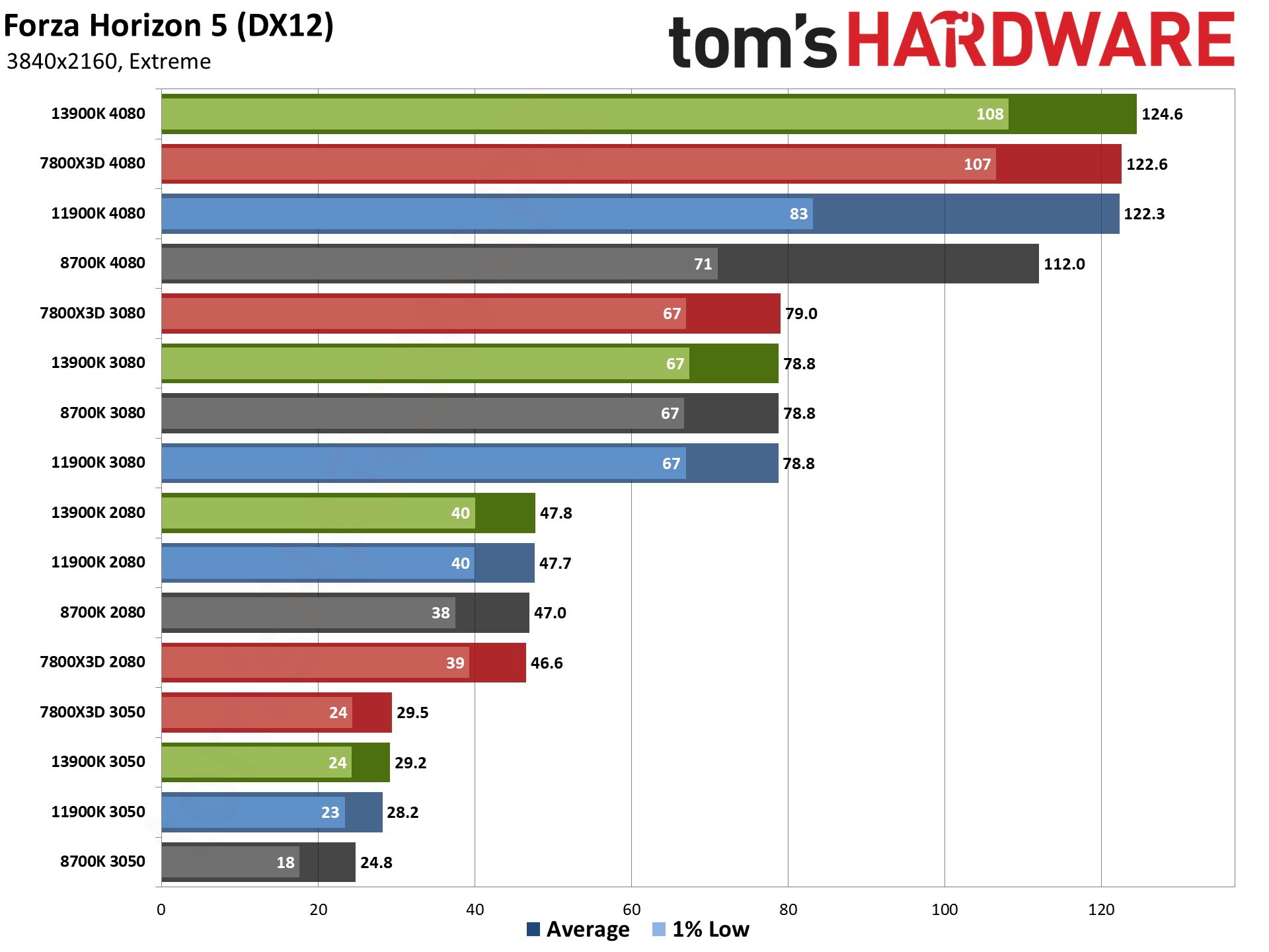

GPU vs CPU upgrades: 1080p Medium

Let's start with 1080p medium; these are the results that should be the most CPU dependent. As you'd expect, the 7800X3D with the RTX 4080 lands at the top of our chart, with the 13900K coming in 5% behind. Then there's a pretty sizable drop to the 11900K with the 4080, which basically ties the 7800X3D and 13900K with the previous generation RTX 3080. And that's where things start to get interesting.

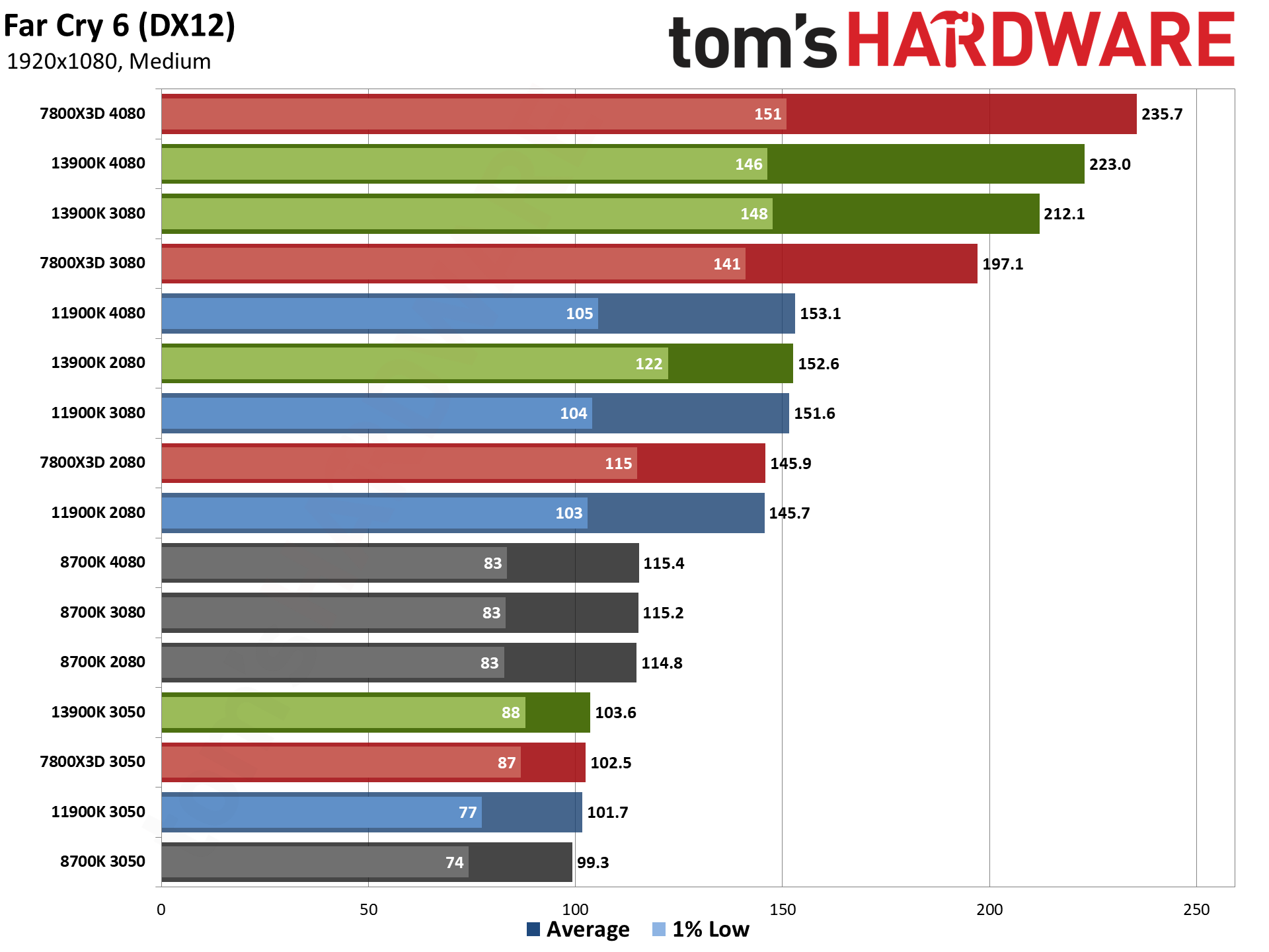

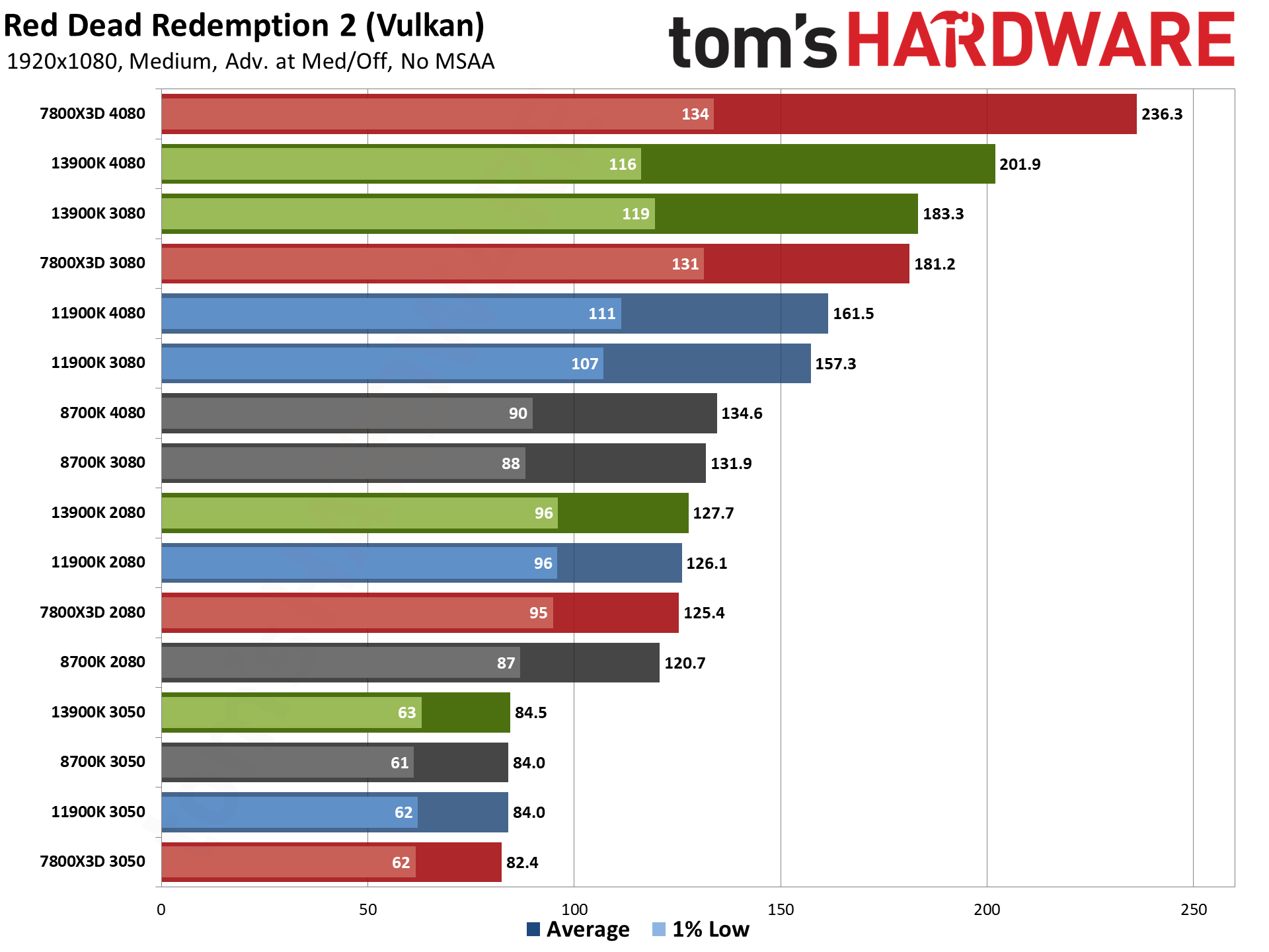

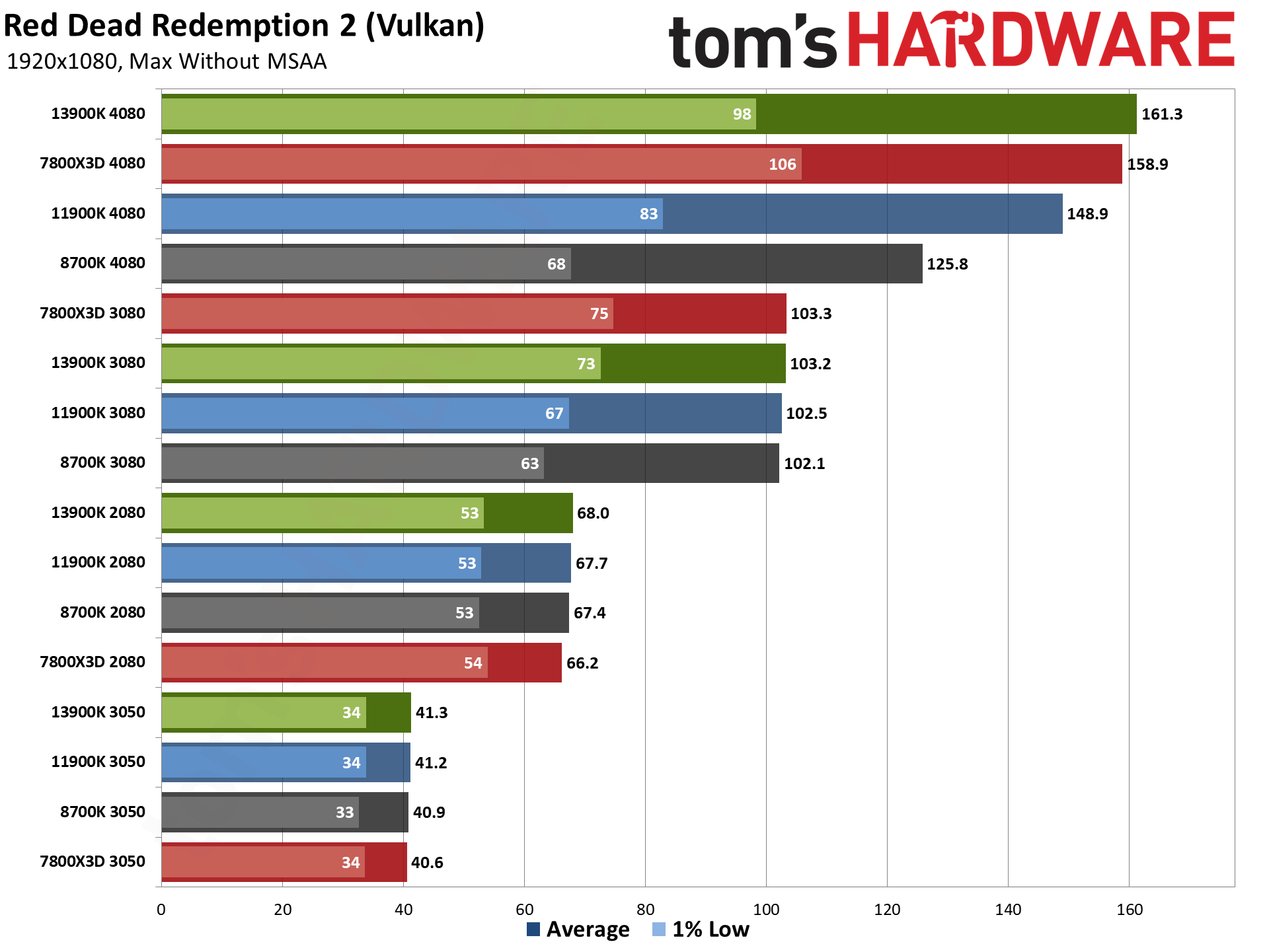

Pairing the RTX 4080 with the oldest CPU of our test hardware ends up yielding worse performance than a 3080 with any of the newer CPUs. In fact, the 4080 with the 8700K only beats the 3080 with the same CPU by 10%. Using those same GPUs with the 7800X3D, you'd see a 36% increase in gaming performance — versus a 28% improvement on the 13900K, and 17% with the 11900K.

This shows one of the most important lessons when it comes to contemplating what to upgrade. If you have an older CPU, moving to the fastest (or second fastest) graphics card may not give you a significant performance boost, particularly at lower resolutions and settings. You want to keep at least some level of balance between the two core components of a gaming PC.

On the other hand, a new CPU will only do so much if you have a slower GPU. The RTX 3050 8GB card ranks at the bottom of the charts, regardless of which CPU you use. Going from a seven-year-old 8700K to a newer CPU nets a 7% improvement in performance at best. All four systems with the RTX 2080 are the next step up in the charts, indicating they're generally not hitting major CPU limitations yet. It's only with the 3080 and 4080 that the CPU begins to be a bigger limiting factor.
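One rough way to reason about this balance is a toy model in which whichever component is slower sets the framerate. The sketch below is a deliberate simplification (real games rarely hit a clean CPU or GPU ceiling), and every number in it is a hypothetical placeholder rather than a measured result.

```python
def delivered_fps(cpu_limit_fps, gpu_limit_fps):
    """Toy model: the slower of the two components sets the framerate."""
    return min(cpu_limit_fps, gpu_limit_fps)


def uplift_pct(before, after):
    """Percentage gain when moving from `before` fps to `after` fps."""
    return (after / before - 1.0) * 100.0


# Hypothetical per-component ceilings in fps, chosen only to show the shape.
old_cpu, new_cpu = 110.0, 200.0
mid_gpu, fast_gpu = 100.0, 150.0

gpu_upgrade_on_old_cpu = uplift_pct(
    delivered_fps(old_cpu, mid_gpu), delivered_fps(old_cpu, fast_gpu)
)
gpu_upgrade_on_new_cpu = uplift_pct(
    delivered_fps(new_cpu, mid_gpu), delivered_fps(new_cpu, fast_gpu)
)
cpu_upgrade_on_mid_gpu = uplift_pct(
    delivered_fps(old_cpu, mid_gpu), delivered_fps(new_cpu, mid_gpu)
)

print(round(gpu_upgrade_on_old_cpu), round(gpu_upgrade_on_new_cpu),
      round(cpu_upgrade_on_mid_gpu))
```

In this made-up scenario the faster GPU is worth about 10% on the old CPU but 50% on the new one, while the new CPU alone adds nothing with the midrange GPU. The pattern, not the specific values, is what mirrors the charts.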

And yes, the "fastest CPU for gaming" actually came in second place when paired with the older and slower RTX 3050. That's not the only place where the 7800X3D failed to beat the 13900K, as the 2080 and 3080 also (slightly) favored Intel's processor. There are some variables in play besides just the CPU, and perhaps some of the lack of performance comes from the lack of ReBAR (Resizable Base Address Register) with the 2080, as well as driver optimizations likely targeting newer hardware.

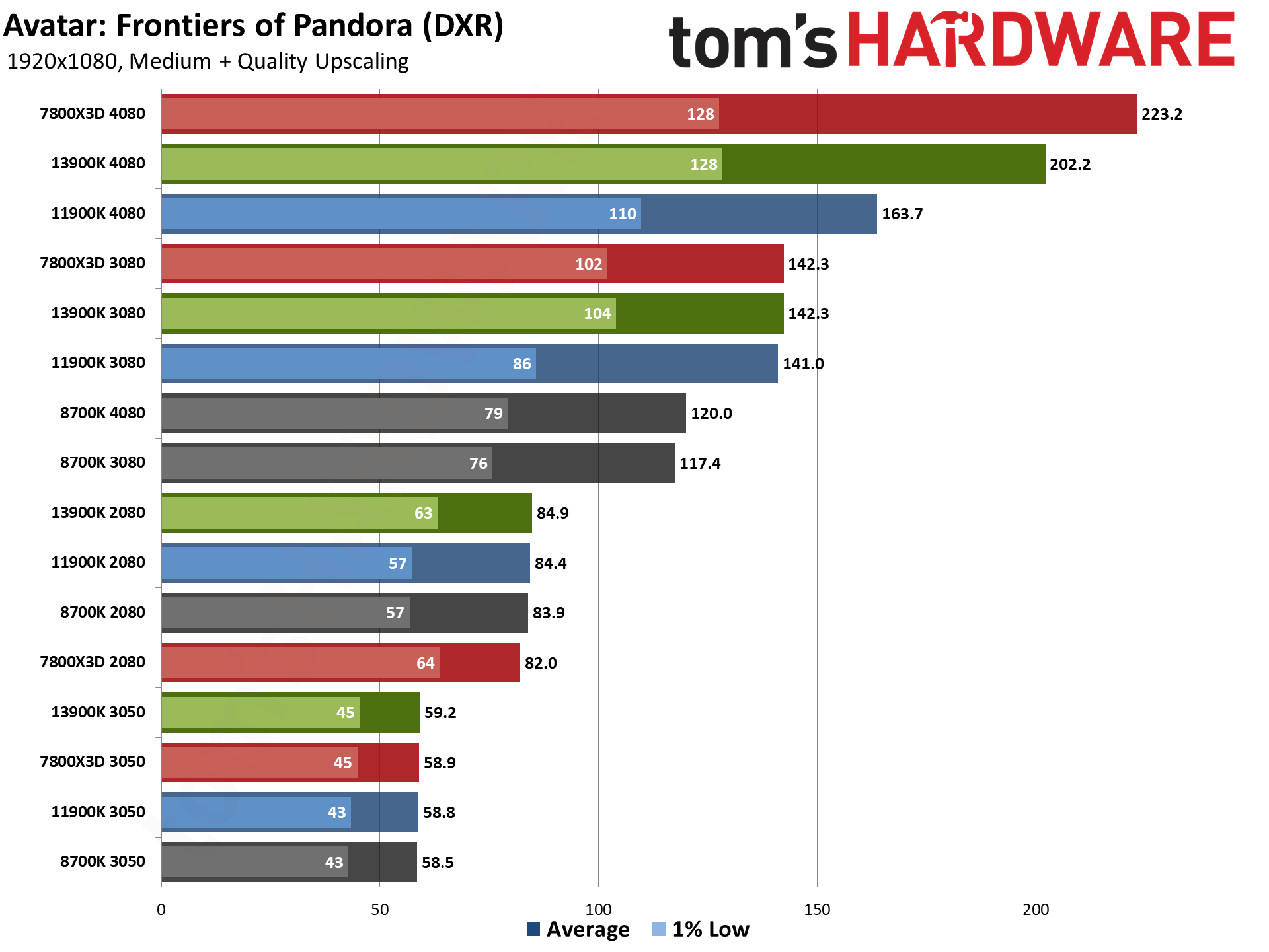

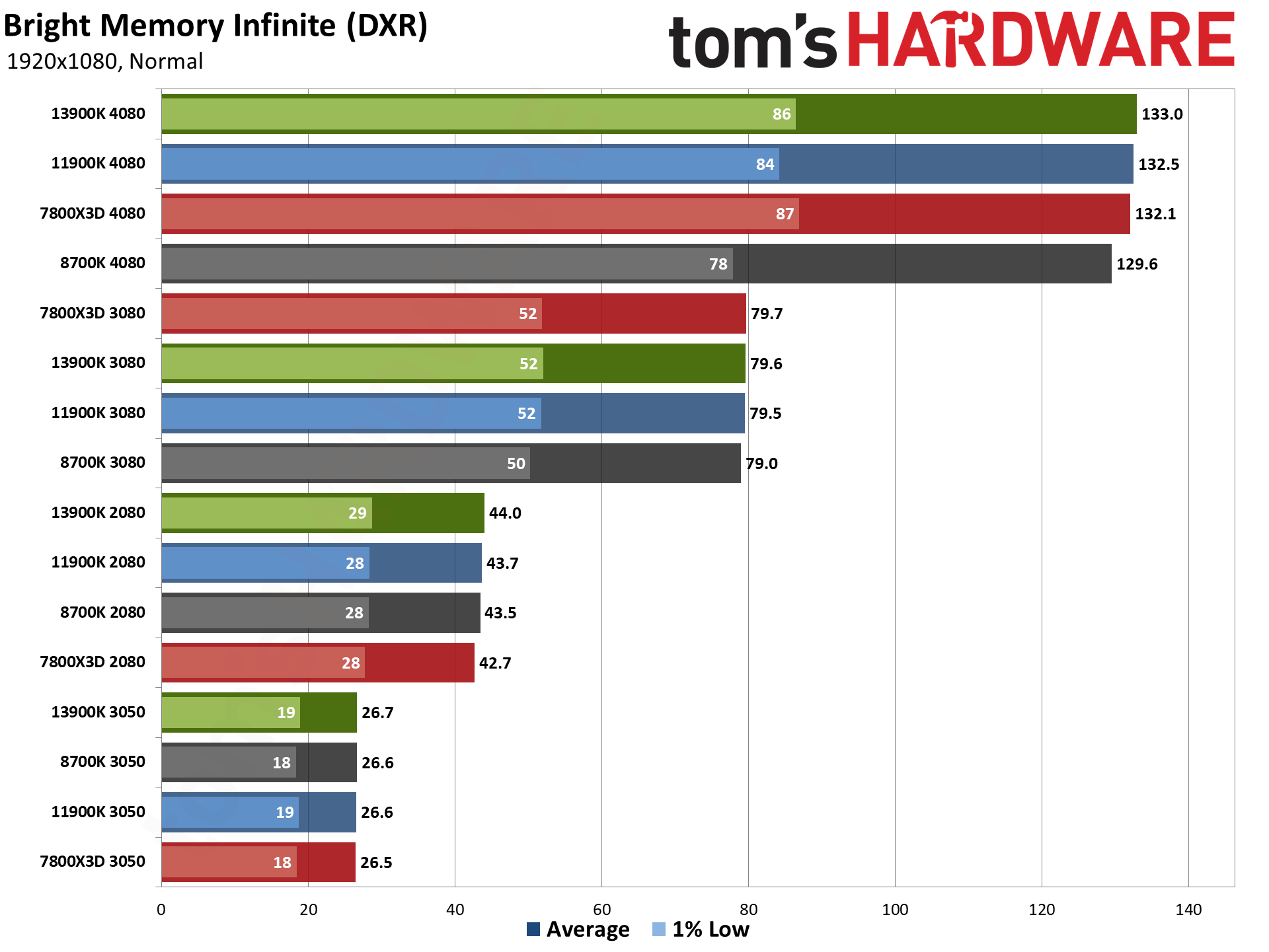

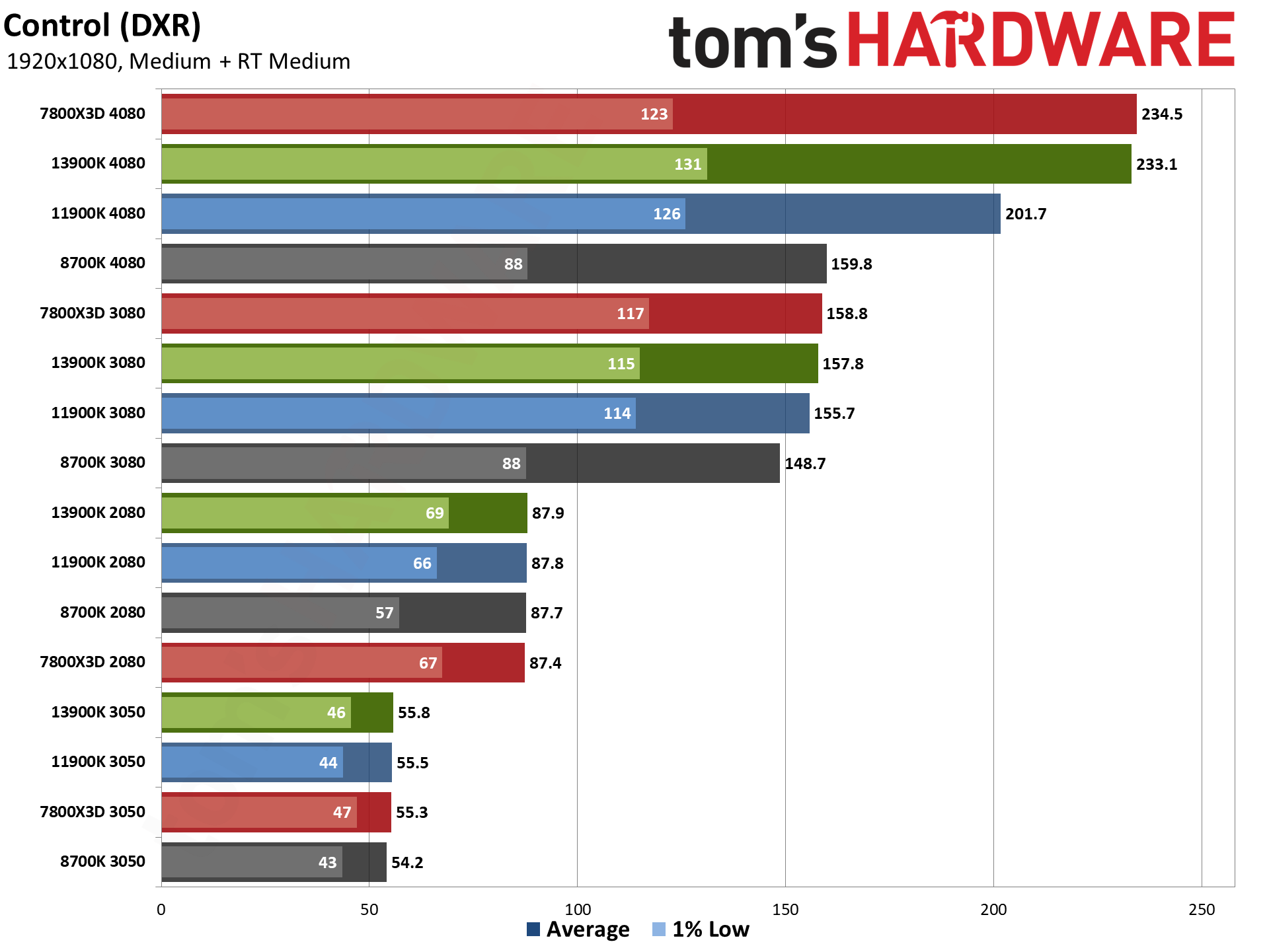

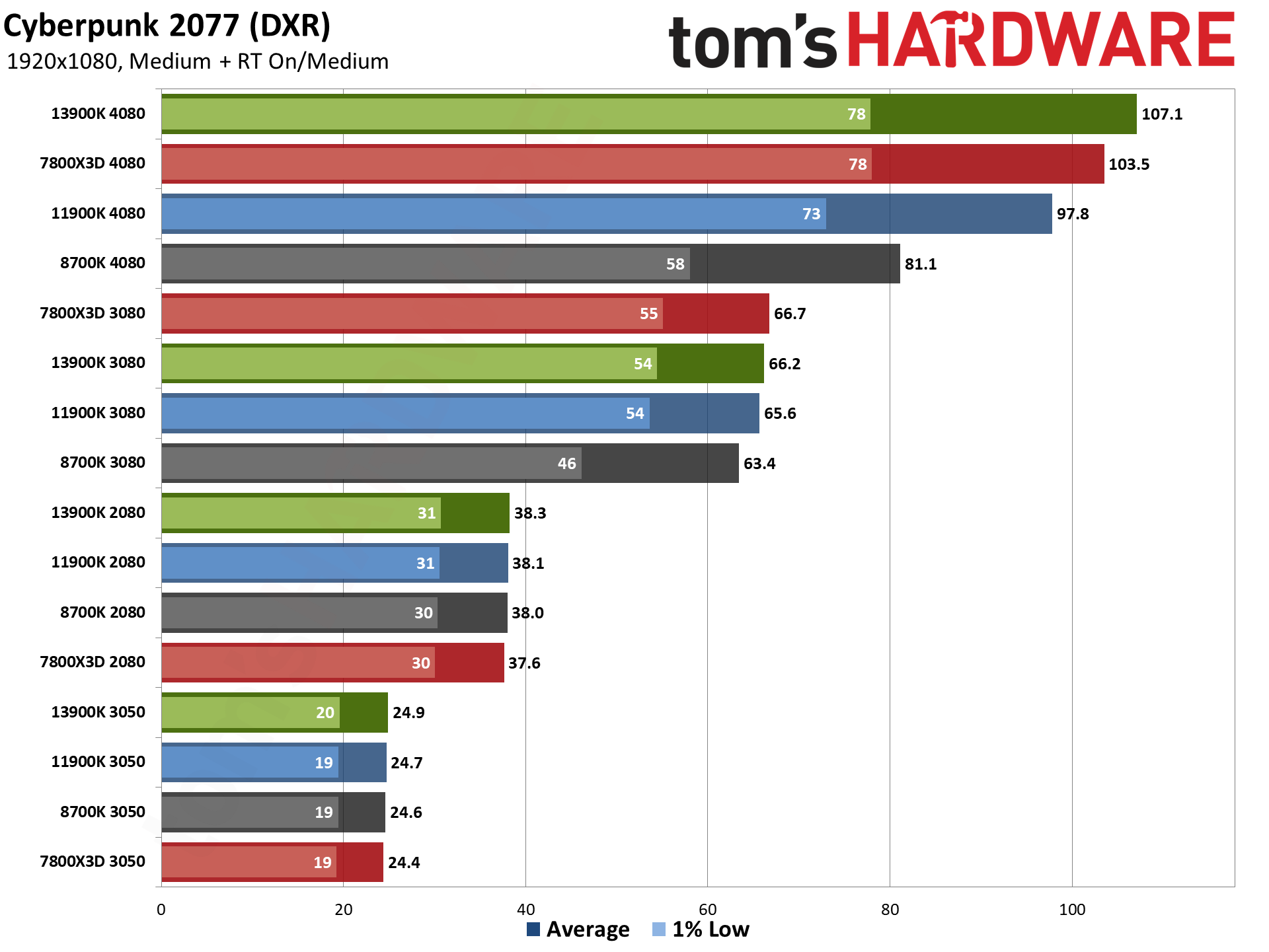

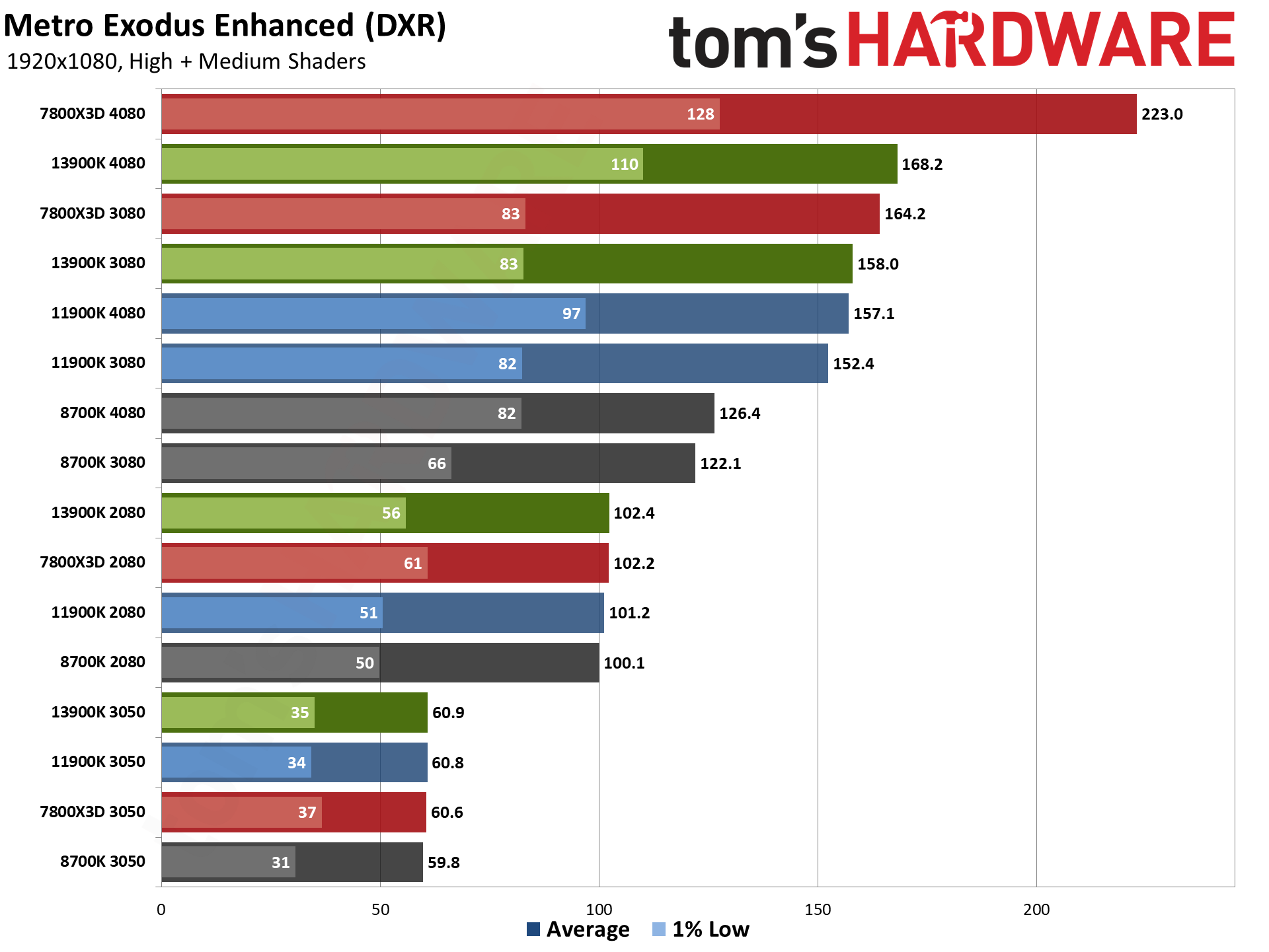

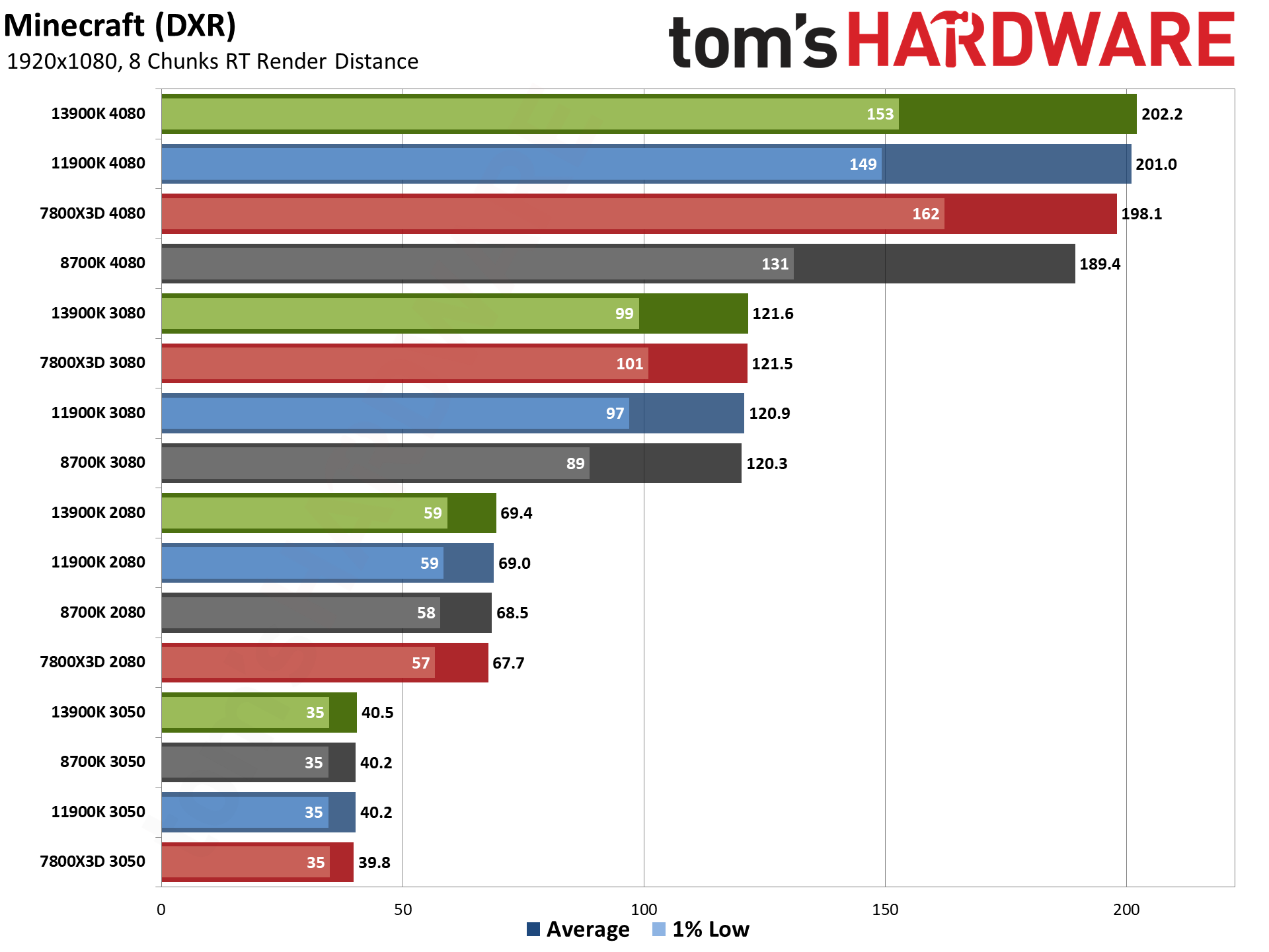

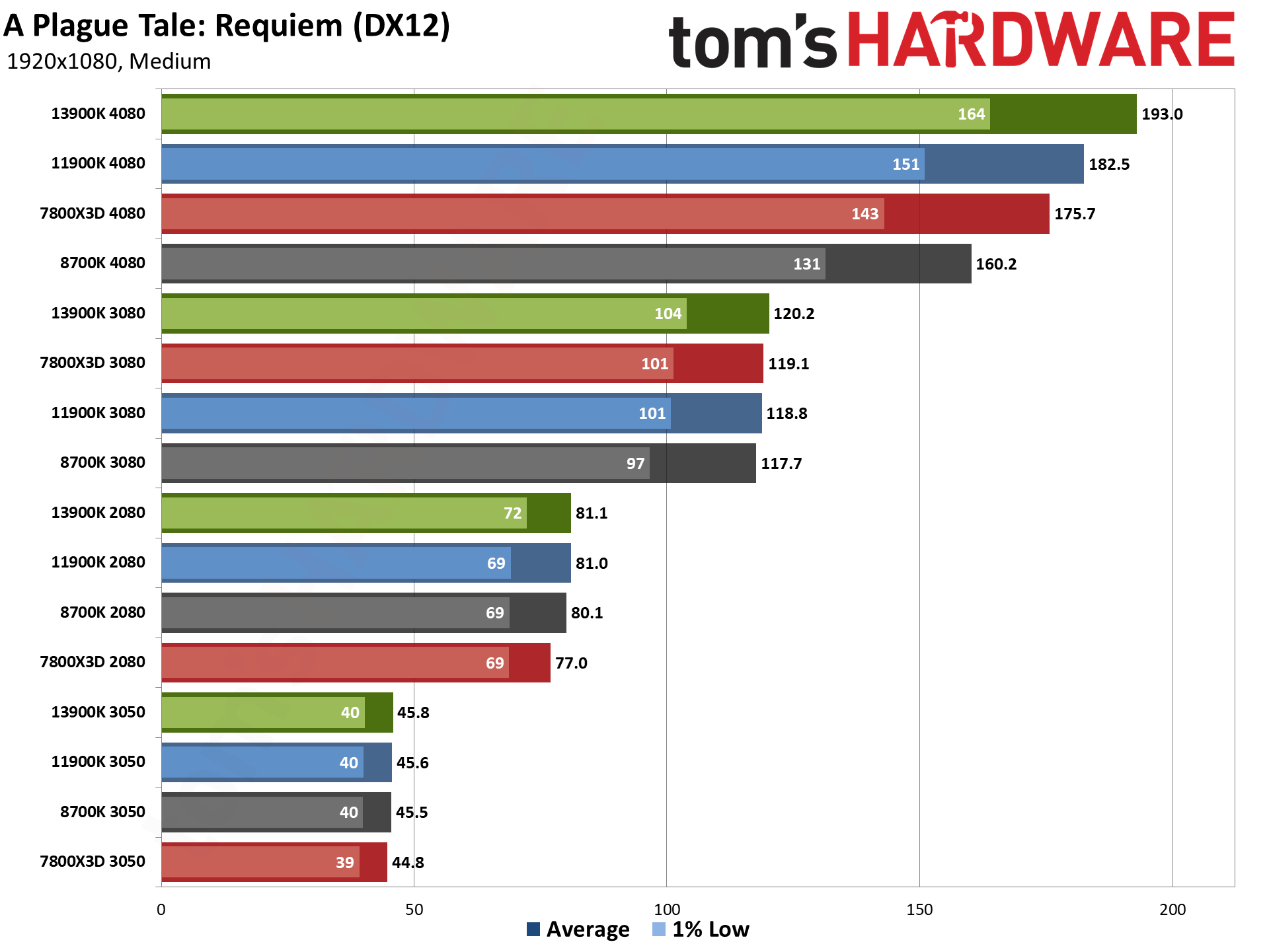

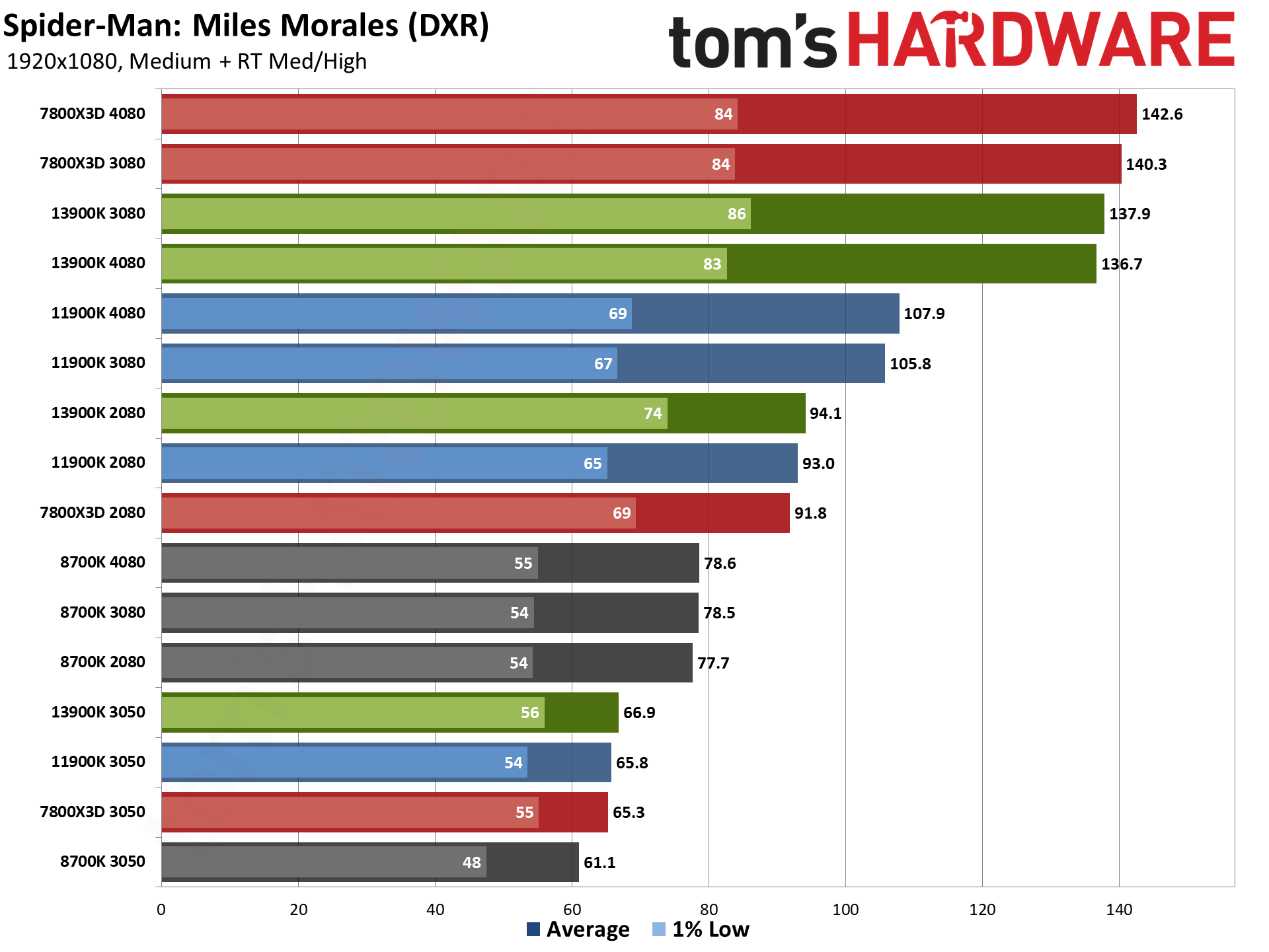

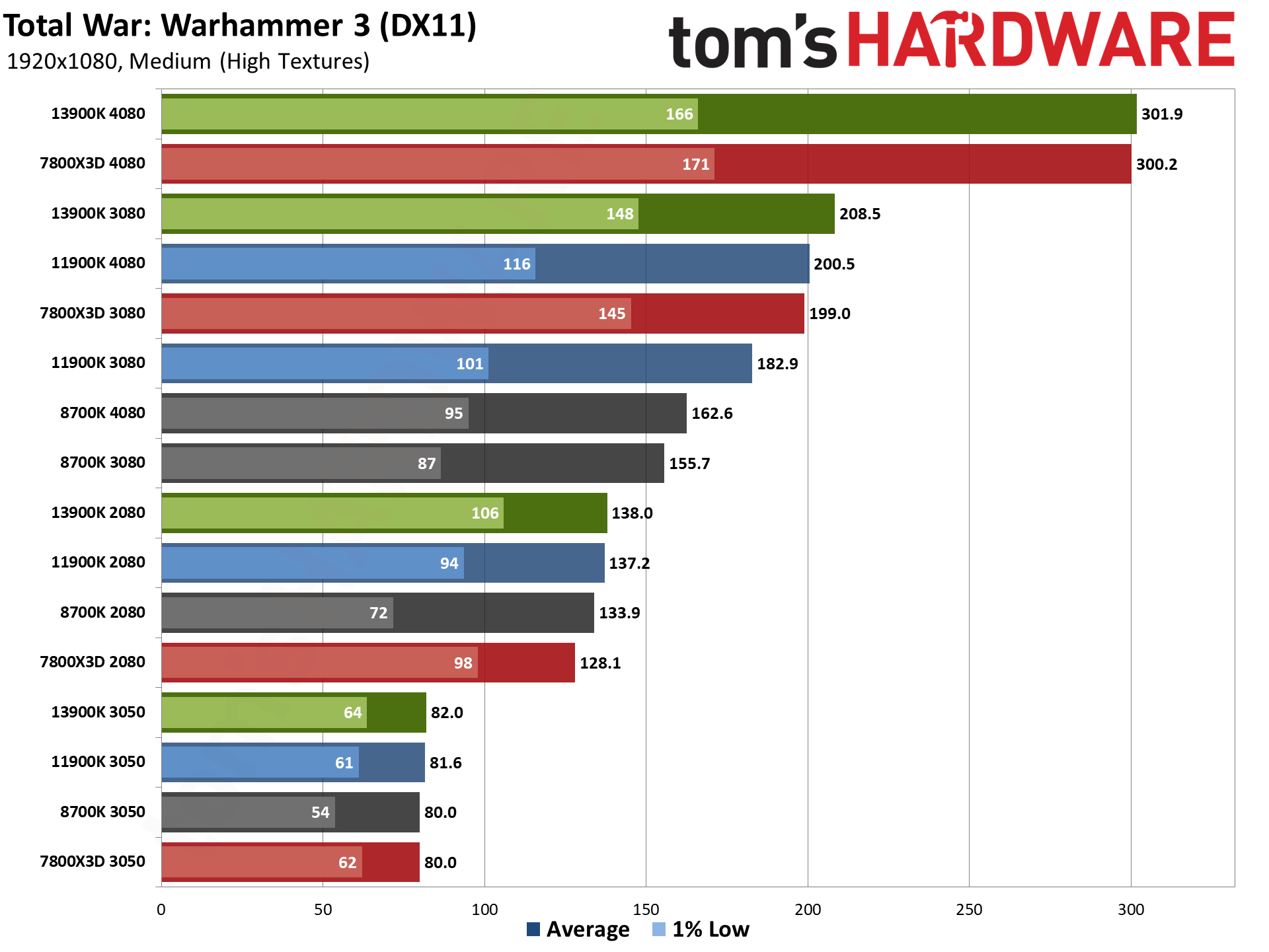

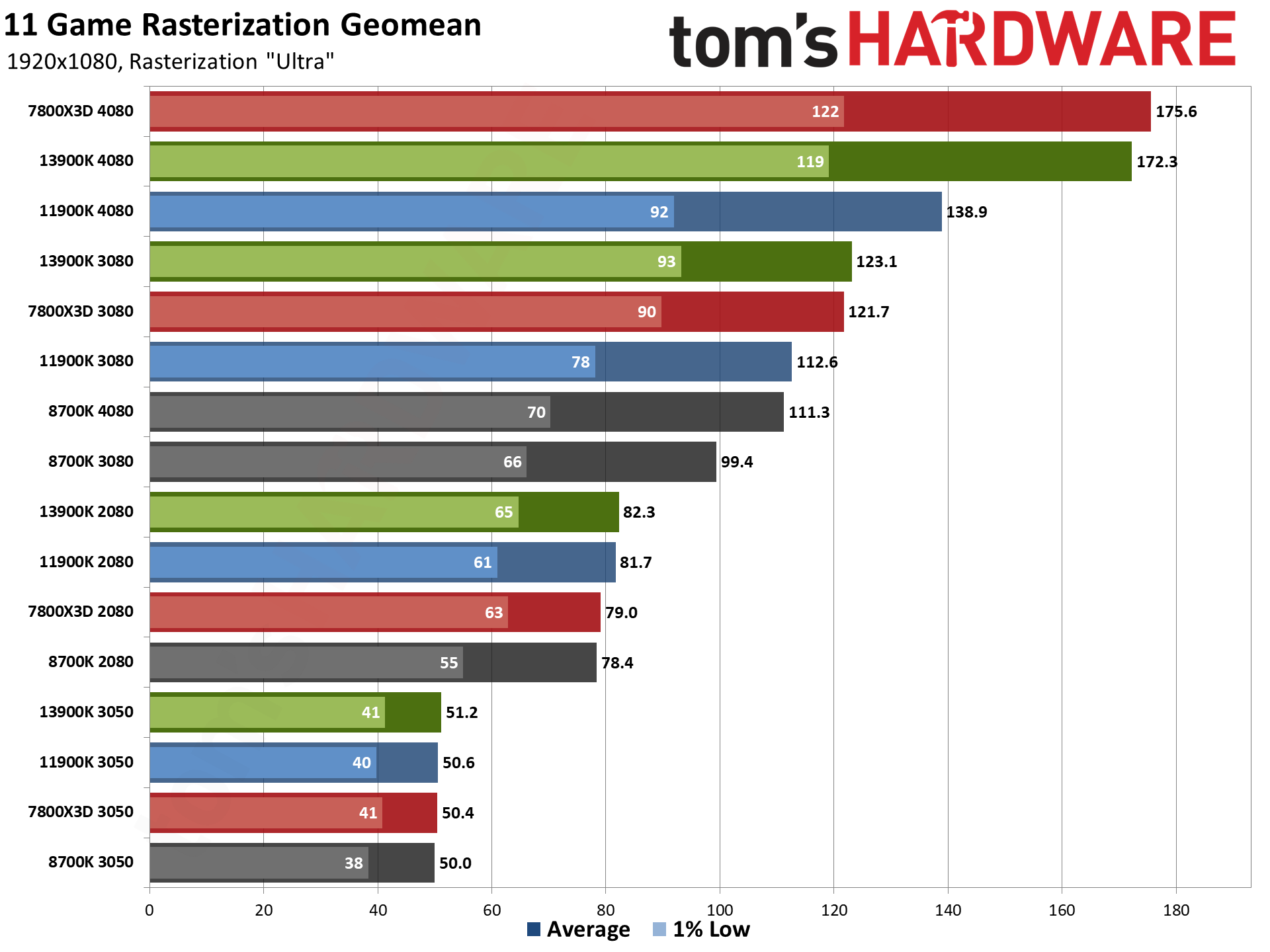

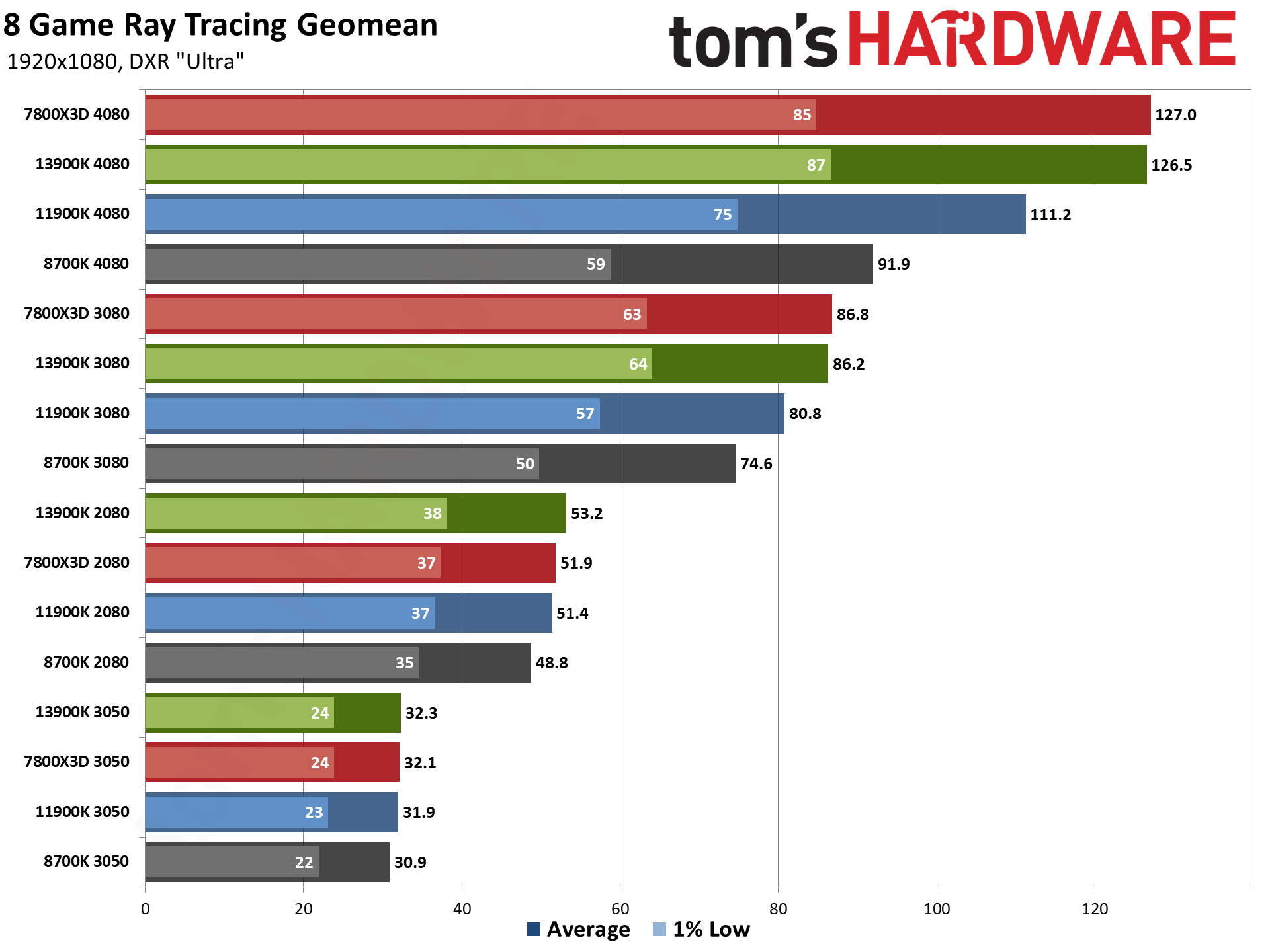

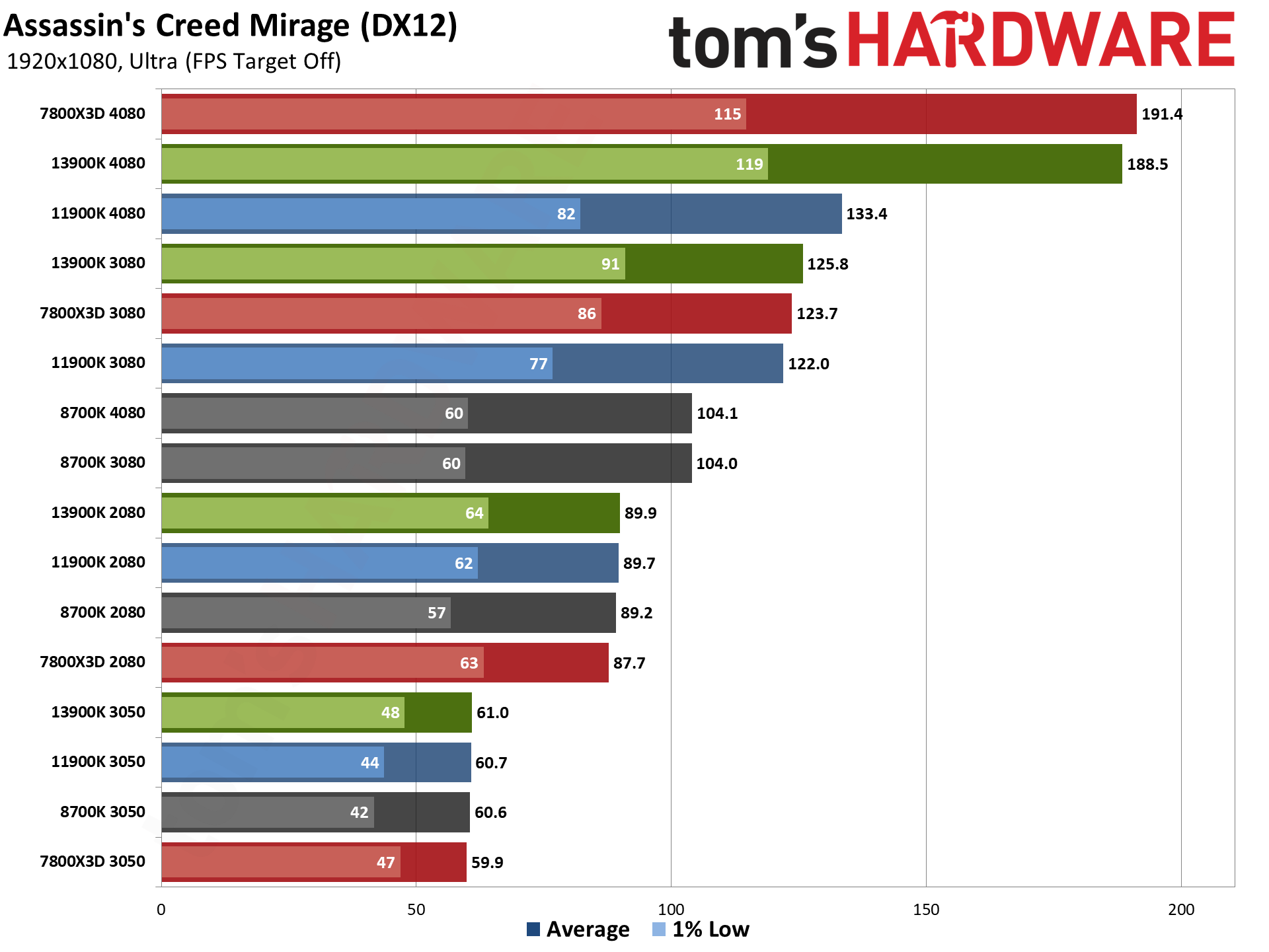

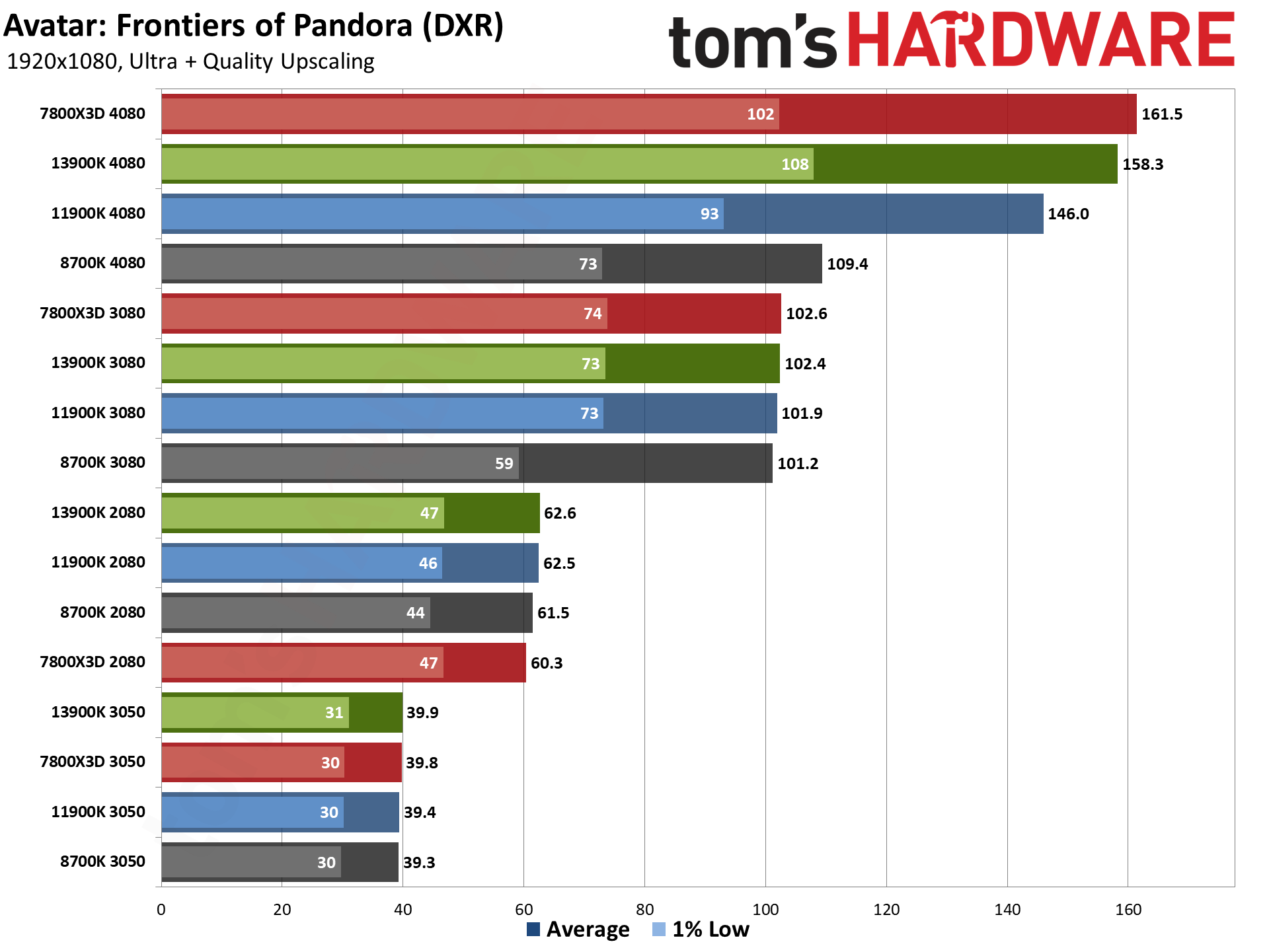

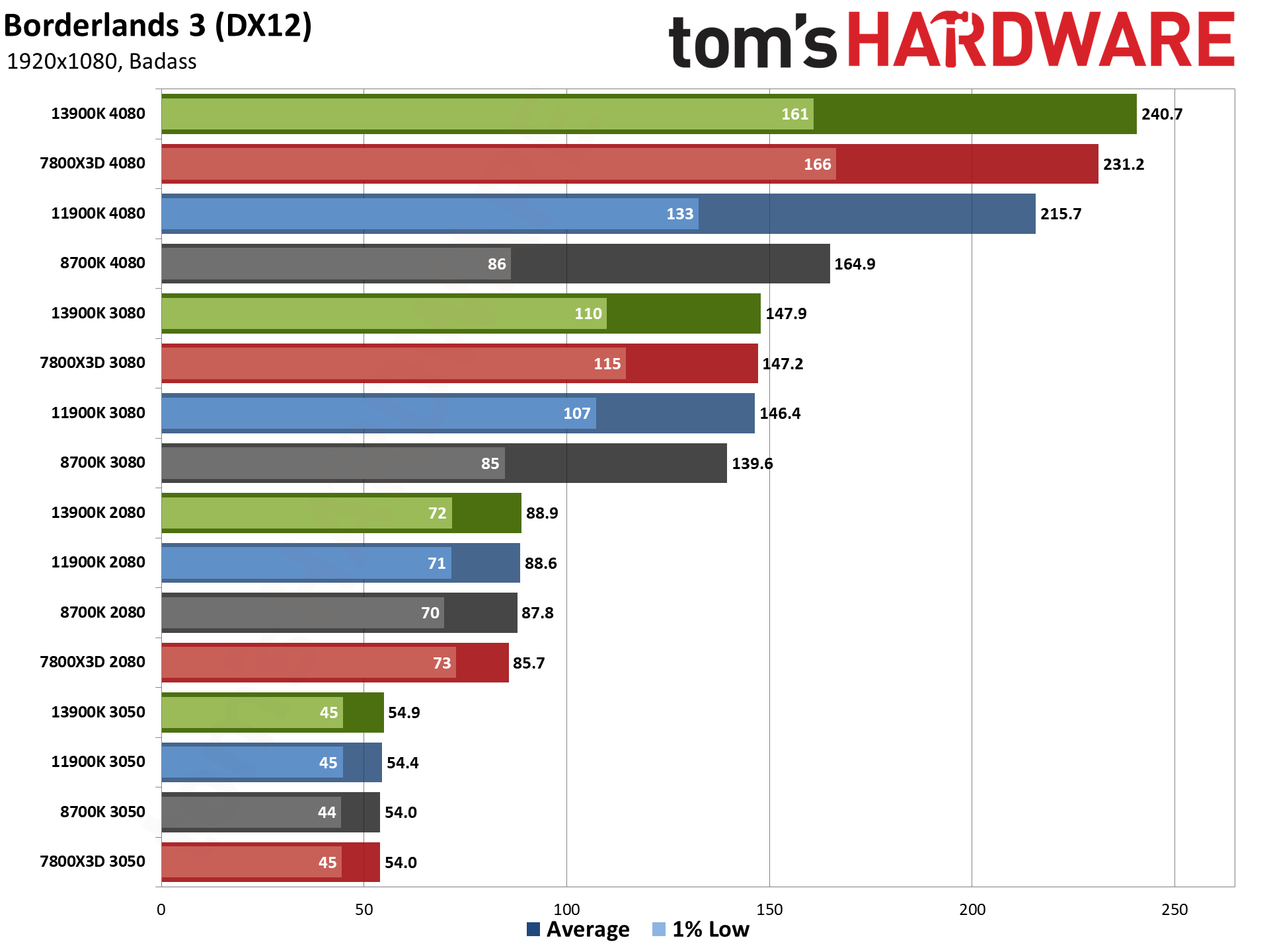

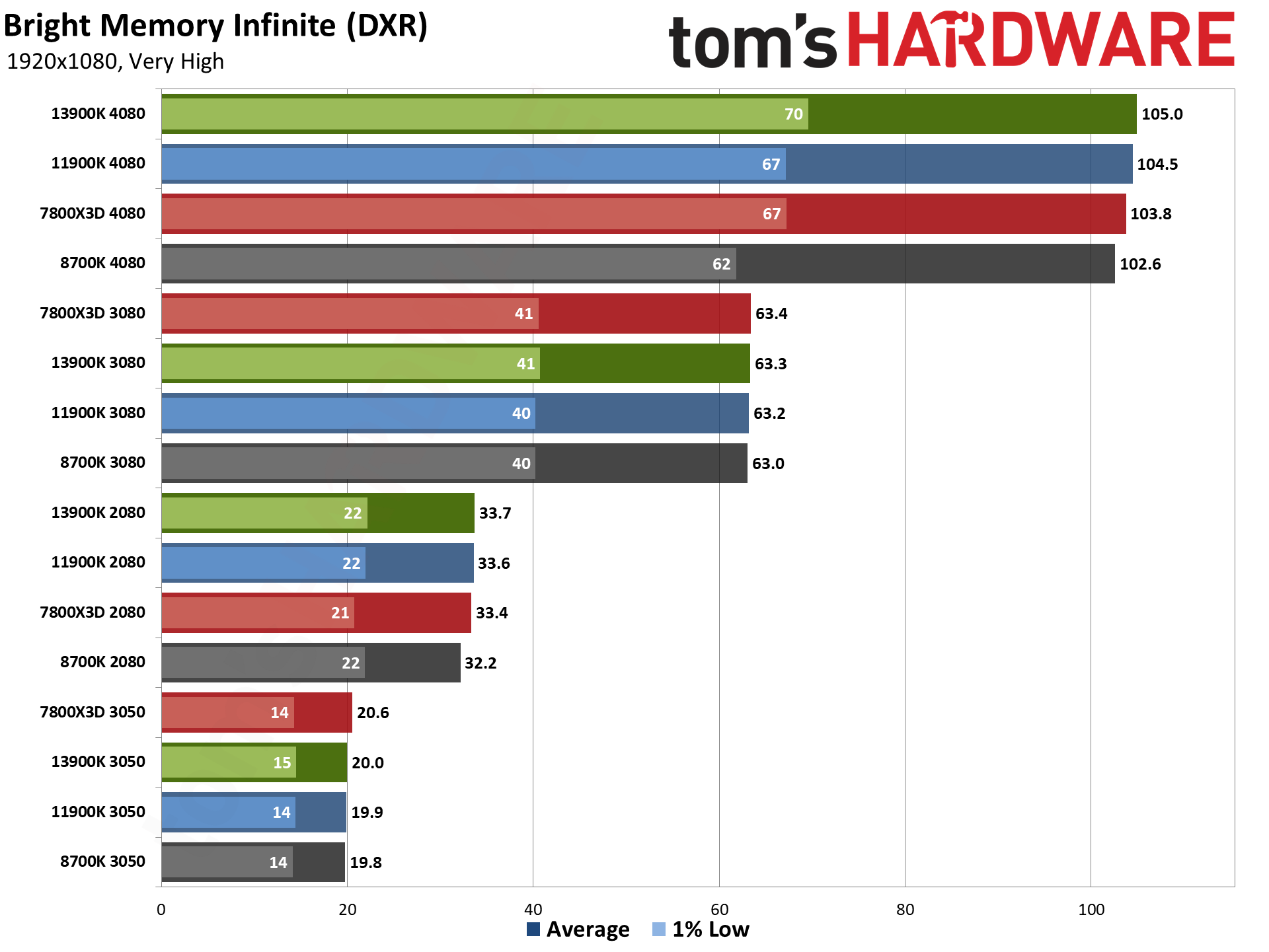

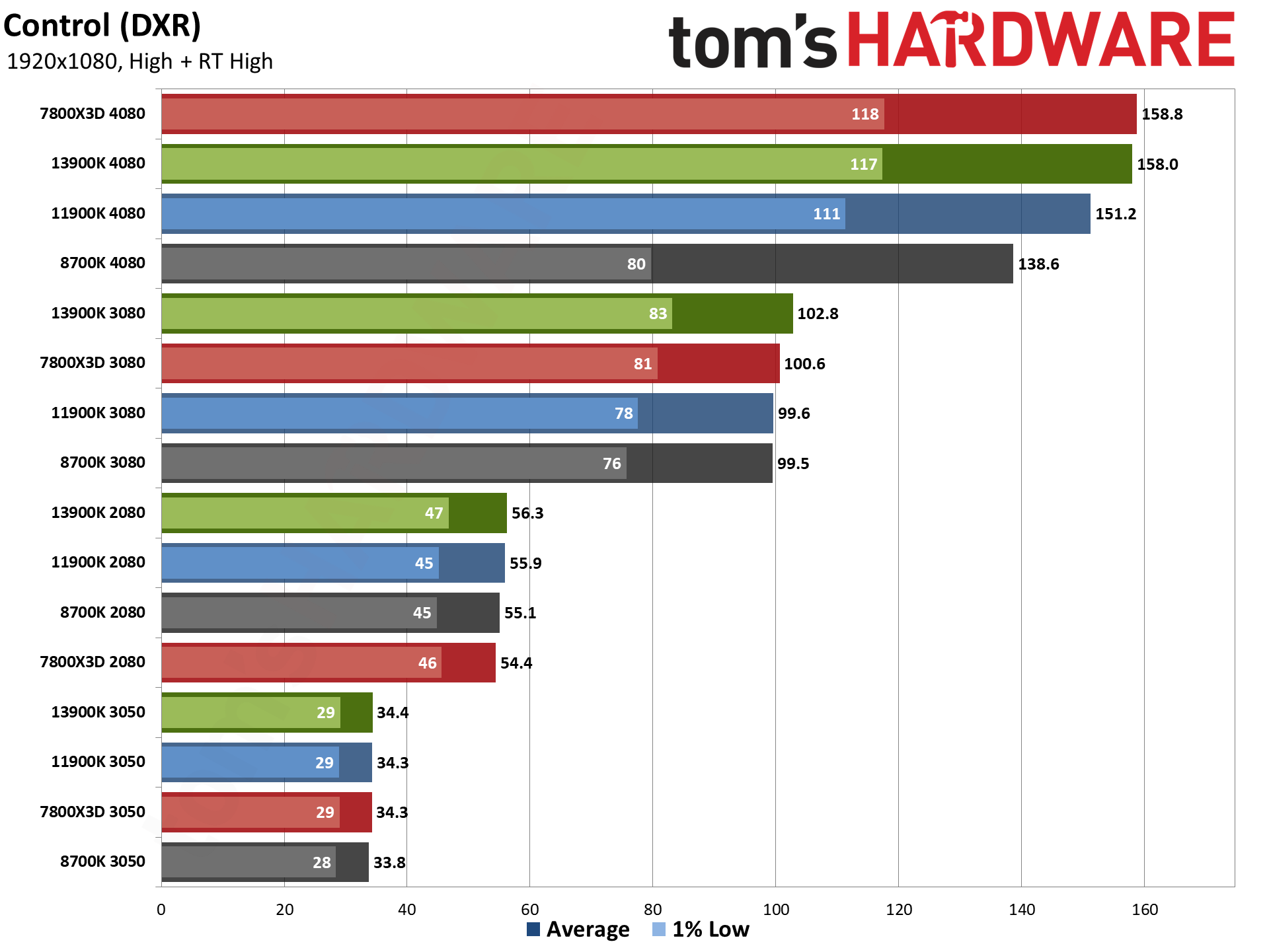

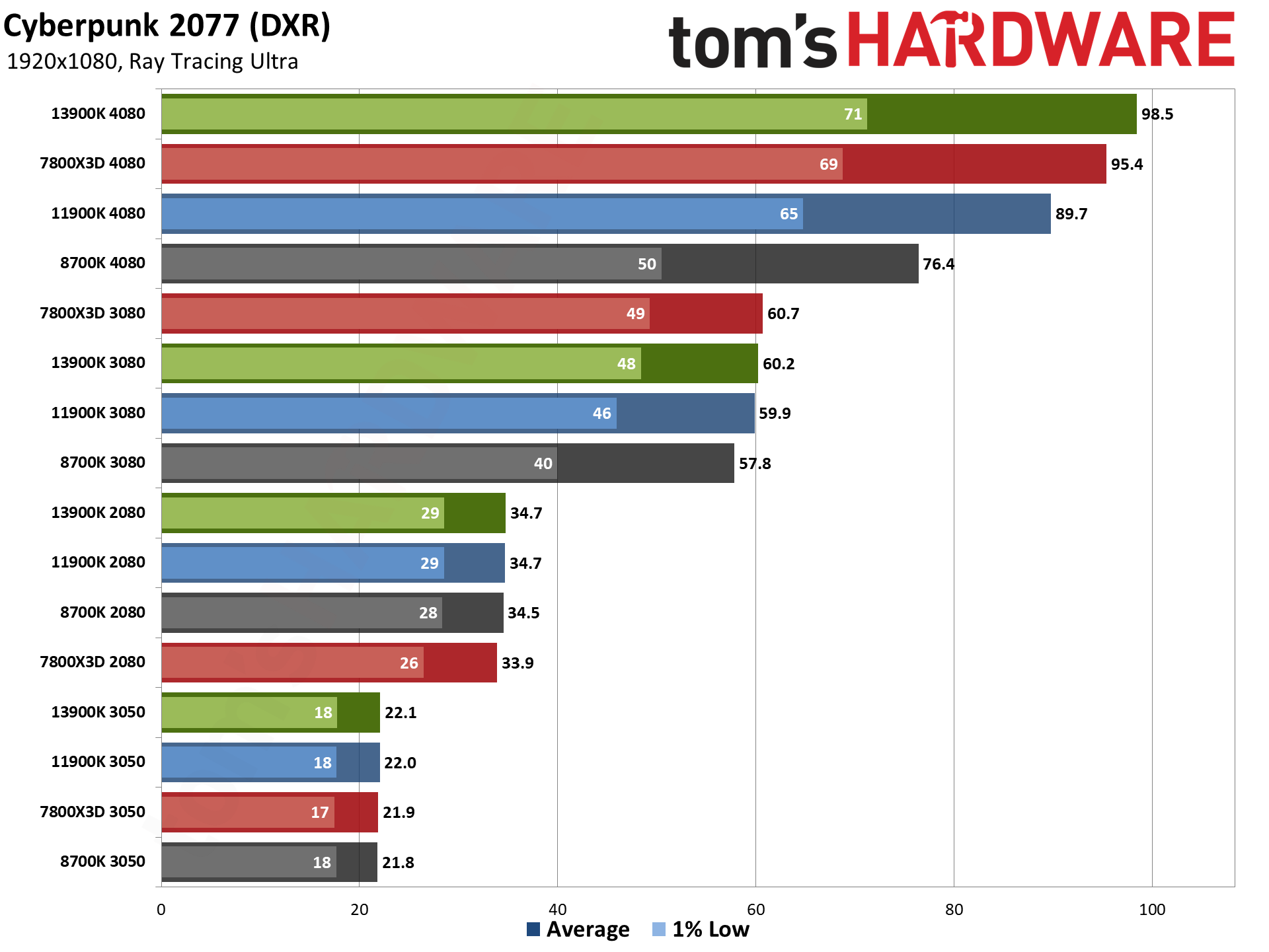

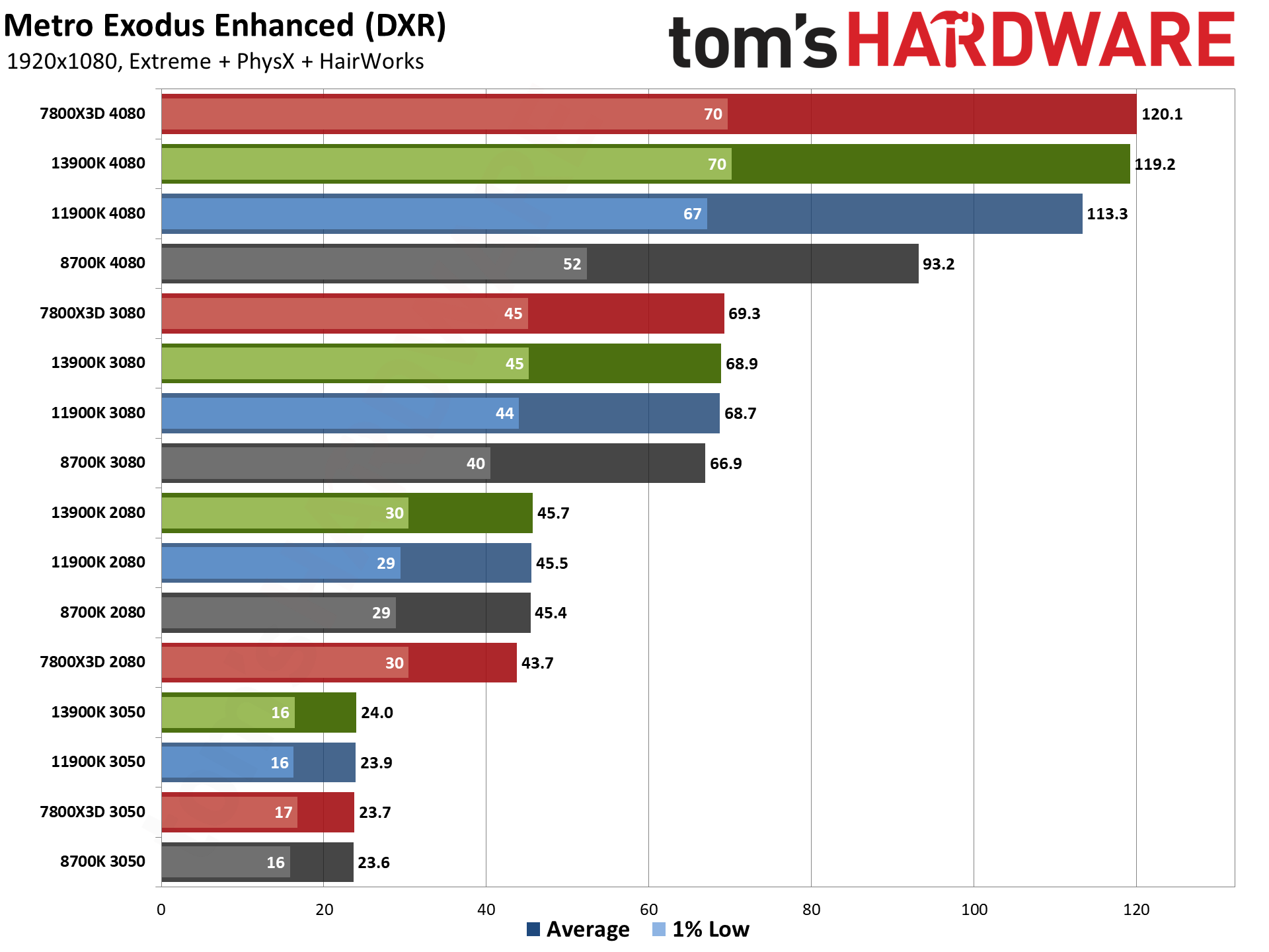

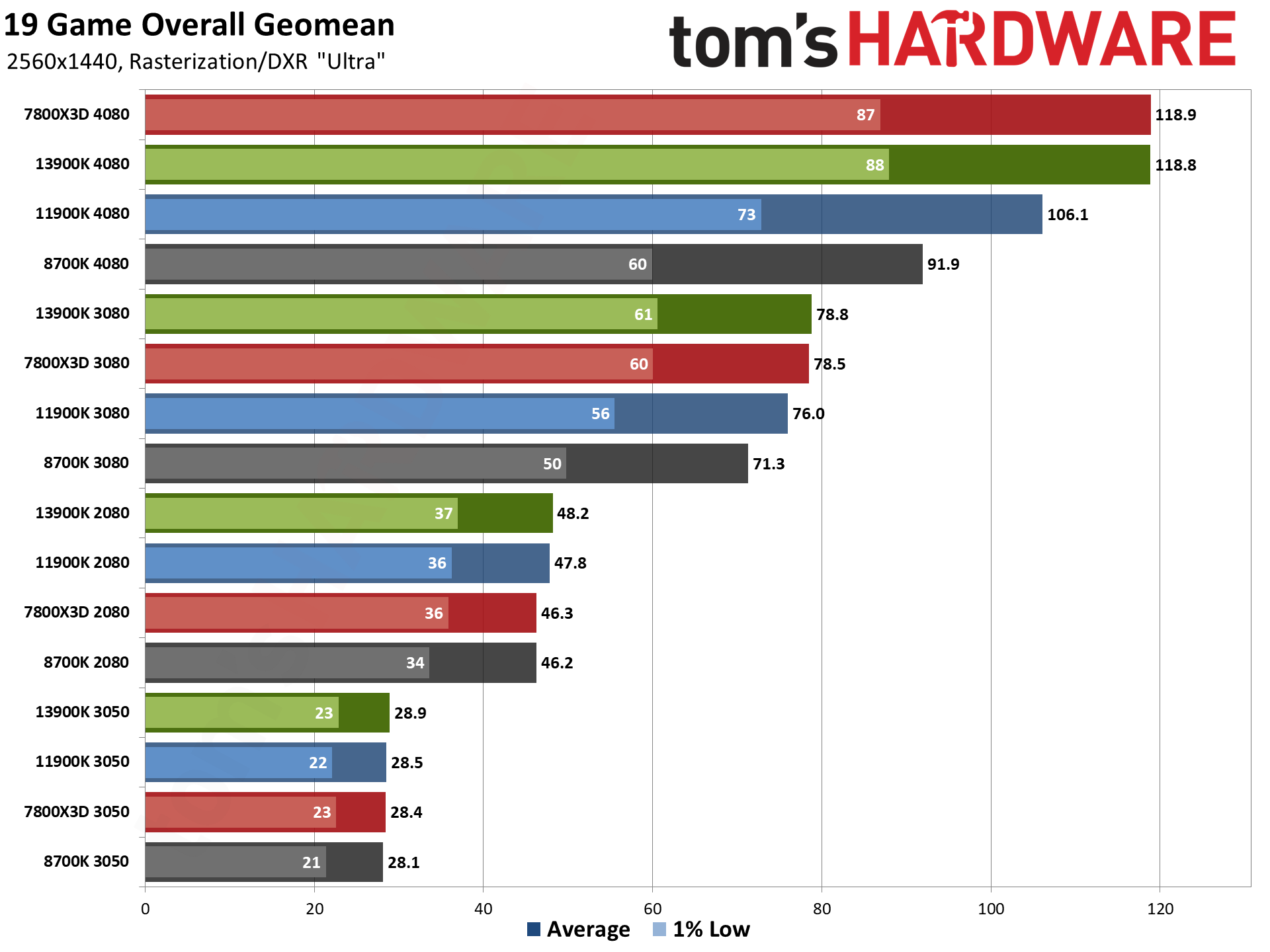

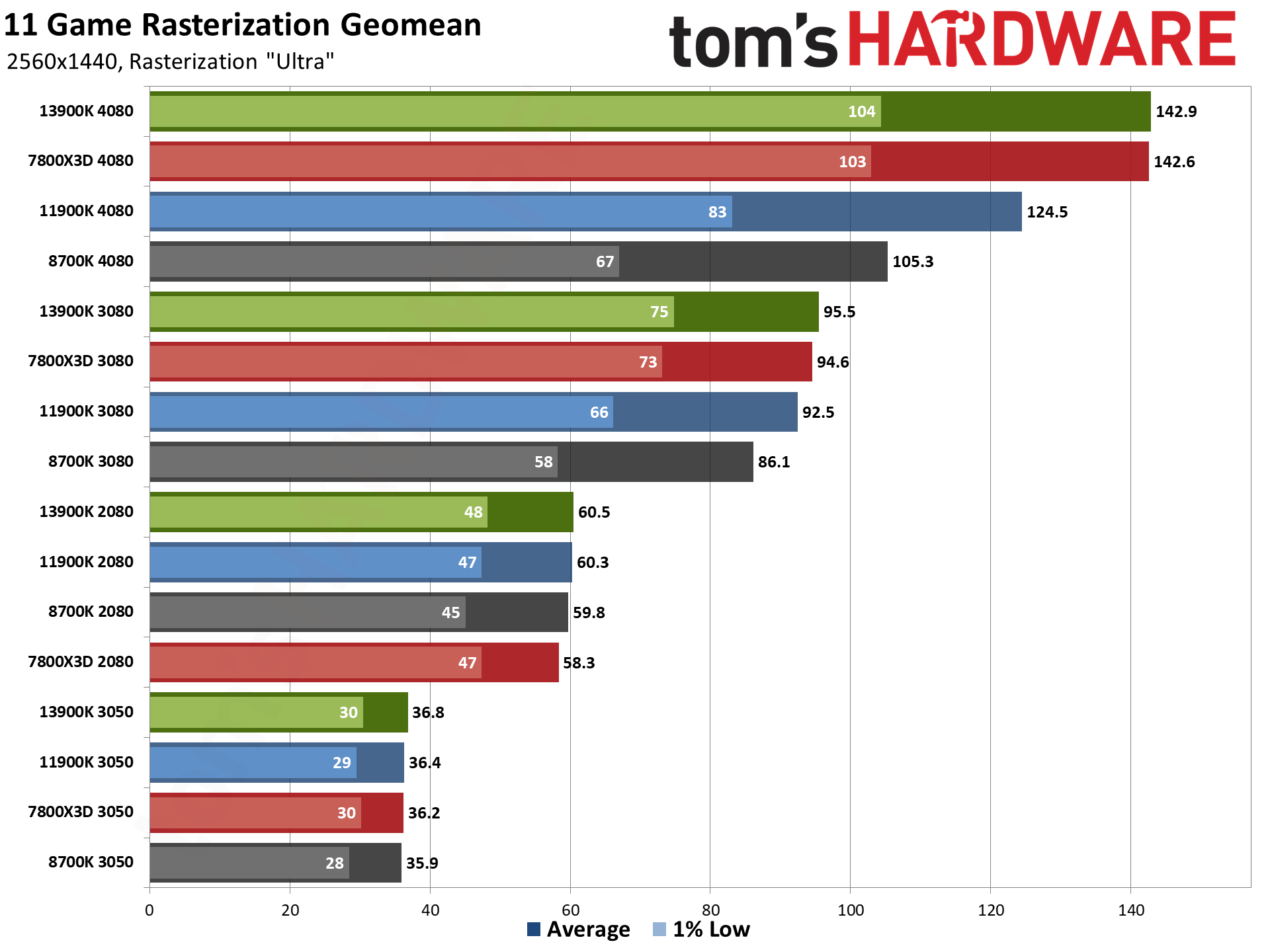

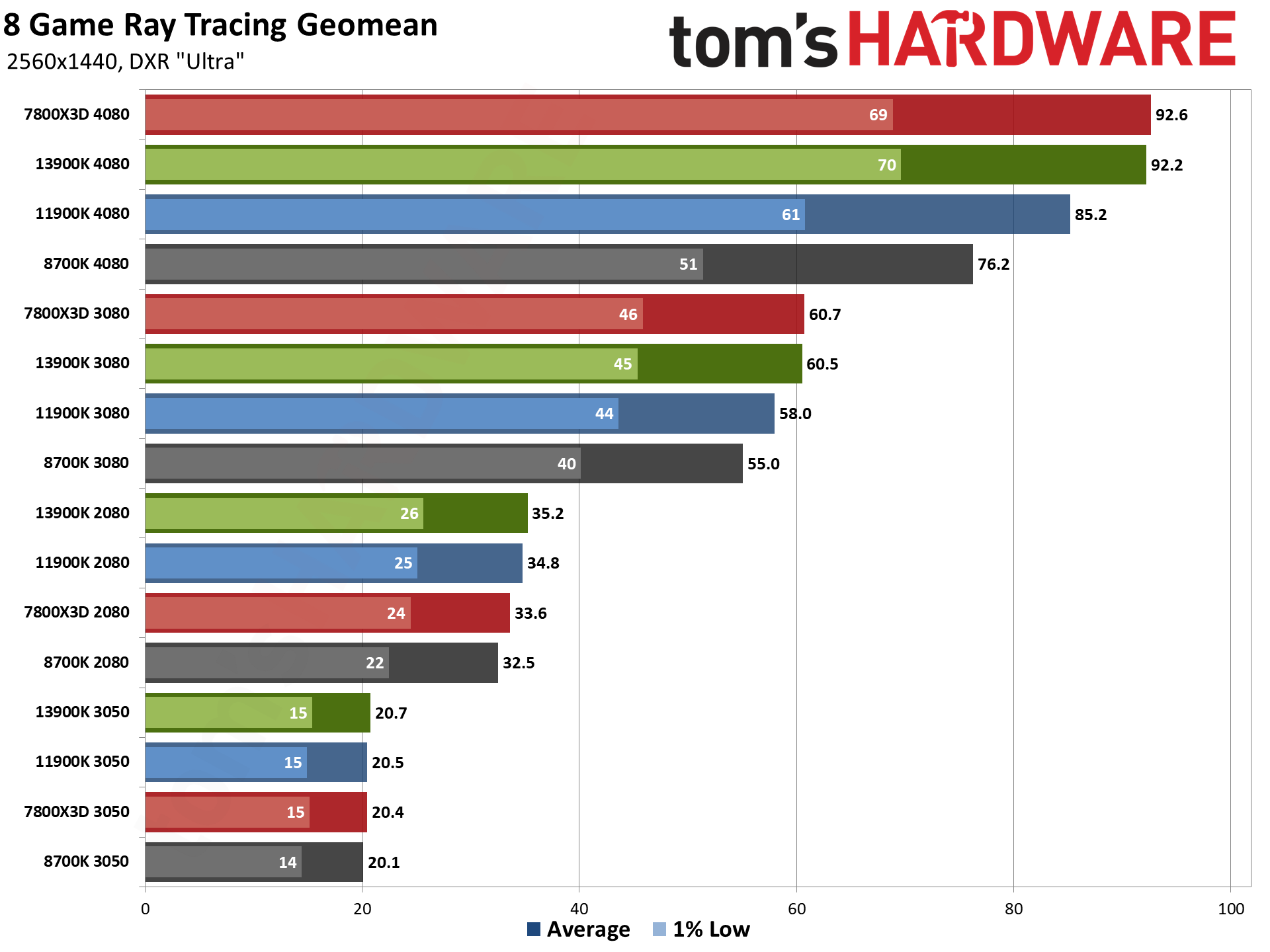

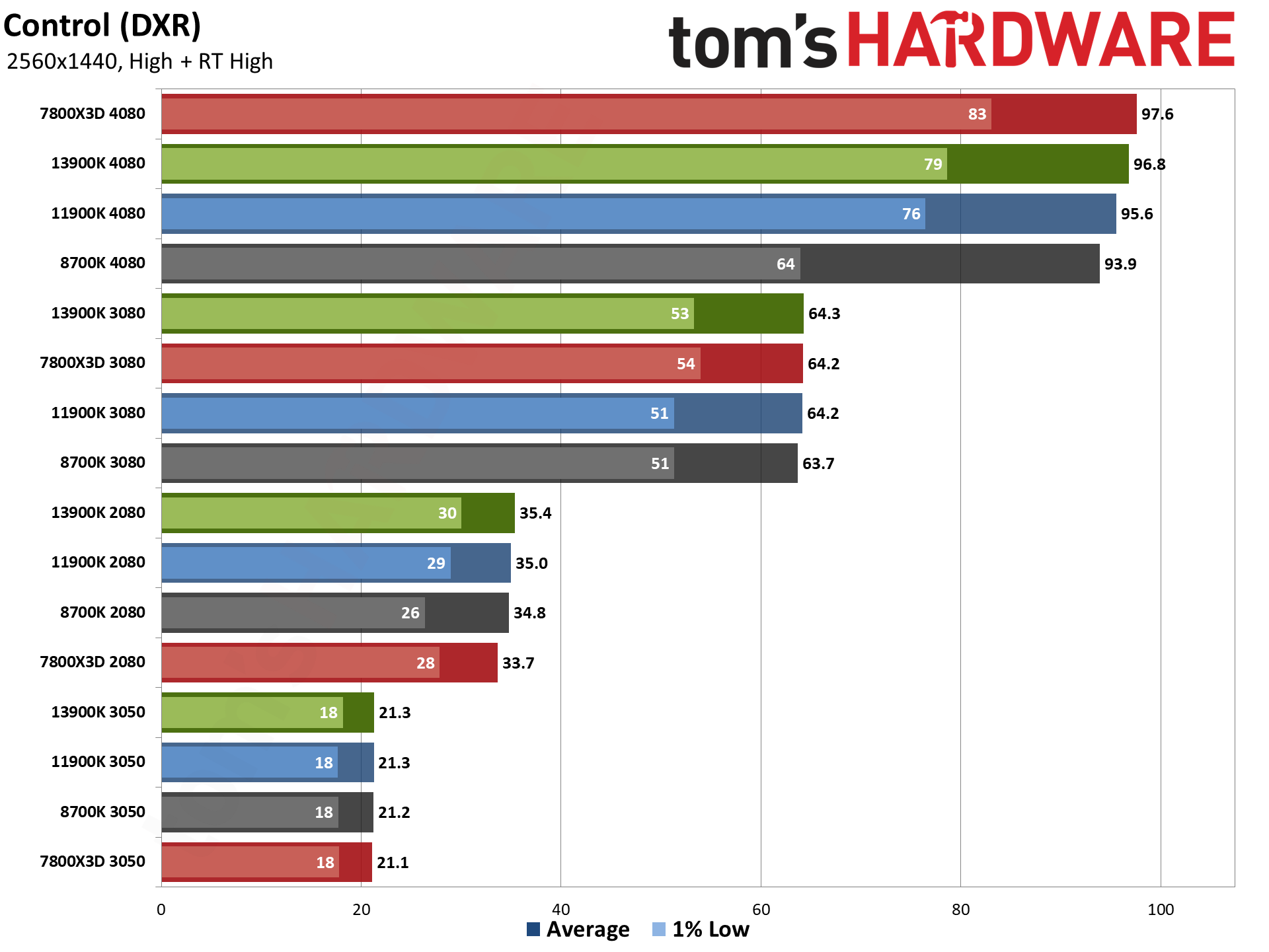

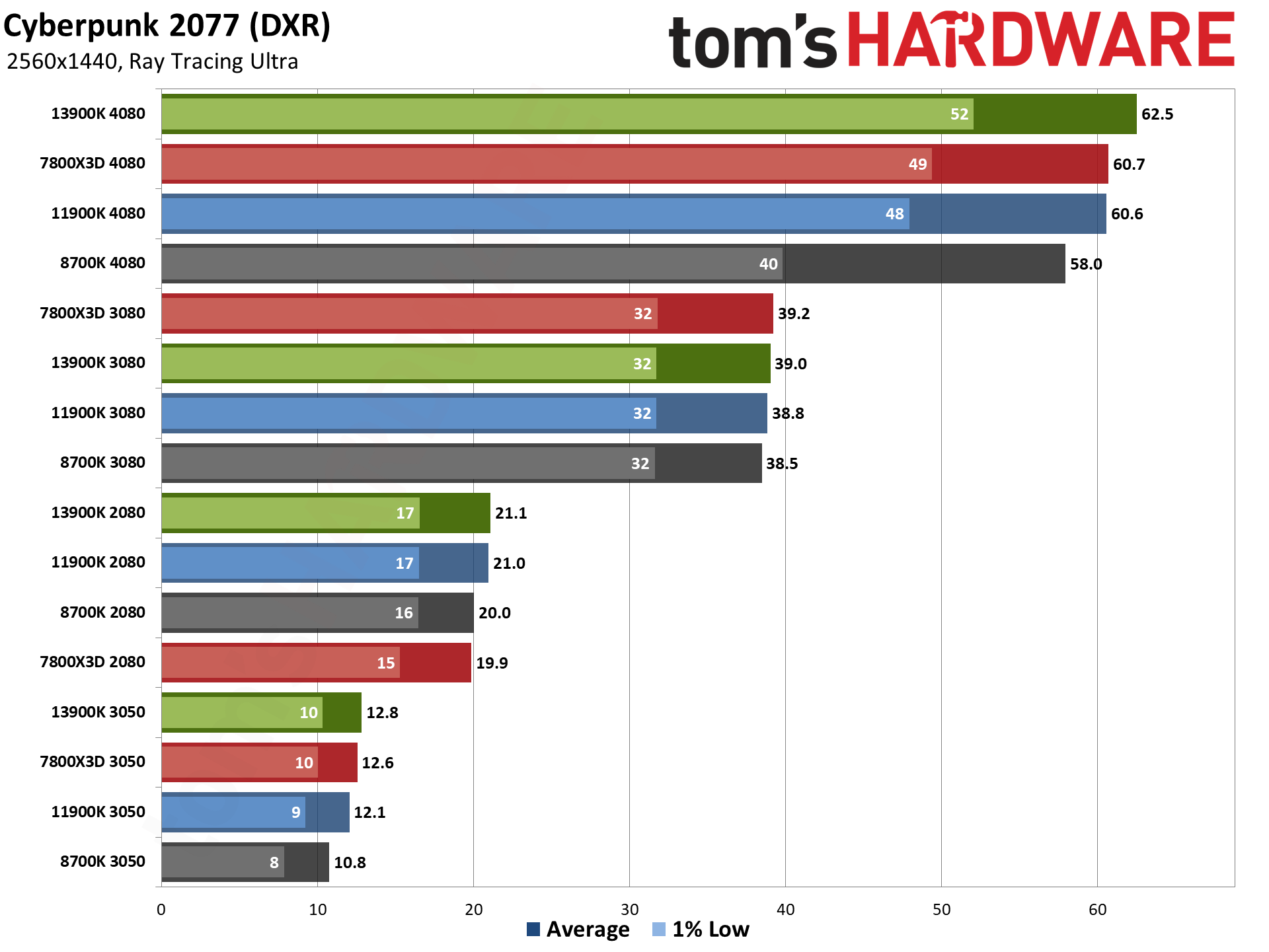

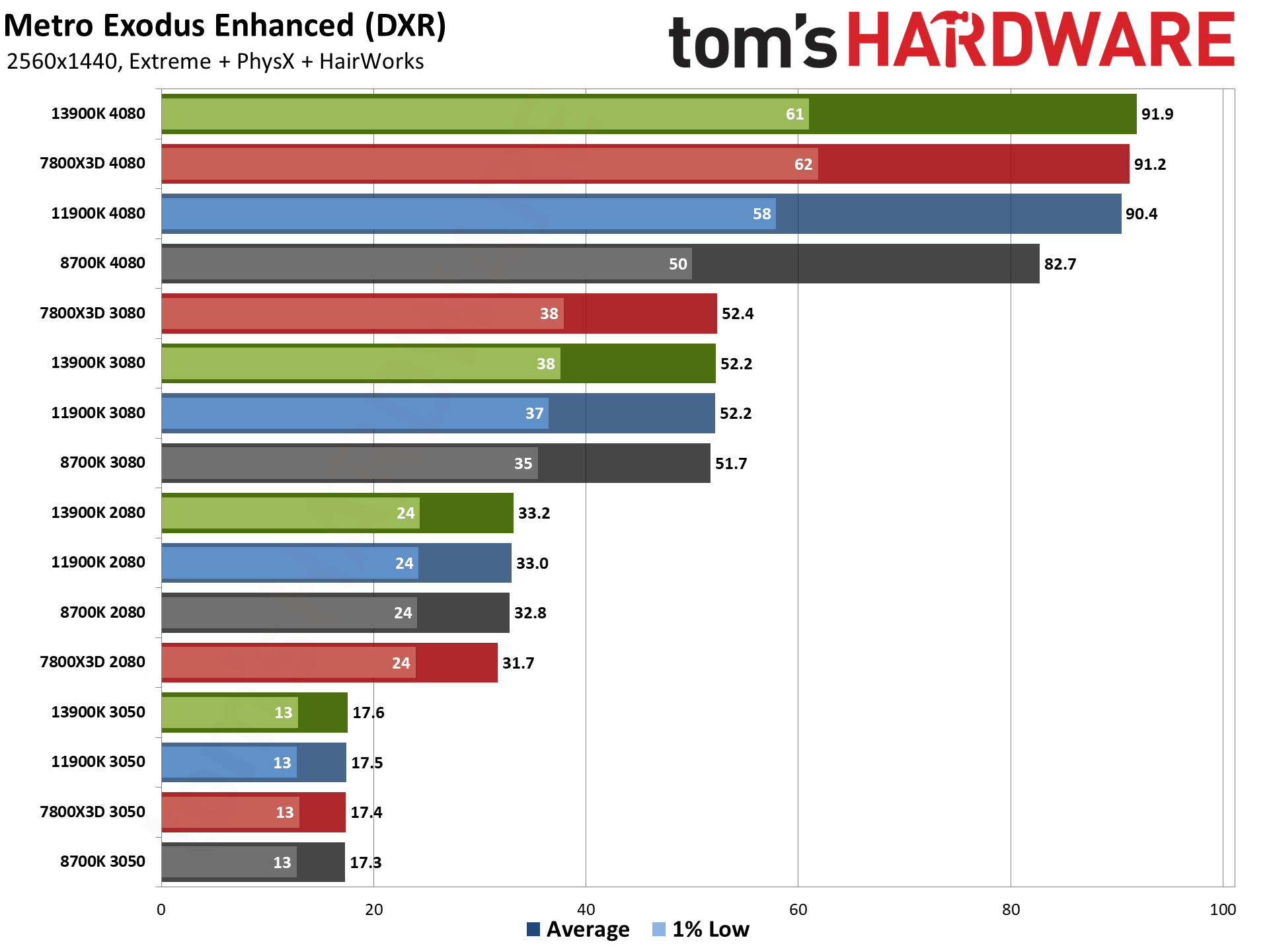

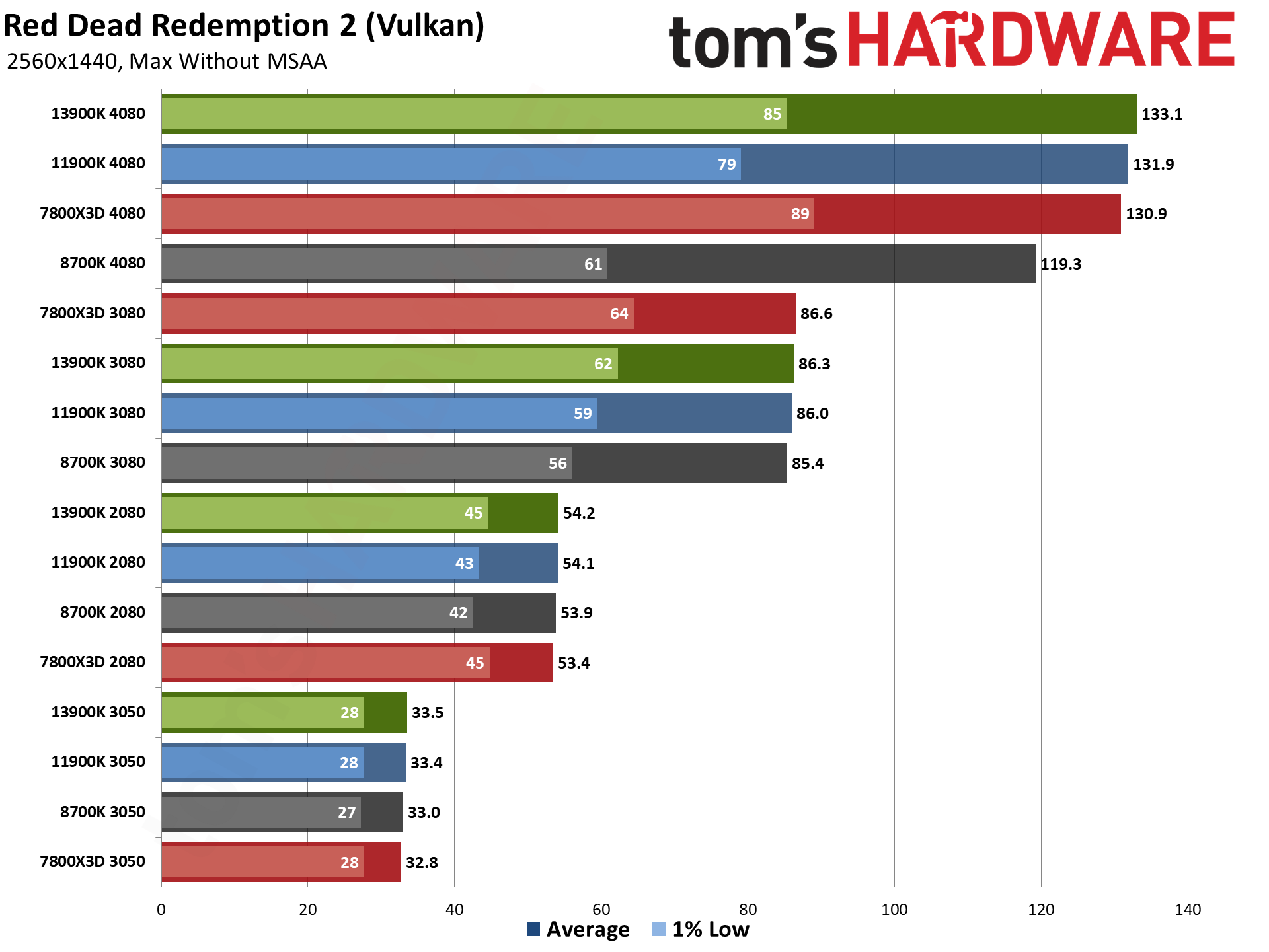

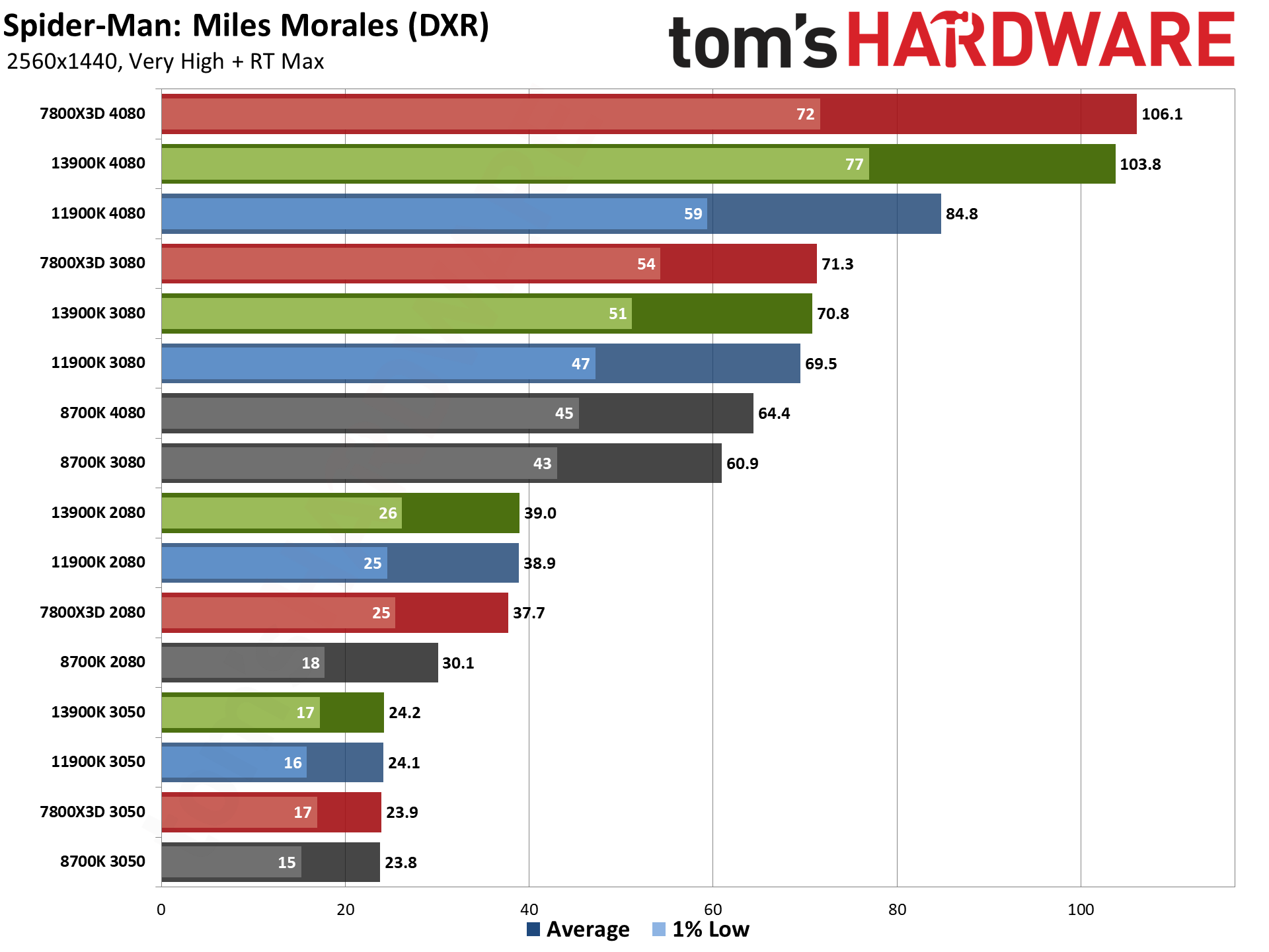

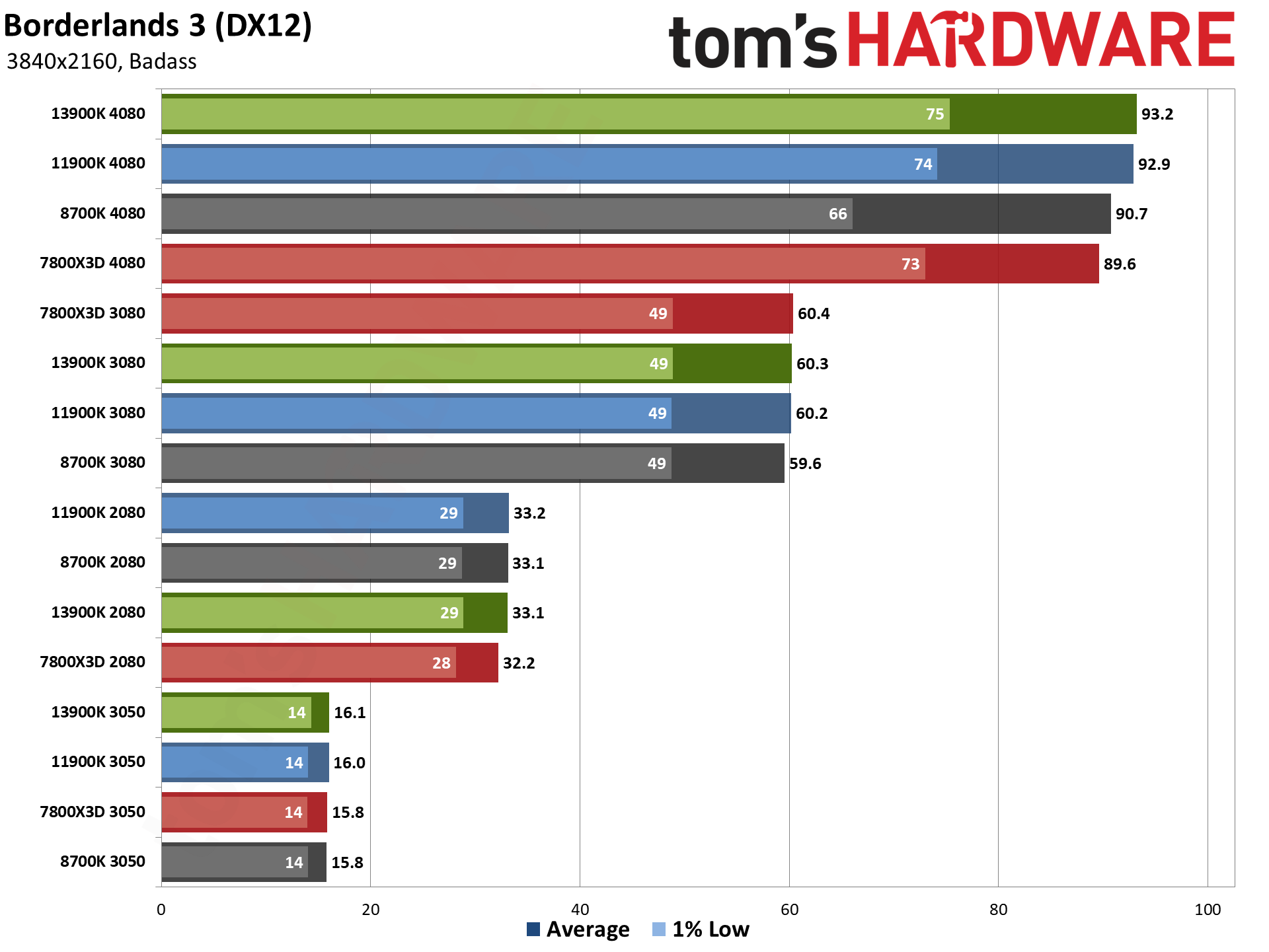

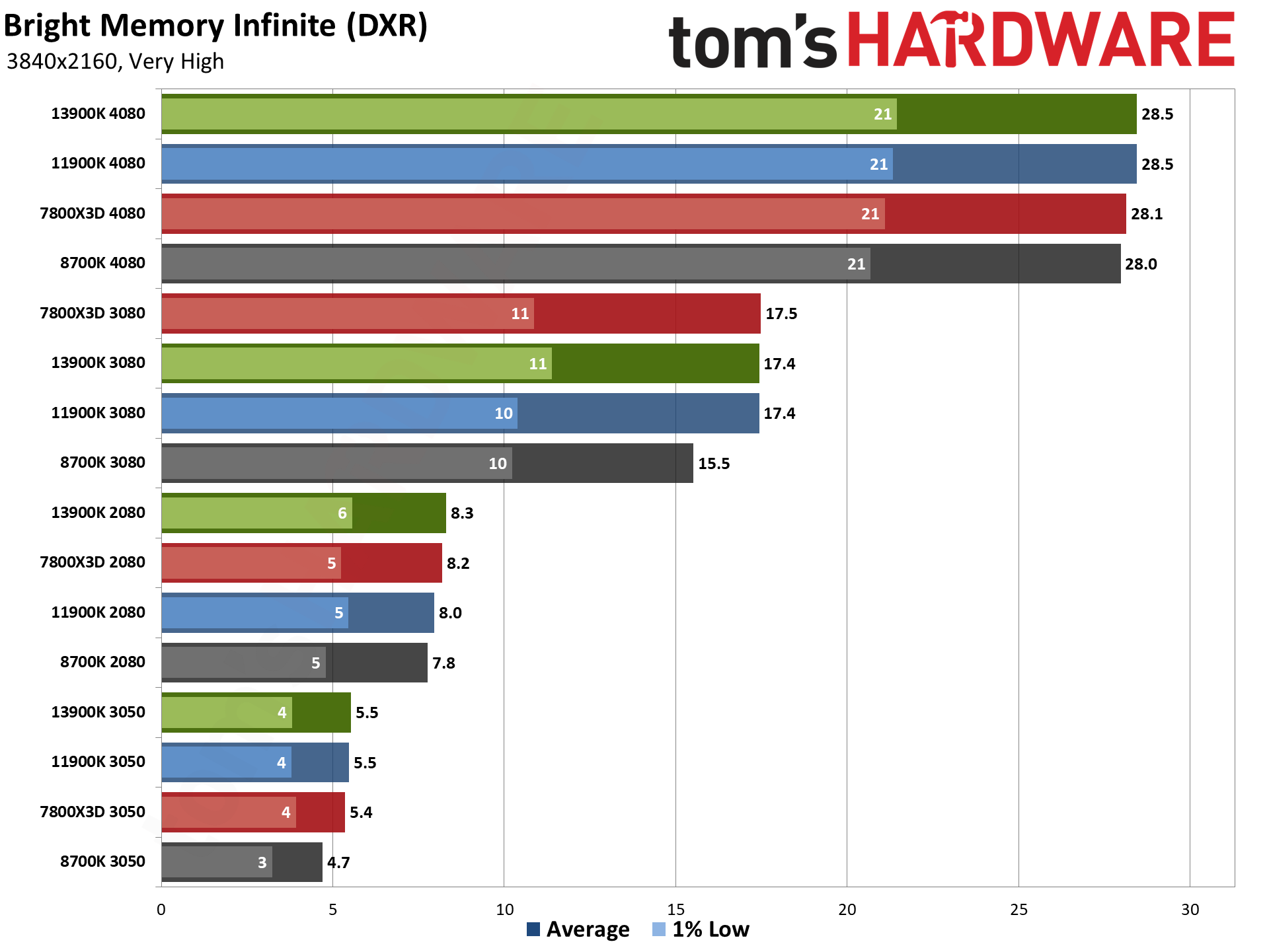

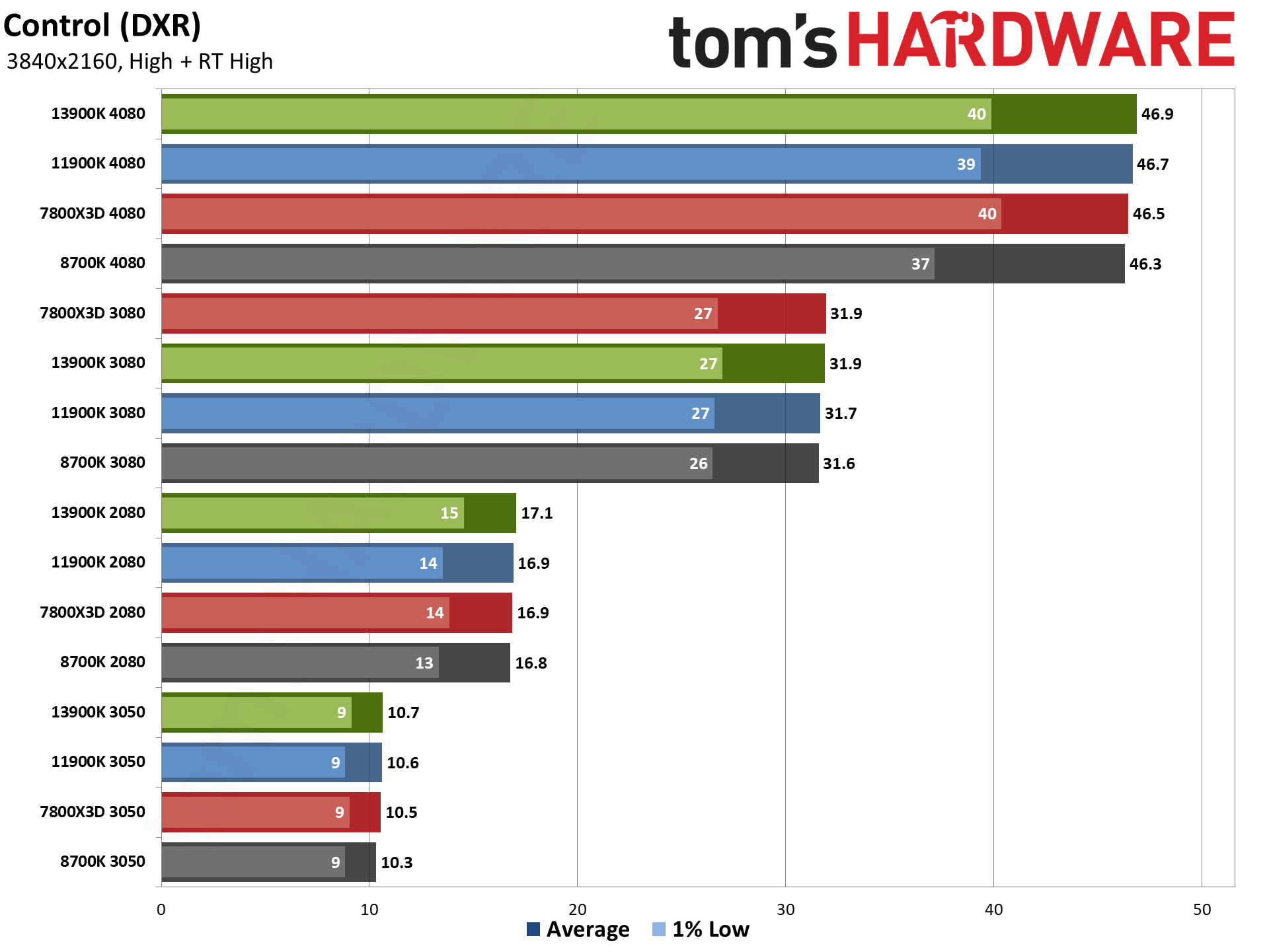

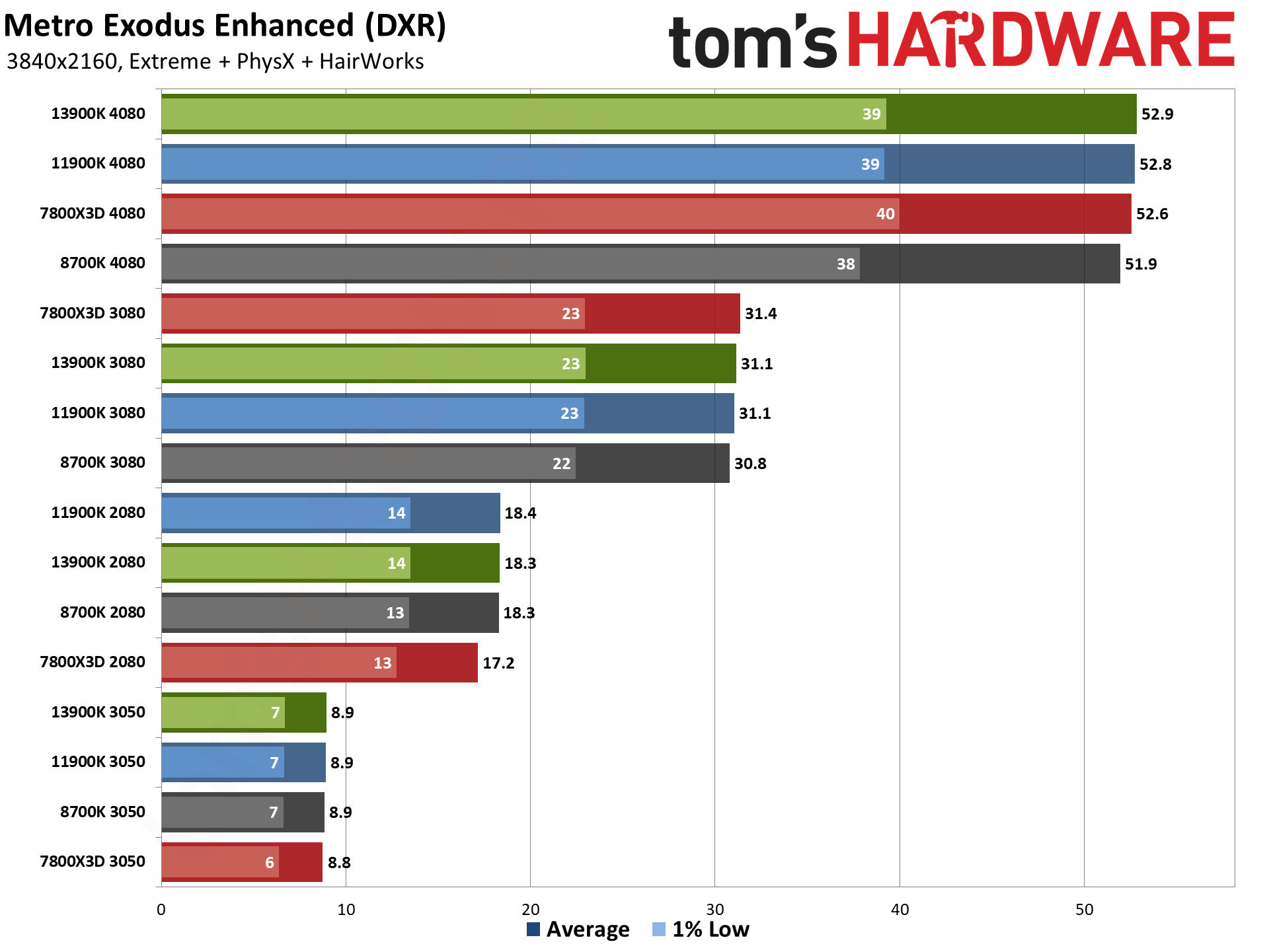

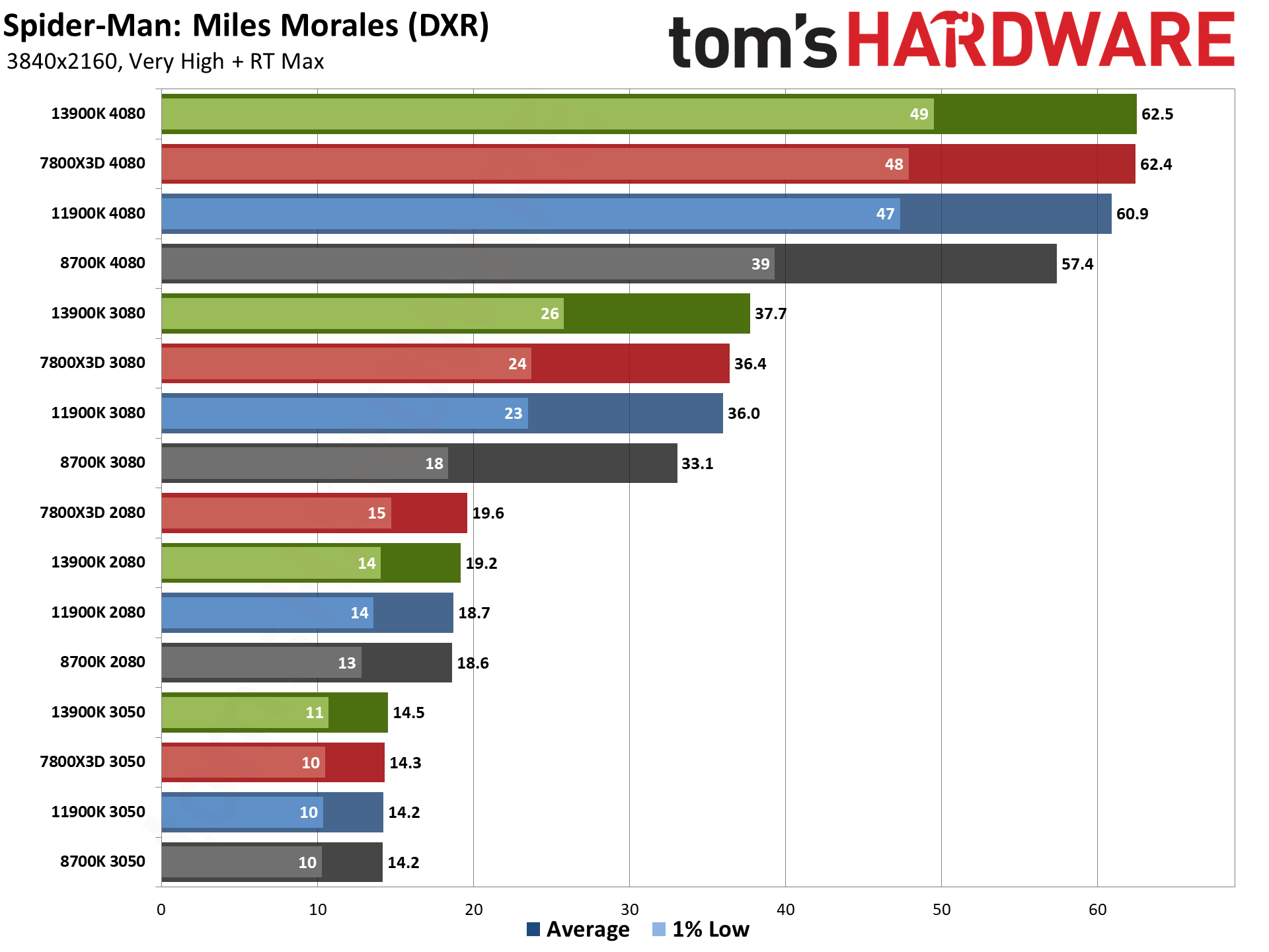

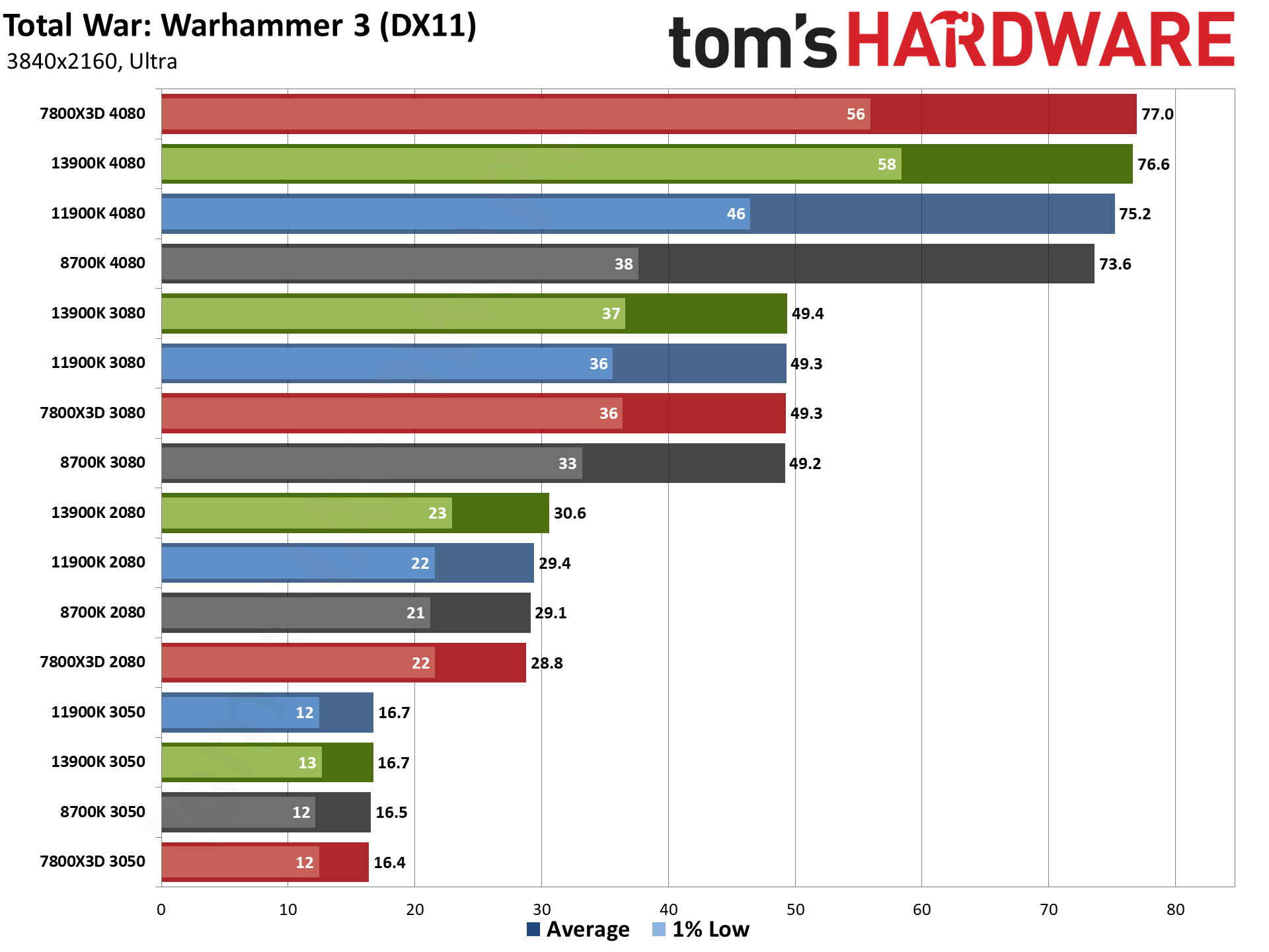

That's all just focusing on the overall performance, of course, and individual games can show larger or smaller differences. The rasterization and DXR overall charts mostly echo the overall results, though the gains from a faster CPU tend to be muted on the more graphically demanding games — DXR games in particular.

Minimum framerates (we use the average fps of the bottom 1% of frames, which acts as a reasonable proxy for overall consistency of framerate) show much larger differences as well. Even with the RTX 3050, where performance is mostly GPU limited, the 13900K improved 1% lows by 13% overall compared to the 8700K. Stepping up to the RTX 2080, there's a 22% improvement in minimum fps, and that increases to 44% with an RTX 3080. And if you move from the 8700K to a 7800X3D with the RTX 4080, minimum fps improves by 61%.
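For readers who capture their own frame times, here is one plausible way to compute that "1% low" figure. It's a sketch under assumptions: capture tools differ in how they define the metric, the function names are hypothetical, and the frame data is synthetic.

```python
def average_fps(frame_times_ms):
    """Average fps over a capture given per-frame render times in ms."""
    return 1000.0 * len(frame_times_ms) / sum(frame_times_ms)


def one_percent_low_fps(frame_times_ms):
    """Average fps over the slowest 1% of frames (at least one frame)."""
    if not frame_times_ms:
        raise ValueError("no frames captured")
    slowest_first = sorted(frame_times_ms, reverse=True)
    count = max(1, len(slowest_first) // 100)
    worst = slowest_first[:count]
    return 1000.0 * len(worst) / sum(worst)


# Synthetic capture: 198 smooth frames at 10 ms plus two 40 ms hitches.
frames = [10.0] * 198 + [40.0] * 2
print(round(average_fps(frames), 1), one_percent_low_fps(frames))
```

The two hitches barely move the average here, but they define the 1% low entirely, which is why the metric is useful for spotting stutter that an average hides.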

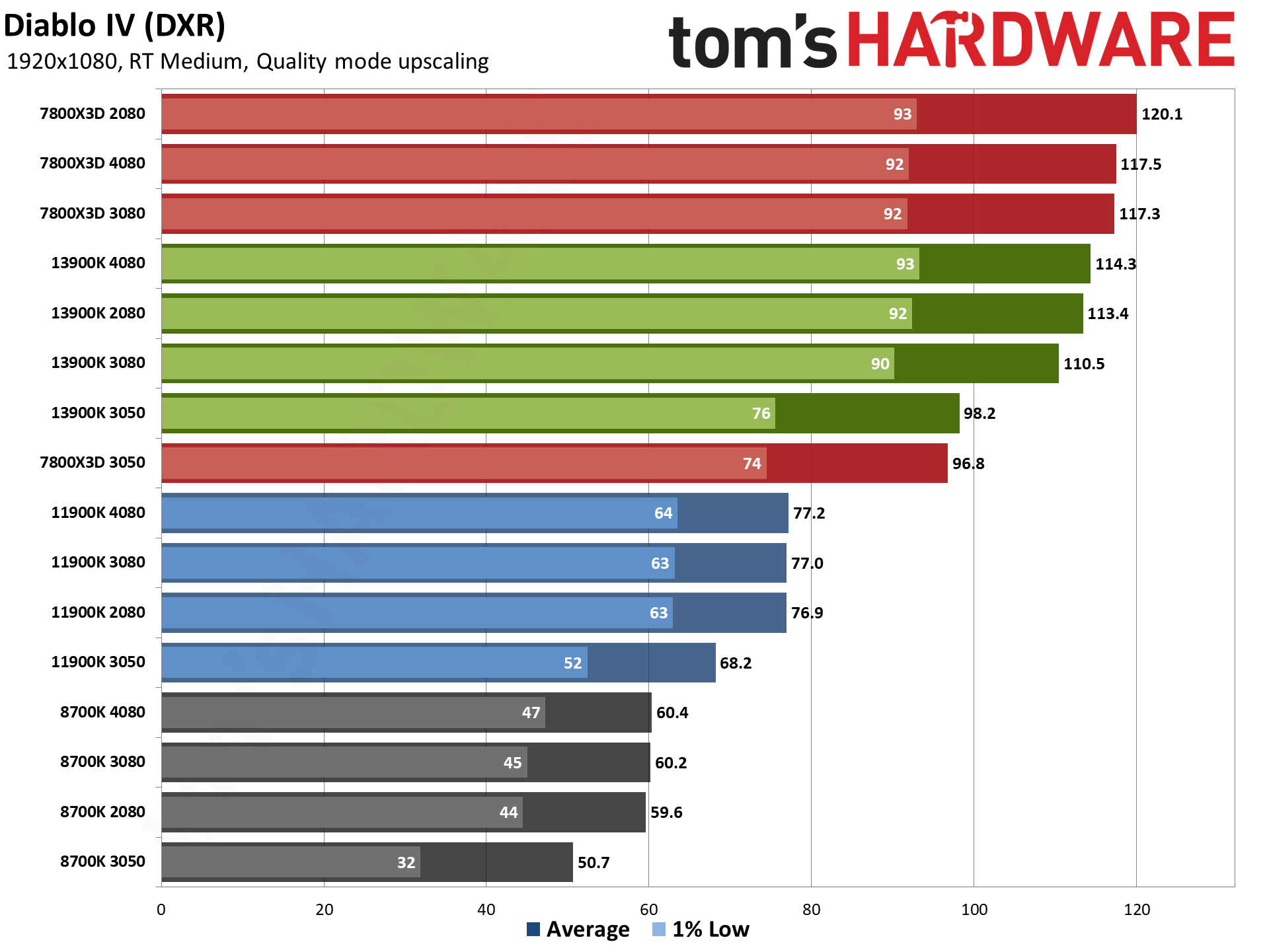

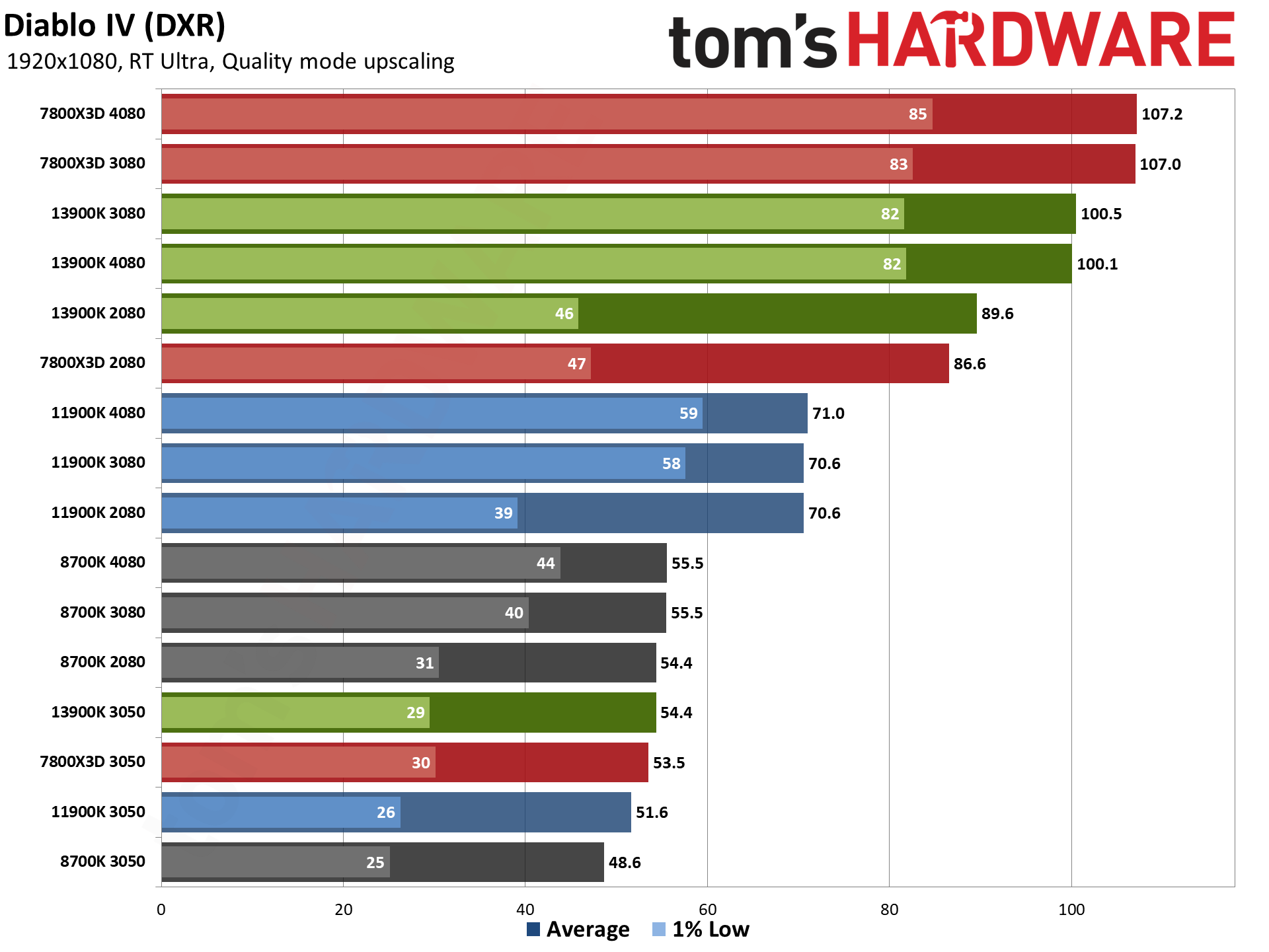

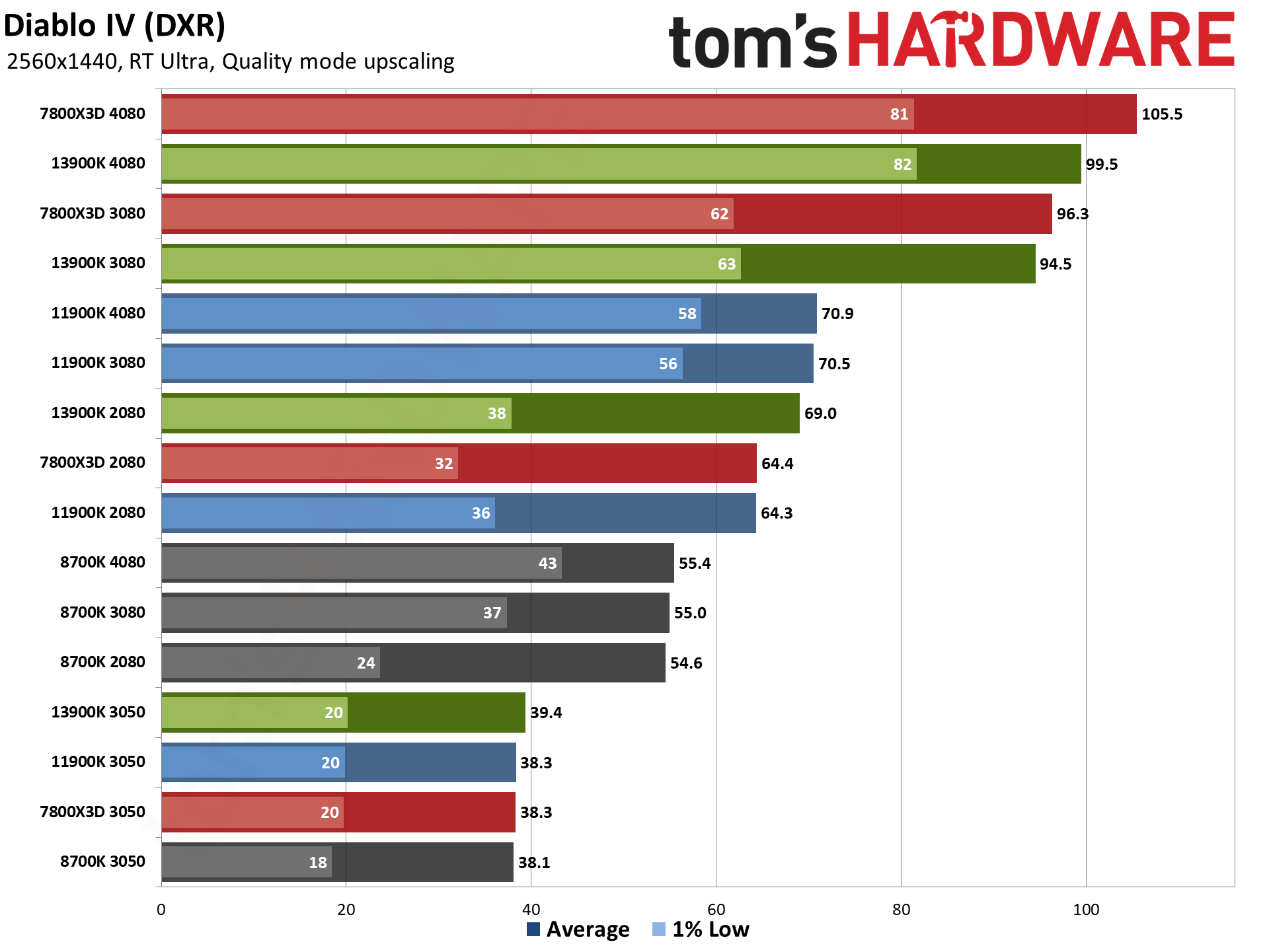

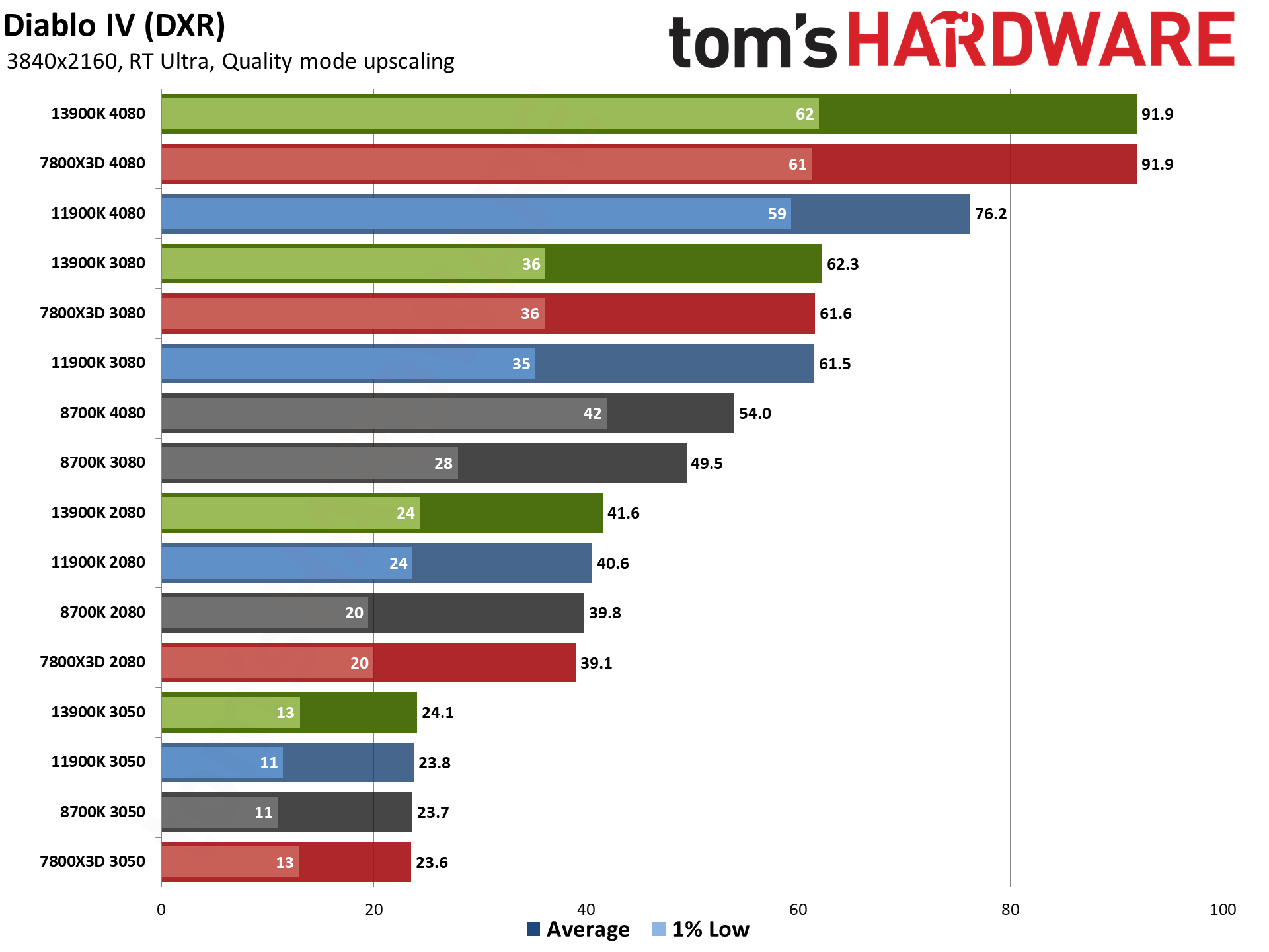

One final interesting item to pull out from all the individual gaming results is Diablo IV. The game runs quite well in general on a wide range of hardware... until you try to turn on ray tracing. With DXR enabled, there's a pretty massive CPU bottleneck that comes into play. At RT Medium settings, with DLSS Quality mode upscaling, even the combination of 7800X3D and 4080 only managed 118 fps — and the 7800X3D with the 2080 actually did slightly better at 120 fps.

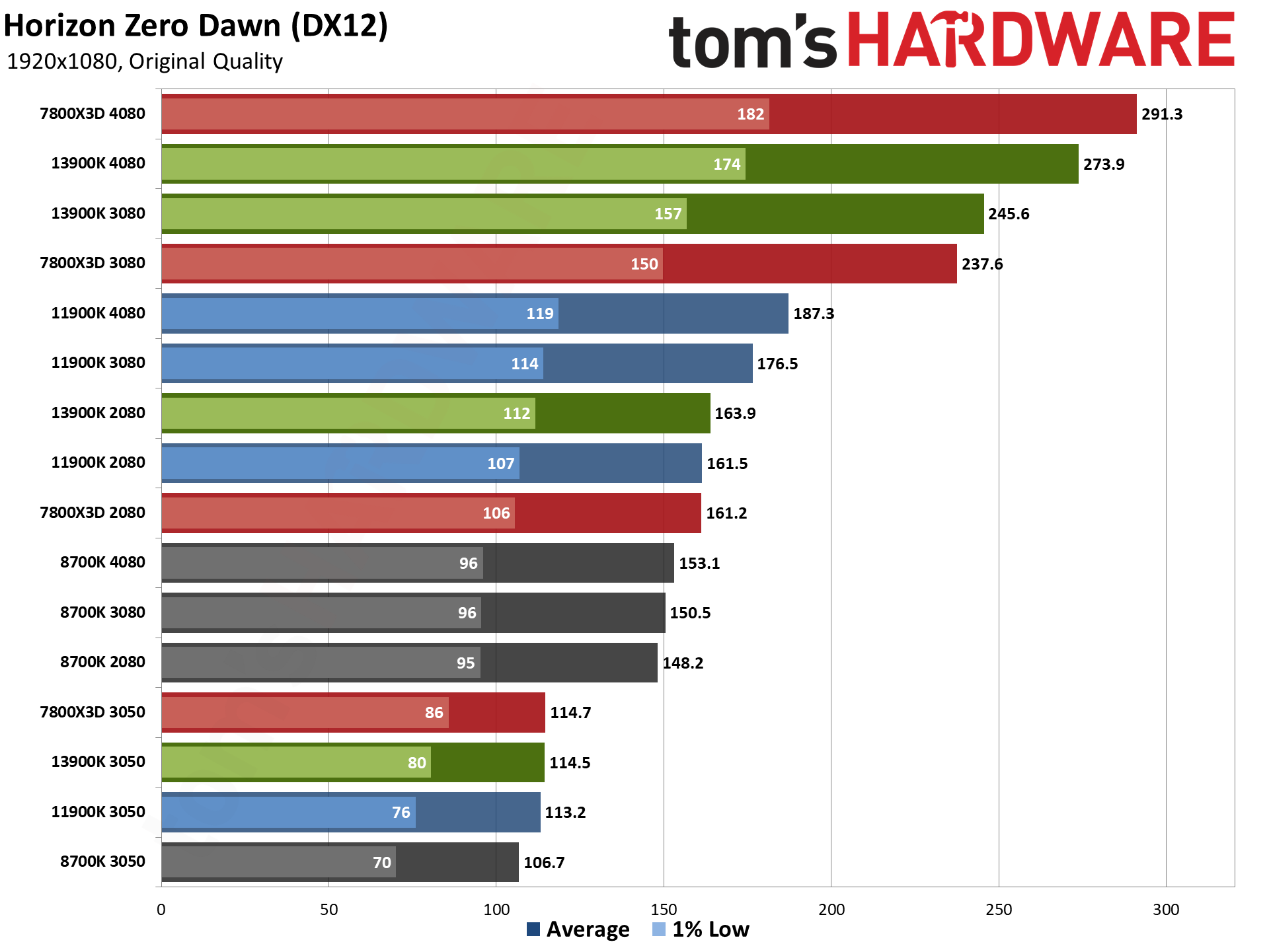

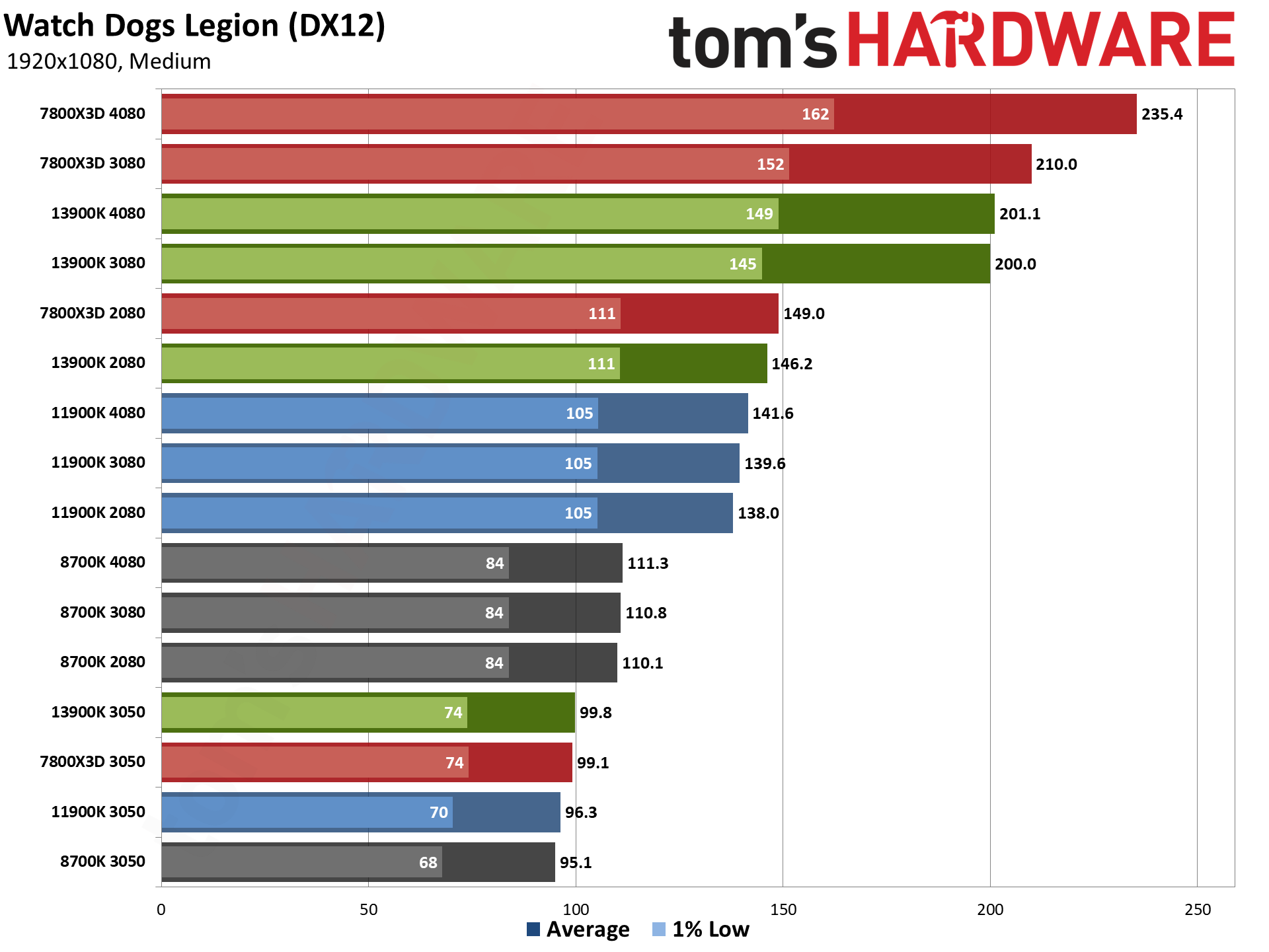

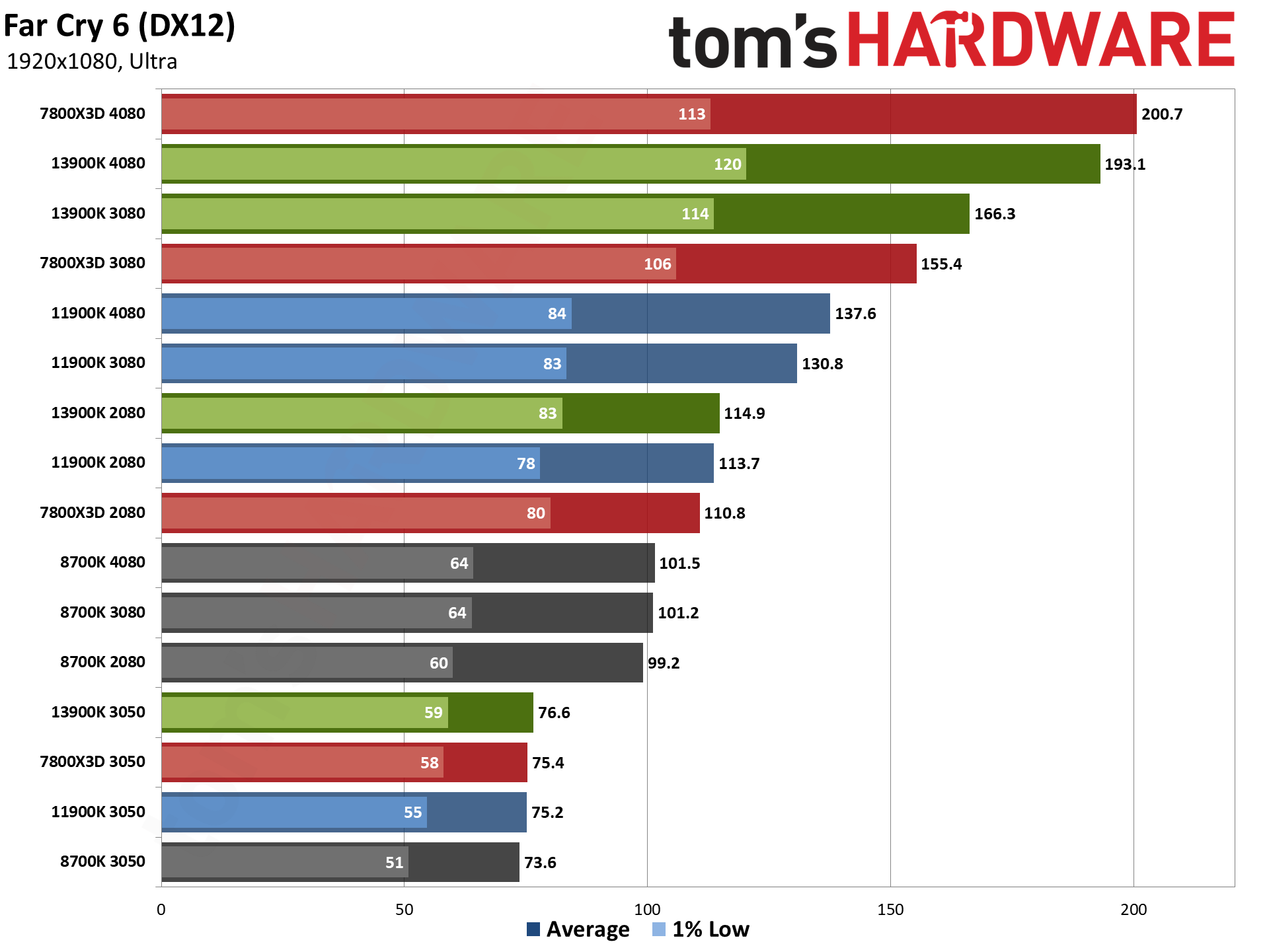

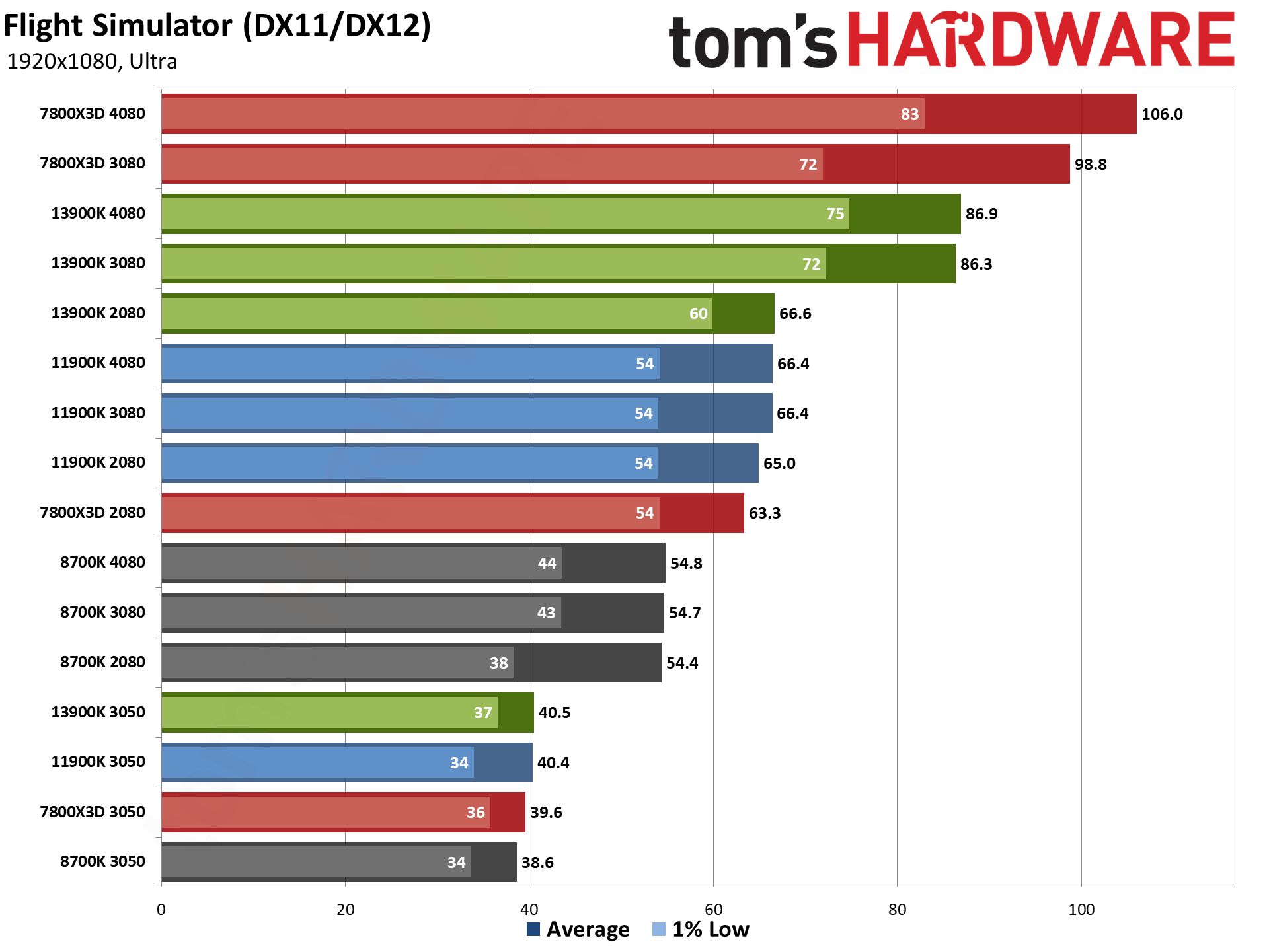

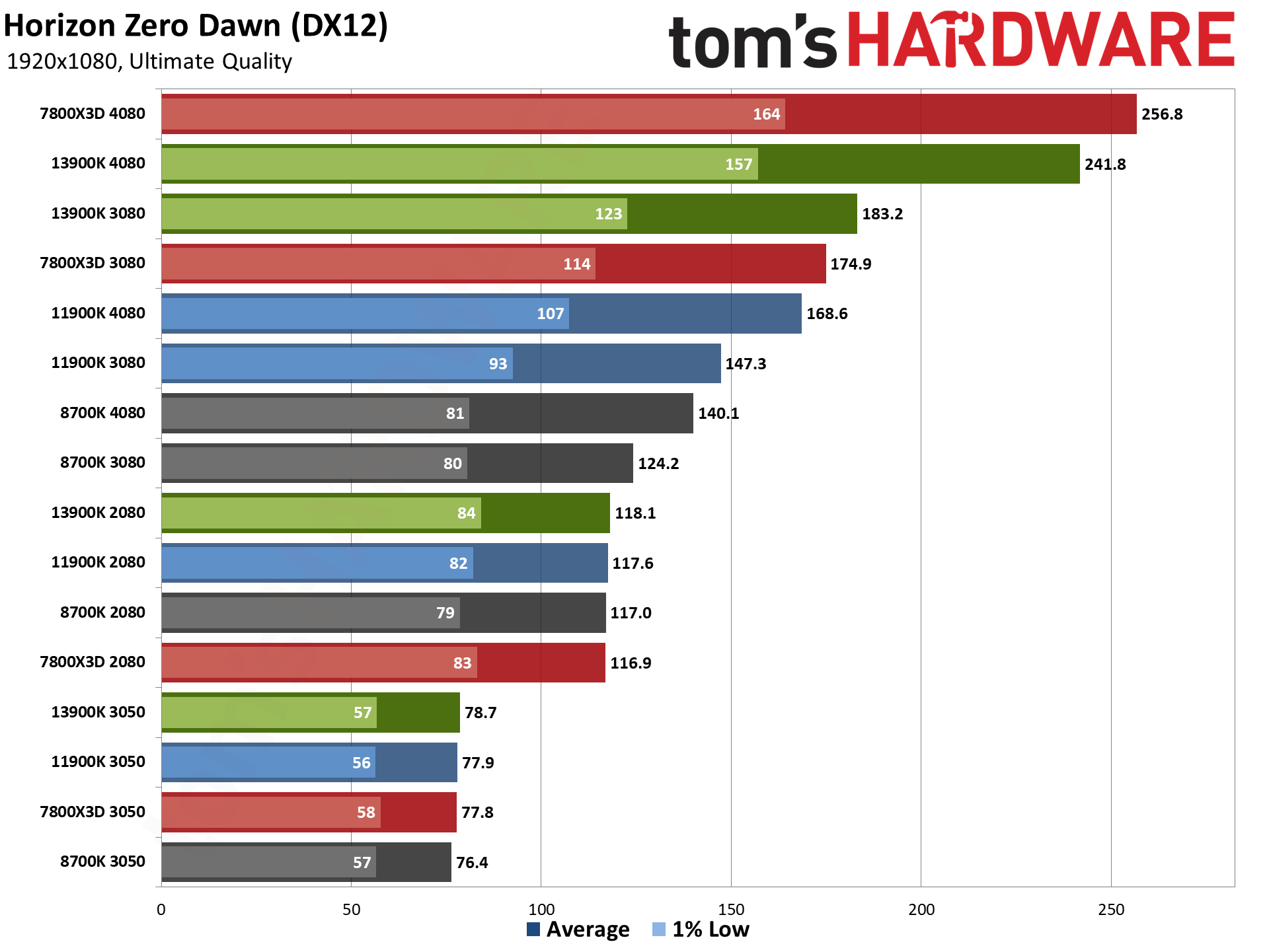

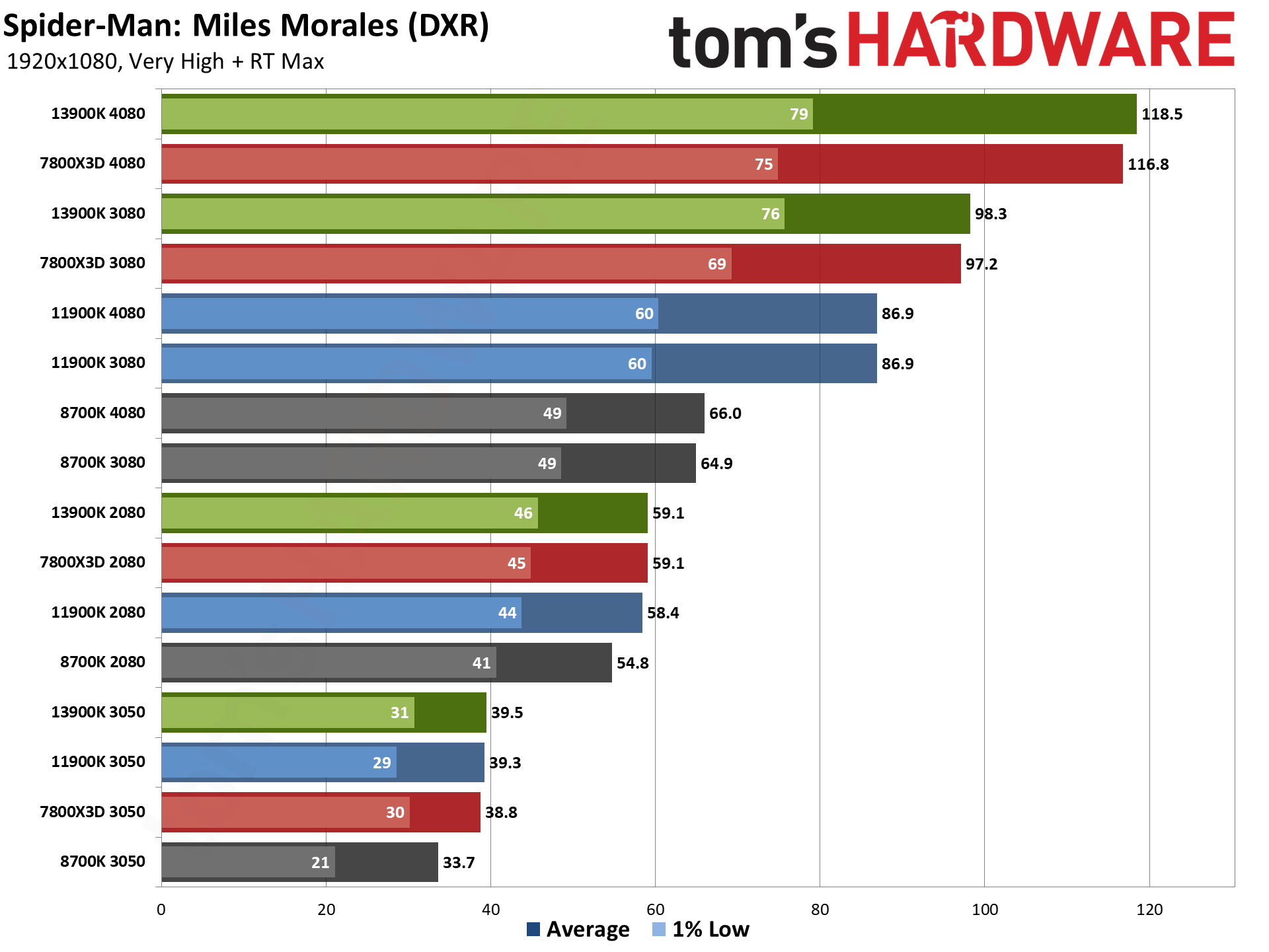

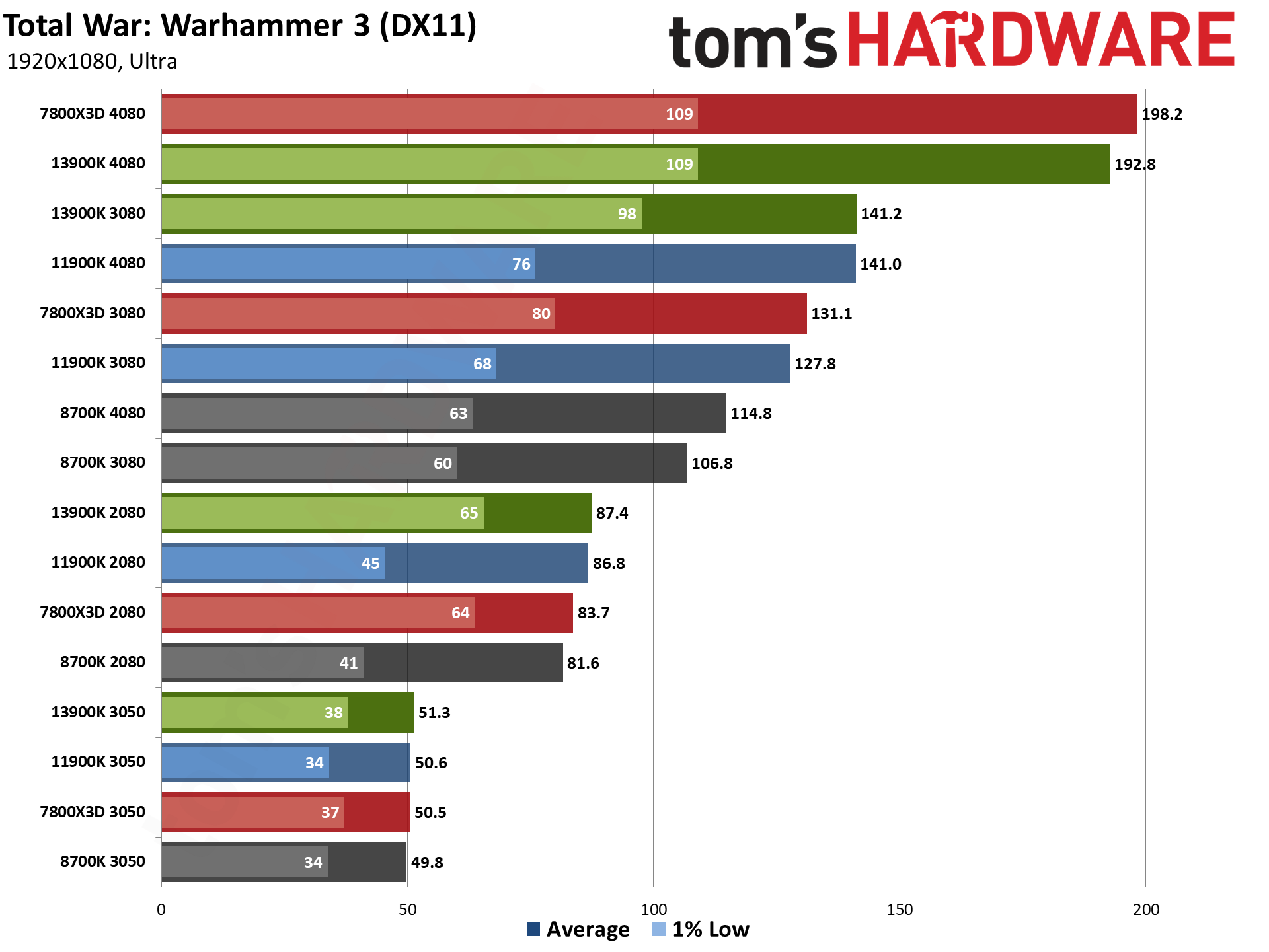

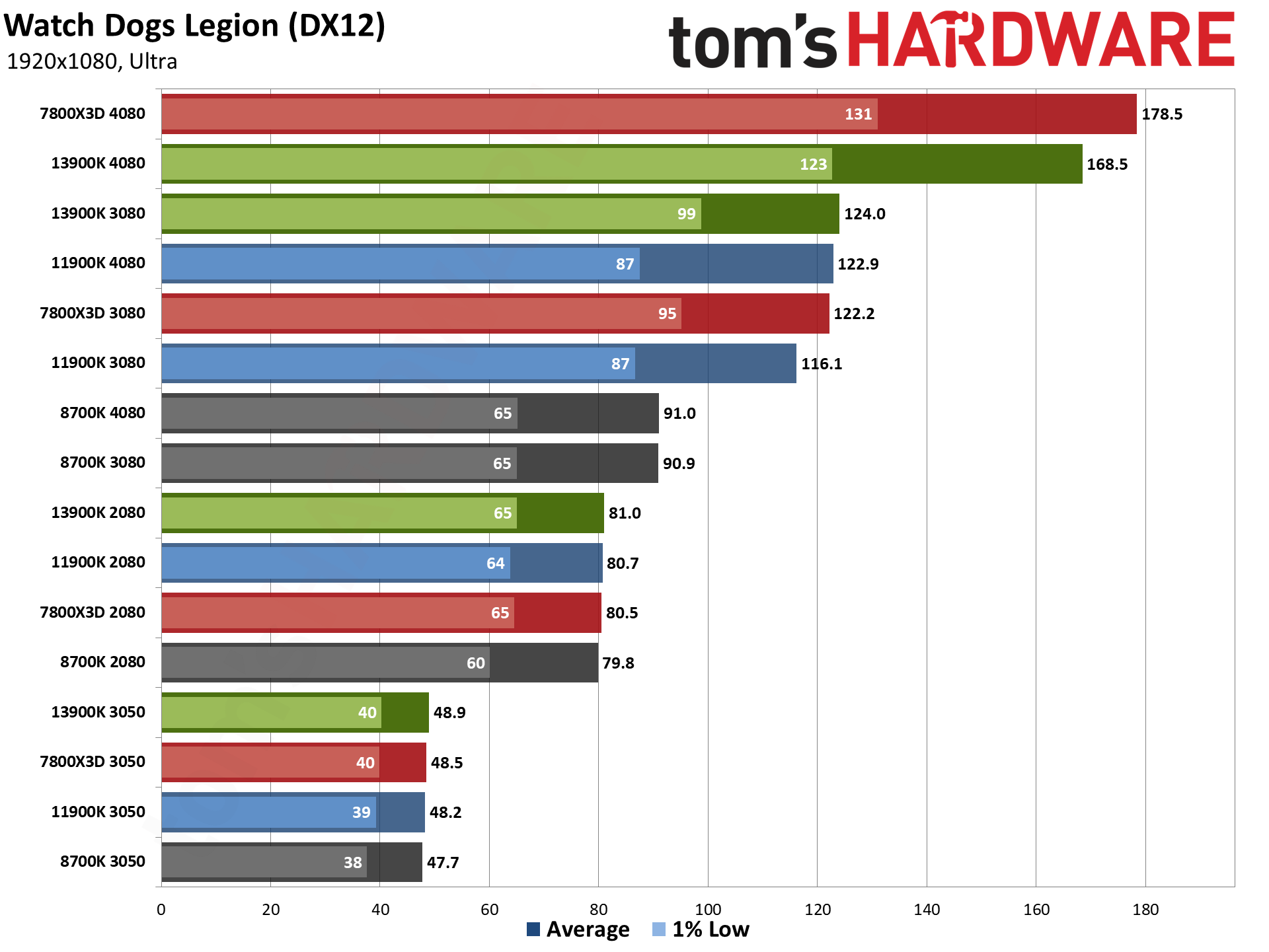

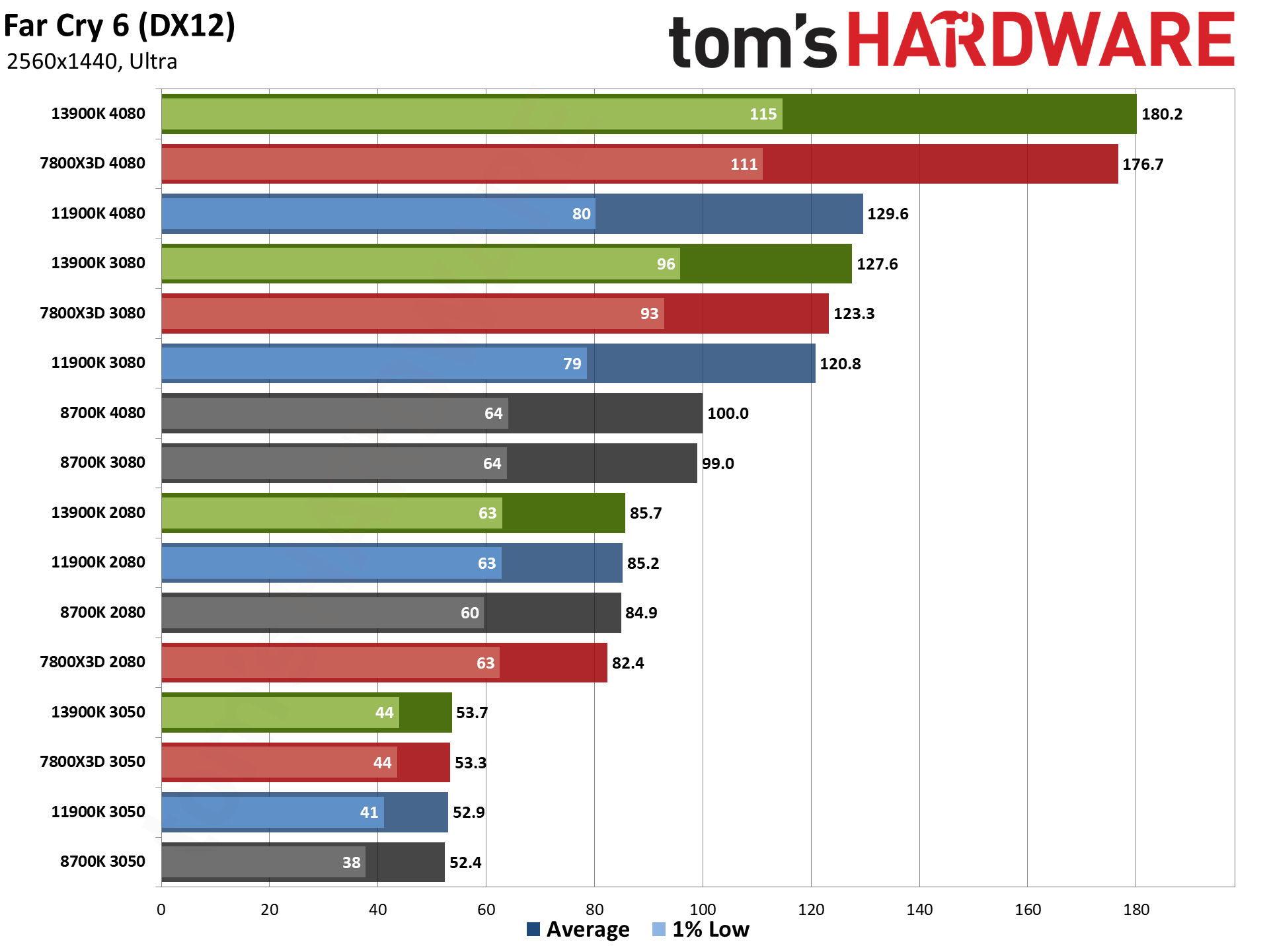

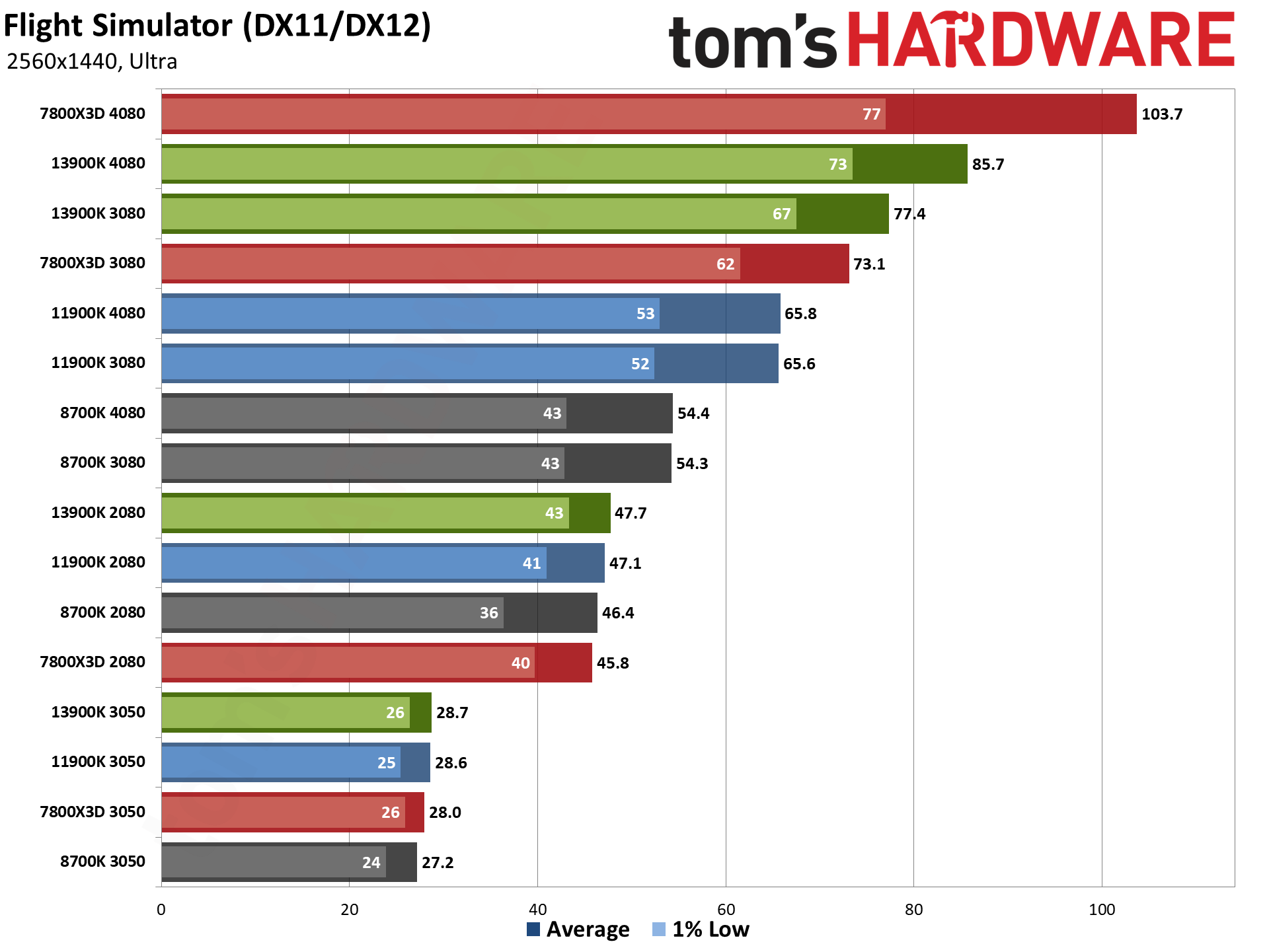

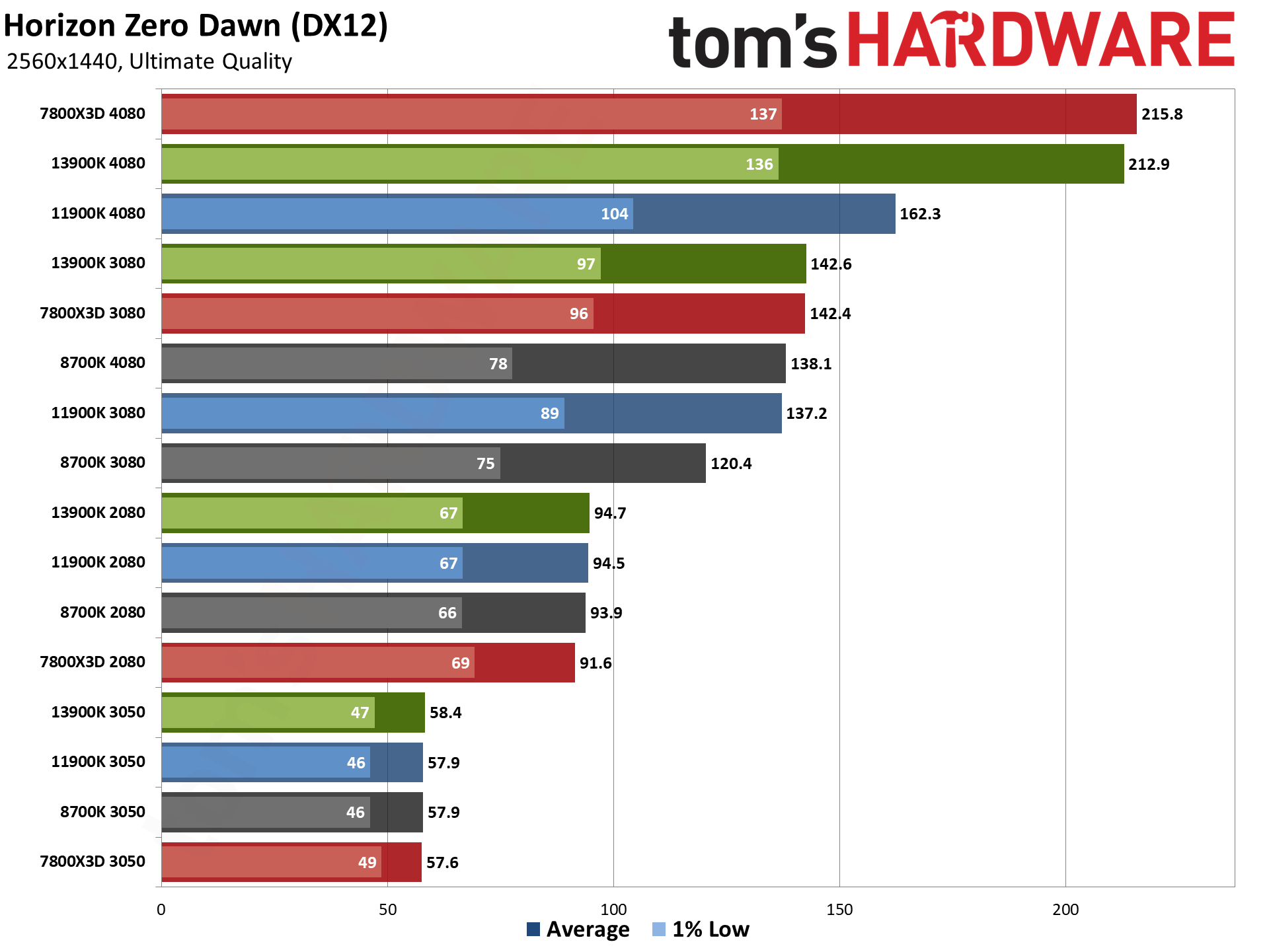

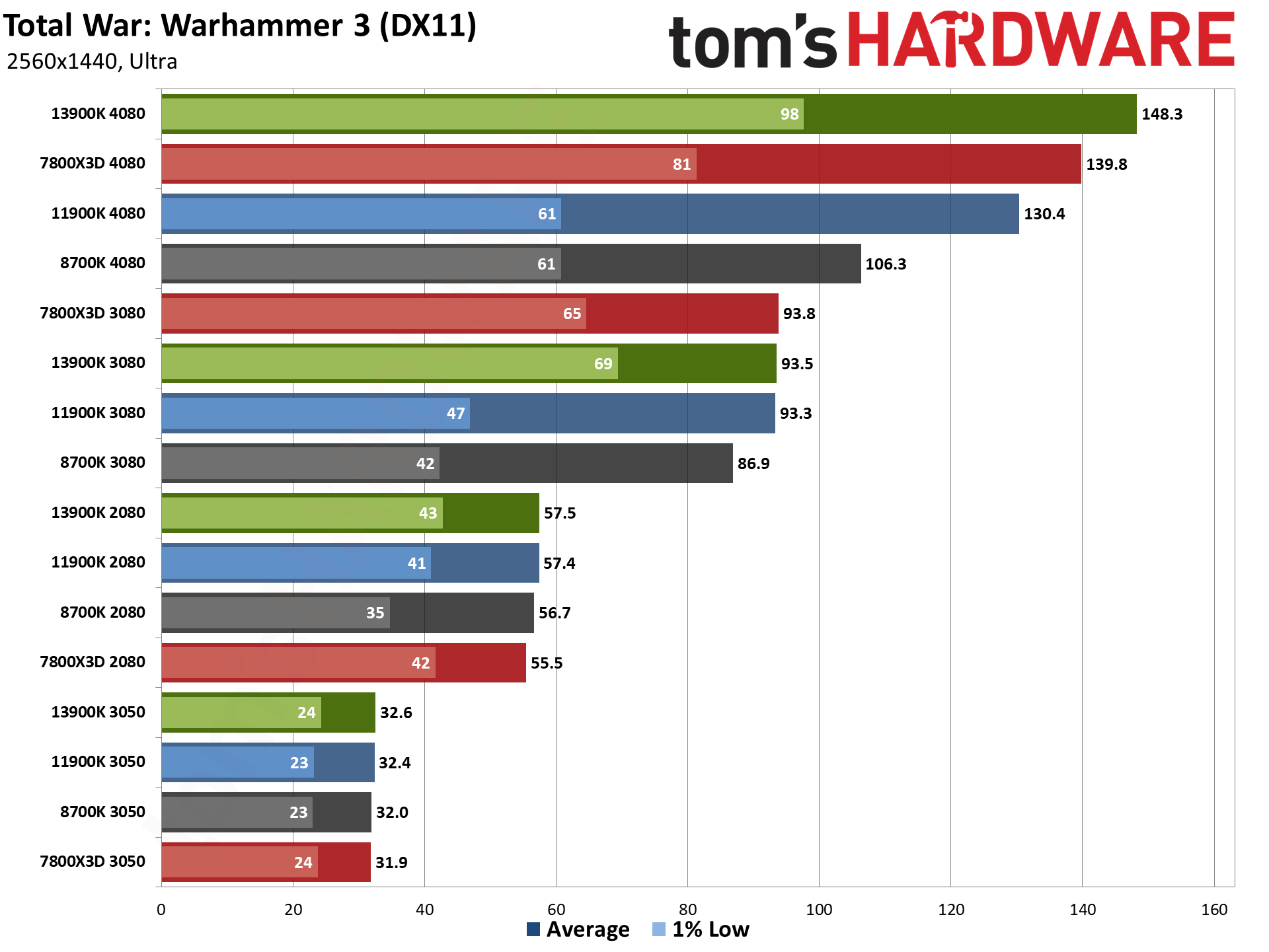

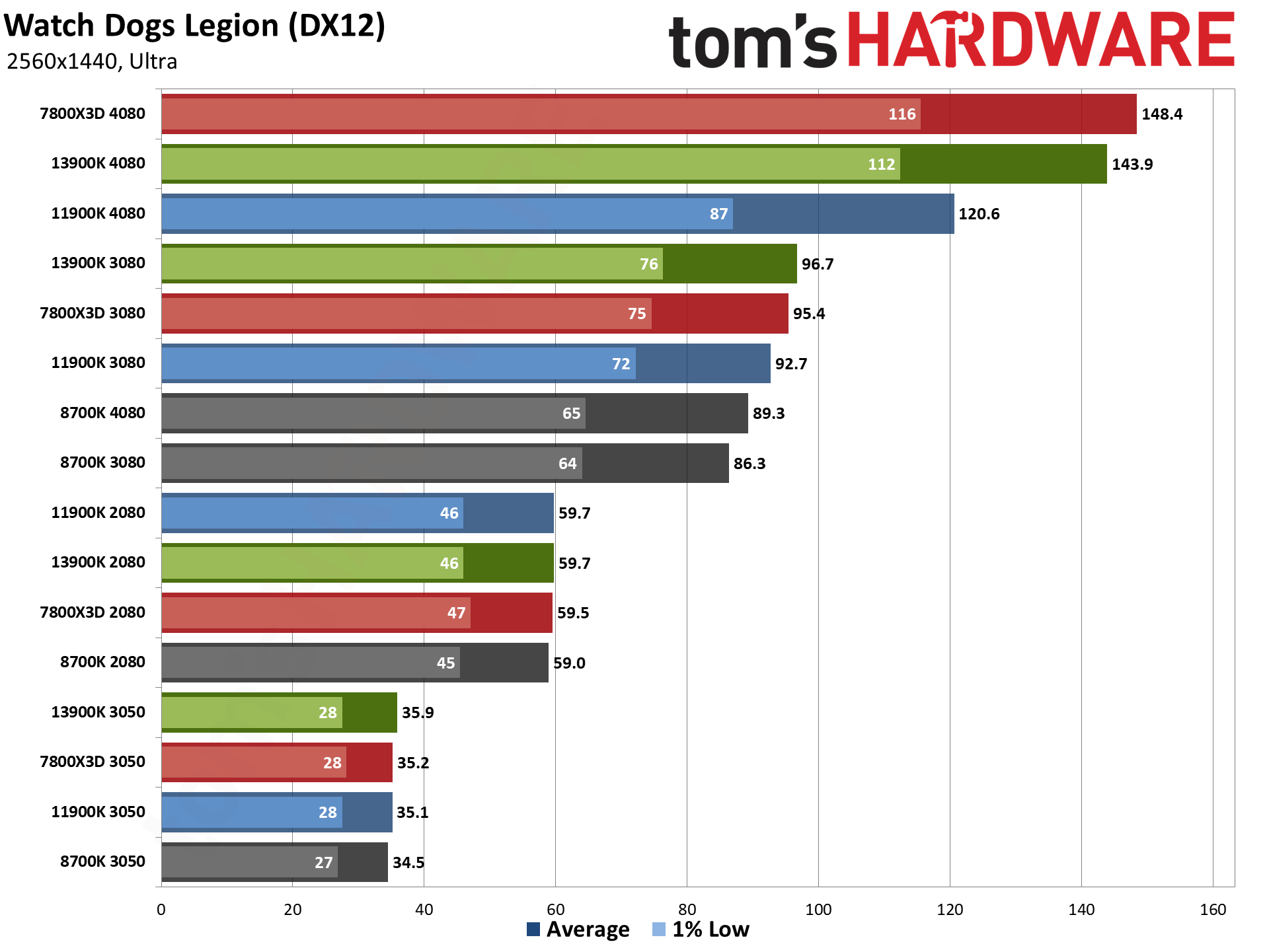

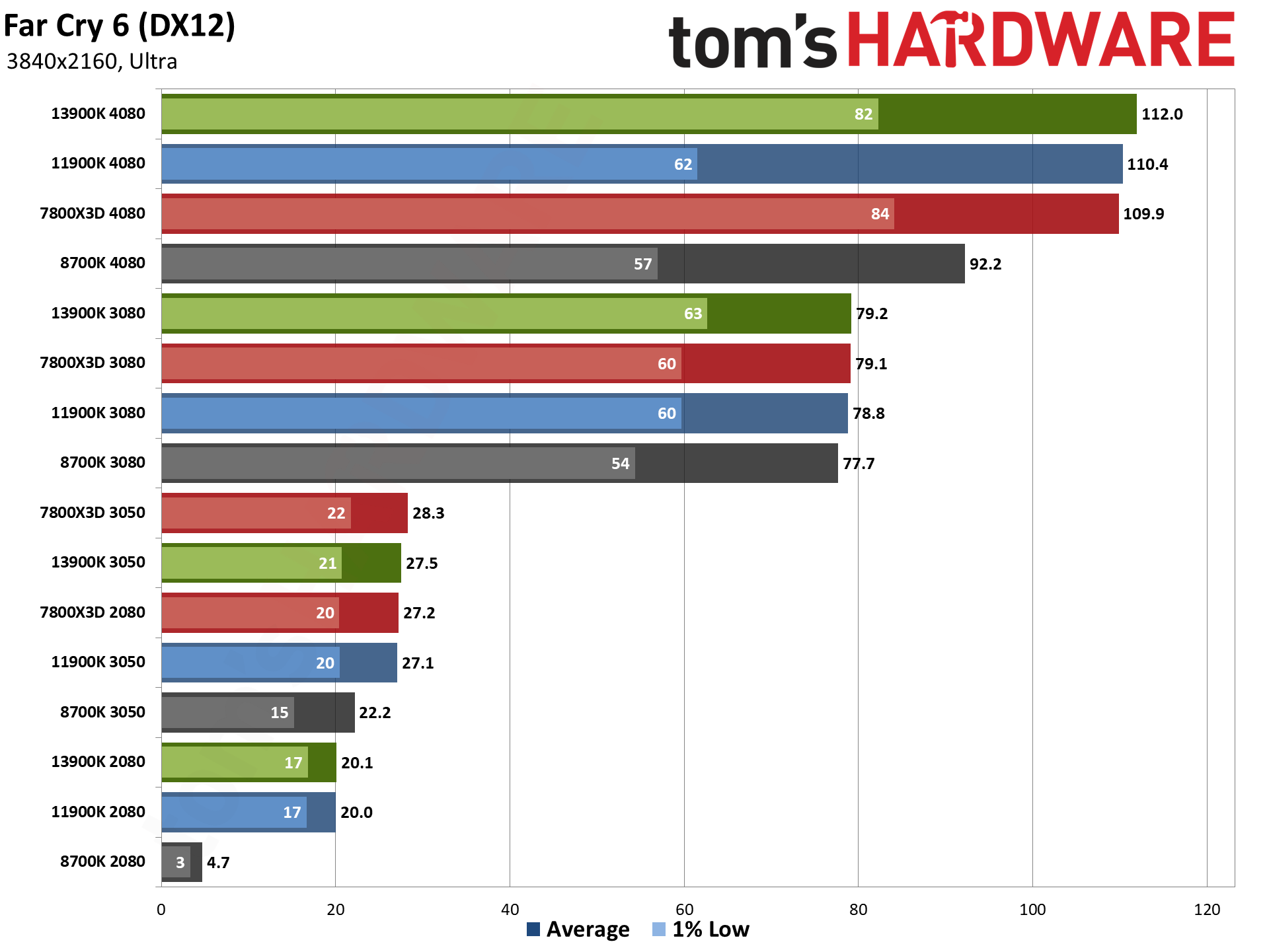

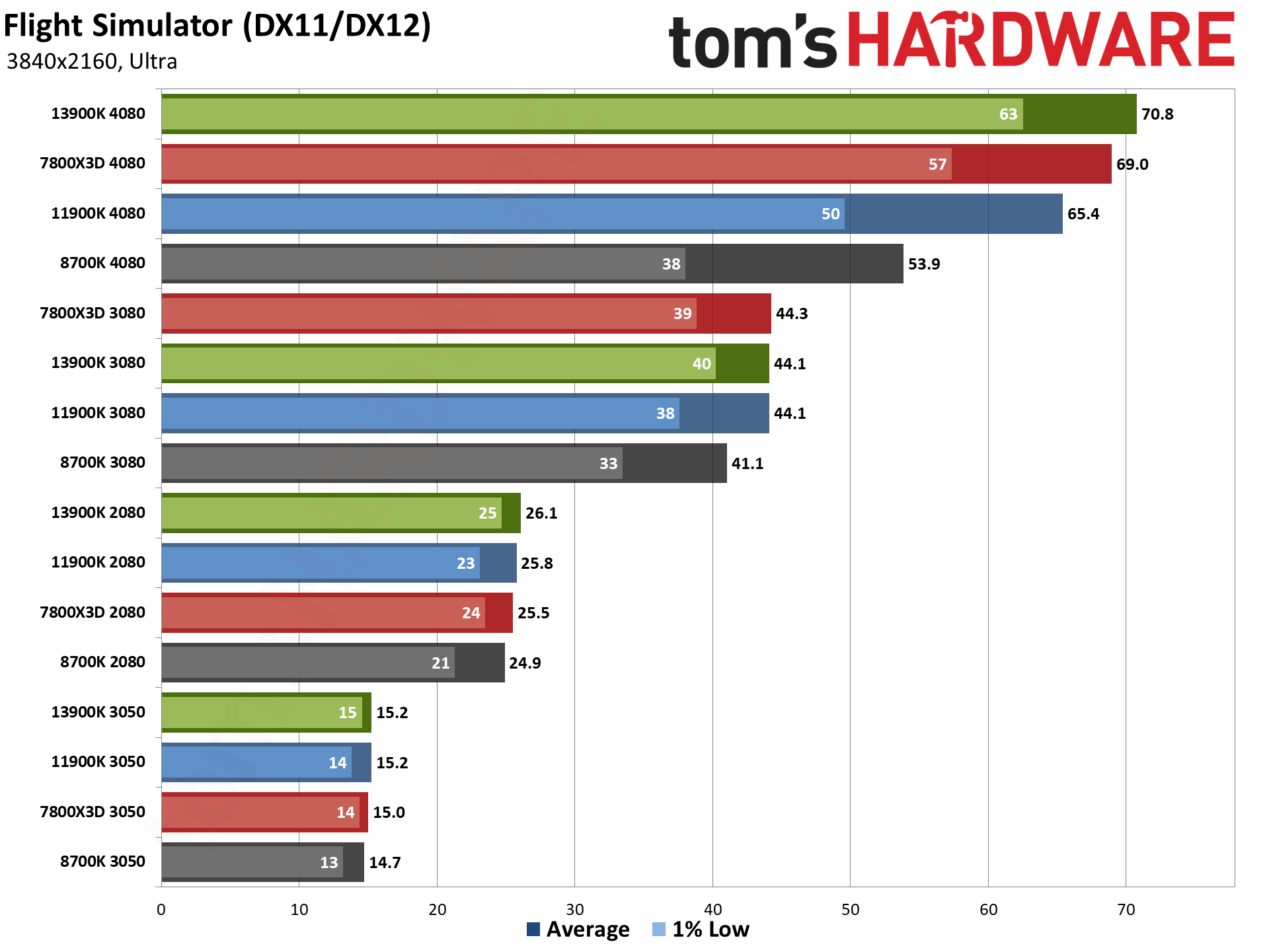

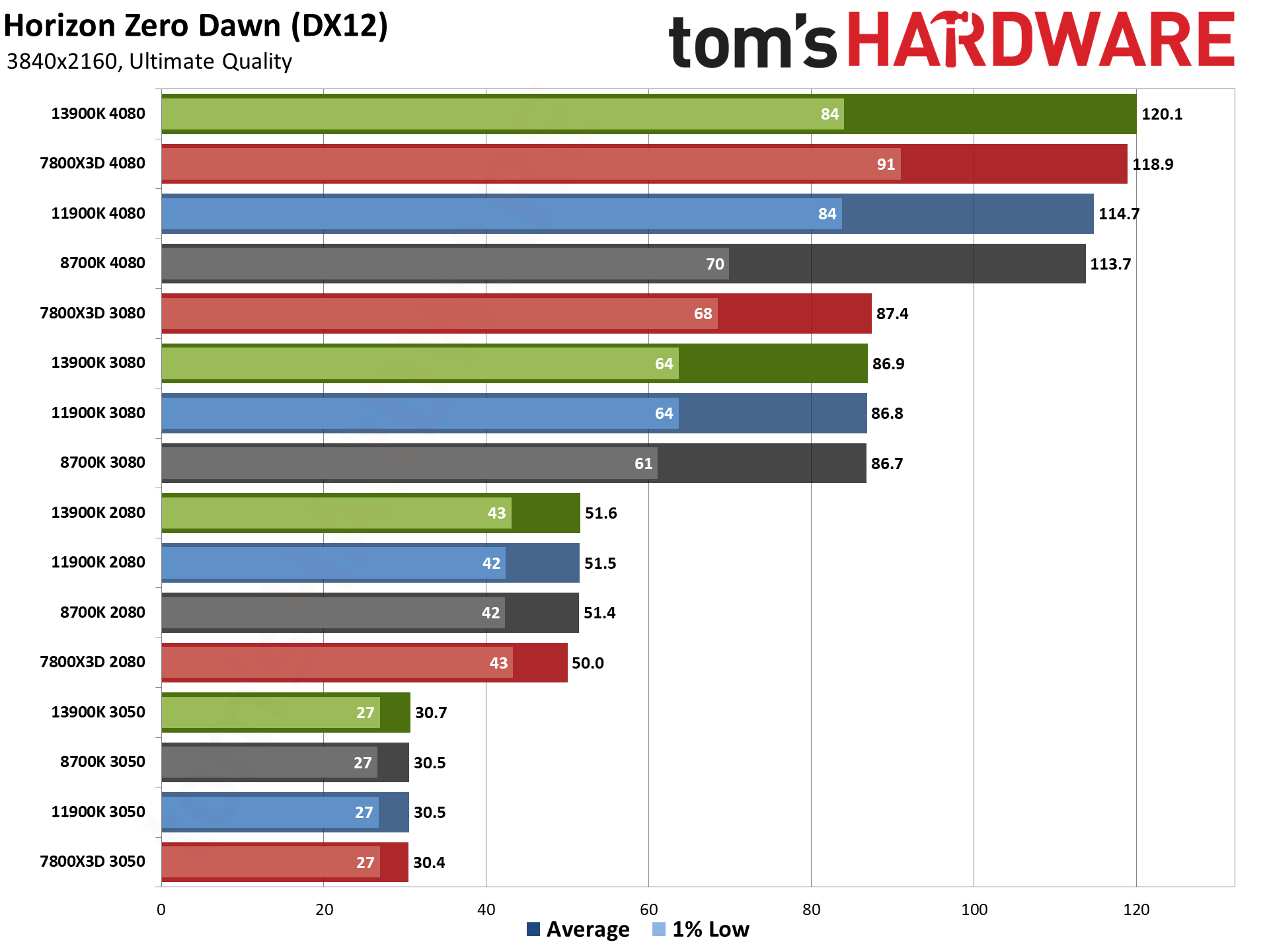

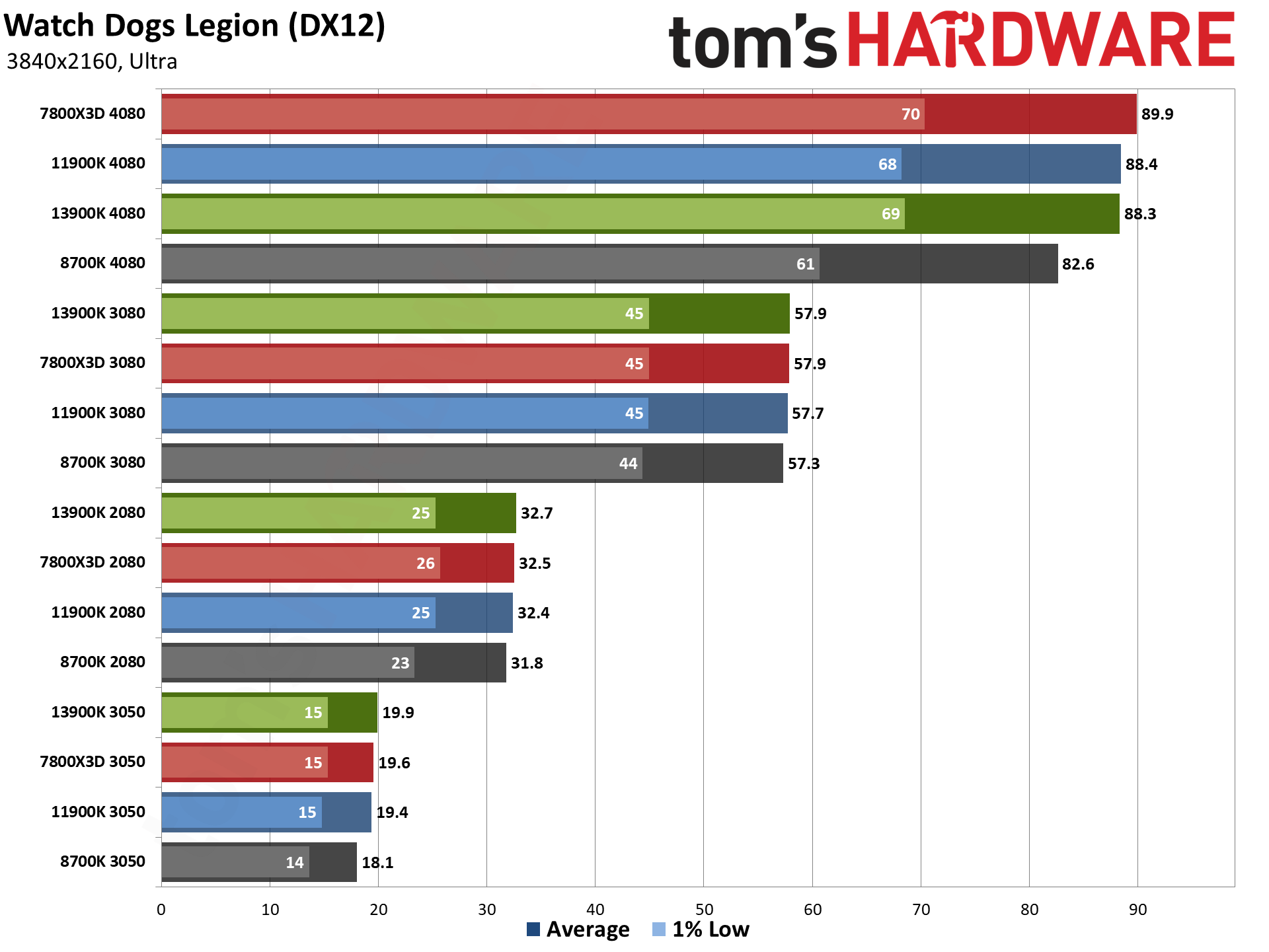

Diablo IV with DXR ends up as the most CPU-limited game in our suite, as indicated by the clumping of red/green/blue/grey in the chart. Even with an RTX 3050, you could almost double your framerate by upgrading from an 8700K to a 13900K or 7800X3D. Other games that are more CPU limited include Far Cry 6, Flight Simulator, Horizon Zero Dawn, Spider-Man: Miles Morales, and Watch Dogs Legion.

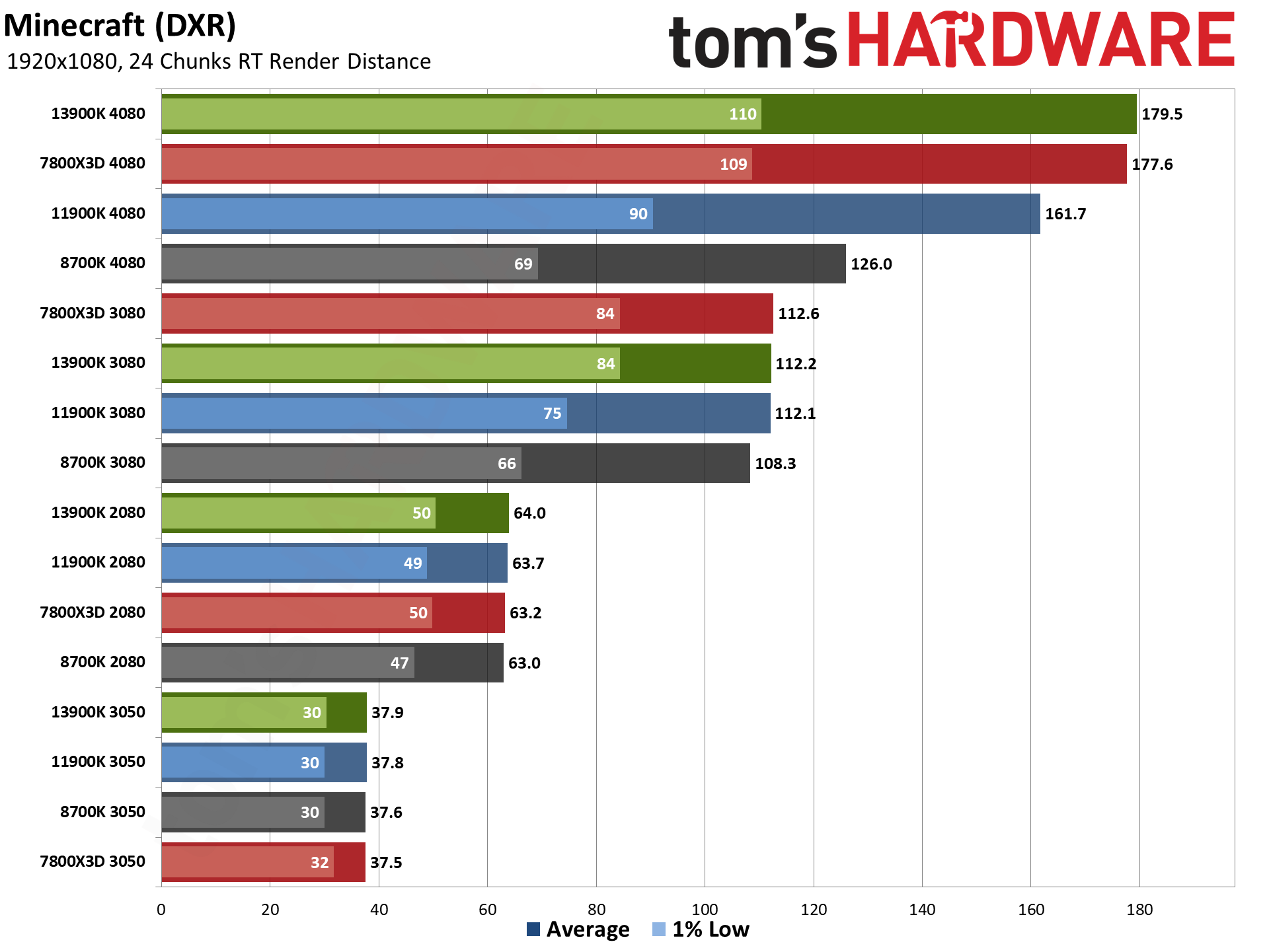

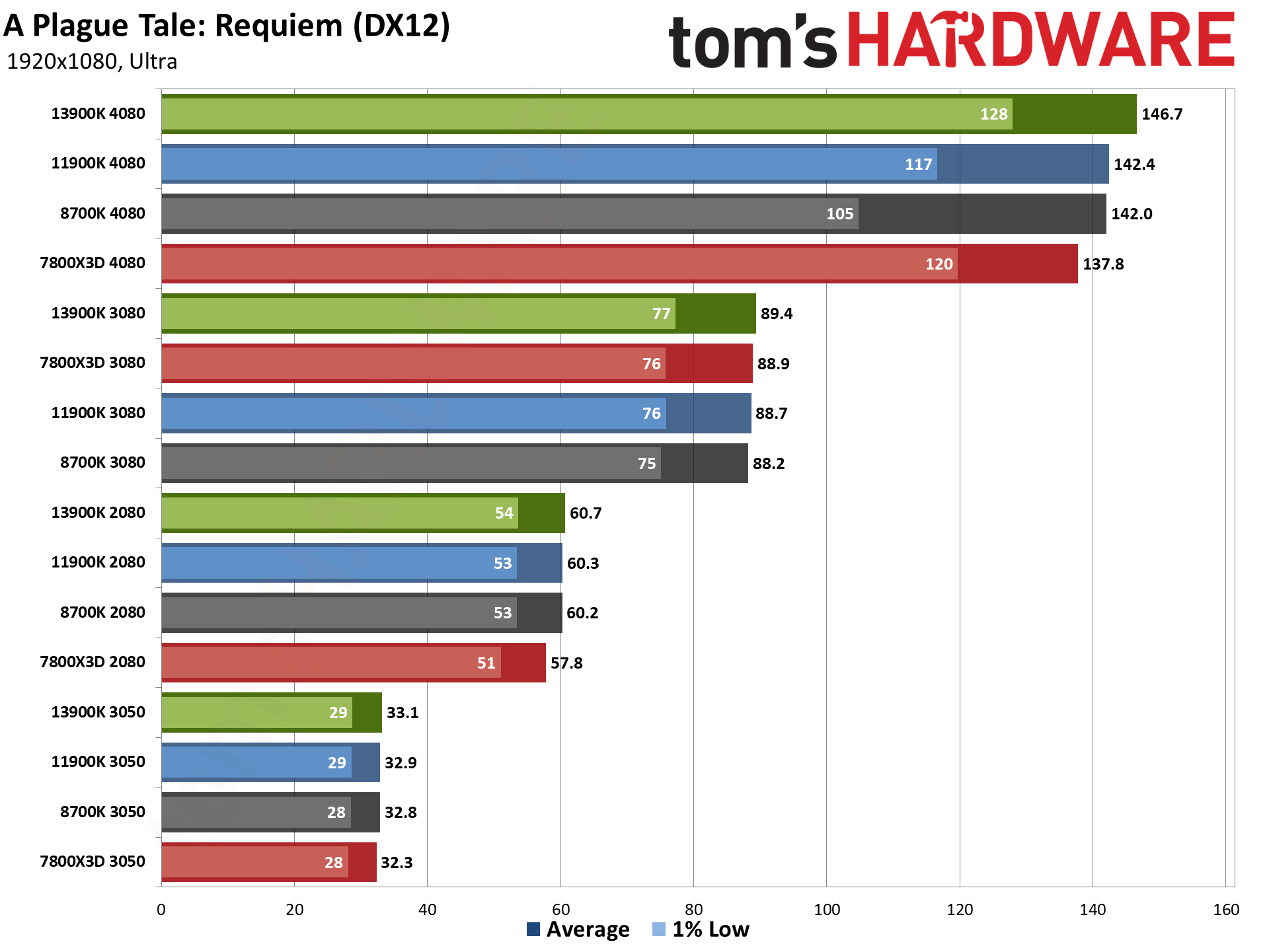

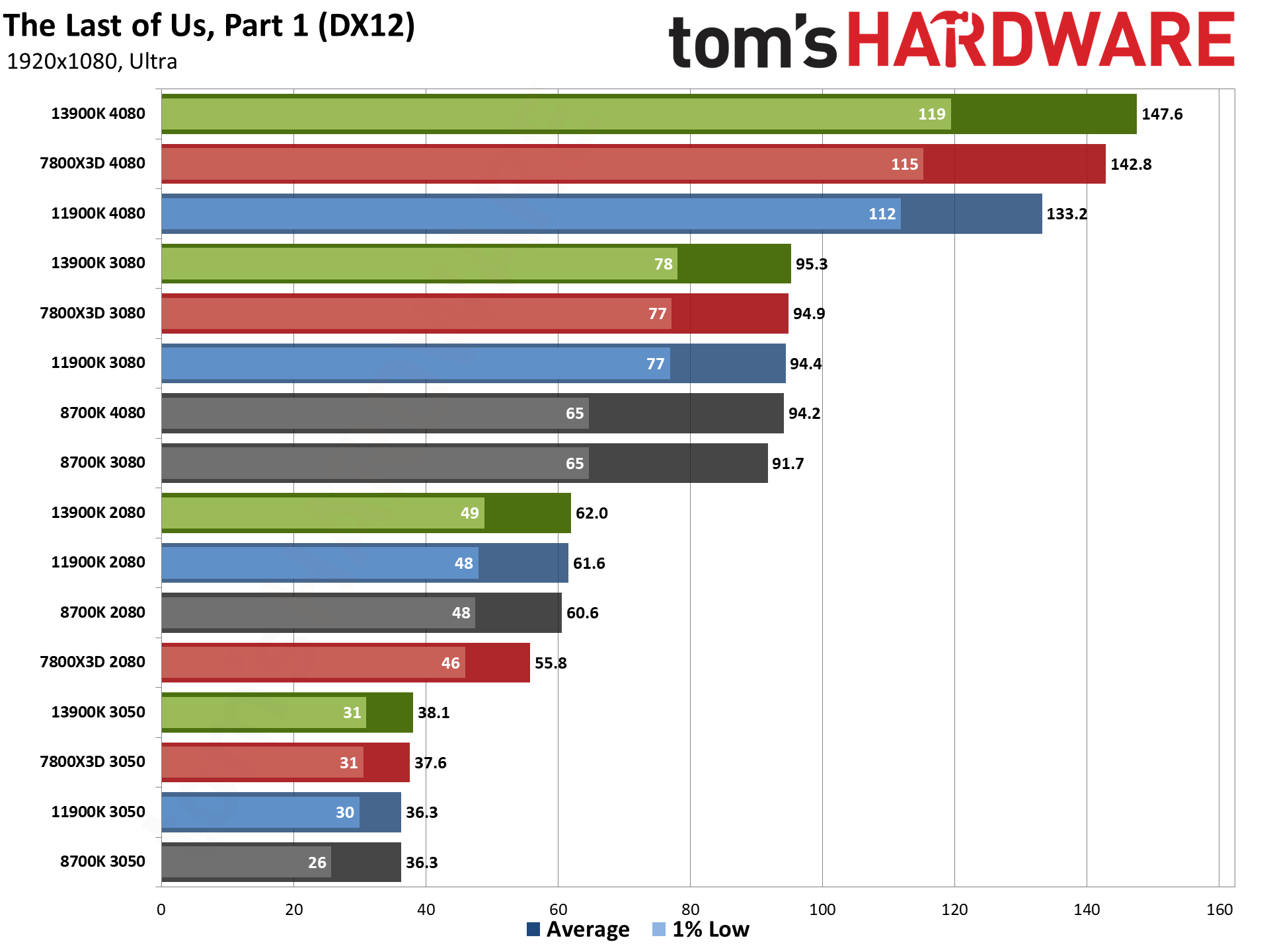

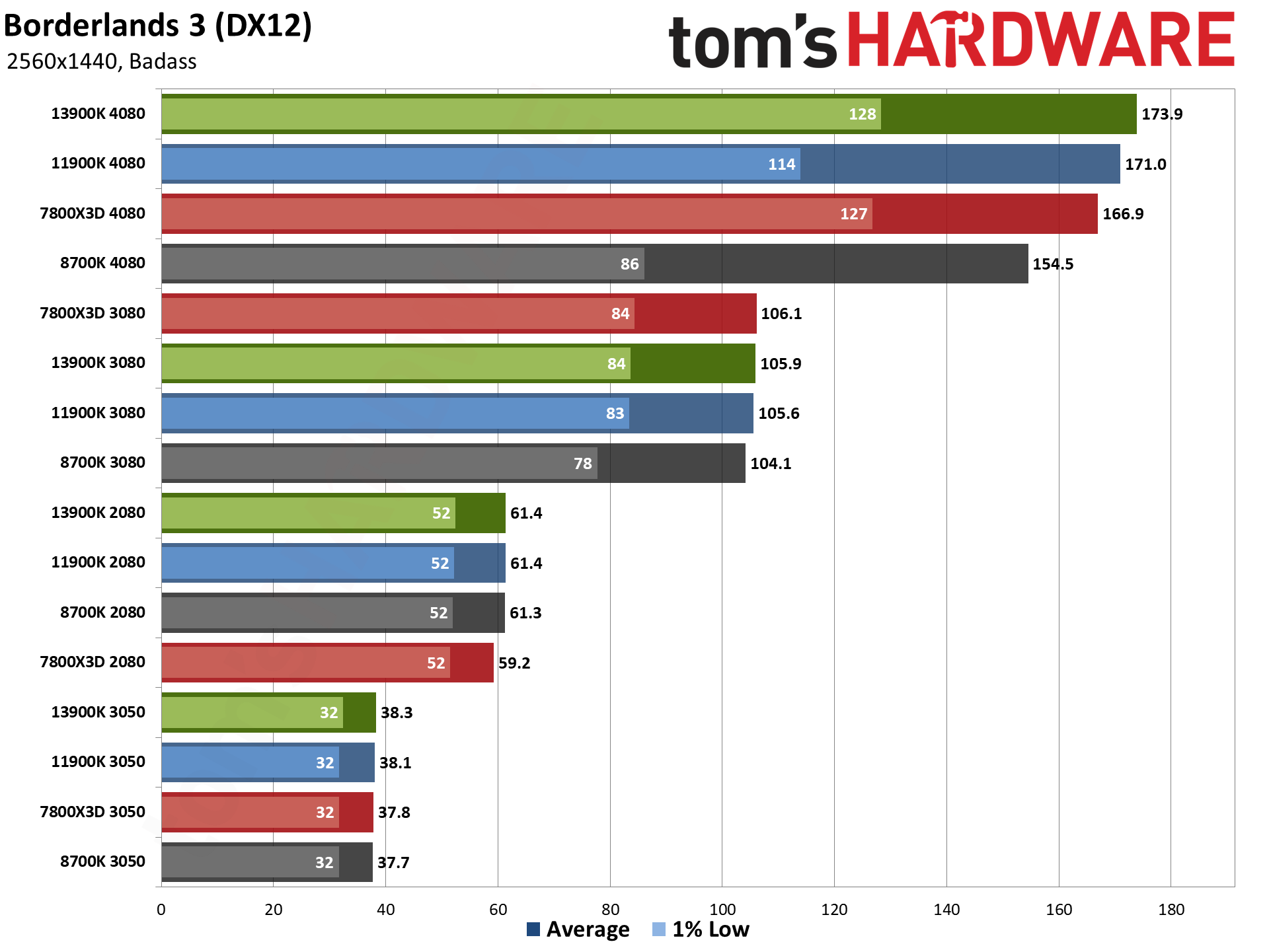

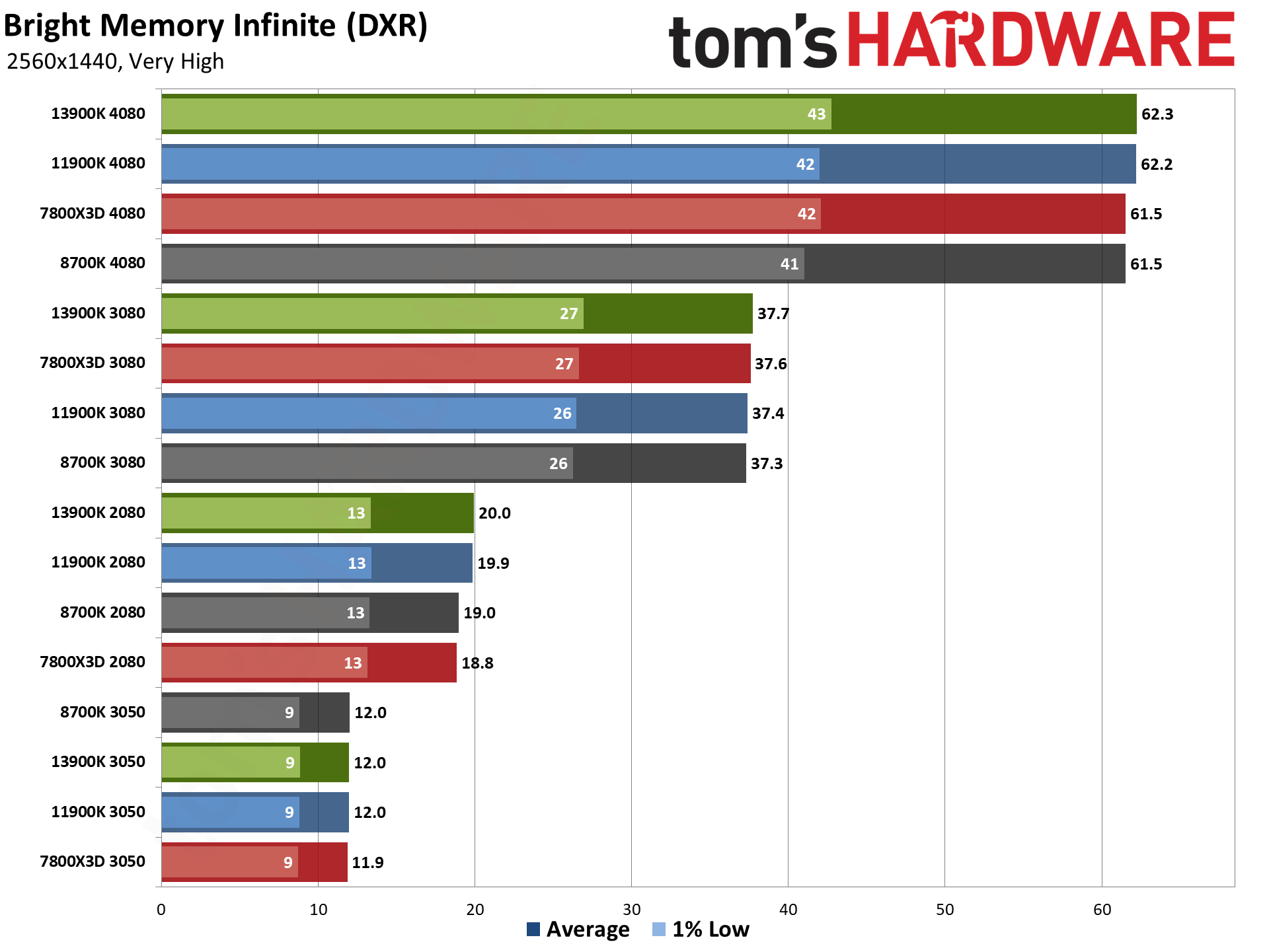

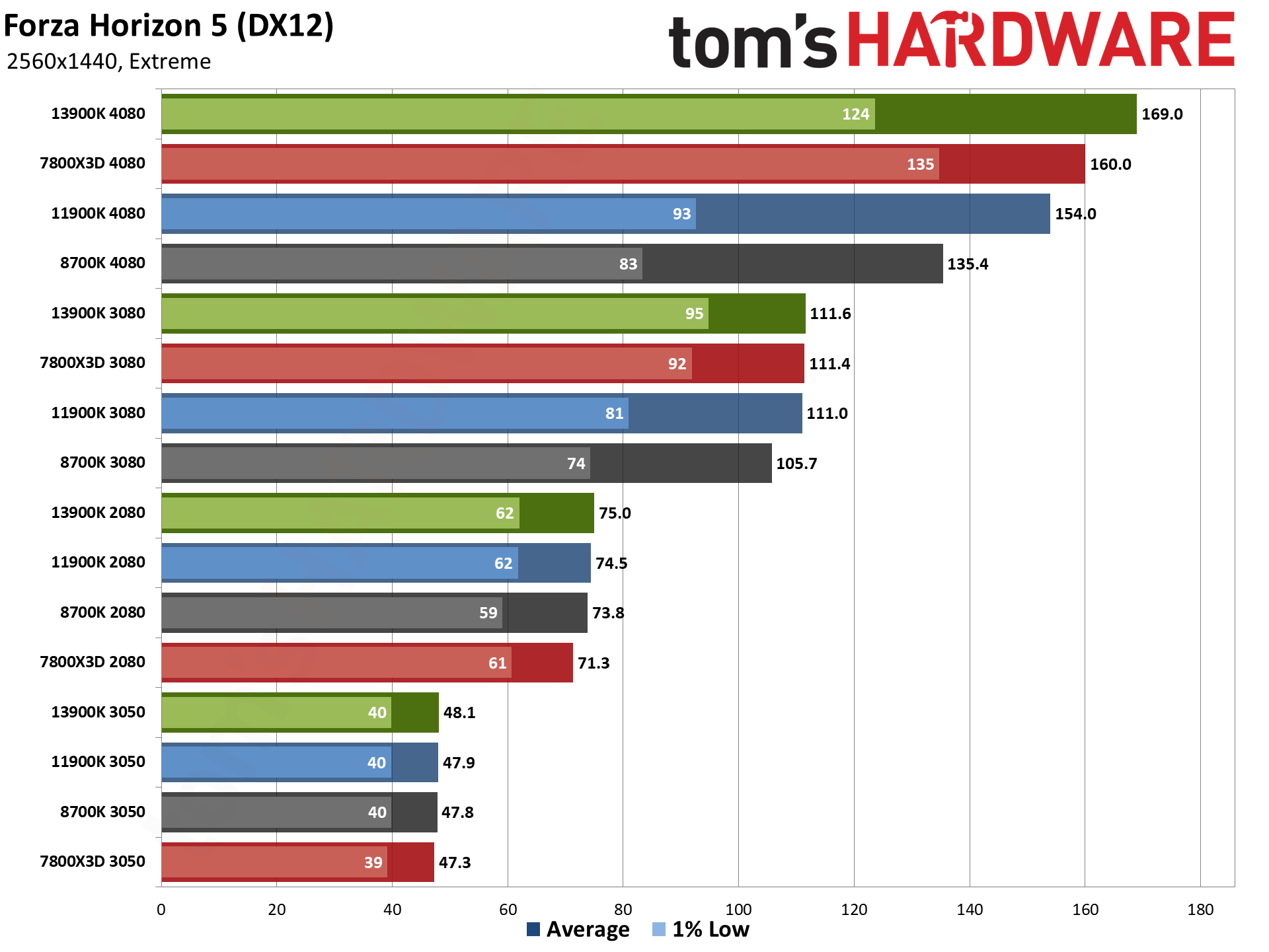

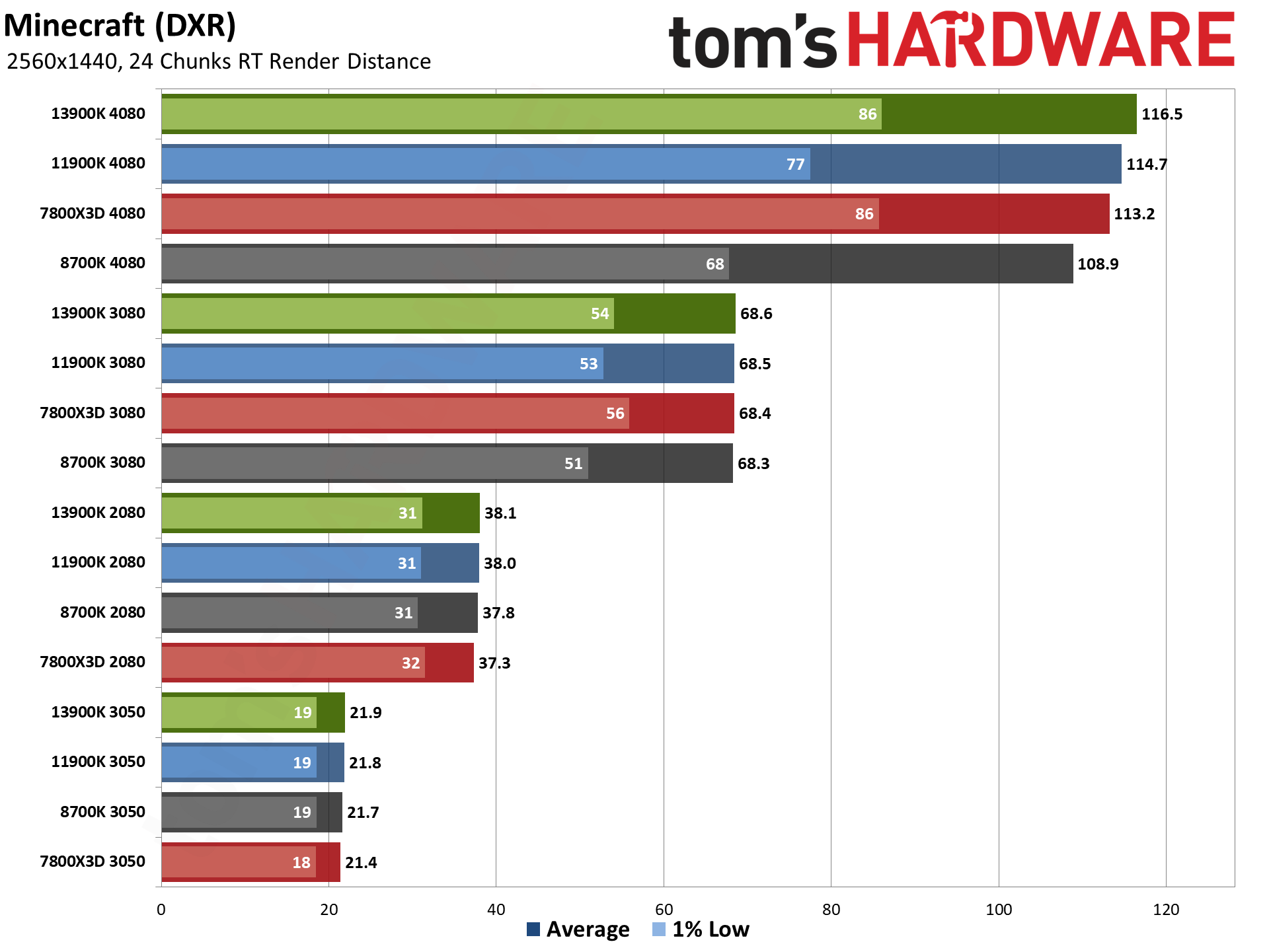

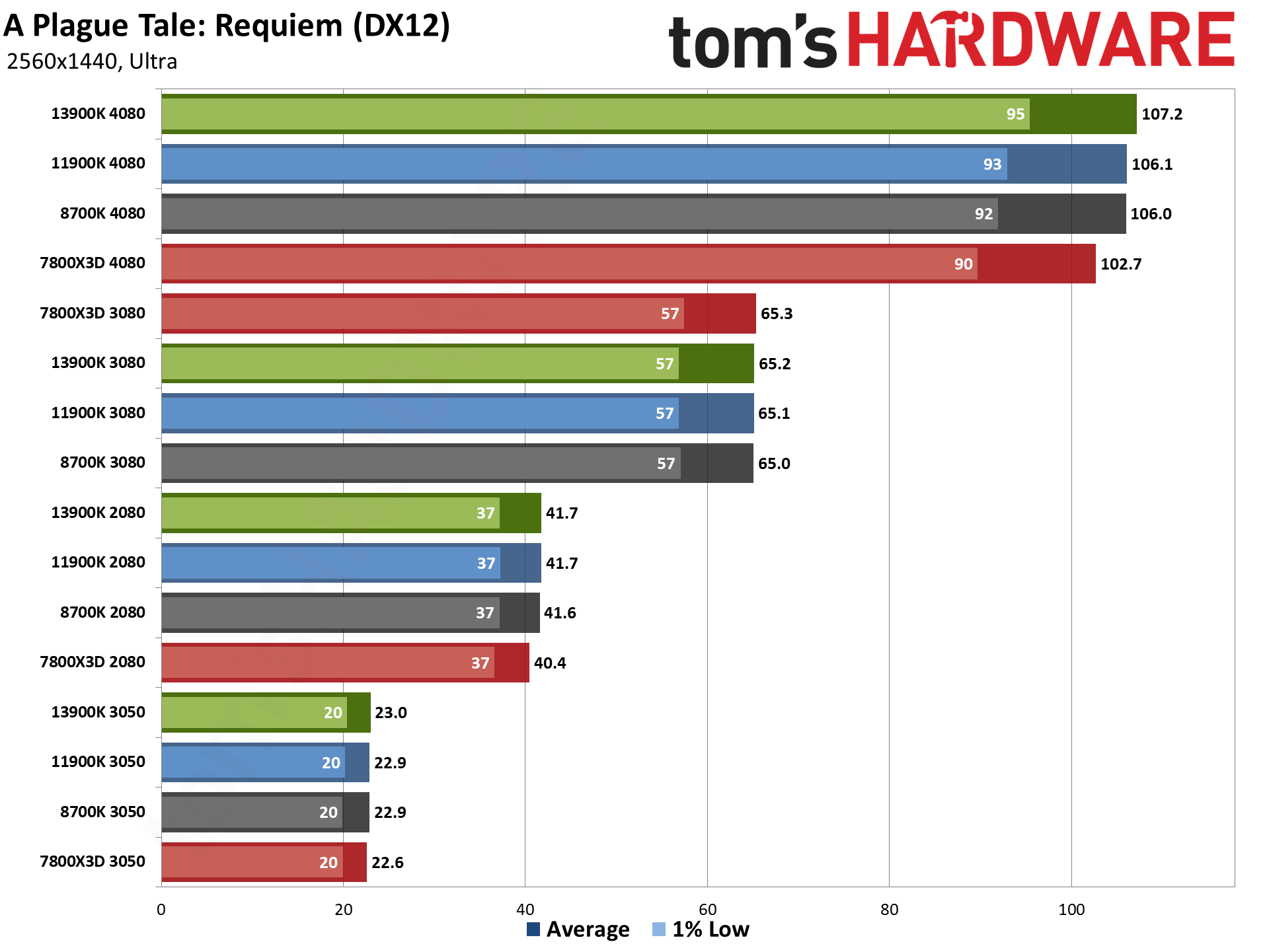

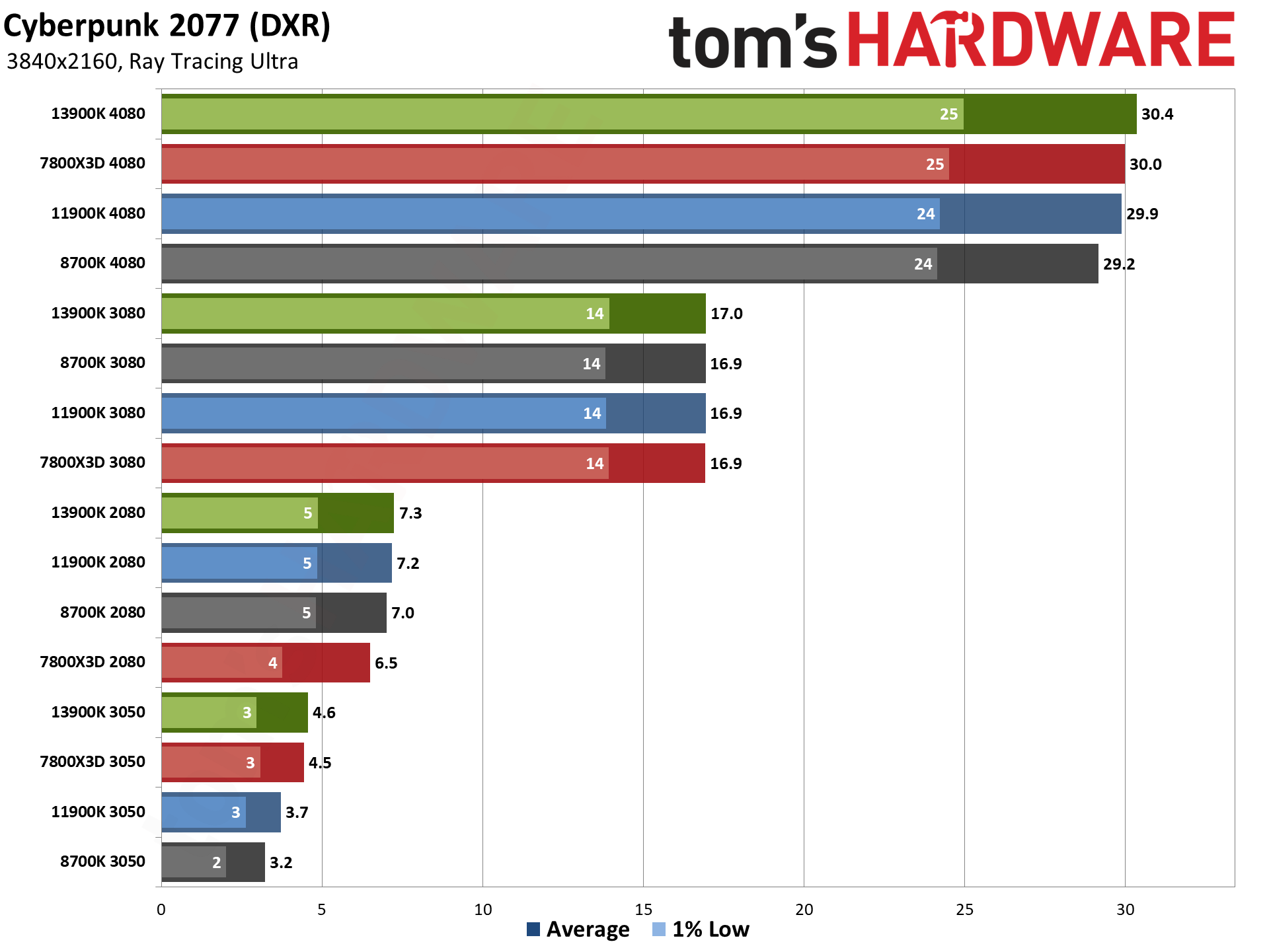

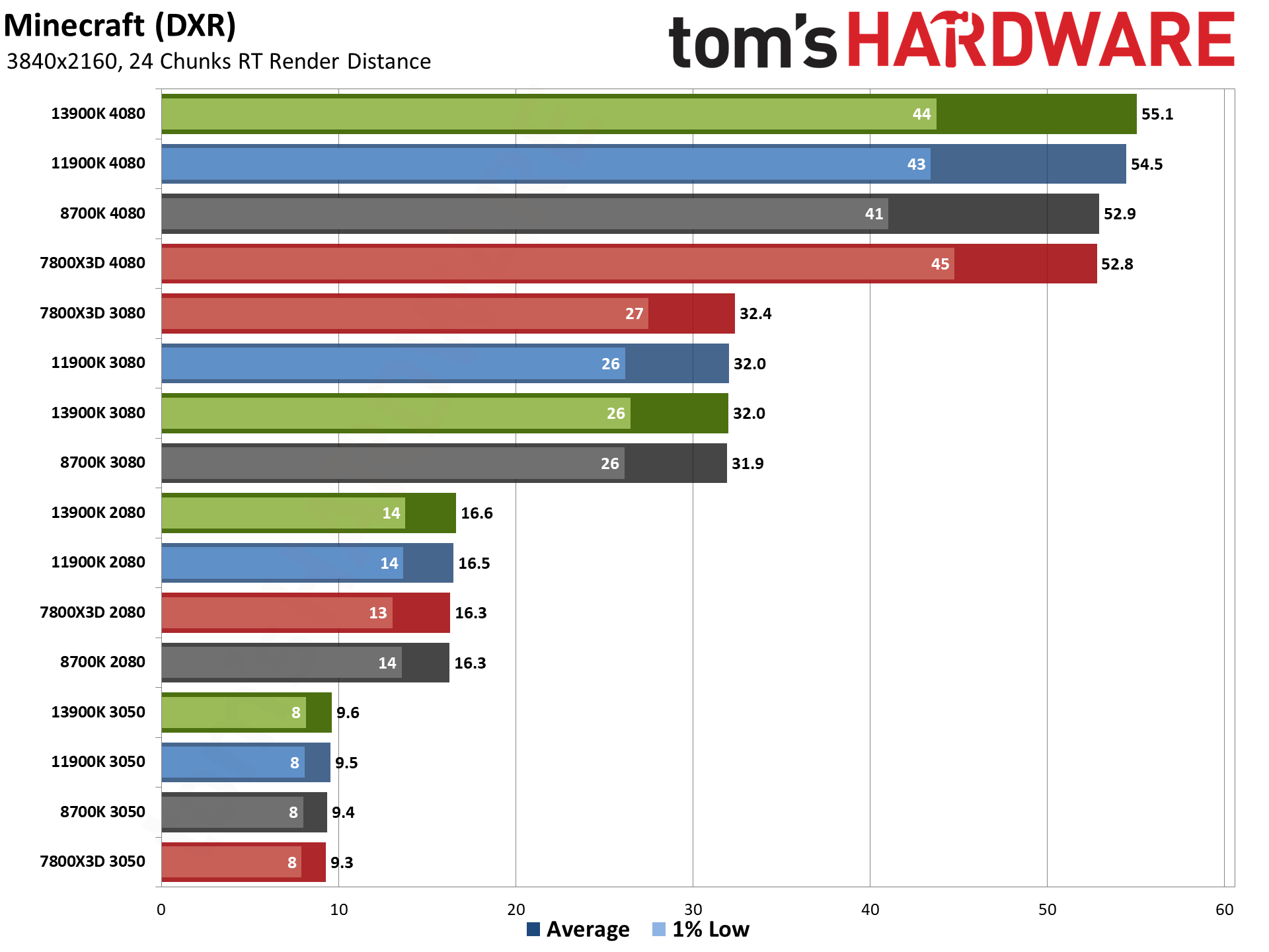

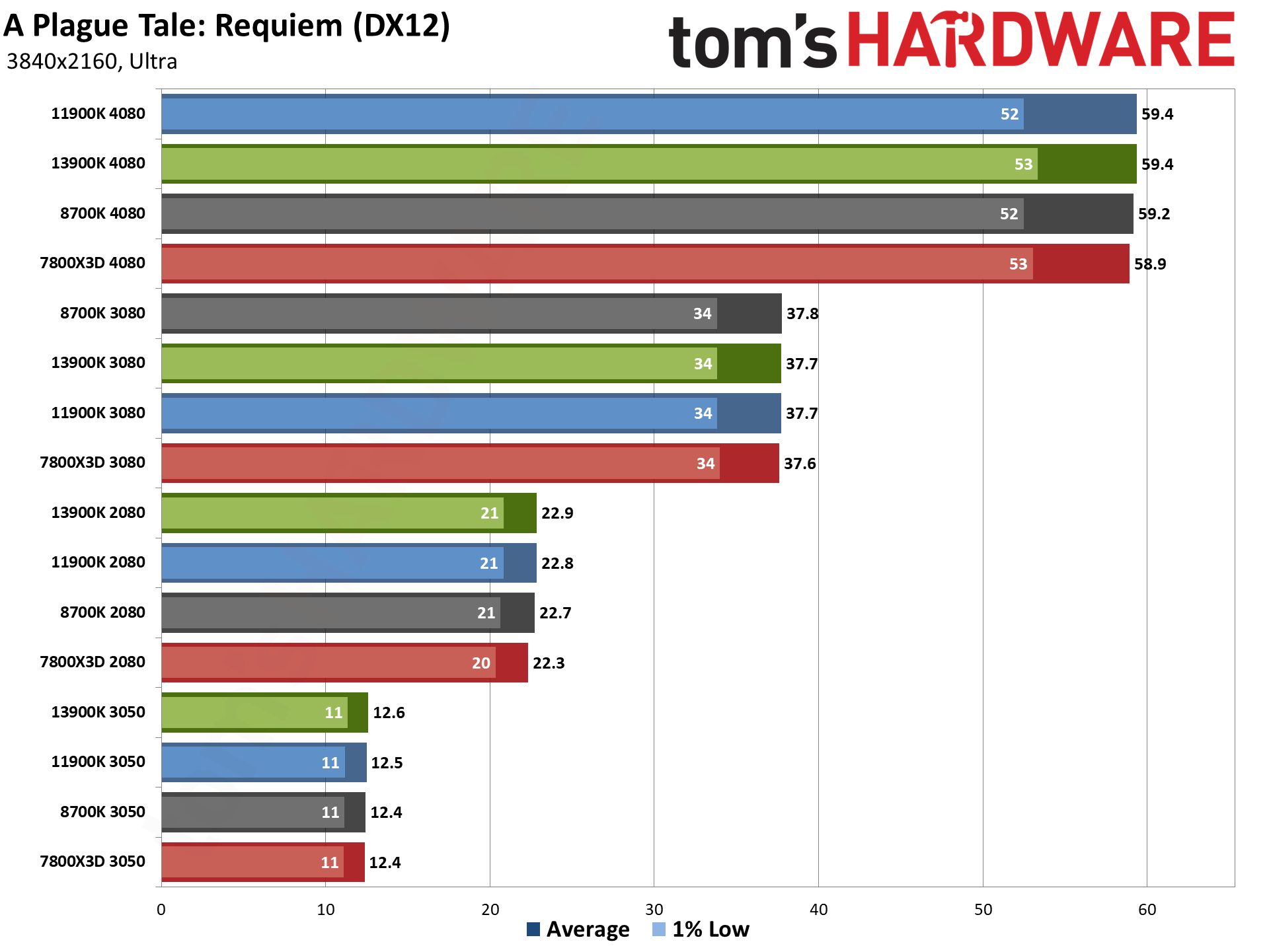

On the other side of the fence, even at 1080p, there are still several games that are almost entirely GPU limited, where you'll see four distinct tiers of performance with all four CPU colors at each level: A Plague Tale: Requiem, Bright Memory Infinite, Control (mostly), Cyberpunk 2077, Minecraft, and The Last of Us (mostly). As we increase the resolution and quality settings, more of the games will become primarily GPU limited and will start to look like the Plague Tale chart.

GPU vs CPU upgrades: 1080p Ultra

If you only look at the 1080p medium results, upgrading your CPU as well as your graphics card might seem like the only sensible way to go, but even the shift to 1080p ultra starts to level the playing field among the various CPUs. Overall, the 4080 with either the 7800X3D or 13900K CPU performs best, but now the 11900K only falls off the pace by 16% — still noticeable but not quite as bad as the 20–24 percent deficit we measured at 1080p medium.

The 8700K on the other hand still ends up as a significant bottleneck. Again, any of the three newer CPUs paired with an RTX 3080 would provide a better 1080p ultra experience than the 8700K with a 4080. That's the overall view, of course — there are definitely games where the 8700K is still fast enough to keep the 4080 busy.

If you're a gamer using a 1080p display, however, you're probably not also looking at a $1000 GPU as a reasonable upgrade option. Or maybe you built your current gaming PC back when the 8700K and RTX 2080 were basically as good as it got, six years ago, and you want to upgrade.

Either way, we wouldn't feel much need to move beyond the RTX 3080 for 1080p gaming, even at ultra settings — and the 3080 offers very similar performance to the newer and less expensive RTX 4070, with the RTX 4070 Super being a decent $50 upsell, if you check our GPU benchmarks hierarchy.

For the older and slower GPUs, graphics card bottlenecks become the limiting factor. There's only a 3% spread among the RTX 3050 results, and a moderately larger 7% spread on the RTX 2080 results. But as soon as we move up to the faster GPUs, the gap between the CPUs starts to grow. The 13900K and 7800X3D are about 20% faster than the 8700K with the RTX 3080, and that grows to nearly a 50% lead with the RTX 4080.

The GPU continues to be the biggest limiting factor for the more demanding games, including most of the DXR-enabled titles. Most of those show four distinct tiers of performance based on the GPU, with only the 4080 needing more than the slowest CPU from our testing. Diablo IV remains a notable exception, with a massive CPU bottleneck when DXR gets enabled. (The easy and sensible solution is to not play that game with ray tracing turned on, but that's a different topic.)

Far Cry 6 and Flight Simulator are also pretty CPU limited, at least with the 3080 and 4080 cards. If you're trying to find a good GPU upgrade for an older 8700K, again we wouldn't go beyond a 3080 for 1080p gaming — eight of the games show less than a 10% improvement with an RTX 4080 versus the RTX 3080 when using the 8700K, with a 17% increase overall. Compare that to the 7800X3D where only a single game (Flight Simulator) shows less than a 10% improvement from moving to the 4080, and the overall increase is 45%.
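The 1080p ultra percentages quoted above can be cross-checked against one another with a little arithmetic. The sketch below uses only the rounded figures from the text, so the result is approximate, and the helper name is a hypothetical one introduced for illustration.

```python
def loss_from_lead(lead_pct):
    """If the faster setup leads by lead_pct, how much does the slower one give up?"""
    return (1.0 - 1.0 / (1.0 + lead_pct / 100.0)) * 100.0


# Rounded 1080p ultra figures quoted in the text.
cpu_lead_on_3080 = 20.0     # newer CPUs vs. 8700K, both with the RTX 3080
gpu_gain_on_7800x3d = 45.0  # RTX 4080 vs. RTX 3080 on the 7800X3D
gpu_gain_on_8700k = 17.0    # RTX 4080 vs. RTX 3080 on the 8700K

# Chain the ratios: (7800X3D+4080) / (8700K+4080).
implied_cpu_lead_on_4080 = (
    (1 + cpu_lead_on_3080 / 100) * (1 + gpu_gain_on_7800x3d / 100)
    / (1 + gpu_gain_on_8700k / 100)
    - 1
) * 100

print(round(implied_cpu_lead_on_4080, 1), round(loss_from_lead(50.0), 1))
```

The chained ratios come out at roughly a 49% lead, in line with the "nearly 50%" figure for the RTX 4080. Note also that a 50% lead for the faster CPU is the same thing as the slower CPU giving up about a third of the card's potential, since percentages look different depending on which system is the baseline.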

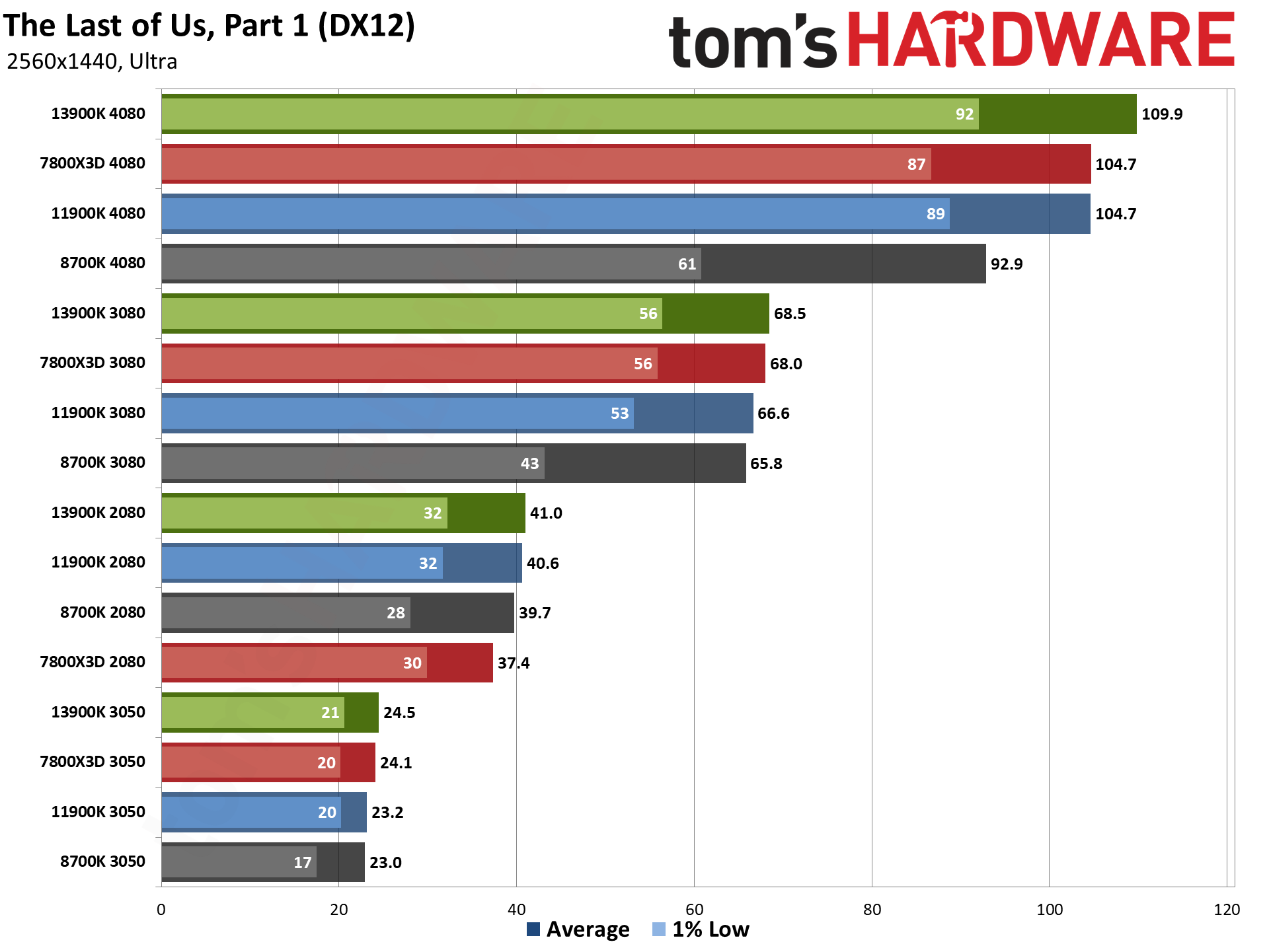

GPU vs CPU upgrades: 1440p Ultra

The importance of the CPU becomes far less of a factor once we step up to 1440p ultra. Our two slowest GPUs show less than a 5% difference between the 8700K and 13900K — and again, it's a bit curious to see the Ryzen 7 7800X3D somewhat underperforming when paired with the RTX 2080 and RTX 3050, as even the 11900K delivered slightly higher results overall.

Even the RTX 3080 doesn't need a ton of CPU performance to keep it happy, with an 11% spread between the fastest and slowest CPUs. But once we get to the RTX 4080, the 7800X3D returns to the top spot, just fractionally ahead of the 13900K, with both outperforming the 11900K by 12%. There's an even bigger gap to the 8700K, with nearly a 30% advantage by upgrading to a newer CPU.

So, if you're rocking a top-tier GPU like the RTX 4080 or above, or the RX 7900 XTX, but you're running a five- or six-year-old CPU, you're still giving up a lot of performance at 1440p ultra and it's time for an upgrade. Or at least, it will be time to upgrade once AMD's Zen 5 and Ryzen 9000 CPUs arrive, unless you want to wait a bit longer for Intel's Arrow Lake CPUs.

With the slower GPUs, the overall results don't show much of a difference, and that goes for the rasterization and DXR charts as well. There's a 2.5% spread on the rasterization results with the RTX 3050, a 3.8% spread with the 2080, an 11% spread on the 3080, and a 36% spread with the 4080. With the DXR chart, it's a 3% spread on the 3050, 8.3% on the 2080, 10% with the 3080, and 22% with the 4080. But there are still some noteworthy items in the individual charts.

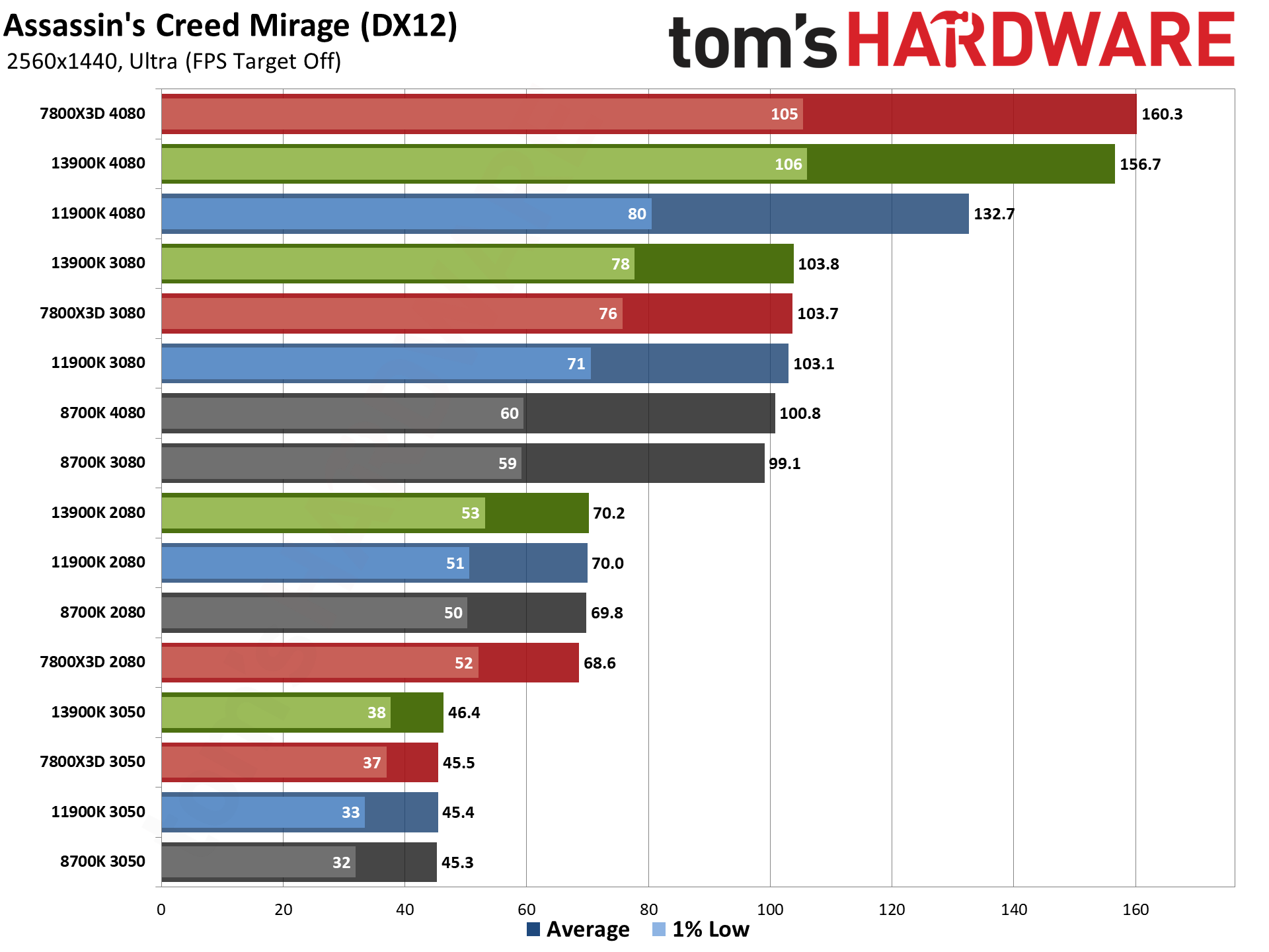

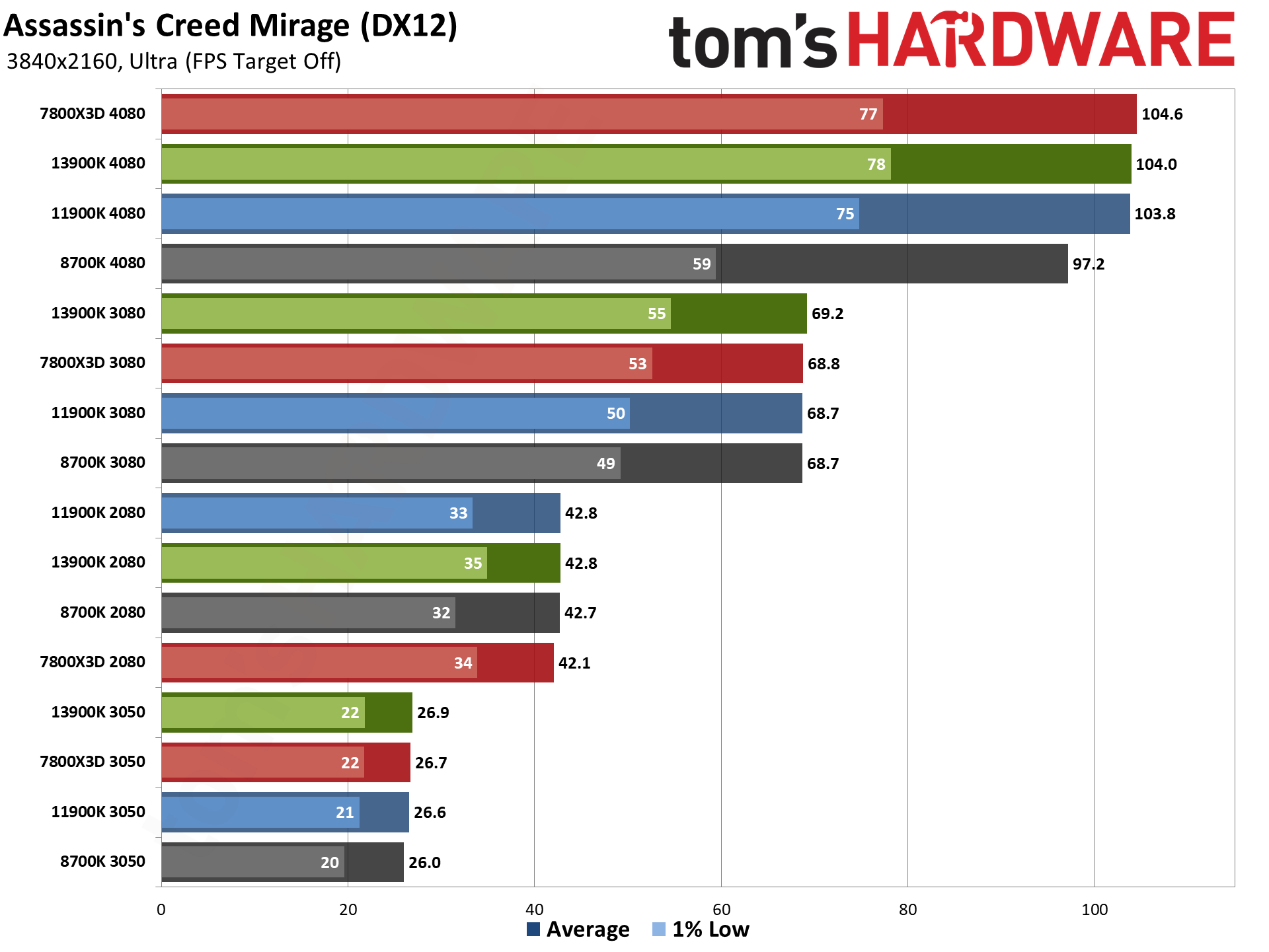

Assassin's Creed Mirage shows almost no difference between the 3080 and 4080 on the 8700K, even at 1440p. The same goes for the 8700K and those GPUs in Far Cry 6, Flight Simulator, Spider-Man: Miles Morales, and Watch Dogs Legion. But the real killer on the CPU continues to be Diablo IV with DXR, where the 4080, 3080, and 2080 are still basically tied at just 55 fps. Note that the 1% lows do show a much larger spread, however, with the 2080 dipping to just 24 fps while the 4080 maintains a much steadier 43 fps.

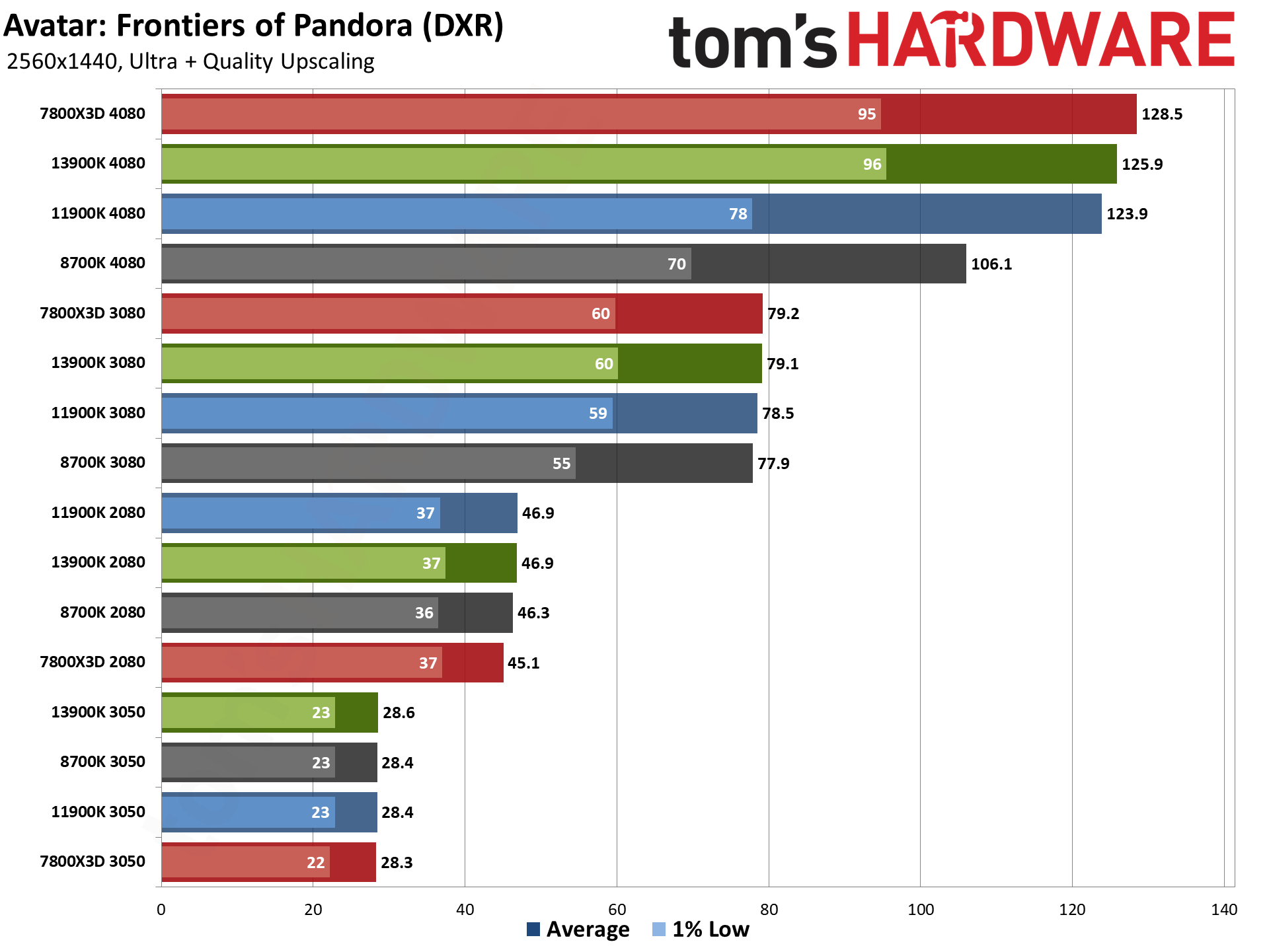

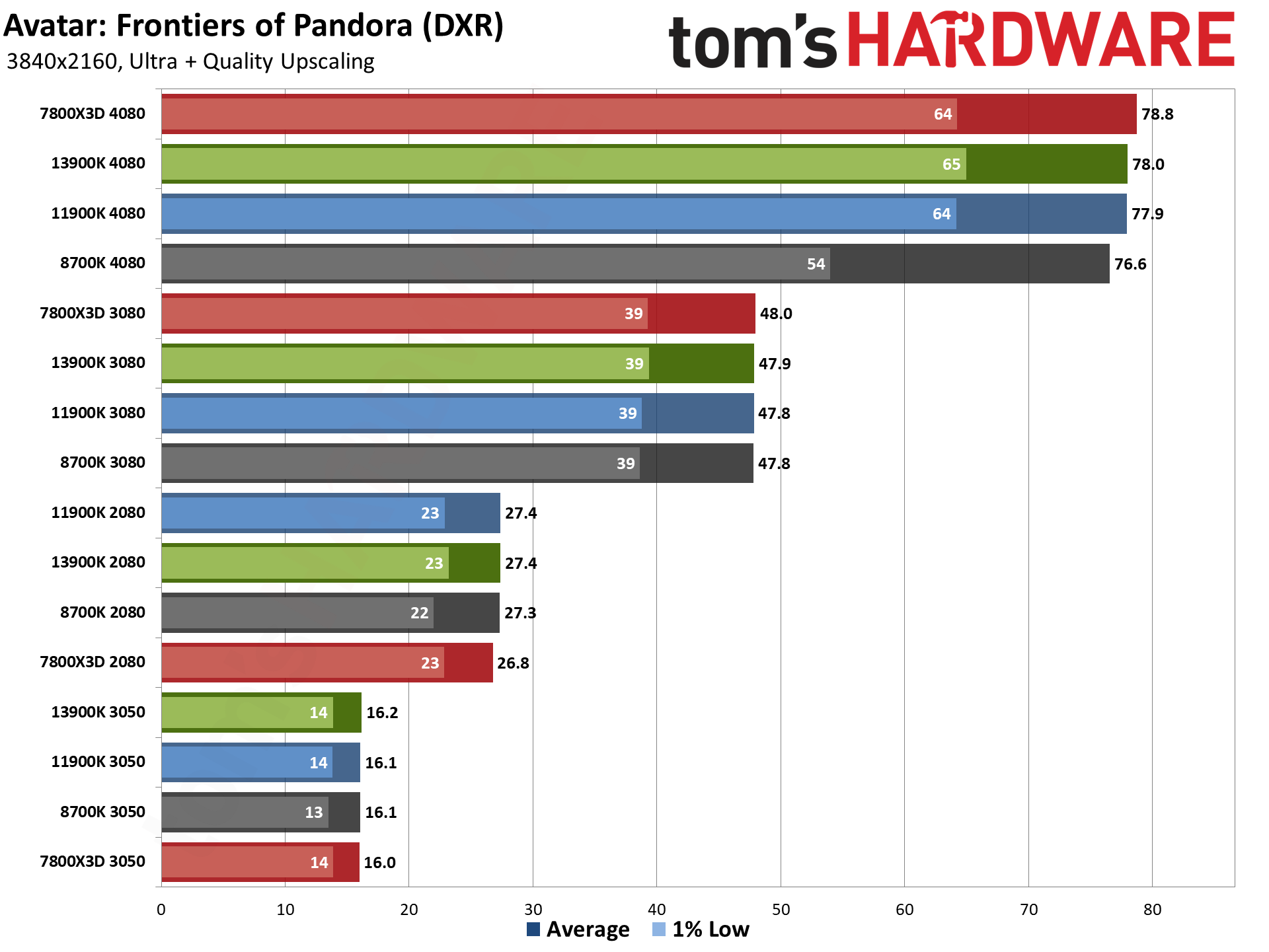

Something else to note here is that upscaling algorithms like DLSS, FSR 2/3, and XeSS can all shift the bottleneck more toward the CPU as well — which perhaps partly explains the Diablo IV results, though Avatar doesn't hit CPU limits nearly as hard. If you turn on upscaling in Flight Simulator or some of the other games that are already showing CPU bottlenecks, though, you'd see more instances of the 8700K holding back the RTX 4080.

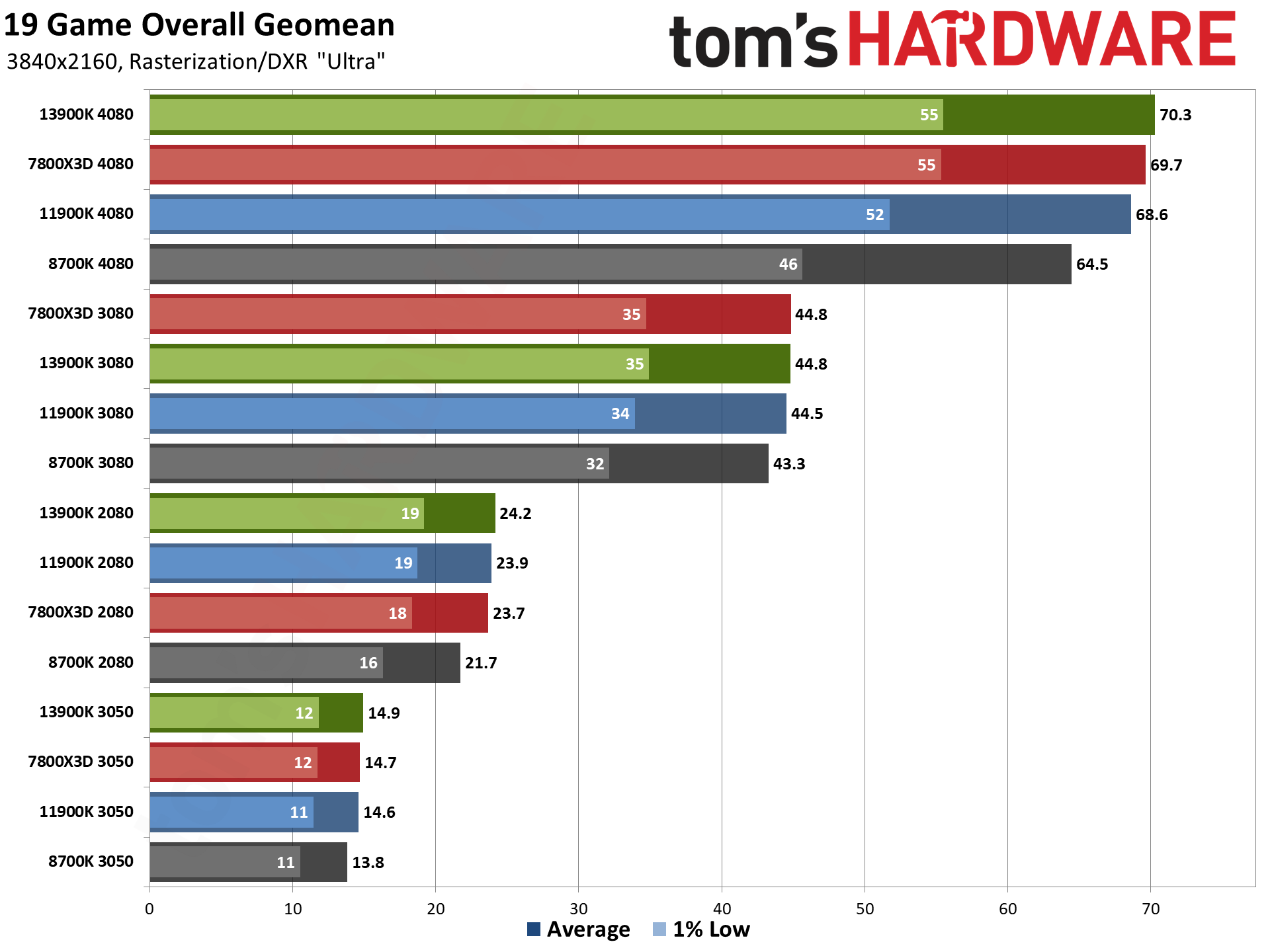

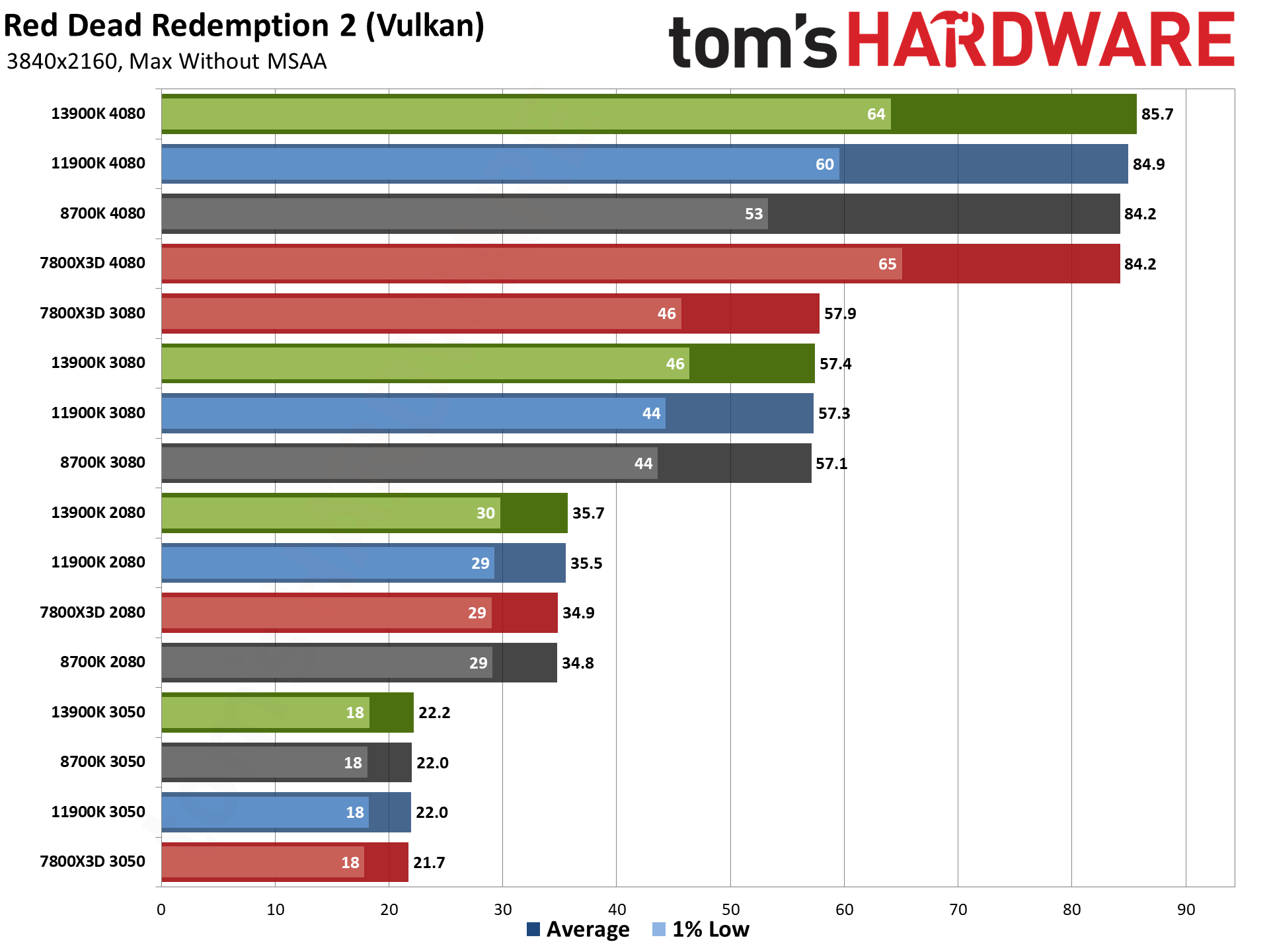

GPU vs CPU upgrades: 4K Ultra

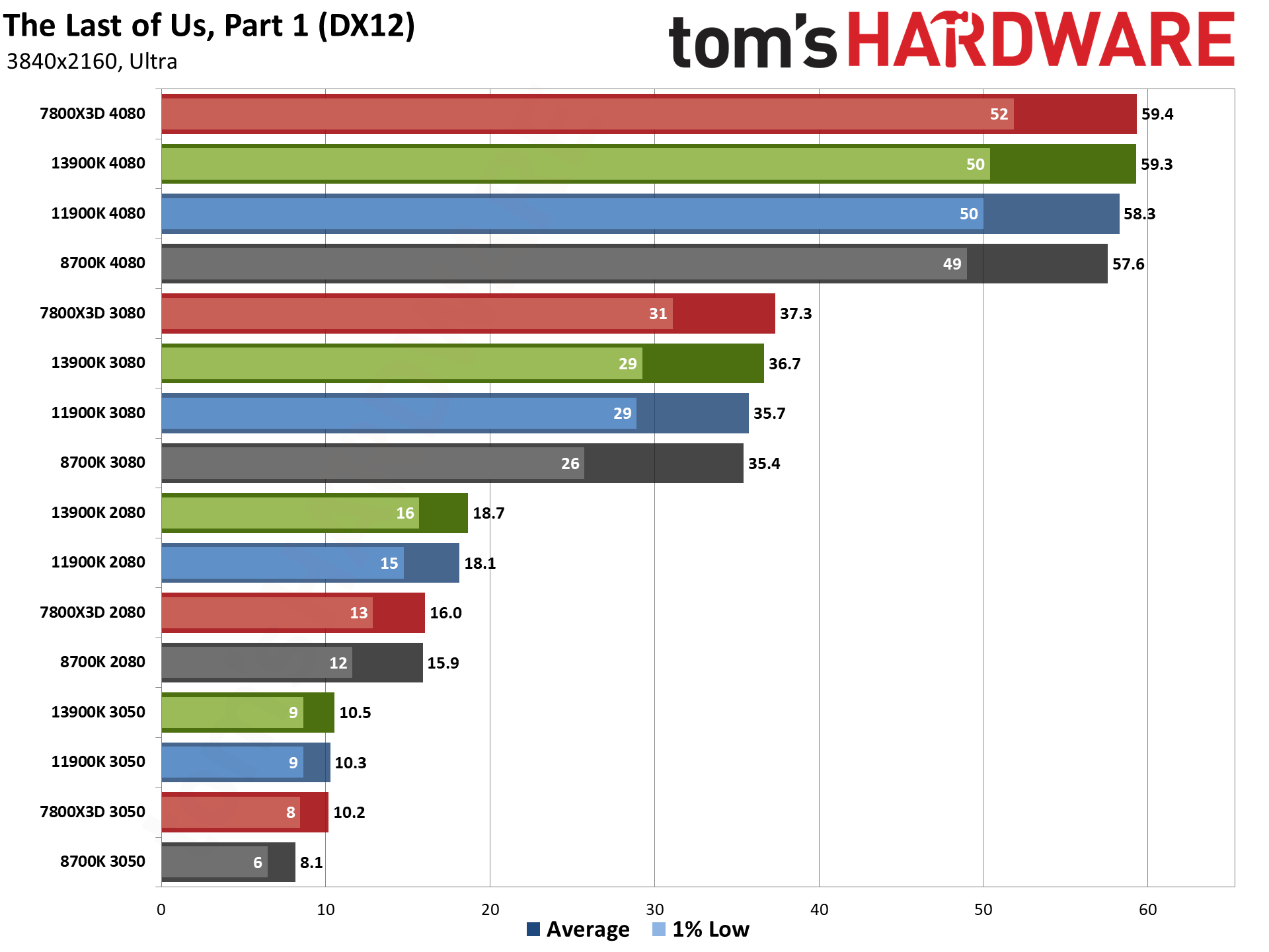

We don't normally do any CPU performance testing in games at 4K ultra, and this is why. Yes, there's still some separation between the fastest and slowest processors, but going from the 8700K to the 13900K or 7800X3D only nets an 8–9 percent improvement in performance. Obviously, the newer CPUs are much faster than that, but at 4K the GPU becomes the major bottleneck in just about every game we've tested.

So, if you're a 4K gamer — or you're planning to become one with a new 4K monitor upgrade — you'll generally want to get the fastest, most expensive graphics card you can justify before worrying about your CPU. The RTX 3080, which again also acts as a proxy for the RTX 4070, delivers nearly the same gaming experience across all four of our test platforms, with the 8700K falling just 3% behind the other CPUs. Curiously, we see a larger 9% deficit with the RTX 2080 and a 6% deficit with the RTX 3050, but that's due to a few outliers in our test suite where 8GB of VRAM becomes a serious issue.

You can easily spot the outliers in the individual game charts. Far Cry 6, as an example, almost totally fails to run on the combination of 8700K and 2080. We tried repeating the test dozens of times, rebooting the PC, cleaning drivers, etc. It just doesn't want to ever give a decent result, but that's a known problem with the game, where run-to-run variance at 4K ultra becomes massive on most 8GB cards.

The other games all perform as expected, with the PCIe 3.0 interface of the old platform having a slight effect in Cyberpunk 2077 and The Last of Us. Diablo IV also continues to be problematic with DXR enabled on the 8700K — which is easily fixed by turning off ray tracing and losing the very (very!) slight improvements in reflections and shadows, but we were mostly interested in pushing settings as high as possible to see how that impacted the various platforms.

Seeing almost no difference in framerates at 4K ultra doesn't mean the CPU doesn't matter at all, of course. There are lots of other tasks that will benefit from additional CPU performance, including just loading games and pre-compiling shaders. The Last of Us for example is notorious for taking a while to compile the shaders the first time you launch it on a new GPU. On the 7800X3D and 13900K processors, it takes around 10 minutes. On the 8700K, it probably took at least twice that long — we stopped paying attention and just went off to do something else for 30 minutes.

A slower CPU can also result in more stutters and framerate inconsistencies. We run each test multiple times and use the best result (after discarding the first run), which represents something of a best-case scenario. Diablo IV, to cite that example again, has a ton of stuttering as you enter new areas on the 8700K, to the point that it can at times become unplayable — you definitely don't want to try hardcore mode with a slower CPU and DXR enabled.

Even the 11900K has more stuttering, as you can see with the 63 fps result for 1% lows when using the RTX 4080, compared to 70 on the 13900K and 7800X3D. Given that's averaged across 19 games, you can tell just from the overall chart that there are a few games that will struggle with maintaining a consistent framerate. Far Cry 6, Flight Simulator, Horizon Zero Dawn, and Total War: Warhammer 3 are the main culprits, if you're wondering.


GPU vs CPU upgrades: Power, temperatures, and clocks

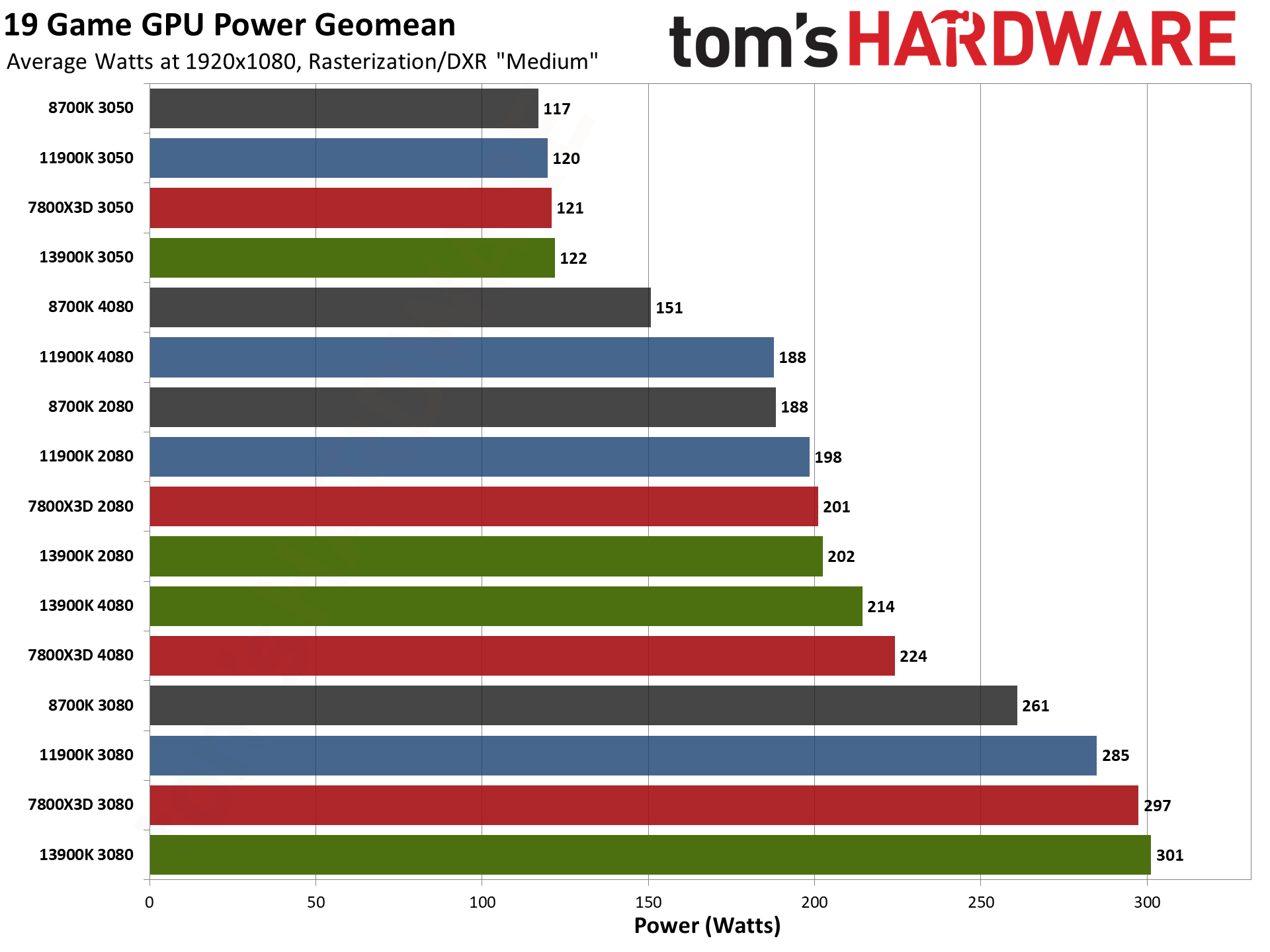

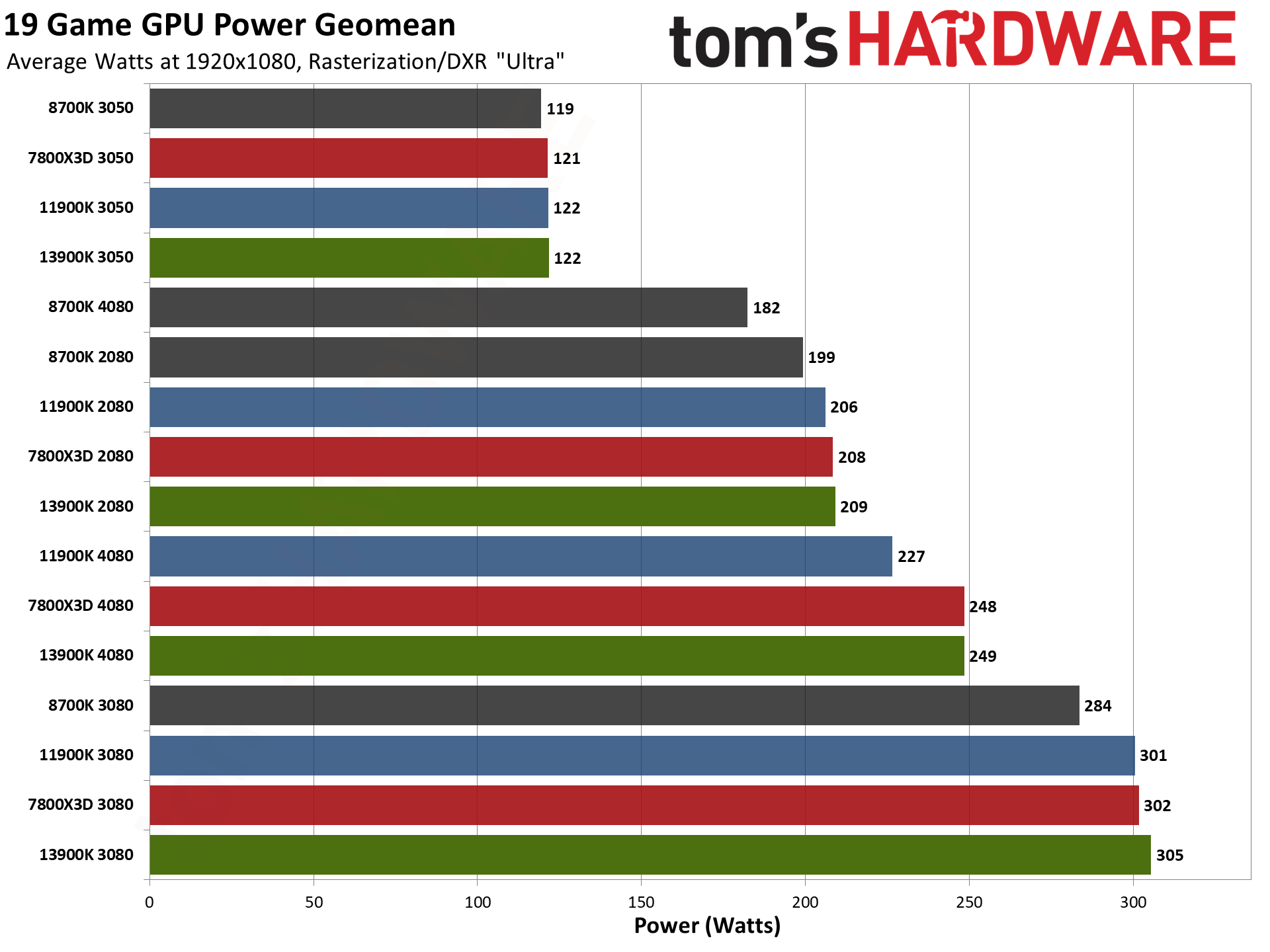

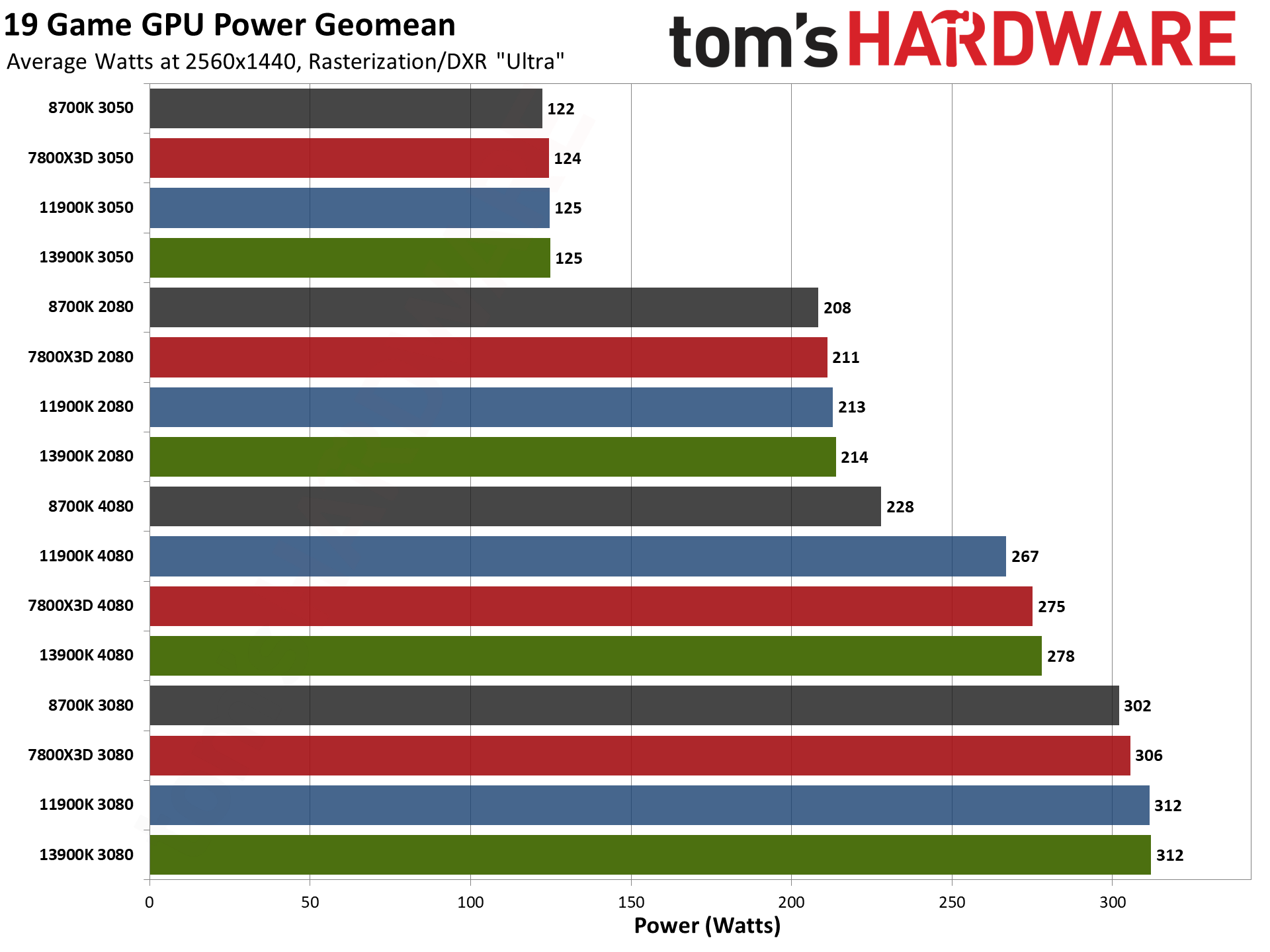

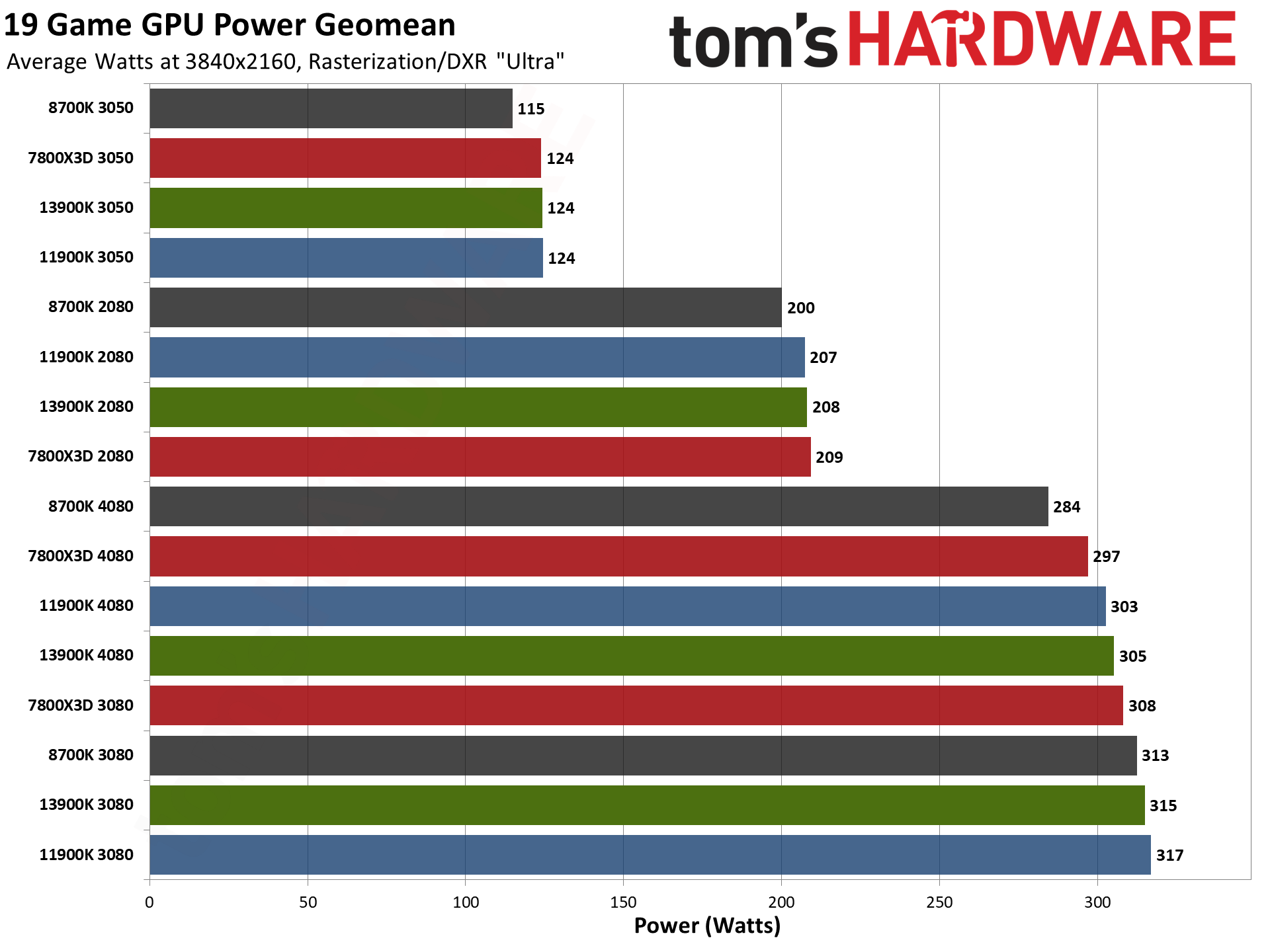

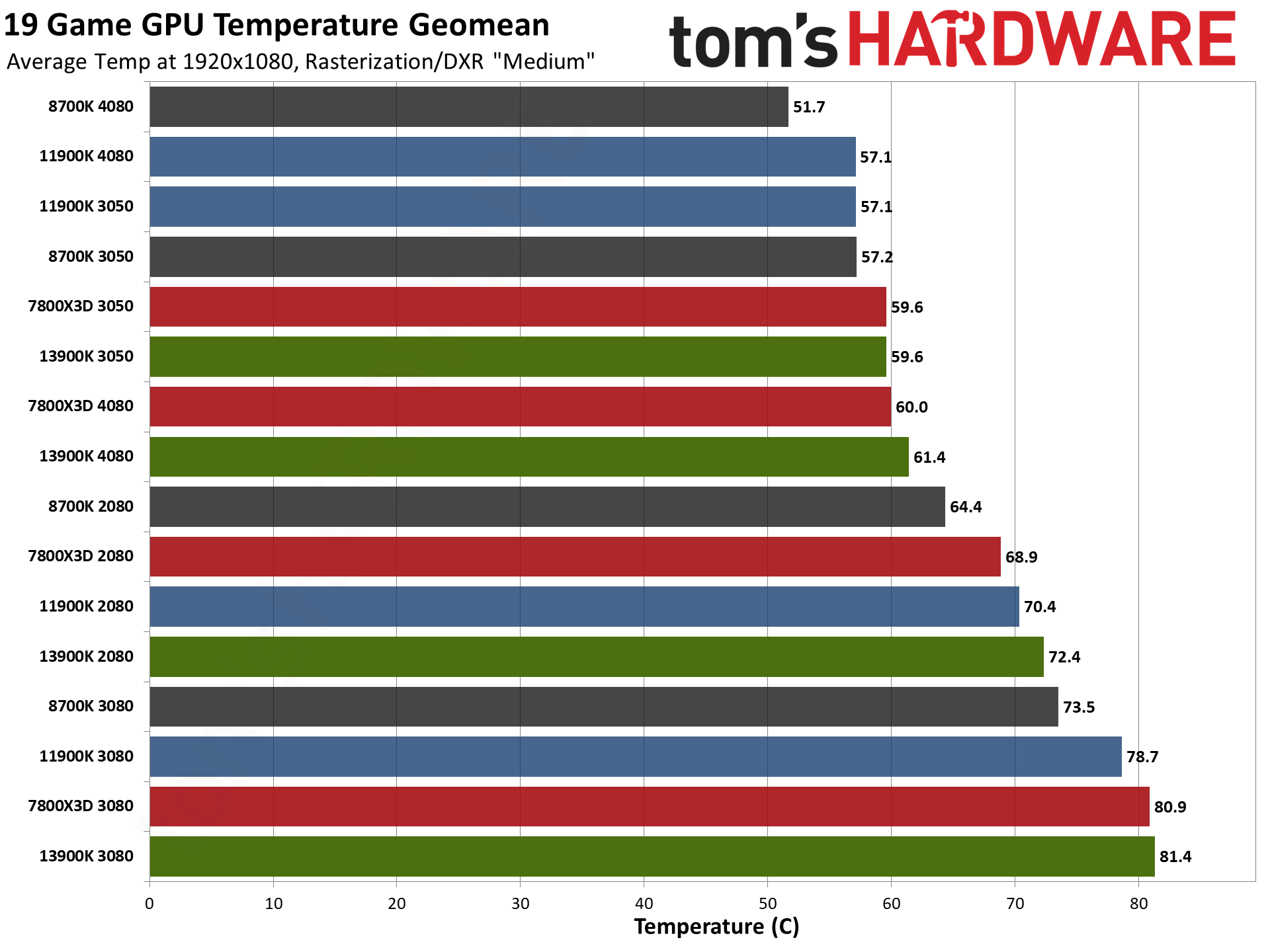

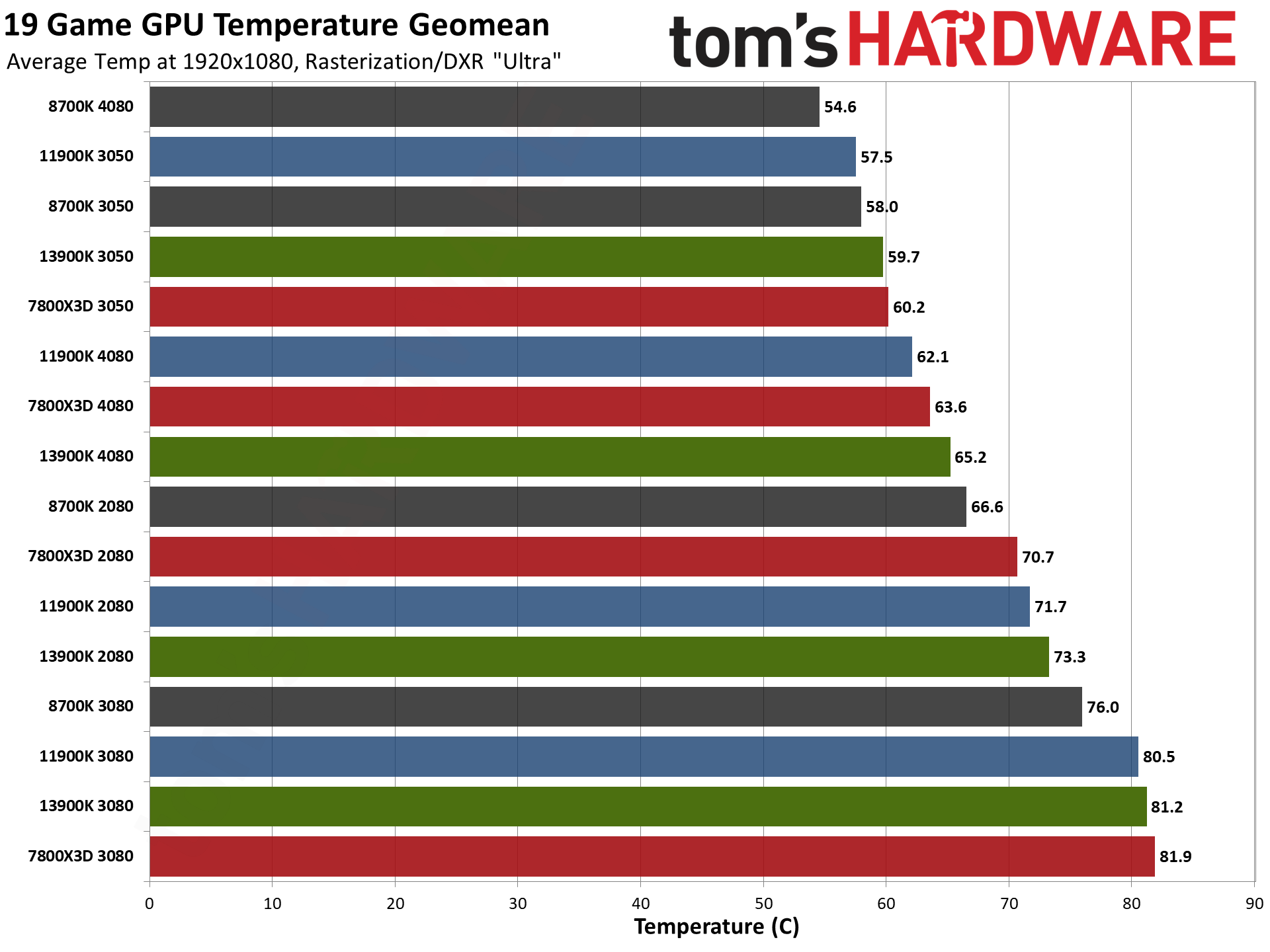

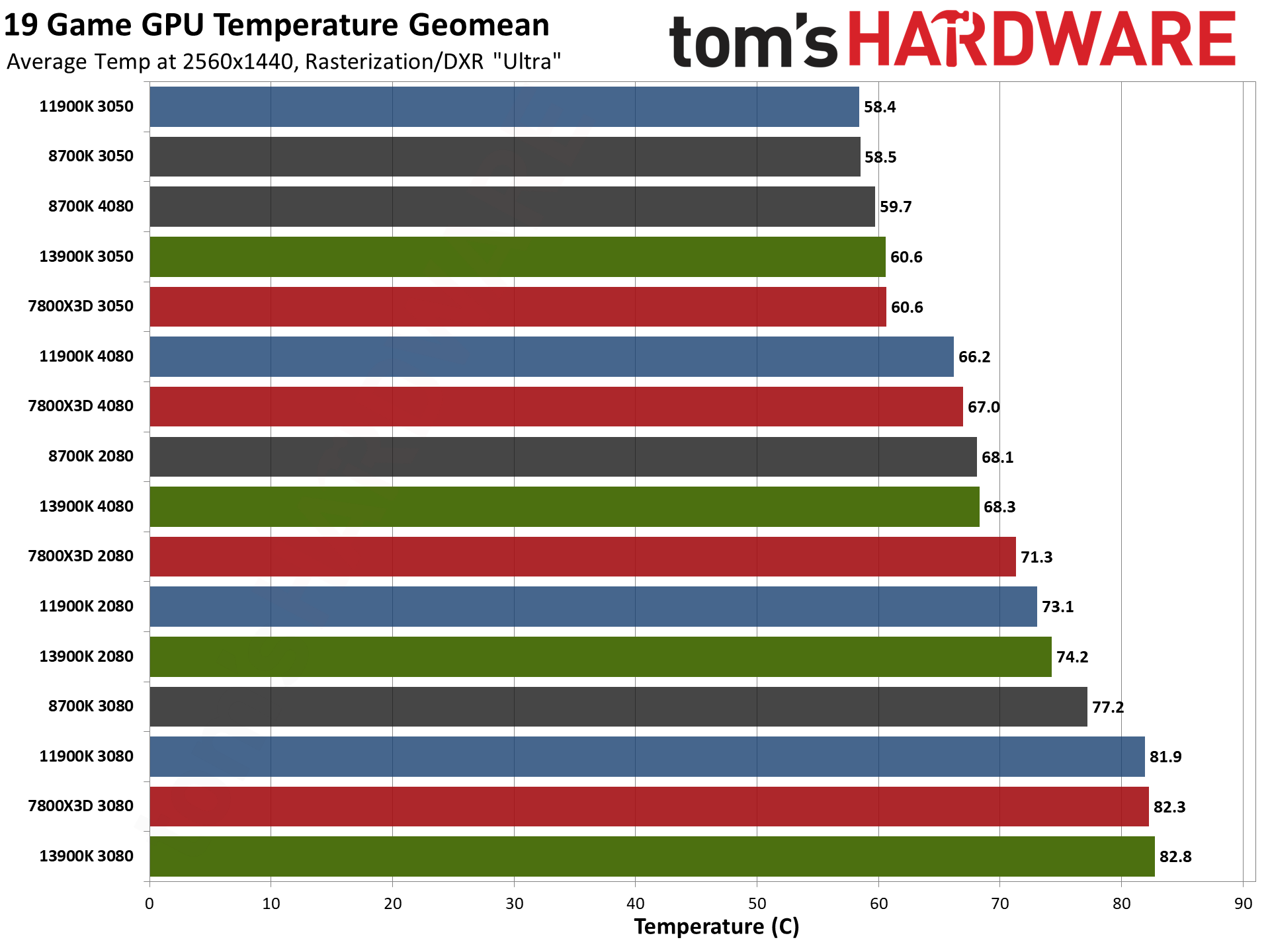

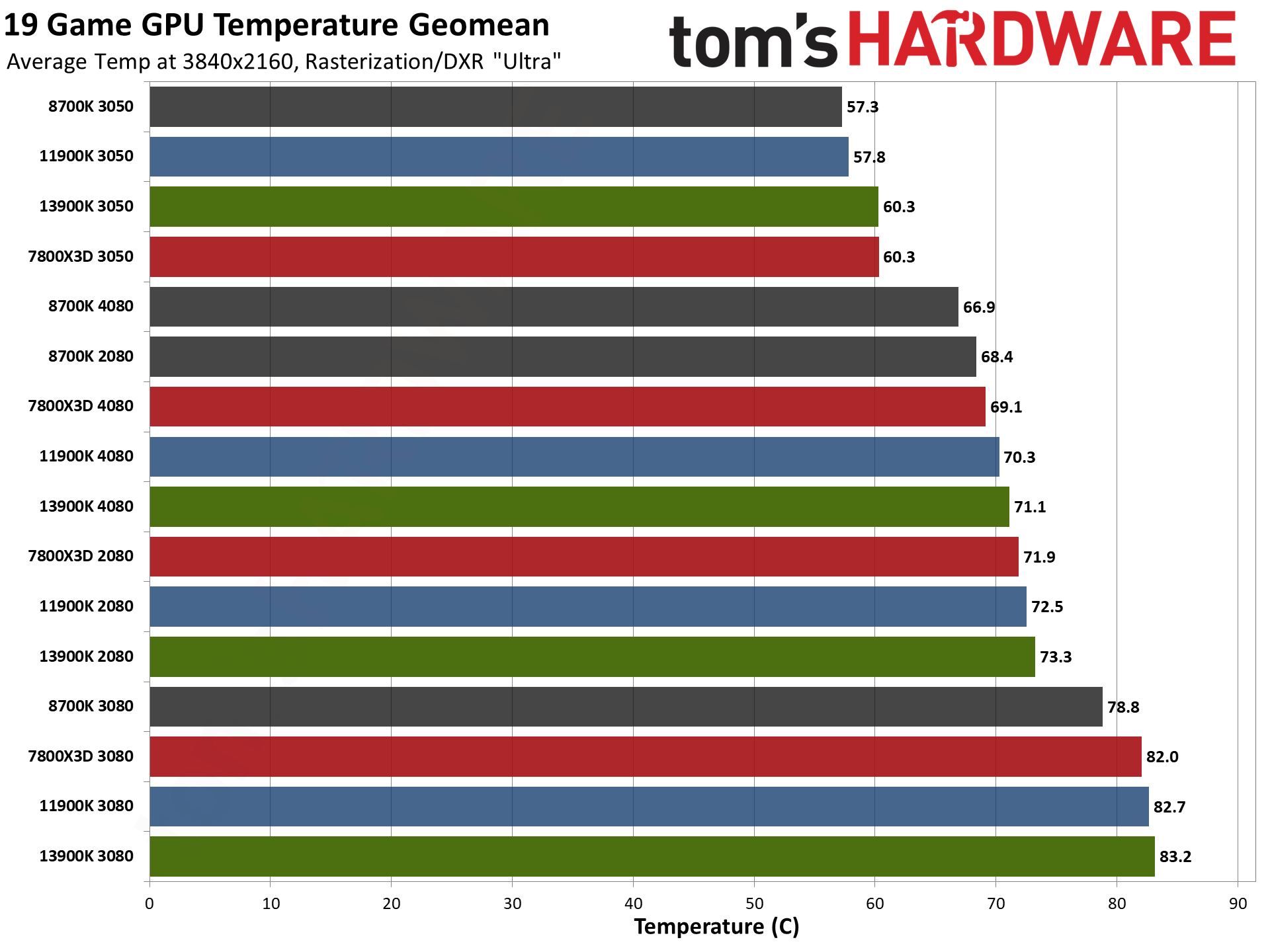

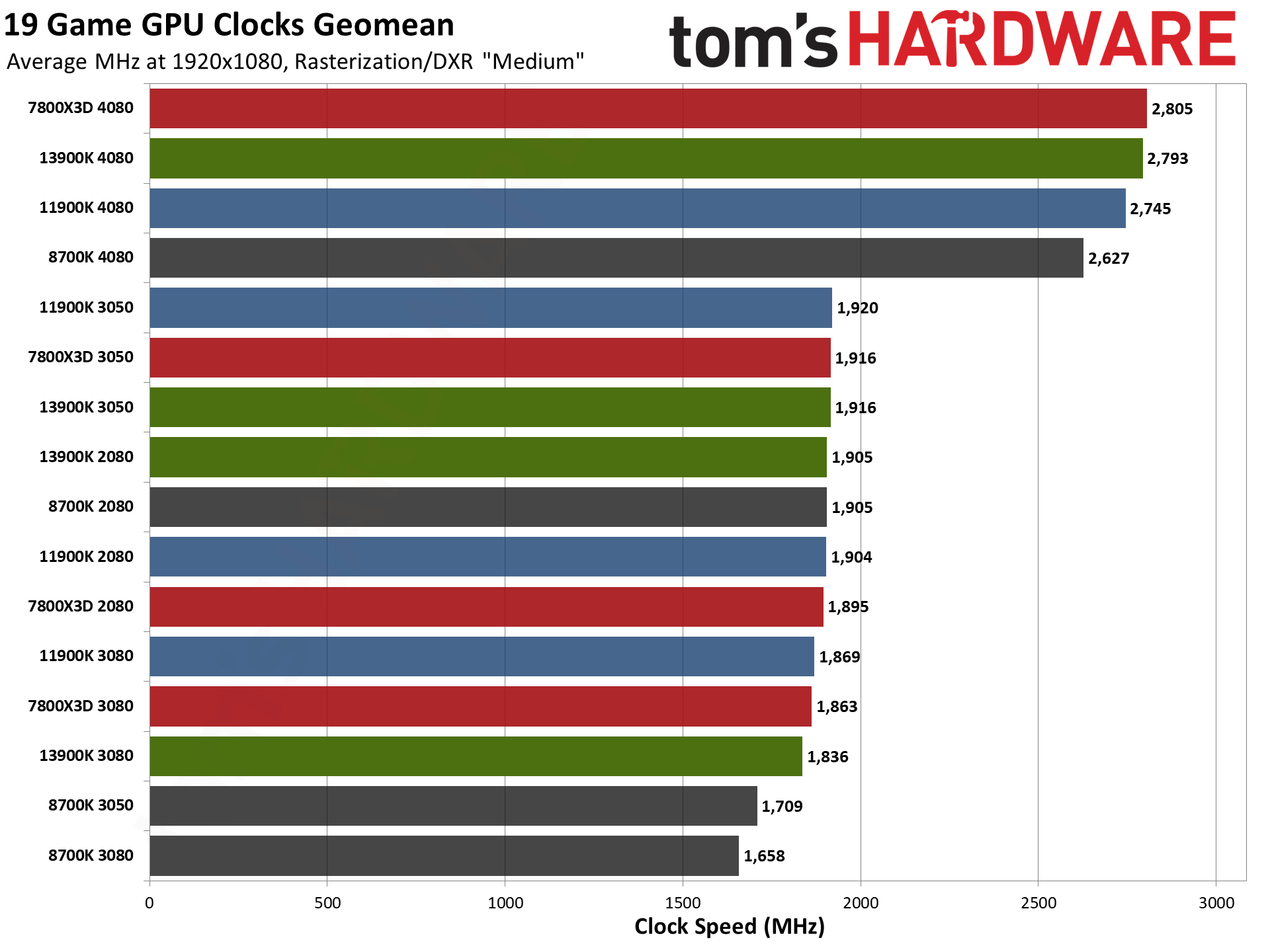

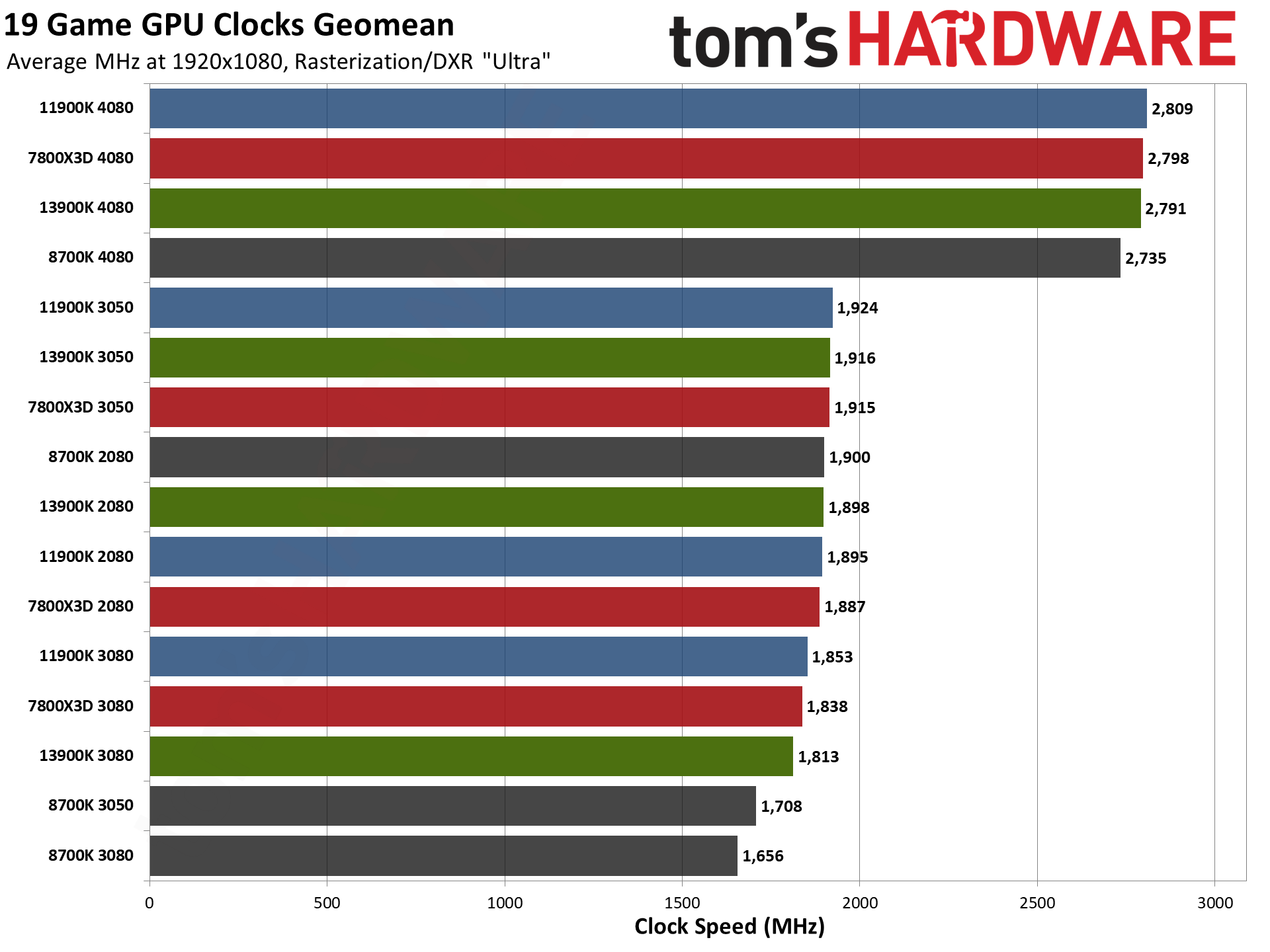

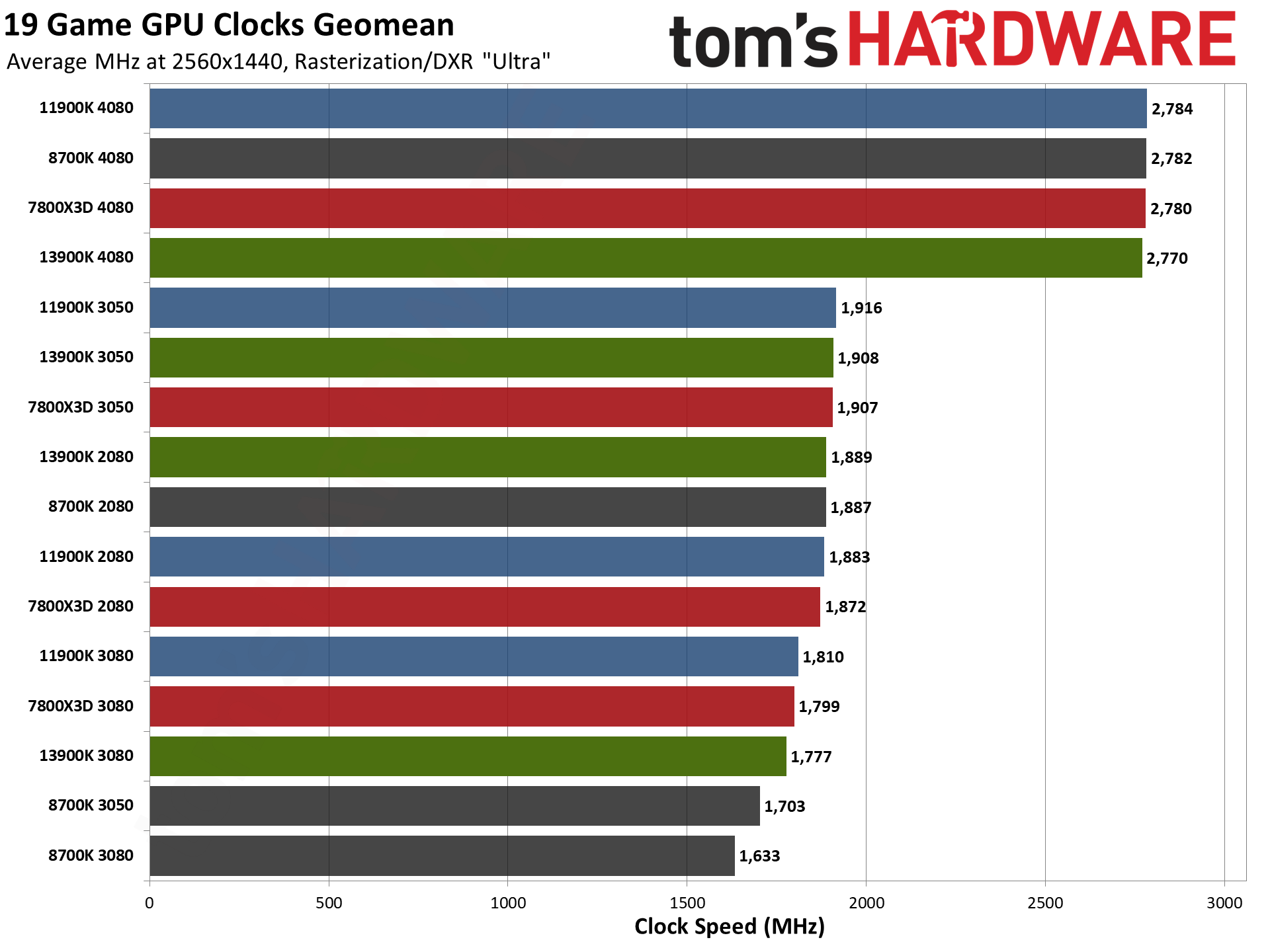

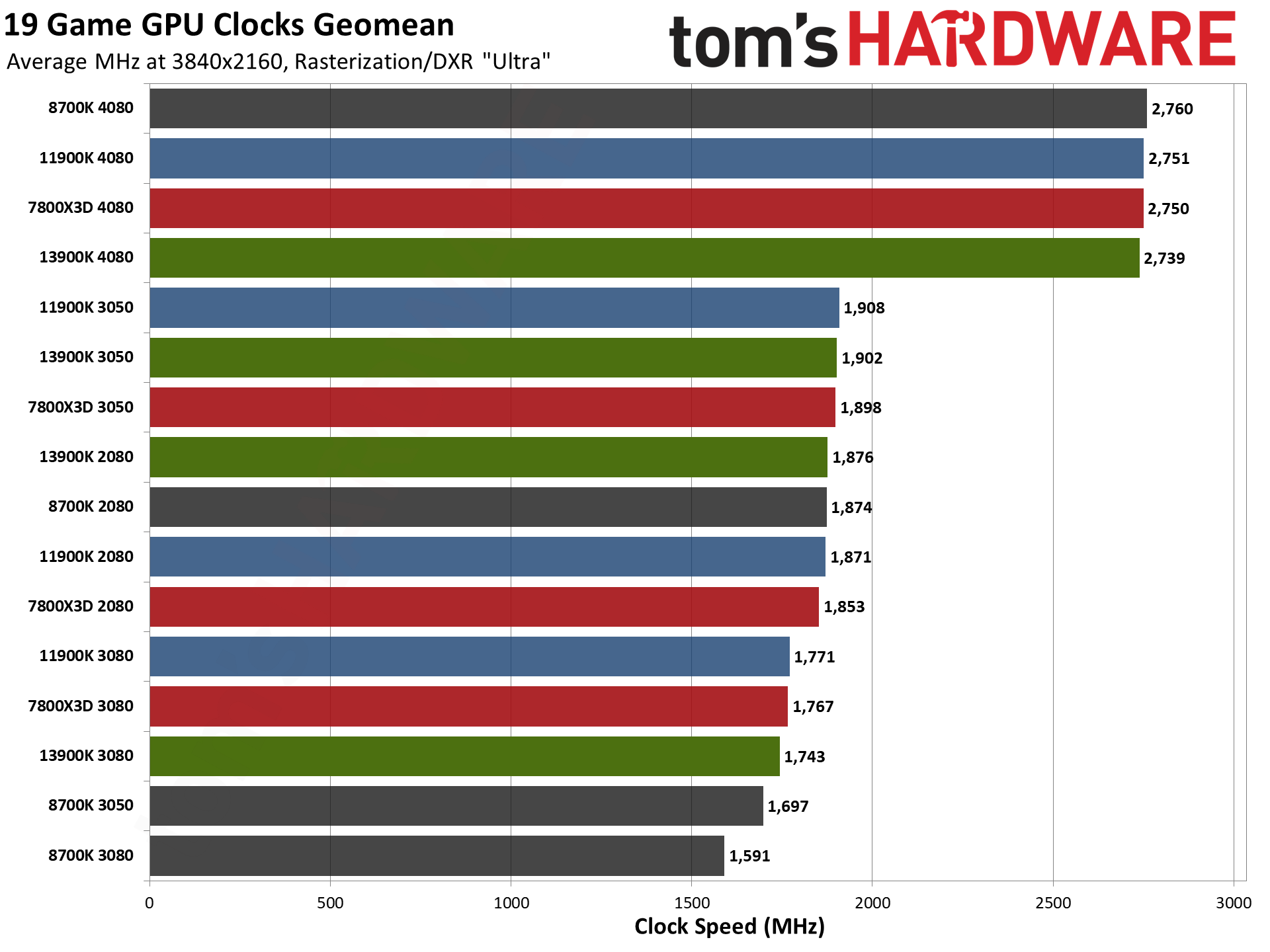

Since we collected all the data, we've also provided the GPU power, temperature, and clock speed results. These are averaged across all 19 games and aren't super interesting to dissect, but you can clearly see instances where the CPU holds the GPU back.

As a well-trodden example, the RTX 4080 on the 8700K averages just 284W of power use at 4K ultra, compared to 305W/308W on the two faster processors. That in turn means lower temperatures, though clock speeds end up being higher since the GPU isn't straining as hard and can simply run at its maximum clocks.

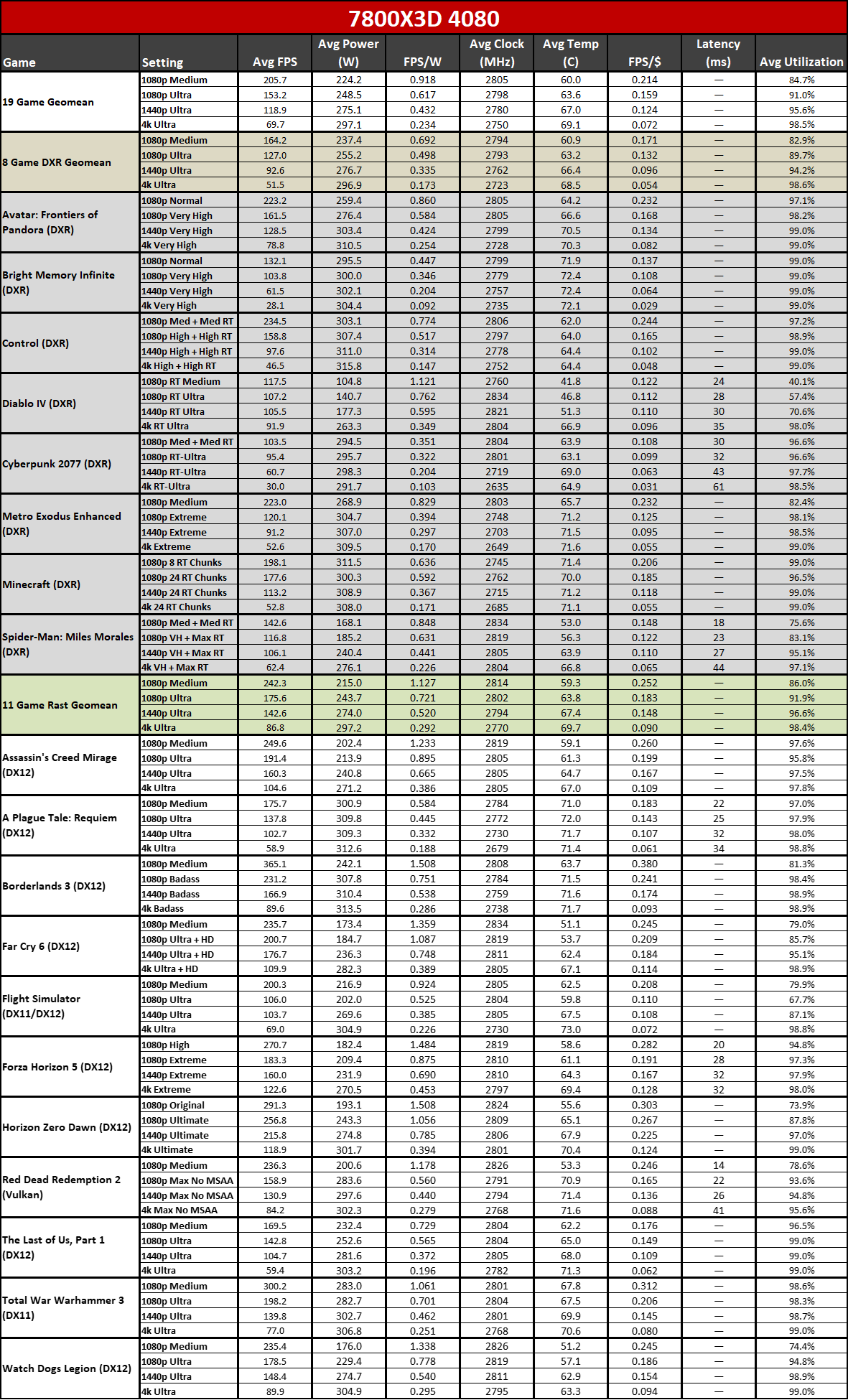

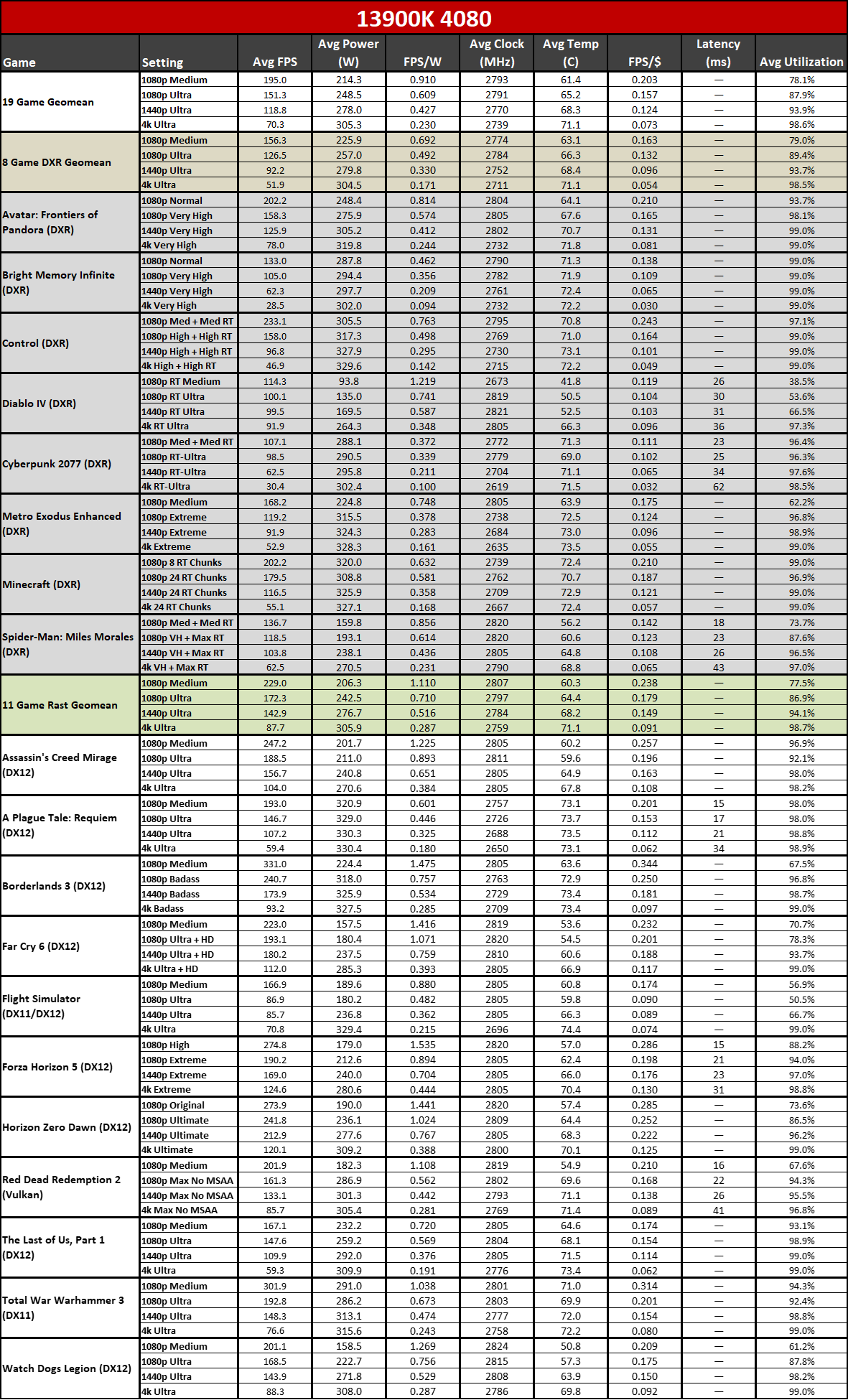

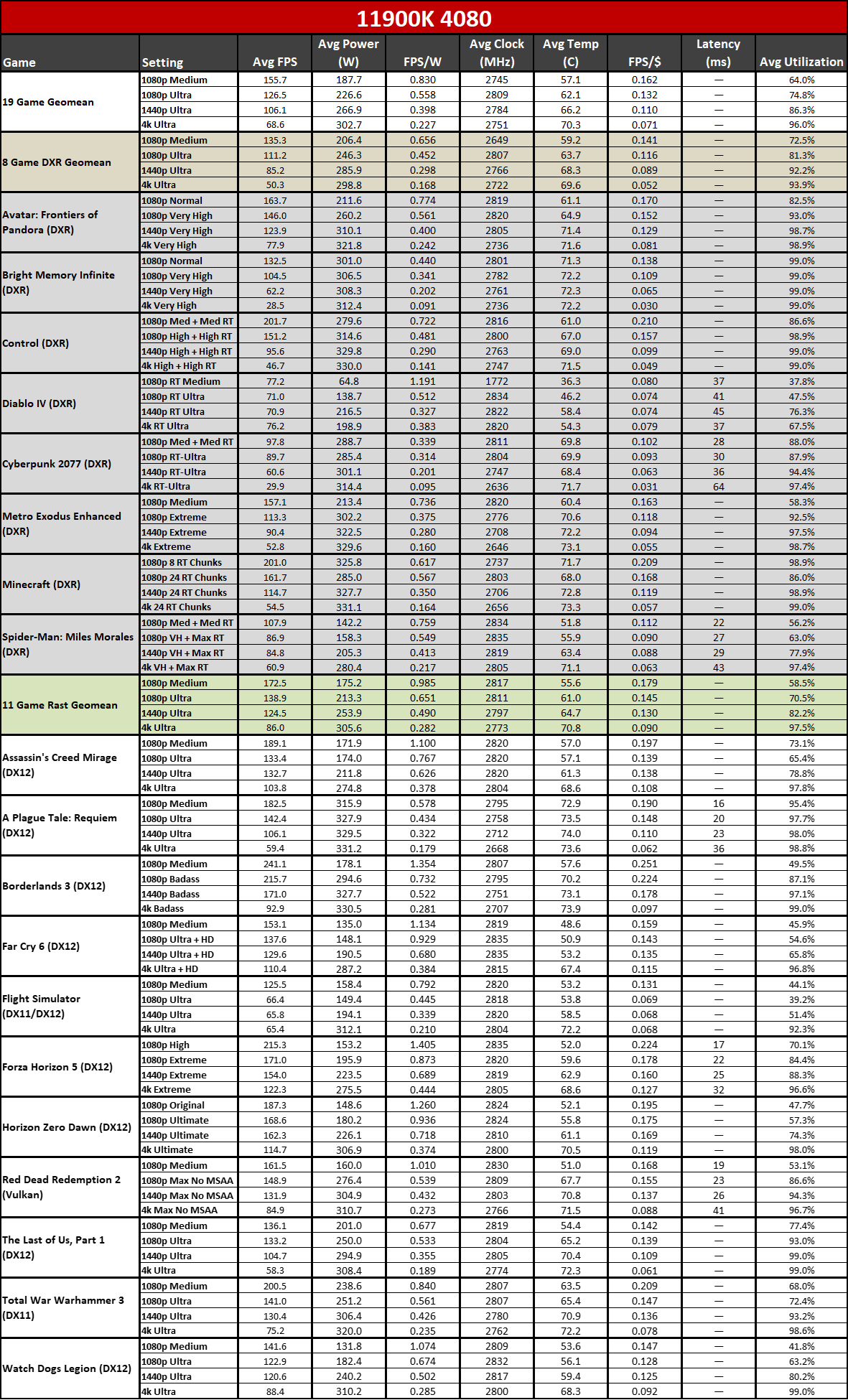

We also have our full test results that show efficiency of the GPU (FPS/W) and the relative value (FPS/$). We've grouped things by GPU, and you can see that you get proportionally less "value" from a GPU upgrade if you're using an older CPU. Again, we won't try to fully dissect all the data here, but it's available for those who want to see it.
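For anyone wanting to run the same kind of comparison on their own numbers, the two metrics are simple ratios. The sketch below uses a hypothetical `Result` record and placeholder values (not data from our charts) to show the same GPU paired with two different CPUs.

```python
from dataclasses import dataclass


@dataclass
class Result:
    label: str
    avg_fps: float
    gpu_watts: float
    gpu_price_usd: float

    @property
    def fps_per_watt(self):
        """Efficiency: frames per second per watt of GPU power."""
        return self.avg_fps / self.gpu_watts

    @property
    def fps_per_dollar(self):
        """Relative value: frames per second per dollar of GPU cost."""
        return self.avg_fps / self.gpu_price_usd


# Placeholder values for one GPU on two CPUs, for illustration only.
older = Result("same GPU, older CPU", avg_fps=90.0, gpu_watts=270.0, gpu_price_usd=1000.0)
newer = Result("same GPU, newer CPU", avg_fps=120.0, gpu_watts=300.0, gpu_price_usd=1000.0)

for r in (older, newer):
    print(f"{r.label}: {r.fps_per_watt:.3f} fps/W, {r.fps_per_dollar:.3f} fps/$")
```

Because the GPU price is identical in both rows, any framerate the CPU leaves on the table shows up directly as lower FPS/$ for the graphics card.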

GPU vs CPU upgrades: Balancing your PC build

PC enthusiasts likely won't find anything too shocking about all of these results. Putting together a gaming PC requires a balanced approach if you want to get the most out of your build, which is why most people who buy extreme performance graphics cards like the RTX 4080 Super and RTX 4090 also purchase an equally extreme CPU, motherboard, RAM, etc. to go with it. Likewise, mainstream builds tend to use modest GPUs, CPUs, and other components.

You don't need to use the fastest CPU to get a good gaming experience, particularly at 4K and even 1440p, but when top-tier hardware can cost $1,000 or more, you also don't want other bottlenecks holding you back. And when the next generation Nvidia Blackwell RTX 50-series GPUs arrive, alongside the AMD RDNA 4 GPUs, the bottleneck will shift even more toward the CPU on older systems.

Results will also vary — often significantly — from game to game. We've provided a relatively large look at 19 different games from the past four years, but there are tens of thousands of games available these days. If you're playing a lot of pixel art games or indie titles, chances are high that they're not going to push your GPU or CPU as hard as most of the games we used for testing.

There are also plenty of PC gamers still getting by with hardware that's slower than even the lowest combination (i7-8700K with RTX 3050 8GB) that we used for testing. If you're using such a PC and it does what you need, great — be happy and don't worry about upgrading until you find something that doesn't work the way you'd like.

While it's been interesting to go back and test 16 different hardware combinations, it's a very time-consuming process and we've only scratched the surface of the major configurations people could be using. Looking at the major desktop graphics cards of the past six years, there have been (by my count) 65 different GPUs from AMD, Intel, and Nvidia. That's the easy part.

There have also been around 127 different CPUs from AMD and Intel going back to 2017 — and that's not counting the F/T/S parts or Pentium chips, AMD's XT parts, or any of the various AMD APUs. If we wanted to test each of those 65 GPUs with every CPU, that's only 8,255 permutations, requiring about one full day per configuration. See you in 23 years or so...
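The back-of-the-envelope math behind that estimate, as a quick sketch (the variable names are just for illustration, and the one-day-per-configuration figure is the rough estimate given above):

```python
gpus = 65
cpus = 127
days_per_config = 1  # rough estimate from the text

configs = gpus * cpus
years = configs * days_per_config / 365.25

print(configs, round(years, 1))
```

That works out to 8,255 configurations and a bit under 23 years of nonstop testing.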

In other words, what I'm saying is that there's no intent to go and test a bunch of other combinations of older CPUs and GPUs beyond this current set of 16 results. Thankfully, even this relatively small sampling provides a decent starting point, and when combined with our CPU benchmarks hierarchy and our GPU benchmarks hierarchy, you can get a decent idea of what to expect.

Keep in mind that a lot of the time, a newer CPU or GPU from a lower tier will also perform about the same as a previous gen higher tier part, like the 4070 matching the 3080, and the 4070 Super matching (mostly) a 3090 Ti; the same goes for Core i7-14700K and i9-13900K. There's a good chance that the future RTX 5070 will perform similarly to the current 4080, and Arrow Lake and Zen 5 midrange parts will look like the current high-end offerings.

Whatever your hardware, if you're in the market to upgrade and only thought the GPU mattered for gaming, we've hopefully provided some additional food for thought.

Jarred Walton is a senior editor at Tom's Hardware focusing on everything GPU. He has been working as a tech journalist since 2004, writing for AnandTech, Maximum PC, and PC Gamer. From the first S3 Virge '3D decelerators' to today's GPUs, Jarred keeps up with all the latest graphics trends and is the one to ask about game performance.


JarredWaltonGPU FYI, I've redirected the initial article to a new URL (which also changes the comment thread), to see how that impacts traffic. Initial comments can be found here: https://forums.tomshardware.com/threads/should-you-upgrade-your-gpu-or-cpu-for-faster-gaming-we-tested-many-hardware-combos-to-find-out.3847626/

thestryker As always these are invaluable for comparisons and figuring out what to upgrade when. Hoping for at least some 7900 XT/XTX on 8700K results in the future!

Just to help anyone who might have different hardware with some rough analogs (just for gaming):

8700K is a little bit faster than AMD's Zen 2 X SKUs, but close enough for comparison

11900K is a little faster than 12100 and a little slower than 12400 for Intel and right around the 5600/X for AMD

(not going to compare AMD to NV GPUs as more can go into this than raw performance)

RTX 3080 is generally faster than the 4070, but slower than 4070 Super (it's enough faster than anything 20 series nothing matches here)

RTX 2080 is about the performance of the RTX 4060

RTX 3050 is a little faster than the 1660 Ti/Super and a little slower than 2060 6GB and about the same as the GTX 1080

Unolocogringo Thanks for the effort you put into this type of article.

This is information that is asked for a lot in the forums.

Mattzun I was looking for this article to see if any of the complaints about the gaming performance of the 9700X even matter on anything other than a 4090.

I found the article very valuable - it just doesn't matter if a 9700X is 5 percent or 25 percent faster than a 7700X when a much slower Intel 11th gen is close to maxing out a 4080 at 4k

It's too bad that there are far more people viewing and commenting on the completely irrelevant poor performance of a 9700X at 1080P/low when they are gaming on a 4070 or 4080 at 1440P or 4K with DLSS.

If articles like this aren't feasible due to viewership, could you add a page to GPU reviews that shows how one game that scales with CPU (i.e. Watch Dogs) works at several resolutions with a couple of CPUs.

It would be helpful for deciding if a GPU upgrade would be helpful for someone who doesn't want to upgrade their entire system.

Alternately, an article like how much CPU do you need for a 3080 would be useful for whatever the next mid-high end cards come out.

JarredWaltonGPU

Yeah, I find the 1080p testing results on CPUs to only be moderately useful. But if you look at my GPU benchmarks, what you'd discover is that CPU bottlenecks really only matter with the top two or three current GPUs at lower settings. 4K ultra, you probably would see a 2-3 percent difference between i9-14900K and i5-14600K, or Ryzen 7 7800X3D and Ryzen 5 7600X, even with cards like the 4090.