Acer XB280HK 28-inch G-Sync Ultra HD Gaming Monitor Review

Acer breaks new ground with its XB280HK. Offering Ultra HD resolution and G-Sync in a reasonably priced 28-inch package, this monitor is unique.


G-Sync Setup And Performance

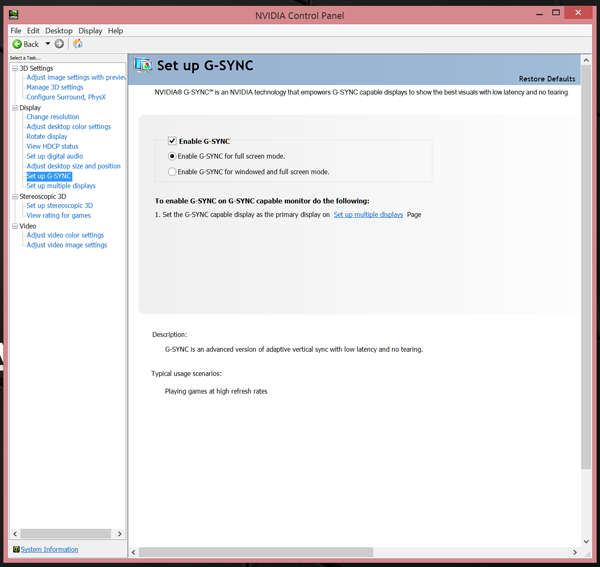

By now I’m sure you know the drill for enabling G-Sync. Make sure you have the latest Nvidia drivers installed; we used version 353.06, dated May 27th, in our test system. You also need a GeForce GTX 650 Ti or better. Our PC employs a GeForce GTX Titan X, which you really need at 4K. From there, open the Nvidia Control Panel and check the appropriate box under “Set up G-Sync”.

Unlike older builds, you no longer need to enable G-Sync in two places. And the latest drivers support variable refresh in both windowed and full-screen applications.

Once configured, we played Far Cry 4 at various settings to see how different frame rates affected the experience. At 3840x2160, we maintained around 45 FPS with the detail preset at High. This was just low enough to cause a tiny bit of judder. Still, there were no tears. Upping the detail level to Ultra reduced the frame rate to around 35. The only difference was a tiny bit more judder; there was no apparent rise in input lag. Tearing was still non-existent.

At this point, I'd say that 30 FPS is a practical lower limit not just for G-Sync, but for gameplay in general. Anything less and you’re looking at major judder and an obvious reduction in responsiveness. Debates about what happens below 30 FPS in a G-Sync vs. FreeSync comparison are moot in my opinion, because that’s where on-screen motion is simply too slow to provide a decent experience.

Any frame rates from 35 up to the XB280HK’s maximum of 60 make for ideal playability. Will higher rates make things smoother? Absolutely. But at Ultra HD, you’re going to need more processing power than even a single Titan X can provide. Our G-Sync experience with this screen is overwhelmingly positive.
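To put those frame rates in perspective, here is a minimal Python sketch that converts each one into the time a single frame stays on screen. It assumes that, with variable refresh active and the frame rate inside the panel's supported range, the display holds each frame for roughly the render interval. The function names are ours, for illustration only, and are not part of any Nvidia tool.

```python
def frame_time_ms(fps: float) -> float:
    """Return how long one frame lasts, in milliseconds, at a given frame rate."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return 1000.0 / fps


# Frame rates mentioned in the text: the suggested 30 FPS floor, the two
# Far Cry 4 results (35 and 45 FPS), and the panel's 60 Hz maximum.
RATES = (30, 35, 45, 60)


def frame_time_table(rates=RATES):
    """Map each frame rate to its frame time, rounded to 0.1 ms."""
    return {fps: round(frame_time_ms(fps), 1) for fps in rates}


if __name__ == "__main__":
    for fps, ms in frame_time_table().items():
        print(f"{fps:>3} FPS -> {ms:5.1f} ms per frame")
```

By this arithmetic, a frame at 45 FPS lasts about 22.2 ms versus 16.7 ms at 60 FPS, and at 30 FPS it lasts 33.3 ms, twice as long as at the panel's maximum. That is consistent with the judder we saw growing as the frame rate fell.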


Christian Eberle is a Contributing Editor for Tom's Hardware US. He's a veteran reviewer of A/V equipment, specializing in monitors. Christian began his obsession with tech when he built his first PC in 1991, a 286 running DOS 3.0 at a blazing 12MHz. In 2006, he undertook training from the Imaging Science Foundation in video calibration and testing and thus started a passion for precise imaging that persists to this day. He is also a professional musician with a degree from the New England Conservatory as a classical bassoonist, which he used to good effect as a performer with the West Point Army Band from 1987 to 2013. He enjoys watching movies and listening to high-end audio in his custom-built home theater and can be seen riding trails near his home on a race-ready ICE VTX recumbent trike. Christian enjoys the endless summer in Florida, where he lives with his wife and Chihuahua and plays with orchestras around the state.


Frozen Fractal At first glance I thought I wouldn't have to see the "we will be reviewing XB270HU soon" quote again. But then I realized it's the XB280HK. Oh well, guess I'll have to endure that quote a few more times :rolleyes:

Contrast ratio, brightness, chromaticity and gamma tracking are where the XB280HK loses ground, but to be fair, most gamers won't notice much difference at all. But it is kind of disappointing to see Planar do better in these fields than Acer using the same panel. I don't know, maybe the overdrive somehow worsens the results?

But of course, it does well on uniformity and response time. Makes me wonder why the XB280HK doesn't have ULMB if it's supposed to be a bundled feature with G-Sync. That should've helped 60Hz panels more than 144Hz ones.

But anyway, the XB280HK looks promising, although I don't think 4K is what I prefer for gaming+life (although I do for gaming only).

Frozen Fractal 16328127 said: Sorry if I missed it, but what version of DisplayPort is it?

It's 1.2a, I presume, since that's what is capable of 4K@60Hz other than HDMI 2.0.

boju It should be at least version 1.2 for 4K @ 60Hz, since that version has been doing this since 2009. v1.2a is the same resolution and refresh rate deal but adds support for FreeSync.


picture_perfect Why do they keep pushing 4K for gaming? True gamers have always regarded fps as king, and 4K is one-quarter the frame rate of 1080p. Gamers don't need expensive 4K monitors driven by expensive cards at ever-lower frame rates (via G-Sync). This is chasing the proverbial tail and counterproductive. Regular 1080p, v-synced at a constant 144 fps, would be better than all that stuff.

spagalicious "Why do they keep pushing 4K for gaming? True gamers have always regarded fps as king, and 4K is one-quarter the frame rate of 1080p. Gamers don't need expensive 4K monitors driven by expensive cards at ever-lower frame rates (via G-Sync). This is chasing the proverbial tail and counterproductive. Regular 1080p, v-synced at a constant 144 fps, would be better than all that stuff."

*Competitive Gamers

People that like to play games also like to play games in ultra HD resolutions.


picture_perfect 4K is cool, but GPUs can't handle it well enough yet. I'd rather have 1080p at high fps and gain an extra 1-2 frames of lag, but to each their own.

bystander 16328109 said: But of course, it does well on uniformity and response time. Makes me wonder why the XB280HK doesn't have ULMB if it's supposed to be a bundled feature with G-Sync. That should've helped 60Hz panels more than 144Hz ones.

ULMB uses flickering to lower persistence, which reduces motion blur. If you've ever used a 60Hz CRT monitor, you'll know that the flickering is painful on the eyes. This is why ULMB mode is not offered on 60Hz monitors, and likely won't be offered on anything less than 75-85Hz.


bystander 16329841 said: 4K is cool, but GPUs can't handle it well enough yet. I'd rather have 1080p at high fps and gain an extra 1-2 frames of lag, but to each their own.

Top-end GPUs can handle 4K just fine. You just don't play it at max settings. What is better, medium to high settings at 4K, or maxed at 1080p? That is a subjective question, and the answer will vary from person to person.

That said, I prefer refresh rates higher than 60Hz, so I'll be going 1440p before 4K.