DDR3-1333 Speed and Latency Shootout

Overclocking Results

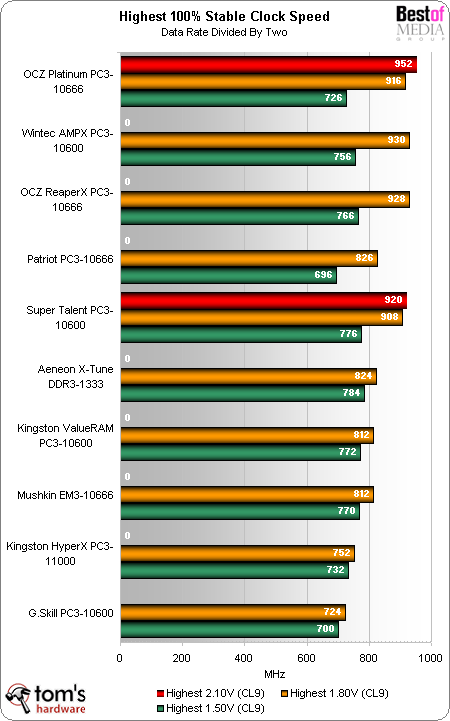

Overclocking often requires increased voltage, but some modules are less tolerant of voltage increases than others. Likewise, some overclockers are more enthusiastic than others, so we picked three voltage levels to represent the majority of builders: stock (1.50 volts); a reasonably safe over-voltage level (1.80 volts); and an insane "extreme enthusiast" level (2.10 volts). Notice that even our "reasonably safe" 1.80-volt testing increases the default voltage by 20%, yet we're fairly confident that most modules can tolerate this setting through several years of use.
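As a quick check on the percentages, the short Python sketch below expresses each test voltage as an increase over the 1.50-volt stock setting. The function and constant names are illustrative only.

```python
# Percentage increase of each test voltage over the stock DDR3 voltage.
STOCK_VOLTS = 1.50  # stock level used in the article


def percent_over_stock(volts, stock=STOCK_VOLTS):
    """Return how far `volts` sits above `stock`, in percent."""
    return (volts - stock) / stock * 100


for level in (1.50, 1.80, 2.10):
    print(f"{level:.2f} V is {percent_over_stock(level):.0f}% above stock")
```

The 1.80-volt level works out to a 20% increase and the 2.10-volt level to a 40% increase.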

To keep things fair, we set all modules to loose 9-9-9-24 timings for overclock tests. How far did we get?

OCZ Platinum DDR3-1333 easily beats competitors at 2.10 volts, trouncing even the same brand's "ReaperX" extreme-overclocking model. Wintec AMPX comes in second place overall, with the highest 1.80-volt overclock but no further gain from an increase to 2.10 volts.

We were surprised that the OCZ ReaperX modules didn't overclock better at 2.10 volts than at 1.80 volts, because they're cooled so well. Yet, OCZ wasn't the only company to have its high-end parts fall behind its own lower-rated parts, as Kingston's office-grade PC3-10600 also beat its extreme-performance HyperX PC3-11000.

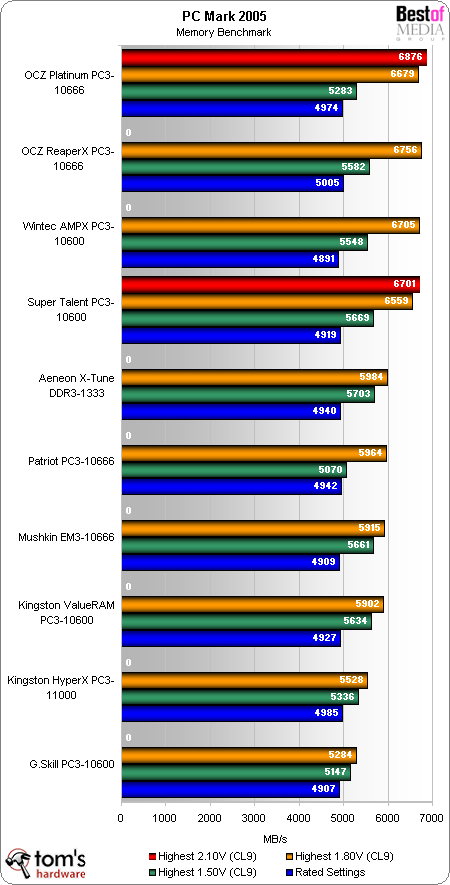

Now we can compare the performance of each kit's "rated timings" to that of its highest-speed CAS 9 settings. PCMark05's memory bench leads the benchmark session.

Did we really need benchmarks to prove the fastest modules had the best performance? Probably not, but they certainly drive the point home. Yet, the OCZ ReaperX's 928 MHz memory clock somehow beat the 930 MHz memory clock of Wintec's AMPX, probably because the two kits used different values for timings other than the four we set manually.


-

dv8silencer I have a question: on your page 3, where you discuss the memory myth, you do some calculations:

"Because cycle time is the inverse of clock speed (1/2 of DDR data rates), the DDR-333 reference clock cycled every six nanoseconds, DDR2-667 every three nanoseconds and DDR3-1333 every 1.5 nanoseconds. Latency is measured in clock cycles, and two 6ns cycles occur in the same time as four 3ns cycles or eight 1.5ns cycles. If you still have your doubts, do the math!"

Based on the cycle-based latencies of DDR-333 (CAS 2), DDR2-667 (CAS 4), and DDR3-1333 (CAS 8), and their frequencies, you come to the conclusion that each of the memory types will retrieve data in the same amount of time. The higher CAS values are offset by the higher frequencies of the newer technologies, so that even though DDR2 and DDR3 take more cycles, they also go through more cycles per unit time than DDR. How is it, then, that DDR2 and DDR3 are "better" and provide more bandwidth if they provide data in the same amount of time? I do not know much about the technical details of how RAM works, and I have always had this question in mind.

Thanks -

Latency = How fast you can get to the "goodies"

Bandwidth = Rate at which you can get the "goodies" -
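To put numbers on the latency/bandwidth distinction, here is a minimal Python sketch using the three examples from the question (DDR-333 CAS 2, DDR2-667 CAS 4, DDR3-1333 CAS 8). It assumes a single 64-bit (8-byte) memory channel, which is not stated in the thread, and the function names are illustrative only.

```python
# Latency vs. peak bandwidth for the three memory types in the question.
# Assumption: one 64-bit (8-byte) memory channel.
BUS_WIDTH_BYTES = 8


def cas_latency_ns(data_rate_mts, cas_cycles):
    """CAS latency in nanoseconds for a given DDR data rate (MT/s)."""
    clock_mhz = data_rate_mts / 2       # DDR moves data twice per clock
    cycle_time_ns = 1000 / clock_mhz    # cycle time is the inverse of clock
    return cas_cycles * cycle_time_ns


def peak_bandwidth_mbs(data_rate_mts):
    """Theoretical peak transfer rate in MB/s."""
    return data_rate_mts * BUS_WIDTH_BYTES


# Nominal data rates are 333 1/3, 666 2/3 and 1333 1/3 MT/s.
modules = [
    ("DDR-333 CAS 2", 1000 / 3, 2),
    ("DDR2-667 CAS 4", 2000 / 3, 4),
    ("DDR3-1333 CAS 8", 4000 / 3, 8),
]

for name, rate, cas in modules:
    latency = cas_latency_ns(rate, cas)
    bandwidth = peak_bandwidth_mbs(rate)
    print(f"{name}: {latency:.1f} ns latency, {bandwidth:.0f} MB/s peak")
```

Under these assumptions all three wait about 12 ns for the first datum, while the peak transfer rate doubles with each generation.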

So, I have OCZ memory I can run stable at

7-7-6-24-2T at 1333 MHz or

9-9-9-24-2T at 1600 MHz

This is with the FSB at 1600 MHz, unlinked. Is there a method to calculate the best setting without running hours of benchmarks? -
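One rough first-pass check, short of benchmarking, is to convert each timing from clock cycles into nanoseconds. The Python sketch below is an estimate only: it assumes the "1333" and "1600" figures are DDR data rates (so the memory clock is half that), it looks at just the first three timings (CAS, tRCD, tRP), and it ignores everything else that affects real performance. The function name is illustrative.

```python
# Convert memory timings from clock cycles to nanoseconds.
# Assumption: the quoted speeds are DDR data rates in MT/s.


def timings_ns(data_rate_mts, timings_cycles):
    """Return each timing in nanoseconds for the given data rate."""
    cycle_time_ns = 2000 / data_rate_mts  # clock is half the data rate
    return [cycles * cycle_time_ns for cycles in timings_cycles]


settings = {
    "7-7-6 at 1333": (4000 / 3, (7, 7, 6)),
    "9-9-9 at 1600": (1600, (9, 9, 9)),
}

for label, (rate, timings) in settings.items():
    as_ns = ", ".join(f"{t:.2f}" for t in timings_ns(rate, timings))
    print(f"{label}: {as_ns} ns")
```

Under these assumptions the 1333 setting has the slightly lower CAS latency (about 10.5 ns versus 11.25 ns), while the 1600 setting offers 20% more peak bandwidth, so which one is "best" depends on the workload and still needs a benchmark to confirm.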

Sorry dude, but you are underestimating the ReaperX modules. I would really like to see what temperatures the other modules reached at a voltage of ~2.1 V. This does not mean that the Platinum series is not a good performer, but I saw a ReaperX that easily reached 940 MHz at 1.9 V (EVP), which means nearly DDR3-1900, and that is something. When it comes to stability and temperature over hours of operation, ReaperX beats them all. -

All SDRAM (including DDR variants) works more or less the same way: it is divided into banks, banks are divided into rows, and rows contain the data (as columns).

First you issue a command to open a row (this is your latency); then, within that row, you can access any data you want at the rate of 1 datum per cycle, with latency depending on pipelining.

So, for instance, if you want to read 1 datum at address 0, it will take your CAS latency + 1 cycle.

If you want to read 8 data at address 0, it will take your CAS latency + 8 cycles.

Since CPUs like to fill their cache lines with the next data that will probably be accessed, they always read more than what you wanted anyway, so the extra throughput provided by higher clock speed helps.

But if the CPU stalls waiting for RAM it is the latency that matters.
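The simplified model in this comment (CAS latency plus one cycle per datum, with the row already open) can be sketched in Python as follows. The function name is illustrative, and the two clocks compared are assumptions chosen for the example: the nominal DDR3-1333 memory clock and the 930 MHz overclock reported in the article, both at CAS 9. Like the comment, the model ignores double-data-rate transfers and row-activation delays.

```python
# Simplified read-time model: CAS latency + one cycle per datum.
# Assumption: the row is already open and one datum moves per cycle.


def read_time_ns(clock_mhz, cas_cycles, n_data):
    """Time in nanoseconds to read `n_data` items from an open row."""
    cycle_time_ns = 1000 / clock_mhz
    return (cas_cycles + n_data) * cycle_time_ns


for clock_mhz in (2000 / 3, 930):
    single = read_time_ns(clock_mhz, 9, 1)
    burst = read_time_ns(clock_mhz, 9, 8)
    print(f"{clock_mhz:.0f} MHz, CAS 9: "
          f"1 datum in {single:.2f} ns, 8 data in {burst:.2f} ns")
```

With the CAS value held constant, a higher clock shortens both the initial wait and the burst, which is why the overclocked CAS 9 settings in the article can outrun tighter timings at stock speed.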