Tom's Hardware Verdict

Predictable results are a must in professional workloads, and the W-3175X delivers with a superior blend of performance in both lightly- and heavily-threaded applications. As with most of Intel’s high-end processors, you pay a hefty premium for the privilege of owning one. But the Xeon W-3175X offers an unbeatable experience in exchange.

Pros

- High performance in lightly-threaded applications
- High performance in multi-threaded applications
- Unlocked multiplier makes overclocking easier

Cons

- Expensive motherboard is required
- Sky-high power consumption
- High price-per-core hurts value proposition

Pump up the Voltage

If you're looking for the most extreme CPU available, Intel's overclockable 28-core, 56-thread Xeon W-3175X is the chip for you (provided you can come up with $3,000, plus the cost of an expensive platform). In comparison, AMD's massive Ryzen Threadripper 2990WX is downright affordable at $1,800.

Xeon W-3175X doesn't even match the core count of AMD's flagship. But Intel thinks its Skylake-SP silicon can beat AMD's finest in every type of workload, particularly the heavily-threaded tasks such a CPU was designed for. Think architectural and industrial design, or professional content creation.

As if the processor's hefty price tag wasn't enough, Intel's workstation-oriented Xeon W-3175X needs exotic accommodations for overclocking, too. The company's existing Xeon W models top out at 18 cores and drop into familiar LGA 2066 interfaces. But introducing the highest-end server silicon to workstations required stepping up to the complex LGA 3647 socket, which hasn't seen the light of day outside of data centers.

In order for us to test its Xeon W-3175X, Intel sent over a pre-built PC armed with the gorgeously-appointed $1,500 Asus ROG Dominus Extreme motherboard, featuring two 24-pin ATX connectors, a quartet of eight-pin inputs, and a pair of six-pin connectors for feeding the 255W chip through a ridiculous 32-phase power delivery subsystem. The company also shipped two 1600W EVGA T2 PSUs to serve up sufficient power for overclocking.

If that all sounds extreme to you, then we wholeheartedly agree. If ever there was a CPU able to inspire envy among enthusiasts, this is it. Intel is obviously going all-out to quell the Threadripper uprising. And while our testing determined that the W-3175X offers far more performance in most workloads without the compromises imposed by AMD's 2990WX, Intel still isn't as competitive on the pricing front. Of course, cost is usually a secondary consideration for professionals when time maps over to dollars, and the chip's overclockability will certainly find plenty of fans in high-frequency trading circles. This grants Intel the license to charge big bucks for the best CPU for desktop applications that money can buy.

Intel Xeon W-3175X Specifications

The Xeon W-3175X wades into an increasingly crowded workstation market. While AMD's Threadripper processors aren't officially aimed at that space, their combination of lower prices, higher core counts, and largely unrestrained feature sets (like unlocked multipliers, support for ECC memory, and 64 third-gen PCIe lanes on every model) is attractive to professionals.

In contrast, the Xeon W-3175X is designed for workstations. It supports ECC memory, Intel's vPro management suite, and advanced RAS (Reliability, Availability, Serviceability) features. But its design feels more like an enthusiast part due to an unlocked multiplier. That's a tactic the notoriously-stingy Intel hadn't previously explored in the Xeon W family. Thanks to AMD's enthusiast initiative, though, Intel finds itself playing with new knobs and levers.

Intel recently split its server chips out onto their own platform with a larger interface and a different chipset. However, the first round of Xeon Ws, spanning from four to 18 cores, drops right into the LGA 2066 socket we know from the company's high-end desktop motherboards (albeit paired with a server-specific C422 chipset that prevents Xeon W from working in consumer platforms). Unfortunately, that left Intel's CPUs with more than 18 cores stranded on server platforms with the massive LGA 3647 interface. To bring the 3175X to workstations, Intel had to repackage that bigger socket and C620-series chipset for a more accessible form factor. As a result, we end up with a much larger CPU than we're used to seeing in a desktop system. Check out the Core i7-8086K, to the left, and the HEDT-class Core i9-9980XE, to the right, in the picture above.

| Intel Xeon W-3175X Specifications | |
| --- | --- |
| Socket | LGA 3647 (Socket P) |
| Cores / Threads | 28 / 56 |
| TDP | 255W |
| Base Frequency | 3.1 GHz |
| Turbo Frequency (TB 2.0) | 4.3 GHz |
| L3 Cache | 38.5 MB |
| Integrated Graphics | No |
| Graphics Base/Turbo (MHz) | N/A |
| Memory Support | DDR4-2666 |
| Memory Controller | Six-Channel |
| Unlocked Multiplier | Yes |
| PCIe Lanes | 48 |

The Xeon W-3175X has 28 physical cores with Hyper-Threading technology, allowing it to operate on 56 threads at the same time. A $3,000 price tag means that the 3175X only competes with AMD's 32C/64T Ryzen Threadripper 2990WX in terms of core count. Otherwise, it stands alone as the most expensive chip outside of Intel's full-on Xeon Scalable data center line-up.

Although $3,000 is decidedly steep, bear in mind that the W-3175X is eerily similar to Intel's Xeon Scalable Platinum 8180, which sells for $10,000. Of course, Intel strips features from the W-3175X to prevent data centers from using these chips en masse. For instance, the UPI (Ultra Path Interconnect), which allows multiple Xeon Scalable processors to work together, is disabled.

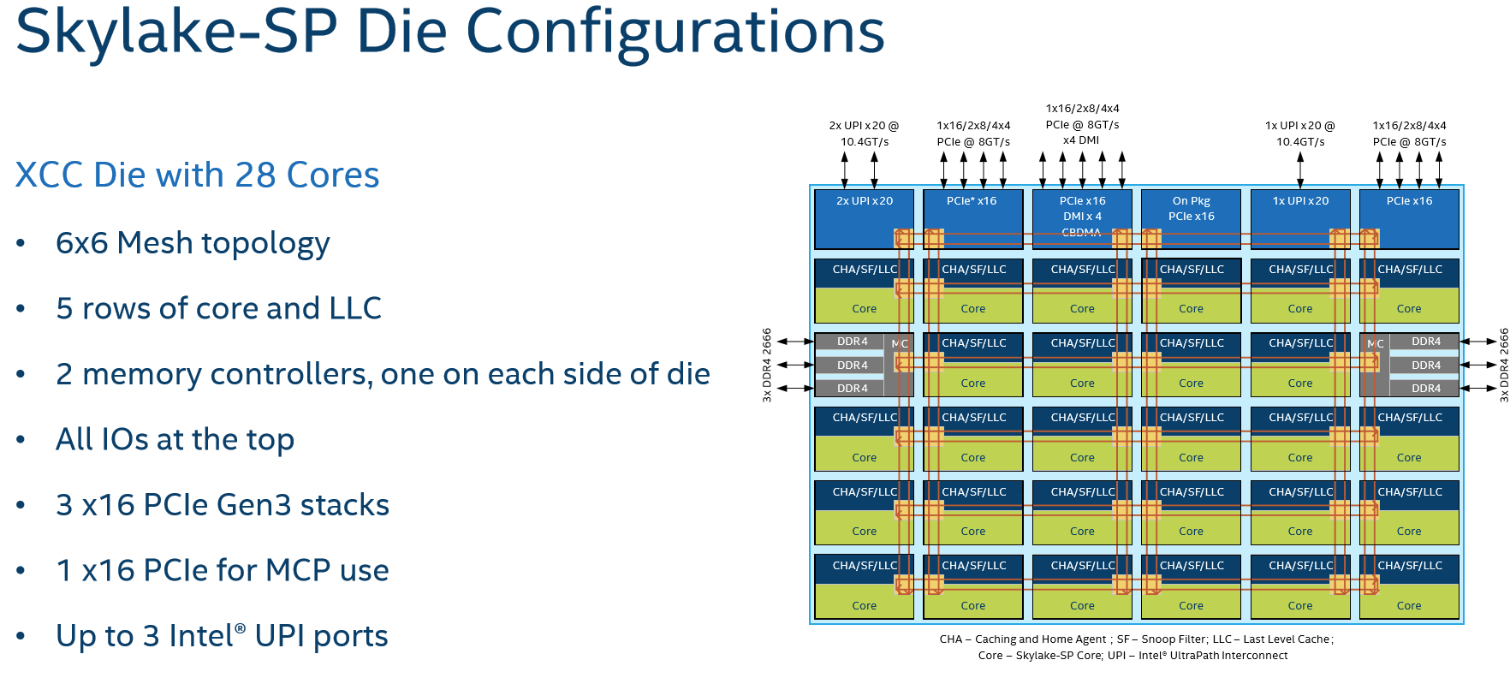

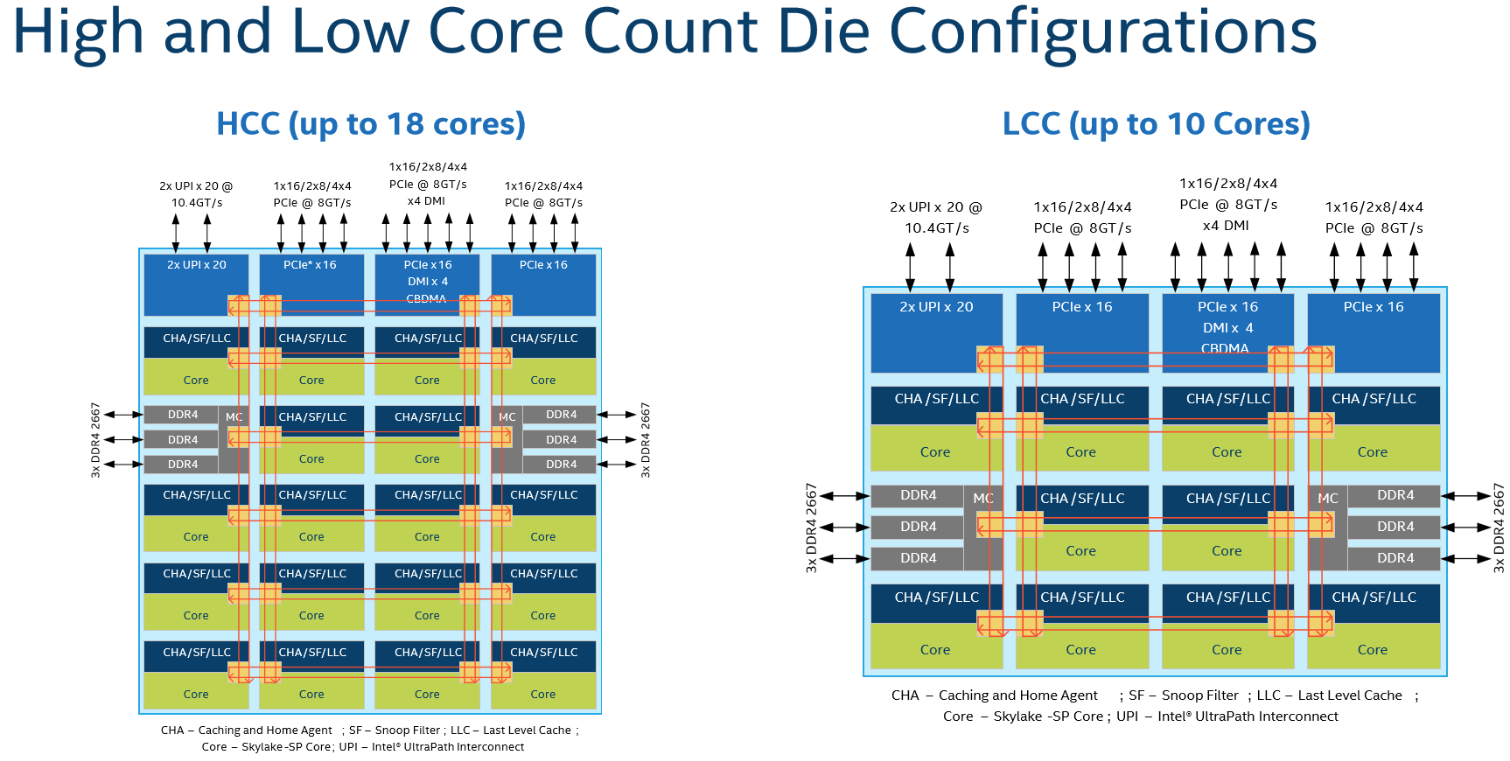

Like Intel's data center-oriented model, the W-3175X also features a familiar Skylake-SP microarchitecture, Mesh Topology, support for AVX-512, a 28-core XCC die and a rebalanced cache hierarchy that includes 1MB of private L2 cache per core and 38.5 MB of total shared L3.

Intel's Xeon processors are based on one of three dies: XCC (up to 28 cores), HCC (up to 18 cores), or LCC (up to 10 cores). Recently, the company used its HCC die for Core X-series CPUs with more than 10 cores and the LCC die for models with 10 or fewer cores. Now Intel uses the HCC die for all of its Core X-series models and the XCC die for its W-3175X.

The W-3175X also features a six-channel memory controller that supports up to 512GB of DDR4-2666 memory (less than the 768GB that standard Xeons support) in both ECC and non-ECC flavors. AMD's Threadripper platform supports up to 1.5TB of memory per chip, though its quad-channel controller can't provide as much throughput as the W-3175X (~35 GB/s vs. ~59 GB/s).

Threadripper exposes 60 native PCIe lanes. Although Intel fires back with 68 "platform" lanes, that number includes additional lanes carved from the C621 chipset. The W-3175X actually only exposes 52 native PCIe 3.0 lanes. Four are dedicated to the DMI 3.0 connection between its PCH and CPU, meaning you get access to 48 native lanes.
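The lane accounting above is simple arithmetic, and a short Python sketch makes the bookkeeping explicit. The lane counts are the ones quoted in the text; the chipset contribution is not stated there and is derived here on the assumption that Intel's "platform" figure is simply the usable CPU lanes plus the chipset's lanes.

```python
# Lane counts quoted in the text above.
CPU_LANES_TOTAL = 52   # native PCIe 3.0 lanes on the W-3175X
DMI_LANES = 4          # reserved for the DMI 3.0 link between CPU and PCH
PLATFORM_LANES = 68    # Intel's "platform" lane figure

# Lanes left over for add-in cards and storage.
usable_cpu_lanes = CPU_LANES_TOTAL - DMI_LANES

# Assumption: platform lanes = usable CPU lanes + chipset lanes.
# This remainder is derived for illustration, not quoted from the review.
chipset_lanes = PLATFORM_LANES - usable_cpu_lanes

print(f"Usable CPU lanes: {usable_cpu_lanes}")
print(f"Implied chipset lanes: {chipset_lanes}")
```

Under that assumption, the chipset accounts for the gap between the 48 usable native lanes and the 68-lane platform figure.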

| Active Cores - Non-AVX Frequency (GHz) | Base | 1 - 2 | 3 - 4 | 5 - 12 | 13 - 16 | 17 - 18 | 19 - 20 | 21 - 24 | 25 - 28 |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Xeon W-3175X | 3.1 | 4.3 | 4.1 | 4.0 | 4.0 | 4.0 | 4.0 | 4.0 | 3.8 |
| Xeon Platinum Scalable 8180 | 2.1 | 3.6 | 3.4 | 3.3 | 3.3 | 3.1 | 3.1 | 2.9 | 2.8 |
| Core i9-9980XE | 3.0 | 4.5 | 4.2 | 4.1 | 3.9 | 3.8 | - | - | - |

As you can see above, the W-3175X's base and Turbo Boost frequencies are considerably higher than those of the 205W Platinum 8180, which isn't surprising given the W-3175X's 255W TDP rating. The improvements are apparent in Intel's multi-core Turbo Boost 2.0 clock rates, which increase by between 700 MHz and roughly 1 GHz depending on the number of active cores. That should yield big gains in games and productivity apps, along with sizeable speed-ups in content creation and rendering workloads.
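To see where those gains land, the per-bin difference can be worked out directly from the turbo table. The sketch below uses only the non-AVX figures listed there; the variable and function names are illustrative.

```python
# Non-AVX turbo frequencies (GHz) per active-core bin, copied from the table.
BINS = ["1-2", "3-4", "5-12", "13-16", "17-18", "19-20", "21-24", "25-28"]
W3175X = [4.3, 4.1, 4.0, 4.0, 4.0, 4.0, 4.0, 3.8]
PLATINUM_8180 = [3.6, 3.4, 3.3, 3.3, 3.1, 3.1, 2.9, 2.8]


def turbo_uplift_mhz(fast, slow):
    """Return the per-bin frequency advantage in MHz, rounded to whole MHz."""
    return [round((f - s) * 1000) for f, s in zip(fast, slow)]


uplift = turbo_uplift_mhz(W3175X, PLATINUM_8180)
for cores, mhz in zip(BINS, uplift):
    print(f"{cores:>5} active cores: +{mhz} MHz")
```

Going by the table's figures, the advantage starts at 700 MHz with few active cores and grows as more cores load up, peaking at 1,100 MHz in the 21-24 core bin.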

Whereas the Core i9-9980XE uses a solder-based thermal interface material (STIM) to improve thermal transfer between its die and heat spreader, Intel's Xeon W-3175X uses the company's garden-variety thermal grease. We'd expect that to negatively affect overclocking, particularly in light of this CPU's prodigious power draw. Even at stock clock rates, expect to invest in a premium motherboard, high-capacity power supply, and beefy cooler to get the most out of the Xeon W-3175X. Intel goes so far as to recommend water-cooling.

| Processor | Cores / Threads | Base / Boost (GHz) | L3 Cache (MB) | PCIe 3.0 | DRAM | TDP | MSRP/RCP | Price Per Core |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| TR 2990WX | 32 / 64 | 3.0 / 4.2 | 64 | 64 (4 to PCH) | Quad DDR4-2933 | 250W | $1,799 | $56 |
| Intel Xeon W-3175X | 28 / 56 | 3.1 / 4.3 | 38.5 | ? | Six-channel DDR4-2666 | 255W | $2,999 | $107 |
| TR 2970WX | 24 / 48 | 3.0 / 4.2 | 64 | 64 (4 to PCH) | Quad DDR4-2933 | 250W | $1,299 | $54 |
| Core i9-9980XE | 18 / 36 | 3.0 / 4.5 | 24.75 | 44 | Quad DDR4-2666 | 165W | $1,979 | $110 |
| TR 2950X | 16 / 32 | 3.5 / 4.4 | 32 | 64 (4 to PCH) | Quad DDR4-2933 | 180W | $899 | $56 |
| Core i9-9960X | 16 / 32 | 3.1 / 4.5 | 22 | 44 | Quad DDR4-2666 | 165W | $1,684 | $105 |
| TR 2920X | 12 / 24 | 3.5 / 4.3 | 32 | 64 (4 to PCH) | Quad DDR4-2933 | 180W | $649 | $54 |
| Core i9-9900K | 8 / 16 | 3.6 / 5.0 | 16 | 16 | Dual DDR4-2666 | 95W | $500 | $62.5 |

The Xeon W-3175X's $3,000 recommended price (per 1,000 tray units) means you pay a lot more per core than for anything in AMD's Threadripper line-up. Intel obviously believes that its architecture (which, unlike Threadripper, doesn't require swapping between different modes for optimal performance across disparate workloads), its more robust feature set, and its six channels of memory throughput are worth the premium.
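The price-per-core column is just list price divided by core count, and the same division shows how large the premium is. This sketch uses the core counts and MSRP/RCP figures from the comparison table; the dictionary and helper names are illustrative.

```python
# (cores, list price in USD) taken from the comparison table.
CHIPS = {
    "TR 2990WX": (32, 1799),
    "Xeon W-3175X": (28, 2999),
    "TR 2970WX": (24, 1299),
    "Core i9-9980XE": (18, 1979),
    "TR 2950X": (16, 899),
}


def price_per_core(name):
    """Return the list price divided by the physical core count."""
    cores, price = CHIPS[name]
    return price / cores


for name in CHIPS:
    print(f"{name:<16} ${price_per_core(name):.2f} per core")

# How much more each W-3175X core costs than a 2990WX core.
premium = price_per_core("Xeon W-3175X") / price_per_core("TR 2990WX")
print(f"W-3175X per-core premium over 2990WX: {premium:.2f}x")
```

On these list prices, each W-3175X core costs a little over 1.9 times as much as a 2990WX core, before the platform cost is counted.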

Paul Alcorn is the Editor-in-Chief for Tom's Hardware US. He also writes news and reviews on CPUs, storage, and enterprise hardware.

- rantoc: Shame i don't live on the north-pole where this cpu could be fully utilized - As a space heater and cpu ;)

- shrapnel_indie:

  > Shame i don't live on the north-pole where this cpu could be fully utilized - As a space heater and cpu ;)

  With this current polar vortex.... you might not need to live at one of the poles to take advantage of the space heater qualities.

- jimmysmitty: As impressive as it is that Intel can match or beat more cores with less Intel really needs to get pricing in check. Its hard to justify this CPU when its cost is nearly double but the performance is not always double.

  I like Intels platform but man they really have to come back down to earth and start competing with AMD from a price perspective as well.

- rschiwal: I've always been an AMD fan. For my gaming and Blender use it's Ryzen all the way! you can't beat the performance/cost ratio, but as a system administrator, I would recommend a Dell server with this processor as a core server in business infrastructure. Xeon is a known commodity. I would love to see Threadripper servers in non-critical operations until I know how dependable they are, but they are the new hotness. In business, you are looking for a dependable tractor, not a flashy sports car.

- salgado18:

  > Shame i don't live on the north-pole where this cpu could be fully utilized - As a space heater and cpu ;)

  *fortunatelly. One Blender run would melt the entire polar cap.

- bloodroses:

  > > Shame i don't live on the north-pole where this cpu could be fully utilized - As a space heater and cpu ;)
  >
  > With this current polar vortex.... you might not need to live at one of the poles to take advantage of the space heater qualities.

  I know what you mean. I live in Michigan and was greeted to -6 F outside this morning. :( I'm just glad this is only supposed to stay for a day or 2.

- logainofhades: More ridiculous pricing from team blue. You could build a couple of threadripper systems, for the cost of this single Intel system.

- jimmysmitty:

  > More ridiculous pricing from team blue. You could build a couple of threadripper systems, for the cost of this single Intel system.

  The issue is the market this is geared towards. That market doesn't see the same way we do. As another user said they will stick with what has worked until TR can be proven to work as well and support the same.

  I agree the pricing is a bit insane though and Intel needs to get on the same level but I doubt they will until AMD truly threatens them. I mean look at the results. Its a 28 core chip thats performing on the same level and sometimes beating a 32 core chip.

- dorsai: The vast majority of corporate IT departments will not care at all about the unlocked multiplier...most have strict policies about overclocking being a no go...so there's no reason to boost the rating of this chip because of it. Outside of a few key exceptions most of the test results would never justify the price associated with migration to the w-3173x platform...indeed I would guess that few of these processors will ever be bought outside of corporate IT shops with the deep pockets to purchase them. This chip is destined to be nothing but a niche product exemplifying both what Intel can do when pushed to it...and a lesson in cost vs performance economics

- Brian_R170: If the system has the potential to earn you tens of thousands of dollars more than a competing system, then spending an extra $3K is a no-brainer. Of course, you have evaluate your choices 100% objectively, which isn't always easy to do without actually purchasing and using them, so Dorsai is likely correct that the vast majority will end up medium/large corporations. However, the few that do end up with reviewers and enthusiasts will undoubtedly garner the most attention.