Intel Xeon E5-2600: Doing Damage With Two Eight-Core CPUs

Intel's vaunted Sandy Bridge architecture has finally made its way to the company's dual- and quad-socket-capable Xeon processors. We got our hands on a pair of eight-core Xeon E5-2687W CPUs to compare against the older Xeon 5600- and 5500-series chips.

Xeon E5-2687W: Replacing The Best With Something Better

After almost 14 years of writing about technology, I think it’s safe to say that I’ll perpetually enjoy getting my hands on the latest gear, testing it this way and that, and conveying my own impressions to folks who share my passion.

Although gaming-oriented components garner by far the most attention on this site, enthusiasts can’t help but get excited about more IT-oriented hardware, too. You might have a Phenom II X6 in your gaming box, but there’s a fair chance you also joined the millions of readers who were curious about the water-cooled quad-Opteron rig that Puget Systems built in What Does A $16,000+ PC Look Like, Anyway?

Today’s story takes us down a similar path. We already evaluated the entire family of Sandy Bridge-E based Core i7-3000-series CPUs in Intel Core i7-3960X Review: Sandy Bridge-E And X79 Express and Intel Core i7-3930K And Core i7-3820: Sandy Bridge-E, Cheaper. We know that Intel neutered all of those desktop-oriented processors to some degree—whether to hit certain power targets at client-friendly clock rates or more easily differentiate its server parts, we may never know for sure.

But now we have access to the full Monty, branded as Xeon E5 for single-, dual-, and quad-socket servers.

Meet Sandy Bridge-EP

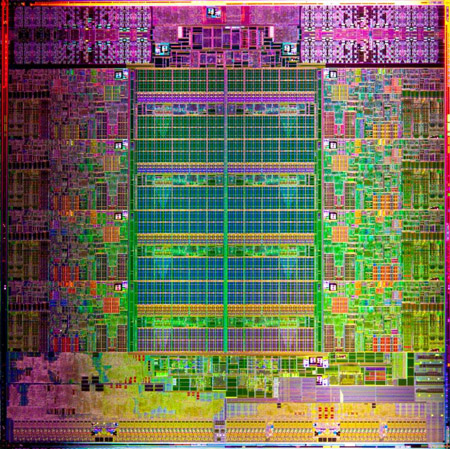

Intel uses the same piece of silicon to enable its Xeon E5s and Core i7-3000-series CPUs. As we know, Core i7s top out with six cores and 15 MB of shared L3. But the die actually hosts eight cores and 20 MB of last-level cache.

The modularity of this design is enabled by the same ring bus concept first introduced in Intel’s Second-Gen Core CPUs: The Sandy Bridge Review more than a year ago (more accurately, Xeon 7500s were Intel's first CPUs with ring buses, but we never tested them). You have cores, PCI Express control, QPI links, and a quad-channel memory controller all with stops around the ring. Because each core is tied to a 2.5 MB slice of L3 cache, it’s relatively easy to manipulate the die’s specifications to create a large number of derivative products with performance that scales up and down in a predictable way.


For a product like Core i7-3960X, Intel simply snipped two cores and their respective 2.5 MB cache slices. But the L3 can even be tweaked more specifically than that. A few Xeon E5 models present 2 MB/core, demonstrating granularity down to 512 KB chunks.
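The slice arithmetic above can be sketched in a few lines of Python. This is only an illustrative calculation: the function name `l3_total_kb` and the constant names are our own labels, and the per-core figures are the ones quoted in the text.

```python
# Illustrative sketch: shared L3 capacity as a function of active cores
# and per-core slice size. Names are hypothetical; figures come from the text.

FULL_SLICE_KB = 2560      # 2.5 MB of L3 tied to each core
REDUCED_SLICE_KB = 2048   # 2 MB/core, as on a few Xeon E5 models


def l3_total_kb(cores: int, slice_kb: int = FULL_SLICE_KB) -> int:
    """Return total shared L3 in KB for a given number of active cores."""
    return cores * slice_kb


if __name__ == "__main__":
    print(l3_total_kb(8) / 1024)               # full die, in MB
    print(l3_total_kb(6) / 1024)               # six-core Core i7-3960X, in MB
    print(FULL_SLICE_KB - REDUCED_SLICE_KB)    # trim step between slice sizes, in KB
```

With eight cores the total works out to 20 MB, with six cores to 15 MB, and the gap between the two slice sizes is the 512 KB step mentioned above.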

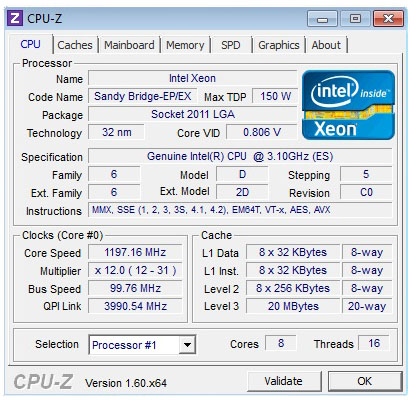

Today we’re able to test Sandy Bridge-EP (for Efficient Performance) in its most potent form: Xeon E5-2687W—a 150 W workstation-only processor boasting all eight of the die’s physical cores, its full 20 MB cache, twin 8 GT/s QPI links, 40 lanes of on-die third-gen PCIe, and a quad-channel memory controller capable of DDR3-1600. Manufactured at 32 nm, this highly-integrated SoC is composed of 2.27 billion transistors packed onto a portly 434 mm² die.

A maximum Turbo Boost frequency of 3.8 GHz makes the Xeon E5-2687W a little slower than Core i7-3960X, which hits 3.9 GHz, in lightly-threaded applications. However, a 3.1 GHz base frequency compares favorably to the -3960X’s 3.3 GHz clock in more taxing workloads thanks to the Xeon’s two-core advantage.
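As a rough back-of-the-envelope check on that claim, the sketch below multiplies core count by base clock. Aggregate GHz is a crude proxy that we are assuming purely for illustration; it ignores Turbo Boost, memory bandwidth, and how well a given workload actually scales across cores. The function name is hypothetical.

```python
# Back-of-the-envelope comparison: cores x base clock.
# This is an assumed, simplified metric, not a benchmark result.


def aggregate_base_ghz(cores: int, base_ghz: float) -> float:
    """Sum of base clocks across all physical cores."""
    return cores * base_ghz


xeon = aggregate_base_ghz(8, 3.1)      # Xeon E5-2687W
core_i7 = aggregate_base_ghz(6, 3.3)   # Core i7-3960X

if __name__ == "__main__":
    print(f"Xeon E5-2687W: {xeon:.1f} GHz aggregate")
    print(f"Core i7-3960X: {core_i7:.1f} GHz aggregate")
    print(f"Ratio: {xeon / core_i7:.2f}x")
```

By this simplified measure the Xeon comes out roughly 25% ahead at base clocks, despite its lower per-core frequency.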

Although the Xeon includes more cache, it maintains the same one-core-to-2.5 MB ratio as the Core i7, and indeed most of the other Xeon E5 models.

The other notable difference between single-socket Core i7s/Xeon E5-1600s and Intel’s multi-socket platforms is the exposure of QPI. When Intel replaced the Gulftown-based processors with Sandy Bridge-E, it simultaneously shifted from three-piece platforms (CPU, northbridge, and southbridge) to a two-chip layout (CPU, platform controller hub), eliminating the I/O hub responsible for hosting PCI Express connectivity. The link between processor and northbridge, previously facilitated by QPI, was severed. With PCIe built right into Sandy Bridge-E, the southbridge component could be hitched right up to the CPU through a PCI Express-like Direct Media Interface. Thus, QPI is completely inactive on Sandy Bridge-E.

Multi-socket systems still need it for inter-processor communication, though. Sandy Bridge-EP CPUs feature two QPI links. In 2S configurations, they’re both used to shuttle data back and forth between sockets. With four processors in play, they create more of a circle, connecting each chip to the right and left. Intel tinkers with the QPI data rate as a differentiating feature, but whereas the Xeon 5600s topped out at 6.4 GT/s, yielding 25.6 GB/s per link, the highest-end Xeon E5s host 8 GT/s links, pushing bandwidth to 32 GB/s per link. Obviously, in a 2S workstation like ours, 64 GB/s of aggregate QPI bandwidth is super-duper overkill. But we’re happy to know that the days of front-side bus-based bottlenecks are over.
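The per-link figures above can be reproduced with a short calculation. This is a minimal sketch that assumes each QPI link carries 2 bytes of data per transfer in each direction and that the quoted bandwidth counts both directions; those assumptions match the numbers in the text, and the function and constant names are our own.

```python
# Sketch of the QPI bandwidth arithmetic quoted above.
# Assumptions (labeled, not taken from the article): 2 bytes of payload per
# transfer per direction, and bandwidth figures that sum both directions.

BYTES_PER_TRANSFER = 2   # assumed 16 data bits per direction
DIRECTIONS = 2           # links are bidirectional


def qpi_link_gbs(gt_per_s: float) -> float:
    """Bidirectional bandwidth of one QPI link in GB/s."""
    return gt_per_s * BYTES_PER_TRANSFER * DIRECTIONS


def aggregate_gbs(gt_per_s: float, links: int) -> float:
    """Combined bandwidth across several links between two sockets."""
    return qpi_link_gbs(gt_per_s) * links


if __name__ == "__main__":
    print(qpi_link_gbs(6.4))        # Xeon 5600-class link
    print(qpi_link_gbs(8.0))        # top-end Xeon E5 link
    print(aggregate_gbs(8.0, 2))    # both links in a 2S workstation
```

Under these assumptions a 6.4 GT/s link yields 25.6 GB/s, an 8 GT/s link yields 32 GB/s, and two 8 GT/s links give the 64 GB/s aggregate cited for a dual-socket system.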

Aside from core count, last-level cache, and QPI, Sandy Bridge-EP is architecturally similar to Sandy Bridge-E. AVX support, AES-NI, second-gen Turbo Boost, Hyper-Threading—all of those familiar capabilities are included.

The only other difference of note is that Sandy Bridge-EP’s quad-channel memory controller supports mirroring, single device data correction, and lockstep. All three were available from Xeon 5500/5600 as well, but the whole triple-channel memory controller arrangement necessitated compromises. Now, you can mirror two channels and recover from a failure in each. Hooray for nice, round numbers.


CaedenV My brain cannot comprehend what CS5 would look like with this combined with a 1TB R4 drive, and the GTX680 version of the Quatro would look like... and I am sure my wallet cannot!

Great article! I was not expecting my mind to be blown away today, and it was :)

dalethepcman No gaming benchmarks? I know this is a high workstation / mid server build, but you know some of the boutiques will make a gaming rig out of any platform. Just out of curiosity, I would have liked to see 2x7970 or 2x580 and a few gaming benchmarks thrown in. :)

willard (quoting dalethepcman: "No gaming benchmarks? I know this is a high workstation / mid server build, but you know some of the boutiques will make a gaming rig out of any platform. Just out of curiosity, I would have liked to see 2x7970 or 2x580 and a few gaming benchmarks thrown in.") I'd be really surprised to see these in gaming machines, even in the high end boutiques. That's a $2k processor they reviewed, and basically all it offers over the $1k SB-E chip (for gamers) is an extra pair of cores, which games can't make use of.

reclusiveorc I wonder how fast TempEncode would chew thru transcoding avi/wmv files to mp3/mp4

willard (quoting esrever: "why aren't AMD cpus tested too? I wouldn't mind seeing how 2x interlagos stacks up.") Anandtech benched those next to the new Xeons. Went about as well as Bulldozer vs. Sandy Bridge.

http://www.anandtech.com/show/5553/the-xeon-e52600-dual-sandybridge-for-servers/6

cangelini (quoting esrever: "why aren't AMD cpus tested too? I wouldn't mind seeing how 2x interlagos stacks up.") Mentioned on the test page--I've invited them to send hardware and they haven't moved on it yet.

willard (quoting cangelini: "Mentioned on the test page--I've invited them to send hardware and they haven't moved on it yet.") I would guess that's because Interlagos is garbage compared to the new Xeons and they know it. I don't think they're terribly eager for the front page of Tom's Hardware to show the low end Xeons beating the best Interlagos has to offer.

Onus What, or who, was the target? Are there military applications for this weapon?

Sorry, vote me down all you like, but the title was just silly.