Tom's Hardware Verdict

Although GeForce GTX 1660 Ti costs more than the 1060 6GB it replaces, Nvidia's newest Turing-based board delivers performance similar to GeForce GTX 1070. High performance, a reasonable price tag, and modest power consumption come together in a solid upper-mainstream graphics card.

Pros

- Great performance at 1920 x 1080
- Acceptable frame rates at 2560 x 1440
- Retains Turing's video encode/decode acceleration features
- 120W board power compares favorably to AMD competition

Cons

- No RT/Tensor cores mean you won't be able to try ray tracing or DLSS


Turing Without The RTX

11/21/2019 Update: Since the launch of the GTX 1660 Ti in February 2019, the GPU landscape has changed dramatically. Nvidia has released a swath of "Super" cards based on the same Turing architecture that offer higher performance at lower prices than the company's initial Turing lineup. Most relevant to potential buyers of the GTX 1660 Ti is the GeForce GTX 1660 Super, which delivers similar performance to the 1660 Ti at a lower starting price of $229. At this writing, that's about $30 less than the lowest-price GTX 1660 Ti.

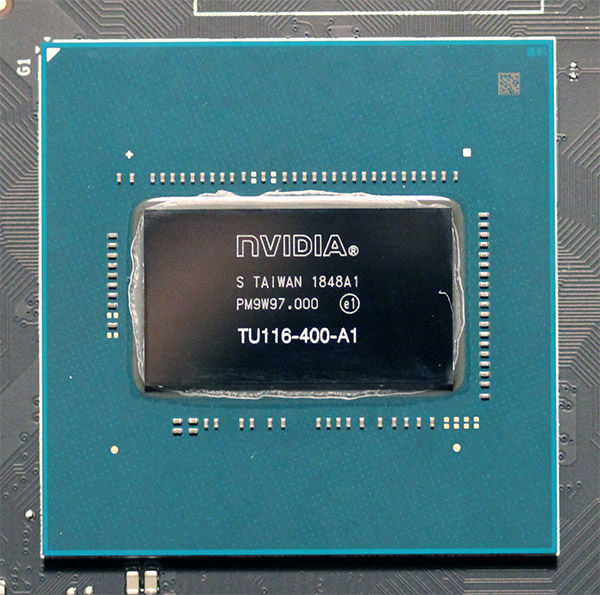

Nvidia GeForce GTX 1660 Ti is built on TU116, an all-new graphics processor that incorporates Turing’s improved shaders, its unified cache architecture, support for adaptive shading, and a full complement of video encode/decode acceleration features. The GPU is paired with GDDR6 memory, just like the higher-end GeForce RTX 20-series models. But it’s not fast enough to justify tacking on RT cores for accelerated ray tracing or Tensor cores for inferencing in games. As a result, TU116 is a leaner chip with a list of specifications that emphasizes today’s top titles.

Nvidia says that GeForce GTX 1660 Ti will start at $280 and completely replace GeForce GTX 1060 6GB. Although that base price is $30 (or 12 percent) higher than where the Pascal-based 1060 6GB began its journey back in 2016, the company claims GeForce GTX 1660 Ti is up to 1.5 times faster—and at the same 120W board power rating, no less.
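To put those launch numbers in perspective, here is a minimal Python sketch of the value math. It assumes a $250 starting price for the GTX 1060 6GB (implied by the "$30 higher" figure) and takes Nvidia's "up to 1.5 times faster" claim at face value, so the result is a best case rather than a measurement. The function name `relative_value` is ours, purely for illustration.

```python
def relative_value(price_new, price_old, speedup):
    """Return (fractional price increase, performance-per-dollar ratio of new vs. old)."""
    price_increase = (price_new - price_old) / price_old
    perf_per_dollar_ratio = speedup / (price_new / price_old)
    return price_increase, perf_per_dollar_ratio


# $280 for GTX 1660 Ti; $250 is the GTX 1060 6GB price implied by the text.
# The 1.5x speedup is Nvidia's "up to" claim, not a benchmark result.
increase, value_ratio = relative_value(280, 250, 1.5)

print(f"Price increase: {increase:.0%}")
print(f"Best-case performance per dollar vs. GTX 1060 6GB: {value_ratio:.2f}x")
```

If the 1.5x claim held across the board, performance per dollar would improve by roughly a third despite the higher price. Real-world gains depend on the benchmark results.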

Improved performance per dollar isn’t something we’ve seen much of from the Turing generation thus far. Can Nvidia turn that around with a GPU more purpose-built for performance at 1920 x 1080?

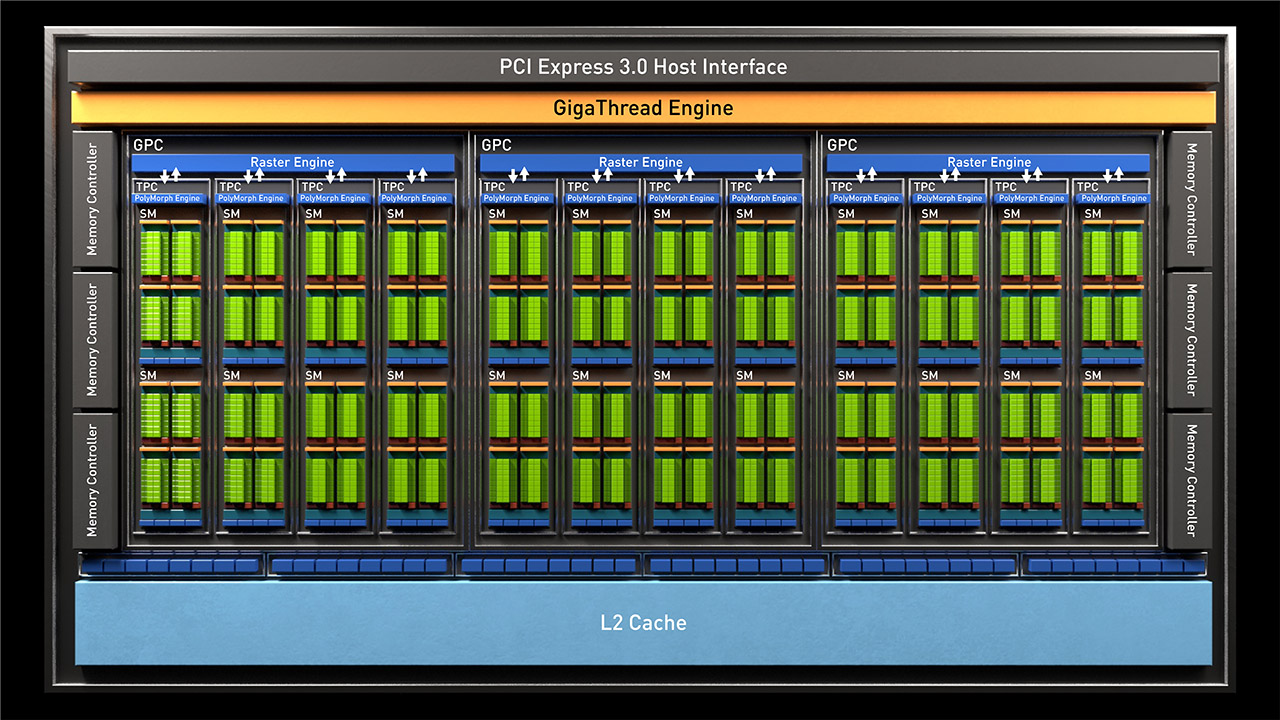

Meet TU116: Turing Sans RT and Tensor Cores

We’ve seen Nvidia launch four separate GPUs as it escorts us down the Turing hierarchy. With each, the company peels away resources to target lower price points. But we know it’s trying to maintain balance along the way, minimizing the bottlenecks that’d unnecessarily rob lower-end processors of their peak performance.

GeForce RTX 2060 is equipped with 44 percent of the 2080 Ti’s CUDA cores and texture units, 54 percent of its ROPs and memory bandwidth, and 50 percent of its L2 cache. Before the 2060 launched, we suspected that luxuries like RT and Tensor cores would no longer make sense at those levels. But a series of patches for Battlefield V—the one ray tracing-enabled game available at the time—enabled big performance gains, proving that Turing’s signature features could still be utilized at playable frame rates.

It turns out we were off by one tier. Nvidia considers TU116 the boundary where shading horsepower drops low enough to preclude Turing’s future-looking capabilities from serving much purpose. After stripping away the RT and Tensor cores, we’re left with a 284mm² chip composed of 6.6 billion transistors manufactured using TSMC’s 12nm FinFET process. But despite its smaller transistors, TU116 is still 42 percent larger than the GP106 processor that preceded it.
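The die comparison is easy to check with a few lines of Python. This sketch uses the GP106 figures from the specification table later in this review (4.4 billion transistors, 200 mm²). The helper `density_mtr_per_mm2` is a hypothetical name for illustration.

```python
def density_mtr_per_mm2(transistors_billion, area_mm2):
    """Transistor density in millions of transistors per square millimeter."""
    return transistors_billion * 1000 / area_mm2


# TU116 figures from the text; GP106 figures from the spec table.
tu116_density = density_mtr_per_mm2(6.6, 284)
gp106_density = density_mtr_per_mm2(4.4, 200)

area_growth = 284 / 200 - 1        # fractional increase in die area
transistor_growth = 6.6 / 4.4 - 1  # fractional increase in transistor count

print(f"TU116: {tu116_density:.1f} MTr/mm^2, GP106: {gp106_density:.1f} MTr/mm^2")
print(f"Die area: +{area_growth:.0%}, transistors: +{transistor_growth:.0%}")
```

By this rough measure, TU116 packs about 23.2 million transistors per mm² against GP106's 22.0, so most of the 50 percent increase in transistor count shows up as extra die area.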


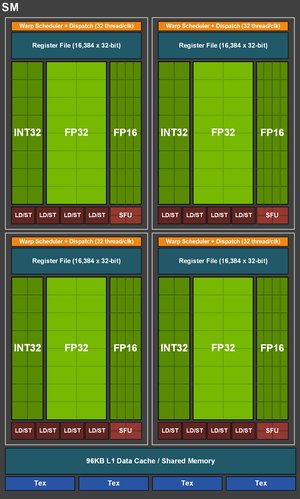

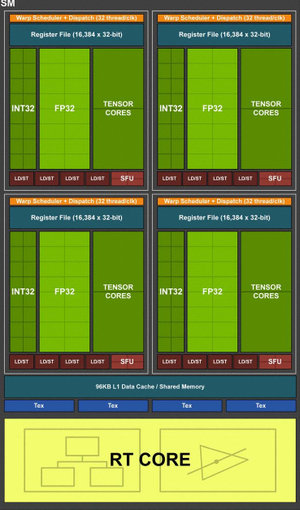

Some of the growth is attributable to Turing’s more sophisticated shaders. Like the higher-end GeForce RTX 20-series cards, GeForce GTX 1660 Ti supports simultaneous execution of FP32 arithmetic instructions, which constitute most shader workloads, and INT32 operations (for addressing/fetching data, floating-point min/max, compare, etc.). When you hear about Turing cores achieving better performance than Pascal at a given clock rate, this capability largely explains why.

Turing’s Streaming Multiprocessors are composed of fewer CUDA cores than Pascal’s, but the design compensates in part by spreading more SMs across each GPU. The newer architecture assigns one scheduler to each set of 16 CUDA cores (2x Pascal), along with one dispatch unit per 16 CUDA cores (same as Pascal). Four of those 16-core groupings comprise the SM, along with 96KB of cache that can be configured as 64KB L1/32KB shared memory or vice versa, and four texture units. Because Turing doubles up on schedulers, it only needs to issue an instruction to the CUDA cores every other clock cycle to keep them full. In between, it's free to issue a different instruction to any other unit, including the INT32 cores.

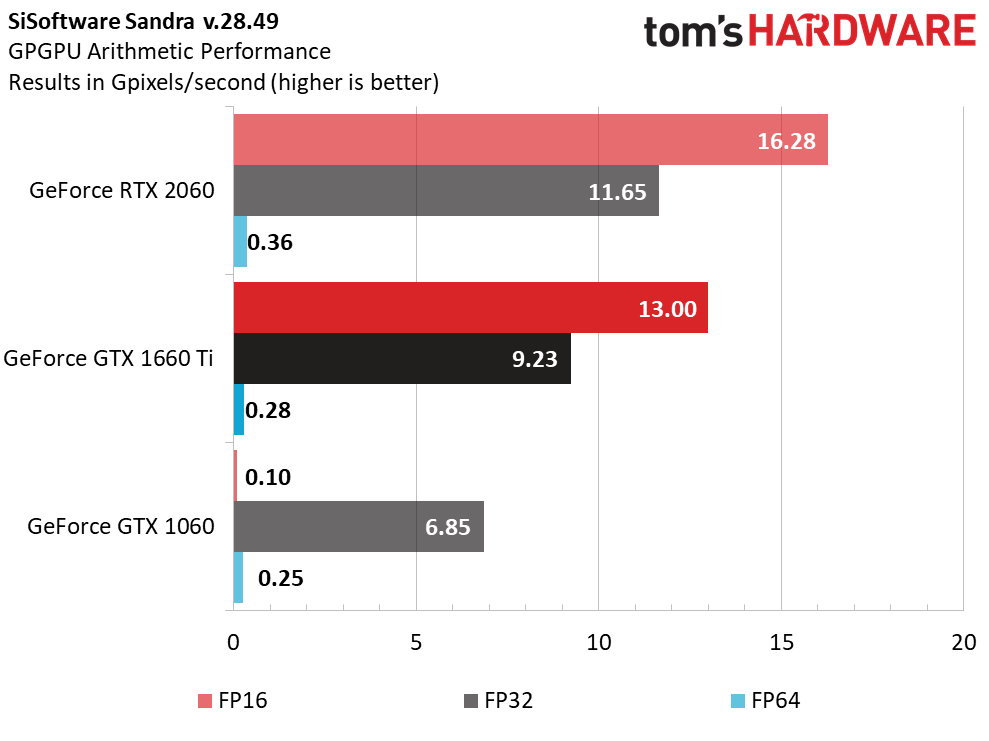

In TU116 specifically, Nvidia says it replaces Turing’s Tensor cores with 128 dedicated FP16 cores per SM, which allow GeForce GTX 1660 Ti to process half-precision operations at 2x the rate of FP32. The other Turing-based GPUs boast double-rate FP16 as well though, so it’s unclear how GeForce GTX 1660 Ti is unique within its family. More obvious, based on the chart below, is that the 1660 Ti delivers a massive improvement to half-precision throughput compared to GeForce GTX 1060 and its Pascal-based GP106 chip.

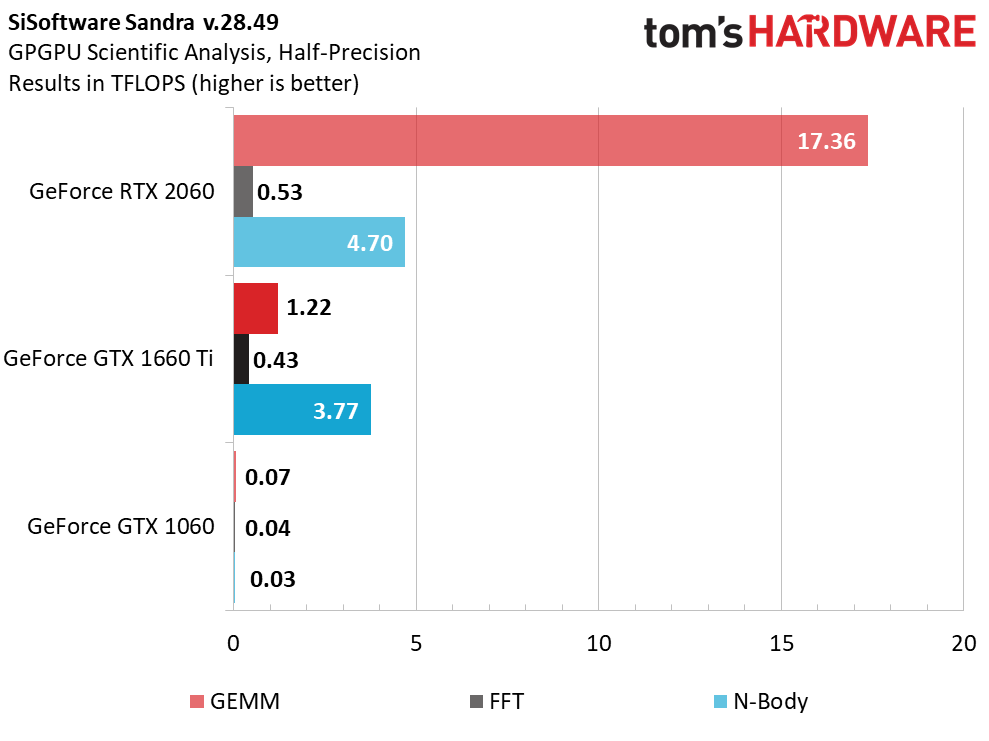

But when we run Sandra's Scientific Analysis module, which tests general matrix multiplies, we see how much more FP16 throughput TU106's Tensor cores achieve compared to TU116. GeForce GTX 1060, which only supported FP16 symbolically, barely registers on the chart at all.

In addition to the Turing architecture’s shaders and unified cache, TU116 also supports a pair of algorithms called Content Adaptive Shading and Motion Adaptive Shading, together referred to as Variable Rate Shading. We covered this technology in Nvidia’s Turing Architecture Explored: Inside the GeForce RTX 2080. That story also introduced Turing's accelerated video encode and decode capabilities, which carry over to GeForce GTX 1660 Ti as well.

Putting It All Together...

Nvidia packs 24 SMs into TU116, splitting them between three Graphics Processing Clusters. With 64 FP32 cores per SM, that’s 1,536 CUDA cores and 96 texture units across the entire GPU. Board partners will undoubtedly target a range of frequencies to fill the gap between GTX 1660 Ti and RTX 2060. However, the official base clock rate is 1,500 MHz with a GPU Boost specification of 1,770 MHz. Our EVGA GeForce GTX 1660 Ti XC Black Gaming sample topped out around 1,845 MHz through three runs of Metro: Last Light, while other cards we’ve seen readily exceed 2,000 MHz. On paper, then, GeForce GTX 1660 Ti offers up to 5.4 TFLOPS of FP32 performance and 10.9 TFLOPS of FP16 throughput.
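The on-paper throughput figures follow directly from the core count and the GPU Boost clock. Here is a minimal Python sketch of that arithmetic. It assumes each CUDA core retires one fused multiply-add (two floating-point operations) per clock, which is the usual convention for peak ratings, and it applies the 2x FP16 rate described earlier. The function name is illustrative only.

```python
def peak_tflops(sms, cores_per_sm, clock_mhz, flops_per_core_per_clock=2):
    """Peak throughput in TFLOPS, counting one FMA as two operations per core per clock."""
    cores = sms * cores_per_sm
    return cores * flops_per_core_per_clock * clock_mhz * 1e6 / 1e12


cuda_cores = 24 * 64      # 24 SMs with 64 FP32 cores each
texture_units = 24 * 4    # four texture units per SM

fp32_tflops = peak_tflops(24, 64, 1770)  # rated GPU Boost clock of 1,770 MHz
fp16_tflops = 2 * fp32_tflops            # half precision runs at twice the FP32 rate

print(cuda_cores, texture_units)
print(f"FP32: {fp32_tflops:.1f} TFLOPS, FP16: {fp16_tflops:.1f} TFLOPS")
```

Swapping in the roughly 1,845 MHz we observed on the EVGA sample would raise both numbers slightly, which is why the rated figures are best read as a baseline and not a ceiling.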

Six 32-bit memory controllers give TU116 an aggregate 192-bit bus, which is populated by 12 Gb/s GDDR6 modules (Micron MT61K256M32JE-12:A) that push up to 288 GB/s. That’s 50% more memory bandwidth than GeForce GTX 1060 gets, helping GeForce GTX 1660 Ti maintain its performance advantage at 2560 x 1440 with anti-aliasing enabled.

Each memory controller is associated with eight ROPs and a 256KB slice of L2 cache. In total, TU116 exposes 48 ROPs and 1.5MB of L2. GeForce GTX 1660 Ti’s ROP count matches that of RTX 2060, which also utilizes 48 render outputs. But its L2 cache slices are half as large.
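The memory subsystem numbers in the two paragraphs above all scale with the number of 32-bit controllers. The following Python sketch reproduces them. The 8 Gb/s data rate used for GeForce GTX 1060 is an assumption inferred from its 192 GB/s rating on a 192-bit bus, and `memory_subsystem` is a hypothetical helper name.

```python
def memory_subsystem(controllers, data_rate_gbps, bits_per_controller=32,
                     rops_per_controller=8, l2_kb_per_controller=256):
    """Derive bus width, bandwidth, ROP count, and L2 size from the controller count."""
    bus_bits = controllers * bits_per_controller
    return {
        "bus_bits": bus_bits,
        # Gb/s per pin times bus width gives Gb/s; divide by 8 for GB/s.
        "bandwidth_gbs": bus_bits * data_rate_gbps / 8,
        "rops": controllers * rops_per_controller,
        "l2_mb": controllers * l2_kb_per_controller / 1024,
    }


tu116 = memory_subsystem(controllers=6, data_rate_gbps=12)  # GDDR6 at 12 Gb/s
gp106 = memory_subsystem(controllers=6, data_rate_gbps=8)   # GDDR5, assumed 8 Gb/s

uplift = tu116["bandwidth_gbs"] / gp106["bandwidth_gbs"] - 1

print(tu116)
print(f"Bandwidth advantage over GTX 1060: {uplift:.0%}")
```

Because both chips use six controllers with the same per-controller ROP and L2 allotment, the faster GDDR6 is the only thing separating their memory subsystems on paper.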

Despite a larger die, a 50%-higher transistor count, and a more aggressive GPU Boost clock rate, GeForce GTX 1660 Ti is rated for the same 120W as GeForce GTX 1060. Unfortunately, neither graphics card includes multi-GPU support. Nvidia continues pushing the narrative that SLI is meant to drive higher absolute performance, rather than give gamers a way to match single-GPU configurations.

| | EVGA GeForce GTX 1660 Ti XC Black Gaming | GeForce RTX 2060 FE | GeForce GTX 1060 FE | GeForce GTX 1070 FE |
|---|---|---|---|---|
| Architecture (GPU) | Turing (TU116) | Turing (TU106) | Pascal (GP106) | Pascal (GP104) |
| CUDA Cores | 1536 | 1920 | 1280 | 1920 |
| Peak FP32 Compute | 5.4 TFLOPS | 6.45 TFLOPS | 4.4 TFLOPS | 6.5 TFLOPS |
| Tensor Cores | N/A | 240 | N/A | N/A |
| RT Cores | N/A | 30 | N/A | N/A |
| Texture Units | 96 | 120 | 80 | 120 |
| Base Clock Rate | 1500 MHz | 1365 MHz | 1506 MHz | 1506 MHz |
| GPU Boost Rate | 1770 MHz | 1680 MHz | 1708 MHz | 1683 MHz |
| Memory Capacity | 6GB GDDR6 | 6GB GDDR6 | 6GB GDDR5 | 8GB GDDR5 |
| Memory Bus | 192-bit | 192-bit | 192-bit | 256-bit |
| Memory Bandwidth | 288 GB/s | 336 GB/s | 192 GB/s | 256 GB/s |
| ROPs | 48 | 48 | 48 | 64 |
| L2 Cache | 1.5MB | 3MB | 1.5MB | 2MB |
| TDP | 120W | 160W | 120W | 150W |
| Transistor Count | 6.6 billion | 10.8 billion | 4.4 billion | 7.2 billion |
| Die Size | 284 mm² | 445 mm² | 200 mm² | 314 mm² |
| SLI Support | No | No | No | Yes (MIO) |

EVGA’s GeForce GTX 1660 Ti XC Black Gaming

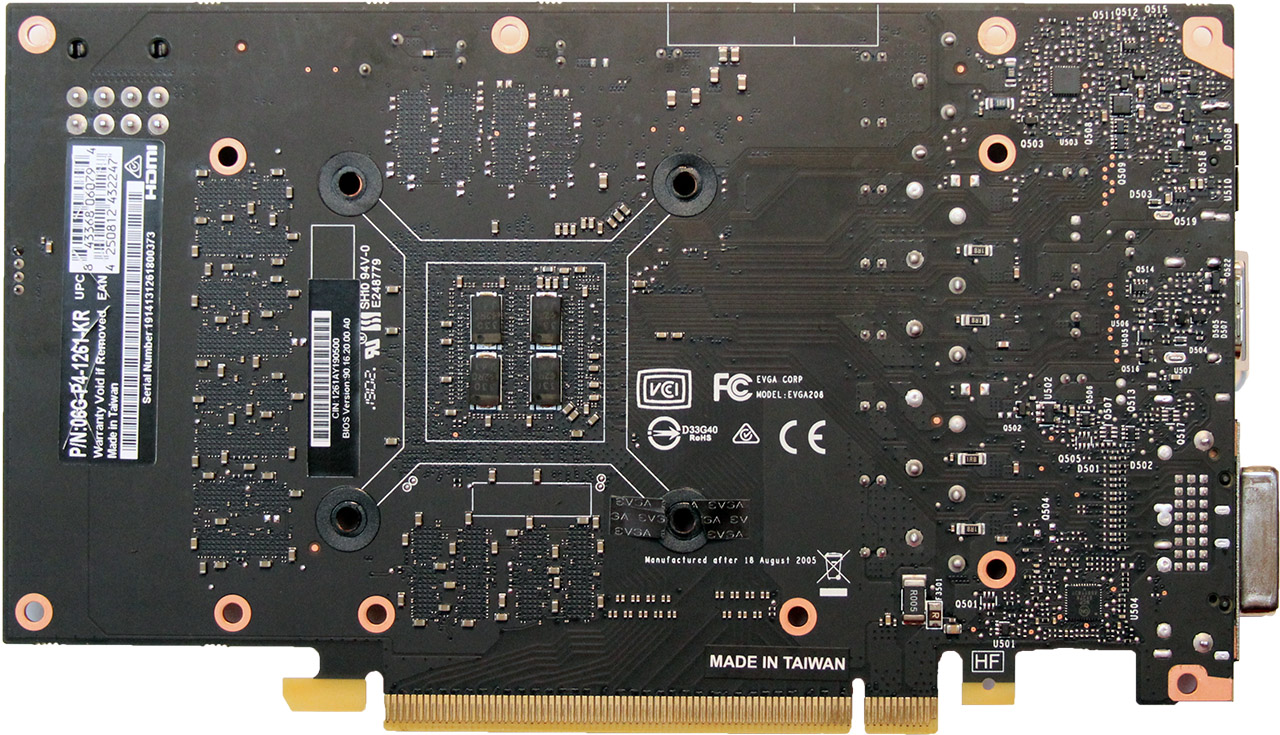

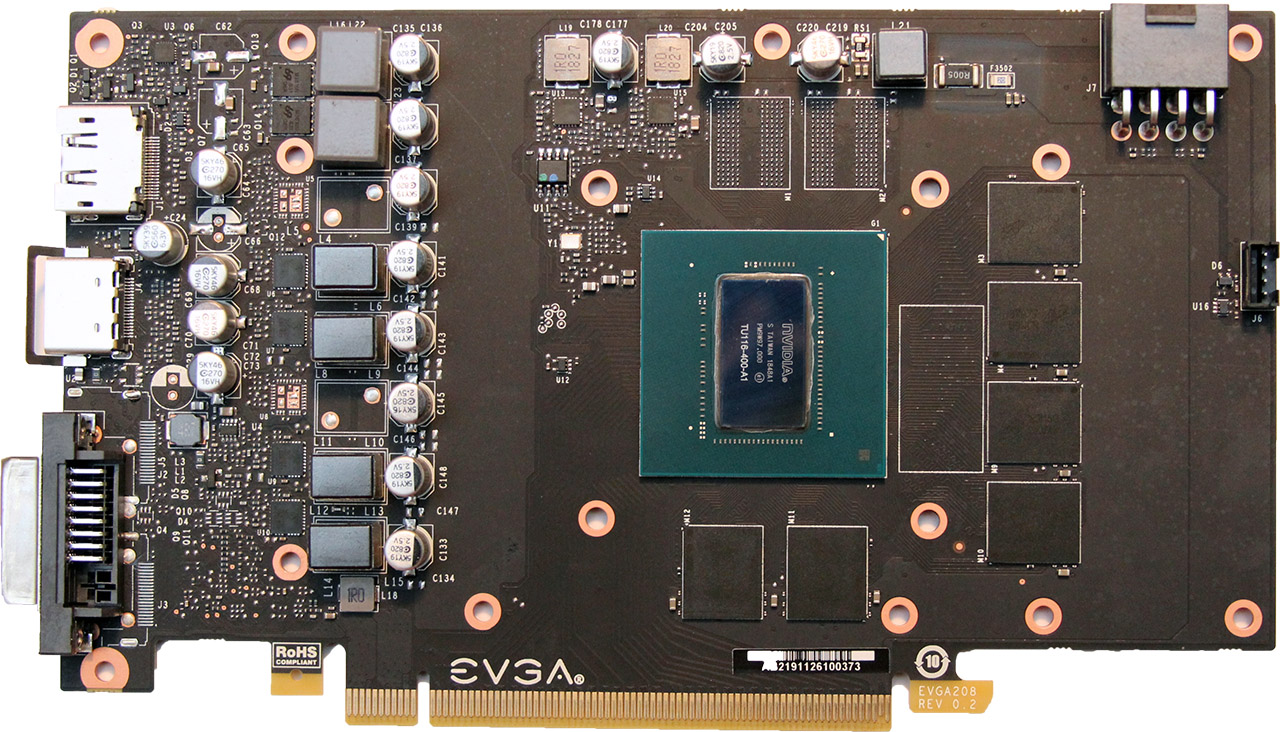

The GeForce GTX 1060 Founders Edition was also a 120W card and it squeaked by with one six-pin auxiliary connector. EVGA’s GeForce GTX 1660 Ti XC Black Gaming, on the other hand, employs an eight-pin input, giving it quite a bit of additional headroom. As we’ll see in our per-rail power testing, the card draws 3A of current over its PCIe slot during our stress test—the rest comes from its eight-pin connector.
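To illustrate what that split looks like in watts, here is a rough Python sketch. It assumes the slot current is drawn entirely from a nominal 12V rail and that the card pulls exactly its 120W rating under load. Neither figure is measured here, so treat the output as an estimate. The connector limits are the standard PCI Express allowances of 75W for the slot, 75W for a six-pin plug, and 150W for an eight-pin plug.

```python
# Standard PCI Express power allowances in watts.
SLOT_LIMIT_W = 75
SIX_PIN_LIMIT_W = 75
EIGHT_PIN_LIMIT_W = 150


def rail_split(board_power_w, slot_current_a, slot_voltage_v=12.0):
    """Split total board power into slot power and auxiliary-connector power."""
    slot_w = slot_current_a * slot_voltage_v
    aux_w = board_power_w - slot_w
    return slot_w, aux_w


# Assumption: the card draws its full 120W rating during the stress test.
slot_w, aux_w = rail_split(board_power_w=120, slot_current_a=3)

headroom_six_pin = SLOT_LIMIT_W + SIX_PIN_LIMIT_W - 120
headroom_eight_pin = SLOT_LIMIT_W + EIGHT_PIN_LIMIT_W - 120

print(f"Slot: {slot_w:.0f}W, eight-pin: {aux_w:.0f}W")
print(f"Headroom with six-pin: {headroom_six_pin}W, with eight-pin: {headroom_eight_pin}W")
```

Under those assumptions, the slot supplies about 36W and the eight-pin connector covers the remaining 84W, leaving far more margin than a six-pin design would.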

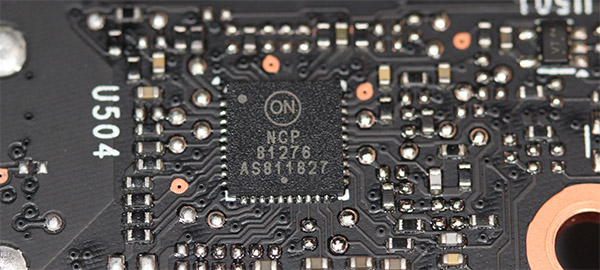

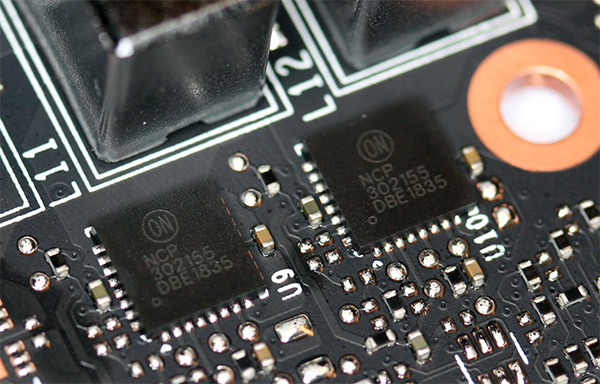

EVGA utilizes four power phases for TU116. The GPU’s phases are controlled by an older ON Semiconductor NCP81276 on the back of the PCB, which is attached to a quartet of ON Semiconductor NCP302155s.

Those four components integrate the high- and low-side MOSFETs, a driver, and the bootstrap diode. They’re the same parts used on GeForce RTX 2070 Founders Edition, capable of average currents up to 55A.

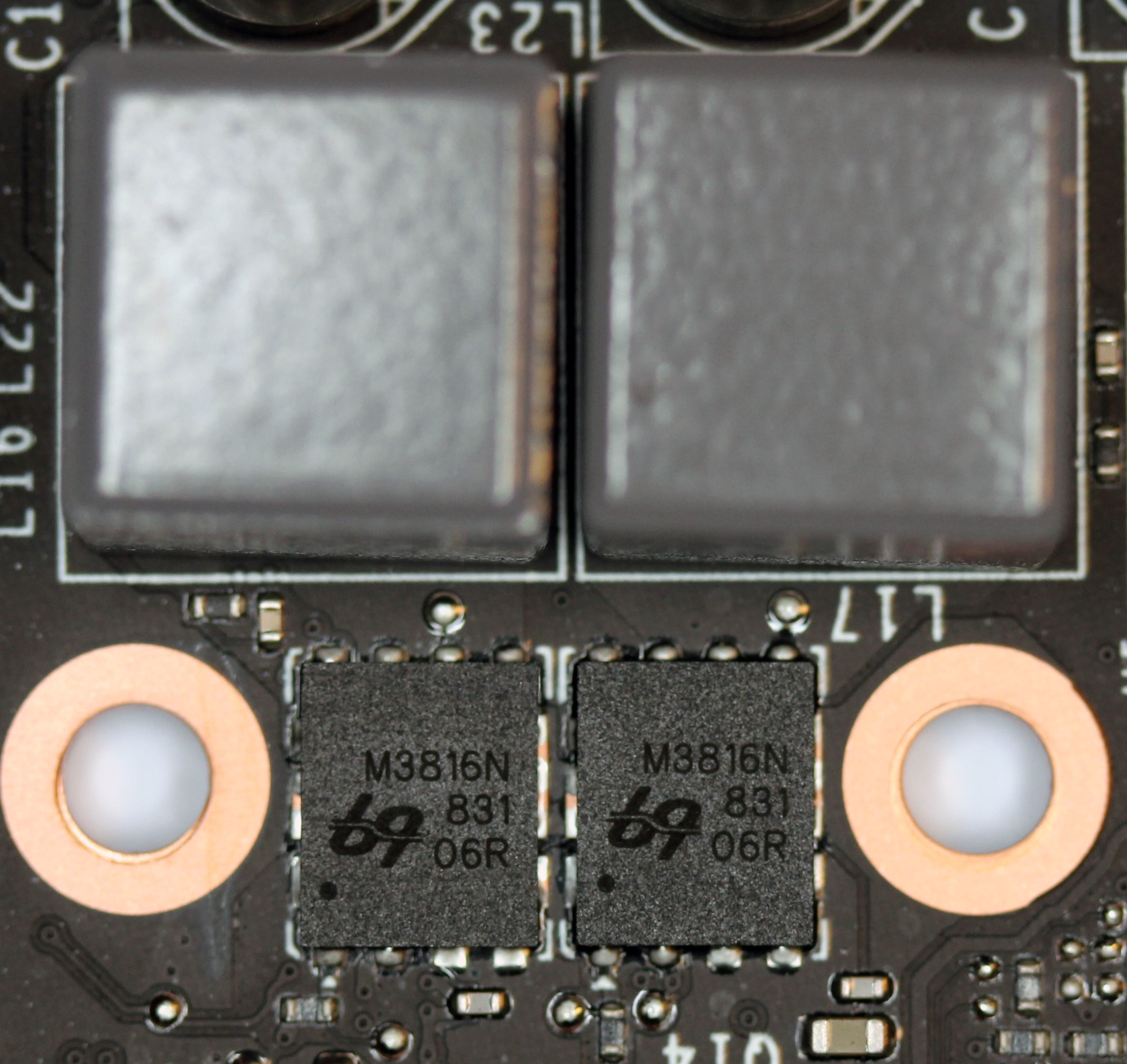

Ubiq Semiconductor’s familiar dual-phase uP1666Q controls the memory’s voltage regulation circuitry by way of two QM3816N6 dual N-channel MOSFETs.

More interesting than the GeForce GTX 1660 Ti XC Black Gaming’s fairly simple power supply, perhaps, is the fact that EVGA’s PCB has vacant pads for an additional two GPU phases. There’s also a pair of emplacements for two more GDDR6 memory modules. Nvidia did something similar with GeForce GTX 1060, leaving a couple of blank spots on its Founders Edition card that were never populated. This is a time- and cost-saving measure, which allows the company to use one PCB for multiple products.

A metal plate sits on top of the PCB, sandwiching thermal pads between the integrated driver/MOSFETs, GDDR6 memory modules, and current sense resistor. More thermal pads on top of the plate keep heat moving into the main sink assembly, which is mounted around the GPU at four points and screwed in through the PCB’s back side.

The thermal solution itself is composed of a fairly thin copper pad that makes direct contact with TU116. Three flattened pipes are soldered to the top of it, and an array of aluminum fins is, in turn, soldered to the heat pipes. A relatively thick fin stack is exaggerated by the shroud, which houses a single 85mm fan and adds even more depth. All told, EVGA’s GeForce GTX 1660 Ti XC Black Gaming eats up three expansion slots on your motherboard.

EVGA ends up trading thickness for length. The GeForce GTX 1660 Ti XC Black Gaming may be 2” deep, but it’s only about 7.5” (~190mm) long and 4 ⅜” (111mm) tall. Moreover, compared to the beefy Founders Edition cards we’ve been reviewing, a total weight of 1 lb. 7 oz. (656g) feels downright light.

Up front, the GeForce GTX 1660 Ti XC Black Gaming exposes one dual-link DVI connector, an HDMI port, and a DisplayPort interface. The USB Type-C-based VirtualLink connector seen on every other Turing-class card so far is gone, a sign that we’re getting down to a performance level not conducive to smooth VR gameplay (even on the best VR headsets). Board partners that choose to add VirtualLink to their designs are free to do so; EVGA simply didn’t implement it on this model.

How We Tested EVGA’s GeForce GTX 1660 Ti XC Black Gaming

Obviously, GeForce GTX 1660 Ti is more mainstream than the other Turing-based boards we’ve reviewed. As such, our graphics workstation, based on an MSI Z170 Gaming M7 motherboard and Intel Core i7-7700K CPU at 4.2 GHz, is apropos. The processor is complemented by G.Skill’s F4-3000C15Q-16GRR memory kit. Crucial’s MX200 SSD is here, joined by a 1.6TB Intel DC P3700 loaded down with games.

As far as competition goes, the 1660 Ti mostly goes up against GeForce GTX 1070, though we include 1070 Ti as well. Of course, comparisons to GeForce GTX 1060 are inevitable. All of those cards are included in our line-up, along with GeForce RTX 2060 and GeForce RTX 2070. On the AMD side, we’re mostly interested in Radeon RX 590, although Radeon RX Vega 64 and Radeon RX Vega 56 make for interesting additions, too.

Our benchmark selection includes Ashes of the Singularity: Escalation, Battlefield V, Destiny 2, Far Cry 5, Forza Horizon 4, Grand Theft Auto V, Metro: Last Light Redux, Shadow of the Tomb Raider, Tom Clancy’s The Division, Tom Clancy’s Ghost Recon Wildlands, The Witcher 3 and Wolfenstein II: The New Colossus.

The testing methodology we're using comes from PresentMon: Performance In DirectX, OpenGL, And Vulkan. In short, these games are evaluated using a combination of OCAT and our own in-house GUI for PresentMon, with logging via GPU-Z.
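For readers curious how per-frame logs turn into headline numbers, here is a minimal Python sketch of the kind of post-processing such a log allows. It is not our in-house tool. It assumes you already have a list of frame-to-frame intervals in milliseconds (some PresentMon versions log these in a column named `MsBetweenPresents`, which we treat as an assumption here), and it uses a simple nearest-rank 99th percentile. The sample data is invented for illustration.

```python
def summarize_frame_times(frame_times_ms):
    """Return (average FPS, 99th-percentile frame time in ms, equivalent FPS)."""
    n = len(frame_times_ms)
    if n == 0:
        raise ValueError("no frames to summarize")

    # Average FPS is total frames divided by total elapsed time.
    avg_fps = 1000.0 * n / sum(frame_times_ms)

    # Nearest-rank percentile: the smallest sample that is greater than or
    # equal to 99 percent of all samples. Integer math avoids rounding issues.
    ordered = sorted(frame_times_ms)
    rank = (99 * n + 99) // 100  # ceil(0.99 * n)
    p99_ms = ordered[rank - 1]

    return avg_fps, p99_ms, 1000.0 / p99_ms


# Hypothetical run: 95 frames at 10 ms plus five slow frames at 25 ms.
sample = [10.0] * 95 + [25.0] * 5
avg_fps, p99_ms, p99_fps = summarize_frame_times(sample)

print(f"Average: {avg_fps:.1f} FPS")
print(f"99th percentile frame time: {p99_ms:.1f} ms ({p99_fps:.1f} FPS)")
```

Averaging over total elapsed time, not over per-frame FPS values, keeps a handful of very fast frames from inflating the result, while the percentile figure exposes the slow frames that an average hides.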

We're using driver version 418.91 to test GeForce GTX 1660 Ti and build 417.54 for everything else. AMD’s cards utilize Crimson Adrenalin 2019 Edition 18.12.3.


Ketchup79

In that review they added a link to this review, which should have been the initial link/review for this post IMO:

https://www.tomshardware.com/reviews/evga-nvidia-geforce-gtx_1660_super-sc-ultra

WildCard999

NightHawkRMX said: Why the wait? This card is not new

Yea, this is odd. It should have been 1660 Ti > 1660 Super > 1650 Super over the last few months.

Edit: Here's a 1660 Ti TH review from May.

https://www.tomshardware.com/reviews/gigabyte-geforce-gtx-1660-ti-gaming-oc-6g-turing,6118.html

TJ Hooker

I also noticed that the i5 9600K review article says it was posted today, and has a new comments section.

Exploding PSU

That EVGA GPU is one of the cutest-looking GPUs I've seen in a while. I'm thinking of picking one up just from the way it looks.