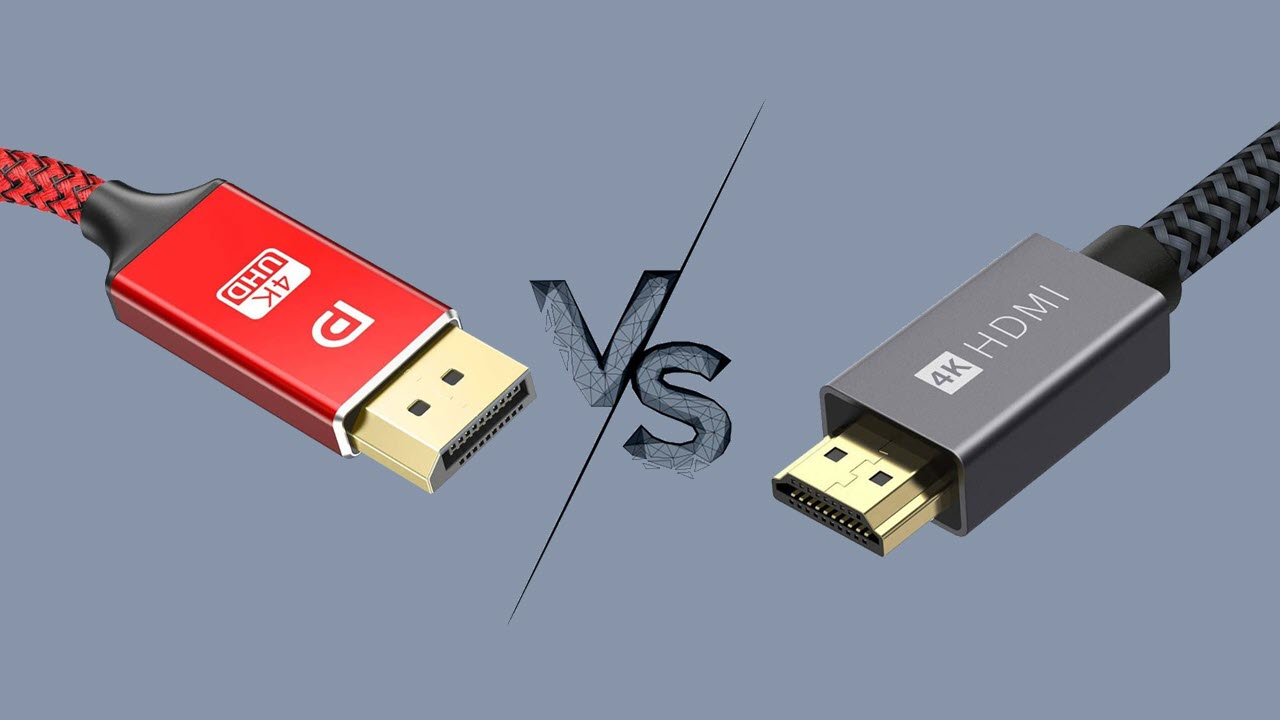

DisplayPort vs. HDMI: Which is better for gaming?

We look at bandwidth, resolution, refresh rate and more and discuss the differences between DisplayPort and HDMI connections.

The HDMI standard was designed for TVs (though it can of course be used for PCs), while DisplayPort was designed for monitors. For most mainstream PC users, even most gamers, either connection will deliver effectively the same result. But assuming your device supports a similar generation of both standards, DisplayPort can deliver more data throughput. This is important if you're running a high-end, high-refresh, high-resolution monitor for gaming, connected to a powerful graphics card capable of running games at those settings.

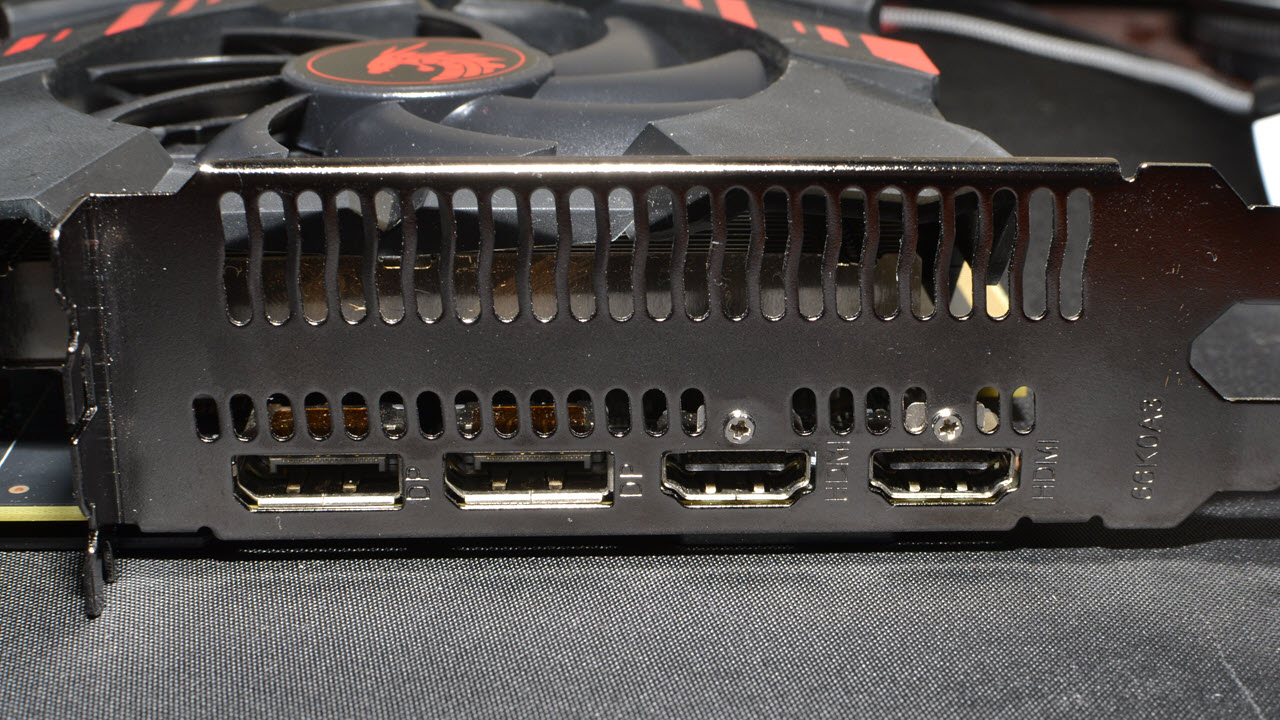

The best gaming monitors and best graphics cards are packed with features, but one aspect that often gets overlooked is the number and type of DisplayPort vs. HDMI connections. What are the differences between the two ports and is using one for connecting to your system definitively better?

You might think it's a simple matter of hooking up whatever cable comes with your monitor to your PC and calling it a day, but there are differences that can often mean a loss of refresh rate, color quality, or both if you're not careful. Here's what you need to know about DisplayPort vs. HDMI connections.

If you're looking to buy a new PC monitor or buy a new graphics card, you'll want to consider the capabilities of both sides of the connection — the video output of your graphics card and the video input on your display — before making any purchases. Our GPU benchmarks hierarchy will tell you how the various graphics cards rank in terms of performance, but it doesn't dig into the connectivity options, which is something we'll cover here.

The Major Display Connection Types

- Modern displays and graphics cards typically support only DisplayPort and HDMI outputs

- The older DVI-D connector can be found on lower-end monitors and graphics cards today, but it's rare in 2025 and not suitable for modern, high-resolution or high-refresh-rate displays

- Analog display outputs are practically obsolete in 2025 outside of retro gaming setups

- Thunderbolt ports and DisplayPort over USB Type-C connectors, also known as DisplayPort Alt Mode, are common features on laptops but rare on discrete graphics cards, so they're less important concerns for gaming

The latest display connectivity standards are DisplayPort and HDMI (High-Definition Multimedia Interface). DisplayPort first appeared in 2006, while HDMI came out in 2002. Both are digital standards, meaning all the data about the pixels on your screen is represented as 0s and 1s as it zips across your cable, and it's up to the display to convert that digital information into an image on your screen.

Earlier digital monitors used DVI (Digital Visual Interface) connectors, and going back even further, we had analog VGA (Video Graphics Array), along with component RGB, S-Video, composite video, EGA, and CGA. You don't want to use VGA or any of those other connectors in the 2020s. They're old, meaning any new GPU likely won't even support the connector, and even if it did, you'd be using an analog signal that's prone to interference. Yuck.

DVI is the bare minimum you should use today, and even that has its limitations. It shares many similarities with early HDMI, except that it lacks audio support. It works fine for gaming at 1080p, or 1440p resolution if you have a dual-link connection. Dual-link DVI-D is basically double the bandwidth of single-link DVI-D via extra pins and wires, and most modern GPUs with a DVI port support dual-link. But the truly modern graphics cards like Nvidia's Blackwell RTX 50-series and Ada Lovelace RTX 40-series, AMD's RDNA 4 RX 9000-series and RDNA 3 RX 7000-series, and Intel's Battlemage Arc B-series and Arc Alchemist A-series GPUs almost never include DVI connectors these days. Basically, DVI-D has been deprecated since the pre-COVID days, so if you have an older monitor that needs a DVI-D connection, it's time to start thinking about an upgrade.

If you're wondering about Thunderbolt 2/3/4, it routes DisplayPort over the Thunderbolt connection. Thunderbolt 2 supports DisplayPort 1.2, and Thunderbolt 3 supports DisplayPort 1.4 video. Thunderbolt 4 also uses DisplayPort 1.4, with the requirement that devices support up to two simultaneous 4K60 signals. It's also possible to route HDMI 2.0 over Thunderbolt 3 with the right hardware.

Some newer displays also offer the option to use a USB Type-C connector for video. Supported bandwidths and resolutions depend on the particular monitor, but while the connector might be easier to insert, it can also be inadvertently pulled out if you're not careful. We're not going to dig into the Type-C options here, though if they begin to catch on, we may revisit the subject. (Note: Several years later, the Type-C option still hasn't caught on except with portable displays.)

For newer displays, it's best to go with DisplayPort or HDMI. But is there a clear winner between the two? Let's dig into the details.

DisplayPort vs. HDMI: Specs and Resolutions

- Both DisplayPort and HDMI generally support high-resolution and high-refresh-rate displays in their latest versions

- If you're using a high-resolution, high-refresh-rate display with HDR or wide color support, DisplayPort is generally a safer pick due to its broader support for Display Stream Compression (DSC), a visually lossless standard that allows more data to be transferred over the same cable

- High-resolution, high-refresh-rate displays need more bandwidth, and that pressure only increases with wide color depth and high dynamic range (HDR), so you may need to upgrade your graphics card to get DisplayPort and HDMI ports that support the latest and highest-bandwidth standards

Not all DisplayPort and HDMI ports are created equal. The DisplayPort and HDMI standards are backward compatible, meaning you can plug in an HDTV from the mid-00s and it should still work with a brand new RTX 50-series or RX 9000-series graphics card. However, the connection between your display and graphics card will end up using the best option supported by both the sending and receiving ends of the connection. That could mean the best 4K gaming monitor with 240 Hz and HDR support will end up running at 4K and 24 Hz on an older graphics card!

Here's a quick overview of the major DisplayPort and HDMI revisions, their maximum signal rates and the GPU families that first added support for the standard.

| Version | Max Transmission Rate | Max Data Rate | Uncompressed Resolution/Refresh Rate Support (24 bpp) | Max DSC Resolution/Refresh Rate | GPU Introduction |
|---|---|---|---|---|---|
| DisplayPort Versions | | | | | |
| 1.0-1.1a | 10.8 Gbps | 8.64 Gbps | 1080p @ 144 Hz | N/A | AMD HD 3000 (R600) |
| | | | 4K @ 30 Hz | | Nvidia GeForce 9 (Tesla) |
| 1.2-1.2a | 21.6 Gbps | 17.28 Gbps | 1080p @ 240 Hz | N/A | AMD HD 6000 (Northern Islands) |
| | | | 4K @ 75 Hz | | Nvidia GK100 (Kepler) |
| | | | 5K @ 30 Hz | | |
| 1.3 | 32.4 Gbps | 25.92 Gbps | 1080p @ 360 Hz | N/A | AMD RX 400 (Polaris) |
| | | | 4K @ 98 Hz | | Nvidia GM100 (Maxwell 1) |
| | | | 5K @ 60 Hz | | |
| | | | 8K @ 30 Hz | | |
| 1.4-1.4a | 32.4 Gbps | 25.92 Gbps | 4K @ 98 Hz | 4K @ 240 Hz | AMD RX 400 (Polaris) |
| | | | 8K @ 30 Hz | 8K @ 60 Hz | Nvidia GM200 (Maxwell 2) |
| 2.0-2.1 | 80.0 Gbps | 77.37 Gbps | 4K @ 240 Hz | 4K @ 500+ Hz | AMD RX 7000 (54 Gbps), Intel Arc A-series (40 Gbps) |
| | | | 8K @ 85 Hz | 8K @ 240 Hz | Nvidia RTX 50 (Blackwell) |
| HDMI Versions | | | | | |
| 1.0-1.2a | 4.95 Gbps | 3.96 Gbps | 1080p @ 60 Hz | N/A | AMD HD 2000 (R600) |
| | | | | | Nvidia GeForce 9 (Tesla) |
| 1.3-1.4b | 10.2 Gbps | 8.16 Gbps | 1080p @ 144 Hz | N/A | AMD HD 5000 |
| | | | 1440p @ 75 Hz | | Nvidia GK100 (Kepler) |
| | | | 4K @ 30 Hz | | |
| | | | 4K 4:2:0 @ 60 Hz | | |
| 2.0-2.0b | 18.0 Gbps | 14.4 Gbps | 1080p @ 240 Hz | N/A | AMD RX 400 (Polaris) |
| | | | 4K @ 60 Hz | | Nvidia GM200 (Maxwell 2) |
| | | | 8K 4:2:0 @ 30 Hz | | |
| 2.1 | 48.0 Gbps | 42.6 Gbps | 4K @ 144 Hz | 4K @ 240 Hz | Nvidia RTX 30 (Ampere), AMD RX 5000 (RDNA) |
| | | | 8K @ 30 Hz | 8K @ 120 Hz | Partial 2.1 VRR on Nvidia Turing |

Note that there are two bandwidth columns: transmission rate and data rate. The DisplayPort and HDMI digital signals use bitrate encoding of some form: 8b/10b for most of the older standards, 16b/18b for HDMI 2.1, and 128b/132b for DisplayPort 2.x. With 8b/10b encoding, for example, 10 bits are actually transmitted for every 8 bits of data, with the extra bits used to help maintain signal integrity (e.g., by ensuring zero DC bias).

That means only 80% of the theoretical bandwidth is available for data use with 8b/10b. 16b/18b encoding improves that to 88.9% efficiency, while 128b/132b encoding yields 97% efficiency. There are still other considerations, like the auxiliary channel on HDMI, but that's not a major factor for PC use.
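As a rough illustration of the encoding overhead, the sketch below computes the payload efficiency of each scheme and the resulting data rate for two of the transmission rates in the table. The helper name `data_rate_gbps` is hypothetical, and the calculation models only the line-code ratio. The published data rates for HDMI 2.1 and DisplayPort 2.x come out slightly lower than this simple ratio suggests, so treat it as an approximation.

```python
from fractions import Fraction

# Line-code ratios named in the text: payload bits / transmitted bits.
ENCODINGS = {
    "8b/10b": Fraction(8, 10),
    "16b/18b": Fraction(16, 18),
    "128b/132b": Fraction(128, 132),
}

def data_rate_gbps(transmission_gbps: float, encoding: str) -> float:
    """Payload rate left after line-code overhead (hypothetical helper)."""
    return transmission_gbps * float(ENCODINGS[encoding])

for name, ratio in ENCODINGS.items():
    print(f"{name}: {float(ratio):.1%} efficient")

print(round(data_rate_gbps(32.4, "8b/10b"), 2))  # DisplayPort 1.3/1.4
print(round(data_rate_gbps(18.0, "8b/10b"), 2))  # HDMI 2.0
```

For the 8b/10b standards, the results line up with the Max Data Rate column (25.92 Gbps and 14.4 Gbps in these two cases).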

Also note the maximum supported uncompressed and compressed (DSC stands for Display Stream Compression) modes. DSC had some issues with the earliest versions, but GPUs in the post-2018 timeframe seem to work fine with the feature.

Let's Talk More About Bandwidth

To understand the above chart, we need to go deeper. What all digital connections — DisplayPort, HDMI and even DVI-D — end up coming down to is the required bandwidth. Every pixel on your display has three components: red, green, and blue (RGB) — alternatively: luma, blue chroma difference, and red chroma difference (YCbCr/YPbPr) can be used. Whatever your GPU renders internally (typically 16-bit floating point RGBA, where A is the alpha/transparency information), that data gets converted into a signal for your display.

The standard in the past has been 24-bit color, or eight bits each for the red, green and blue color components. HDR and high color depth displays have bumped that to 10-bit color, with 12-bit and 16-bit options as well, though the latter two are mostly in the professional space. Generally speaking, display signals use either 24 bits per pixel (bpp) or 30 bpp, with the best HDR monitors opting for 30 bpp. Multiply the color depth by the number of pixels and the screen refresh rate and you get the minimum required bandwidth. We say 'minimum' because there are a bunch of other factors as well.

Display timings are relatively complex calculations. The VESA governing body defines the standards, and there's even a handy spreadsheet that spits out the actual timings for a given resolution. A 1920x1080 monitor at a 60 Hz refresh rate, for example, uses 2,000 pixels per horizontal line and 1,111 lines once all the timing stuff is added. That's because display blanking intervals need to be factored in. (These blanking intervals are partly a holdover from the analog CRT screen days, but the standards still include them even with digital displays.)
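To make the timing math concrete, here is a minimal sketch that multiplies the total pixels per line, total lines, refresh rate, and bits per pixel. The function name `required_gbps` is made up for this example, and the only timing totals used are the 1080p60 figures quoted above. Other resolutions would need their own totals from the VESA spreadsheet.

```python
def required_gbps(h_total: int, v_total: int, refresh_hz: float, bits_per_pixel: int) -> float:
    """Bandwidth in Gbps for a mode, using total (active + blanking) timings."""
    pixel_clock_hz = h_total * v_total * refresh_hz
    return pixel_clock_hz * bits_per_pixel / 1e9

# 1080p60 timing totals quoted above: 2,000 pixels per line, 1,111 lines.
print(f"{required_gbps(2000, 1111, 60, 24):.2f} Gbps")  # 24 bpp (8-bit color)
print(f"{required_gbps(2000, 1111, 60, 30):.2f} Gbps")  # 30 bpp (10-bit color)

# Counting only the 1920 x 1080 active pixels understates the requirement.
print(f"{required_gbps(1920, 1080, 60, 24):.2f} Gbps")
```

With blanking included, the 24 bpp case works out to about 3.20 Gbps, versus roughly 2.99 Gbps if only the visible pixels are counted.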

Using the VESA spreadsheet and running the calculations gives the following bandwidth requirements. Look at the following table and compare it with the first table; if the required data bandwidth is less than the max data rate that a standard supports, then the resolution can be used.

| Resolution | Color Depth | Refresh Rate (Hz) | Required Data Bandwidth |
|---|---|---|---|
| 1920 x 1080 | 8-bit | 60 | 3.20 Gbps |
| 1920 x 1080 | 10-bit | 60 | 4.00 Gbps |
| 1920 x 1080 | 8-bit | 144 | 8.00 Gbps |
| 1920 x 1080 | 10-bit | 144 | 10.00 Gbps |
| 2560 x 1440 | 8-bit | 60 | 5.63 Gbps |
| 2560 x 1440 | 10-bit | 60 | 7.04 Gbps |
| 2560 x 1440 | 8-bit | 144 | 14.08 Gbps |
| 2560 x 1440 | 10-bit | 144 | 17.60 Gbps |
| 3840 x 2160 | 8-bit | 60 | 12.54 Gbps |
| 3840 x 2160 | 10-bit | 60 | 15.68 Gbps |
| 3840 x 2160 | 8-bit | 144 | 31.35 Gbps |
| 3840 x 2160 | 10-bit | 144 | 39.19 Gbps |
| 3840 x 2160 | 8-bit | 240 | 56.45 Gbps (~19 DSC) |
| 3840 x 2160 | 10-bit | 240 | 70.56 Gbps (~24 DSC) |
| 7680 x 4320 | 8-bit | 60 | 49.99 Gbps (~17 DSC) |
| 7680 x 4320 | 10-bit | 60 | 62.49 Gbps (~21 DSC) |
| 7680 x 4320 | 8-bit | 120 | 103.62 Gbps (~35 DSC) |
| 7680 x 4320 | 10-bit | 120 | 129.53 Gbps (~43 DSC) |
| 7680 x 4320 | 8-bit | 240 | 223.48 Gbps (~75 DSC) |
| 7680 x 4320 | 10-bit | 240 | 279.35 Gbps (~93 DSC) |

The above figures are for uncompressed signals, however, and DisplayPort 1.4 added the option of Display Stream Compression 1.2a (DSC), which is also part of HDMI 2.1. In short, DSC helps overcome bandwidth limitations, which are becoming increasingly problematic as resolutions and refresh rates increase. For example, basic 24 bpp at 8K and 60 Hz needs 49.65 Gbps of data bandwidth, or 62.06 Gbps for 30 bpp HDR color. The former could be supported by DisplayPort 2.1 UHBR13.5, while the latter would require UHBR20 (which few monitors currently support, and which is only available on RTX 50-series GPUs and one port on AMD's professional W7900 GPU).

DSC can provide up to a 3:1 compression ratio by converting to YCgCo and using delta PCM encoding. It provides a "visually lossless" (and sometimes even truly lossless, depending on what you're viewing) result. Using DSC, 8K 120 Hz HDR is suddenly viable, with a bandwidth requirement of 'only' 42.58 Gbps. DisplayPort 1.4 can also run 4K at 240 Hz using DSC.
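A quick sketch of what a 3:1 ratio means in practice: it divides the uncompressed 4K 240 Hz requirements from the bandwidth table by three and compares the result with DisplayPort 1.4's data rate. The helper `dsc_gbps` is hypothetical, and it assumes the full 3:1 ratio is always achieved, which real links may not manage.

```python
DSC_MAX_RATIO = 3.0  # "up to 3:1" per the text; an optimistic assumption

def dsc_gbps(uncompressed_gbps: float, ratio: float = DSC_MAX_RATIO) -> float:
    """Approximate bandwidth after DSC at a given compression ratio."""
    return uncompressed_gbps / ratio

DP14_DATA_RATE = 25.92  # Gbps, from the standards table

# Uncompressed 4K 240 Hz requirements from the bandwidth table.
for label, need in [("4K 240 Hz, 8-bit", 56.45), ("4K 240 Hz, 10-bit", 70.56)]:
    compressed = dsc_gbps(need)
    fits = compressed <= DP14_DATA_RATE
    print(f"{label}: {need} -> {compressed:.2f} Gbps with DSC, fits DP 1.4: {fits}")
```

Both cases land under 25.92 Gbps, which is consistent with the claim that DisplayPort 1.4 can drive 4K at 240 Hz once DSC is enabled.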

There's a catch with DSC, however: Support can be a bit hit and miss, particularly on older GPUs. We've tested a bunch of graphics cards using a Samsung Odyssey Neo G8 32, which supports up to 4K at 240 Hz over DisplayPort 1.4 or HDMI 2.1. On DisplayPort connections, most of the latest GPUs are fine, but cards from 2016 and earlier may not even allow the use of 240 Hz. We've also seen video signal corruption on occasion, where dropping to 120 Hz (still with DSC) often fixes the problem. In short, cable quality and the DSC hardware implementation still factor into the equation.

Both HDMI and DisplayPort can also carry audio data, which requires bandwidth as well, though it's a minuscule amount compared to the video data. DisplayPort and HDMI currently use a maximum of 36.86 Mbps for audio, or 0.037 Gbps if we keep things in the same units as video. Earlier versions of each standard can use even less data for audio. One important note is that HDMI supports audio pass through, while DisplayPort does not. If you're planning to hook up your GPU to an amplifier, HDMI provides a better solution.

That's a lengthy introduction to a complex subject, but if you've ever wondered why the simple math (resolution * refresh rate * color depth) doesn't match published specs, it's because of all the timing standards, encoding, audio, and more. Bandwidth isn't the only factor, but in general, the standard with a higher maximum bandwidth is 'better.'
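The comparison described in this section, required bandwidth versus the maximum data rate of the link, can be sketched in a few lines. The dictionary below copies data rates from the standards table. The function names are hypothetical, and the model assumes both ends use the same connector family and that the link simply runs at the lower of the two data rates, ignoring DSC, cable quality, and audio.

```python
# Max data rates (Gbps) taken from the standards table earlier in the article.
MAX_DATA_RATE = {
    "DP 1.2": 17.28,
    "DP 1.4": 25.92,
    "DP 2.1 UHBR20": 77.37,
    "HDMI 2.0": 14.4,
    "HDMI 2.1": 42.6,
}

def link_rate(gpu_port: str, display_port: str) -> float:
    """The link uses the best rate both ends support, i.e. the lower of the two."""
    return min(MAX_DATA_RATE[gpu_port], MAX_DATA_RATE[display_port])

def mode_fits(required_gbps: float, gpu_port: str, display_port: str) -> bool:
    """True if an uncompressed mode fits within the negotiated link rate."""
    return required_gbps <= link_rate(gpu_port, display_port)

# Uncompressed requirements from the bandwidth table.
print(mode_fits(14.08, "DP 1.2", "DP 1.4"))      # 1440p 144 Hz 8-bit
print(mode_fits(31.35, "DP 1.4", "DP 1.4"))      # 4K 144 Hz 8-bit
print(mode_fits(31.35, "HDMI 2.1", "HDMI 2.1"))  # 4K 144 Hz 8-bit
print(mode_fits(31.35, "HDMI 2.0", "HDMI 2.1"))  # older GPU, newer display
```

The last case shows why the older end of the connection matters: an HDMI 2.1 display behind an HDMI 2.0 output is limited to the 14.4 Gbps link.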

DisplayPort: The PC Choice

Currently DisplayPort 2.1 is the most capable version of the DisplayPort standard. The DisplayPort 2.0 spec came out in June 2019, and Intel's Arc Alchemist GPUs along with AMD's RDNA 3 GPUs supported the standard. It was later revised to DisplayPort 2.1, but all DP2.0 capable hardware should still be compatible. Nvidia finally added DisplayPort 2.1b with its Blackwell RTX 50-series GPUs.

So, there are now cards with DisplayPort 2.1 support, but they're still of different levels. Intel's Arc GPUs support UHBR10 (Ultra-High Bitrate 10 Gbps per lane), for a 40 Gbps maximum connection speed (not including 128b/132b encoding). AMD opted for the faster UHBR13.5 (54 Gbps total), but neither company supports the potential 20 Gbps per lane variant, except for AMD allowing UHBR20 on a single port of its professional Radeon Pro W7900 graphics card. Nvidia went with full UHBR20 (80 Gbps) support on all outputs for its 50-series and future solutions. But perhaps the bigger issue now isn't GPU support.

There still aren't many displays that support DisplayPort 2.1. They're starting to appear, but it's the old chicken-and-egg scenario. GPUs with DisplayPort 2.1 support have now been around for three years, but monitors that can use DP2.1 have been lagging behind. That's probably because DisplayPort 1.4 remains sufficient for up to 4K 240 Hz and 8K 60 Hz with DSC, and HDMI 2.1 support is there for people who need up to 48 Gbps.

There are DP2.1 monitors now, supposedly with UHBR20 support. Curiously, we've seen 4K 240 Hz OLED monitors that will still enable DSC if you want to use the full capabilities of the display. That should be possible without DSC, but at least one monitor we've tested (MSI MPG272UX OLED) drops the maximum 4K refresh rate to 120 Hz if we disable DSC in the monitor OSD (on-screen display).

One advantage of DisplayPort is that variable refresh rates (VRR) have been part of the standard since DisplayPort 1.2a. We also like the robust DisplayPort connector (but not mini-DisplayPort), which has hooks that latch into place to keep cables secure. It's a small thing, but we've definitely pulled loose more than a few HDMI cables by accident. DisplayPort can also connect multiple screens to a single port via Multi-Stream Transport (MST), and the DisplayPort signal can be piped over a USB Type-C connector that also supports MST.

One area where there has been some confusion is in regards to licensing and royalties. DisplayPort was supposed to be a less expensive standard, but both HDMI and DisplayPort have various associated brands, trademarks, and patents that have to be licensed. With technologies like HDCP (High-bandwidth Digital Content Protection), DSC, and more, companies have to pay a royalty for DP just like HDMI. The current rate appears to be $0.20 per product with a DisplayPort interface, with a cap of $7 million per year. HDMI charges $0.15 per product, or $0.05 if the HDMI logo is used in promotional materials.

Because the standard has evolved over the years, not all DisplayPort cables will work properly at the latest speeds. The original DisplayPort 1.0-1.1a spec allowed for RBR (reduced bit rate) and HBR (high bit rate) cables, capable of 5.18 Gbps and 8.64 Gbps of data bandwidth, respectively. DisplayPort 1.2 introduced HBR2, which doubled the maximum data bit rate to 17.28 Gbps and is compatible with standard HBR DisplayPort cables. HBR3 with DisplayPort 1.3-1.4a increased things again to 25.92 Gbps, and added the requirement of DP8K DisplayPort certified cables.

Finally, with DisplayPort 2.1 there are three new transmission modes: UHBR10 (ultra high bit rate), UHBR13.5 and UHBR20. The number refers to the bandwidth of each lane, and DisplayPort uses four lanes, so UHBR10 offers up to 40 Gbps of transmission rate, UHBR13.5 can do 54 Gbps and UHBR20 peaks at 80 Gbps. DP2.1 uses 128b/132b encoding, meaning data bit rates of 38.69 Gbps, 52.22 Gbps, and 77.37 Gbps. And now there are new cables to meet those standards.
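The lane arithmetic is simple enough to check in code. This sketch multiplies each UHBR per-lane rate by four lanes, then compares the pure 128b/132b result with the data rates quoted above. The function name is hypothetical. The quoted figures are a little lower than the encoding ratio alone would give, and this sketch does not try to model where the remaining overhead comes from.

```python
LANES = 4  # DisplayPort uses four lanes, per the text

# Per-lane rate (from the mode name) and the data rate quoted above, in Gbps.
UHBR_MODES = {
    "UHBR10": (10.0, 38.69),
    "UHBR13.5": (13.5, 52.22),
    "UHBR20": (20.0, 77.37),
}

def transmission_gbps(per_lane_gbps: float) -> float:
    """Total transmission rate across all lanes."""
    return per_lane_gbps * LANES

for mode, (per_lane, quoted_data_rate) in UHBR_MODES.items():
    raw = transmission_gbps(per_lane)
    encoding_only = raw * 128 / 132  # 128b/132b line code alone
    print(f"{mode}: {raw:.0f} Gbps raw, "
          f"{encoding_only:.2f} Gbps after encoding alone, "
          f"{quoted_data_rate} Gbps quoted "
          f"({quoted_data_rate / raw:.1%} of raw)")
```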

Officially, the maximum length of a DisplayPort cable is up to 3m (9.8 feet), which is one of the potential drawbacks, particularly for consumer electronics use. Newer versions with higher bandwidths can cut that length even more. As an example, checking the official DisplayPort certification list, the longest DP80 certified cable right now is only 1.2m (3.94 ft) long, and many are only 0.8 or 1.0 meters. Getting a cable that's only 3 feet or less in length generally means you have to have your PC on top of your desk.

With a maximum data rate of 25.92 Gbps, DisplayPort 1.4 can handle 4K resolution 24-bit color at 98 Hz, and dropping to 4:2:2 YCbCr gets it to 144 Hz with HDR. Alternatively, DSC allows up to 4K and 240 Hz, even with HDR. Keep in mind that 4K HDR monitors running at 144 Hz or more carry premium pricing, so gamers will more likely be looking at something like a 144Hz display at 1440p. That only requires 14.08 Gbps for 24-bit color or 17.60 Gbps for 30-bit HDR, which DP 1.4 can easily handle.

If you're wondering about 8K content in the future, the reality is that even though it's doable right now via DSC and DisplayPort 1.4a or HDMI 2.1b, the displays and PC hardware needed to drive such displays aren't generally within reach of consumer budgets. Top-tier GPUs like the GeForce RTX 4090 and GeForce RTX 5090 sort of overcome that limitation, but 8K pixel densities often outstrip modest human eyesight. By the time 8K becomes a viable resolution, both in price and in the GPU performance required to run it adequately, we'll likely have gone through another generation or three of GPU hardware.


HDMI: Ubiquitous Consumer Electronics

Updates to HDMI have kept the standard relevant for over 20 years. The earliest versions of HDMI have become outdated, but later versions have increased bandwidth and features.

HDMI 2.0b and earlier are 'worse' in some ways compared to DisplayPort 1.4, but if you're not trying to run at extremely high resolutions or refresh rates, you probably won't notice the difference. Full 24-bit RGB color at 4K 60 Hz has been available since HDMI 2.0 released in 2013, and higher resolutions and/or refresh rates are possible with 4:2:0 YCbCr output — though you generally don't want to use that with PC text, as it can make the edges look fuzzy.
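To see why chroma subsampling frees up bandwidth, the sketch below counts the average number of samples per pixel in each format and scales a full 4:4:4 figure accordingly. The helper names are invented for this example, and the 12.54 Gbps input is the 4K 60 Hz 8-bit entry from the bandwidth table.

```python
# Average samples per pixel: one luma sample for every pixel, with the two
# chroma samples shared across neighboring pixels in the subsampled formats.
SAMPLES_PER_PIXEL = {"4:4:4": 3.0, "4:2:2": 2.0, "4:2:0": 1.5}

def bits_per_pixel(bit_depth: int, chroma: str) -> float:
    """Average bits per pixel for a given per-sample depth and chroma format."""
    return bit_depth * SAMPLES_PER_PIXEL[chroma]

def subsampled_gbps(full_gbps: float, chroma: str) -> float:
    """Scale a 4:4:4 bandwidth figure to a subsampled format."""
    return full_gbps * SAMPLES_PER_PIXEL[chroma] / SAMPLES_PER_PIXEL["4:4:4"]

print(bits_per_pixel(8, "4:4:4"), bits_per_pixel(8, "4:2:0"))

# 4K 60 Hz at 8-bit needs 12.54 Gbps uncompressed (bandwidth table).
need_420 = subsampled_gbps(12.54, "4:2:0")
print(f"{need_420:.2f} Gbps at 4:2:0, fits HDMI 1.4 (8.16 Gbps): {need_420 <= 8.16}")
```

Halving the data this way is how 4K at 60 Hz squeezes into the older HDMI 1.4 data rate, at the cost of the fuzzy text edges mentioned above.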

For AMD FreeSync users, HDMI has also supported VRR via an AMD extension since 2.0b, but HDMI 2.1 is where VRR became part of the official standard. Nvidia and AMD both support HDMI 2.1 with VRR, starting with Turing and RDNA 2, respectively. (Intel has also supported the standard since its first Alchemist GPUs.) Nvidia opted to call its HDMI 2.1 VRR solution "G-Sync Compatible," and you can find a list of all the officially tested and supported displays on Nvidia's site.

One major advantage of HDMI is that it's ubiquitous. Millions of devices with HDMI shipped in 2004 when the standard was young, and it's now found everywhere. These days, consumer electronics devices like TVs often include support for three or more HDMI ports. TVs and consumer electronics hardware have been shipping HDMI 2.1 devices for a while, before PCs even had support. Finding a TV with a DisplayPort input, by contrast, remains very uncommon.

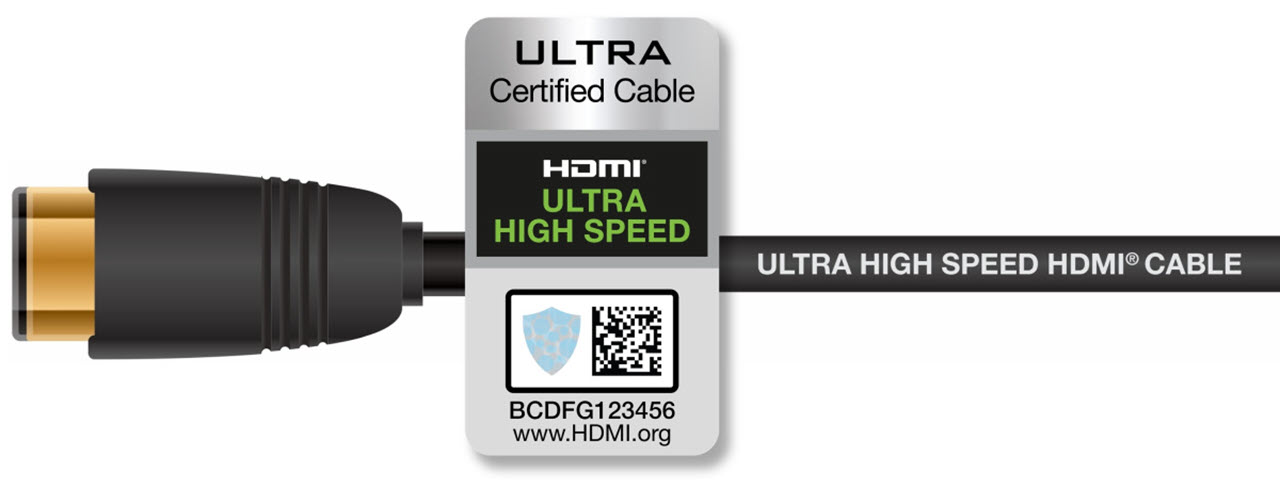

HDMI cable requirements have changed over time, just like DisplayPort. One of the big advantages is that high quality HDMI cables can be up to 15m (49.2 feet) in length — five times longer than DisplayPort. That may not be important for a display sitting on your desk, but it can definitely matter for home theater use. Originally, HDMI had two categories of cables: category 1 or standard HDMI cables are intended for lower resolutions and/or shorter runs, and category 2 or “High Speed” HDMI cables are capable of 1080p at 60 Hz and 4K at 30 Hz with lengths of up to 15m.

More recently, HDMI 2.0 introduced “Premium High Speed” cables certified to meet the 18 Gbps bit rate, and HDMI 2.1 has created a fourth class of cable, “Ultra High Speed” HDMI that can handle up to 48 Gbps. HDMI also provides for routing Ethernet signals over the HDMI cable, though this is rarely used in the PC space.

We mentioned licensing fees earlier, and while HDMI Technology doesn't explicitly state the cost, this website details the various HDMI licensing fees as of 2014. The short summary: for a high volume business making a lot of cables or devices, it's $10,000 annually, and $0.05 per HDMI port provided HDCP (High-bandwidth Digital Content Protection) is used and the HDMI logo is displayed in marketing material. In other words, the cost to end users is easily absorbed in most cases, unless some bean counter comes down with a case of extreme penny pinching.

Like DisplayPort, HDMI also supports HDCP to protect the content from being copied. That's a separate licensing fee, naturally (though it reduces the HDMI fee). HDMI has supported HDCP since the beginning, starting at HDCP 1.1 and reaching HDCP 2.2 with HDMI 2.0. HDCP can cause issues with longer cables, and ultimately it appears to annoy consumers more than the pirates. At present, known hacks / workarounds to strip HDCP 2.2 from video signals can be found.

HDMI 2.1 allows for up to 48 Gbps signaling rates, and it also supports DSC. Theoretically, that means resolutions and refresh rates of up to 4K at 480 Hz or 8K at 120 Hz are supported over a single connection and cable. We're not aware of any 4K 480 Hz displays yet, though there are prototype 8K 120 Hz TVs that have been shown at CES.

DisplayPort vs. HDMI: The Bottom Line for Gamers

We've covered the technical details of DisplayPort and HDMI, but which one is actually better for gaming? Some of that will depend on the hardware you already own or intend to purchase. Both standards are capable of delivering a good gaming experience, but if you want a great gaming experience, right now DisplayPort 1.4 is generally better than HDMI 2.0, HDMI 2.1 technically beats DP 1.4, and DisplayPort 2.1 trumps HDMI 2.1 (provided you have UHBR13.5 or higher support). The problem is that you'll need support for the desired standard from both your graphics card and your display for things to work right.

For most Nvidia gamers, your best option right now is a DisplayPort 1.4 connection to a G-Sync certified (compatible or official) display. Alternatively, HDMI 2.1 with a newer display works as well. Both the RTX 30-series and 40-series cards support the same connection standards, for better or worse. Most graphics cards will come with three DisplayPort connections and a single HDMI output, though you can find models with two HDMI and two (or three) DisplayPort connections as well — only four active outputs at a time are supported. RTX 50-series GPUs meanwhile can benefit from DisplayPort 2.1 monitors, so if you're planning on picking up a 4K 240 Hz display with an RTX 5080 or 5090, that's a potent combination.

AMD gamers have a few more options. You can find DisplayPort 2.1 monitors and TVs, if you look hard enough. The Asus ROG Swift PG32UXQR for example supports DisplayPort 2.1. HDMI 2.1 connectivity is also sufficient, and there are more displays available. Keep in mind that maximum bandwidth of both the RDNA 3 and RDNA 4 GPUs is 54 Gbps over DisplayPort 2.1, or 48 Gbps over HDMI 2.1, so it's not a huge difference. Most AMD RX 7900-series cards that we've seen include two DisplayPort 2.1 ports, and either two HDMI 2.1 or a single HDMI 2.1 alongside a USB Type-C connection. The newer RX 9070-series GPUs typically have triple DP2.1 and a single HDMI port.

Intel's GPUs support DP2.1 UHBR10, with the Battlemage B-series parts adding a single UHBR13.5 port. VRR is also supported, if you have an appropriate Adaptive Sync monitor. Basically, you don't want to try using a G-Sync display that isn't Adaptive Sync compatible with either AMD or Intel GPUs.

What if you already have a monitor that isn't running at higher refresh rates or doesn't have G-Sync or FreeSync capability, and it has both HDMI and DisplayPort inputs? Assuming your graphics card also supports both connections (and it probably does if it's a card made in the past eight years), in many instances the choice of connection won't really matter.

2560x1440 at a fixed 144 Hz refresh rate and 24-bit color works just fine on DisplayPort 1.2 or higher, as well as HDMI 2.0 or higher. Anything lower than that will also work without trouble on either connection type. About the only caveat is that sometimes HDMI connections on a monitor will default to a limited RGB range, but you can correct that in the AMD, Intel, or Nvidia display options. (This is because old TV standards used a limited color range, and some modern displays still think that's a good idea. News flash: it's not.)
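For a sense of what a limited RGB range does to the signal, here is a small sketch. It assumes the conventional 8-bit video levels of 16 to 235, which the text above does not spell out, and the function names are hypothetical.

```python
# Assumed 8-bit limited ("video") range: black at 16, white at 235.
LIMITED_MIN, LIMITED_MAX = 16, 235

def full_to_limited(value: int) -> int:
    """Map an 8-bit full-range level (0-255) to limited range (16-235)."""
    return round(LIMITED_MIN + value * (LIMITED_MAX - LIMITED_MIN) / 255)

def limited_to_full(value: int) -> int:
    """Expand a limited-range level back to 0-255, clamping out-of-range input."""
    scaled = round((value - LIMITED_MIN) * 255 / (LIMITED_MAX - LIMITED_MIN))
    return max(0, min(255, scaled))

print(full_to_limited(0), full_to_limited(255))
print(limited_to_full(16), limited_to_full(235))
```

If the GPU sends limited-range levels but the display treats them as full range, black shows up as dark gray and white as light gray, which is the washed-out look that the driver setting corrects.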

Other use cases might push you toward DisplayPort as well, like if you want to use MST to have multiple displays daisy chained from a single port. That's not a very common scenario, but DisplayPort does make it possible. Home theater use on the other hand continues to prefer HDMI, and the auxiliary channel can improve universal remote compatibility. If you're hooking up your PC to a TV, HDMI is usually required, as there aren't many TVs that have a DisplayPort input.

You can do 4K at 60 Hz on both standards without DSC, so it's only 8K or 4K at refresh rates above 60 Hz where you actually run into limitations on recent GPUs. We've used AMD, Intel, and Nvidia GPUs at 4K and 98 Hz (8-bit RGB) with most models going back to the 2016 era, and 4:2:2 chroma can push even higher refresh rates if needed. Modern gaming monitors like the Samsung Odyssey Neo G8 32 with 4K and up to 240 Hz are also available, with DisplayPort 1.4 and HDMI 2.1 connectivity.

Ultimately, while there are certain specs advantages to DisplayPort and some features on HDMI that can make it a better choice for consumer electronics use, the two standards end up overlapping in many areas. The VESA standards group in charge of DisplayPort has its eyes on PC adoption growth, whereas HDMI is defined by a consumer electronics consortium and thinks about TVs first. But DisplayPort and HDMI end up with similar capabilities.

Jarred Walton is a senior editor at Tom's Hardware focusing on everything GPU. He has been working as a tech journalist since 2004, writing for AnandTech, Maximum PC, and PC Gamer. From the first S3 Virge '3D decelerators' to today's GPUs, Jarred keeps up with all the latest graphics trends and is the one to ask about game performance.

JarredWaltonGPU

Toadster88 said: what about Thunderbolt 3 in comparison?

Thunderbolt just uses DisplayPort routed over the connection. Thunderbolt 2 supports DP 1.2 resolutions, and Thunderbolt 3 supports DP 1.4. I'll add a note in the article, though.

jonathan1683

I tried to setup a g-sync monitor with HDMI lol yea didn't work and took me forever to figure it out. Easy choice now.

CerianK

Another big issue for me is input switching latency when I flip between sources, which is something missing from product specifications and reviews (unless I've somehow missed seeing it).

I know that HDMI can be very slow (depending on monitor)... sometimes as much as 5 seconds to see the new source. I assumed that was content protection built into the standard and/or slow decoder ASIC.

I have not compared switch latency to Display Port, so would be curious if anyone here has impressions.

Honestly, I would probably pay quite a bit extra for a monitor and/or TV that has much faster input source switching.. I have lost patience for technology regression as I have aged... I recall using monitors and TVs that had nearly instantaneous source switching back in the analog days.

JarredWaltonGPU

CerianK said: Another big issue for me is input switching latency when I flip between sources, which is something missing from product specifications and reviews (unless I've somehow missed seeing it).

My experience is that it's more the monitor than the input. I've had monitors that take as long as 10 seconds to switch to a signal (or even turn on in the first place), and I've had others that switch in a second or less. I'm not sure if it's just poor firmware, or a cheap scaler, or something else.

I will say that I have an Acer XB280HK 4K60 G-Sync display that only has a single DisplayPort input, and it powers up or wakes from sleep almost instantly. I have an Acer G-Sync Ultimate 4K 144Hz HDR display meanwhile that takes about 7 seconds to wake from sleep. Rather annoying.

Molor#1880

"36.86 Mbps for audio, or 0.37 Gbps" Actually it would be 0.037 Gbps, which takes it from relatively small to a near rounding error for several of those tables.

bit_user

JarredWaltonGPU said: My experience is that it's more the monitor than the input. I've had monitors that take as long as 10 seconds to switch to a signal (or even turn on in the first place), and I've had others that switch in a second or less. I'm not sure if it's just poor firmware, or a cheap scaler, or something else.

I always figured it's to do with HDMI's handshaking and auto-negotiation.

HDMI was designed for home theater, where you could have the signal pass through a receiver or splitter. Not only do you need to negotiate resolution, refresh rate, colorspace, bit-depth, link speed, ancillary & back-channel data, but also higher-level parameters like audio delay. So, probably just a poor implementation of that process, running on some dog-slow embedded processor.

As such, you might find that locking down the configuration range of the source can speed things up, a bit.

JarredWaltonGPU

Molor#1880 said: "36.86 Mbps for audio, or 0.37 Gbps" Actually it would be 0.037 Gbps, which takes it from relatively small to a near rounding error for several of those tables.

Oops, you're correct. Speaking of rounding errors, I seemed to have misplaced my decimal point. ;-)

waltc3

I'm surprised the article didn't mention TVs, as currently that's the main reason people go HDMI instead of DP, imo. I appreciated the fact that the article mentioned the loss of color fidelity the 144Hz compromise forces, although most people seem to ignore that difference. I include a link below that is informative on that topic--it's not mine but I saved the link to remind me...;) I haven't looked in a while, but last time I checked few if any TVs feature Display Ports--my TV at home is strictly HDMI. Personally, I use an AMD 50th Ann Ed 5700XT with a 1.4 DP cable plugged into my DP 1.4 monitor, the Ben Q 3270U.

https://www.reddit.com/r/hardware/comments/8rlf2z/psa_4k_144_hz_monitors_use_chroma_subsampling_for/