Intel Core i7 (Nehalem): Architecture By AMD?

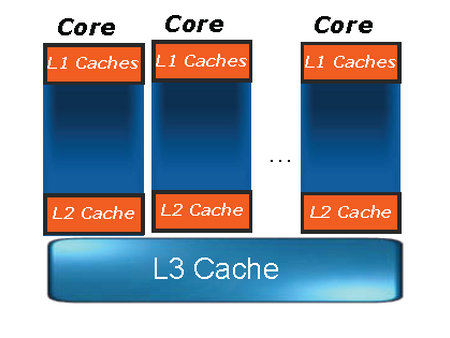

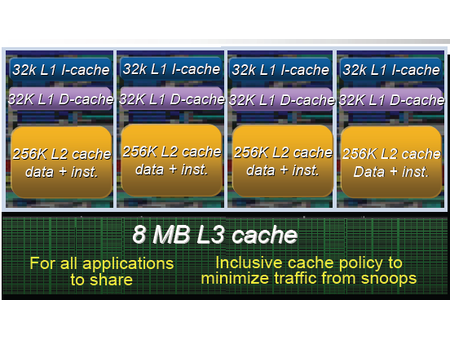

A Three-Level Cache Hierarchy

The memory hierarchy of Conroe was extremely simple, and Intel was able to concentrate on the performance of the shared L2 cache, which was the best solution for an architecture aimed mostly at dual-core implementations. With Nehalem, however, the engineers started from scratch and came to the same conclusion as their competitors: a shared L2 cache was not suited to a native quad-core architecture. The cores would too frequently evict data needed by another core, and giving all four cores sufficient bandwidth while keeping latency low would have created too many problems in terms of internal buses and arbitration. To solve the problem, the engineers gave each core a Level 2 cache of its own. Since it’s dedicated to a single core and relatively small (256 KB), they were able to make it very fast; latency, in particular, has reportedly improved significantly over Penryn, from 15 cycles to approximately 10 cycles.
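The eviction argument can be illustrated with a toy model. The minimal Python simulation below assumes a fully associative cache with least-recently-used (LRU) replacement, which is not how Conroe's or Nehalem's caches are actually organized, and uses hypothetical sizes: core A repeatedly loops over 6 lines while core B streams through never-reused data three times as often. It compares one shared cache of 16 lines against two private caches of 8 lines each. The names `LRUCache` and `core_a_hit_rate` are illustrative only.

```python
from collections import OrderedDict


class LRUCache:
    """Toy fully associative cache with least-recently-used replacement."""

    def __init__(self, capacity_lines):
        self.capacity = capacity_lines
        self.lines = OrderedDict()  # keys ordered from least to most recently used

    def access(self, address):
        """Return True on a hit; on a miss, fill the line and return False."""
        if address in self.lines:
            self.lines.move_to_end(address)  # now the most recently used line
            return True
        if len(self.lines) >= self.capacity:
            self.lines.popitem(last=False)  # evict the least recently used line
        self.lines[address] = None
        return False


def core_a_hit_rate(shared, lines_per_core=8, working_set=6,
                    stream_ratio=3, rounds=100):
    """Hit rate of core A, which loops over `working_set` lines while core B
    streams `stream_ratio` never-reused lines per access made by core A.

    shared=True: both cores use one cache of 2 * lines_per_core lines.
    shared=False: each core has a private cache of lines_per_core lines.
    """
    if shared:
        cache_a = cache_b = LRUCache(2 * lines_per_core)
    else:
        cache_a = LRUCache(lines_per_core)
        cache_b = LRUCache(lines_per_core)

    hits = 0
    accesses = rounds * working_set
    next_stream_line = 0
    for i in range(accesses):
        hits += cache_a.access(("A", i % working_set))
        for _ in range(stream_ratio):
            cache_b.access(("B", next_stream_line))
            next_stream_line += 1
    return hits / accesses


print(f"private caches: {core_a_hit_rate(shared=False):.2f}")  # 0.99
print(f"shared cache:   {core_a_hit_rate(shared=True):.2f}")   # 0.00
```

In this contrived setup core A hits on 99% of its accesses with a private cache and on none of them with the shared cache, because core B's streaming pushes A's lines out before they are reused. Real workloads are far less extreme; the sketch only shows how a shared cache lets one core's traffic evict another core's working set.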

Then comes an enormous Level 3 cache (8 MB) that manages communication between the cores. While at first glance Nehalem’s cache hierarchy resembles Barcelona’s, its Level 3 cache operates very differently from AMD’s: it is inclusive of all lower levels of the cache hierarchy. That means that if a core tries to access a data item and it’s not present in the Level 3 cache, there’s no need to look in the other cores’ private caches, because the data item won’t be there either. Conversely, if the data are present, four bits associated with each cache line (one bit per core) indicate which other cores may hold a copy in their private caches. A set bit means a copy is possible, not certain.
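The filtering role of the per-core bits can be sketched as follows. This is a simplified Python model under stated assumptions: it tracks only the four core-valid bits per line (no coherency states, tags, or sets), and the class and method names are hypothetical, not Intel's terminology.

```python
from dataclasses import dataclass, field

NUM_CORES = 4


@dataclass
class L3Line:
    # Bit n set => core n MAY hold a copy in its private L1/L2. The copy may
    # since have been dropped, so a set bit means "possibly", not "certainly".
    core_valid: int = 0


@dataclass
class InclusiveL3:
    lines: dict = field(default_factory=dict)  # address -> L3Line

    def fill(self, address, core):
        """Record that `core` has brought `address` into its private caches."""
        line = self.lines.setdefault(address, L3Line())
        line.core_valid |= 1 << core

    def cores_to_snoop(self, address, requester):
        """Other cores that must be checked before `requester` uses `address`."""
        line = self.lines.get(address)
        if line is None:
            # Inclusion: absent from L3 means absent from every private cache,
            # so no other core needs to be checked.
            return []
        return [c for c in range(NUM_CORES)
                if c != requester and line.core_valid & (1 << c)]

    def evict(self, address):
        """Remove a line from L3 and return the cores whose private copies
        must be invalidated to keep L3 a superset of the lower levels."""
        line = self.lines.pop(address)
        return [c for c in range(NUM_CORES) if line.core_valid & (1 << c)]


l3 = InclusiveL3()
l3.fill(0x40, core=2)
print(l3.cores_to_snoop(0x40, requester=0))  # [2]
print(l3.cores_to_snoop(0x80, requester=0))  # [] (L3 miss: nobody to check)
print(l3.evict(0x40))                        # [2]
```

The `evict` method shows the price of inclusion: when a line leaves the L3, any private copies must be invalidated as well, otherwise the L3 would no longer be a superset of the lower levels and the "not in L3, so not anywhere" shortcut would break.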

This technique is effective for keeping the private caches coherent because it limits the need for traffic between cores. Its disadvantage is that part of the L3 is wasted on data already held in other cache levels. That’s somewhat mitigated, however, by the fact that the L1 and L2 caches are small compared to the L3: all the data in the four cores’ L1 and L2 caches takes up at most 1.25 MB of the 8 MB available. As on Barcelona, the Level 3 cache doesn’t run at the same frequency as the cores. Consequently, its access latency varies, but it should be in the neighborhood of 40 cycles.
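The 1.25 MB figure can be checked with a short calculation. It assumes Nehalem's per-core L1 is split into 32 KB for instructions and 32 KB for data, which the paragraph above does not state but which matches the published specifications; the 256 KB L2, the four cores, and the 8 MB L3 come from the text.

```python
# Sizes in KB. The 256 KB L2, four cores, and 8 MB L3 are from the text; the
# 32 KB + 32 KB split of the L1 is Nehalem's published L1 configuration.
L1_INSTRUCTION_KB = 32
L1_DATA_KB = 32
L2_KB = 256
CORES = 4
L3_KB = 8 * 1024

# Upper bound on duplicated data: every private cache full of distinct lines,
# each of which is also present in the inclusive L3.
private_total_kb = CORES * (L1_INSTRUCTION_KB + L1_DATA_KB + L2_KB)
# So at least this much of the L3 holds data not found in any L1/L2.
unique_l3_kb = L3_KB - private_total_kb

print(private_total_kb / 1024)            # 1.25 (MB)
print(f"{private_total_kb / L3_KB:.1%}")  # 15.6% of the L3
print(unique_l3_kb / 1024)                # 6.75 (MB)
```

In the worst case, then, roughly one sixth of the L3 duplicates the private caches, and at least 6.75 MB remains available for data that no core holds privately.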

The only real disappointment in Nehalem’s new cache hierarchy is its L1 cache. The bandwidth of the instruction cache hasn’t been increased: it’s still 16 bytes per cycle, compared to 32 on Barcelona. This could create a bottleneck in a server-oriented architecture, since instructions in 64-bit mode are often longer than their 32-bit counterparts (many need an extra REX prefix byte), and Nehalem has one more decoder than Barcelona, which puts that much more pressure on the cache. As for the data cache, its latency has increased to four cycles, compared to three on Conroe, a trade-off that makes higher clock frequencies easier to reach. To end on a positive note, though, Intel’s engineers have increased the number of Level 1 data cache misses the architecture can handle in parallel.
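A rough way to see why 16 bytes per cycle could become a limit is to compare fetch bandwidth with decode width. The sketch below is a deliberately simplified model that ignores everything else in the front end. The fetch widths come from the text, as does the decoder relationship (four decoders on Nehalem, one fewer on Barcelona); the average instruction lengths of 3.5 and 4.5 bytes are hypothetical values chosen only to straddle the break-even point.

```python
# Simplified front-end model: each cycle a core can fetch a fixed number of
# instruction bytes and decode at most `decoders` x86 instructions.
FRONT_ENDS = {
    # name: (fetch bytes per cycle, decoders)
    "Nehalem": (16, 4),
    "Barcelona": (32, 3),
}


def sustained_rate(fetch_bytes, decoders, avg_instruction_bytes):
    """Upper bound on instructions per cycle that reach the decoders."""
    return min(fetch_bytes / avg_instruction_bytes, decoders)


for avg_len in (3.5, 4.5):  # hypothetical average instruction lengths (bytes)
    for name, (fetch_bytes, decoders) in FRONT_ENDS.items():
        rate = sustained_rate(fetch_bytes, decoders, avg_len)
        limit = "fetch" if fetch_bytes / avg_len < decoders else "decode"
        print(f"{name}, {avg_len}-byte average: "
              f"{rate:.2f} instr/cycle ({limit}-bound)")
```

With 16 bytes per cycle and four decoders, the break-even average length is 16 / 4 = 4 bytes: once instructions average more than that, the fetch stage alone cannot keep all four of Nehalem's decoders busy (3.56 instructions per cycle at 4.5 bytes in this model), whereas Barcelona's 32-byte fetch leaves ample headroom for its three decoders.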

cl_spdhax1: Good write-up, can't wait for the new architecture. Plus, the "older" chips are going to become cheaper/more affordable. Big plus.

neiroatopelcc: No explanation as to why you can't use performance modules with higher voltage, though.

neiroatopelcc, quoting AuDioFreaK39: "TomsHardware is just now getting around to posting this? Not to mention it being almost a direct copy/paste from other articles I've seen written about Nehalem architecture."

I regard being late as a seal of quality, really. No point being first if your info is only as credible as stuff on the Inquirer. Better to be last, but be sure what you write is correct.

cangelini, quoting AuDioFreaK39: "TomsHardware is just now getting around to posting this? Not to mention it being almost a direct copy/paste from other articles I've seen written about Nehalem architecture."

Perhaps, if you count being translated from French.

randomizer: Yeah, 13 pages is quite a lot to translate. You could always use Google Translate if you want it done fast.

Duncan NZ: Speaking of French... That link on page 3 goes to a French article that I found fascinating... It would be even better if there were an English version, though, because then I could actually read it. Any chance of that?

Nice article, good depth, well written.

neiroatopelcc, quoting randomizer: "Yeah, 13 pages is quite a lot to translate. You could always use Google Translate if you want it done fast."

I don't know French, so I have no idea if it actually works there. But I've tried from English to German and Danish, and vice versa. I've also tried from Danish to German, and the result is always the same: it's incomplete, and anything even slightly technical won't be translated properly. In short, if you want it done right, do it yourself.

neiroatopelcc: I don't think cangelini meant to say that no other English articles on the subject exist.

You claimed the article on Tom's was a copy/paste of another article. He merely stated that the article here was based on a French version.

enewmen: Good article.

I actually read the whole thing.

I just don't get TLP when RAM is cheap and Nehalem/Vista can address 128 GB. Anyway, things have changed a lot since running Win NT with 16 MB of RAM and constant memory swapping.

cangelini: I can't speak for the author, but I imagine neiro's guess is fairly accurate. Written in French, translated to English, and then edited; I'm fairly confident they're different stories ;)