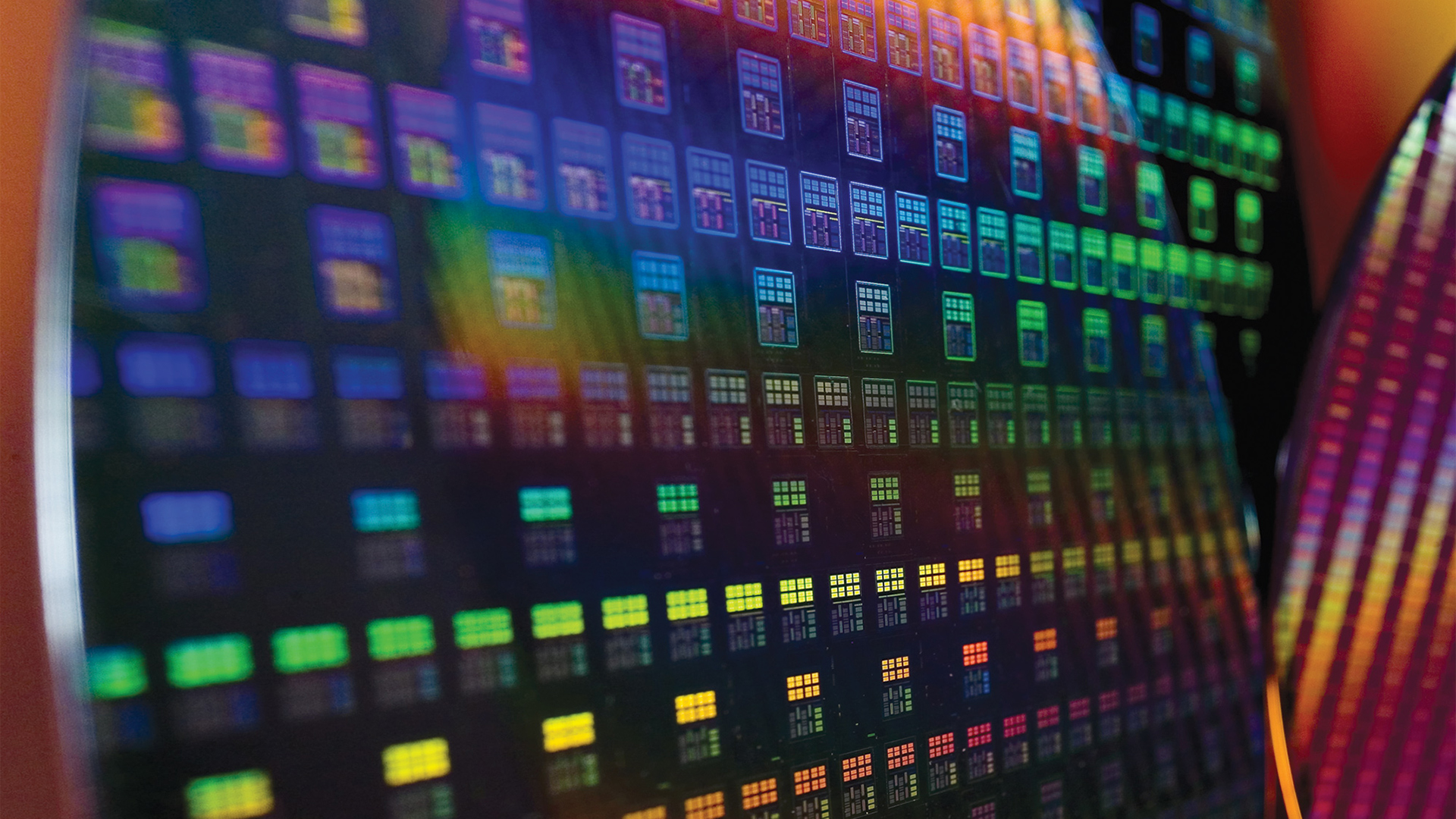

TSMC's 3nm Node: No SRAM Scaling Implies More Expensive CPUs and GPUs

Big problems from tiny memory cells.

According to a report from WikiChip, TSMC's SRAM scaling has slowed tremendously. When it comes to brand-new fabrication nodes, we expect them to increase performance, cut power consumption, and increase transistor density. But while logic circuits have been scaling well with recent process technologies, SRAM cells have been lagging behind and have apparently almost stopped scaling at TSMC's 3nm-class production nodes. This is a major problem for future CPUs, GPUs, and SoCs, which will likely get more expensive because of slow SRAM cell area scaling.

SRAM Scaling Slows

When TSMC formally introduced its N3 fabrication technologies earlier this year, it said that the new nodes would provide 1.6x and 1.7x improvements in logic density when compared to its N5 (5nm-class) process. What it did not reveal is that the SRAM cells of the new technologies barely scale compared to N5, according to WikiChip, which obtained information from a TSMC paper published at the International Electron Devices Meeting (IEDM).

TSMC's N3 features an SRAM bitcell size of 0.0199 µm², which is only ~5% smaller compared to N5's 0.021 µm² SRAM bitcell. It gets worse with the revamped N3E, as it comes with a 0.021 µm² SRAM bitcell (which roughly translates to 31.8 Mib/mm²), which means no scaling compared to N5 at all.
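To put these bitcell sizes in perspective, here is a rough sketch of the arithmetic in Python. The function names are ours and purely illustrative. Dividing 1 mm² by the bitcell area gives an upper bound of roughly 45 Mib/mm² for a 0.021 µm² cell, so the 31.8 Mib/mm² figure quoted above is about 70% of that. We assume the gap reflects array overhead (sense amplifiers, decoders, wiring); the report as summarized here does not spell this out.

```python
# Back-of-the-envelope SRAM density from bitcell area.
# Illustrative helpers only; names are hypothetical, not from the WikiChip report.

UM2_PER_MM2 = 1_000_000   # 1 mm^2 = 10^6 um^2
BITS_PER_MIB = 2 ** 20    # 1 Mib = 1,048,576 bits


def raw_density_mib_per_mm2(bitcell_um2: float) -> float:
    """Upper bound: density if the area were tiled with bitcells and nothing else."""
    return UM2_PER_MM2 / bitcell_um2 / BITS_PER_MIB


def implied_array_efficiency(bitcell_um2: float, quoted_mib_per_mm2: float) -> float:
    """Ratio of a quoted density to the bitcell-only upper bound (our inference)."""
    return quoted_mib_per_mm2 / raw_density_mib_per_mm2(bitcell_um2)


def shrink_percent(old_um2: float, new_um2: float) -> float:
    """How much smaller the new bitcell is, in percent."""
    return (1 - new_um2 / old_um2) * 100


# Figures taken from the article text.
print(f"N5 -> N3 bitcell shrink: {shrink_percent(0.021, 0.0199):.1f}%")
print(f"N3E bitcell-only bound:  {raw_density_mib_per_mm2(0.021):.1f} Mib/mm^2")
print(f"N3E implied efficiency:  {implied_array_efficiency(0.021, 31.8):.2f}")
print(f"Intel 4 implied efficiency: {implied_array_efficiency(0.024, 27.8):.2f}")
```

Both the TSMC and Intel figures land at roughly the same ratio, which suggests the quoted densities were derived with a similar overhead assumption.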

Meanwhile, Intel's Intel 4 (originally called 7nm EUV) reduces SRAM bitcell size to 0.024 µm² from 0.0312 µm² in the case of Intel 7 (formerly known as 10nm Enhanced SuperFin), but we are still talking about something like 27.8 Mib/mm², which is a bit behind TSMC's HD SRAM density.

Furthermore, WikiChip recalls an Imec presentation that showed SRAM densities of around 60 Mib/mm² on a 'beyond 2nm node' with forksheet transistors. Such process technology is years away, and between now and then chip designers will have to develop processors with the SRAM densities advertised by Intel and TSMC (though Intel 4 is unlikely to be used by anyone except Intel anyway).

Loads of SRAM in Modern Chips

Modern CPUs, GPUs, and SoCs use loads of SRAM for various caches as they process loads of data and it is extremely inefficient to fetch data from memory, especially for various artificial intelligence (AI) and machine learning (ML) workloads. But even general-purpose processors, graphics chips, and application processors for smartphones carry huge caches these days: AMD's Ryzen 9 7950X carries 81MB of cache in total, whereas Nvidia's AD102 uses at least 123MB of SRAM for various caches that Nvidia publicly disclosed.
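For a sense of how much silicon such caches could occupy, the sketch below divides the cache capacities above by the 31.8 Mib/mm² density quoted earlier. This is a hypothetical exercise, not a measurement of either chip: it assumes 1 MB means 1 MiB, applies one density to every cache level, and ignores tags, ECC, and redundancy. The function name is ours.

```python
# Rough area estimate for a given amount of SRAM at a given density.
# Hypothetical helper; real caches mix cell types and carry extra overhead.

def sram_area_mm2(capacity_mb: float, density_mib_per_mm2: float) -> float:
    """Area in mm^2, treating 1 MB as 1 MiB (8 Mib) -- an assumption."""
    capacity_mib = capacity_mb * 8
    return capacity_mib / density_mib_per_mm2


DENSITY = 31.8  # Mib/mm^2, the figure quoted for a 0.021 um^2 bitcell

for name, cache_mb in [("81MB of cache", 81), ("123MB of cache", 123)]:
    print(f"{name}: ~{sram_area_mm2(cache_mb, DENSITY):.1f} mm^2")
```

Under these assumptions the two capacities work out to roughly 20 mm² and 31 mm² respectively, and that area does not shrink if the bitcell does not.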

Going forward, the need for caches and SRAM will only increase, but with N3 (which is set to be used for a few products only) and N3E there will be no way to reduce the die area occupied by SRAM and offset the higher costs of the new node compared to N5. Essentially, it means that die sizes of high-performance processors will increase, and so will their costs. Meanwhile, just like logic cells, SRAM cells are prone to defects. To some degree, chip designers will be able to mitigate the impact of larger SRAM cells with N3's FinFlex innovations (mixing and matching different kinds of FinFETs in a block to optimize it for performance, power, or area), but at this point we can only guess what kind of fruit this will bear.
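A simple model illustrates why non-scaling SRAM hurts. The sketch below splits a die into logic and SRAM, shrinks the logic by the 1.6x density gain TSMC cites, and leaves the SRAM untouched, as with N3E. The 100 mm² die and the 30% SRAM share are made-up inputs for illustration, and the model ignores analog and I/O blocks entirely.

```python
# Toy model: how much a die shrinks when only the logic portion scales.
# All inputs below are hypothetical except the 1.6x logic density gain.

def scaled_area_mm2(area_mm2: float, sram_fraction: float,
                    logic_density_gain: float,
                    sram_density_gain: float = 1.0) -> float:
    """New die area after porting; SRAM gain of 1.0 means no SRAM scaling."""
    logic_area = area_mm2 * (1 - sram_fraction) / logic_density_gain
    sram_area = area_mm2 * sram_fraction / sram_density_gain
    return logic_area + sram_area


old_area = 100.0      # mm^2, hypothetical N5 die
sram_share = 0.30     # hypothetical fraction of the die that is SRAM

new_area = scaled_area_mm2(old_area, sram_share, logic_density_gain=1.6)
ideal_area = scaled_area_mm2(old_area, sram_share, 1.6, sram_density_gain=1.6)

print(f"Ideal shrink (everything scales 1.6x): {ideal_area:.2f} mm^2")
print(f"Shrink with no SRAM scaling:           {new_area:.2f} mm^2")
print(f"SRAM share of the new die:             {old_area * sram_share / new_area:.0%}")
```

With these inputs the die shrinks to about 74 mm² instead of 62.5 mm², and SRAM grows from 30% to roughly 41% of the area, all on a node that costs more per square millimeter.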


TSMC plans to introduce its density-optimized N3S process technology, which promises to shrink SRAM bitcell size compared to N5, but this is set to happen around 2024, and we wonder whether it will provide enough logic performance for chips designed by AMD, Apple, Nvidia, and Qualcomm.

Mitigations?

One way to mitigate the cost impact of slowing SRAM area scaling is to move to a multi-chiplet design and disaggregate larger caches into separate dies made on a cheaper node. This is something that AMD does with its 3D V-Cache, albeit for a slightly different reason (for now). Another way is to use alternative memory technologies like eDRAM or FeRAM for caches, though these have their own peculiarities.

In any case, the slowing of SRAM scaling with FinFET-based nodes at 3nm and beyond looks set to be a major challenge for chip designers in the coming years.

Anton Shilov is a contributing writer at Tom’s Hardware. Over the past couple of decades, he has covered everything from CPUs and GPUs to supercomputers and from modern process technologies and latest fab tools to high-tech industry trends.


hotaru251 and heres NVIDIA's excuse next gen.

"and thats why the 5090 costs $3000" - jensen prolly

jp7189 It seems like AMD has already implemented a good solution with the GCD/MCD arrangement on RDNA3 with the largest L3 caches made on an older, cheaper node. I expected more designs to pick that up in the future.

emike09 Hell of a lot of numbers and nonsense to simply say - we want way more of your money, the world's getting more technical based, and we want all your money. JUST LOOK AT THESE CRAZY NUMBERS! Pay me, gimme your money! Because you know you will and you don't have a choice because the world is so tech based now. Thanks Covid.

umeng2002_2 I think once the cooling issue is handled, stacking SRAM on top of smaller logic and other "3D" techniques mitigate these issues.

Remember like 10 or 15 years ago when everyone was saying we were all doomed because of "quantum tunneling?" Well, here we are about to go down below 1nm. This is why research is always ongoing.

rluker5 I could see Intel's chiplet method handling this the easiest. AMD's stacking method will always be hot because of the thermal insulation it makes. Also the cache on a Zen chiplet is of the same expensive node as the CPU logic and the stacked cache has to match perfectly so a cheaper node for the stacked cache seems out of the question. Intel is already attaching HBM2e, why not the same area in L3?

Kamen Rider Blade

Don't tempt Jensen Huang, he might be crazy enough to do it.

hotaru251 said: and heres NVIDIA's excuse next gen.

"and thats why the 5090 costs $3000" - jensen prolly

That was going to happen, regardless of COVID.

emike09 said: Hell of a lot of numbers and nonsense to simply say - we want way more of your money, the world's getting more technical based, and we want all your money. JUST LOOK AT THESE CRAZY NUMBERS! Pay me, gimme your money! Because you know you will and you don't have a choice because the world is so tech based now. Thanks Covid.

The question is "How Fast", and "How Soon" will we get there.

hannibal

umeng2002_2 said: I think once the cooling issue is handled, stacking SRAM on top of smaller logic and other "3D" techniques mitigate these issues.

Remember like 10 or 15 years ago when everyone was saying we were all doomed because of "quantum tunneling?" Well, here we are about to go down below 1nm. This is why research is always ongoing.

Mainly because we are not even near 1nm in real life!

The marketing "nm" is so far from real nm that quantum tunneling is not a problem for years!

Nikolay Mihaylov

emike09 said: Hell of a lot of numbers and nonsense to simply say - we want way more of your money, the world's getting more technical based, and we want all your money. JUST LOOK AT THESE CRAZY NUMBERS! Pay me, gimme your money! Because you know you will and you don't have a choice because the world is so tech based now. Thanks Covid.

Chill out dude! No one is forcing you to buy anything. And you are not entitled to getting anything at the price you are willing to pay. If there is a match, fine - go and buy. If there isn't - well, maybe it's just out of your price range.

For companies to provide goods and services, they have to be able to make a profit. If there isn't, they won't do it. The only things that keep them in check are actual market demand and competition. You can not decree lower prices. I grew up in a former communist country and I've seen where this ends.

The only outcome from your whining is that manufacturers are reluctant to charge the equilibrium price for their product so they don't tarnish their image. Which invites scalpers. So you are not better off for that, but the actual manufacturers are worse. I know this is not a popular opinion but, unfortunately, common sense is not very common.

Rdslw I guess miniaturization can only go so far, it's time to make another design, that either scale or go 2.5D like finfet did. I guess it's time for someone to notice you can get clusters of 4 cells with something shared, or maybe someone will find a way to overlap neighboring gates.

Whatever it will be, we need some innovation, not just gzip.

I hope smart guys can figure something out.