
We'll start with the performance that matters most: 4K at maxed-out settings. Again, if you're using a 1080p monitor, even one with an extreme refresh rate, the RTX 4090 will almost certainly be overkill. That's less true if you're playing games with lots of ray tracing effects, but we'll get to that in a moment.

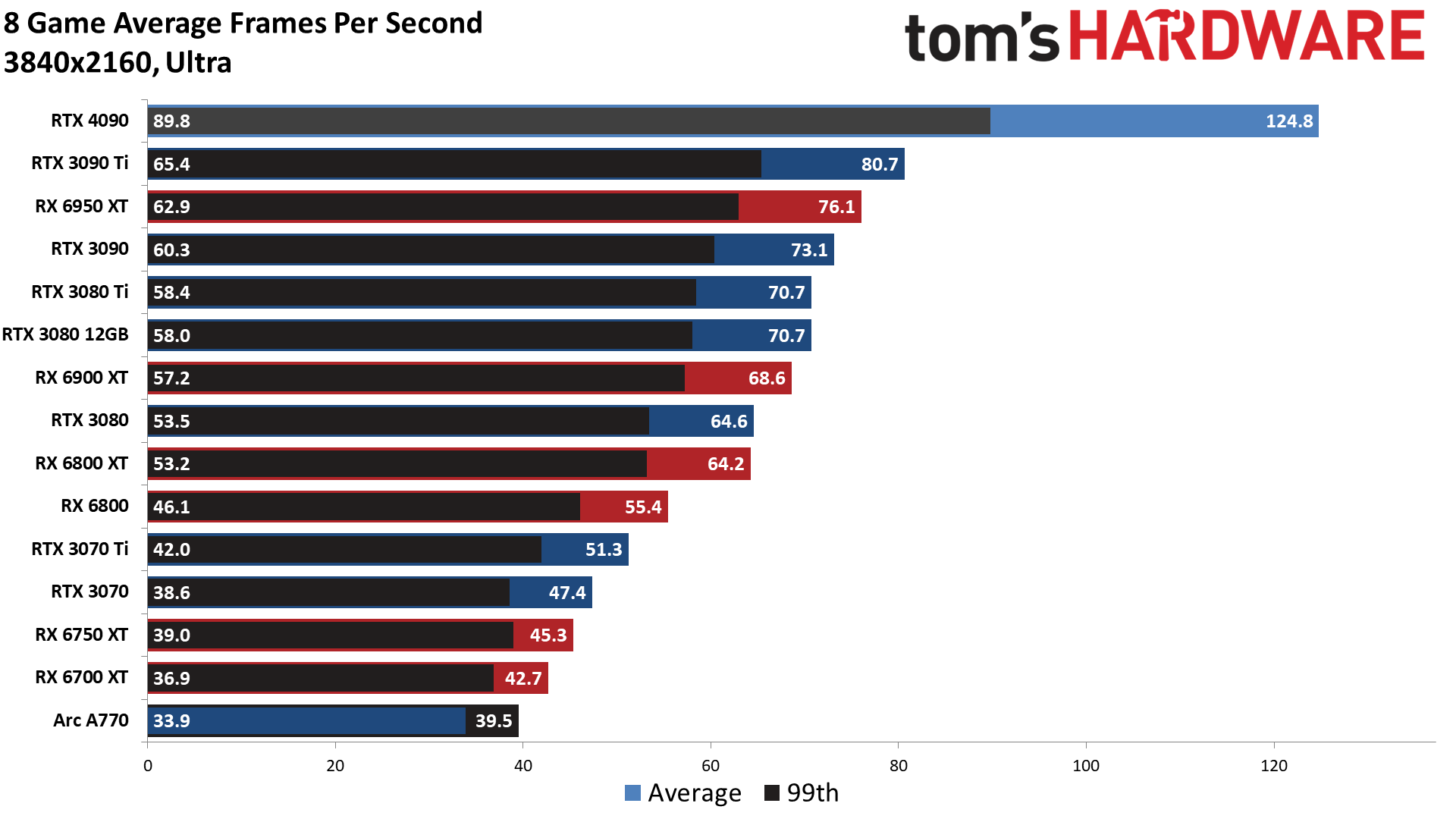

Let's pause for a moment while you check through those charts — there are nine in total, so don't just look at the overall average! But if you do just check the average, you'll see that the RTX 4090 provides a massive 55% improvement over the RTX 3090 Ti that launched six months ago. If you just bought a 3090 Ti, this one's going to sting more than a little. Yeah, you might have saved $500, but if you're in the market for a GPU that costs $1,000 or more, we think this level of performance at least justifies the cost.

Looking at other GPUs, there's a 71% generational uplift compared to the RTX 3090 Founders Edition and a slightly larger 77% improvement over the RTX 3080 Ti. Against AMD's best, the RX 6950 XT, you're still getting a 64% improvement, even though our standard gaming suite doesn't tap into most of the Ada Lovelace architecture's extra features. That's huge and might put Nvidia's raw performance out of reach for AMD's upcoming RDNA 3 GPUs. We'll have to wait a month or so to see where AMD lands, but this is Nvidia throwing down the gauntlet.
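Because each of these uplifts is measured against a different card, the percentages can't simply be subtracted from one another to compare the older GPUs. The minimal sketch below divides the speed ratios instead, assuming the rounded averages can be treated as exact multipliers of a common RTX 4090 result (an approximation, since every input is rounded). The dictionary and function names are illustrative only.

```python
# RTX 4090 uplift at 4K ultra in the standard suite, in percent, as quoted above.
# Treating these rounded averages as exact speed ratios is an approximation.
UPLIFT_4090_OVER = {
    "RTX 3090 Ti": 55,
    "RTX 3090": 71,
    "RTX 3080 Ti": 77,
    "RX 6950 XT": 64,
}


def ratio(uplift_pct: float) -> float:
    """Turn an 'X% faster' figure into a speed multiplier (55 -> 1.55)."""
    return 1 + uplift_pct / 100


def implied_gap(slower: str, faster: str) -> float:
    """Percent by which `faster` outpaces `slower`, implied by the 4090 uplifts.

    If 4090 = r_s * slower and 4090 = r_f * faster, then faster / slower = r_s / r_f.
    """
    r_s = ratio(UPLIFT_4090_OVER[slower])
    r_f = ratio(UPLIFT_4090_OVER[faster])
    return (r_s / r_f - 1) * 100


for card in ("RTX 3090", "RTX 3080 Ti", "RX 6950 XT"):
    print(f"RTX 3090 Ti vs {card}: {implied_gap(card, 'RTX 3090 Ti'):+.1f}%")
```

With these inputs, the 3090 Ti comes out roughly 10% ahead of the RTX 3090, 14% ahead of the 3080 Ti, and 6% ahead of the RX 6950 XT, which is how a 55% uplift over the 3090 Ti and a 71% uplift over the 3090 can both hold.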

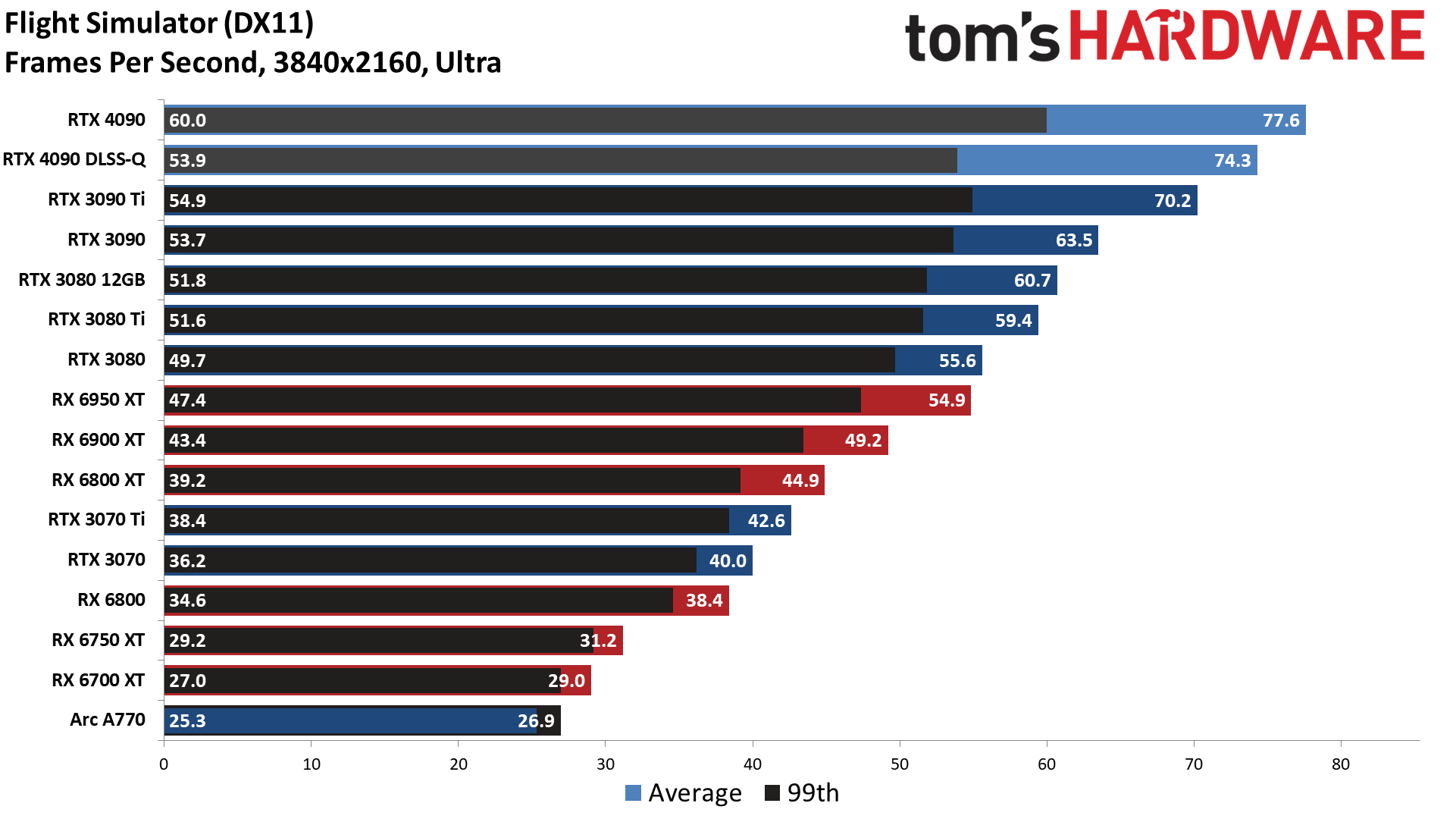

It's also important to note that the 55% average includes games that are still hitting CPU bottlenecks, like Flight Simulator. In those games, the RTX 4090 barely loses performance going from 1080p ultra to 1440p ultra to 4K ultra. That's part of why Nvidia's DLSS 3 Frame Generation technology is so exciting, but we'll get to that in a bit.

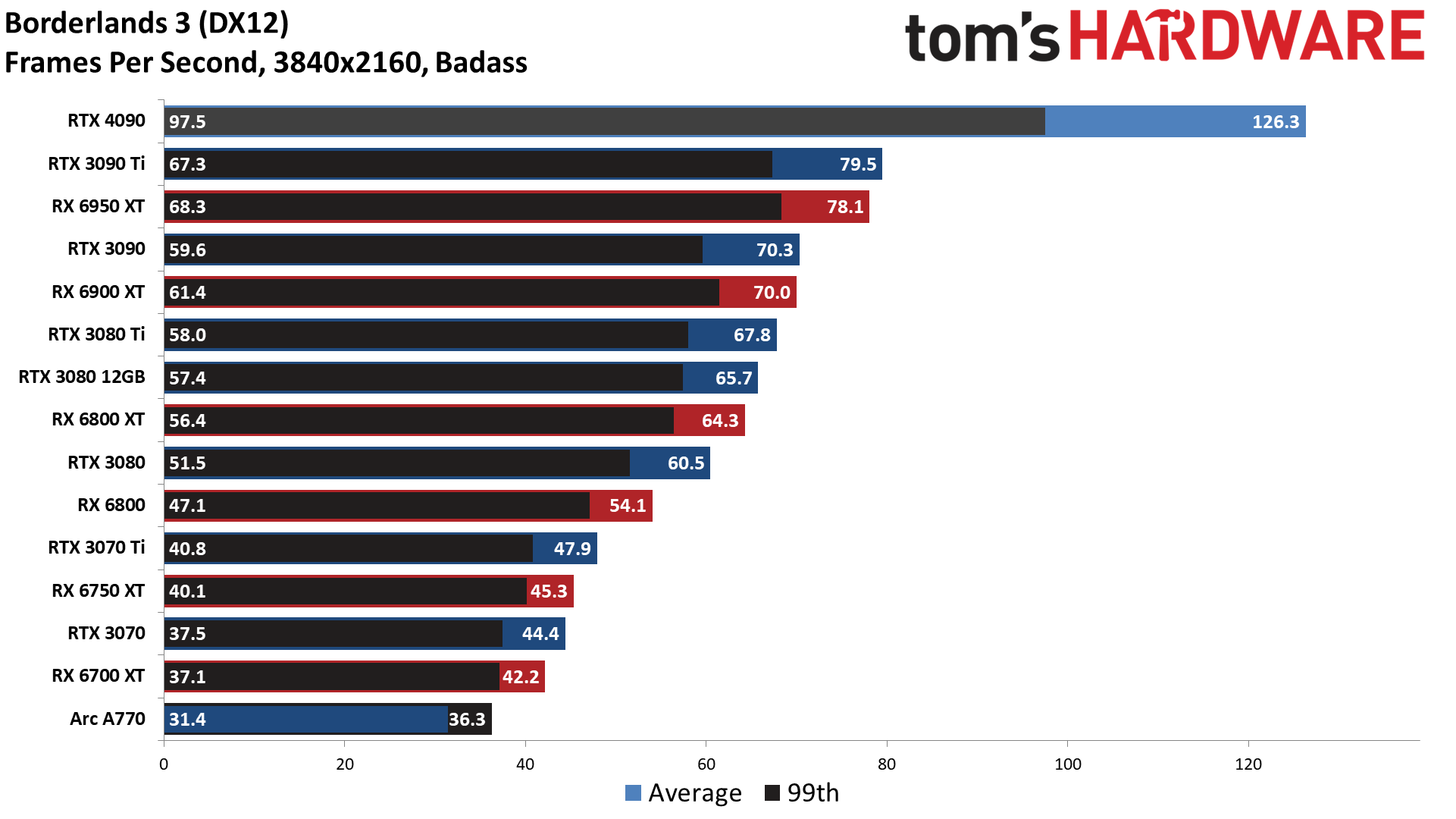

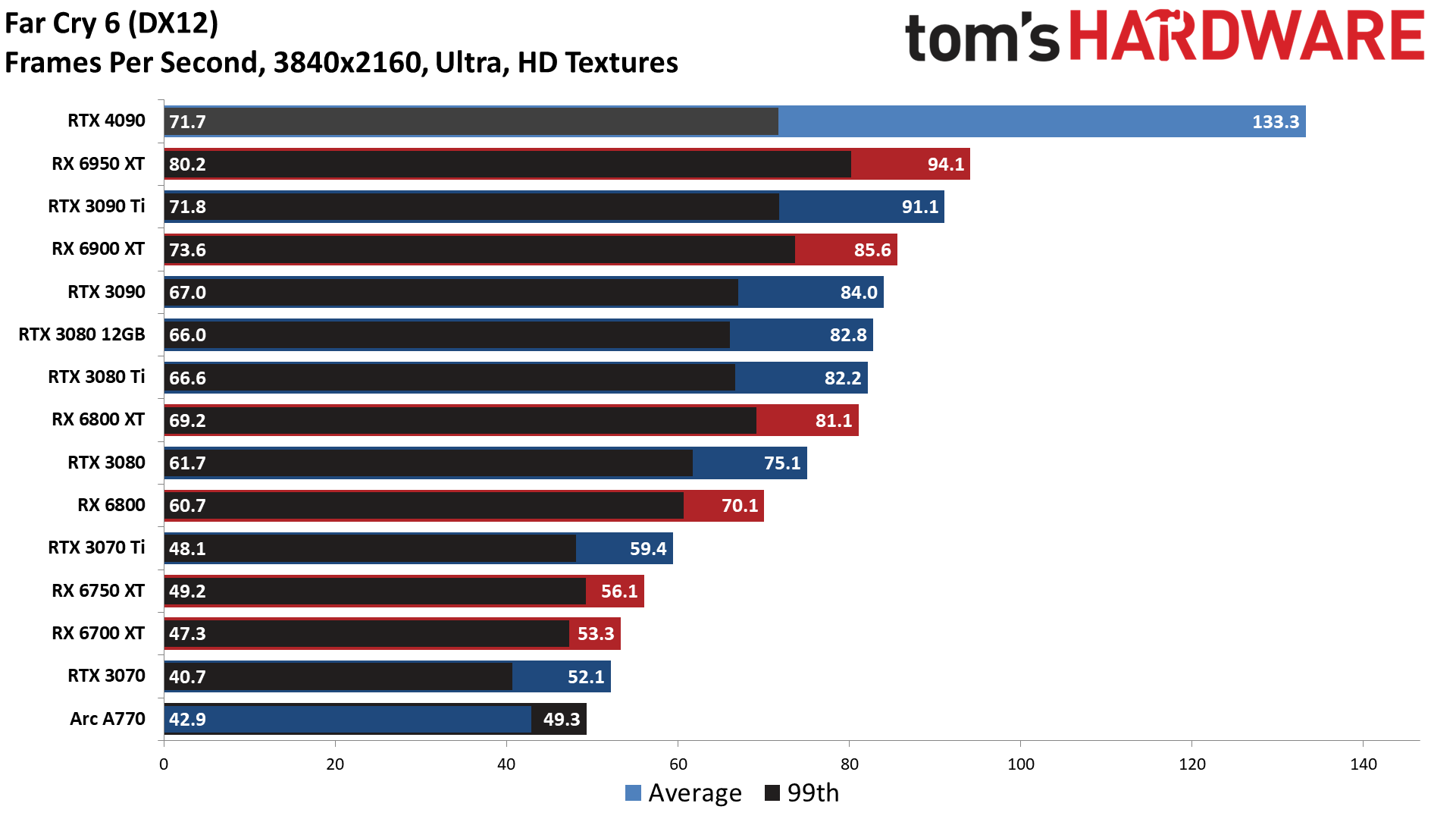

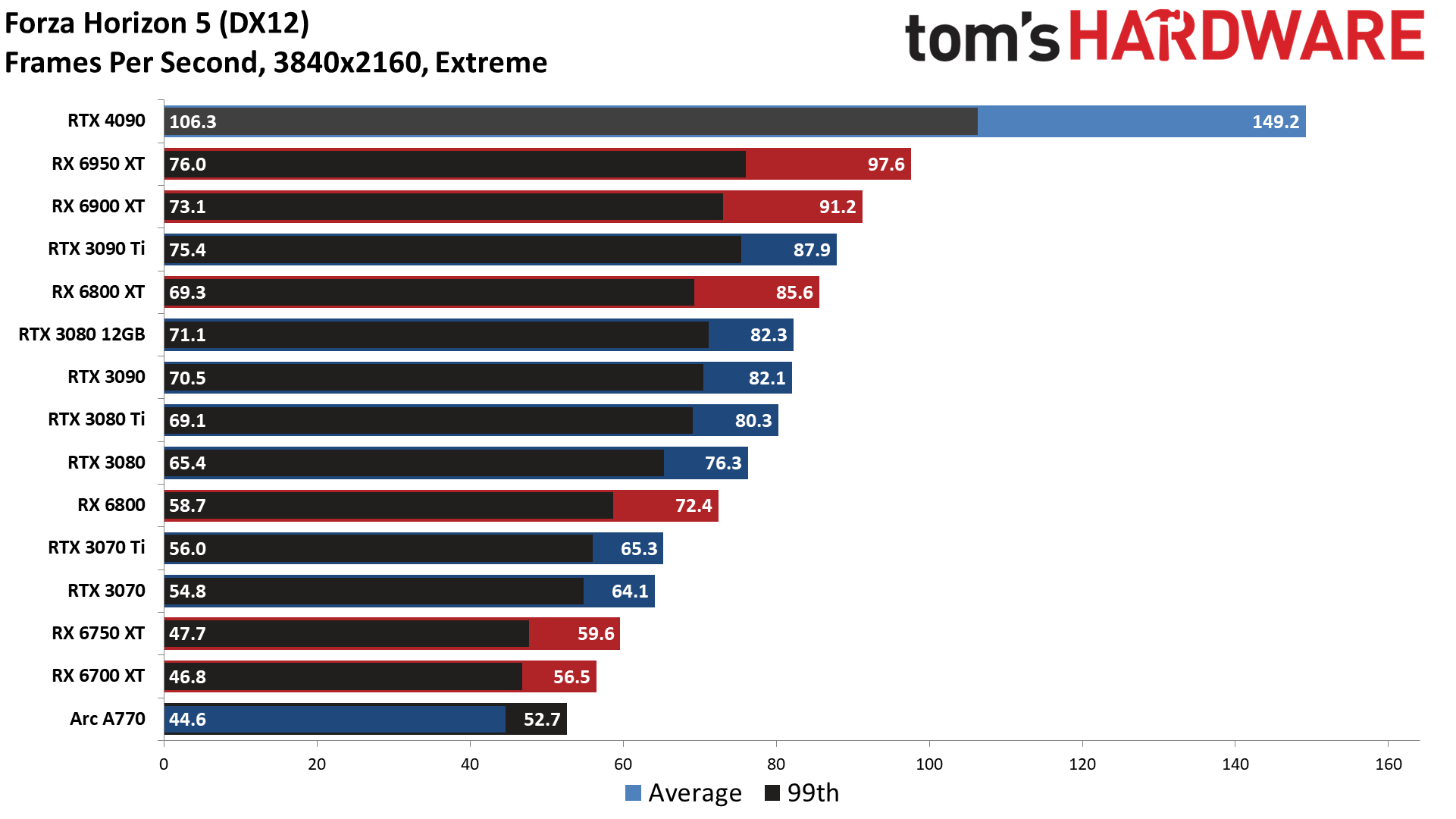

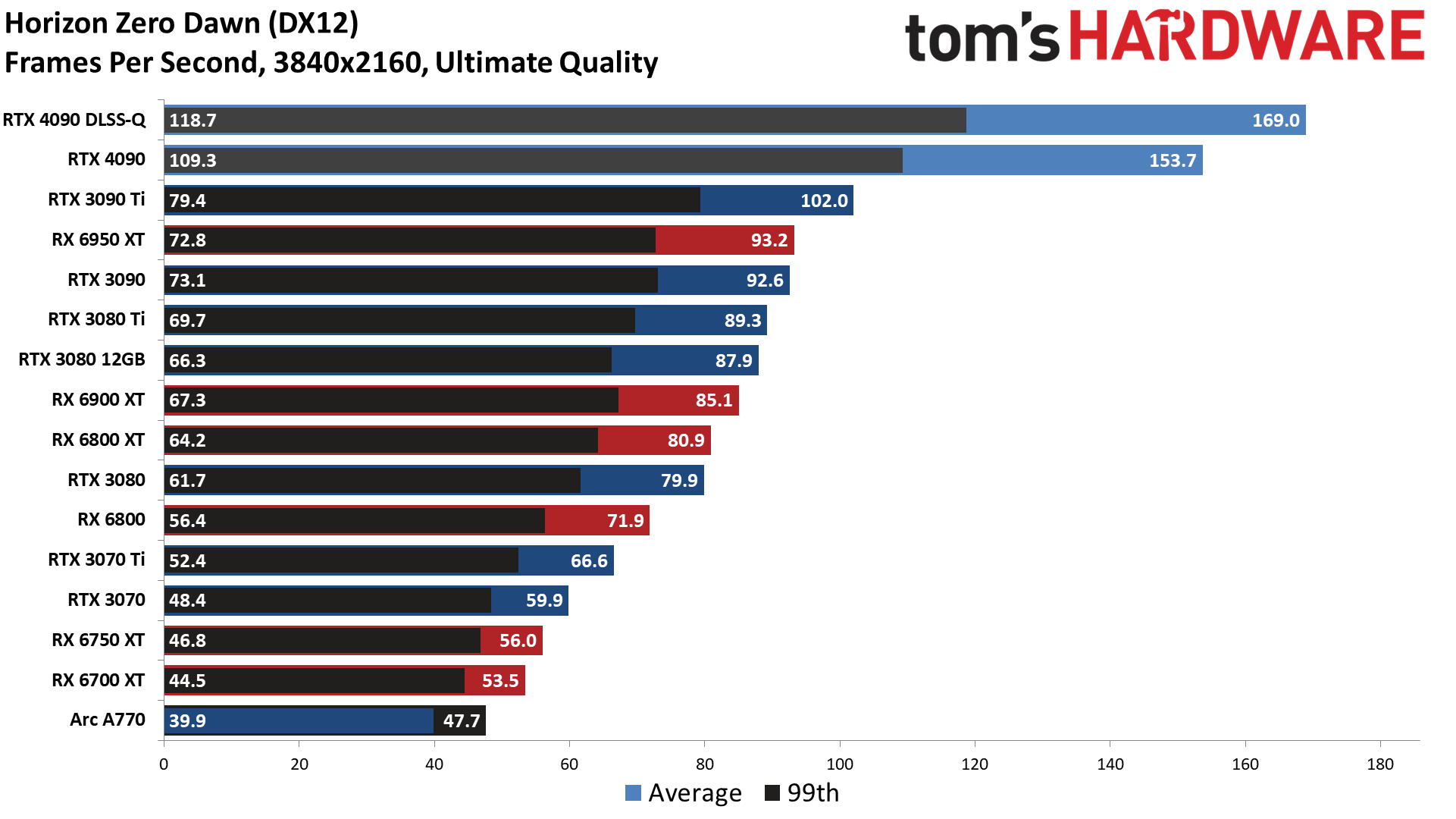

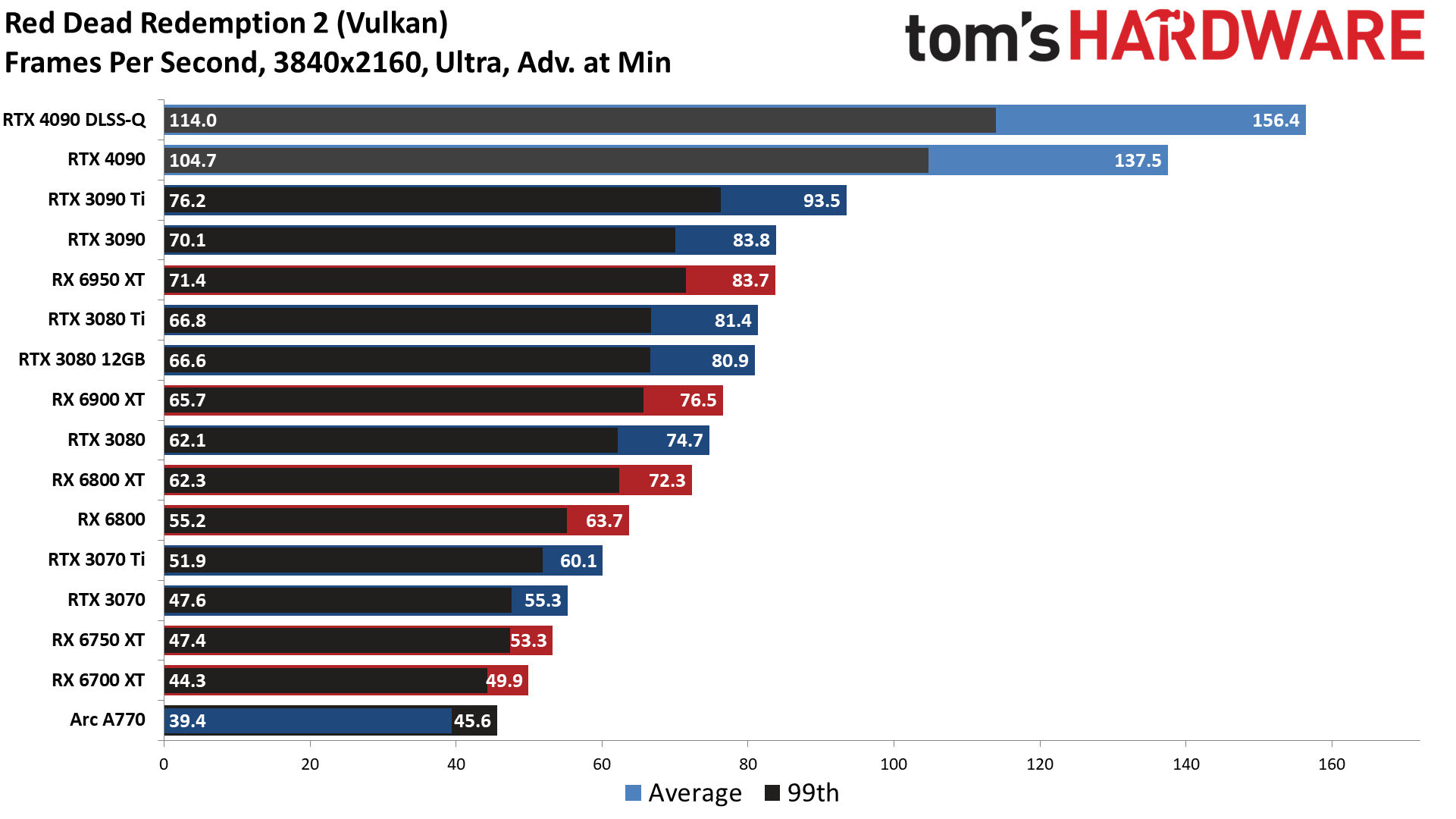

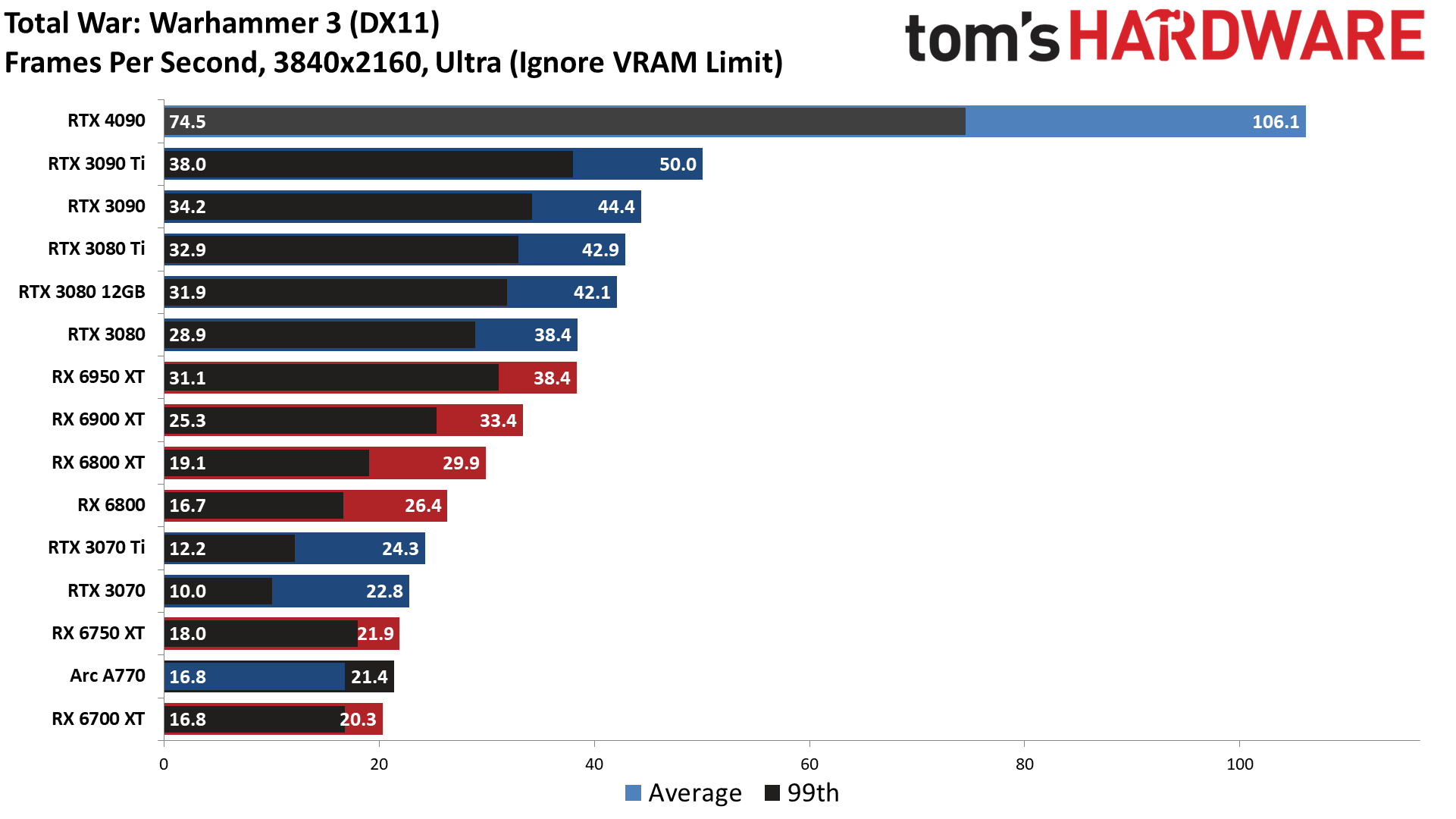

In the eight individual test results, the RTX 4090 beats the 3090 Ti by anywhere from 11% (Flight Simulator) to 112% (Total War: Warhammer 3). Those are the two outliers, with the remaining six games falling in a tighter range of 46% (Far Cry 6, Red Dead Redemption 2) to 70% (Forza Horizon 5).
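Outliers like these pull an overall average around differently depending on how it's computed. This page doesn't spell out the averaging method, so treat the geometric mean below as an assumption; it's a common choice for GPU benchmark summaries because it treats a 2x result and a 0.5x result symmetrically. This minimal sketch compares it with a plain arithmetic mean using just the two outliers from the text (11% and 112%); the function name is hypothetical.

```python
from statistics import fmean, geometric_mean


def average_uplift(uplifts_pct, method="geometric"):
    """Average a list of per-game 'X% faster' figures.

    Each uplift is converted to a speed ratio (e.g. 112 -> 2.12), the ratios
    are averaged, and the result is converted back to a percentage.
    """
    ratios = [1 + u / 100 for u in uplifts_pct]
    if method == "geometric":
        mean_ratio = geometric_mean(ratios)
    elif method == "arithmetic":
        mean_ratio = fmean(ratios)
    else:
        raise ValueError(f"unknown method: {method!r}")
    return (mean_ratio - 1) * 100


# The two outliers quoted above: Flight Simulator (+11%) and Warhammer 3 (+112%).
outliers = [11, 112]
print(f"geometric:  {average_uplift(outliers):.1f}%")
print(f"arithmetic: {average_uplift(outliers, 'arithmetic'):.1f}%")
```

The geometric mean lands about eight points lower (53.4% versus 61.5%) because it damps the pull of the 112% result; feeding in all eight per-game uplifts would show how far the two methods diverge for the full suite.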

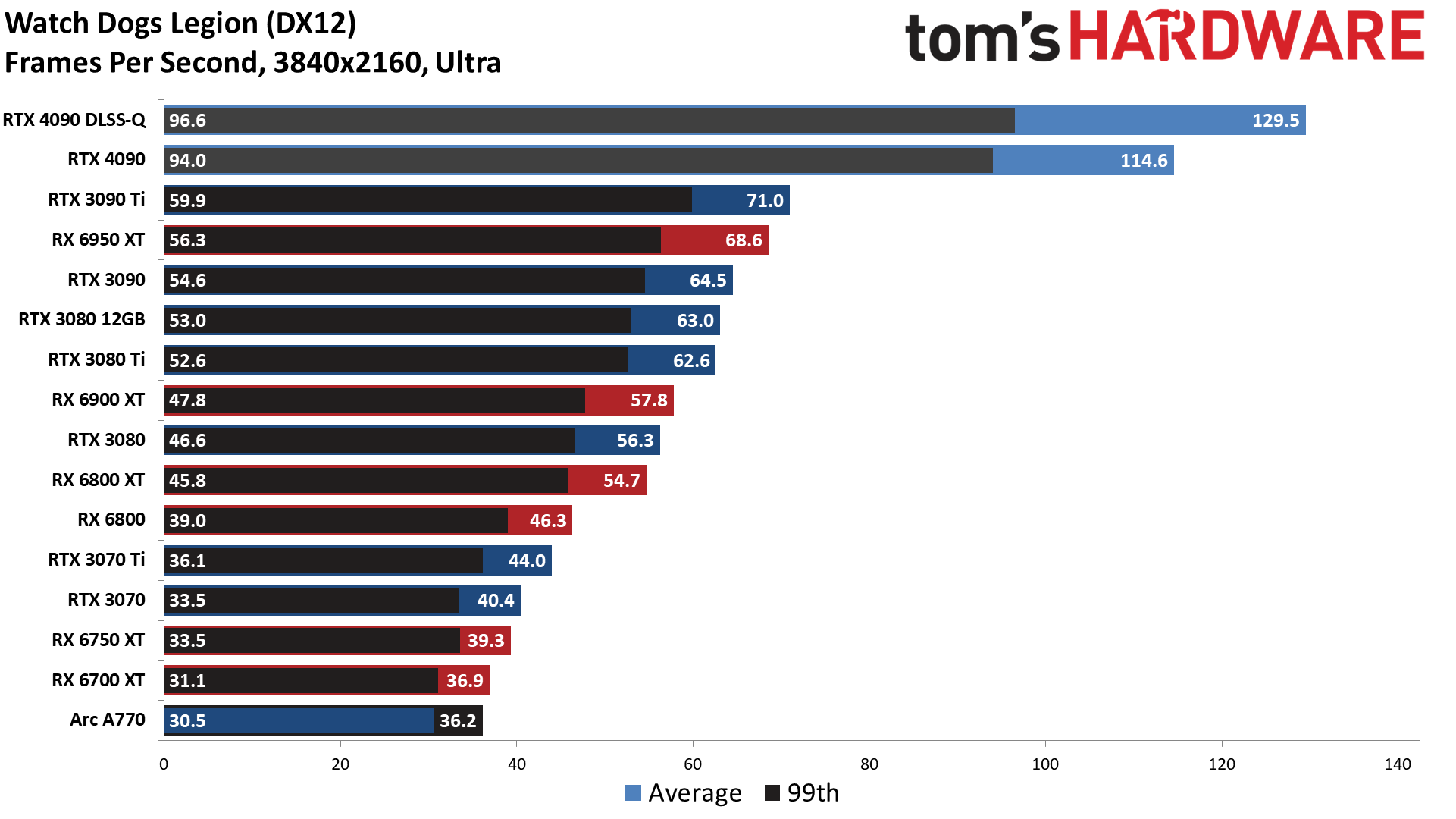

It's also worth noting what DLSS 2 in Quality mode does for performance in the four games that support it. Flight Simulator performance drops 4%, again due to the game's CPU-limited nature. Horizon Zero Dawn gains only 10%, Watch Dogs Legion gets a 13% boost, and Red Dead Redemption 2 performance improves by 14%. The RTX 3090 Ti saw up to a 35% increase in performance with DLSS 2 Quality mode, so again there are clear CPU bottlenecks coming into play, even at 4K.
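A simple way to see why DLSS helps the 4090 less than the 3090 Ti is a two-stage bottleneck model: the delivered frame rate is capped by whichever of the CPU or GPU is slower. This is a simplification (real frame times overlap and vary from frame to frame), and every number below is hypothetical, chosen only so that a GPU-bound card sees the 35% uplift quoted above while a faster card runs into the CPU limit.

```python
def observed_fps(gpu_fps: float, cpu_cap_fps: float) -> float:
    """Frame rate under a simple bottleneck model: the slower stage wins."""
    return min(gpu_fps, cpu_cap_fps)


def dlss_gain_pct(native_gpu_fps: float, cpu_cap_fps: float, gpu_speedup: float) -> float:
    """Percent change in delivered fps when upscaling speeds up only the GPU side.

    gpu_speedup is how much faster the GPU renders with upscaling enabled
    (1.35 means 35% faster); any fixed per-frame upscaling cost is ignored.
    """
    before = observed_fps(native_gpu_fps, cpu_cap_fps)
    after = observed_fps(native_gpu_fps * gpu_speedup, cpu_cap_fps)
    return (after / before - 1) * 100


# Hypothetical inputs for illustration, not measured results.
CPU_CAP = 110.0  # fps the CPU can prepare in some imaginary game
SPEEDUP = 1.35   # GPU-side speedup from upscaling

print(f"slower card, 60 fps native:  {dlss_gain_pct(60, CPU_CAP, SPEEDUP):+.0f}%")
print(f"faster card, 100 fps native: {dlss_gain_pct(100, CPU_CAP, SPEEDUP):+.0f}%")
```

The slower card keeps the full 35%, while the faster one gains only 10% before hitting the cap. The model never predicts a slowdown, so it can't reproduce Flight Simulator's 4% drop; that would need some per-frame cost for the upscaling pass, which this sketch leaves out.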

But this isn't a card designed primarily for standard rasterization rendering. It easily beats the previous-generation cards in such games, but where it's really destined to shine is in games with heavy ray tracing.

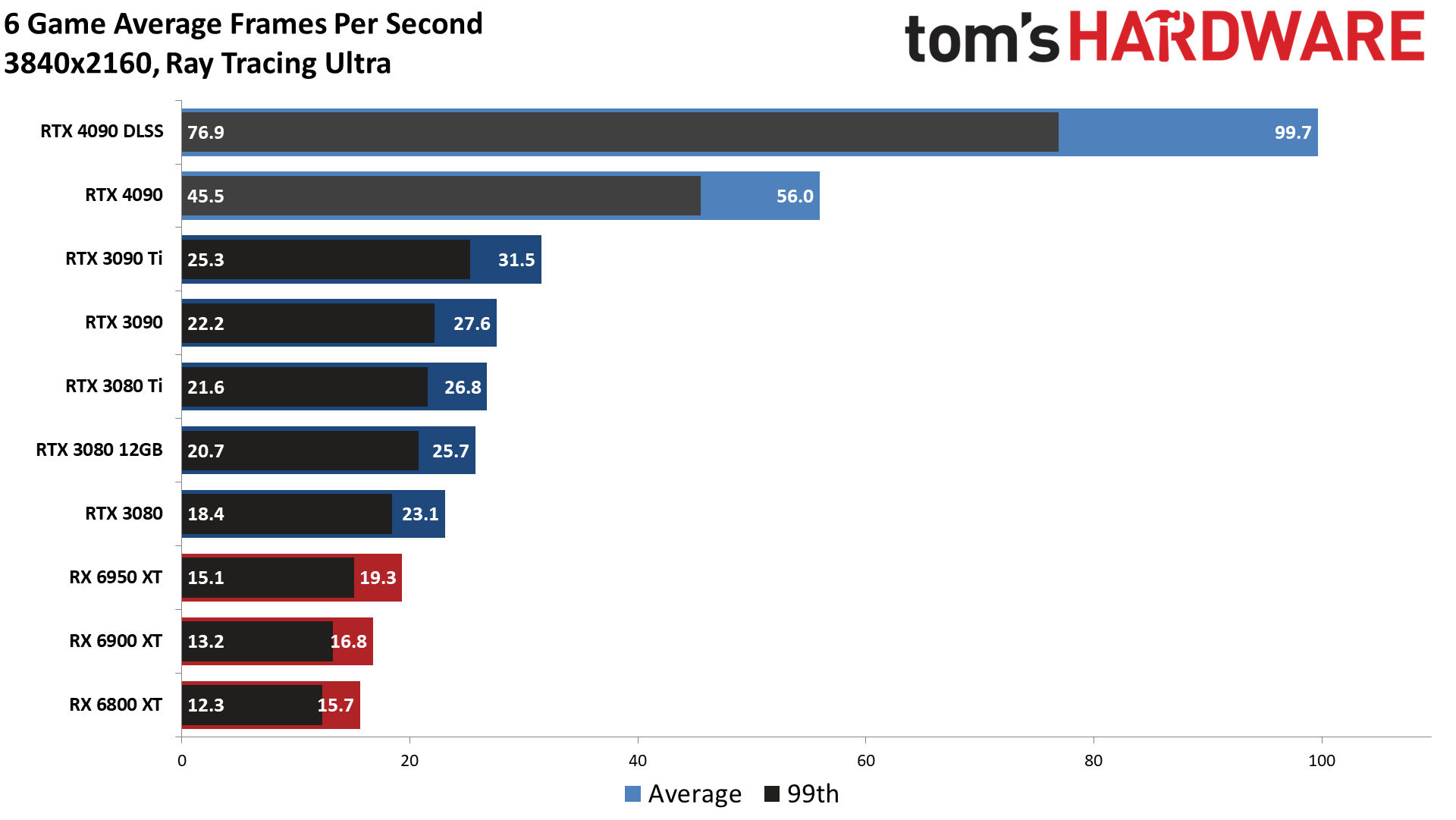

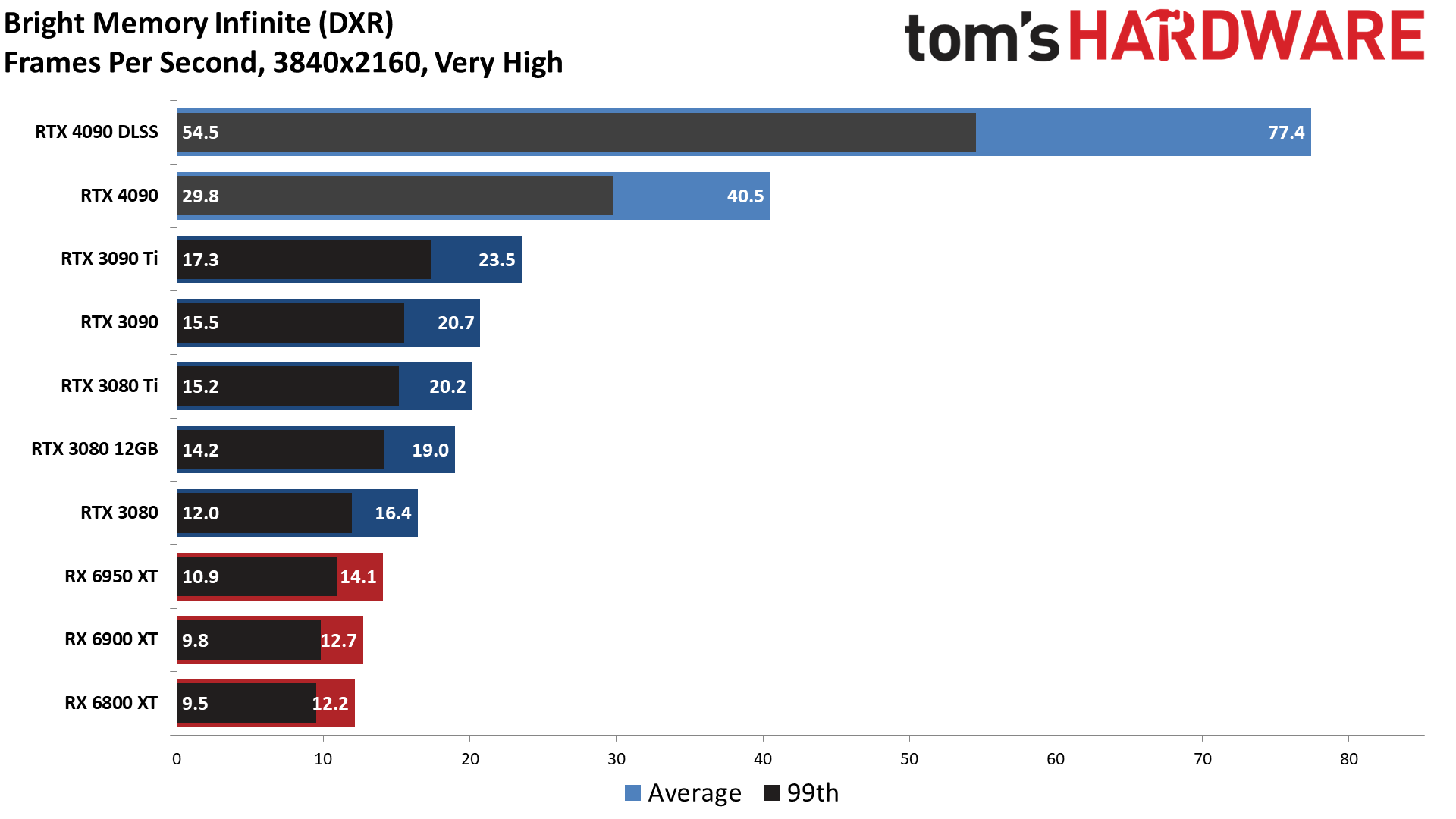

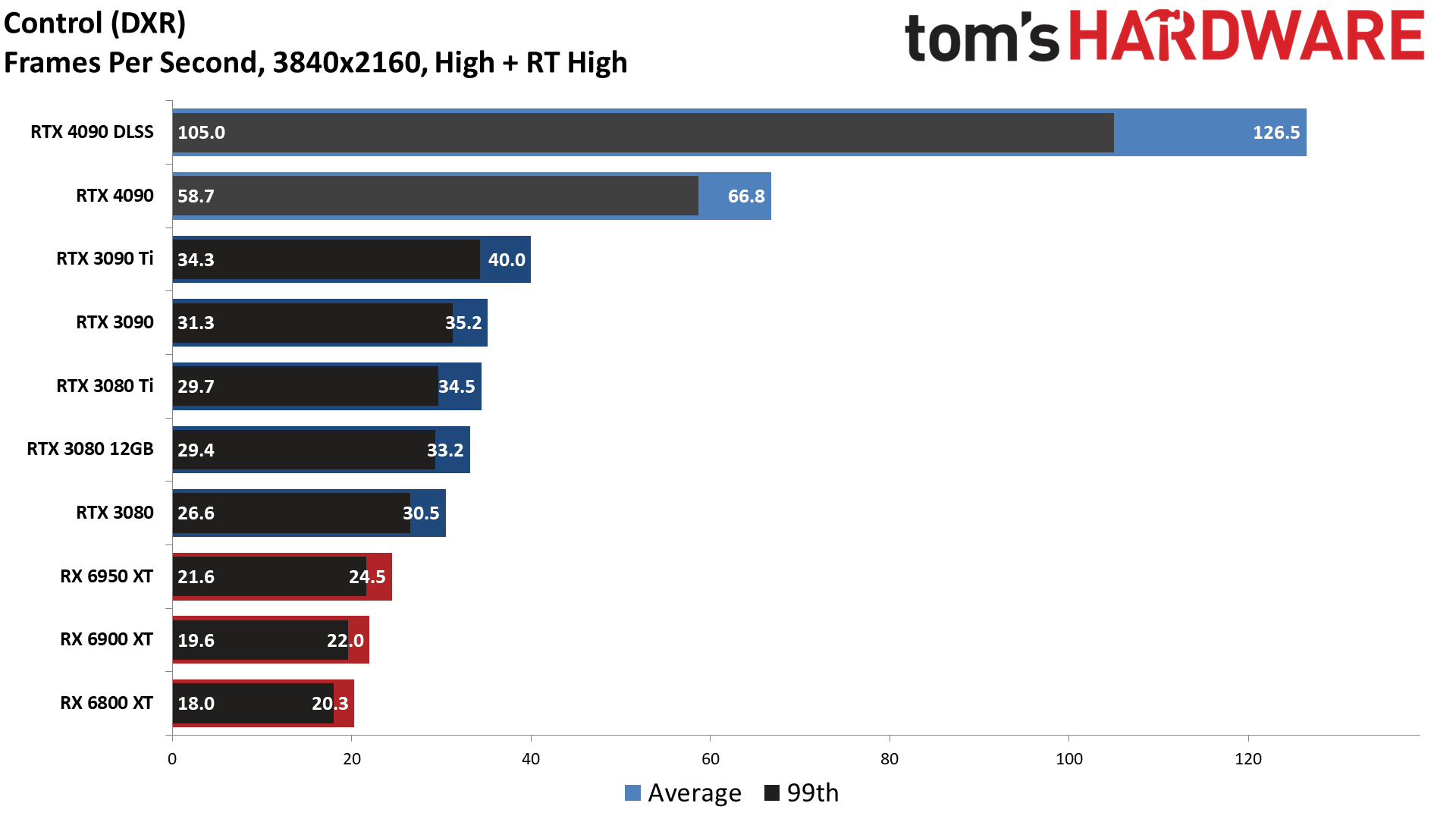

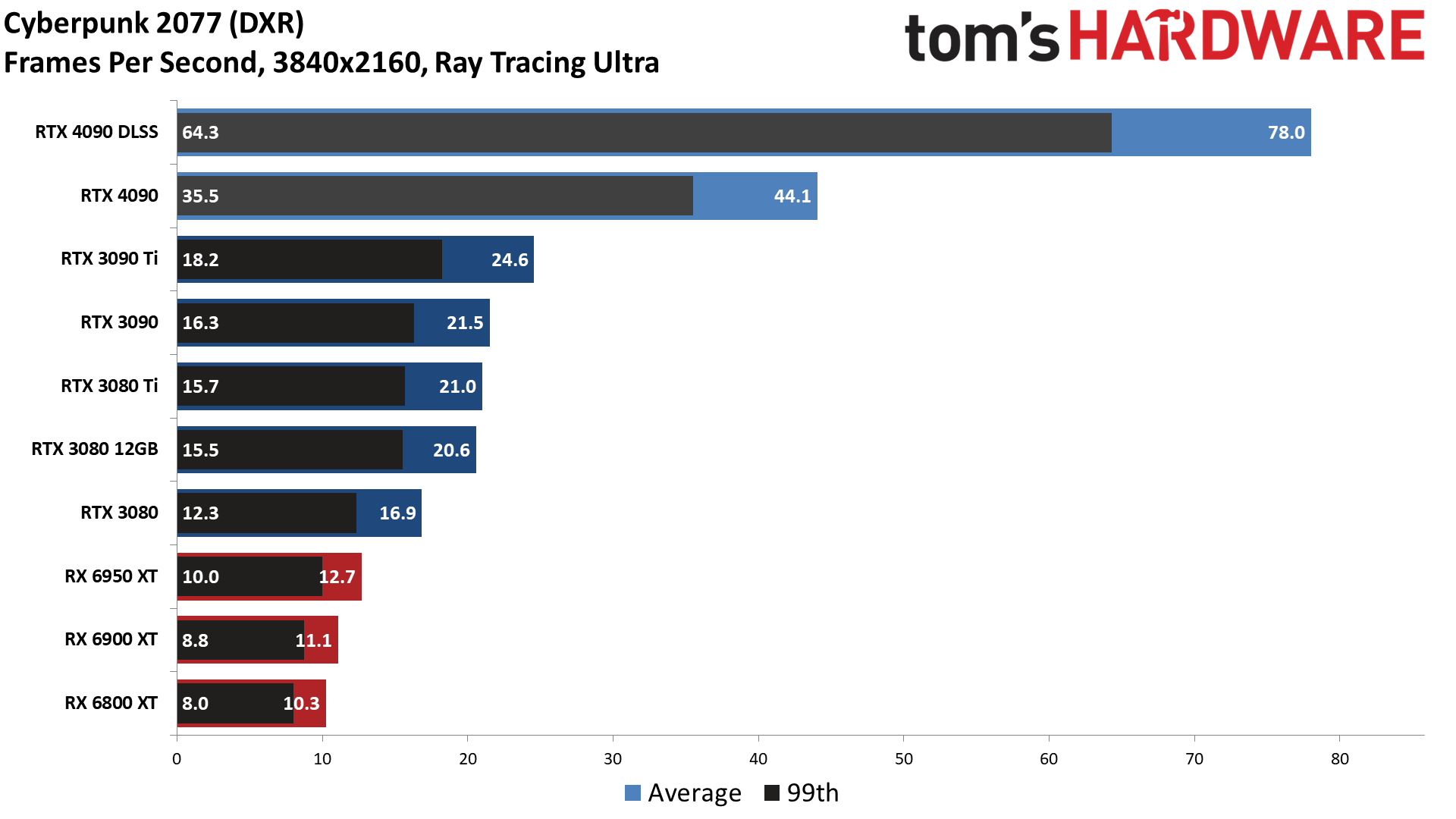

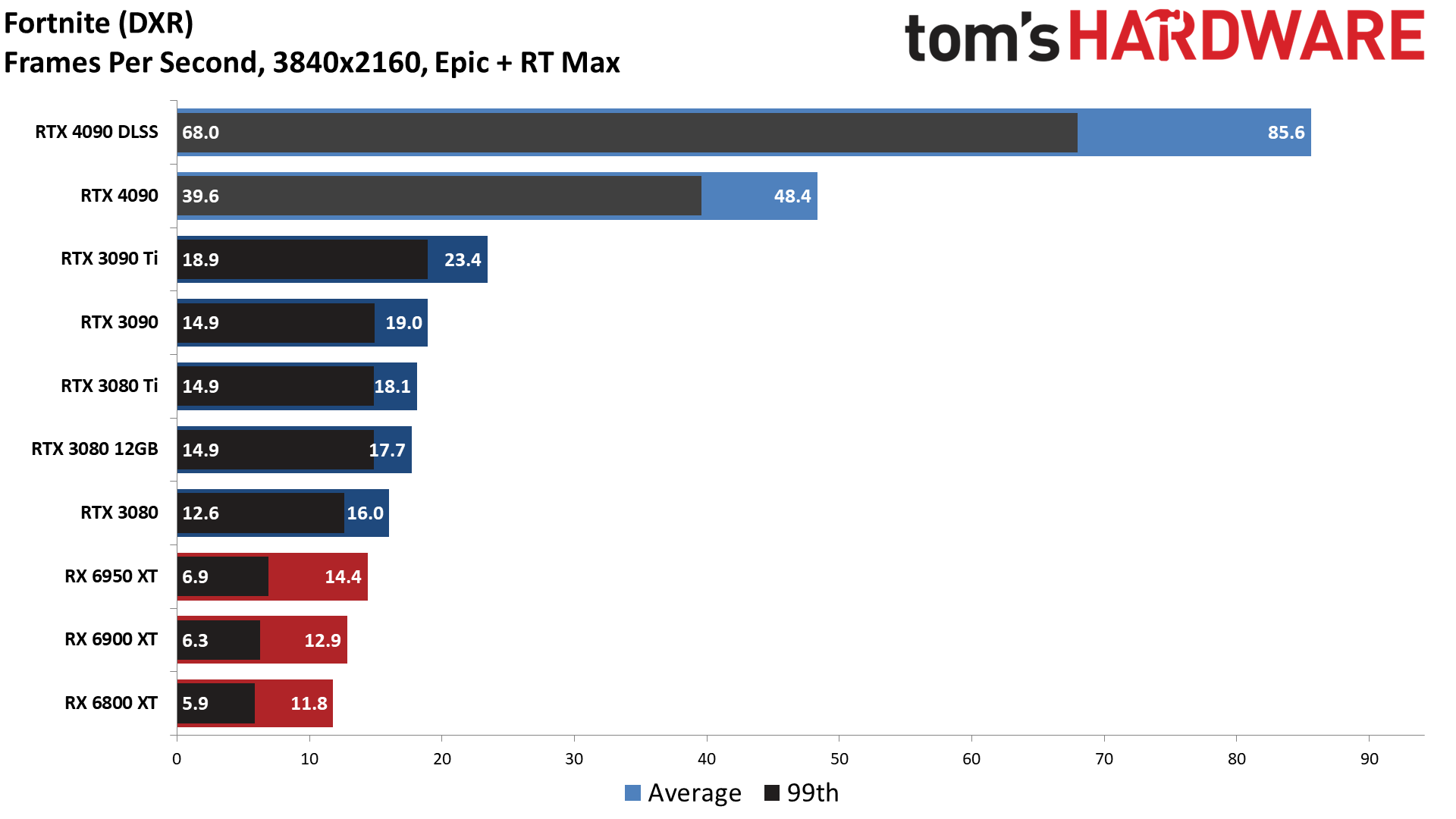

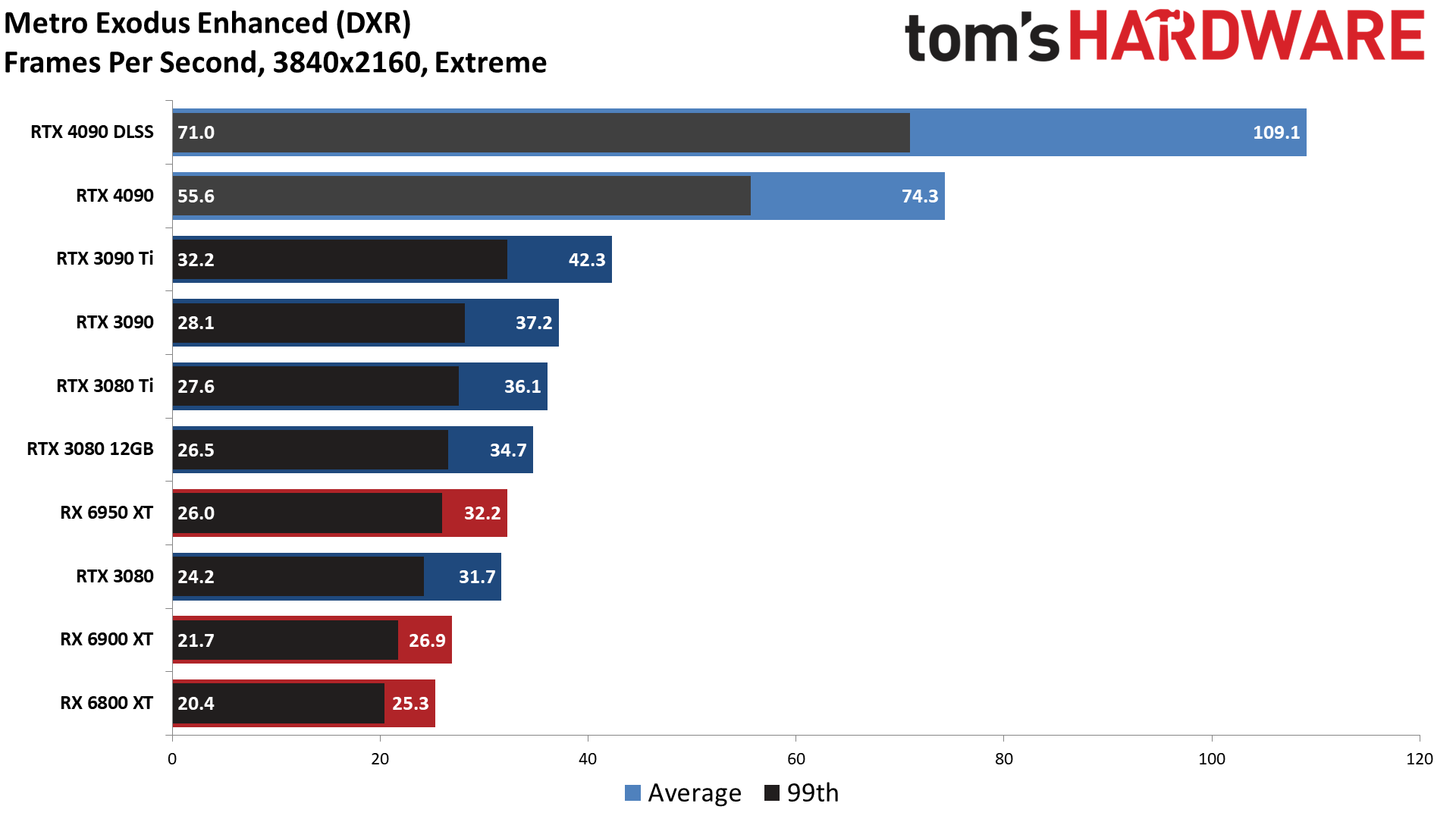

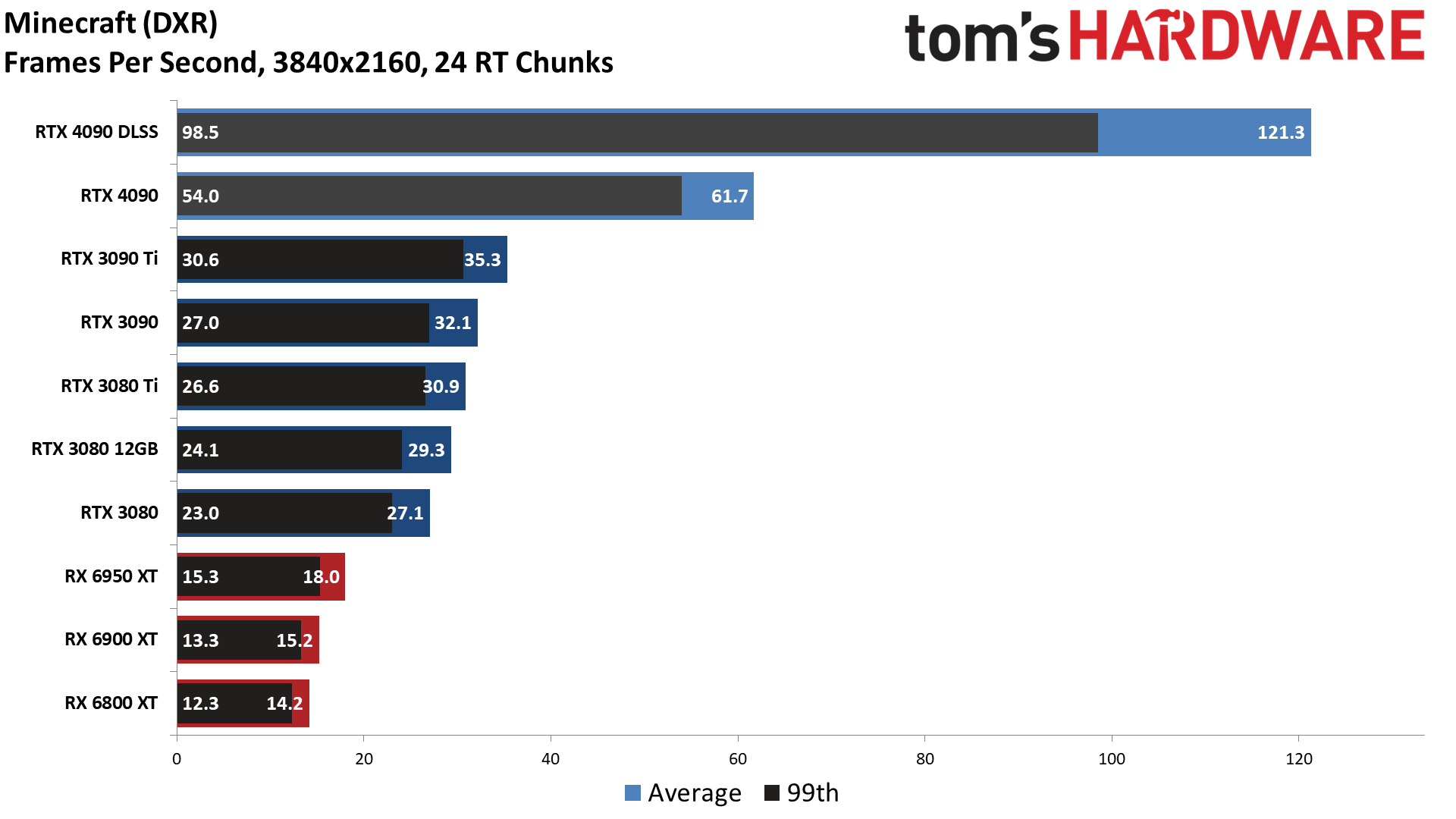

If the standard gaming performance didn't spark your interest, surely the ray tracing results will. Now we're looking at a 78% improvement over the previous-gen RTX 3090 Ti, and this is in existing games. More demanding ray tracing titles are on the way, though there's a good chance you'll need an RTX 40-series card with DLSS 3 to get decent performance out of those games.

Looking at other GPUs, the 4090 outpaces AMD's top RX 6950 XT by 190%, meaning it's nearly three times as fast. It's just over twice as fast as the RTX 3090 and 3080 Ti as well. And if you turn on DLSS Quality mode upscaling, which works on any of the RTX cards, the 4090's performance improves by 78%. The RTX 4090 running DLSS in its highest quality mode thus ends up with roughly five times the performance of the 6950 XT in demanding DXR games.
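Relative gains multiply rather than add. Taking the rounded figures quoted above at face value (a 190% lead over the RX 6950 XT at native resolution, then 78% more from DLSS Quality on the 4090), the combined advantage works out as follows; the helper is just illustrative arithmetic, not part of any test tooling.

```python
from math import prod


def combined_ratio(*uplifts_pct: float) -> float:
    """Chain successive 'X% faster' steps into one overall speed multiplier."""
    return prod(1 + u / 100 for u in uplifts_pct)


# RTX 4090 native vs RX 6950 XT (+190%), then DLSS Quality on the 4090 (+78%).
overall = combined_ratio(190, 78)
print(f"RTX 4090 with DLSS Quality vs RX 6950 XT: {overall:.2f}x")
```

That's 2.90 x 1.78, or about 5.2 times the 6950 XT's frame rate. Simply adding the percentages (190 + 78 = 268%, or 3.68x) would understate the gap.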

As usual, the individual game charts show a range of performance, though the spread isn't nearly as wide as in our standard rasterization benchmarks. That's because most ray tracing games fully tax the GPU, particularly at 4K. Across our six games, the 4090's advantage ranged from 67% in Control Ultimate Edition (which also happens to be the oldest game in our DXR suite) up to 106% in Fortnite with all the RT options maxed out.

Even without DLSS, the RTX 4090 comes close to delivering 60 fps or more in native 4K ultra ray tracing, and performance is certainly playable, at 40 fps or higher in all of the games. DLSS 2 Quality upscaling pushes that to 100 fps on average, and all of the DXR tests are now well above 60 fps. Again, we'll look at what DLSS 3 can do in some preview builds of games in just a moment.
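The fps averages here and the uplift figures quoted earlier can be cross-checked against each other. Assuming the roughly 100 fps DLSS Quality average and the 78% DLSS uplift describe the same DXR suite average (and accepting rounding error in both), dividing one by the other recovers an approximate native 4K figure, and dividing again by 2.90 gives a rough implied average for the RX 6950 XT. These are derived estimates, not separately measured results, and the helper name is hypothetical.

```python
def back_out(fps: float, uplift_pct: float) -> float:
    """Undo an 'X% faster' step: the frame rate before the uplift was applied."""
    return fps / (1 + uplift_pct / 100)


dlss_avg = 100.0                             # RTX 4090, DLSS 2 Quality, DXR average (rounded)
native_4090 = back_out(dlss_avg, 78)         # implied RTX 4090 native 4K DXR average
implied_6950xt = back_out(native_4090, 190)  # implied RX 6950 XT average, same suite

print(f"RTX 4090 native: ~{native_4090:.0f} fps")
print(f"RX 6950 XT:      ~{implied_6950xt:.0f} fps")
```

About 56 fps lines up with the 4090 coming close to, but not quite reaching, a 60 fps native average.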



Jarred Walton is a senior editor at Tom's Hardware focusing on everything GPU. He has been working as a tech journalist since 2004, writing for AnandTech, Maximum PC, and PC Gamer. From the first S3 Virge '3D decelerators' to today's GPUs, Jarred keeps up with all the latest graphics trends and is the one to ask about game performance.


-Fran-

Shouldn't this be up tomorrow?

EDIT: Never mind. Looks like it was today! YAY.

Thanks for the review!

Regards.

brandonjclark

colossusrage said: Can finally put 4K 120Hz displays to good use.

I'm still on a 3090, but with my 165Hz 1440p display, it maxes out most things just fine. I think I'm going to wait for the 5000-series GPUs. I know this is a major bump, but dang, it's expensive! I simply can't afford to be making these kinds of investments in depreciating assets for FUN.

JarredWaltonGPU

-Fran- said: Shouldn't this be up tomorrow?

Yeah, Nvidia almost always does major launches with Founders Edition reviews the day before launch, and partner card reviews the day of launch.

JarredWaltonGPU

brandonjclark said: I'm still on a 3090, but with my 165Hz 1440p display, it maxes out most things just fine. I think I'm going to wait for the 5000-series GPUs.

You could still possibly get $800 for the 3090. Then it's "only" $800 to upgrade! LOL. Of course, if you sell on eBay, it's $800 minus 15%.

kiniku

A review like this, comparing a 4090 to an expensive sports car we should be in awe and envy of, is a bit misleading. PC gaming systems don't equate to racing on the track or even the freeway. But the way this review is worded, if you don't buy this GPU, anything "less" is a compromise. That couldn't be further from the truth. People with "big pockets" aren't fools either, except maybe for the few readers here who have convinced themselves (and posted) that they need one, or who spend everything they make on their gaming PCs. Most gamers don't want or need a 450-watt-sucking, 3-slot space heater to enjoy an immersive, solid 3D experience.

spongiemaster

kiniku said: Most gamers don't want or need a 450-watt-sucking, 3-slot space heater to enjoy an immersive, solid 3D experience.

Congrats on stating the obvious. Most gamers have no need for a halo GPU that can be CPU-limited sometimes, even at 4K. A 50% performance improvement while using the same power as a 3090 Ti shows outstanding efficiency gains. Early reports are showing excellent undervolting results: a 150W decrease with only a 5% loss in performance.

Any chance we could get some 720p benchmarks?

LastStanding

"the RTX 4090 still comes with three DisplayPort 1.4a outputs"

"the PCIe x16 slot sticks with the PCIe 4.0 standard rather than upgrading to PCIe 5.0."

These missing features are selling points now, especially since NVIDIA's rival(s?) support the updated ports, so, IMO, this should have been included as a "con" too.

Another thing: why would enthusiasts value only "average metrics" when an "average" barely tells the complete story?! It doesn't show the program's stability, any frame-pacing or hitching issues, etc., so this is a VERY big oversight here, IMO.

What I also find weird is the DLSS benchmarks. Why champion the increase in fps, buuuut... never, EVER, mention DLSS's awful built-in sharpening pass?! 😏 What's the sense of having faster fps if the results show smeared imagery, ghosting, and/or artefacts to Hades? 🤔