Nvidia GeForce GTX 780 Ti Review: GK110, Fully Unlocked

Hot on the heels of AMD's Radeon R9 290X receiving acclaim for a fair price and high performance, Nvidia is launching its fastest single-GPU gaming card ever: GeForce GTX 780 Ti. It's quicker than 290X, but also more expensive. Is the premium worthwhile?

GK110, Unleashed: The Wonders Of Tight Binning

GeForce GTX Titan is a super-fast graphics card, right? We know it employs a trimmed-back version of Nvidia’s GK110 GPU, and sure, we’ve often wondered what a fully-functional version of the processor could do. But given the board’s once-uncontested performance lead and its butt-clenching $1000 price tag, it was never a sure thing that GK110, uncut, would ever surface on the desktop.

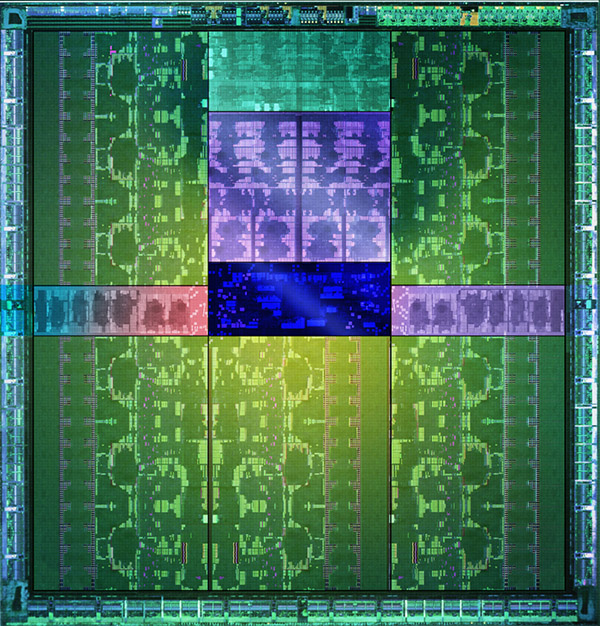

After all, GK110 is a 7.1-billion-transistor GPU. And Nvidia is already (happily) selling a 2880-core version into $5000 Quadro K6000 cards.

Competition has a way of altering perspective, though. AMD’s Radeon R9 290X launch wasn’t perfect. However, it taught us that the Hawaii GPU, properly cooled, can humble Nvidia’s mighty Titan at a much lower price point.

Not to be caught off-guard, Nvidia was already binning its GK110B GPUs, which have been shipping since this summer on GeForce GTX 780 and Titan cards. The company won’t get specific about what it was looking for, but we have to imagine it set aside flawless processors with the lowest power leakage to create a spiritual successor for GeForce GTX 580. Today, those fully-functional GPUs drop into Nvidia’s GeForce GTX 780 Ti.

GK110 In Its Full Glory

That’s right—we’re finally getting a glimpse of GK110 with all of its Streaming Multiprocessors turned on. So, GeForce GTX 780 Ti features a total of 2880 CUDA cores and 240 texture units. For the sake of completeness, we can work backward: given 192 shaders per SMX, we have 15 working blocks, and with three SMX blocks per Graphics Processing Cluster, there are five of those operating in parallel, too.
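To make that working-backward step explicit, here is a minimal Python sketch. The per-SMX figures are the ones quoted in this review; the constant and function names are our own and purely illustrative.

```python
# Per-SMX resources on GK110, as quoted in the review text.
SHADERS_PER_SMX = 192
TEXTURE_UNITS_PER_SMX = 16   # 240 texture units spread over 15 SMX blocks
SMX_PER_GPC = 3


def smx_count(cuda_cores: int) -> int:
    """Work backward from a CUDA core count to the number of active SMX blocks."""
    smx, leftover = divmod(cuda_cores, SHADERS_PER_SMX)
    if leftover:
        raise ValueError("core count is not a whole number of SMX blocks")
    return smx


smx = smx_count(2880)
gpcs = smx // SMX_PER_GPC                  # exact for the full chip: 15 is a multiple of 3
texture_units = smx * TEXTURE_UNITS_PER_SMX

print(f"{smx} SMX blocks, {gpcs} GPCs, {texture_units} texture units")
# prints: 15 SMX blocks, 5 GPCs, 240 texture units
```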

This is one SMX more than GeForce GTX Titan, with its 2688 CUDA cores, enjoys. So, you get 192 additional shaders and 16 more texture units. Nvidia turns up the GPU’s clock rates, too. Titan’s base clock is 837 MHz and its typical GPU Boost frequency is specified at 876 MHz. GTX 780 Ti starts at 875 MHz and, Nvidia says, can be expected to stretch up to 928 MHz in most workloads.
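For a rough sense of what the extra SMX and higher clocks add up to on paper, the sketch below multiplies core count by clock rate for both cards. Treat cores times clock as a crude proxy for peak shader throughput, not a prediction of frame rates; the `Card` class and `shader_rate_ratio` helper are hypothetical names introduced here for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Card:
    name: str
    cuda_cores: int
    base_mhz: int
    boost_mhz: int


# Specifications as quoted in the review text.
TITAN = Card("GeForce GTX Titan", 2688, 837, 876)
GTX_780_TI = Card("GeForce GTX 780 Ti", 2880, 875, 928)


def shader_rate_ratio(new: Card, old: Card, clock: str = "base_mhz") -> float:
    """Ratio of (cores x clock) between two cards at the chosen clock field."""
    return (new.cuda_cores * getattr(new, clock)) / (old.cuda_cores * getattr(old, clock))


for clock in ("base_mhz", "boost_mhz"):
    ratio = shader_rate_ratio(GTX_780_TI, TITAN, clock)
    print(f"{clock}: 780 Ti is {100 * (ratio - 1):.1f}% ahead of Titan on paper")
```

By this measure, the paper advantage works out to roughly 12% at base clocks and a little over 13% at the typical GPU Boost frequencies.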

GK110’s back-end looks the same. Six ROP partitions each handle up to eight pixels per clock, adding up to 48 ROP units. A sextet of 64-bit controllers facilitates a familiar 384-bit aggregate memory bus. Only, rather than dropping 1500 MHz modules onto it like the company did with Titan, Nvidia leans on the latest 1750 MHz memory, yielding a 7000 MT/s data rate and up to 336 GB/s of bandwidth.
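The bandwidth figure follows from the bus width and memory clock. GDDR5 moves four bits per pin per memory clock, so 1750 MHz modules transfer 7000 million times per second on each pin. A short sketch of the arithmetic, with the function name being our own and the Titan result derived from the 1500 MHz figure above rather than taken from a spec sheet:

```python
GDDR5_TRANSFERS_PER_CLOCK = 4   # GDDR5 moves four bits per pin per memory clock


def gddr5_bandwidth_gb_s(memory_clock_mhz: float, bus_width_bits: int) -> float:
    """Peak memory bandwidth in GB/s for a GDDR5 bus."""
    transfers_per_second = memory_clock_mhz * 1e6 * GDDR5_TRANSFERS_PER_CLOCK
    bytes_per_transfer = bus_width_bits / 8
    return transfers_per_second * bytes_per_transfer / 1e9


BUS_WIDTH_BITS = 6 * 64   # six 64-bit controllers
ROP_UNITS = 6 * 8         # six partitions, eight pixels per clock each

print(f"Bus width: {BUS_WIDTH_BITS} bits, ROPs: {ROP_UNITS}")
print(f"GTX 780 Ti (1750 MHz): {gddr5_bandwidth_gb_s(1750, BUS_WIDTH_BITS):.0f} GB/s")
print(f"Titan (1500 MHz):      {gddr5_bandwidth_gb_s(1500, BUS_WIDTH_BITS):.0f} GB/s")
```

On the same 384-bit bus, the faster memory is worth about 48 GB/s of additional peak bandwidth over Titan.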

The design decision that’ll probably trigger the most controversy is Nvidia’s choice to use 3 GB of GDDR5, down from Titan’s 6 GB. In today’s games, I’ve tested 3 GB cards like the Radeon R9 280X at up to 3840x2160 and not had issues running out of memory. You will, however, have trouble with three QHD screens at 7680x1440. Battlefield 4, for example, goes right over 3 GB of memory usage at that resolution. You’ll be fine at 5760x1080 and Ultra HD for now, but on-board GDDR5 will become a bigger issue moving forward.
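One way to see why the triple-QHD setup is the outlier is to compare raw pixel counts. This is only a rough proxy: a game's memory footprint also depends on textures, anti-aliasing, and other settings that do not scale with resolution, so the sketch below shows the ordering, not the actual memory use. The labels and helper name are ours.

```python
# Resolutions mentioned in the text, as (width, height).
RESOLUTIONS = {
    "5760x1080 (three 1920x1080 screens)": (5760, 1080),
    "3840x2160 (Ultra HD)": (3840, 2160),
    "7680x1440 (three QHD screens)": (7680, 1440),
}


def megapixels(width: int, height: int) -> float:
    """Pixels per frame, in millions."""
    return width * height / 1e6


for label, (width, height) in RESOLUTIONS.items():
    print(f"{label}: {megapixels(width, height):.2f} MP")
```

Three QHD screens push about a third more pixels per frame than Ultra HD, and nearly 1.8 times as many as 5760x1080.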

Is GeForce GTX 780 Ti More Titanic Than Titan?

At this juncture, the most natural question to ask is: well, what about the $1000 GeForce GTX Titan? Nvidia is calling GeForce GTX 780 Ti the fastest gaming graphics card ever, and it’s selling for $700. That’s less than Titan for a card with technically superior specifications.

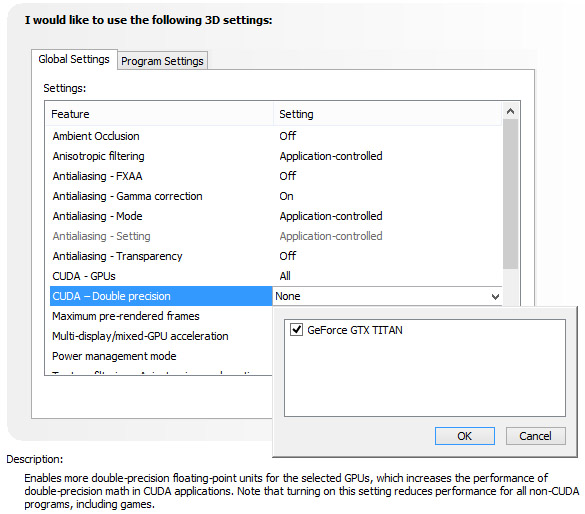

Titan lives on as a solution for CUDA developers and anyone else who needs GK110’s double-precision compute performance, but is not beholden to the workstation-oriented ECC memory protection, RDMA functionality, or Hyper-Q features you’d get from a Tesla or Quadro card. Remember—each SMX block on GK110 includes 64 FP64 CUDA cores. A Titan card with 14 active SMXes, running at 837 MHz, should be capable of 1.5 TFLOPS of double-precision math.

GeForce GTX 780 Ti, on the other hand, gets neutered in the same way Nvidia handicapped its GTX 780. The card’s driver deliberately operates GK110’s FP64 units at 1/8 of the GPU’s clock rate. When you multiply that by the 3:1 ratio of single- to double-precision CUDA cores, you get a 1/24 rate. The math on that adds up to 5 TFLOPS of single- and 210 GFLOPS of double-precision compute performance.
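The compute figures for both cards can be reproduced with the usual peak-throughput convention of two floating-point operations per core per clock (one fused multiply-add). The sketch below assumes that convention and uses base clocks throughout; `peak_gflops` is a hypothetical helper name, and the 1/8 rate is the driver limit described above.

```python
FLOPS_PER_CORE_PER_CLOCK = 2   # one fused multiply-add counts as two operations
FP32_CORES_PER_SMX = 192
FP64_CORES_PER_SMX = 64


def peak_gflops(cores: int, clock_mhz: float, rate: float = 1.0) -> float:
    """Theoretical peak in GFLOPS; `rate` scales the clock the units run at."""
    return cores * FLOPS_PER_CORE_PER_CLOCK * clock_mhz * rate / 1000


# GeForce GTX 780 Ti: 15 SMX blocks at 875 MHz, FP64 units held to 1/8 clock.
ti_fp32 = peak_gflops(15 * FP32_CORES_PER_SMX, 875)
ti_fp64 = peak_gflops(15 * FP64_CORES_PER_SMX, 875, rate=1 / 8)

# GeForce GTX Titan: 14 SMX blocks at 837 MHz, FP64 units at full rate.
titan_fp64 = peak_gflops(14 * FP64_CORES_PER_SMX, 837)

print(f"780 Ti FP32: {ti_fp32 / 1000:.2f} TFLOPS")
print(f"780 Ti FP64: {ti_fp64:.0f} GFLOPS (1/{ti_fp32 / ti_fp64:.0f} of FP32)")
print(f"Titan FP64:  {titan_fp64 / 1000:.2f} TFLOPS")
```

The 1/24 ratio falls out of the two factors in the text: one FP64 core for every three FP32 cores, running at one-eighth of the clock.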

That’s a compromise, no question. But Nvidia had to do something to preserve Titan’s value and keep GeForce GTX 780 Ti from cannibalizing sales of much more expensive professional-class cards. AMD does something similar with its Hawaii-based cards (though not as severe), limiting DP performance to 1/8 of FP32.

And so we’re left with GeForce GTX 780 Ti unequivocally taking the torch from Titan when it comes to gaming, while Titan trudges forward more as a niche offering for the development and research community. The good news for desktop enthusiasts is that Nvidia’s price bar comes down $300, while performance goes up.

Now, is that enough to flip the script on AMD and its Radeon R9 290X? The company is still selling at a very attractive (for ultra-high-end hardware) $550 price point, after all. Here’s the thing: as you saw two days ago from our R9 290 coverage, retail cards are rolling into our lab, and we’re not seeing the same Titan-beating performance that manifested in Radeon R9 290X Review: AMD's Back In Ultra-High-End Gaming. With only a handful of data points, some pegging 290X between GeForce GTX 770 and 780 and others showing it quicker than Titan, consistency appears to be AMD’s enemy right now. Company representatives confirm that there's a discrepancy between absolute fan speed and its PWM controller, and say AMD is working to remedy this with a software update. Our German team continued investigating as I peeled off to cover GeForce GTX 780 Ti, and demonstrated that the press and retail cards are spinning at different fan speeds. But there's more to this story relating to ambient conditions, so you'll be hearing more about it soon.

Nvidia is seizing on this issue in the meantime, and with good reason. With clock rates ranging from 727 to 1000 MHz on our Radeon R9 290X cards, and AMD’s reference thermal solution limiting performance at different frequencies in different games, we couldn’t draw a conclusion one way or the other in AMD Radeon R9 290 Review: Fast And $400, But Is It Consistent? Can we be any more definitive about Nvidia’s response to all of the Hawaii news?

faster23rd My heart broke a little bit for AMD. Unless AMD's got something up their sleeve, it's up to the board manufacturers now to get the 290X in a better competitive stance than the 780 ti.

tomc100 At $700, AMD has nothing to worry about other than the minority of enthusiast who are willing to pay $200 more for the absolute fastest. Also, when games like Battlefield 4 uses mantle the performance gains will be eroded or wiped out.

expl0itfinder Keep up the competition. Performance per dollar is the name of the game, and the consumers are thriving in it right now.

alterecho I want to see cooler as efficient as the 780 ti, on the 290X, and the benchmarks be run again. Something tells me 290X will perform similar or greater than 780ti, in that situation.

ohim Price vs way too few more fps than the rival will say a lot no matter who gets the crown, but can`t wonder to imagine the look on the face of the guys who got Titans for only few months of "fps supremacy" at insane price tags :)

bjaminnyc 2x R9 290's for $100 more will destroy the 780Ti. I don't really see where this logically fits in a competitively priced environment. Nice card, silly price point.

Innocent_Bystander-1312890 "Hawaii-based boards delivering frame rates separated by double-digit percentages, the real point is that this behavior is designed into the Radeon R9 290X."

It could also come down to production variance between the chips. Seen in before in manufacturing and it's not pretty. Sounds like we're starting to hit the ceiling with these GPUs... Makes me wonder what architectural magic they'll come up with next.

IB

Deus Gladiorum I'm going to build a rig for a friend and was planning on getting him the R9 290, but after the R9 290 review I'm quite hesitant. How can we know how the retail version of that card performs? Any chance you guys could pick one up and test it out? Furthermore, how can we know Nvidia isn't pulling the same trick: i.e. giving a press card that performs way above the retail version?