Tech Industry

Latest about Tech Industry

-

-

Anthropic in early talks to buy DRAM-less AI inference chips from UK startupBy Luke James Published

Anthropic in early talks to buy DRAM-less AI inference chips from UK startupBy Luke James Published -

Chinese court rules companies can't fire workers just because AI is cheaperBy Etiido Uko Published

Chinese court rules companies can't fire workers just because AI is cheaperBy Etiido Uko Published -

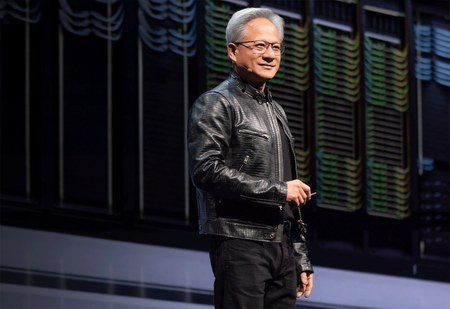

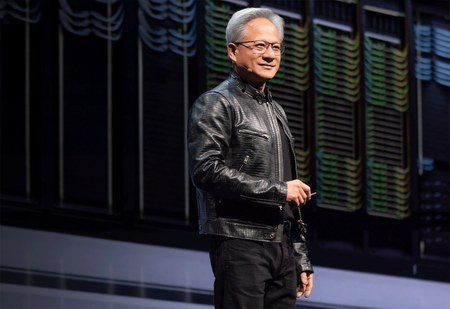

Jensen says Nvidia now has 'zero percent' market share in China — says US export policy 'has already largely backfired'By Anton Shilov Published

Jensen says Nvidia now has 'zero percent' market share in China — says US export policy 'has already largely backfired'By Anton Shilov Published -

Japan is deploying ultra-cheap cardboard drones built for swarm warfare and expendable combat missionsBy Etiido Uko Published

Japan is deploying ultra-cheap cardboard drones built for swarm warfare and expendable combat missionsBy Etiido Uko Published -

FCC votes to ban all Chinese labs from certifying electronics sold in the US due to national security concernsBy Luke James Published

FCC votes to ban all Chinese labs from certifying electronics sold in the US due to national security concernsBy Luke James Published -

US Navy signs deal with AI firm for training underwater drones to detect mines in Strait of HormuzBy Jowi Morales Published

US Navy signs deal with AI firm for training underwater drones to detect mines in Strait of HormuzBy Jowi Morales Published -

Pentagon budget reveals it's pursuing containerized 300kW+ laser weapons to shoot down cruise missilesBy Etiido Uko Last updated

Pentagon budget reveals it's pursuing containerized 300kW+ laser weapons to shoot down cruise missilesBy Etiido Uko Last updated

-

Explore Tech Industry

Artificial Intelligence

-

-

Anthropic in early talks to buy DRAM-less AI inference chips from UK startupBy Luke James Published

Anthropic in early talks to buy DRAM-less AI inference chips from UK startupBy Luke James Published -

Chinese court rules companies can't fire workers just because AI is cheaperBy Etiido Uko Published

Chinese court rules companies can't fire workers just because AI is cheaperBy Etiido Uko Published -

Jensen says Nvidia now has 'zero percent' market share in China — says US export policy 'has already largely backfired'By Anton Shilov Published

Jensen says Nvidia now has 'zero percent' market share in China — says US export policy 'has already largely backfired'By Anton Shilov Published -

US Navy signs deal with AI firm for training underwater drones to detect mines in Strait of HormuzBy Jowi Morales Published

US Navy signs deal with AI firm for training underwater drones to detect mines in Strait of HormuzBy Jowi Morales Published -

The Pentagon announces AI deals with OpenAI, Google, Microsoft, Amazon, Nvidia, and moreBy Jowi Morales Published

The Pentagon announces AI deals with OpenAI, Google, Microsoft, Amazon, Nvidia, and moreBy Jowi Morales Published -

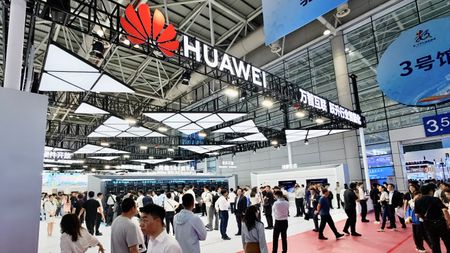

Huawei could seize China’s AI chip crown in 2026 as Nvidia’s H200 shipments stall in regulatory limboBy Etiido Uko Published

Huawei could seize China’s AI chip crown in 2026 as Nvidia’s H200 shipments stall in regulatory limboBy Etiido Uko Published -

PremiumTalent over tokens: AI models are becoming more expensive to run, and productivity gains are limitedBy Jon Martindale Published

PremiumTalent over tokens: AI models are becoming more expensive to run, and productivity gains are limitedBy Jon Martindale Published -

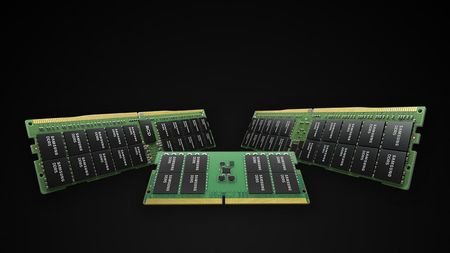

Samsung and SK hynix warn AI-driven memory shortages could last until 2027 and beyond, as HBM demand explodesBy Etiido Uko Published

Samsung and SK hynix warn AI-driven memory shortages could last until 2027 and beyond, as HBM demand explodesBy Etiido Uko Published -

Victim of AI agent that deleted company's entire database gets their data backBy Mark Tyson Published

Victim of AI agent that deleted company's entire database gets their data backBy Mark Tyson Published

-

Big Tech

Premium: Microsoft attributes $25 billion of AI budget to memory and chip costs (By Luke James)

Mark Zuckerberg says Meta is cutting 8,000 jobs to pay for AI infrastructure (By Luke James)

Google, Microsoft, Meta, and Amazon capex spending to hit $725 billion in 2026, up 77% from last year (By Luke James)

Princeton researchers combine living brain cells and advanced electronics to perform computational tasks (By Etiido Uko)

Union rally causes Samsung fab production to plummet by 58% during night shift as workers demand up to $400,000 bonuses (By Jowi Morales)

More than 30,000 Samsung union members take to the streets to demand higher cut of AI profits, an average of $400,000 per worker (By Jowi Morales)

Microsoft facing $2.8 billion UK lawsuit for overcharging 60,000 businesses using Microsoft Server on other clouds (By Jowi Morales)

Scientists solve decades-old 2D physics puzzle (By Etiido Uko)

Oklahoma farmer arrested and jailed for trespassing during AI data center town hall (By Jowi Morales)

Cryptocurrency

-

-

Tennessee bans crypto ATMs that have become 'payment portal of choice for scammers'By Jowi Morales Published

Tennessee bans crypto ATMs that have become 'payment portal of choice for scammers'By Jowi Morales Published -

Crypto scam takes advantage of Strait of Hormuz crisis by taking fake payments, leading to two ships being fired uponBy Jowi Morales Published

Crypto scam takes advantage of Strait of Hormuz crisis by taking fake payments, leading to two ships being fired uponBy Jowi Morales Published -

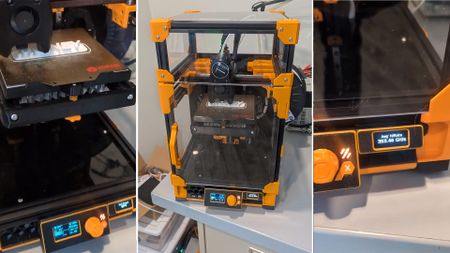

Inventor showcases 3D printer filament dryer that mines Bitcoins and dries filament with waste heat, capable of 6 TH/sBy Mark Tyson Published

Inventor showcases 3D printer filament dryer that mines Bitcoins and dries filament with waste heat, capable of 6 TH/sBy Mark Tyson Published -

British cryptographer Adam Back is the secret creator of Bitcoin, claims new reportBy Hassam Nasir Published

British cryptographer Adam Back is the secret creator of Bitcoin, claims new reportBy Hassam Nasir Published -

Crypto platform Drift suffers from hack suspected to total $270 millionBy Jowi Morales Published

Crypto platform Drift suffers from hack suspected to total $270 millionBy Jowi Morales Published -

Hacker charged for stealing $53 million in crypto, faces up to 30 years in prisonBy Jowi Morales Published

Hacker charged for stealing $53 million in crypto, faces up to 30 years in prisonBy Jowi Morales Published -

Iran conflict spurs Bitcoin mining operators to pivot to AI infrastructureBy Bruno Ferreira Published

Iran conflict spurs Bitcoin mining operators to pivot to AI infrastructureBy Bruno Ferreira Published -

Bitcoin is so resilient it could survive as much as 90% of the world's undersea cables failing simultaneouslyBy Mark Tyson Published

Bitcoin is so resilient it could survive as much as 90% of the world's undersea cables failing simultaneouslyBy Mark Tyson Published -

3D printer that can mine Bitcoin uses excess heat for temperature controlBy Mark Tyson Published

3D printer that can mine Bitcoin uses excess heat for temperature controlBy Mark Tyson Published

-

Cybersecurity

Canonical under sustained DDoS attack as Ubuntu 26 releases (By Bruno Ferreira)

Zero-day exploit instantly grants administrator access on most Linux distributions since 2017 (By Bruno Ferreira)

Ransomware accidentally destroys all files larger than 128KB, preventing decryption (By Jowi Morales)

The Chernobyl virus turned 27 today, and it could brick your PC in ways that modern malware can't (By Luke James)

Mobile SMS blasters in vehicles prowled Canadian streets, causing 13 million network disruptions and infiltrating tens of thousands of devices (By Jowi Morales)

Premium: How a cavalcade of blunders gave unauthorized users access to Claude Mythos (By Jon Martindale)

UK spy agency releases malware-blocking gadget for HDMI and DisplayPort cables (By Jowi Morales)

Ransomware negotiator pleads guilty after leaking victims' insurance details to 'BlackCat' hackers (By Luke James)

Iran claims US exploited networking equipment backdoors during strikes (By Luke James)

Manufacturing

-

-

PremiumASML's roadmap for chipmaking lithography tools examined — from DUV to Low-NA, High-NA, Hyper-NA, and beyondBy Luke James Published

PremiumASML's roadmap for chipmaking lithography tools examined — from DUV to Low-NA, High-NA, Hyper-NA, and beyondBy Luke James Published -

Intel details 18A-P process node, touts higher performance, lower power, and much moreBy Anton Shilov Published

Intel details 18A-P process node, touts higher performance, lower power, and much moreBy Anton Shilov Published -

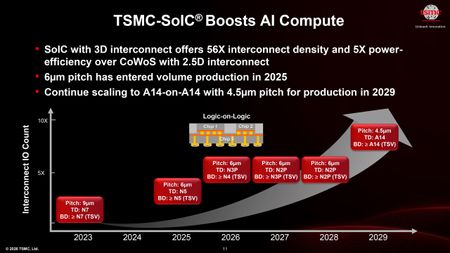

PremiumTSMC SoIC 3D stacking roadmap outlines path from 6-micron pitches today to 4.5-micron in 2029By Anton Shilov Published

PremiumTSMC SoIC 3D stacking roadmap outlines path from 6-micron pitches today to 4.5-micron in 2029By Anton Shilov Published -

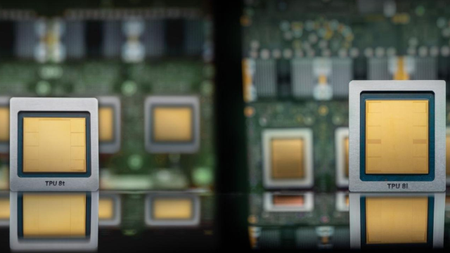

PremiumInside Google's TPU V8 strategy, delivering two chips for two crucial tasks at incredible scaleBy Luke James Published

PremiumInside Google's TPU V8 strategy, delivering two chips for two crucial tasks at incredible scaleBy Luke James Published -

PremiumTSMC's details next-gen CoWoS roadmap: over 14-reticle packages and 48x leap in compute power expected by 2029By Anton Shilov Published

PremiumTSMC's details next-gen CoWoS roadmap: over 14-reticle packages and 48x leap in compute power expected by 2029By Anton Shilov Published -

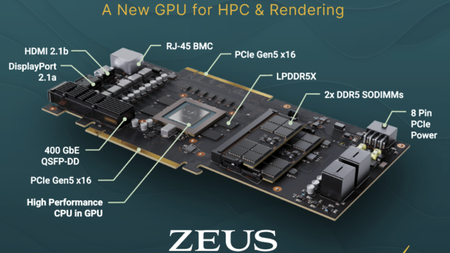

Bolt Graphics tapes out its first Zeus GPU test chip on TSMC 12nmBy Luke James Published

Bolt Graphics tapes out its first Zeus GPU test chip on TSMC 12nmBy Luke James Published -

Elon Musk says TeraFab will use Intel's 14A process technology to make AI chipsBy Anton Shilov Published

Elon Musk says TeraFab will use Intel's 14A process technology to make AI chipsBy Anton Shilov Published -

TSMC unveils process technology roadmap through 2029 — A12, A13, N2U announced, A16 slips to 2027By Anton Shilov Published

TSMC unveils process technology roadmap through 2029 — A12, A13, N2U announced, A16 slips to 2027By Anton Shilov Published -

PremiumCongress moves to strip the DoC of chip-export discretion with the MATCH ActBy Luke James Published

PremiumCongress moves to strip the DoC of chip-export discretion with the MATCH ActBy Luke James Published

-

Quantum Computing

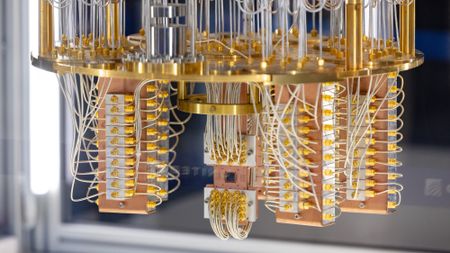

Premium: Quantum photonics roadmap — how Xanadu and PsiQuantum are looking to transfer qubits through beams of light (By Francisco Pires)

Premium: The future of Quantum computing — the tech, companies, and roadmaps that map out a coherent quantum future (By Francisco Pires)

Quantum computing firm dangles $22,500 Bitcoin prize (By Mark Tyson)

Premium: IBM and Cisco agree to lay the foundations for a quantum internet (By Luke James)

New Chinese optical quantum chip allegedly 1,000x faster than Nvidia GPUs for processing AI workloads (By Aaron Klotz)

IBM's boffins run a nifty quantum error-correction algorithm on conventional AMD FPGAs (By Bruno Ferreira)

Trump administration to follow up Intel stake with investment in quantum computing, report claims (By Anton Shilov)

Google's Quantum Echo algorithm shows world's first practical application of Quantum Computing — Willow 105-qubit chip runs algorithm 13,000x faster than a supercomputer (By Bruno Ferreira)

Harvard researchers hail quantum computing breakthrough with a machine that can run for two hours (By Jowi Morales)

Supercomputers

Elon Musk restarts Dojo3 'space' supercomputer project as AI5 chip design gets in 'good shape' (By Aaron Klotz)

AMD and Eviden unveil Europe's second exascale system (By Anton Shilov)

Nvidia to build seven AI supercomputers for the U.S. gov't with over 100,000 Blackwell GPUs (By Anton Shilov)

Nvidia unveils Vera Rubin supercomputers for Los Alamos National Laboratory (By Anton Shilov)

U.S. Department of Energy and AMD cut a $1 billion deal for two AI supercomputers (By Bruno Ferreira)

China's supercomputer breakthrough uses 37 million processor cores to model complex quantum chemistry at molecular scale (By Anton Shilov)

Start-up hails world's first quantum computer made from standard silicon (By Luke James)

Nvidia GPUs and Fujitsu Arm CPUs will power Japan's next $750M zetta-scale supercomputer (By Hassam Nasir)

AMD's massive GPU VRAM on its Instinct cards has broken Linux's hibernation feature (By Hassam Nasir)

Superconductors

-

-

New 3D printing process could improve superconductorsBy Ash Hill Published

New 3D printing process could improve superconductorsBy Ash Hill Published -

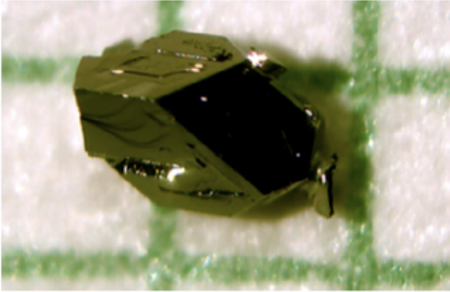

New research shows naturally occurring mineral is an 'unconventional superconductor' when purifiedBy Christopher Harper Published

New research shows naturally occurring mineral is an 'unconventional superconductor' when purifiedBy Christopher Harper Published -

New research reignites the possibility of LK-99 room-temperature superconductivityBy Francisco Pires Published

New research reignites the possibility of LK-99 room-temperature superconductivityBy Francisco Pires Published -

U.S. Govt and researchers seemingly discover new type of superconductivity in an exotic, crystal-like materialBy Francisco Pires Published

U.S. Govt and researchers seemingly discover new type of superconductivity in an exotic, crystal-like materialBy Francisco Pires Published -

Nature Retracts Controversial Room Temperature Superconductor Paper (But Not LK-99)By Francisco Pires Published

Nature Retracts Controversial Room Temperature Superconductor Paper (But Not LK-99)By Francisco Pires Published -

What is a Superconductor?By Francisco Pires Published

What is a Superconductor?By Francisco Pires Published -

MIT's Superconducting Qubit Breakthrough Boosts Quantum PerformanceBy Francisco Pires Published

MIT's Superconducting Qubit Breakthrough Boosts Quantum PerformanceBy Francisco Pires Published -

LK-99 Research Continues, Paper Says Superconductivity Could be PossibleBy Francisco Pires Published

LK-99 Research Continues, Paper Says Superconductivity Could be PossibleBy Francisco Pires Published -

Is LK-99 a Superconductor After All? New Research and Updated Patent Say SoBy Francisco Pires Published

Is LK-99 a Superconductor After All? New Research and Updated Patent Say SoBy Francisco Pires Published

-

More about Tech Industry

Pentagon budget reveals it's pursuing containerized 300kW+ laser weapons to shoot down cruise missiles (By Etiido Uko)

Canonical under sustained DDoS attack as Ubuntu 26 releases (By Bruno Ferreira)

The Pentagon announces AI deals with OpenAI, Google, Microsoft, Amazon, Nvidia, and more (By Jowi Morales)