Intel Developer Forum, Day Two

SSDs And Random Notes

SSDs Really are Better for Gaming

One of the cooler sessions we attended was about SSDs.

Anyone who’s used an SSD knows that the operating system and large apps (like games) load much more quickly from a solid state drive than from rotating media. It turns out that modern games can actually run better, too.

You won’t see that reflected in average frame rate numbers, however.

Modern game engines constantly stream data off the hard drive or optical drive during game play. Some level data is preloaded, but today’s games are far too large for levels to be completely loaded into system memory, even on Windows 7 64-bit with four or six gigabytes of RAM. So, the game is constantly making a best guess as to what data to stream to main memory or GPU VRAM in order to maintain smooth frame rates.

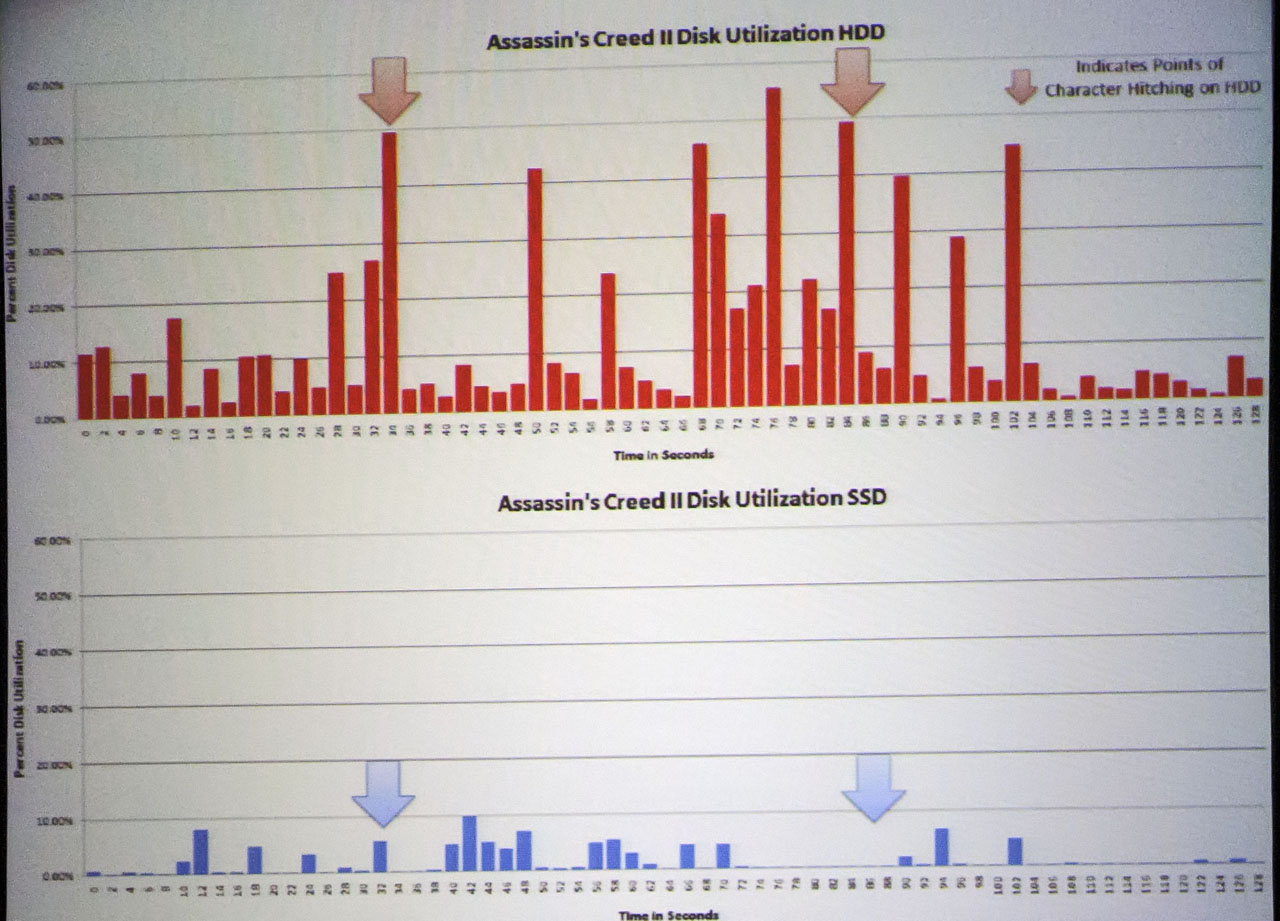

Intel noticed that, during game play, the game would sometimes freeze for a moment, for example when the main character in Assassin’s Creed II was running through a level. The game had made a bad bet on which data to load, then had to recover. Intel calls these momentary pauses “hitching.”

Intel recorded uncompressed, full-resolution video of game play and compared it against recorded hard drive load traces. The “hitches” lined up with large amounts of data being loaded in a short period of time, as the game engine recovered from a bad guess.
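To see why a hitch can hide behind a healthy average frame rate, here is a minimal Python sketch. It is not Intel's tooling: the frame times, the I/O burst timestamps, the 5x-median threshold, and the 100 ms matching window are all made-up values for illustration. It computes the average frame rate for a smooth capture and for one with a single one-second stall, then checks whether the stall lines up with a disk load burst.

```python
from statistics import median

def average_fps(frame_times_ms):
    """Average frame rate over the whole capture, in frames per second."""
    return 1000.0 * len(frame_times_ms) / sum(frame_times_ms)

def find_hitches(frame_times_ms, factor=5.0):
    """Return (start_ms, duration_ms) for frames far longer than the median.

    The 5x-median cutoff is an arbitrary choice for this sketch.
    """
    threshold = factor * median(frame_times_ms)
    hitches, clock = [], 0.0
    for frame_time in frame_times_ms:
        if frame_time > threshold:
            hitches.append((clock, frame_time))
        clock += frame_time
    return hitches

def hitches_with_io(hitches, io_bursts_ms, window_ms=100.0):
    """Keep only hitches that start within window_ms of an I/O burst."""
    return [h for h in hitches
            if any(abs(h[0] - t) <= window_ms for t in io_bursts_ms)]

# Hypothetical captures: about one minute at roughly 60 frames per second.
smooth = [16.7] * 3600
hitchy = [16.7] * 1800 + [1000.0] + [16.7] * 1799  # one 1-second stall

# Hypothetical disk trace: one large load burst about 30 seconds in.
io_bursts_ms = [30050.0]

hitches = find_hitches(hitchy)
matched = hitches_with_io(hitches, io_bursts_ms)

print(f"smooth average: {average_fps(smooth):.1f} fps")
print(f"hitchy average: {average_fps(hitchy):.1f} fps")
print(f"hitches found: {len(hitches)}, matching an I/O burst: {len(matched)}")
```

With these made-up numbers the two averages differ by about one frame per second, even though the second capture contains a full one-second freeze. Looking at individual frame times, rather than the average, is what exposes the stall.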


When the hard drive is replaced by an SSD, the hitching vanishes. This is reflected in the massive decrease in instantaneous load times as seen in the trace chart for the game.

As it turns out, SSDs actually improve the game experience in a visible way, beyond just loading the title and its associated levels faster.

By the way, faster level load times, the more obvious way SSDs improve game performance, turn out to be a competitive advantage in games like StarCraft II. When the game starts, it synchronizes all of the players so they start at the same time. However, if you’re using an SSD, you actually get a little head start, because the "unfortunate folks" with mere 10,000 RPM hard drives (hah!) are still finishing the level load.

Random Notes

We did spend some time wandering around the show floor. The emphasis on mobility was apparent everywhere, as we noted earlier. One example is this small herd of Atom-based tablets under development. The success of the iPad has created a stampede of small tablets, and it remains to be seen who will survive and who will fall by the wayside.

Intel was demonstrating “Wi-Di” in a number of locations. This is an enhanced version of Intel’s wireless display technology, which shipped earlier this year from Netgear and several laptop PC suppliers (we covered this technology in-depth in Intel Wireless Display: From Your Notebook To The Big Screen). The concept is pretty cool: share your laptop screen on your HDTV without connecting wires, rather than people having to crowd around to watch the small screen.

USB 3.0 is finally gaining major traction. DisplayLink was showing off products built with the USB 3.0 version of its display chip. This allows laptop users to connect to a docking station via SuperSpeed USB and use larger displays. You still won’t get good 3D frame rates, but USB 3.0 should allow for the use of larger displays while maintaining mouse and keyboard responsiveness.

We were disappointed that the one Light Peak technical session was canceled. But a number of demonstrations of Light Peak, Intel’s optical interconnect that may one day succeed USB 3.0, were evident. This one is showing video streaming off a hard drive using Light Peak optical connections.

Finally, we’ll close our Day Two report with this last image. Intel's Developer Forum has always been about the future, and today’s children represent the future of our world and of technology. Intel invited some local students to come in and try out Classmate PCs, Intel’s low-cost educational laptop. Hey, if someone had taken us on a field trip when we were kids and shown off a tiny computer for use in the classroom, we would have been enthralled, too.


rwmunchkin12788: I think the article is right on the money when it says the Sandy Bridge based laptops will be marketed as premium mobile PC's, while the AMD Zacate will be cheaper.

I personally think AMD will win out in the cheap laptop with integrated graphics battle. AMD just has a great chance to put a big dent in this market in their favor.

lamorpa: It is very sad to see half of the 'local students' trying out the Classmate PCs are overweight or obese. There may be some more fundamental programs these kids need, like healthy living.

azcoyote: I like the Boxee idea but seriously... Where the &%$# is anyone gonna fit a device shaped like that?

insightdriver: @azcoyote, based on looking at the rear side of the box, I gather it sits flat, albeit skewed, but is small enough that fitting it on a shelf won't be a problem. It's just "out of the box" in shape, so to speak. :-)

kilthas_th: You'll want to take a look at Anandtech's updated notes on the Zacate chip. It appears that the "psychedelic" benchmark wins were due to the OEM driver for the Intel GPU. When updated, they scored basically the same. However, Zacate still trounced it in CoH and also Arkham Asylum.

dowsire: "Speaking of graphics, AMD’s Dave Hoff also alluded to its next-generation graphics, code named Northern Islands. Little actual data was forthcoming, but the magic eight-ball says “sooner than you think.”"

What? I thought the new AMD HD 6800s were going to be southern island. Is northern island actually coming out earlier, like 1H11, or in the beginning of 2H11, the same time that Bulldozer and AM3+ come out?

2nd, why is the "cpu" so important in fusion chips? I think that the AMD version, priced as mainstream, will be better than the sandy bridge. More and more software is being offloaded to the GPU; even MS is making software run from the gpu, i.e. internet explorer 9.

jimmysmitty, quoting vns: "Good coverage, but is it justified to bring in "zacate" to IDF?"

Why not? IDF is not just for Intel. Sure, Intel started it and is the main attraction, but it's for developers everywhere to show stuff off.

I like it that way. Lets Intel and AMD see what's coming.