Facebook Uses AI To Fight Malicious Link 'Cloaking'

Facebook announced that it's using AI to decloak malicious content that's masquerading as legitimate advertisements or web pages. These efforts are supposed to help the company make sure bad actors can't evade its reviewers' gazes to mislead or harm the billion-plus people who use its service.

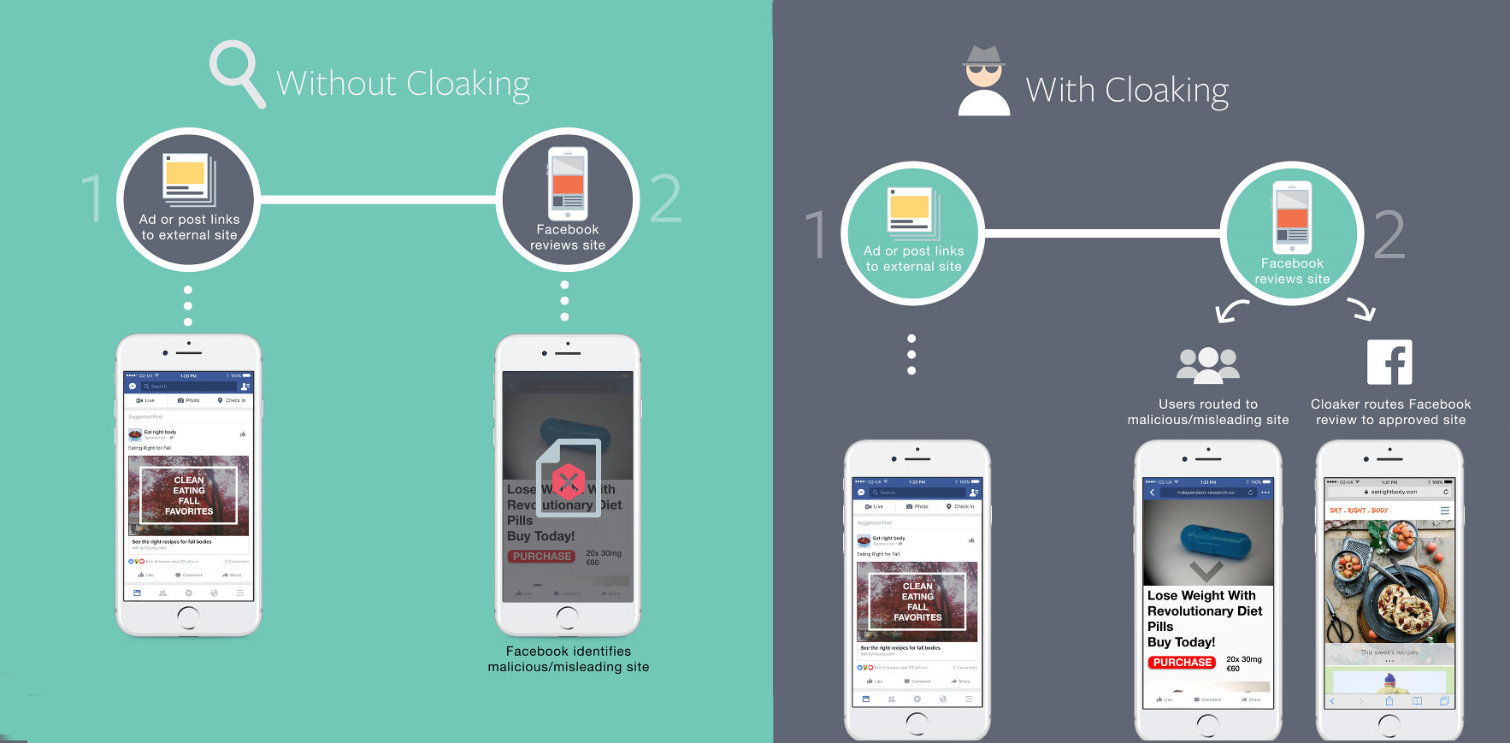

Cloaking works by showing Facebook reviewers one thing and displaying another to Facebook end users. The company provided an example in which a link took its reviewers to a recipe site, which doesn't violate its advertising policies or community guidelines, while sending its users to a page for "revolutionary diet pills." Those sites, as well as those for porn, muscle-building scams, and other malicious content, do violate Facebook's rules.

This creates a problem for Facebook. The company is expected to halt bad actors in their tracks, but it's hard to do that if those tracks are carefully covered. That's why Facebook decided to see behind the invisibility cloak, as it were, and to work with other companies to "find new ways to combat it and punish bad actors." In a blog post, Facebook explained how it improved its processes and how well those improvements have worked:

We are utilizing artificial intelligence and have expanded our human review processes to help us identify, capture, and verify cloaking. We can now better observe differences in the type of content served to people using our apps compared to our own internal systems. [...] In the past few months these new steps have resulted in us taking down thousands of these offenders and disrupting their economic incentives for misleading people.
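Facebook didn't share how its detection actually works, but the basic idea it describes, comparing what reviewers are served with what ordinary users are served, can be illustrated with a toy sketch. Everything here is an assumption made for illustration: the function names, the word-overlap (Jaccard) measure, the 0.5 threshold, and the sample page text are all hypothetical and are not drawn from Facebook's systems.

```python
import re


def tokens(text: str) -> set:
    """Return the set of lowercase words found in a page's visible text."""
    return set(re.findall(r"[a-z0-9']+", text.lower()))


def jaccard(a: str, b: str) -> float:
    """Word-set overlap between two texts: 1.0 is identical, 0.0 is disjoint."""
    ta, tb = tokens(a), tokens(b)
    if not ta and not tb:
        return 1.0  # two empty pages are treated as identical
    return len(ta & tb) / len(ta | tb)


def looks_cloaked(reviewer_view: str, user_view: str, threshold: float = 0.5) -> bool:
    """Flag a link when the two views share too little text.

    The threshold is an arbitrary illustrative value, not a tuned one.
    """
    return jaccard(reviewer_view, user_view) < threshold


# Hypothetical page text, loosely modeled on the recipe/diet-pill example above.
reviewer_page = "Easy weeknight recipe: roasted vegetables with garlic and herbs"
user_page = "Revolutionary diet pills! Lose weight fast with this one trick"

print(looks_cloaked(reviewer_page, user_page))      # very different views
print(looks_cloaked(reviewer_page, reviewer_page))  # identical views
```

A real system would have far more to contend with than this, including pages that legitimately vary by region, device, or login state, which is presumably why Facebook pairs its AI with expanded human review.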

Facebook said none of these changes should affect legitimate organizations' efforts to advertise or share content to its service. The company also said it sees cloaking "as deliberate and deceptive" and that it "will not tolerate it on Facebook." Pages caught engaging in these bad practices will be removed from the site. (They'll probably try to return, as spammers and scammers are wont to do, but at least they'll be stopped for a while.)

Enforcing platform rules has become increasingly tricky in recent years. Just consider how Uber reportedly hid its fingerprinting of iPhones from Apple, which doesn't allow companies to install persistent identifiers on iOS devices. The company is said to have set up a geofence around Apple's headquarters. Inside the fence, the app didn't fingerprint devices, so Apple reviewers were none the wiser. Outside, the fingerprinting was a go.

Others are bound to employ similar tactics. As long as there are rules governing potentially lucrative platforms like Facebook and iOS, someone will attempt to circumvent them using whatever tools are at their disposal. Now, at least, it seems companies are stepping up their efforts to catch these rule-breakers, whether it's by making it harder for scammers to sell weight loss pills or by warning developers not to trifle with a platform's security team.


Nathaniel Mott is a freelance news and features writer for Tom's Hardware US, covering breaking news, security, and the silliest aspects of the tech industry.


Kennyy Evony: It won't. Have you been able to remove all cr@p on toms hardware site so its actually enjoyable to read articles? or are you plagued with them each time you visit the site because every day they find ways to fk you over?

virtualban: Downloading and sharing is jailable and they can track and find the guilty party despite most of the world urging 'authorities' not to.

Scamming and infecting can be chased to these levels, and, despite most of the world wishing authorities to chase scammers, they are still there and we are not hearing the many year sentences of them getting caught.

Well, #FUR them!!

#FractalUniverseRevolution #FUR - when our civilization becomes obsessed with fractals in search of familiar physics in them, and programs to render a slice of the universe are perfected.

RomeoReject: I know Facebook takes a lot of crap - rightfully so. But this seems like a decent move on their part. Hopefully it spreads throughout the web.