AMD’s Bulldozer And Bobcat Architectures Pave The Way

Ahead of its most significant processor redesign since 2003, AMD is talking about its Bulldozer and Bobcat architectures, both of which are expected in 2011. Will AMD be able to catch up to Intel, or even surpass it? The future looks interesting, indeed.

More On Bulldozer

In reality, much of what AMD is discussing at Hot Chips is already known, which makes for a much-regurgitated slide deck covering the Bulldozer and Bobcat architectures.

Much of the company’s emphasis is on Bulldozer and its approach to threading. AMD draws a clear distinction between conventional simultaneous multi-threading (SMT, productized by Intel as Hyper-Threading) and chip-level multi-processing (CMP), where each core operates on one thread, as in the six-core Thuban design.

CMP is pretty straightforward: you replicate physical cores to scale performance in threaded software. It’s a brute-force approach that yields the best performance, but it becomes very expensive for a manufacturer bumping up against the limits of its process technology, especially if execution resources are left idle. This is exactly why we often recommend fast quad-core processors over slower six-core CPUs for gaming. Unless your workload is properly optimized for parallelism, CMP results in over-provisioning, and the higher clock rates of less-complex dual- and quad-core designs yield better performance.

Intel combats this with Hyper-Threading, which allows each physical core to work on two threads. Over-provisioning is assumed, meaning you rely on under-utilization to extract additional performance from each core. This is a relatively inexpensive technology. But it’s also quite limited in the benefits it offers. Some workloads don’t see any speed-up from Hyper-Threading. Others barely crack double-digit performance gains.

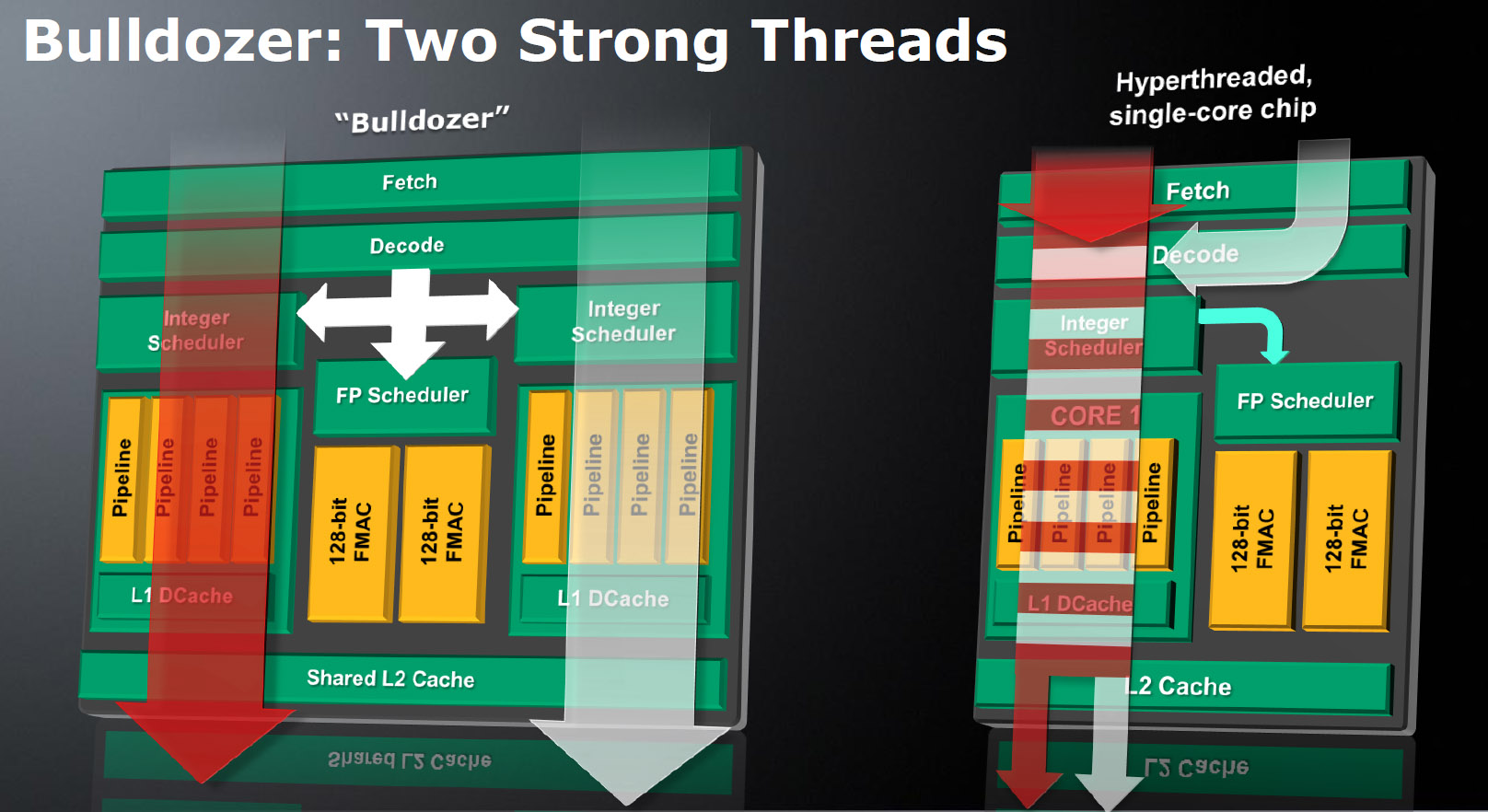

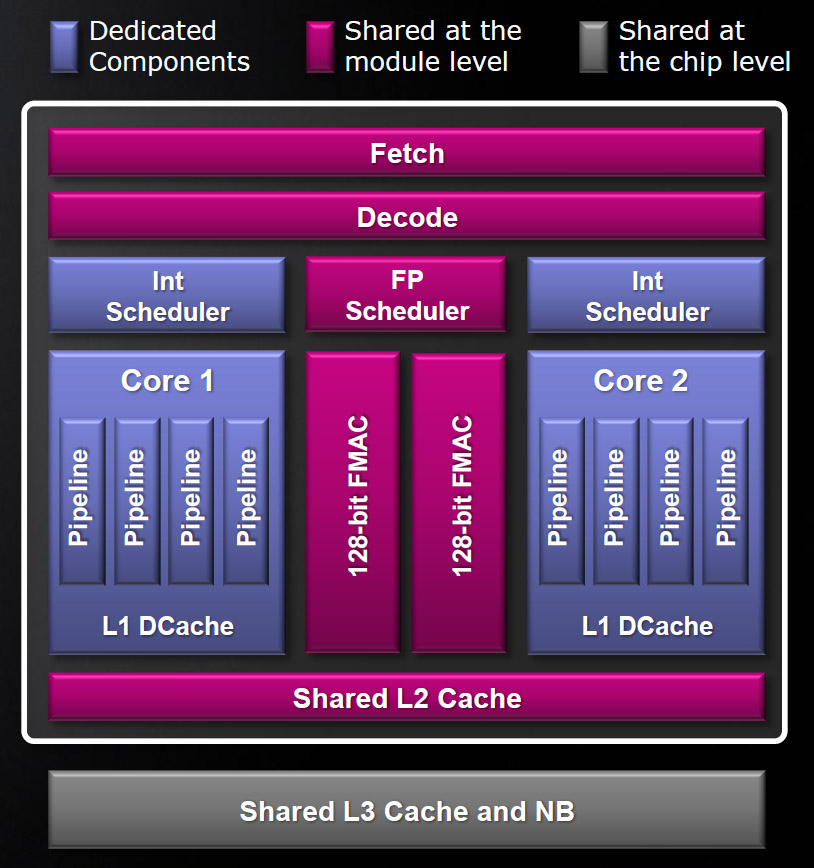

AMD is trying to define a third approach to threading it calls Two Strong Threads. Whereas Hyper-Threading only duplicates architectural states, the Bulldozer design shares the front-end (fetch/decode) and back-end of the core (through a shared L2 cache), but duplicates integer schedulers and execution pipelines, offering dedicated hardware to each of two threads.

The pair of threads share a floating point scheduler with two 128-bit fused multiply-accumulate-capable units. Consequently, it’s clear that AMD’s emphasis here is integer performance, which makes sense given the company’s Fusion initiative and impending plan to have GPU resources handle floating-point work. Just bear in mind that the first Bulldozer-powered processors won’t be APUs. Despite the fact that it is sharing FP resources here, AMD remains confident in its balance between dedicated and shared components.

None of that is new, though. AMD talked about it all back in November of last year.


Ahead of the Hot Chips presentation, we had the opportunity to refresh what we knew about Bulldozer with Dina McKinney, corporate vice president of design engineering at AMD. According to McKinney, the company’s Two Strong Threads approach achieves somewhere in the neighborhood of 80% of the performance you’d see from simply replicating cores. At the same time, sharing some resources helps cut back on power use and die space.

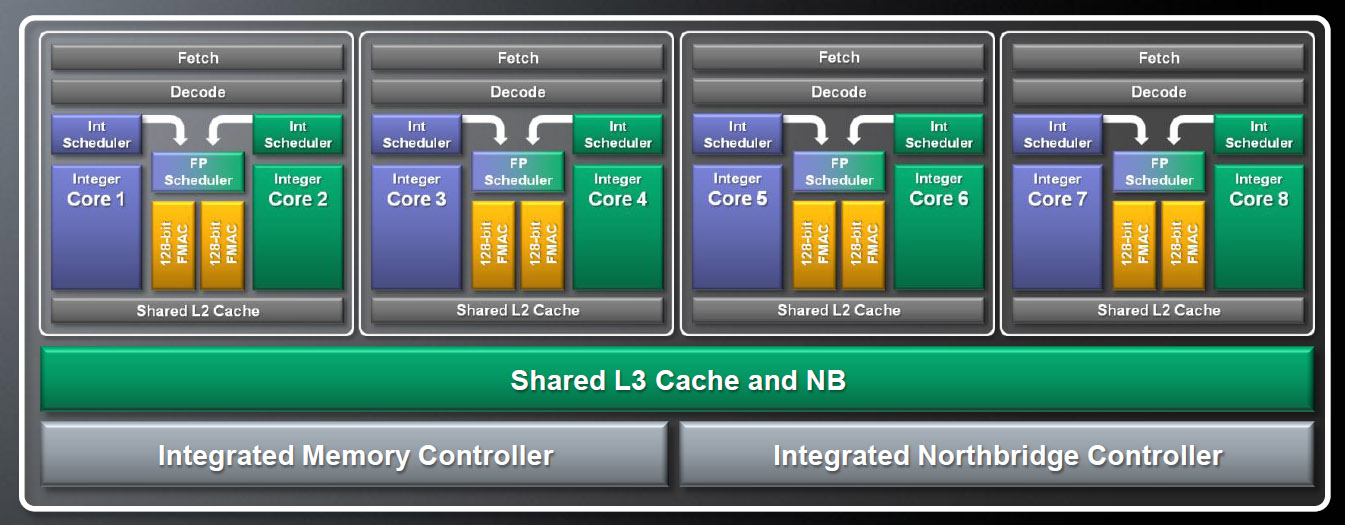

This development, along with a shift to 32 nm SOI manufacturing, is leading AMD to estimate a 33% increase in core count and a 50% increase in throughput (suggesting significant IPC gains) in the same power envelope as Magny-Cours-based Opteron processors. The projections here are based on simulated comparisons between today’s 12-core Opteron 6100-series chips and upcoming 16-core Bulldozer-based models, currently code-named Interlagos.
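To see what those two percentages imply together, here is a quick back-of-the-envelope calculation. It uses only the figures quoted above (12 cores, 16 cores, 50% more throughput) and assumes throughput scales linearly with core count, which is a simplification on our part rather than anything AMD has stated. The leftover per-core gain could come from IPC, clock rate, or both.

```python
# Figures quoted in the article; everything derived from them is plain arithmetic.
magny_cours_cores = 12   # today's Opteron 6100-series
interlagos_cores = 16    # upcoming Bulldozer-based Interlagos
throughput_gain = 0.50   # AMD's simulated estimate

# Relative increase in core count (16 / 12 - 1).
core_count_gain = interlagos_cores / magny_cours_cores - 1

# Assumption: throughput scales linearly with cores, so whatever is left over
# after accounting for the extra cores is a per-core improvement.
per_core_gain = (1 + throughput_gain) / (1 + core_count_gain) - 1

print(f"core count gain: {core_count_gain:.1%}")
print(f"implied per-core throughput gain: {per_core_gain:.1%}")
```

Under that linear-scaling assumption, the implied per-core improvement works out to roughly one-eighth.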

Now, one of the concerns I’ve seen brought up regarding AMD’s taxonomy is that a Bulldozer module looks like an SMT-enabled single-core processor. Only, instead of duplicating registers to store the architectural state, AMD gives each thread its own instruction window and dedicated pipelines. In talking back and forth with AMD’s John Fruehe, it’s clear that the company thinks that the duplication of integer schedulers and corresponding pipelines (disregarding the other shared components) makes each Bulldozer module a dual-core design, distinguishing it from SMT as it’s associated with Hyper-Threading. That gets a little marketing-heavy for me, but I can certainly respect that we’re looking at an architecture that’ll do much more for performance than Hyper-Threading in parallelized workloads.

I was also curious how Bulldozer modules are expected to interact with Windows 7. Intel and Microsoft put a deliberate effort into optimizing for Hyper-Threading. The operating system’s scheduler knows the difference between a physical core and a Hyper-Threaded core. If it has two threads to schedule, Windows 7 and Server 2008 R2 use two physical cores. The alternative—scheduling two threads to the same physical, Hyper-Threaded core—would naturally sacrifice performance. Because Bulldozer modules are still sharing resources, it’d stand to reason that a four-module Zambezi CPU would be best served by similarly handling two threads using different modules. Though AMD wasn’t able to address how it’ll handle this interaction, it assures me that it’s working with OS vendors on optimizations that’ll be ready for Bulldozer’s release.
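The placement policy we are describing can be sketched in a few lines. This is purely illustrative: `place_threads` is a hypothetical helper, not the Windows scheduler or anything AMD has shown us, and the four-module, two-cores-per-module layout is simply the Zambezi configuration mentioned above.

```python
def place_threads(num_threads, num_modules=4, cores_per_module=2):
    """Return one (module, core) slot per thread, spreading across modules first.

    Hypothetical policy: a module's second integer core is only used once
    every module already has one thread running on it.
    """
    capacity = num_modules * cores_per_module
    if num_threads > capacity:
        raise ValueError("more threads than integer cores")

    placements = []
    for t in range(num_threads):
        module = t % num_modules   # walk across the modules first
        core = t // num_modules    # move to the next core only after a full pass
        placements.append((module, core))
    return placements


print(place_threads(2))  # two threads land on two different modules
print(place_threads(5))  # the fifth thread is the first to share a module
```

With two threads, each one gets a module (and its shared front-end, FP unit, and L2) to itself, which is the behavior we would hope to see from an optimized OS.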

I also asked John about the front-end’s instruction/cycle capabilities and the shared L2’s capacity configuration, but neither of those details is available yet. What he could tell me was that the 128-bit FP units are symmetrical, and that, on any cycle, either integer core can dispatch a 256-bit AVX instruction (assuming software compiled to support AVX). Or, both integer cores can dispatch a single 128-bit instruction at the same time.
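A toy model helps make the dispatch rule concrete. It assumes only what AMD told us (two symmetrical 128-bit units per module) and treats a 256-bit AVX instruction as occupying both units for the cycle. The function names are ours, and real arbitration, latency, and scheduling details are not modeled.

```python
FMAC_UNITS = 2          # shared floating-point units per module
UNIT_WIDTH_BITS = 128   # width of each unit


def units_needed(width_bits):
    """Number of 128-bit units an instruction of the given width occupies."""
    return -(-width_bits // UNIT_WIDTH_BITS)  # ceiling division


def fits_in_one_cycle(widths):
    """True if the requested FP instructions (widths in bits) fit in one cycle."""
    return sum(units_needed(w) for w in widths) <= FMAC_UNITS


print(fits_in_one_cycle([256]))       # one core issues a 256-bit AVX instruction
print(fits_in_one_cycle([128, 128]))  # both cores issue a 128-bit instruction
print(fits_in_one_cycle([256, 128]))  # more than the two units can take at once
```

In this simplified view, the first two cases fit and the third does not, which matches the either/or behavior described above.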

In addition, John clarified how each integer unit’s pipelines are oriented. Whereas K10 enables three pipelines shared between ALUs and AGUs (effectively 1.5 of each), Bulldozer increases this number to four pipelines—two dedicated AGU and two dedicated ALU. The L1 cache configuration is a bit different, too. Whereas K10 offered 64 KB of L1 instruction and 64 KB of L1 data cache per core, Bulldozer enables 16 KB of L1 data cache per core and 64 KB of 2-way L1 instruction cache per module. It remains to be seen how the smaller L1 affects performance.
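To put the L1 change in perspective, the snippet below totals the L1 capacity available to two cores under each design, using only the sizes quoted above. Comparing one Bulldozer module against two K10 cores is our framing, and capacity alone says nothing about latency or associativity.

```python
# L1 sizes in KB, as quoted in the article.
K10_L1I_PER_CORE = 64
K10_L1D_PER_CORE = 64
BD_L1D_PER_CORE = 16
BD_L1I_PER_MODULE = 64   # shared by the module's two integer cores

CORES = 2  # compare one Bulldozer module against two K10 cores

k10_total = CORES * (K10_L1I_PER_CORE + K10_L1D_PER_CORE)
bulldozer_total = CORES * BD_L1D_PER_CORE + BD_L1I_PER_MODULE

print(f"Two K10 cores: {k10_total} KB of L1")
print(f"One Bulldozer module: {bulldozer_total} KB of L1")
```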

-

tacoslave, quoting dogman_1234: "No new news here, but I cannot wait until AMD releases "Bulldozer"! The thing that got me was the use of the cores. Really, all AMD needs now is a Commercial. Anybody?"

Put it in a mac, the sheeple will eat this Sh1t up.

-

buzznut: Thanks for the great article and valuable information. Someone scolded me for saying I wanted to wait for a bulldozer processor to upgrade. Thanks for clearing this up. Yes, Bulldozer is what I'm waiting for, prolly a Zamboni would be nice.

By the way, just who the hell comes up with these ridiculous names? I personally think manufacturers would sell more units if they weren't so confusing.

-

Judguh, quoting dogman_1234: "No new news here, but I cannot wait until AMD releases "Bulldozer"! The thing that got me was the use of the cores. Really, all AMD needs now is a Commercial. Anybody?"

I'd have to say I partially agree with you. I see way more Intel commercials (many) than AMD (none). My next build: Bulldozer :D

-

SpadeM, quoting the article: "Details being discussed today include a dual-issue x86 decoder and out-of-order execution, perhaps enabling a performance advantage compared to Intel’s Atom CPUs."

Not quite, the out of order execution WILL enable a performance advantage compared to Atom, + the added bonus that the AMD GPU on the Ontario platform (if similar or better in performance to the ION) WILL again make for a better platform as a hole.

On the Bulldozer side, Power Gating and Turbo for modules, TST > SMT should be something to look forward.

PS: On the commercials side I'm looking forward to something like this:

http://www.youtube.com/watch?v=MK0hU0OYvCI

-

luke904: ok everyone... I swear to god if AMD pulls this one off and bitch slaps Intel again... PARTY AT MY HOUSE!!!

-

joytech22: Excellent!! i skipped the Istanbul CPU's because i'm waiting to score on a "Scorpius" platform! and i know 100% that i will be able to afford it on release!

-

thomaseron: Hopefully, Bulldozer will easily outperform my 955BE. So when the time comes, Bulldozer, Northern islands and about 8GB RAM on a new motherboard. :-) I hope Bulldozer kicks the living daylight out of intel for like one or two generations, so we can get som balance on the market.

-

thomaseron, quoting SpadeM: "Not quite, the out of order execution WILL enable a performance advantage compared to Atom, + the added bonus that the AMD GPU on the Ontario platform (if similar or better in performance to the ION) WILL again make for a better platform as a hole. On the Bulldozer side, Power Gating and Turbo for modules, TST > SMT should be something to look forward. PS: On the commercials side I'm looking forward to something like this: http://www.youtube.com/watch?v=MK0hU0OYvCI"

I totaly agree on the commersial bit. :)