Lucid Demonstrates XLR8 Frame Rate Boosting Technology

Lucidlogix shows off its virtual GPU technology.

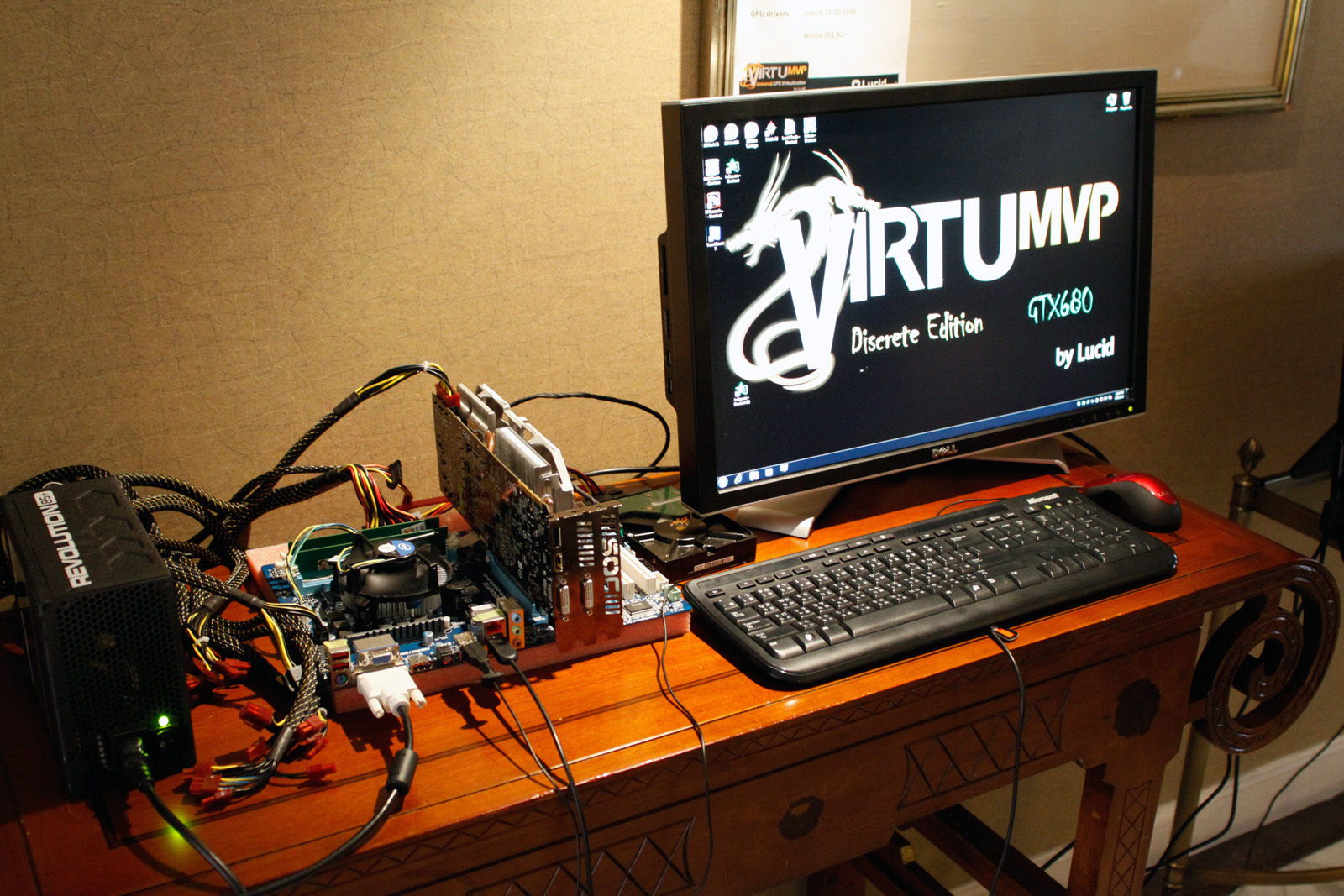

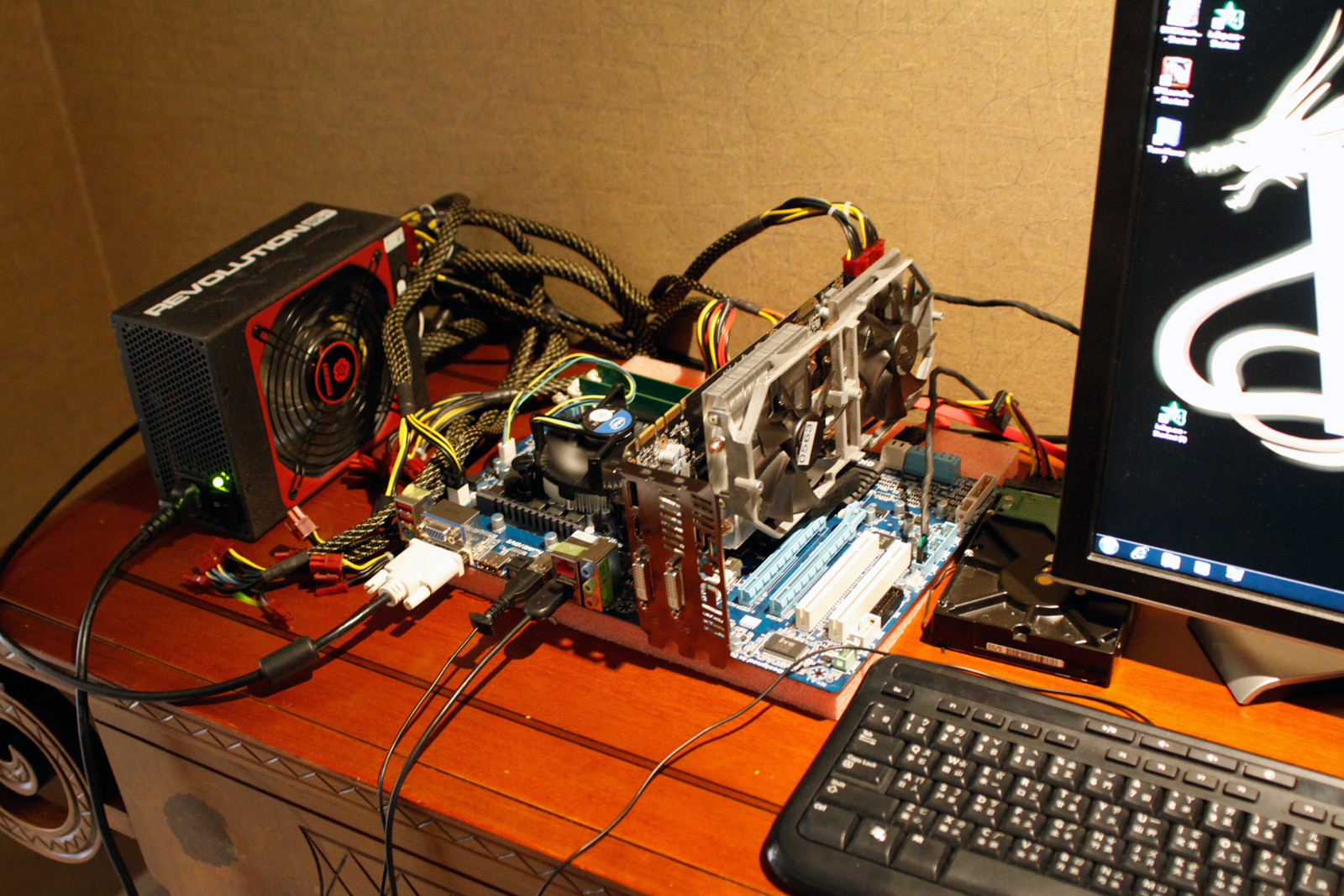

We paid Lucidlogix a visit at Computex to get a run-through of its current portfolio. We were given a demo of Virtu MVP "discrete edition," which, as the name suggests, is a solution for desktops with a discrete add-in graphics card.

This version of Virtu can do everything previous versions of MVP could, and it adds an integrated mode (i-mode), in which the monitor is attached to the outputs of the integrated GPU.

If the system is only showing 2D output or doing tasks that can be handled by the integrated GPU (such as Quick Sync transcoding), the add-in graphics card is turned off completely for a 0 Watt mode. As soon as 3D mode is activated or a heavier workload comes up, Virtu automatically wakes up the add-in card seamlessly.

Two ways to achieve this:

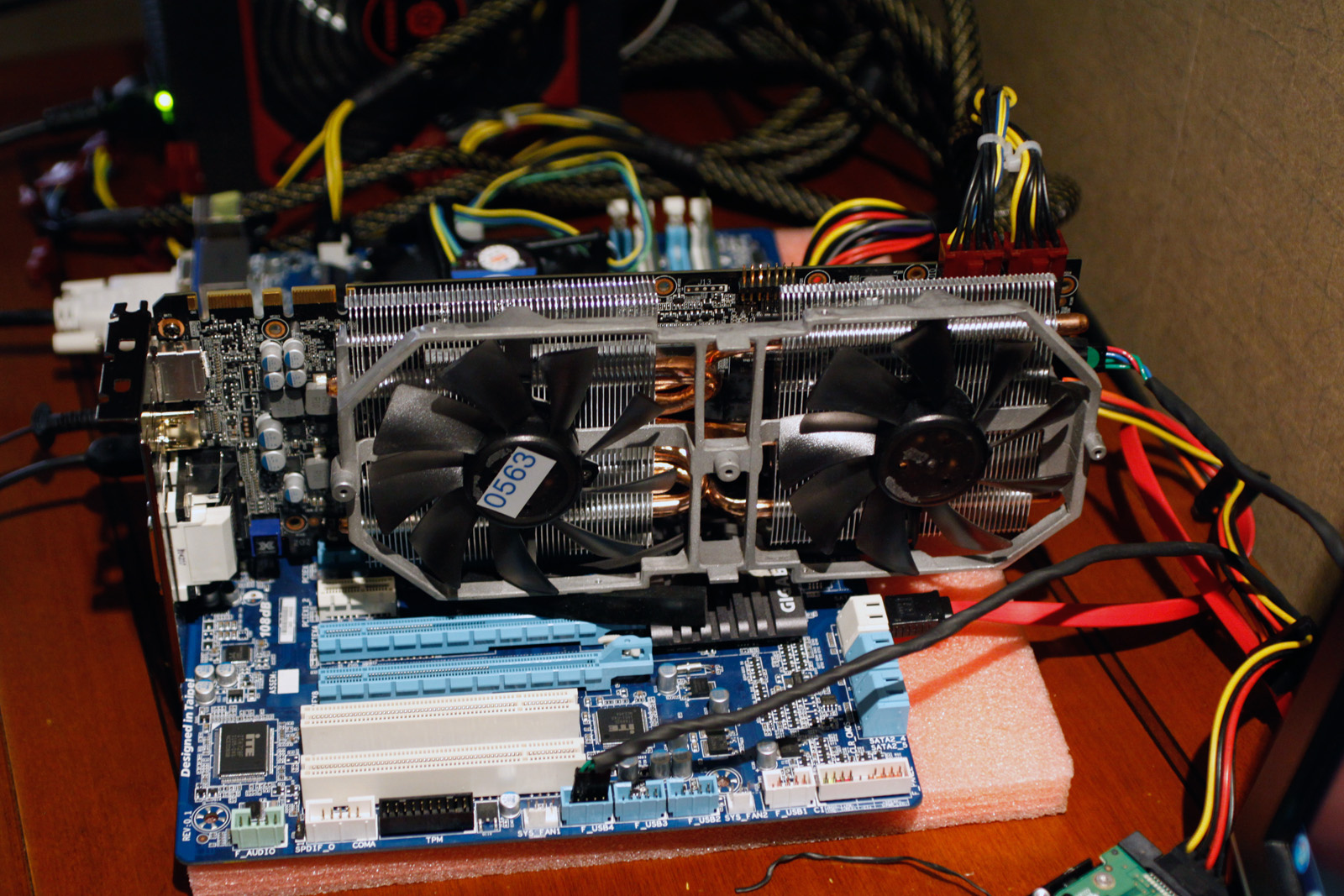

• A graphics card with a special chip that handles the monitoring and waking (currently connected internally from the graphics card to the motherboard via USB), or

• A mainboard that can turn the x16 PCIe slot on and off.
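
Lucid did not share implementation details, so the following is only a rough sketch of the switching behavior described above: keep the add-in card powered off while the integrated GPU can cope, and wake it when 3D or a heavier workload appears. The `Workload` categories, the `DiscreteCard` class and `choose_renderer` are hypothetical names made up for illustration, not part of Virtu MVP.

```python
from enum import Enum


class Workload(Enum):
    DESKTOP_2D = "2d"          # plain desktop output
    QUICK_SYNC = "quick_sync"  # transcoding the integrated GPU can handle
    GAME_3D = "3d"             # 3D rendering or other heavy work


# Assumption: the set of workloads the integrated GPU handles on its own.
IGPU_WORKLOADS = {Workload.DESKTOP_2D, Workload.QUICK_SYNC}


class DiscreteCard:
    """Hypothetical stand-in for the add-in card's power control."""

    def __init__(self):
        self.powered = False  # start in the 0 W state

    def set_power(self, on):
        self.powered = on


def choose_renderer(workload, card):
    """Power the card only when the workload needs it; return the active GPU."""
    needs_discrete = workload not in IGPU_WORKLOADS
    if needs_discrete != card.powered:
        card.set_power(needs_discrete)
    return "discrete" if card.powered else "integrated"
```

In this sketch, launching a game flips the card on and returning to the desktop flips it off again, which mirrors what we saw in the demo. How the real software detects workloads is not something Lucid described.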

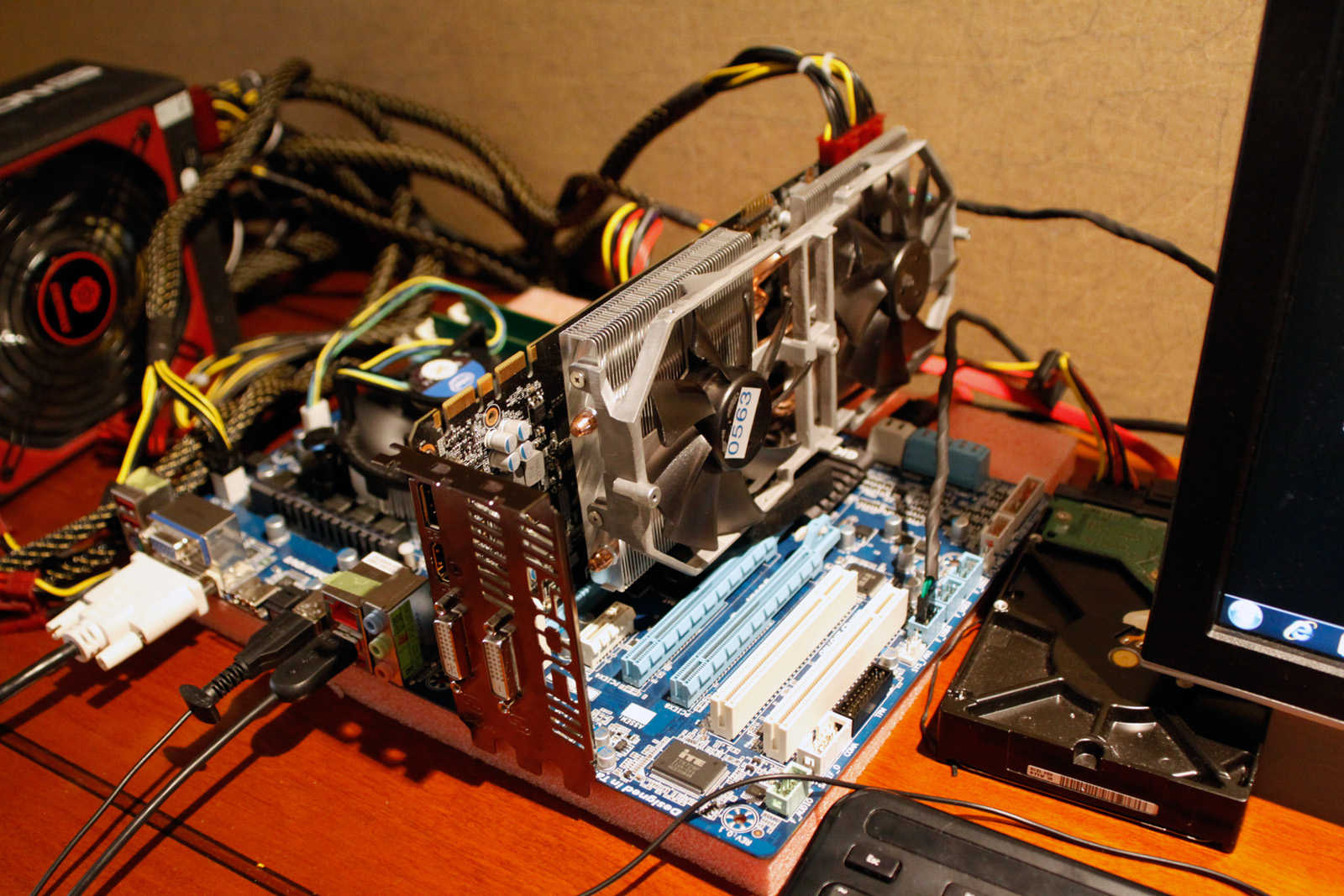

Lucidlogix demonstrated this system using a standard Z68 board and a slightly modified Gigabyte GV-N680SOC-2GD (we reported on this card in its monstrous five-fan configuration) that was equipped with the special chip.

UPDATE: To be clear, Lucidlogix is just a software solutions provider. This demonstration of Virtu MVP was running on hardware modified by Gigabyte with a simple USB control on the GPU AIC that connects to the motherboard. It is then Lucid's software that manages and controls it.

During the demo, the system was running normally, but the graphics card was powered down completely with the fans stopped. When a game was launched, the fans spun up and you got the GTX 680's performance with no flickering or other disruptive signs.

In theory, you could even remove the discrete card from the active system in 2D mode, as shown in a video of the demo.

Lucid is now working on applying some of its technical know-how to single-GPU configurations, which is interesting to hear given that we know this technology as something for automatically switching between or virtualizing two GPUs.

Lucid is calling this XLR8, which uses the two MVP technologies we already know about: Virtual V-Sync to eliminate or reduce screen tearing, and HyperFormance to make 3D rendering more efficient.

New to the mix is DynamiX. On weaker GPUs, it is meant to improve frame rates by dynamically scaling back quality. While static elements such as the HUD and GUI remain at the same quality, the quality of the rest of the scene is reduced in an attempt to reach a minimum frame rate target set by the user.

It's a simple interface with two sliders: one for the minimum frame rate the user wants, the other for the minimum quality the user is willing to settle for. This was demoed with Diablo III on a Dell XPS 13 Ultrabook with a Sandy Bridge Core i5 and its integrated GPU. Quality reduction was very evident, but responsiveness was much better, with frame rates doubling from 14 FPS stock to 28 FPS with XLR8. Also, as promised, the HUD remained unaffected.
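
To make the two-slider idea concrete, here is a minimal sketch of a feedback loop that trades scene quality for frame rate. It is our own illustration, not Lucid's algorithm: the function names, the 0.0 to 1.0 quality scale and the fixed step size are all assumptions.

```python
def next_quality(quality, measured_fps, target_fps, min_quality, step=0.05):
    """Return the scene quality (0.0 to 1.0) to use for the next frame.

    Quality drops while the frame rate is below the target and rises
    while it is above, but it never leaves [min_quality, 1.0].
    """
    if measured_fps < target_fps:
        quality -= step
    elif measured_fps > target_fps:
        quality += step
    return max(min_quality, min(1.0, quality))


def frame_settings(scene_quality):
    """The HUD always renders at full quality; only the scene is scaled."""
    return {"scene": scene_quality, "hud": 1.0}
```

The two parameters `target_fps` and `min_quality` play the role of the two sliders. A real implementation would likely smooth the measured frame rate over several frames to avoid visible oscillation, which this sketch does not attempt.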

The XLR8 technology is about trading graphical quality for performance, but the point, according to Lucid, is to take games to playable levels on lower-end hardware. It's still just a tech demo, but it already works with DX 9, 10 and 11. In another demo on an Asus laptop with a GeForce 610M, the XLR8 tech took frame rates from 14 to 26 FPS in Battlefield 3.
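
For a sense of scale, the quoted frame rates can be converted into speedups and per-frame times with a few lines of arithmetic. The helper names below are our own; the only inputs are the FPS figures from the two demos.

```python
def speedup(before_fps, after_fps):
    """Ratio of the new frame rate to the old one."""
    return after_fps / before_fps


def frame_time_ms(fps):
    """Average time spent on one frame, in milliseconds."""
    return 1000.0 / fps


demos = [("Diablo III", 14, 28), ("Battlefield 3", 14, 26)]
for name, before, after in demos:
    print(
        f"{name}: {speedup(before, after):.2f}x, "
        f"{frame_time_ms(before):.1f} ms -> {frame_time_ms(after):.1f} ms per frame"
    )
```

That works out to 2.00x for Diablo III (about 71.4 ms down to 35.7 ms per frame) and roughly 1.86x for Battlefield 3 (about 71.4 ms down to 38.5 ms).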

Finally, Lucid also showed remote rendering and gaming. The current problem with remote desktop software is that it uses a (slow) virtualized VGA device. Lucidlogix's solution enables using the actual GPU for rendering, so that output on the remote screen is much smoother. This will work with any remote connection software because the rendering happens on the server side. They showed it on an Android tablet and a smartphone, but it will work for pretty much any client, even desktop-to-desktop.

army_ant7: Lucidlogix is making me love them more now! I remember far back that it was just the concept of using different GPU's in tandem that intrigued me, but practical efficiency technologies are really wowing me!

army_ant7, quoting fb39ca4: "Oh look a lot of fancy marketing terms! So exciting!!!"

Well, you gotta name your "children" something cool (that would be up for discussion (its coolness)). :-))

geminireaper: whats the news here? This is already being done in laptops. my wifes i5 has an intel and an nvidia gpu. Under light load it uses the intel but when I load a 3d intensive game the nvidia one takes over.

memadmax: Do the authors of these articles even bother reading what they just typed anymore??????