Nvidia's FleX Technology Used In 'Killing Floor 2'

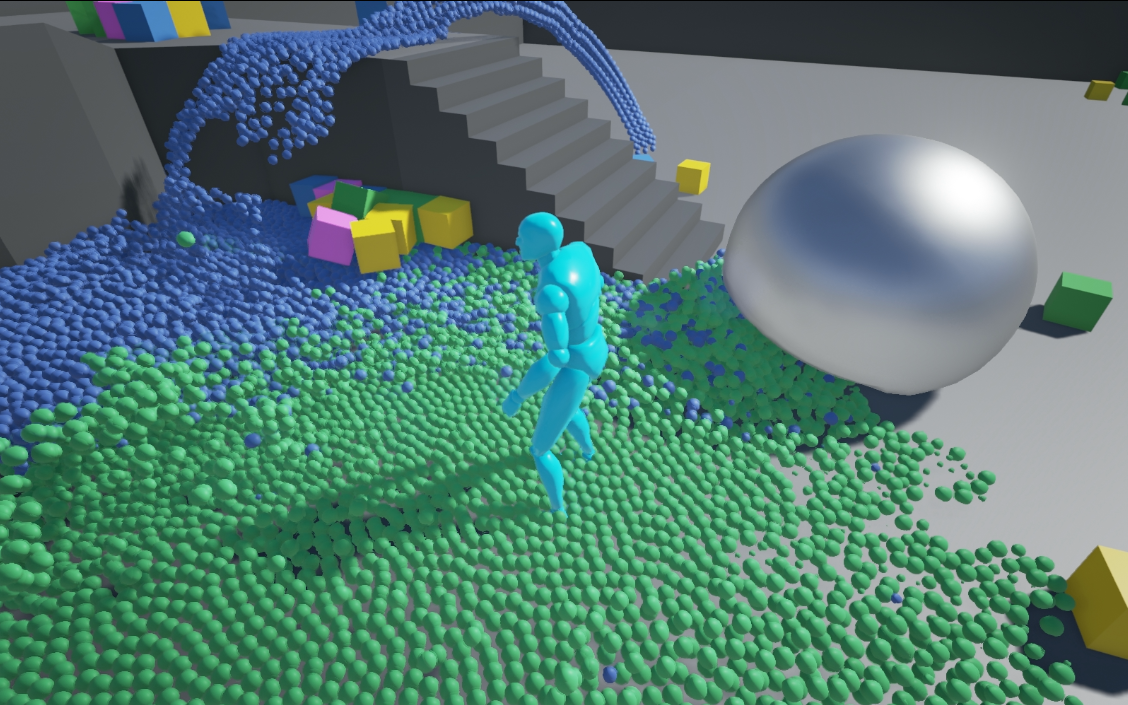

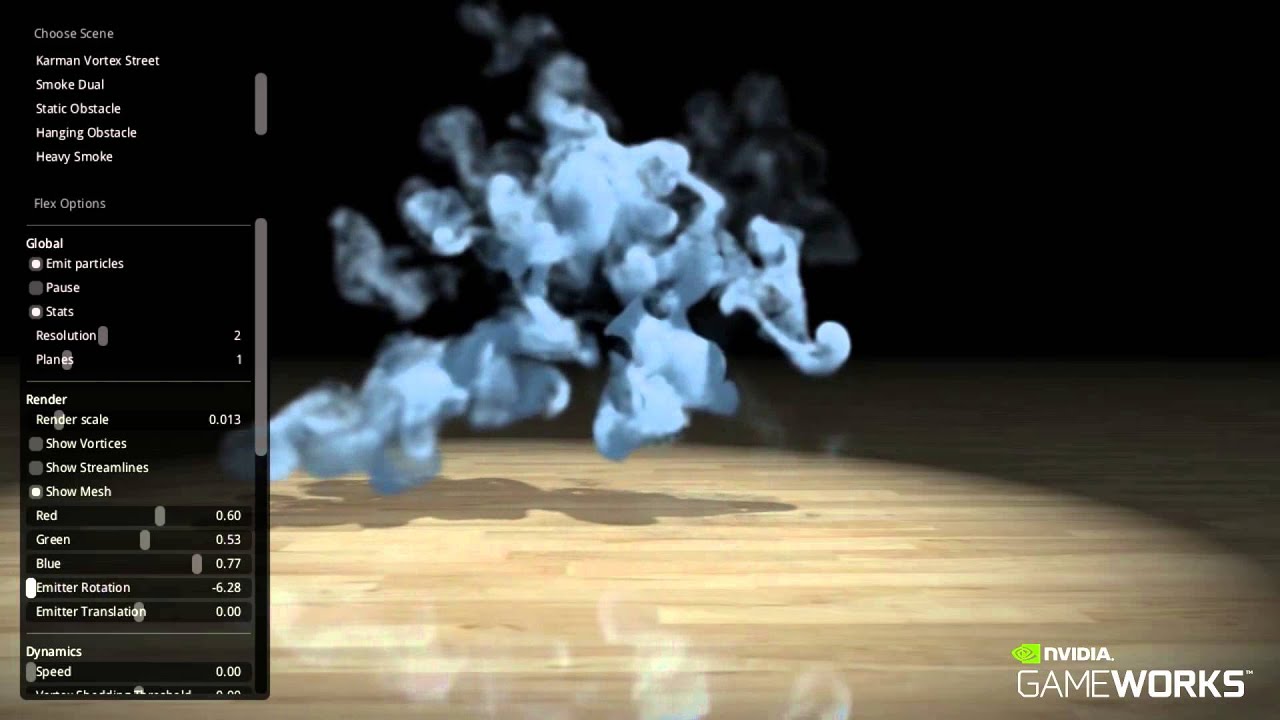

Nvidia FleX technology is a particle-based simulation technique that allows different simulated substances to interact seamlessly. It makes use of a unified particle representation for all types of objects and fluids.

Traditional visual effects use a combination of rigid bodies, fluids, cloth, ropes and smoke, and rely on specialized solvers to work out how each substance interacts with the others. Nvidia said FleX's unified particle system allows all substances to interact with each other seamlessly. When Nvidia displayed the technology in a demo, it showed liquid and smoke interacting with different objects in a way that looked very natural.
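To make the "one particle type, one solver" idea concrete, here is a minimal 2D sketch. It assumes a position-based solver of the kind commonly used for unified particle systems; the article does not describe FleX's internals, and the `Particle` class, the `phase` tag and the `step` function are hypothetical names, not part of the FleX API. A real system would add fluid density constraints, shape matching for soft bodies and GPU execution.

```python
import math
from dataclasses import dataclass


@dataclass
class Particle:
    x: float
    y: float
    vx: float = 0.0
    vy: float = 0.0
    inv_mass: float = 1.0   # 0.0 pins the particle in place
    phase: str = "fluid"    # hypothetical material tag (e.g. "fluid", "rope")


def step(particles, links, dt=1.0 / 60.0, gravity=-9.8, iterations=4):
    """Advance every particle one time step with a single shared solver.

    links is a list of (i, j, rest_length) distance constraints, which is
    how this sketch represents rope or cloth. Illustrative only.
    """
    # 1. Predict new positions from velocity and gravity.
    predicted = []
    for p in particles:
        if p.inv_mass == 0.0:
            predicted.append([p.x, p.y])
        else:
            predicted.append([p.x + p.vx * dt,
                              p.y + (p.vy + gravity * dt) * dt])

    # 2. Project constraints directly on the predicted positions.
    for _ in range(iterations):
        for i, j, rest in links:
            a, b = predicted[i], predicted[j]
            dx, dy = b[0] - a[0], b[1] - a[1]
            dist = math.hypot(dx, dy)
            w = particles[i].inv_mass + particles[j].inv_mass
            if dist == 0.0 or w == 0.0:
                continue
            scale = (dist - rest) / (dist * w)
            a[0] += particles[i].inv_mass * scale * dx
            a[1] += particles[i].inv_mass * scale * dy
            b[0] -= particles[j].inv_mass * scale * dx
            b[1] -= particles[j].inv_mass * scale * dy
        # The same ground-plane collision applies to every material.
        for q in predicted:
            q[1] = max(q[1], 0.0)

    # 3. Derive velocities from the position change and commit.
    for p, q in zip(particles, predicted):
        p.vx = (q[0] - p.x) / dt
        p.vy = (q[1] - p.y) / dt
        p.x, p.y = q[0], q[1]


# A pinned rope end, a free rope end and a loose fluid droplet share one list.
scene = [
    Particle(0.0, 2.0, inv_mass=0.0, phase="rope"),
    Particle(1.0, 2.0, phase="rope"),
    Particle(3.0, 0.5, phase="fluid"),
]
for _ in range(120):
    step(scene, [(0, 1, 1.0)])
```

Because every substance is just a list entry with a tag, adding a new material in this sketch means adding a constraint type rather than a new solver plus coupling code for each existing solver.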

Tripwire's use of FleX is a little bit messier. The company used the technology to help depict the blood and guts in the game. Killing Floor, as you can imagine from the title, is a rather violent and graphic game. The developers created a system they called M.E.A.T. (Massive Evisceration and Trauma) that handles the dynamic gore, blood splatter and graphic violence, and FleX has been used for soft tissue and fluid interaction.

Nvidia hopes many more games will make use of this and other GameWorks technologies, and has made the custom branches for Unreal Engine 4 available for free on GitHub to any developer who wants to use them.

Tripwire just happens to be the first to ship a game using FleX technology, but it certainly won't be the last. Just yesterday, Nvidia announced a partnership with Konami to help integrate GameWorks technologies into Metal Gear Solid V: The Phantom Pain. The new Metal Gear will rely heavily on stealth, and it's set in a large open world. Nvidia's engineers will be helping Konami's artists get the lighting and the special effects just right.

Kevin Carbotte is a contributing writer for Tom's Hardware who primarily covers VR and AR hardware. He has been writing for us for more than four years.

-

jimmysmitty (quoting 16068150): AMD stall/pause code included at no cost.

It is not nVidias job to make their products work with AMDs GPUs nor is it AMDs job to make their products work with NVidia GPUs. AMD doesn't sit and code TressFX to make sure it works right on nVidias GPU or Mantle.

It has long been that way with GPUs. I remember when 3DFX was the king and they had multiple technologies the others did not have that only worked on a 3DFX Voodoo GPU. -

Tanquen (quoting 16069175, which quoted 16068150): AMD stall/pause code included at no cost.

It is not nVidias job to make their products work with AMDs GPUs nor is it AMDs job to make their products work with NVidia GPUs. AMD doesn't sit and code TressFX to make sure it works right on nVidias GPU or Mantle.

It has long been that way with GPUs. I remember when 3DFX was the king and they had multiple technologies the others did not have that only worked on a 3DFX Voodoo GPU.

When I buy an Nvidia card or an AMD card, I'm not paying them to make proprietary software that won't support my Windows DirectX system. They should not be on the game engine side of things, paying or enticing game developers to use code that won't work on their competitor's hardware. You mentioned 3dfx from ages ago; I'm talking about the Windows 7 operating system, which supports DirectX. I buy games that support Windows and DirectX, not Nvidia or AMD. They are both evil companies that just want your money, but AMD has been way more open. Nvidia wants to turn the PC into their console, and I don't want them to. You want a console, go buy one. -

jerm1027 (quoting 16069175, which quoted 16068150): AMD stall/pause code included at no cost.

It is not nVidias job to make their products work with AMDs GPUs nor is it AMDs job to make their products work with NVidia GPUs. AMD doesn't sit and code TressFX to make sure it works right on nVidias GPU or Mantle.

It has long been that way with GPUs. I remember when 3DFX was the king and they had multiple technologies the others did not have that only worked on a 3DFX Voodoo GPU.

The problem is not that it's not NVidias job to make their software solution work on AMD's hardware, but rather NVidia actively preventing their products from working on AMD's hardware with licensing restrictions. When AMD releases a new technology they have it open so anyone can take it and make improvements; AMD doesn't actively code to work on competitor's solutions, but at least AMD gives them the opportunity to improve. When AMD released TressFX, it sucked on NVidia's GPUs, but NV was able to make optimizations and the performance hit was reduced. When NV released a similar technology, HairWorks, it sucks on both NV and AMD, but disproportionately worse on the compute monster that is GCN, which is nonsensical unless it was intentionally designed to perform poorly on the competition's hardware (which isn't unheard of). And due to licensing restrictions, neither AMD nor Game Devs can work on improving performance on AMD hardware.

Also NVidia's refusal to adopt open standards screws over the consumer as well. I wanted variable refresh rate, and I drank the G-Sync KoolAid, but I need multiple inputs, and I need a reasonably priced monitor, not something that costs more than my entire computer. AMD introduces FreeSync, which utilizes open standards, so there is no reason for Nvidia not to implement it, especially with FreeSync Monitors shipping out faster, cheaper, and with more diversity vs G-Sync Monitors. However, they are not, which means as an NVidia Consumer, I'm screwed out of variable refresh rates because reasons.

With these practices, NVidia is hurting and stifling the industry. -

xyriin (quoting jerm1027): Also NVidia's refusal to adopt open standards screws over the consumer as well. I wanted variable refresh rate, and I drank the G-Sync KoolAid, but I need multiple inputs, and I need a reasonably priced monitor, not something that costs more than my entire computer. AMD introduces FreeSync, which utilizes open standards, so there is no reason for Nvidia not to implement it, especially with FreeSync Monitors shipping out faster, cheaper, and with more diversity vs G-Sync Monitors. However, they are not, which means as an NVidia Consumer, I'm screwed out of variable refresh rates because reasons.

With these practices, NVidia is hurting and stifling the industry.

As for your complaints about G-Sync you're leaving out an important point.

FreeSync fails horribly at any refresh rate outside of the panel's hardware refresh rate. FreeSync can only match refresh rates to frame rates. If the video card puts out a higher or lower frame rate than the monitor's refresh rate it turns FreeSync off which means you face the exact same problems you have now.

So if you're playing a game on a slow computer where you get super low refresh rates then FreeSync is ok...at least until you hit a lull in the action and your FPS jump or a spike in the action and your FPS plummets. Likewise if you're gaming on a fast computer the problem is even worse because you'll output FPS above the panel refresh rate even more often.

nVidia's G-Sync solution doesn't suffer from this fundamental flaw which is why it's a superior technology and why they developed an in house solution which requires assist from the GPU. Now if you want to talk about pricing, you can have a FreeSync solution that can avoid this flaw, if you buy a FreeSync panel that has a high enough refresh rate. That's a bit of a problem because there aren't any 240hz or higher FreeSync panels and even if they become available you're going to be paying more than what you would for a G-Sync module.

FreeSync is just a stop gap by AMD until they develop a true competitor to G-Sync, and FreeSync is only free because it wasn't developed by AMD, it's simply utilizing existing video standards. When AMD develops their solution it's going to require the same overhead as G-Sync and maybe at that point in time there will either be two dedicated modules in gaming panels or nVidia and AMD will converge into a common module technology.

At the end of the day this will be just like Crossfire and SLI. Originally motherboard chipsets could only handle one or the other (funny, that sounds like panel modules). You bought a motherboard for either Crossfire OR SLI which locked you into a specific GPU type (funny, that sounds like Free/G-Sync panels). However, as the technology matured motherboard chipsets evolved in order to handle both multi-GPU specs. In the future you'll be buying an 'Adaptive Sync' panel that supports both AMD and nVidia GPUs. -

dorsai (quoting an earlier comment): AMD stall/pause code included at no cost.

That's interesting...do you currently own a specific AMD GPU that you're using to play KF2? I'm playing on a 280x backed by a heavily overclocked i5 2500k and I have no pausing or stalling at all with the graphics cranked all the way up. KF2 is sensitive to lag though, so I wonder if you're confusing GPU issues with your internet connection...

-

Tanquen (quoting xyriin): ...FreeSync fails horribly at any refresh rate outside of the panel's hardware refresh rate. FreeSync can only match refresh rates to frame rates. If the video card puts out a higher or lower frame rate than the monitor's refresh rate it turns FreeSync off which means you face the exact same problems you have now...

Ok. VESA adaptive sync (around since 2009) does not fail horribly. The Adaptive-Sync protocol is in the DisplayPort standard and Nvidia said they will not support it. Easy for them to do, but you can guess why they will not. There is already a 30-144Hz VESA adaptive sync display, and VESA adaptive sync supports down to like 9Hz. Yes, if your game drops below 30 FPS on this new display… Wait, why are you playing games at 20-ish FPS? Cleaning up some horizontal tearing is not going to make that a fun experience. G-Sync tries to help with this by passing the same frame over again, like V-Sync already does. With VESA adaptive sync you can use it with or without V-Sync turned on. So if you go below 30Hz, V-Sync kicks in, and your 10-20FPS slide show has no horizontal tearing. For the high end, or going over the display's refresh rate, again you can choose to use V-Sync or not, and most new cards have frame limiting so it’s not generating 300FPS on your 60 or 120 or 144Hz display and wasting electricity. Besides, horizontal tearing is not normally an issue if you are consistently above your display's refresh rate.

So again, thanks to Nvidia you have to try and pick the video card you want and worry if the display you want will support adaptive sync.

-

alidan (quoting xyriin, then Tanquen): ...FreeSync fails horribly at any refresh rate outside of the panel's hardware refresh rate. FreeSync can only match refresh rates to frame rates. If the video card puts out a higher or lower frame rate than the monitor's refresh rate it turns FreeSync off which means you face the exact same problems you have now...

Ok. VESA adaptive sync (around since 2009) does not fail horribly. The Adaptive-Sync protocol is in the DisplayPort standard and Nvidia said they will not support it. Easy for them to do, but you can guess why they will not. There is already a 30-144Hz VESA adaptive sync display, and VESA adaptive sync supports down to like 9Hz. Yes, if your game drops below 30 FPS on this new display… Wait, why are you playing games at 20-ish FPS? Cleaning up some horizontal tearing is not going to make that a fun experience. G-Sync tries to help with this by passing the same frame over again, like V-Sync already does. With VESA adaptive sync you can use it with or without V-Sync turned on. So if you go below 30Hz, V-Sync kicks in, and your 10-20FPS slide show has no horizontal tearing. For the high end, or going over the display's refresh rate, again you can choose to use V-Sync or not, and most new cards have frame limiting so it’s not generating 300FPS on your 60 or 120 or 144Hz display and wasting electricity. Besides, horizontal tearing is not normally an issue if you are consistently above your display's refresh rate.

So again, thanks to Nvidia you have to try and pick the video card you want and worry if the display you want will support adaptive sync.

I think he was talking about the initial FreeSync monitors that skimped out or were half-baked, but he attributes that to the standard and not to companies cutting corners.