External RAID Storage

By Patrick Schmid and Achim Roos

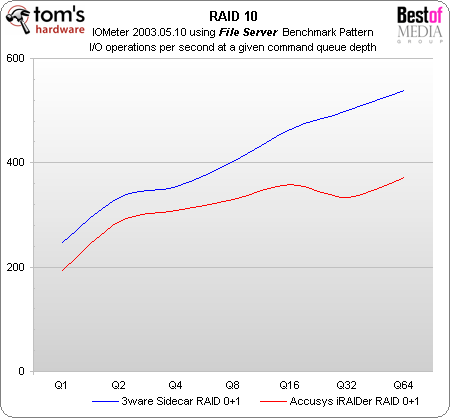

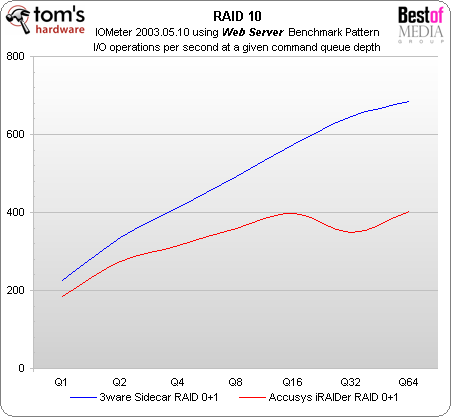

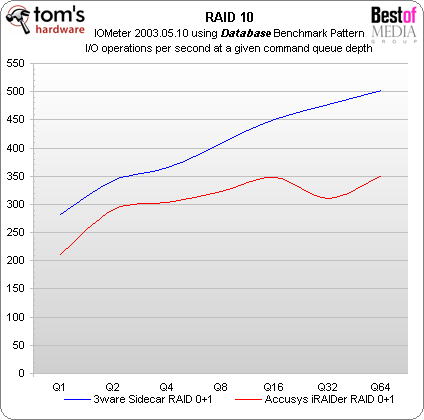

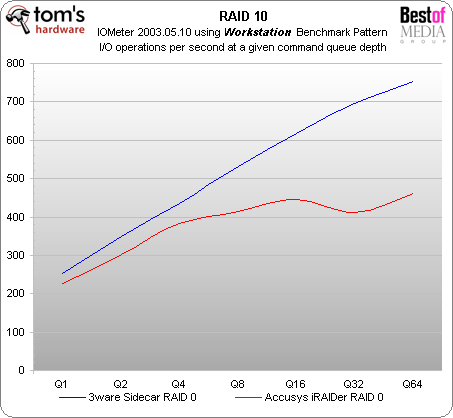

RAID 10 I/O Performance

Although I/O performance is lower in RAID 10 than in RAID 0, AMCC again delivers significantly better results in this mode.
