CUDA-Enabled Apps: Measuring Mainstream GPU Performance

Aliens? Look Faster

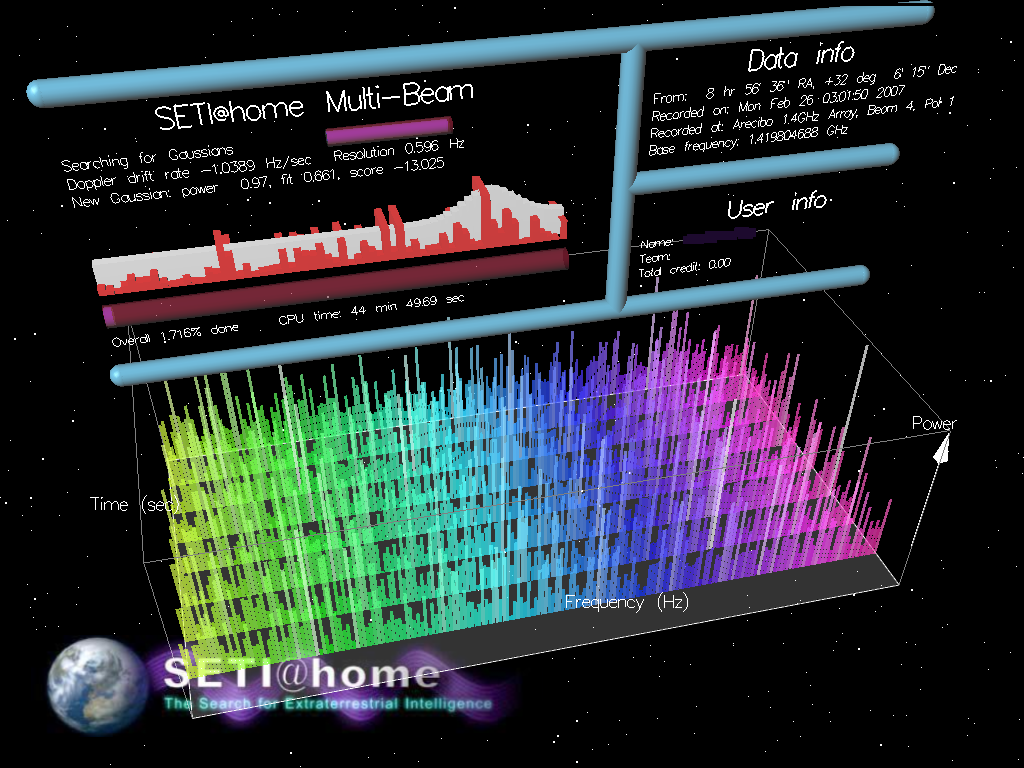

If you’ve seen the movie Contact, you know the gist of the SETI program. The Search for Extraterrestrial Intelligence program uses radio astronomy to search the skies for radio signals that, by their nature, must have come from intelligent life beyond the Earth. Raw data is gathered in a 2.5 MHz-wide band and streamed back to SETI@home’s main location at UC Berkeley. As shown in the movie, most if not all such radio data is simply random noise, like static against the cosmic background. The SETI@home software performs signal analysis on this data, scouring the bits for non-random patterns, such as pulsing signals and power spikes. The more floating-point computing power available to process the data, the wider the spectrum that can be covered and the more sensitive the analysis can be. This is where the parallelism of multi-threading and CUDA pays off.
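To make the idea of hunting for power spikes concrete, here is a minimal Python sketch. It is not the SETI@home algorithm or code. The function names (`power_spectrum`, `find_spikes`, `make_samples`), the 256-sample length, the tone placed at bin 40, and the 10x threshold are all assumptions chosen for illustration. The sketch buries a steady tone in Gaussian noise, computes a power spectrum with a naive discrete Fourier transform, and flags any frequency bin whose power stands far above the average.

```python
import cmath
import math
import random


def power_spectrum(samples):
    """Naive O(n^2) DFT; returns the power in frequency bins 0 .. n/2 - 1."""
    n = len(samples)
    spectrum = []
    for k in range(n // 2):
        acc = 0j
        for t, x in enumerate(samples):
            acc += x * cmath.exp(-2j * math.pi * k * t / n)
        spectrum.append(abs(acc) ** 2 / n)
    return spectrum


def find_spikes(spectrum, threshold=10.0):
    """Return (bin, power / mean power) for bins above threshold x the mean power."""
    mean_power = sum(spectrum) / len(spectrum)
    return [
        (k, p / mean_power)
        for k, p in enumerate(spectrum)
        if p > threshold * mean_power
    ]


def make_samples(n=256, tone_bin=40, amplitude=1.5, seed=1):
    """Hypothetical test data: unit-variance Gaussian noise plus one sine tone."""
    rng = random.Random(seed)
    return [
        rng.gauss(0.0, 1.0) + amplitude * math.sin(2 * math.pi * tone_bin * t / n)
        for t in range(n)
    ]


samples = make_samples()
spikes = find_spikes(power_spectrum(samples))
for bin_index, ratio in spikes:
    print(f"spike in bin {bin_index}: {ratio:.1f}x the mean power")
```

Each frequency bin in `power_spectrum` is computed independently of the others, which is the kind of work that spreads naturally across many CPU threads or GPU stream processors.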

Berkeley workers divide the raw data into single-frequency work units of about 0.35 MB each, or 107 seconds of recorded signal. The SETI@home server then doles out work units to home computers, which typically run the SETI@home client as a screen saver application. When SETI@home went live in May 1999, the goal was to combine the collective power of 100,000 PCs. Today, the project boasts over 300,000 active computers across 210 countries.

In benchmarking SETI@home, one needs a consistent work unit in order to get reliable results. We only found this out after hours of receiving nonsensical results. It turns out that the Nvidia performance lab had been prepping special script and batch files for testing SETI@home. These run from a command line, not the usual graphics-rich, eye-candy interface. Nvidia sent us the needed files, and a much clearer performance picture emerged.

-

SpadeM The 8800GS, or 9600GSO under its new name, goes for $60 and delivers 96 stream processors. Would it be correct to assume that it would perform between the 9600 GT and 9800 GTX you reviewed?

Other than that, great article. Been waiting for it since we got a sneak preview from Chris last week.

-

curnel_D And I'll never take Nvidia marketing seriously until they either stop singing about CUDA being the holy grail of computing, or this changes: "Aside from Folding@home and SETI@home, every single application on Nvidia’s consumer CUDA list involves video editing and/or transcoding."

-

l0bd0n As more software uses CUDA, we will not only see a great boost in performance for, e.g., video, but for parallel programming in general. This will skyrocket this business into a new age!

-

curnel_D In reply to l0bd0n ("As more software uses CUDA, we will not only see a great boost in performance for, e.g., video, but for parallel programming in general. This will skyrocket this business into a new age!"): Honestly, I don't think a proprietary language will do this. If anything, it's likely to be GPGPUs in general, run by the Open Computing Language (OpenCL).

-

IzzyCraft Who knows? It's just a clip he used. He could be naming it anything for the hell of it.

CUDA transcoding is very nice for someone who does H.264 transcoding at High Profile, lacks a $300+ CPU, and would otherwise spend hours transcoding a DVD on High Profile settings.

Other than that, CUDA acceleration has been more of a feature than a main event, although it can easily be the main attraction for someone who does a lot of H.264 transcoding/encoding.

Encoding/transcoding in H.264 High Profile can easily make someone who is very content with their CPU and its power become sad very quickly when they see the estimated time for their 30-minute clip or something.

-

I'm using CoreAVC since support was added for CUDA H.264 decoding. I kinda feel stupid for buying a high-end CPU (at the time), since playing all videos, no matter the resolution or bit-rate, leaves the CPU at near-idle usage.

Vid card: 8600GTS

CPU: E6700

-

IzzyCraft Well, you lucked out, considering not all of the GeForce 8 series supports H.264 decoding, etc.

-

ohim They should remove the Adobe CS4 suite from there, since CUDA transcoding is only possible with Nvidia CX video cards, not with normal gaming cards which support CUDA.

-

adbat CUDA means Miracle in my language :-) I hope it will do those.

The sad thing is that ATI does not truly compete in the CUDA department and there is no standard for it.