IDF 2010, Research Day: Context-Aware Computing


Meet Context-Aware Computing

If you have a fairly current smart phone, it has some sensors built in. It likely has a digital camera, a motion sensor, a GPS radio, and possibly even a tiny gyroscope. Right now, though, your phone is just a collection of hardware with various bits of software running on it. Need to get somewhere? Fire up the GPS app and get directions. Or, use the GPS locator to automatically check you in on Facebook Places or Foursquare.

But what if your smart phone could be really...well, smart? What if your phone always had software running in the background keeping track of what you do? We’re not talking about giving up privacy. Maybe the data on what you’re doing is kept locally, or in a personal cloud, rather than a big aggregator like Google or Facebook.

So, over time, for example, you may show a preference for low-cost Chinese restaurants. If you travel somewhere new, your phone will pop up recommendations for cheap Chinese food. Oh, and you’ve always shown a preference for spicy food, so you get a list of cheap Szechwan or Hunan restaurants. You won’t be locked into those choices, either. If you feel like a pizza, you can change the preferences.

Context-aware computing, then, is a combination of sensors that monitor what you do and databases that collect information on what you like. The system can even post that information on your blog or on Facebook, if you choose.

Now, you’re probably thinking that the potential for privacy abuse is legion. Already, electronic kiosks in Japan will tailor advertising to you personally as you walk by their locations. Is that intrusive? Perhaps.

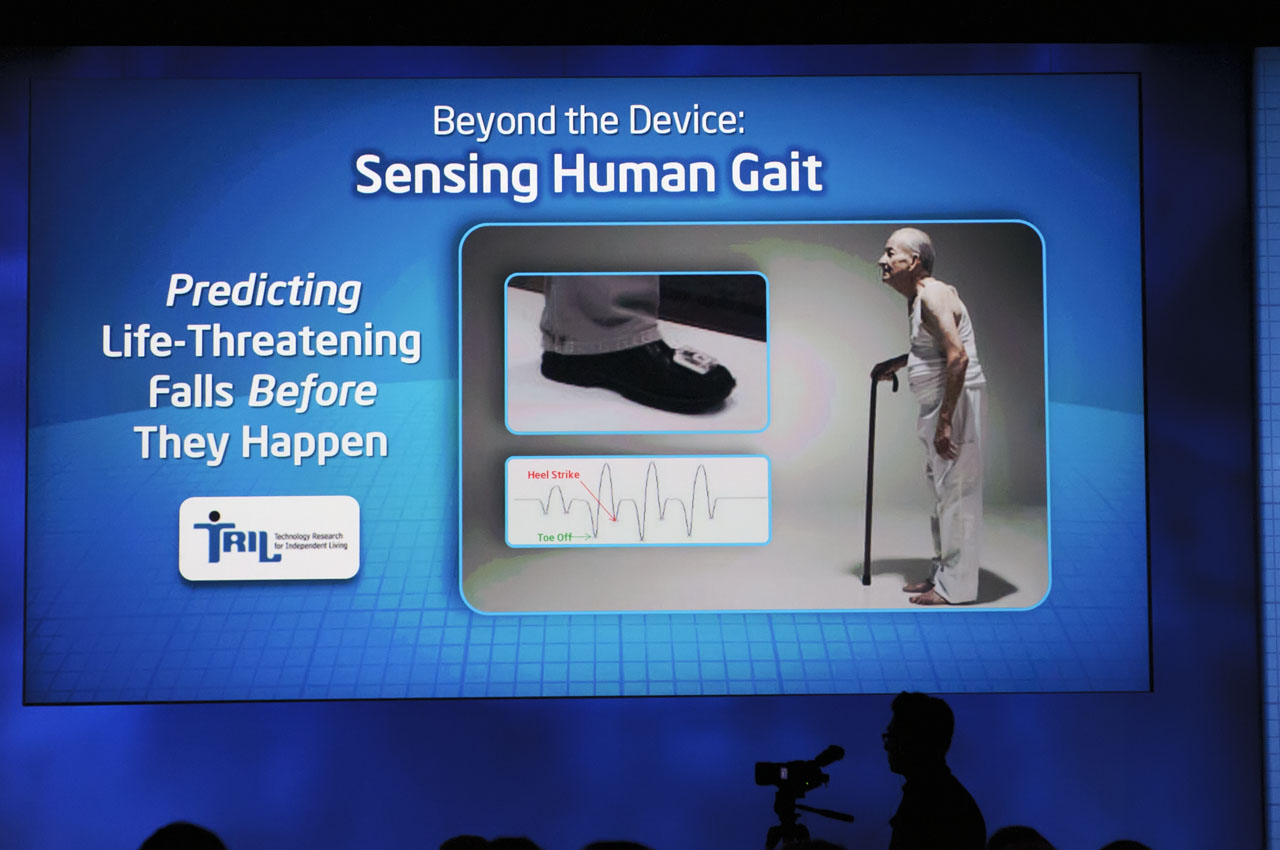

Let’s take a somewhat more benign application: monitoring your elderly parent. As sensors become more compact, they can be woven into clothes or built into shoes or slippers.

How Does This All Work?


Justin Rattner, who runs Intel’s research arm, and Lama Nachman, a senior researcher at Intel’s Interaction and Experience research, dove into the details of context-aware computing and how it works under the hood at this fall's IDF.

The keys to making context-aware computing work are low-power, low-cost, flexible sensors: accelerometers, GPS locators, cameras, and so on. Note that these sensors don’t have to be built into a smart device. They can have radios (WiFi, for example) that communicate with a personal area or local area network.

Imagine a sensor built into a small device that mounts on the foot or shoe.

The sensor measures the strike time, stride time, and other data. The sensor would have to collect data for a fairly extended period of time. After it has that data, the system can detect if the user’s gait starts to stutter or change in a drastic way, and issue an alert that the user might fall. Alternatively, that could be communicated over the network to a care provider, who can intervene as needed.
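To make the idea concrete, here is a minimal sketch of how such a gait check might work. It is not Intel's method: it assumes the sensor reports stride times in seconds, and it swaps in a simple z-score test against the long-term baseline. The function name `gait_alert` and the threshold of 3.0 are hypothetical choices for illustration.

```python
from statistics import mean, stdev


def gait_alert(baseline_strides, recent_strides, z_threshold=3.0):
    """Return True if recent stride times deviate sharply from the baseline.

    baseline_strides: stride times (seconds) collected over an extended period.
    recent_strides:   the latest few stride times from the sensor.
    z_threshold:      hypothetical cutoff; a real system would tune this.
    """
    if len(baseline_strides) < 2 or not recent_strides:
        raise ValueError("need at least two baseline strides and one recent stride")

    mu = mean(baseline_strides)
    sigma = stdev(baseline_strides)
    recent_mu = mean(recent_strides)

    if sigma == 0:
        # A perfectly constant baseline: any change at all counts as a deviation.
        return recent_mu != mu

    # How many baseline standard deviations the recent average has drifted.
    z = abs(recent_mu - mu) / sigma
    return z > z_threshold


# Made-up sample data for illustration only.
baseline = [1.02, 0.98, 1.00, 1.01, 0.99, 1.03, 0.97, 1.00]
steady = [1.01, 0.99, 1.00]
stutter = [1.35, 1.40, 1.28]

if gait_alert(baseline, stutter):
    print("Gait change detected: notify the user or a care provider")
```

With the sample data above, the steady strides stay within the baseline and raise no alert, while the stuttering strides do. A real system would likely look at more than one feature (strike time as well as stride time) and at variability, not just the average.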

Another example mentioned by Nachman is a TV remote control augmented with a sensor, which monitors what buttons are pushed and also picks up characteristics about how the remote is used. It could tell who the user is, because everyone moves or handles the remote just a little differently. Then it could make recommendations for shows to watch, based on what you’ve watched before.
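As a rough illustration of how a remote might tell users apart, the sketch below matches a new handling sample to the closest stored profile. Everything here is an assumption made for the example: the two features (tilt angle in degrees, average button-press duration in seconds), the profile values, and the function name `identify_user`. The talk did not describe the actual technique.

```python
from math import dist


def identify_user(profiles, sample):
    """Return the name whose stored handling profile is closest to the sample.

    profiles: dict mapping a user name to a feature tuple.
    sample:   a feature tuple measured during the current session.
    Uses plain Euclidean distance; real features would need scaling first
    so that one large-valued feature does not dominate the others.
    """
    if not profiles:
        raise ValueError("no user profiles stored")
    return min(profiles, key=lambda name: dist(profiles[name], sample))


# Hypothetical profiles: (tilt angle in degrees, press duration in seconds).
profiles = {
    "alice": (12.0, 0.20),
    "bob": (35.0, 0.45),
}

viewer = identify_user(profiles, (14.0, 0.22))
print(f"Recommending shows based on {viewer}'s viewing history")
```

Once the viewer is identified, the recommendation step could be as simple as looking up that person's viewing history.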

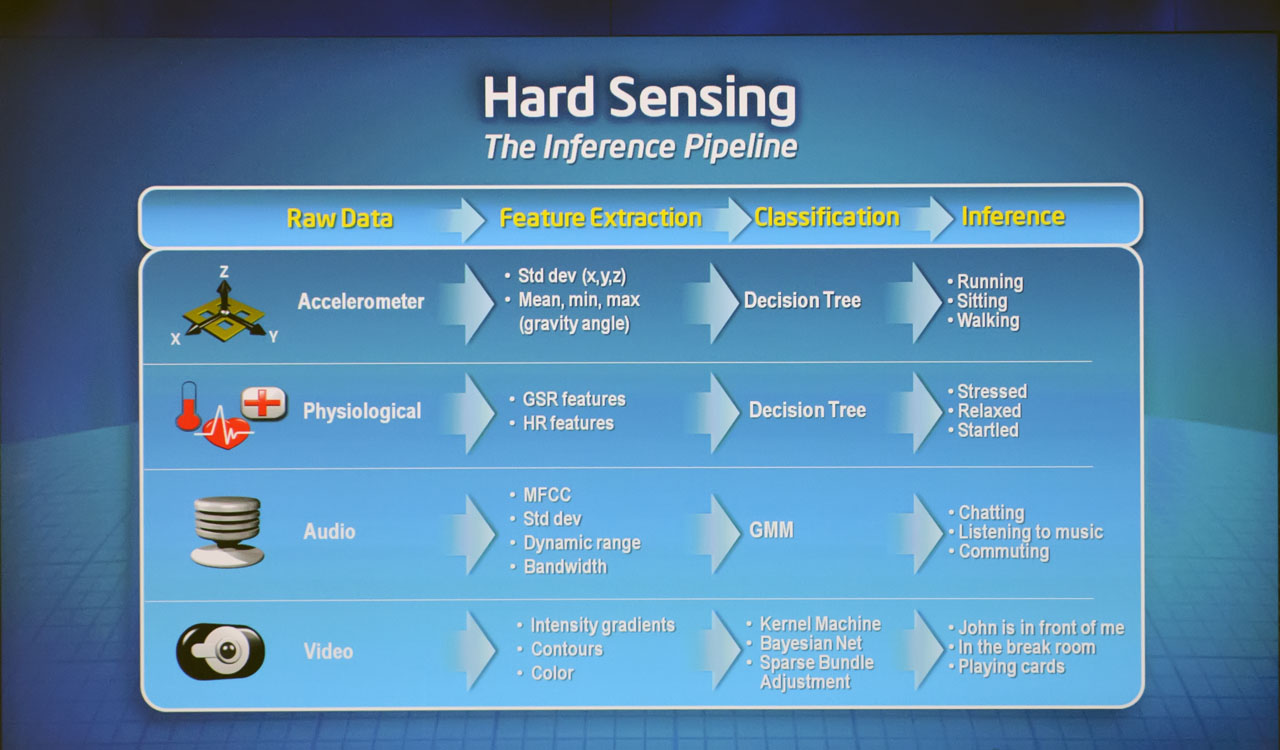

Having small, low-power sensors with radios is one thing, but you need software that’s smart enough to do something with that data. This is where the inference pipeline comes in.

-

Maybe, at 29, I'm already the old guy that thinks new-fangled technology is evil, but I absolutely do not want this.

Almost every infuriatingly negative experience I've had with technology was the result of some developer trying so hard to make my computer do what I want for me (or take advantage of some cool new technology), I had to fight to make it do what I actually wanted it to do (I'm looking right at you Microsoft). It gets even worse when tech developers implement stuff "because they think it's cool" instead of "because people actually want it" (clippy anyone?)... or I suddenly can't do a simple help search for something like "where's the button to do X" because a software developer decided it would be a great idea to have ALL of their help documentation online, but never contemplated the idea that somebody might use their software on a private network that doesn't have internet access (or in a hotel room that charges 12.95 for internet access, or in an airport with no wi-fi, etc).

-

randomizer "So, users need granular control over what data is collected and where it’s stored."

Users don't want granular control. That's why they whine about Facebook privacy settings. Users want "me," "my friends" and "everyone" privacy settings. Heaven forbid they have so much choice that it makes them think for more than a fraction of a second. This is the 21st century, choice is old-fashioned. These days automation is key. If a program doesn't do everything then it isn't good enough. Gone are the days when people had a program that they expected to do one thing well. Now programs must do everything, poorly.

-

executor2 @anon dude "I'm looking right at you Microsoft" , I don't get you Sir , because Windows evolved from better to best , quick search programs in the start menu ,memory caching to speed applications to "instant" opening , reliable updates of windows and it`s drives , a very big (extreme) database of drives for hardware.

"where's the button to do X" Sir I never had to search for a button in my life in Windows , the interface is intuitive that means you have to use your brain to understand it .

"a private network that doesn't have internet access" well if you travel allot you must have a wireless laptop hooked to your phone , I am wrong ?

All those problems are directly connected to your inability to adapt to change , try figuring out the solutions not blame problem , best regards.

-

amnotanoobie

Almost every infuriatingly negative experience I've had with technology was the result of some developer trying so hard to make my computer do what I want for me (or take advantage of some cool new technology), I had to fight to make it do what I actually wanted it to do (I'm looking right at you Microsoft). It gets even worse when tech developers implement stuff "because they think it's cool" instead of "because people actually want it" (clippy anyone?)...

We could agree that not everything that MS implements turns into a goldmine. What we have is MS trying to explore what "could" possibly work or what people would possibly want. SuperFetch for example was initially hell for Vista, but after refinement in Win7 most people probably couldn't do without the speed boost of launching applications.

or I suddenly can't do a simple help search for something like "where's the button to do X" because a software developer decided it would be a great idea to have ALL of their help documentation online, but never contemplated the idea that somebody might use their software on a private network ...

Not all software makers are like this, though some are. Microsoft usually has an offline help available unless of course if you don't include it in the installation. MS is probably one of the biggest companies who research items about usability and how people interact with computers.

9501559 said: Now programs must do everything, poorly.

It sometimes is how some software makers try to rush and add new features just to get ahead of other companies. Sometimes it pays off, sometimes it doesn't (e.g. Vista).

-

randomizer

executor2 said: All those problems are directly connected to your inability to adapt to change

Or maybe it's due to his lack of desire to adapt to how Windows wants him to do things. Is it beyond reason that a person would want their software to do what they want it to do, and how they want it to do it? I generally don't mind using Windows, as it has some nice features that make using it convenient (and some that make it downright infuriating).

Maybe you don't like having control of your system. I suppose if not wanting to give up control and not liking the idea of hiding system configuration behind more and more levels of abstraction (and deeper and deeper menus) is what you call "inability to adapt to change", then yes, some of us have a problem and would prefer things to be simple again.

-

randomizer

amnotanoobie said: SuperFetch for example was initially hell for Vista, but after refinement in Win7 most people probably couldn't do without the speed boost of launching applications.

I'd prefer it if applications launched quickly without aggressive prefetching. But I'd have to use another OS for that. Or I could get an SSD since that makes far more difference than Superfetch.

amnotanoobie said: It sometimes is how some software makers try to rush and add new features just to get ahead of other companies. Sometimes it pays off, sometimes it doesn't (e.g. Vista).

Just look at AVG. Up to AVG 7.5 I was able to use it, but once AVG 8 came out the program became a free version of Norton. Bloated, slow, packed with useless features...

Then one day Microsoft opened up a dusty chest in their Redmond attic and discovered long-forgotten writings containing Ockham's Razor. Thus Microsoft Security Essentials was born.

-

rwmunchkin12788 Well this turned into some heated debate pretty darn quickly. Anyway, back on topic.

I think both anon dude and randomizer have perfectly valid points for two very different groups of people. The everyday user has nowhere near the technological know-how that even tomshardware.com newbies have, and a plethora of controls to do anything the person wanted would only make them confused.

What's frustrating anon dude is the obvious response to make everything easier to the point of losing that lack of control. I remember people griping back when Windows XP had the new format for the control panel and start menu. Heck, even as a young teenager it frustrated me until I figured out that you can switch everything back.

Us tech savvy people want our choices, our options, our features, all to be customizable and tunable to the nth degree, while the everyday user just wants point and click (or not even).

I think that context-aware computing, if done well, can allow for both ends of the spectrum. Experienced users would love to be able to choose what things get automated and have infinite control over how it does that, while everyday users would love to just plug it in and instantly see it work without having to pick or choose any options.

-

luke904

anon dude said: Maybe, at 29, I'm already the old guy that thinks new-fangled technology is evil, but I absolutely do not want this. Almost every infuriatingly negative experience I've had with technology was the result of some developer trying so hard to make my computer do what I want for me (or take advantage of some cool new technology), I had to fight to make it do what I actually wanted it to do (I'm looking right at you Microsoft). It gets even worse when tech developers implement stuff "because they think it's cool" instead of "because people actually want it" (clippy anyone?)... or I suddenly can't do a simple help search for something like "where's the button to do X" because a software developer decided it would be a great idea to have ALL of their help documentation online, but never contemplated the idea that somebody might use their software on a private network that doesn't have internet access (or in a hotel room that charges 12.95 for internet access, or in an airport with no wi-fi, etc).

I'm 16 and I agree

-

luke904

executor2 said: @anon dude "I'm looking right at you Microsoft" , I don't get you Sir , because Windows evolved from better to best , quick search programs in the start menu ,memory caching to speed applications to "instant" opening , reliable updates of windows and it`s drives , a very big (extreme) database of drives for hardware. "where's the button to do X" Sir I never had to search for a button in my life in Windows , the interface is intuitive that means you have to use your brain to understand it . "a private network that doesn't have internet access" well if you travel allot you must have a wireless laptop hooked to your phone , I am wrong ? All those problems are directly connected to your inability to adapt to change , try figuring out the solutions not blame problem , best regards.

windows makes too many decisions for you, its annoying.. they are wrong 90% of the time and even if they were the right ones it would hardly save me any time

I'm not happy with any operating system, not windows, not mac, not even linux