Ceva's Improved Deep Learning Software Framework Now Supports TensorFlow For Embedded Systems

Ceva, a licensor of signal processing intellectual property (IP) for connected devices, announced its second-generation deep neural network software framework. The new CDNN2 (Ceva Deep Neural Network) brings support for Google's TensorFlow to embedded systems.

CDNN2 can enable on-device deep learning-based video analytics in real time, saving bandwidth and storage compared to running the same analytics in the cloud. The CDNN2 software framework is part of an emerging trend of on-device deep learning, which could make devices much smarter even without a connection to the Internet. It’s also a big benefit to those who don’t trust third-party providers with applications such as analyzing video feeds of their homes.

On-device processing also reduces analytics latency, so devices with built-in deep learning capabilities can handle real-time tasks that cloud-based deep learning solutions, with their added network round trip, may be too slow to perform.

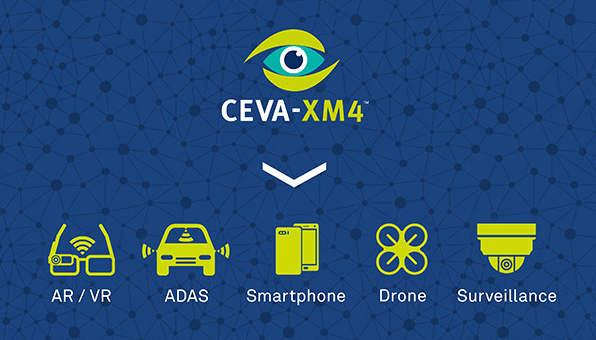

CDNN2 is paired with Ceva’s own “intelligent vision processor,” the Ceva-XM4, which can enable 3D vision, computational photography, visual perception and analytics.

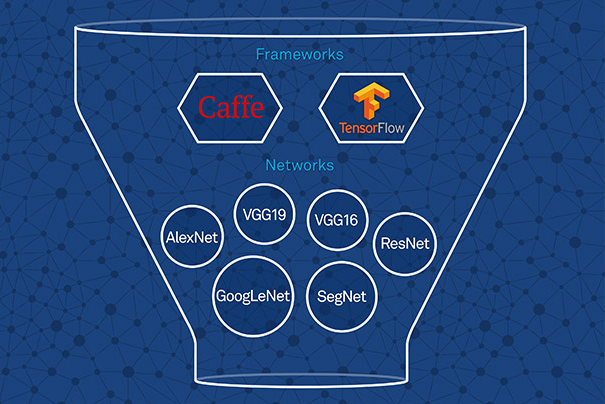

One of the major additions to Ceva’s second-generation deep neural network software framework is support for Google’s TensorFlow, which has quickly become one of the most popular machine learning software libraries. CDNN2 also adds support for fully convolutional networks, which allow a given network to work with any input resolution, as well as improved capabilities and performance for the latest network topologies and layers.
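To see why dropping fully connected layers frees a network from a fixed input resolution, consider the rough sketch below. It is not CDNN2 code; the layer stack and the helper names (`conv_out`, `feature_map_size`, `LAYERS`) are hypothetical and only illustrate the standard output-size arithmetic for convolution and pooling layers.

```python
# Illustrative sketch only: a made-up layer stack, not anything from CDNN2.
# Each entry is (kernel, stride, padding) for a convolution or pooling layer.
LAYERS = [
    (3, 1, 1),  # 3x3 convolution, keeps the spatial size
    (2, 2, 0),  # 2x2 pooling, halves the spatial size
    (3, 1, 1),  # 3x3 convolution
    (2, 2, 0),  # 2x2 pooling
]


def conv_out(size, kernel, stride=1, padding=0):
    """Standard output length of a sliding-window layer along one dimension."""
    return (size + 2 * padding - kernel) // stride + 1


def feature_map_size(height, width, layers=LAYERS):
    """Spatial size of the final feature map for an arbitrary input size."""
    for kernel, stride, padding in layers:
        height = conv_out(height, kernel, stride, padding)
        width = conv_out(width, kernel, stride, padding)
    return height, width


# The same layers (and the same weights) apply to both resolutions;
# only the size of the resulting feature map changes.
print(feature_map_size(224, 224))  # (56, 56)
print(feature_map_size(480, 640))  # (120, 160)
```

A fully connected layer placed after this stack would need a weight matrix sized for one specific feature map, such as 56 x 56, which is what ties a conventional network to a single input resolution.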

Pete Warden, lead of the TensorFlow Mobile/Embedded team at Google, said, “It's great to see Ceva adopting TensorFlow. Power efficiency is key to successfully harnessing the potential of deep learning in embedded devices. Ceva's low-power vision processors and CDNN2 framework could help a wide variety of developers get TensorFlow working on their devices.”

Google benefits from broad TensorFlow adoption: as the number of TensorFlow developers grows, so will the supply of chips that natively support TensorFlow. That ultimately gives Google the opportunity to buy cheaper TensorFlow-optimized chips for its datacenters, without having to build such chips from scratch itself (as it has already done).

Eran Briman, the vice president of marketing at Ceva, said that the company’s customers are already using its deep learning solutions to enhance the capabilities of drones, surveillance cameras and advanced driver assistance systems. The new CDNN2, combined with its support for TensorFlow, should improve the capabilities of the same types of products, but it will also open up the market for other types of products and services that can take advantage of deep learning. Those include augmented reality, virtual reality, and other similar computer vision applications.

The CDNN2 software library is highly modular and is provided as source code, as an extension to the company’s Application Developer Kit for the Ceva-XM4 chip. The library supports a variety of networks, including AlexNet, GoogLeNet, ResidualNet (ResNet), SegNet, VGG (VGG-19, VGG-16, VGG_S), Network-in-network (NIN) and others. CDNN2 also supports the most advanced neural network layers, including convolution, deconvolution, pooling, fully connected, softmax, concatenation and upsample, as well as various inception models.

One of the main features of CDNN2 is its offline Ceva Network Generator, which can convert a pre-trained network into one that is highly optimized for embedded devices with fixed-point math hardware. Usually only older or ultra-low-cost embedded chips lack a floating-point unit. According to Ceva, a network that’s optimized for these chips can be generated at the push of a button.
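Ceva has not detailed how the Network Generator performs this conversion, so the following is only a generic sketch of what a floating-point to fixed-point conversion can look like. It assumes a simple 16-bit signed format with a single scale shared by all values; the function names (`choose_frac_bits`, `quantize`, `fixed_dot`) are hypothetical and do not come from CDNN2.

```python
# Generic fixed-point sketch; NOT Ceva's Network Generator algorithm.
# Assumption: 16-bit signed integers with one shared number of fractional bits.

def choose_frac_bits(values, total_bits=16):
    """Pick the most fractional bits that still fit the largest magnitude."""
    limit = 2 ** (total_bits - 1) - 1
    max_abs = max(abs(v) for v in values)
    frac_bits = total_bits - 1
    while frac_bits > 0 and round(max_abs * (1 << frac_bits)) > limit:
        frac_bits -= 1
    return frac_bits


def quantize(values, frac_bits, total_bits=16):
    """Scale floats to integers, rounding and saturating to the integer range."""
    lo, hi = -(2 ** (total_bits - 1)), 2 ** (total_bits - 1) - 1
    scale = 1 << frac_bits
    return [max(lo, min(hi, round(v * scale))) for v in values]


def fixed_dot(q_weights, q_inputs, frac_bits):
    """Integer-only dot product; the result uses the same fixed-point format."""
    acc = sum(w * x for w, x in zip(q_weights, q_inputs))  # 2 * frac_bits wide
    return acc >> frac_bits  # shift back down to frac_bits fractional bits


weights = [0.5, -1.25, 0.75]   # made-up "pre-trained" weights
inputs = [0.5, 0.25, -1.0]     # made-up activations

frac_bits = choose_frac_bits(weights + inputs)
q_w = quantize(weights, frac_bits)
q_x = quantize(inputs, frac_bits)

result = fixed_dot(q_w, q_x, frac_bits)
print(frac_bits)                   # 14
print(result / (1 << frac_bits))   # -0.8125, same as the floating-point dot product
```

The values here were chosen so they are exactly representable; real weights would incur a small rounding error, and production tools typically pick scales per layer rather than globally.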

Developers interested in Ceva’s deep learning software framework can also get a developer board on which to run their network simulations in real time.

Lucian Armasu is a Contributing Writer for Tom's Hardware. You can follow him at @lucian_armasu.

Lucian Armasu is a Contributing Writer for Tom's Hardware US. He covers software news and the issues surrounding privacy and security.

stevenrix: CEVA Inc., not to be confused with CEVA Logistics. Maybe they should change their name.