Nvidia Explains How Flickr Uses Deep Learning To Auto-Tag Images

Flickr is using Deep Learning to auto-tag images with its feature called "Magic View," and Nvidia explains how.

Shortly after unveiling its latest updates for building bigger and faster neural nets, Nvidia has explained in a blog post how it helped Flickr build "Magic View," an auto-tagging feature that can sort through all 11 billion images on Flickr's servers.

Of course, the engineers at Nvidia know that there's nothing magic about Magic View, but to someone less familiar with the technology it can certainly seem that way, especially if you have some sense of how difficult certain computer algorithms are to create. It's easy to ask a human being what brand a certain car is, but programmers will think you're pulling their leg if you ask them to write a program that does the same thing: the software required is anything but simple.

Deep Learning solves that problem, though not in a particularly simple way. When it is fed many example images, Deep Learning software learns to recognize recurring patterns in their content, and it can then use those patterns to recognize similar things in other images. What the deep neural network (DNN) learns is stored as numbers: the network's internal weights, plus vectors that describe the features it finds in each image.
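
To make "learning patterns from examples" concrete, here is a minimal Python sketch. It is deliberately far simpler than the deep network Flickr uses: a single-layer logistic classifier trained by gradient descent. The two-number feature vectors (share of warm/orange pixels, share of blue pixels), the "sunset" tag and all the data are hypothetical stand-ins for what a DNN would learn on its own. The only point is that the decision rule comes from labeled examples rather than from hand-written rules.

```python
import math


def sigmoid(z: float) -> float:
    """Squash a raw score into a 0..1 confidence."""
    return 1.0 / (1.0 + math.exp(-z))


def train_logistic(samples, labels, lr=0.5, epochs=500):
    """Learn weights and a bias from labeled feature vectors via gradient descent."""
    weights = [0.0] * len(samples[0])
    bias = 0.0
    for _ in range(epochs):
        for x, y in zip(samples, labels):
            p = sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)
            error = p - y  # gradient of the log-loss with respect to the raw score
            weights = [w - lr * error * xi for w, xi in zip(weights, x)]
            bias -= lr * error
    return weights, bias


def predict(weights, bias, x):
    """Confidence that feature vector x carries the tag."""
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)


# Hypothetical features: (share of warm/orange pixels, share of blue pixels).
samples = [[0.9, 0.3], [0.8, 0.4], [0.85, 0.2], [0.1, 0.9], [0.2, 0.7], [0.15, 0.8]]
labels = [1, 1, 1, 0, 0, 0]  # 1 = "sunset", 0 = not a sunset

weights, bias = train_logistic(samples, labels)
print(predict(weights, bias, [0.86, 0.28]))  # high: resembles the sunset examples
print(predict(weights, bias, [0.12, 0.86]))  # low: resembles the non-sunset examples
```

A real DNN stacks many such layers and learns the features themselves from raw pixels, so nobody has to define "orange-ness" by hand.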

Essentially, you're letting the computer discover patterns by itself, in a way loosely inspired by how a biological brain works, rather than programming it to recognize predetermined patterns. Also note that Deep Learning can be applied not only to images, but also to speech recognition, object classification, translation, self-driving cars, and much more.

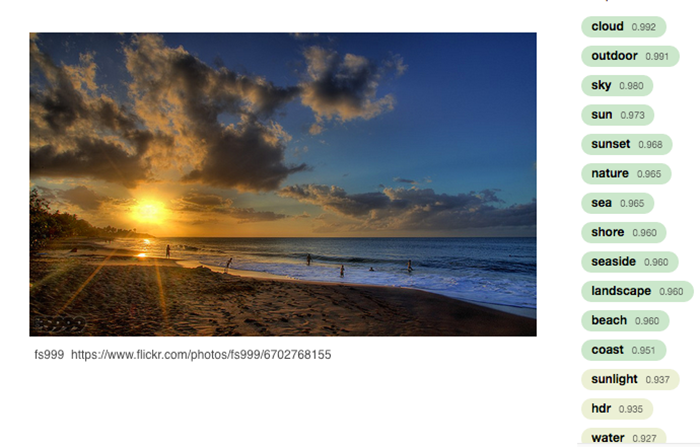

Flickr is using Deep Learning to classify its images, with the Magic View tool automatically tagging them based on their content. Tags include things like cloud, sunset, nature, ocean, car, dog: anything you can imagine, really. The tool should even be able to recognize some well-known landmarks. For example, if you take a picture of the Golden Gate Bridge, it should recognize the bridge and tell you that you're in San Francisco.

"We do have some exciting new image intelligence features — beyond simple auto-tagging — that we should be rolling out later this year," read an Nvidia blog post. "And for the auto-tagging system, we are continually expanding the repertoire of concepts our models are able to handle, as well as working to improve the accuracy and coverage of the concepts that we already use."

Because Flickr is using Deep Learning, its model can also keep learning beyond its original training. Every image that is tagged incorrectly and then fixed by hand goes back to the neural net and is used to train it further. Train it far enough, and perhaps one day Flickr will be able to tell you what country you're in based on the architecture of the houses in the background, or perhaps even which city or street.
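
The correction loop can be sketched as continued training: an existing model takes further gradient steps on images whose tags users have fixed. This is a hypothetical illustration, not Flickr's pipeline. `TagModel`, `fine_tune_on_corrections`, the starting weights and the single-tag logistic model are all assumptions made for the example, and a production system would more likely retrain in batches on large pools of corrected data.

```python
import math
from dataclasses import dataclass


@dataclass
class TagModel:
    """Hypothetical single-tag model: a logistic score over a feature vector."""
    weights: list[float]
    bias: float = 0.0
    lr: float = 0.5  # learning rate for each corrective step

    def score(self, x: list[float]) -> float:
        z = sum(w * xi for w, xi in zip(self.weights, x)) + self.bias
        return 1.0 / (1.0 + math.exp(-z))

    def update(self, x: list[float], y: int) -> None:
        # One gradient-descent step on the log-loss for a single example.
        error = self.score(x) - y
        self.weights = [w - self.lr * error * xi for w, xi in zip(self.weights, x)]
        self.bias -= self.lr * error


def fine_tune_on_corrections(model: TagModel, corrections, epochs: int = 50) -> TagModel:
    """Continue training an existing model on (features, corrected_label) pairs."""
    for _ in range(epochs):
        for x, corrected_label in corrections:
            model.update(x, corrected_label)
    return model


# Pretend the model was already trained; it wrongly tags this photo as "sunset".
model = TagModel(weights=[2.0, -2.0])
photo = [0.6, 0.4]
before = model.score(photo)

# A user removes the tag, so the corrected label is 0 (not a sunset).
fine_tune_on_corrections(model, [(photo, 0)])
after = model.score(photo)
print(f"score before correction: {before:.2f}, after: {after:.2f}")
```

Fine-tuning only on corrections, as here, can overcorrect; mixing corrected images back into the broader training set is the more cautious design.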

Nvidia pointed out one catch, though: there has to be a balance between precision and recall, meaning a trade-off between failing to tag an image with a label it deserves (lower recall) and labeling an image incorrectly (lower precision). Flickr could make the auto-tagging aggressive, so that it tries to apply labels to every image, but it would then get them wrong more often and require its users to correct it. User corrections do increase the overall accuracy of the DNN, and some input is needed to train the model for better future tagging, but Magic View shouldn't be wrong so often that users stop using it.
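
In practice, this trade-off usually comes down to a confidence threshold: a tag is applied only when the model's score for it clears the threshold. The sketch below uses made-up scores and ground truth (the `SCORES` and `TRUTHS` lists and the "ocean" tag are hypothetical) to show how lowering the threshold catches more real matches (higher recall) while letting in more wrong tags (lower precision).

```python
def precision_recall(scores, truths, threshold):
    """Precision and recall for one tag when it is applied at scores >= threshold."""
    predicted = [s >= threshold for s in scores]
    tp = sum(p and t for p, t in zip(predicted, truths))      # correct tags
    fp = sum(p and not t for p, t in zip(predicted, truths))  # wrong tags
    fn = sum(t and not p for p, t in zip(predicted, truths))  # missed tags
    # Convention for this sketch: with nothing predicted (or nothing to find), report 1.0.
    precision = tp / (tp + fp) if tp + fp else 1.0
    recall = tp / (tp + fn) if tp + fn else 1.0
    return precision, recall


# Hypothetical model scores for the tag "ocean" on seven photos,
# and whether each photo really shows an ocean.
SCORES = [0.95, 0.85, 0.70, 0.60, 0.40, 0.30, 0.20]
TRUTHS = [True, True, False, True, True, False, False]

for threshold in (0.9, 0.5, 0.25):
    p, r = precision_recall(SCORES, TRUTHS, threshold)
    print(f"threshold={threshold}: precision={p:.2f}, recall={r:.2f}")

# Prints:
# threshold=0.9: precision=1.00, recall=0.25
# threshold=0.5: precision=0.75, recall=0.75
# threshold=0.25: precision=0.67, recall=1.00
```

A cautious threshold (0.9) never tags wrongly here but misses three of the four ocean photos; an aggressive one (0.25) finds them all at the cost of two wrong tags, which users would then have to fix.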

Niels Broekhuijsen is a Contributing Writer for Tom's Hardware US. He reviews cases, water cooling and pc builds.