Athlon II Or Phenom II: Does Your CPU Need L3 Cache?

L3 Cache: How Important Is It To AMD?

It makes sense to equip multi-core processors with dedicated memory that is shared by all available cores. In this role, a fast third-level cache (L3) can accelerate access to frequently needed data. Wherever possible, cores should avoid falling back to the slower main memory (RAM).

That’s the theory, at least. AMD’s recent launch of the Athlon II X4, which is fundamentally a Phenom II X4 without the L3, implies that the tertiary cache may not always be necessary. We decided to run an apples-to-apples comparison of both options to find out.

How Cache Works

Before diving deeper into our tests, it’s important to understand some basics. The principle behind caches is rather simple. They buffer data as close as possible to the processing core(s) so that the CPU doesn’t have to fetch it from more distant, slower memory. Today’s desktop cache hierarchies consist of three cache levels that sit in front of system memory. The second and especially the third levels aren’t just for data buffering. Their purpose is also to keep unnecessary data exchange traffic between cores from choking the CPU bus.

Cache Hit/Miss

The effectiveness of a cache architecture is measured by its hit rate. Data requests that can be answered within a given cache are referred to as hits. If that cache doesn’t contain the sought data and must pass the request on to subsequent memory structures, this is a miss. Obviously, misses are slow. They lead to stalls in the execution pipeline and introduce wait periods. Hits, on the other hand, help sustain maximum performance.
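To make the cost of misses concrete, here is a minimal Python sketch that estimates the average number of cycles per memory access for a cache hierarchy. The hit rates and latencies are made-up illustrative values, not measurements of any Athlon II or Phenom II, and the function name `average_access_time` is our own.

```python
def average_access_time(levels, memory_latency):
    """Return the expected cycles per access for a cache hierarchy.

    levels: list of (hit_rate, latency_cycles), ordered from L1 outward.
    Each hit_rate is local: the fraction of the requests reaching that
    level which the level can answer.
    """
    expected = 0.0
    reach = 1.0  # fraction of all requests that get as far as this level
    for hit_rate, latency in levels:
        expected += reach * latency  # every request reaching a level pays its lookup
        reach *= 1.0 - hit_rate      # only the misses travel further out
    return expected + reach * memory_latency  # what is left goes to RAM


# Hypothetical numbers, chosen only for illustration.
L1 = (0.95, 3)
L2 = (0.80, 15)
L3 = (0.50, 50)
RAM_LATENCY = 200

with_l3 = average_access_time([L1, L2, L3], RAM_LATENCY)
without_l3 = average_access_time([L1, L2], RAM_LATENCY)
print(with_l3, without_l3)
```

With these assumed values the hierarchy with an L3 averages about 5.25 cycles per access, against about 5.75 without it. How large that gap is in practice depends entirely on how often real workloads miss in L2, which is what the benchmarks have to show.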

Cache Writes, Exclusivity, Coherency

Replacement policies dictate how room is created in a full cache for new cache entries. Since data written into a cache eventually has to reach main memory as well, systems can either write to memory at the same time (write-through) or mark the modified cache lines as “dirty” (write-back) and perform the memory write only once the line is evicted from the cache.
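The difference between the two write policies can be sketched with a toy model. The Python below counts how many writes reach main memory for a sequence of stores, using a tiny fully associative cache with least-recently-used (LRU) replacement. The cache size, the LRU choice, and the address trace are assumptions made for illustration; real processors are far more elaborate.

```python
from collections import OrderedDict


def memory_writes(stores, policy, capacity=2):
    """Count writes reaching main memory for a sequence of store addresses.

    policy is "write-through" or "write-back"; capacity is in cache lines.
    """
    cache = OrderedDict()  # address -> dirty flag, least recently used first
    writes = 0
    for addr in stores:
        if addr in cache:
            cache.move_to_end(addr)  # refresh LRU position on a hit
        elif len(cache) >= capacity:
            _, dirty = cache.popitem(last=False)  # evict the LRU line
            if dirty:
                writes += 1  # write-back: memory is updated only now
        if policy == "write-through":
            cache[addr] = False  # line is never dirty
            writes += 1          # every store goes to memory immediately
        else:
            cache[addr] = True   # mark the line dirty, defer the write
    # Dirty lines still in the cache must eventually be written out too.
    return writes + sum(cache.values())


stores = [0, 0, 0, 1, 1, 2, 0]  # hypothetical store addresses
print(memory_writes(stores, "write-through"))  # 7
print(memory_writes(stores, "write-back"))     # 4
```

Repeated stores to the same line are where write-back saves memory traffic: the three stores to address 0 at the start of the trace cost one memory write instead of three.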

Data on several levels of cache can be stored exclusively, meaning that no redundancy exists. You won’t find the same piece of data in two different cache structures. Alternatively, caches can operate in an inclusive manner, with the lower levels guaranteed to hold the data found in the higher levels of cache (those closer to the processor). AMD’s Phenom works with an exclusive L3 cache, while Intel follows the inclusive cache strategy. Coherency protocols take care of keeping data consistent across multiple levels, cores, and even processors.
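One practical consequence of the two strategies is how much unique data the hierarchy can hold. The following Python sketch is a simplified approximation that ignores L1 and assumes the shared L3 is at least as large as all private L2 caches combined. The cache sizes in the example are hypothetical and the function name is our own.

```python
def unique_capacity_kb(l2_kb_per_core, l3_kb, cores, policy):
    """Approximate the unique data (in KB) held by private L2s plus a shared L3."""
    private_total = l2_kb_per_core * cores
    if policy == "exclusive":
        # No line is stored twice, so the capacities simply add up.
        return private_total + l3_kb
    if policy == "inclusive":
        # The L3 duplicates whatever the L2s hold, so it sets the limit.
        return l3_kb
    raise ValueError("policy must be 'exclusive' or 'inclusive'")


# Hypothetical quad-core with 512 KB of L2 per core and 6144 KB of shared L3.
print(unique_capacity_kb(512, 6144, 4, "exclusive"))  # 8192
print(unique_capacity_kb(512, 6144, 4, "inclusive"))  # 6144
```

The exclusive design gets more effective capacity out of the same silicon, while the inclusive design can answer "is this line cached anywhere on the chip?" by checking the L3 alone.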

Cache Capacity

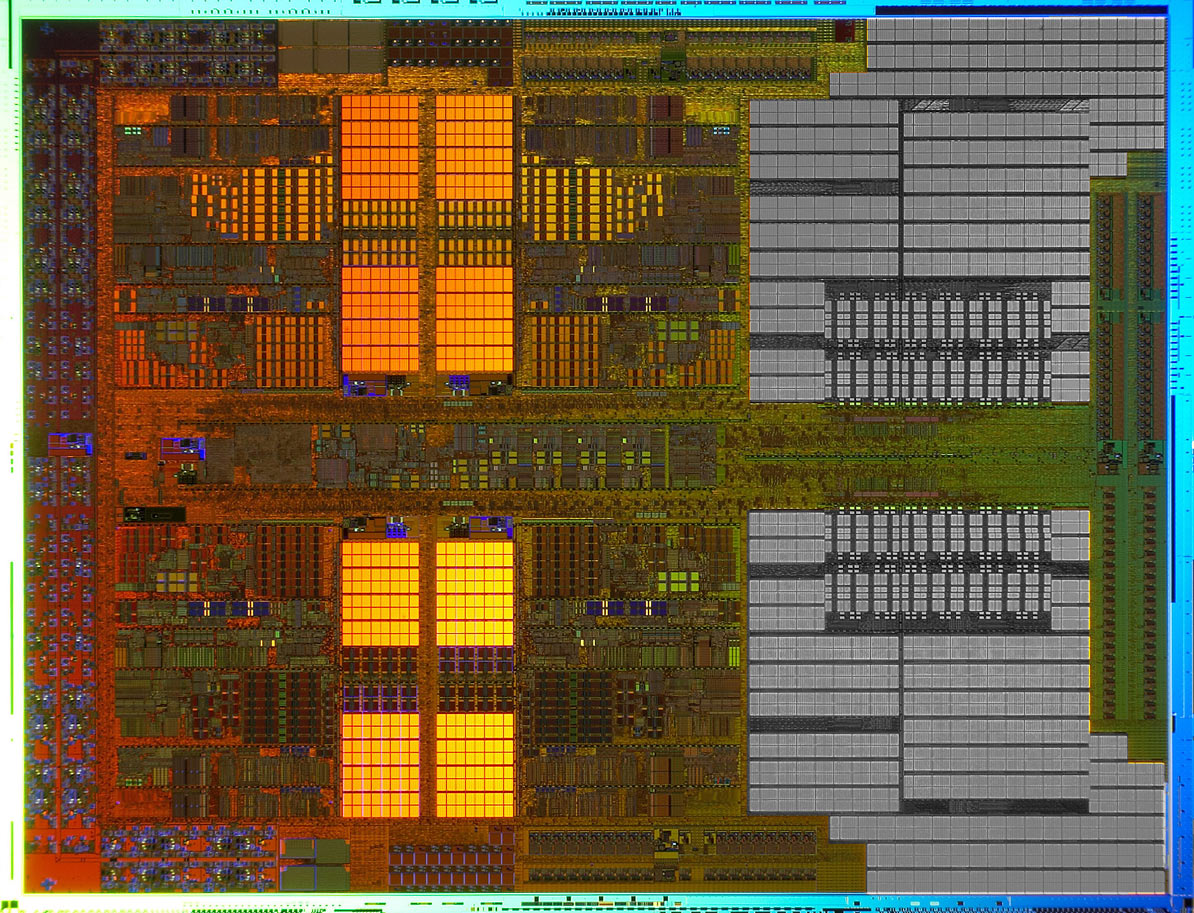

Larger caches can buffer more data, but they also tend to introduce higher latency. Since cache also consumes large amounts of a processor’s transistors, it is important to find a viable balance between transistor cost and die size, power consumption, and performance/latency issues.

Associativity

Caches can either be direct-mapped, meaning that each main memory location can be copied to only one position in the cache, or they may be n-way associative, which means there are n possible positions in the cache for each memory location. Higher associativity (up to fully associative caches) provides the best caching flexibility because existing cache data is less likely to be overwritten by new data competing for the same position. In other words, high n-way associativity tends to yield higher hit rates, but it introduces more latency, since it takes more time to compare all of those candidate positions for hits. Ultimately, it makes sense to implement many-way associativity for the last cache level because it has the most capacity available, and a miss there sends the processor out to slower system memory.
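The effect of associativity on hit rate is easy to demonstrate with a small simulation. The Python sketch below models a set-associative cache with LRU replacement. The 64-byte line size, the LRU policy, and the eight-line cache in the example are assumptions chosen to keep the numbers small, not a description of any real CPU.

```python
from collections import OrderedDict


def hit_rate(addresses, num_lines, ways, line_size=64):
    """Simulate an LRU set-associative cache and return its hit rate.

    ways=1 is direct-mapped; ways=num_lines is fully associative.
    """
    num_sets = num_lines // ways
    sets = [OrderedDict() for _ in range(num_sets)]  # one LRU list per set
    hits = 0
    for addr in addresses:
        block = addr // line_size   # which memory line is being accessed
        index = block % num_sets    # the set this line must go into
        tag = block // num_sets     # identifies the line within its set
        lines = sets[index]
        if tag in lines:
            hits += 1
            lines.move_to_end(tag)  # mark as most recently used
        else:
            if len(lines) >= ways:
                lines.popitem(last=False)  # evict the least recently used line
            lines[tag] = True
    return hits / len(addresses)


# Two addresses that land on the same position in a direct-mapped cache.
trace = [0, 512] * 10
print(hit_rate(trace, num_lines=8, ways=1))  # 0.0
print(hit_rate(trace, num_lines=8, ways=2))  # 0.9
```

In the direct-mapped case the two addresses keep evicting each other, so every access misses. With two ways both lines fit into the same set, and only the first two accesses miss.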

Here are some examples: The Core i5 and i7 work with 32KB of 8-way associative L1 data cache and 32KB of 4-way associative L1 instruction cache. Clearly, Intel wants instructions to be available quicker while also maximizing hits on the L1 data cache. Its L2 cache is also 8-way set-associative, while Intel’s L3 cache is even smarter, implementing 16-way associativity to maximize cache hits.

However, AMD follows another strategy on the Phenom II X4 with a 2-way set-associative L1 cache, which offers lower latencies. To compensate for possible misses, it features twice the memory capacity: 64KB data and 64KB instruction cache. The L2 cache is 8-way set-associative, like Intel’s design, but AMD’s L3 cache works at 48-way set associativity. None of this can be judged without looking at the entire CPU architecture. Naturally, only the benchmark results really count, but the whole purpose of this technical excursion is to provide a look into the complexity behind multi-level caching.
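To see what these associativity figures mean for the cache layout, the number of sets follows from capacity divided by (ways × line size). The Python sketch below applies that to the L1 figures quoted above. The 64-byte cache line is an assumption on our part, since line size isn’t stated here.

```python
def num_sets(capacity_kb, ways, line_size=64):
    """Return the number of sets in a set-associative cache.

    line_size is in bytes; 64 is assumed here, not taken from the article.
    """
    return capacity_kb * 1024 // (ways * line_size)


print(num_sets(32, 8))  # Intel L1 data cache, 8-way: 64
print(num_sets(32, 4))  # Intel L1 instruction cache, 4-way: 128
print(num_sets(64, 2))  # AMD L1 cache, 2-way: 512
```

Under that assumption, AMD’s L1 has many sets with only two candidate positions each, so a lookup compares very few tags, while Intel’s L1 data cache has fewer sets but eight positions to check per lookup.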
