GeForce GTX 570 Review: Hitting $349 With Nvidia's GF110

A month ago, Nvidia launched its GeForce GTX 580, and it was everything we wanted back in March. Now the company is introducing the GeForce GTX 570, also based on its GF110. Is it fast enough to make us forget the GF100-based 400-series ever existed?

Power Consumption And Noise

Power Consumption

My power numbers caused quite a splash in the GeForce GTX 580 review. Nvidia made changes to its power circuitry to protect against overloading the voltage regulation. Incidentally, AMD made similar changes when it launched the Radeon HD 5870; Nvidia's leash is just a little bit tighter, likely out of necessity given its more power-hungry GPU.

As a result, “power bugs” (AMD’s term, not mine) like FurMark now trigger throttling that keeps the card from being damaged. That was fine with me: the figure FurMark spits out isn’t particularly meaningful anyway, aside from its ramifications as an absolute worst case. Nevertheless, some sites tinkered with the app until they found settings that ducked in under Nvidia’s trigger. Apparently, just because FurMark could be run, plenty of readers still thought it should be.

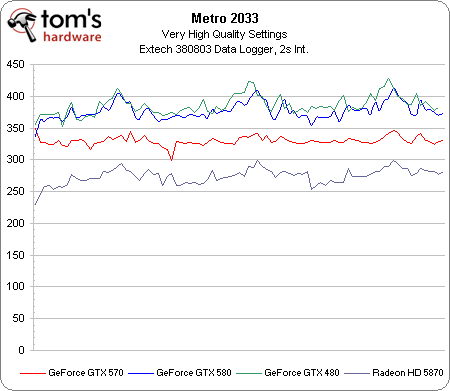

Logging power use in real-world games is far more meaningful, though. It’s an actual result, the Crysis to 3DMark, and it’s what we care about: what really happens when you’re gaming. So we once again cranked up the settings in Metro 2033 and put as much stress on these cards as possible in a three-run pass through the built-in benchmark. The result is a telling chart of power samples taken every two seconds, along with an average power figure.
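The averaging step is simple arithmetic. Below is a minimal sketch, assuming the logger produces one wall-power reading in watts every two seconds; the helper name `summarize_power` and the sample values are hypothetical illustrations, not our measured data.

```python
from statistics import mean

SAMPLE_INTERVAL_S = 2.0  # one reading every two seconds, as described above

def summarize_power(samples_w, interval_s=SAMPLE_INTERVAL_S):
    """Hypothetical helper: return (average watts, logged duration in seconds).

    Assumes evenly spaced samples, so a plain arithmetic mean equals the
    time-weighted average power over the run.
    """
    if not samples_w:
        raise ValueError("at least one power sample is required")
    average_w = mean(samples_w)
    duration_s = len(samples_w) * interval_s
    return average_w, duration_s

# Made-up readings for illustration only; these are not results from the chart.
avg_w, secs = summarize_power([320.0, 335.0, 330.0, 334.0])
print(f"{avg_w:.2f} W average over {secs:.0f} s")  # 329.75 W average over 8 s
```

Because the samples are evenly spaced, no time weighting is needed; an uneven logger would call for weighting each reading by its interval.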

| Graphics Card | Average System Power Consumption |
|---|---|
| Nvidia GeForce GTX 570 1.25 GB | 329.78 W |
| Nvidia GeForce GTX 580 1.5 GB | 376.51 W |
| Nvidia GeForce GTX 480 1.5 GB | 385.70 W |
| AMD Radeon HD 5870 1 GB | 274.14 W |

With the GeForce GTX 580, we know that Nvidia used the GeForce GTX 480’s TDP as its ceiling, so it’s no surprise that the GTX 480 and GTX 580 run very close together. In fact, the GTX 580 averages 9 W less than the GTX 480 across the test (376 W system power versus 385 W). The GeForce GTX 570 drops that average to 329 W, 47 W below the GTX 580. That’s nearly twice the 25 W gap Nvidia’s TDP specs put between the two boards. AMD’s Radeon HD 5870 is most definitely a slower graphics card, but it also posts impressive power figures, averaging just 274 W of system power.
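The gaps quoted above come straight from the table. Here is a quick check, using the table’s averages and the 25 W TDP difference cited in the text; keep in mind these are whole-system figures, not board-only power.

```python
# Average system power (W) in Metro 2033, from the table above.
avg_system_w = {
    "GTX 570": 329.78,
    "GTX 580": 376.51,
    "GTX 480": 385.70,
}
TDP_GAP_W = 25.0  # GTX 580 vs. GTX 570 TDP difference cited in the text

gap_480_vs_580 = avg_system_w["GTX 480"] - avg_system_w["GTX 580"]
gap_580_vs_570 = avg_system_w["GTX 580"] - avg_system_w["GTX 570"]

print(f"GTX 480 vs. GTX 580: {gap_480_vs_580:.2f} W")
print(f"GTX 580 vs. GTX 570: {gap_580_vs_570:.2f} W, "
      f"{gap_580_vs_570 / TDP_GAP_W:.2f}x the TDP gap")
```

This prints gaps of 9.19 W and 46.73 W, and a ratio of about 1.87 between the measured GTX 580/570 difference and the TDP difference.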

Noise

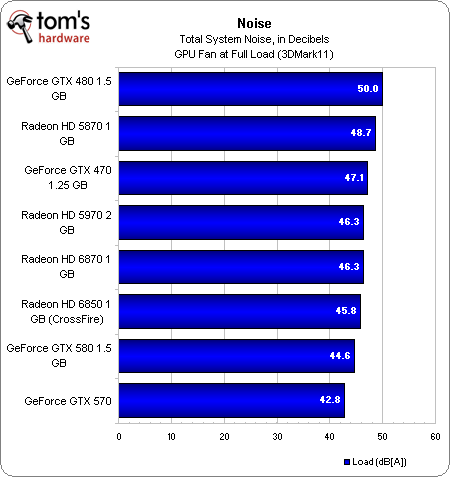

Readers also asked for noise measurements in the GTX 580 story. So I fired up my Extech 407768 sound level meter, placed it 12 inches from the back of our test machine to keep the registered output within the meter's range, and measured each of the cards from that story, plus this one.


It's hardly a surprise to see the GeForce GTX 480 topping our load charts after half an hour of loops in 3DMark 11 (I took the measurement during Game Test 2 for each contender). We also expected the GeForce GTX 470 to be one of the louder cards here, and it lands in third place.

It's more surprising to see the Radeon HD 5870 just below the GTX 480, but perhaps our original launch sample from more than a year ago is getting a little long in the tooth. Also interesting is that the very hot Radeon HD 5970 and the Radeon HD 6870 appear to be equally loud.

What I really like to see is that a pair of Radeon HD 6850 cards in CrossFire makes less noise than a single 6870. Noise was my biggest reservation about recommending a CrossFire configuration, especially one with the cards sitting back to back, without a slot's worth of space between them. As it turns out, AMD's latest cards not only deliver impressive performance at a reasonable price, but they also do it elegantly (aside from the four slots they consume).
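To put that result in perspective: sound levels from independent sources add on a logarithmic scale, so two identical cards should read roughly 3 dB louder than one. The sketch below treats each card as an uncorrelated noise source, which is a simplification (the meter also hears the rest of the system), and uses a hypothetical 40 dB(A) level rather than any value from the chart.

```python
import math

def combine_db(levels_db):
    """Combine sound pressure levels (dB) from uncorrelated sources.

    Each level is converted to relative acoustic power, the powers are
    summed, and the total is converted back to decibels.
    """
    total = sum(10 ** (level / 10) for level in levels_db)
    return 10 * math.log10(total)

# Two identical hypothetical cards at 40 dB(A) each.
print(f"{combine_db([40.0, 40.0]):.2f} dB")  # 43.01 dB
```

Under that simplified model, for a CrossFire pair to come in under a single card, each card in the pair has to be roughly 3 dB quieter than that single card.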

But the big news is Nvidia's GeForce GTX 500 series. The changes Nvidia made to the heatsink, fan, and fan-ramping algorithm make a massive difference on the GeForce GTX 580. So when the company decided to use the same solution on its GeForce GTX 570 reference design, it was only natural for the acoustics to get even more attractive.

I liken this to Toyota's JZ engine. The cast-iron block was built for the twin-turbocharged 2JZ-GTE, which makes it overbuilt for its role in the naturally aspirated 2JZ-GE. Likewise, the changes Nvidia made for the uncut GF110 seem like overkill on the GTX 570. We'll take them, though, along with the cooler temperatures and quieter acoustics they bring. Bear in mind that this applies only to the reference heatsink and fan combo. Should add-in board partners deviate from Nvidia's implementation to save money or differentiate in some other way, these numbers will of course change.



thearm: Grrrr... Every time I see these benchmarks, I'm hoping Nvidia has taken the lead. They'll come back. It's alllll a cycle.

xurwin: At $350 and beating the 6850s in CrossFire? I COULD say this would be a pretty good deal, but why no 6870s in CrossFire? Still, the margin is narrow; if you need CUDA, this would be a pretty sweet deal. I'd also wait for the 6900s, but for now, we have a winner?

sstym (replying to thearm):

There is no need to root for either one. What you really want is a healthy and competitive Nvidia to drive prices down. With Intel shutting them out of the chipset market and AMD beating them on their own turf with the 5XXX cards, the future looked grim for Nvidia.

It looks like they've still got it, and that's what counts for consumers. Let's leave fanboyism to 12-year-old console owners.

nevertell: It's disappointing to see the freaky power/temperature behavior of the card when using two different displays. I was planning on a display setup similar to the one in the test; now I'm having doubts.

reggieray: I always wonder why they use the overpriced Ultimate edition of Windows. I understand going 64-bit because of memory, and that's what I bought, but I purchased the OEM Home Premium and saved some cash. For games, Ultimate adds no extra value.

Or am I missing something?

theholylancer: hmmm, more sexual innuendo today than usual, new GF there, Chris? :D

EDIT:

Love this gem:

Before we shift away from HAWX 2 and onto another bit of laboratory drama, let me just say that Ubisoft’s mechanism for playing this game is perhaps the most invasive I’ve ever seen. If you’re going to require your customers to log in to a service every time they play a game, at least make that service somewhat responsive. Waiting a minute to authenticate over a 24 Mb/s connection is ridiculous, as is waiting another 45 seconds once the game shuts down for a sync. Ubi’s own version of Steam, this is not.

When a reviewer, not of your game but of hardware tested with your game, comments on how bad your DRM is, you know it's time to stop doing that, or to expect people to be warned to get your game elsewhere.