Far Cry 3 Performance, Benchmarked

The third installment of the Far Cry series is more impressive as a game (to us, at least) than its predecessor. But do you need really powerful hardware to enjoy it? We benchmark 15 different graphics cards and eight different CPUs to find out.

Image Quality And Settings

Far Cry 3 is powered by the Dunia 2 engine, a heavily modified version of Crytek's CryEngine. The game looks fantastic, and, more than anything else, it reminds me of the original Crysis (a testament to how far ahead of its time that game was back in 2007). Some of Far Cry 3's visual elements may be superior to those of the original Crysis, though the environment doesn't appear to be quite as detailed (this is subjective; I haven't played the original Crysis in a long time). No matter what, though, Far Cry 3 looks amazing. My one nitpick is that some of the animal animations seem to halt abruptly and unnaturally as they transition.

Far Cry 3 employs an advanced light culling system that reduces the overhead of shading many lights, some of which affect only part of a scene. The title also optimizes multisample anti-aliasing by applying the feature only to "important" parts of the image, purportedly without sacrificing image quality. DirectCompute-accelerated HDAO and Direct3D 11 TSAA further improve the game's visuals.
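The article doesn't say how Dunia 2 implements its light culling. As a rough illustration of the general idea, here is a minimal sketch of one common approach, tiled light culling: the screen is split into tiles, and each tile keeps a list of only the lights whose area of influence touches it, so a pixel shades just the lights in its tile's list rather than every light in the scene. It assumes each light has already been projected to a screen-space circle; the names (`Light`, `cull_lights`) and the 16-pixel tile size are illustrative assumptions, not the engine's.

```python
from dataclasses import dataclass


@dataclass
class Light:
    """A light already projected to screen space (an assumed input format)."""
    x: float       # center, in pixels
    y: float
    radius: float  # radius of influence, in pixels


def circle_hits_rect(cx, cy, r, x0, y0, x1, y1):
    # Clamp the circle's center to the rectangle to find the closest point,
    # then check whether that point lies within the radius.
    nx = min(max(cx, x0), x1)
    ny = min(max(cy, y0), y1)
    return (cx - nx) ** 2 + (cy - ny) ** 2 <= r * r


def cull_lights(lights, width, height, tile=16):
    """Return a tiles_y x tiles_x grid; each cell lists indices of lights touching that tile."""
    tiles_x = (width + tile - 1) // tile
    tiles_y = (height + tile - 1) // tile
    bins = [[[] for _ in range(tiles_x)] for _ in range(tiles_y)]
    for i, light in enumerate(lights):
        # Visit only the tiles inside the light's bounding box, clamped to the screen.
        tx0 = max(0, int((light.x - light.radius) // tile))
        tx1 = min(tiles_x - 1, int((light.x + light.radius) // tile))
        ty0 = max(0, int((light.y - light.radius) // tile))
        ty1 = min(tiles_y - 1, int((light.y + light.radius) // tile))
        for ty in range(ty0, ty1 + 1):
            for tx in range(tx0, tx1 + 1):
                x0, y0 = tx * tile, ty * tile
                x1, y1 = min(x0 + tile, width), min(y0 + tile, height)
                if circle_hits_rect(light.x, light.y, light.radius, x0, y0, x1, y1):
                    bins[ty][tx].append(i)
    return bins
```

For example, on a 64 x 64 screen with 16-pixel tiles, `cull_lights([Light(8, 8, 4), Light(40, 40, 2)], 64, 64)` puts each small light in exactly one of the 16 tiles, so every other tile shades zero lights. A real renderer would typically do this per frame on the GPU (for example, in a compute shader) and also test each tile's depth range, which this sketch omits.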

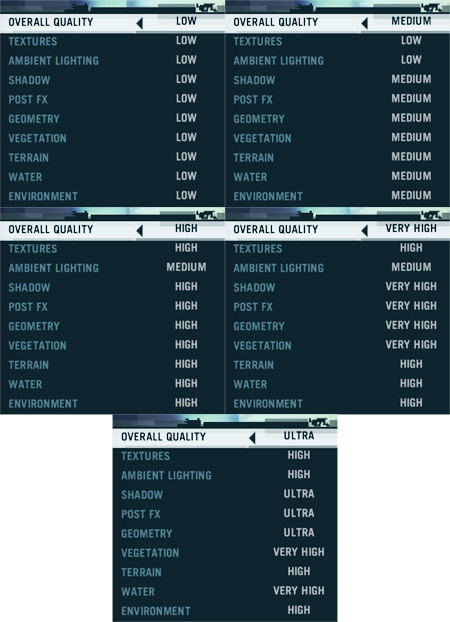

The game has five detail presets, but individual settings can be customized as you see fit. What follows are the options you see when you specify Low, Medium, High, Very High, and Ultra on the Overall Quality menu.

The changes between each detail level are often subtle. However, there are gradual improvements in shadow, geometry, and lighting quality as you go from one end of the spectrum to the other.

One thing you can't see in these screenshots is the LOD transition that happens as you approach objects. The developer chose a pixelated fade between levels of detail, rather than a softer transparency fade (perhaps to help performance?), and it's painfully obvious at times. The following screenshot captures the effect as it happens.

The good news is that this artifact is less apparent at higher detail settings because the transition happens further away from the camera. If you're using the Medium or Low preset, though, you're going to have to live with it.
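The game's actual fade code isn't documented here, but the behavior described above matches a generic "screen-door" (ordered-dither) LOD fade. The minimal sketch below assumes that technique: a transition progress value is computed from camera distance, and each pixel picks the low- or high-detail model by comparing that progress with a threshold from a 4 x 4 Bayer matrix, which produces the blocky, pixelated pattern instead of a smooth blend. The `LOD_DISTANCE_SCALE` table and its values are hypothetical, included only to show how a higher preset pushes the switch point further from the camera.

```python
# 4 x 4 ordered-dither (Bayer) matrix; each value 0..15 appears exactly once.
BAYER_4X4 = [
    [0, 8, 2, 10],
    [12, 4, 14, 6],
    [3, 11, 1, 9],
    [15, 7, 13, 5],
]

# Hypothetical per-preset multipliers on the LOD switch distance (not the game's values).
LOD_DISTANCE_SCALE = {"Low": 0.5, "Medium": 0.75, "High": 1.0, "Very High": 1.25, "Ultra": 1.5}


def transition_progress(distance, base_switch, fade_width, preset):
    """0.0 = fully high-detail LOD, 1.0 = fully low-detail LOD."""
    switch = base_switch * LOD_DISTANCE_SCALE[preset]
    start = switch - fade_width / 2  # the fade is centered on the switch distance
    t = (distance - start) / fade_width
    return min(max(t, 0.0), 1.0)


def uses_low_lod(px, py, progress):
    """Screen-door test: this pixel shows the low-detail LOD if its dither threshold is below progress."""
    threshold = (BAYER_4X4[py % 4][px % 4] + 0.5) / 16
    return threshold < progress
```

With `base_switch=100` and `fade_width=10`, an object 100 units away is halfway through its transition on the High preset (half of each 4 x 4 pixel block shows the low-detail model), while on Ultra the same object hasn't started fading yet and on Low it has already fully switched. Because each pixel is either fully one model or the other, no alpha blending or depth sorting is needed, which is one plausible reason to prefer this over a transparency fade.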



Don Woligroski is a former senior hardware editor for Tom's Hardware. He has covered a wide range of PC hardware topics, including CPUs, GPUs, system building, and emerging technologies.

-

sugetsu "The good news for folks with Piledriver-based processors is that the FX-8350 is nearly as quick as Intel's Core i3-2100 (never mind the fact that the Core i3 costs $90 less)."

My God... Are the reviewers of this website paid to make AMD look bad? Any person with a minimum hint of common sense can clearly see that there is virtually no difference between FX 8350, the i3, the i5 and i7. This is a big disservice to the community.

rdc85 :D

I think it reads like this:

"The good news for folks with Piledriver-based processors is that the FX-8350 is nearly as quick as Intel's Core i7-3960X (never mind the fact that the Core i7 costs more than $500..). "

hehe....

Anyway, good review...

Tom Burnqest (replying to sugetsu): LOL, truthed! I bet that the 8350, when overclocked, can even close the tiny gap between it and the Intel processors. Can the i3 be overclocked? I don't think so.

echondo Why did the benchmark go from Medium straight to Ultra? Why not High settings? Now I don't know how well my 7870 will do on High at 1080p. It does pretty well at Medium, but then gets destroyed with everything else on Ultra/high resolution.

Why no middle ground? And why no 7970/680 tests in Crossfire/SLI? Why use single flagship cards, but then only use SLI/Crossfire for the medium bunch?

I'm very glad to see that this game uses CrossFire/SLI effectively: a ~50% increase in performance for dual-GPU configurations.

EzioAs I've heard that FC3 was a demanding game, but I never realized that Ultra settings were SUPER demanding. Anyway, I've heard a lot of good things about this game; maybe I'll give it a try.

Thanks, Don, for the great review, as always.

Heironious Two 2GB Galaxy GTX 560s in SLI with everything maxed in game and in the control panel give around 35 FPS on average (only 4x MSAA, though). Ran the cards to 78, which is fine. Turned it down in the NVIDIA control panel to get steadier frames. Not the best-looking game you've seen? I think it looks better than even BF3.

Edit: These still screenshots don't do it justice.

sayantan This game can be really demanding on the CPU, depending on the environment. In a firefight that involves flamethrowers and explosions along with some AIs, you can see the frame rate drop from 60 to 40 in no time. Also, I would like to mention that the game stutters like hell at anything below 60 fps. Even 57-58 fps is unplayable and gives me a headache. So it is essential to tweak the settings so that the frame rate stays above 60 most of the time. The good thing is that if you have a decent system, you can maintain 60 fps without losing too much visual fidelity. I can run the game at 0x AA @ 1080p with all other details maxed out using an overclocked 7970 (1060, 1575) and a 2500K (4.0 GHz).


sayantan (replying to sugetsu and rdc85): The good thing is that the game doesn't scale up with Intel CPUs, making the 8350 look really good in comparison.


sharpies (replying to sugetsu): Dude, the writer is only trying to point out that using a dual-core i3 makes more sense than using the eight-core FX-8350. And BTW, it's common sense that the latest games don't even benefit from so many cores. Stop moaning about whether or not the writer is an Intel fanboy because AMD performed well in the GPU section.