Microsoft Surface Review, Part 1: Performance And Display Quality

Web Performance: SunSpider, V8, And BrowsingBench

We searched far and wide for benchmarks that run under Windows RT. But as a result of Microsoft's strict usage guidelines, there simply aren't any cross-platform tests available for comparing the Surface to other ARM-based tablets.

Everything we know about how Tegra 3 fares against competing SoCs comes from comparing Android- and iOS-based devices. We've seen the same processor perform differently under different operating environments, though. Right now, browser-based tests are the only way to quantify the performance of a Windows RT-based device compared to other tablets.

Unfortunately, that's not even a perfect solution, since browser support varies based on operating system. Safari is the default on iOS-based devices, and we saw a number of examples of it outperforming its competition in "Which Browser Should You Be Running On Your iPad And iPhone?" Meanwhile, the Jelly Bean update to Android makes Chrome the default on Google's Nexus 7. With the Surface, we’re dealing with IE10, complicating the comparison further. Even though we can’t standardize on a single browser, using each device's default is more appropriate for measuring device performance anyway.

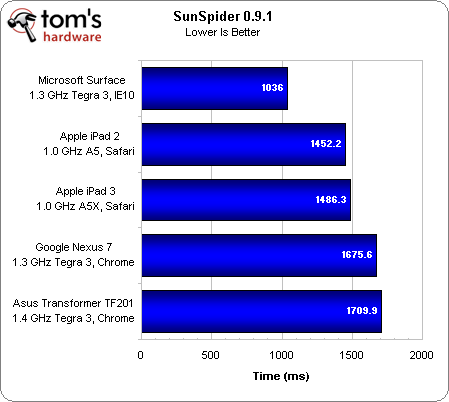

SunSpider is a browser-based JavaScript benchmark that measures screen drawing, text manipulation, and encryption. We test JavaScript performance regularly as part of our Web Browser Grand Prix series, which is why these results are a bit surprising. (Ed.: Actually, they shouldn't be. Our first look at RoboHornet, in "RoboHornet: The Next Big Thing In Browser Benchmarking," showed IE10 doing really, really well.) We already know IE9 to be slower than Chrome on Windows 7, so IE10 seems to be a major upgrade. The combination of Microsoft’s latest Web browser and Windows RT turns out to be quite a bit faster than Chrome on Android 4.1. JavaScript performance is also better on the Surface than on the iPad 2 or third-gen iPad.

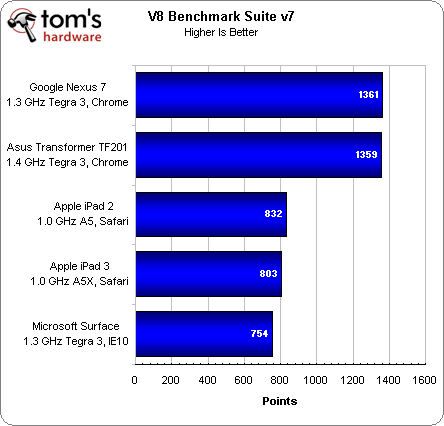

The V8 Benchmark Suite was created by Google specifically to test the runtime performance of JavaScript in Chrome, so it's hardly surprising to see the Nexus 7 and Transformer TF201 in the lead. Because Microsoft won’t allow browsers other than Internet Explorer to run under Windows RT, though, it'd be difficult to remove the browser as a variable in this test.

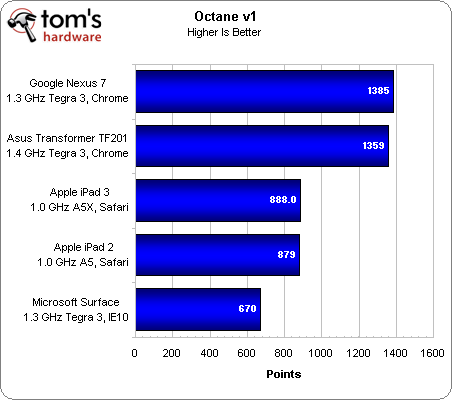

Octane is Google's newest JavaScript-based benchmark. It incorporates the eight original tests from V8 along with five additional tests that focus on runtime performance. Interestingly, the Surface fares worse than it did in V8. But, given the source of this test, we're not prepared to draw any conclusions from it.

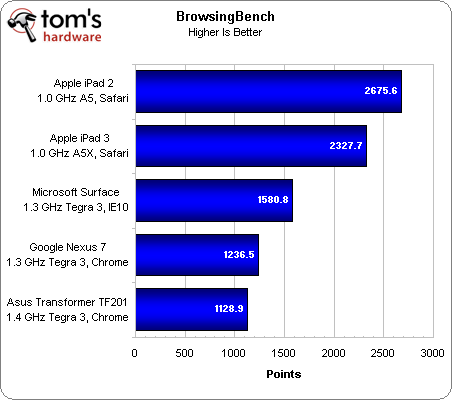

BrowsingBench was created by EEMBC, also known as the Embedded Microprocessor Benchmark Consortium. It's a non-profit organization tasked with developing testing methodology, specifically for embedded hardware. We've been playing around with this tool in the lab, and we love it. While it's meant for testing "smartphones, netbooks, portable gaming devices, navigation devices, and IP set-top boxes," it's just as applicable for measuring browser performance in general.


Unlike SunSpider or V8, BrowsingBench evaluates the total performance of a browser: page loading, processing, rendering, compositing, and so on. This helps reflect real-world use, unlike a single JavaScript-based metric. Frankly, these results are more representative of our own subjective experience. Both iPads share similar processing hardware, and yet Apple's second-generation tablet gets the win. Resolution plays a big part in the rendering workload, and at 2048x1536, more is asked of the third-gen iPad than the iPad 2 at 1024x768.
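To put that resolution gap in numbers, here is a quick back-of-the-envelope sketch. It only multiplies out the two resolutions quoted above; the function name is ours, and raw pixel count is a rough proxy for rendering workload, not a figure that BrowsingBench itself reports.

```python
def pixel_count(width: int, height: int) -> int:
    """Return the number of pixels in a width x height display."""
    return width * height


# Resolutions quoted in the text above.
ipad_3_pixels = pixel_count(2048, 1536)
ipad_2_pixels = pixel_count(1024, 768)

# Ratio of pixels the third-gen iPad has to fill relative to the iPad 2.
pixel_ratio = ipad_3_pixels / ipad_2_pixels

print(f"Third-gen iPad: {ipad_3_pixels:,} pixels")
print(f"iPad 2:         {ipad_2_pixels:,} pixels")
print(f"Ratio:          {pixel_ratio:.1f}x")
```

Doubling both dimensions quadruples the pixel count, which is why similar processing hardware can land on opposite sides of a rendering-heavy test.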

Meanwhile, Microsoft’s Surface falls ~30% behind the iPad 3 and ~40% behind the iPad 2. That’s in sharp contrast to SunSpider, where IE10 took a commanding lead. The good news is that Surface manages to outpace Tegra 3-based Android tablets like the Nexus 7 and Transformer TF201 by ~20%.
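If you want to work out "percent behind" figures like these from your own runs, a minimal sketch follows. The numbers in it are made-up placeholders, not our measured results, and both helper names are hypothetical. The first helper assumes a higher-is-better score; SunSpider reports elapsed time in milliseconds, where lower is better, so it gets a separate helper.

```python
def percent_behind(score: float, reference: float) -> float:
    """Percent by which a higher-is-better score trails the reference score."""
    if reference <= 0:
        raise ValueError("reference score must be positive")
    return (reference - score) * 100.0 / reference


def percent_slower(time_ms: float, reference_ms: float) -> float:
    """Percent by which a lower-is-better time exceeds the reference time."""
    if reference_ms <= 0:
        raise ValueError("reference time must be positive")
    return (time_ms - reference_ms) * 100.0 / reference_ms


# Placeholder values for illustration only (NOT results from this review).
placeholder_score, placeholder_reference_score = 70.0, 100.0
placeholder_time_ms, placeholder_reference_ms = 1500.0, 1000.0

print(f"{percent_behind(placeholder_score, placeholder_reference_score):.0f}% behind")
print(f"{percent_slower(placeholder_time_ms, placeholder_reference_ms):.0f}% slower")
```

Note that the result depends on which device you pick as the reference, so a tablet that is 30% behind one rival is not automatically 30% "slower" in the other direction.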

Bear in mind that the performance measured in these tests is only one aspect of using a tablet, and by no means does a discrepancy of 40% in a browsing metric mean you're going to see a corresponding experiential gap in the real world.


brandonvi so microsoft went the apple way with it and shut everything out but what they want ..... o fun how long before some one jail brakes one

hopefully this will crash and burn hard for the first time every i am praying apple will stomp on Microsoft just so they will stop trying to turn my desktop in to a tablet like this

jhansonxi @brandonvi That will be very difficult with the Surface RT. The key is stored on the main chip (SoC): http://infocenter.arm.com/help/index.jsp?topic=/com.arm.doc.prd29-genc-009492c/CACGCHFE.html

There's probably a unique key in every production run, if not every individual tablet. The only solution is to replace the SoC with an unkeyed version. It's probably a micro BGA and your not going to be replacing that with just a pencil iron, assuming an alternative SoC is even available.

friskiest @ brandonvi,. If you'll think things more rationally, its obvious that a controlled environment (like the device whose function is to deliver media, quality user experience is a must,. everything should work and feel as expected,. without tight control,. you'll probably have apps that runs poorly or not at all, also, malware could easily be managed.

Offcourse, that should only be the case for Windows RT, but you can still install softwares to Windows 8 from different sources.

brandonvi @friskiest if they dont fail in this which as a said i pray they fail hard how long do you think it will be before they use the same "we need Tight control to give you a good user experience" idea in the main windows they are already a MAJOR step twards it with them having a windows store in the first place this Windows RT is nothing but a test to see how it goes windows 9 or 10 are very likly to be just like this locked down as much as they can

wildkitten @brandonvi It won't happen in main Windows. The whole point of Windows, and the reason it became and remains the dominant OS, was because, while being closed source, it ran on an open architecture system and anyone could write applications for it.

If they made it that only "approved" applications from the Windows Store could be run, this would ruin Window's dominance of the OS market and tank PC sales completely. For one thing, they could no longer do licencing deals as to completely wall off Windows while licencing it out the way they currently do would break all the current anti-trust deals in place. Look at how much trouble there was simply cause IE was preinstalled when they never blocked Netscape or Firefox. What do you think the lawsuits would be if they walled it off? It would be horrendous and they know it.

The only way they could wall it off was to sell hardware and OS themselves the way Apple does with Mac. But as I said, MS knows that would be business suicide.

Darkerson So far, I have to admit, I like what I see, but until I get to see the Surface Pro, I will continue to wait. I have held out this long for a tablet, I think I can wait a little longer. But it definitely looks neat, as least to me it does.

brandonvi @wildkitten and 2 or 3 years ago almost everyone would of said taking away the start menu and giving wndows a interface from a tablet would be "business suicide" this is just one step in a 5-10 year planed prosess to shuting windows off apple may not be making money hand over fist on there PC sells but overall apple is worth more then microsoft by a great deal if the choice is make games for a closed windows or apple or one of the other lesser used OS's its going to be closed windows because it will more then likly be the same programing as all of the older windows just thru the windows store

so windows 9 comes out closed you cant just stop progaming for windows and start linix all the people useing windows 7 and 8 are still out there that you can sell to along with many in the windows store on windows 9

the basic thing is the have the market by the balls and they are going to take advantage of it since they know they can

if things they are doing like this dont crash and burn we are going to be buying everything from the windows store in a few years

wildkitten @brandonvi You're comparing dumping the Start button to them doing something that will cost them tons of customers and open themselves to massive lawsuits and scrutiny from multiple governments? The two are not anywhere closely analogous. Please, stop and think before making such comparisons.

While losing the Start button may seem to many of us, right now, as a silly move, remember, Windows never had it before 95 and the Start button was widely criticized then. You can still get to the regular desktop in Windows 8 and be able to get the Start button through addons.

But there is no way Microsoft is going to end 3rd party development for Windows. You can say all you want about how Apple is worth more now, but was just 15 years ago in 1997 that Microsoft actually bailed Apple out and kept them from going bankrupt. Microsoft has ALWAYS been the more stable company and has never had any form of debt liability. All Apple did was jump on a niche market and perfected the way people buy music with iTunes as well as develop what was at the time the very best mp3 player on the market with the iPod. Had it not been for iTunes and the iPod, Apple may very well not exist today.

Did the Xbox cause Microsoft to wall off Windows? No, but some claimed it would and were just as wrong. Some claimed that Microsoft would kill off PC gaming, but it hasn't. The fact that Windows doesn't allow 3rd party apps is mainly because of the ARM architecture and they have seen how malware has grown exponentially on Android and has decided to take a more Apple-ish approch to their main mobile platform, RT, to control things. That's not such a bad idea.

You also can say all you want that they have the market "by the balls", but if they were to wall off Windows, that would be them releasing their grip. No one would have any incentive to continue to run Windows. It would throw the market place into chaos. Linux would briefly jump up in use by people, but Linux's strengths are also it's weakness, and that is the open source nature and the fact there are so many distro's. Game developers had a brief flirtation with Linux several years ago porting many popular titles to it and discovered that just because the games would run well under Distro X didn't mean it would run well under Distro Y, Z, V and Q. What would happen is the PC market would fall flat, sales would be non existent, R&D would not be nearly what it is, you wouldn't see Intel, AMD, nVidia all releasing new products ever 6-12 months. Eventually someone would come along with a closed source, open architecture OS that people would start using because it would bring compatibility under the same umbrella, but PC use would be far less than what it is now and growth would be slow.

Microsoft knows this. One thing they will not do is bite the hand that feeds them. They are not going to have the US and Europe taking them to court over antitrust violations that walling off would mean, and sure wouldn't risk losing the main portion of their userbase who use 3rd party applications.

brandonvi ?? you do know that the windows store is not a end to 3rd party development right???? thay will let ANYONE post a app there thay just take i think it was 30% i also think there is a option to make a app/program free when you make it

more than likly they will even have places to download netscape and others like it not to far down the road

also more then likly thay will give large things like say call of duty or other major things a break and lower the % to say 10-15%

the fact is its not at all a "lock out for 3rd party's " but it is a Major step in having a Large control over the market

Someone Somewhere I'm waiting for the EU to sue them over the MS apps only on desktop thing. That's blatantly against the anti-monopoly laws.