AMD Talks Steamroller: 15% Improvement Over Piledriver

At today's Hot Chips Symposium, Mark Papermaster, Senior Vice President and CTO of AMD, talked about the upcoming "Steamroller" microarchitecture.

We are getting our first look at "Steamroller", the core for the "Kaveri" APU, among others. AMD expects a 15 percent improvement in performance per watt over the "Piledriver" core. The gains come from design-level changes rather than process-level changes.

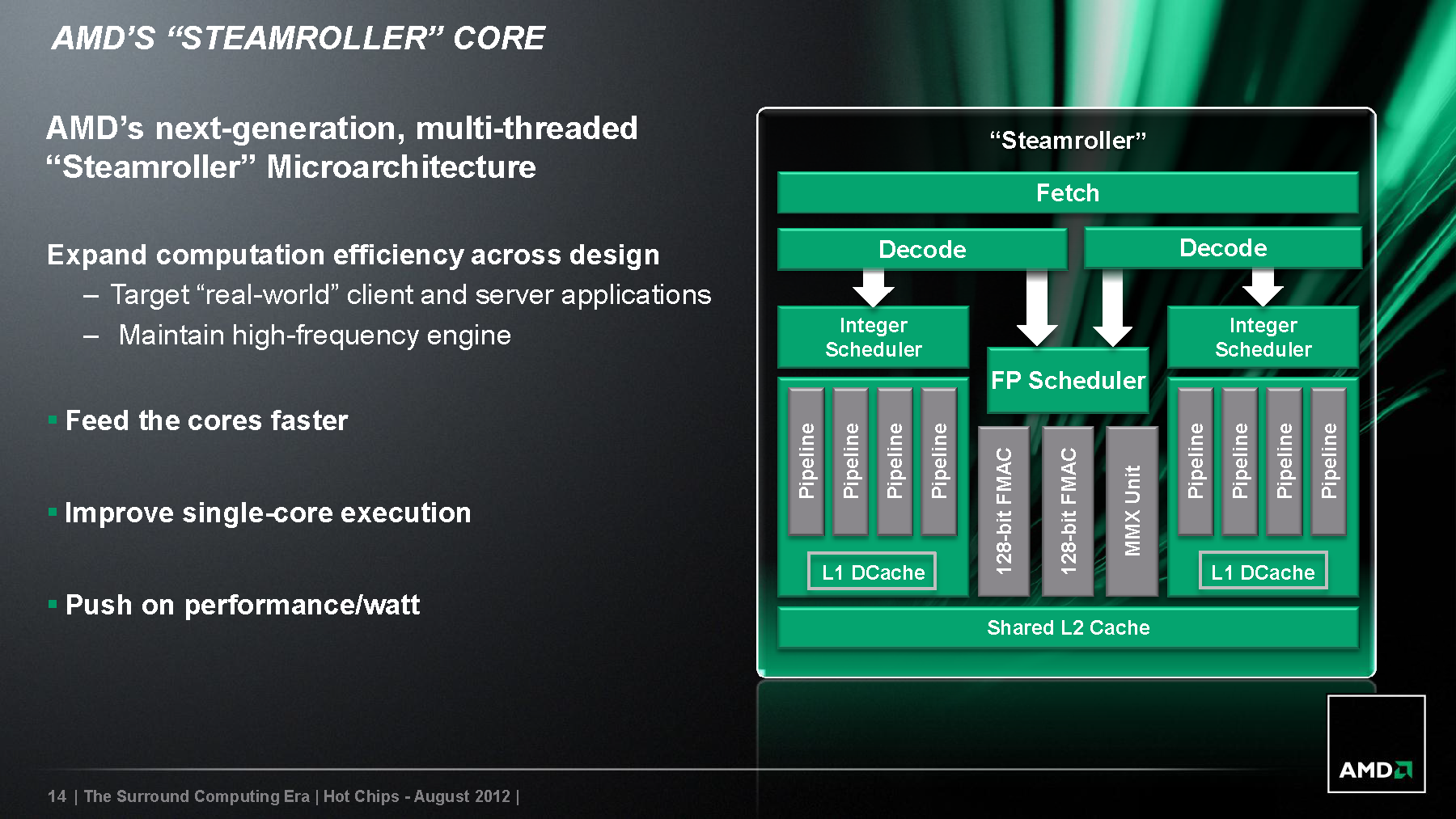

The design-level improvements pursue three goals: feeding the cores faster, improving single-core execution and pushing performance per watt.

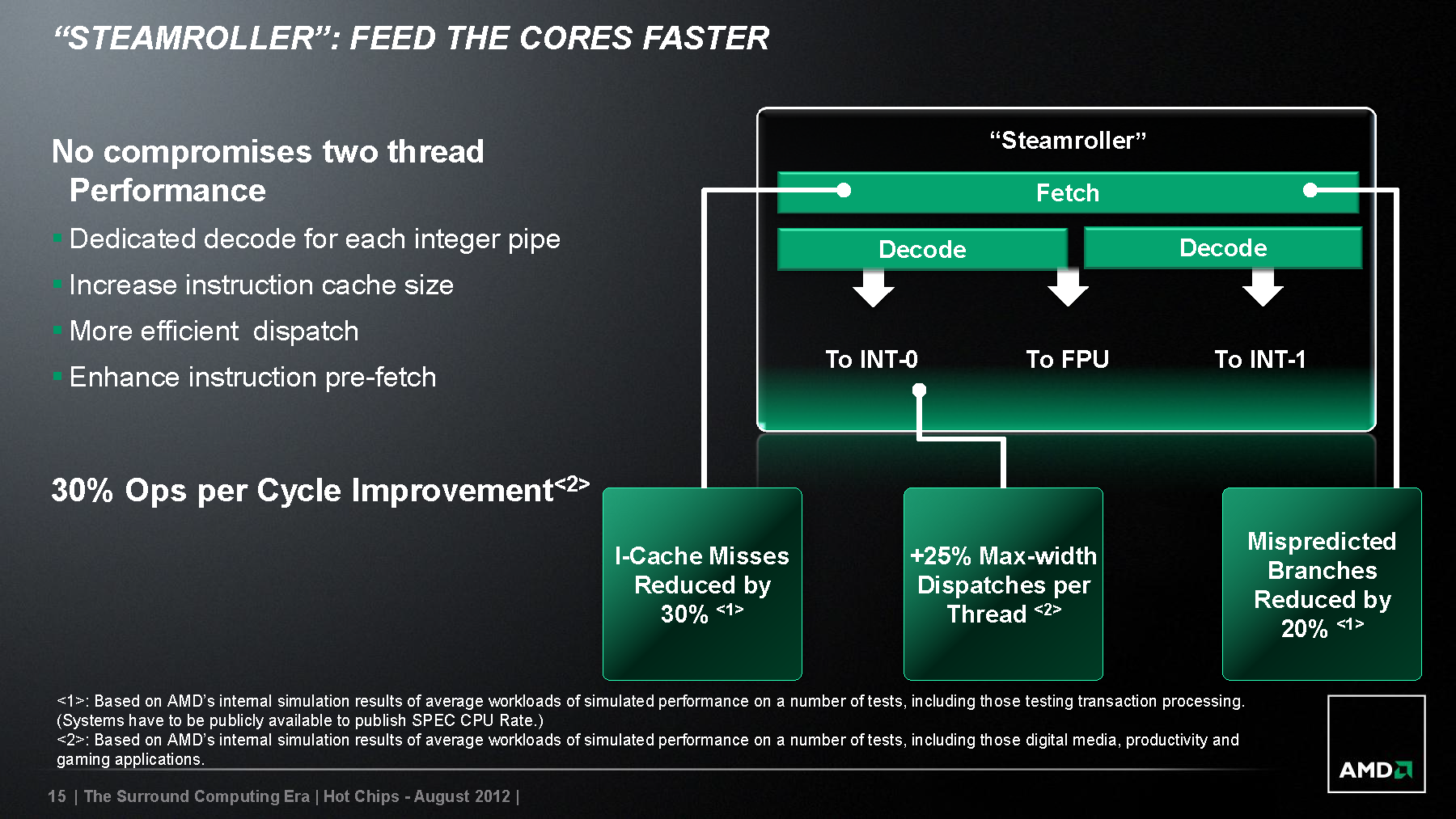

To "Feed the Cores Faster", AMD has increased the instruction cache size, enhanced instruction prefetch and made dispatch more efficient. In addition, Steamroller has a dedicated decoder for each integer pipe. These design improvements have resulted in a 30 percent reduction in i-cache misses, a 25 percent increase in max-width dispatches per thread and a 20 percent reduction in mispredicted branches, which together yield a 30 percent increase in overall ops delivered per cycle.

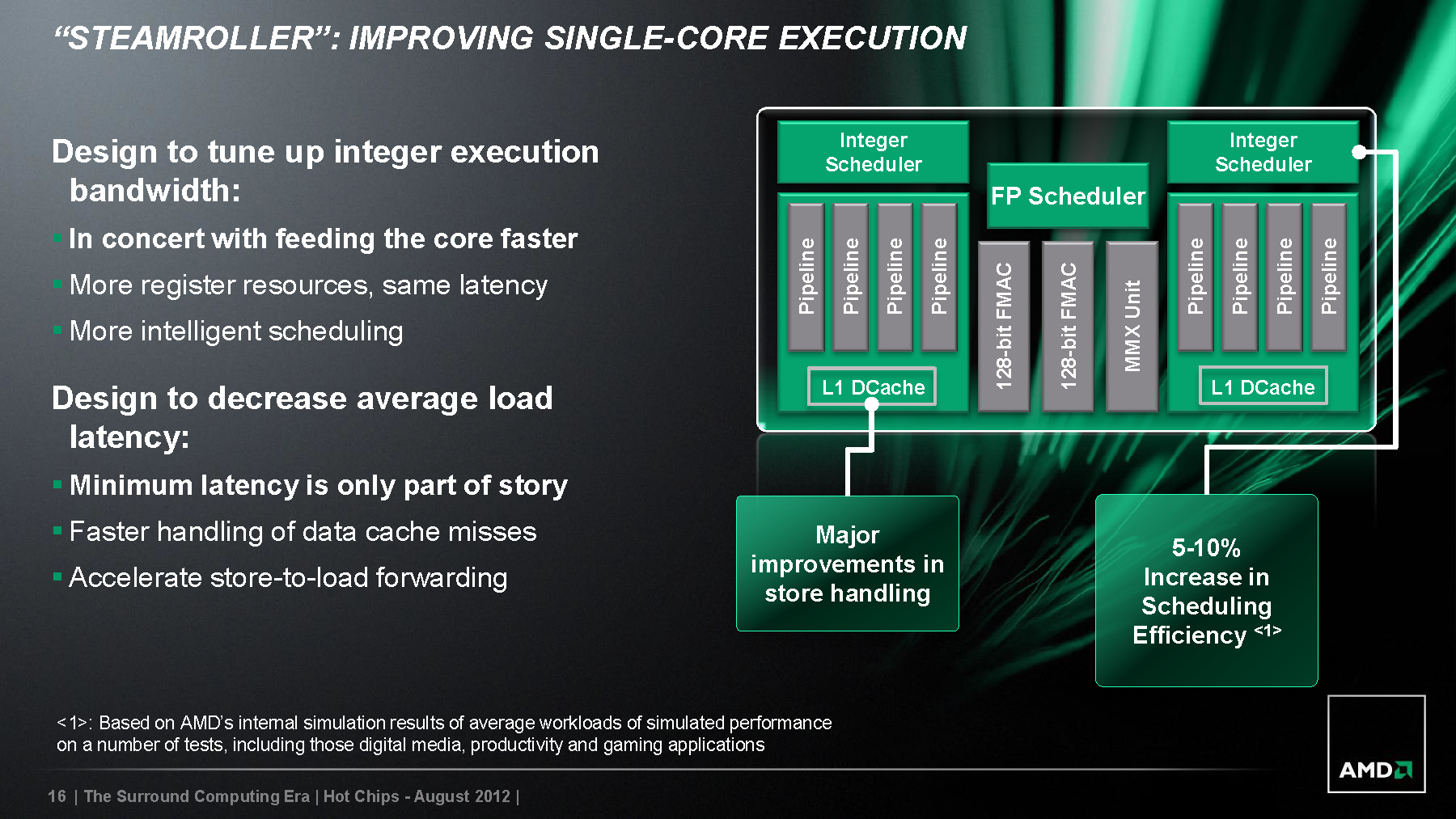

Steamroller improves single-core execution by increasing integer execution bandwidth and decreasing average load latency. Integer execution bandwidth benefits from the "Feed the Cores Faster" improvements, along with more register resources (at the same latency) and intelligent scheduling. Average load latency is decreased not only by minimizing latency itself but also through faster handling of data cache misses and accelerated store-to-load forwarding. These design improvements have resulted in a 5 to 10 percent increase in scheduling efficiency, along with major improvements in store handling.

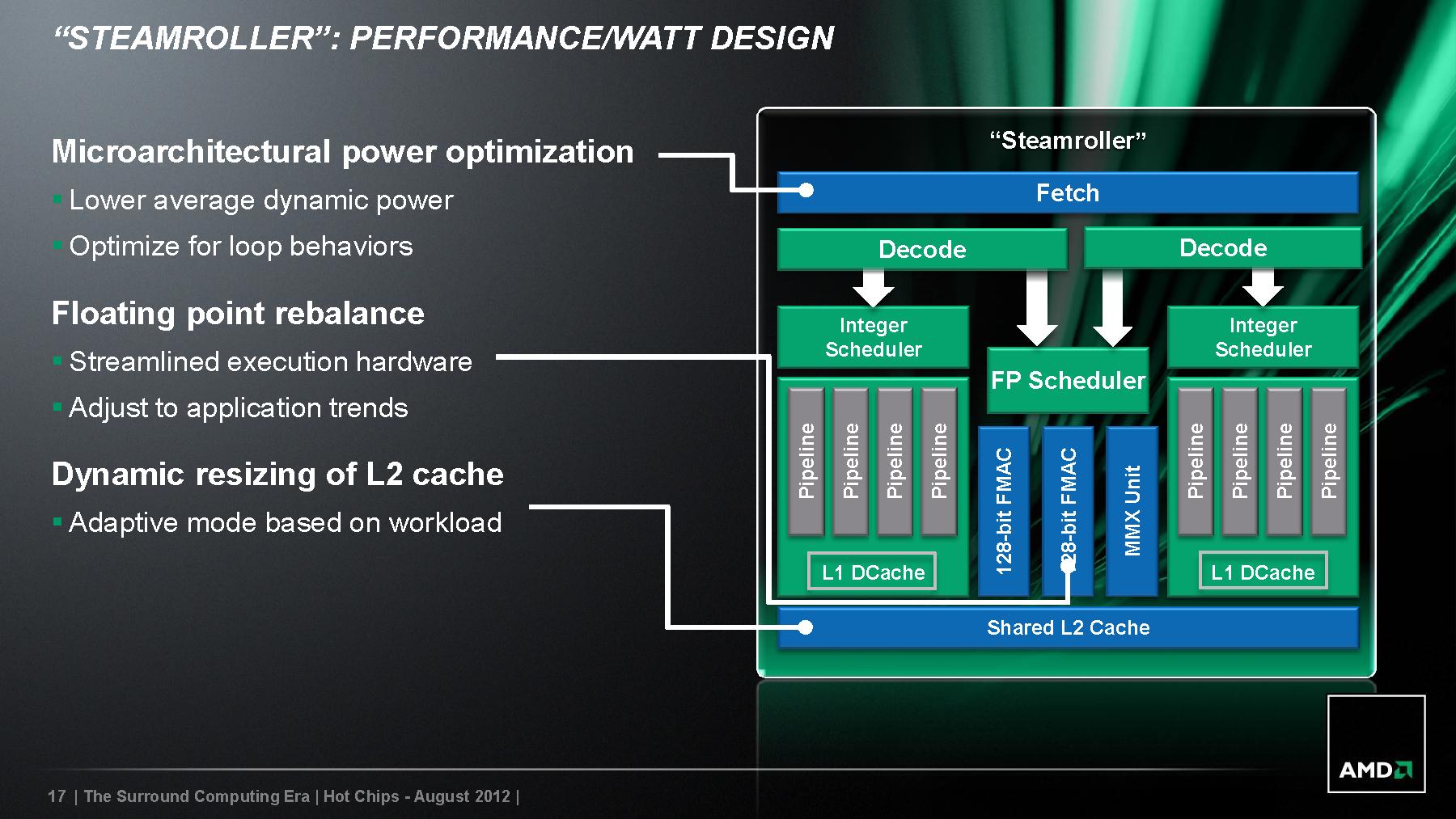

As with any design improvement, companies are trying to get more performance out of the process with equal or lower power requirements. We are seeing this not only with processors but with graphics cards as well. AMD improves Steamroller's performance per watt through power optimization, a floating-point rebalance and dynamic resizing of the L2 cache. Dynamic resizing lets the shared L2 cache work in an adaptive mode based on workload. The floating-point rebalance streamlines execution hardware and adjusts to application trends, which adds to the efficiency of the design. In addition, the power optimization offers lower average dynamic power and is tuned for loop behaviors.

Look for more details from AMD during the Hot Chips Symposium on its Surround Computing and High Density (Thin) Libraries.


fedelm First and foremost, let's hope Piledriver can deliver on the desktop, especially after the bulldozergate. If it does, then this is excellent news.

I want AMD to be able to give Intel a run for its money performance-wise. Doesn't have to match the i-xxs, just provide a decent, reliable and affordable option.

Cheers.

Pinhedd Good.

It sounds like they've learned a lot from Bulldozer. If they can keep pulling off 15-20% improvements in 10-12 month intervals then perhaps AMD can still be a viable competitor to Intel.

aznshinobi I feel like with Piledriver and Steamroller AMD will finally be able to put some pressure on Intel.

dalethepcman If the 5-10% scheduling efficiency and 20-30% increase in ops along with the increased clock rates comes out to a 25-30% increase in performance then AMD will be in a happy place, and so will the consumer. If this turns out to be a bust, it would be really really bad for the industry unless your name is Intel.

redyellowblueblast Us AM3+ users never received any Piledriver based CPUs. Are we getting Steamroller instead?

leeashton well we currently know that Trinity on the mobile platform outperforms any Intel chip, so AMD has the mobile performance crown

vistaofdoom (quoting leeashton: "well we currently know that trinity on the mobile platform out performs any intel Chip, so AMD has the mobile performance crown") Good troll there...

pharoahhalfdead Will it be 32nm or 22nm? It would be nice if AMD could get away from 32nm in a swift, timely fashion. Let's hope they don't stay here for four years like they stayed at 45nm.

cscott_it In addition to all of this, they re-acquired one of their lead designers from the K8 days who co-authored their original x64 endeavor. As we all recall, those were some major wins for AMD. The gentleman had been a lead designer for Apple's mobile segment, so his chops are still in pretty good shape.

Even if this generation does fail, I have a feeling they will be doing something right in the next 5 years.