Intel May Deliver Optical Chips Soon

Intel has developed a high-speed silicon-based avalanche photodetector that can be manufactured cheaply, which could one day lead to optically connected Intel processors.

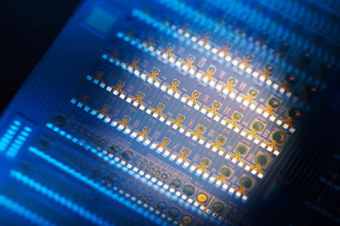

Looking beyond copper, Intel has announced that it has developed a new silicon-based avalanche photodetector (APD) for use in optical communication devices. According to results published in the journal Nature Photonics, the new APD achieves a record-setting gain-bandwidth product (GBP) of 340 GHz. The GBP is a figure of merit obtained by multiplying the device's amplification (gain) by its bandwidth.
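
As a rough illustration of what a 340 GHz gain-bandwidth product means, the sketch below assumes the simple textbook model in which gain times bandwidth stays constant, so every increase in avalanche gain costs a proportional amount of bandwidth. Real APDs only approximate this behaviour in their high-gain regime, and the gain values used here are hypothetical, not Intel's measured operating points.

```python
# Minimal sketch of the constant gain-bandwidth-product trade-off.
# Assumption: the GBP stays fixed at the reported 340 GHz for every gain
# listed below, which real APDs only approximate at high gain.

GBP_GHZ = 340.0  # reported gain-bandwidth product, in GHz


def bandwidth_at_gain(gain: float, gbp_ghz: float = GBP_GHZ) -> float:
    """Return the bandwidth (GHz) available at a given avalanche gain."""
    if gain <= 0:
        raise ValueError("gain must be positive")
    return gbp_ghz / gain


if __name__ == "__main__":
    for m in (10, 20, 34):  # hypothetical gain settings
        print(f"gain {m:>2}: ~{bandwidth_at_gain(m):.1f} GHz")
```

Under this model, a gain of 10 leaves roughly 34 GHz of bandwidth, while a gain of 34 leaves roughly 10 GHz.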

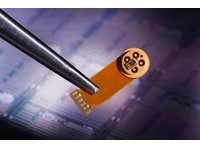

The new avalanche photodetector can detect and amplify low-power optical signals, essentially converting photons into electrical signals, at data rates of potentially 40 Gb/s or more. Unlike traditional APDs based on indium phosphide (InP), Intel's new APD uses silicon and germanium. This could make it both very inexpensive to manufacture and compact. Intel has also published a Flash animation that explains how the new APD is made and how it works. The research was made possible by funding from Intel and DARPA, along with help from various experts in the field.
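
To make "detect and amplify" concrete, the sketch below applies the standard photodiode relation: unity-gain responsivity is R = ηqλ/(hc), and an APD multiplies the resulting photocurrent by its avalanche gain M. The 1550 nm wavelength, 80% quantum efficiency, gain of 10 and -30 dBm input power are illustrative assumptions, not figures from Intel's paper.

```python
# Minimal sketch: photocurrent of an avalanche photodetector.
# Assumptions (illustrative only, not Intel's figures): 1550 nm light,
# 80% quantum efficiency, avalanche gain M = 10, -30 dBm optical input.

PLANCK_H = 6.62607015e-34           # Planck constant, J*s
SPEED_OF_LIGHT = 2.99792458e8       # m/s
ELECTRON_CHARGE = 1.602176634e-19   # C


def dbm_to_watts(dbm: float) -> float:
    """Convert optical power from dBm to watts."""
    return 1e-3 * 10 ** (dbm / 10)


def responsivity(wavelength_m: float, quantum_efficiency: float) -> float:
    """Unity-gain responsivity in A/W: eta * q * lambda / (h * c)."""
    return (quantum_efficiency * ELECTRON_CHARGE * wavelength_m
            / (PLANCK_H * SPEED_OF_LIGHT))


def apd_photocurrent(power_w: float, wavelength_m: float,
                     quantum_efficiency: float, gain: float) -> float:
    """Photocurrent in amperes: gain * responsivity * optical power."""
    return gain * responsivity(wavelength_m, quantum_efficiency) * power_w


if __name__ == "__main__":
    p = dbm_to_watts(-30)  # 1 microwatt of incoming light
    plain = apd_photocurrent(p, 1550e-9, 0.8, gain=1)
    amplified = apd_photocurrent(p, 1550e-9, 0.8, gain=10)
    print(f"without gain: {plain * 1e6:.2f} uA, with M=10: {amplified * 1e6:.2f} uA")
```

With these assumptions the responsivity comes out at about 1.0 A/W. A 1 µW signal therefore produces roughly 1 µA without gain and roughly 10 µA with a gain of 10, which is why avalanche gain helps with weak optical signals.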

Intel foresees many significant uses for silicon-based APDs, including “support for multiple high-definition video feeds, higher performance for PCs and servers, and near-perfect security for data transmissions.” Although telecommunications may find a more immediate use for the technology, Intel expects it eventually to be used in its processors. According to Intel's Patrick Gelsinger, "Today, optics is a niche technology. Tomorrow, it's the mainstream of every chip that we build."

As modern processors integrate a growing number of cores, with hundreds of cores possible in the future, a problem arises: how do you get data in and out of all these cores? Traditional electrical interconnects will likely run into bandwidth limits, and even the number of copper pins that can physically fit on a chip could become a bottleneck. Silicon-based optical links could replace electrical ones as a feasible way to connect these cores. According to Justin Rattner, Intel's CTO, "These fundamental scientific advances made by our silicon photonics team give me confidence that for decades to come, we will have the communications and I/O bandwidths to match the continued increases in computing performance provided by Moore’s law."
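
The pin-count argument is simple arithmetic: the number of links a chip needs is its total off-chip bandwidth divided by the rate each link can carry. The sketch below compares link counts under purely hypothetical assumptions: a 1 Tb/s off-chip budget, 10 Gb/s per electrical differential pair (two pins each), and the 40 Gb/s per optical channel mentioned above. It ignores lasers, modulators, wavelength multiplexing and packaging, so it only illustrates the scaling and does not estimate any real product.

```python
import math

# Minimal sketch of the off-chip bandwidth vs. link-count argument.
# All rates below are hypothetical round numbers chosen for illustration.


def lanes_needed(target_gbps: float, per_lane_gbps: float) -> int:
    """Smallest number of lanes whose combined rate meets the target."""
    if per_lane_gbps <= 0:
        raise ValueError("per-lane rate must be positive")
    return math.ceil(target_gbps / per_lane_gbps)


if __name__ == "__main__":
    target = 1000.0  # hypothetical 1 Tb/s of off-chip traffic for a many-core CPU
    electrical_pairs = lanes_needed(target, 10.0)  # assumed 10 Gb/s per differential pair
    optical_channels = lanes_needed(target, 40.0)  # 40 Gb/s per optical channel
    print(electrical_pairs * 2, "signal pins vs", optical_channels, "optical channels")
```

Under these assumptions, the electrical approach needs 200 signal pins where the optical one needs 25 channels, and the gap widens as the per-channel optical rate grows.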

The new silicon-based avalanche photodetector is only one part of the technology required to make silicon photonics a mainstream reality, though. The building blocks that require development include generating a light source, guiding that light selectively within the silicon, encoding and decoding the light, detecting the light, intelligently controlling the device and, lastly, packaging the photonic device. Intel had already announced its success in developing the first continuous-wave silicon laser and the first gigabit-speed silicon modulator. As for when these products might hit the market, Justin Rattner stated back in 2007, "Well, it’s possible that by the end of the decade we’ll have silicon photonic products in the market. If it’s not 2010, maybe 2011, but I think we are close now."


-

Pei-chen Athlon X2 will still be cheap enough to compete on value; 6600+ X2 Black Black Black Edition for $4.00.

-

hannibal Well, they are talking about photo-optical processors. It's quite sensible if they can make the light source small enough. It seems that they will use it as an ultra-high-speed connection method, not in the actual CPU logic. It is easily compared to what optical fibres have done to copper connections when high transfer speeds are needed, only in miniature.

Nice to see how this will work out. We are waiting for holographic storage and optical chips. The future seems to be much brighter ;-)

-

pbrigido I wonder if this may totally eliminate the need for a traditional CPU heatsink and fan.

Shadow703793 ^Actually, it will require new cooling methods. The lasers required to create the light produce quite a lot of heat.

prodigy22 Super-duper technology will never come out in one hit. U gotta keep milking the market :D so I wave off all this talk as crap.

This shouldn't just be for processors. I feel it is EXTREMELY important that Intel/IBM/AMD design a low-cost universal PCIe optical interconnect chip, built into either the southbridge or a dedicated additional component, that looks to the system like a PCIe x16 or x32 slot (PCIe 2.0 or, hopefully, 3.0).

It could allow two separate PCs/servers to interconnect natively, expand out large PCIe backplanes, etc.

But what I'd really like to see is a cheap part for OEMs to put on their video cards. No more CrossFire and NVIDIA SLI external connectors or physical PCIe slots, just cards with a power supply connector and an optical interface. Systems would no longer be limited to just a few graphics cards, e.g. 128 GPGPUs on a single system, all interconnected via optical links.

I'd even go so far as to say that the big boys (Intel, IBM, AMD) should all come together, agree to skip electrical PCIe 3.0 and take it optical from the start. If everyone agreed on a standard set of chips and could get the cost close to that of building an electrical interface on a card, it would have a great chance.

I'm really tired of being limited in scalability to just what's in my case. Optical PCIe would really boost our options.