AMD FX-8370E Review: Pulling The Handbrake For More Efficiency

Going more slowly is more efficient. That’s what AMD must have thought when it designed its new FX-8370E processor, closing a gap in the company's line-up. We evaluate whether this CPU really is more efficient and what happens when we overclock it.

Results: Gaming

This brings us to the fun part of the review: the FX’s gaming performance. Typically, when we review a CPU, we use a high-end GPU so that graphics don't bottleneck the host processor. That makes sense in theory, but it isn't always practical. At what point does graphics hold back a CPU, anyway?

For this reason, when there are large differences between CPUs, we test each game twice: once with a high-end graphics card (in this case, AMD’s Radeon R9 295X2) and once with a more mainstream graphics card better balanced to match the FX. AMD's Radeon R9 270X or 285 are good matches.

When Does The Graphics Card Become The Limiting Factor?

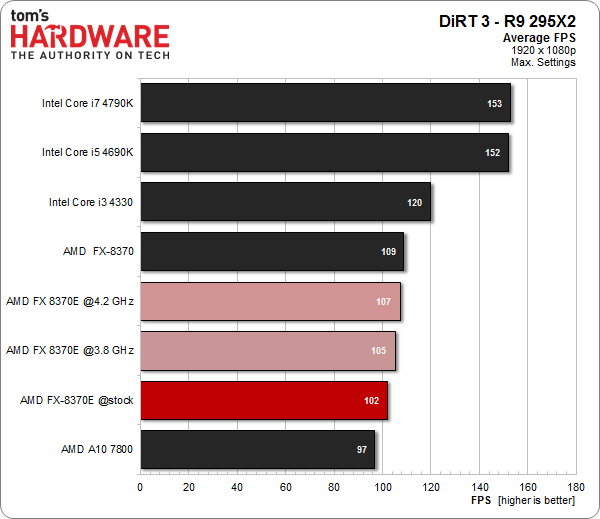

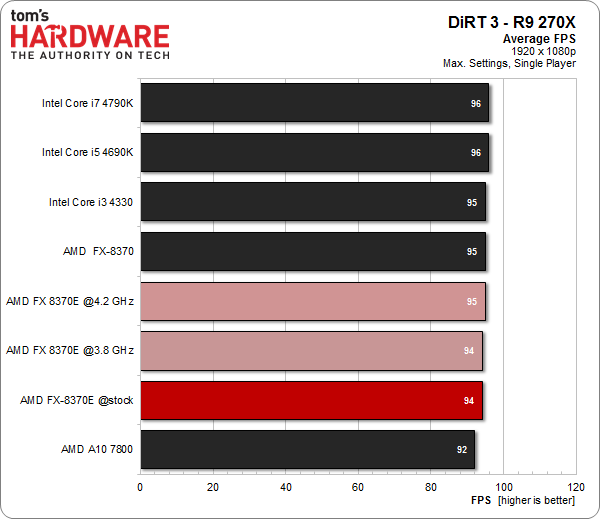

DiRT 3's ability to scale makes it a good example. If you pair a fast CPU with a high-end graphics card, this title really flies. But if you handicap your platform with a lower-end GPU, the bottleneck becomes obvious.

Now, this isn't to say that matching a Radeon R9 270X to a Core i7-4790K is a good idea. However, if you allow graphics to become your limiting factor, the impact of CPU performance becomes less obvious. If anything, let our exploration serve to better inform you how component choice can dramatically alter the outcome of benchmark results.
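As a rough illustration of that point, and not the methodology behind the charts in this review, the delivered frame rate can be modeled as the lower of two ceilings: what the CPU can prepare and what the GPU can render. The function name `delivered_fps` and every number below are hypothetical values chosen only to show the effect.

```python
def delivered_fps(cpu_fps_limit: float, gpu_fps_limit: float) -> float:
    """Simplified bottleneck model: the slower component sets the frame rate."""
    return min(cpu_fps_limit, gpu_fps_limit)


# Hypothetical ceilings in frames per second, not measured results.
cpu_limits = {"fast CPU": 150.0, "mid-range CPU": 100.0}
gpu_limits = {"high-end GPU": 200.0, "mainstream GPU": 70.0}

for gpu_name, gpu_fps in gpu_limits.items():
    # Frame rate each CPU would deliver when paired with this GPU.
    results = {
        cpu_name: delivered_fps(cpu_fps, gpu_fps)
        for cpu_name, cpu_fps in cpu_limits.items()
    }
    # Difference between the best and worst CPU on this GPU.
    gap = max(results.values()) - min(results.values())
    print(f"{gpu_name}: {results} -> CPU gap of {gap:.0f} FPS")
```

With these made-up figures, the high-end GPU leaves a 50 FPS gap between the two CPUs, while the mainstream GPU caps both at the same frame rate and hides the difference entirely. Real games are messier than a single `min()`, since the limiting component can change from scene to scene.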

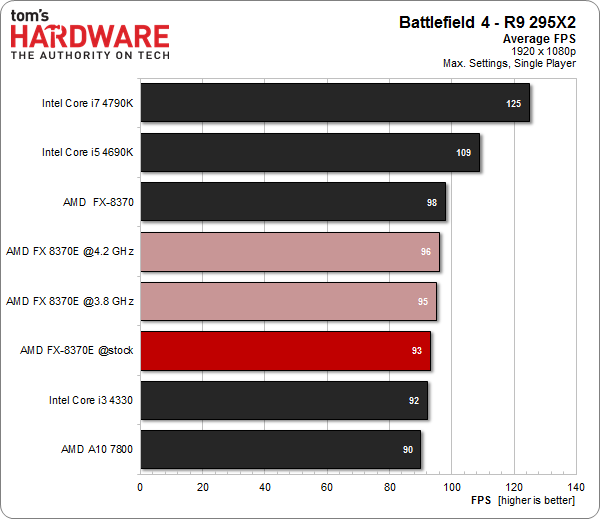

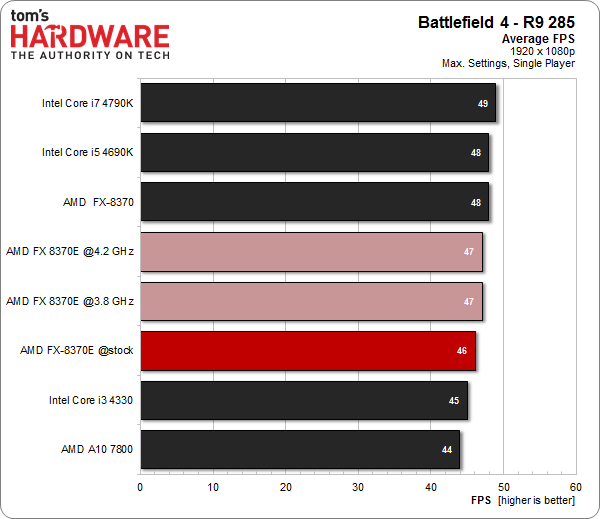

We perform the same exercise in Battlefield 4, even though we measured a performance difference of only 30 percent with the high-end graphics card. First, let’s take a look at the original test:

In single-player mode, a Radeon R9 285 is enough to essentially level the playing field. It's our limiting component at Ultra detail settings and a 1920x1080 resolution. Of course, most folks still looking at Battlefield 4 are involved in the more CPU-taxing multi-player component. Unfortunately, that's difficult to benchmark reliably.

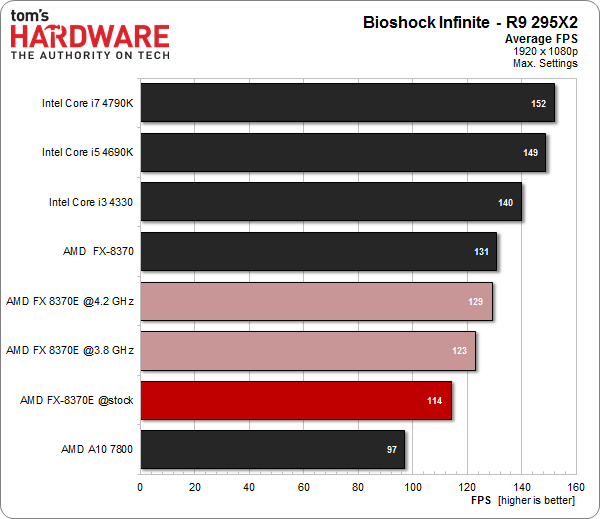

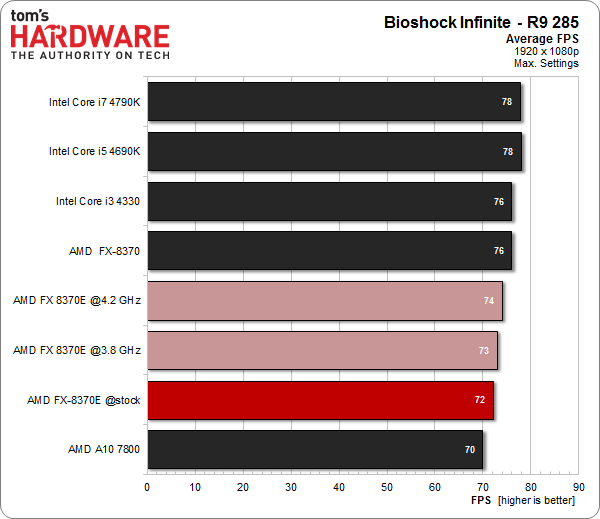

BioShock Infinite doesn’t have the reputation of being hard on hardware, but that doesn’t mean that the combination of a high-end graphics card and AMD's FX-8370E makes sense.


Once again, capping performance with a more mainstream GPU masks the potential of our various host processors. In the real world, pairing a Radeon R9 285 with an FX CPU makes sense. Substituting a Core i7 won't yield dramatically better frame rates until you also step up to a much faster graphics configuration.

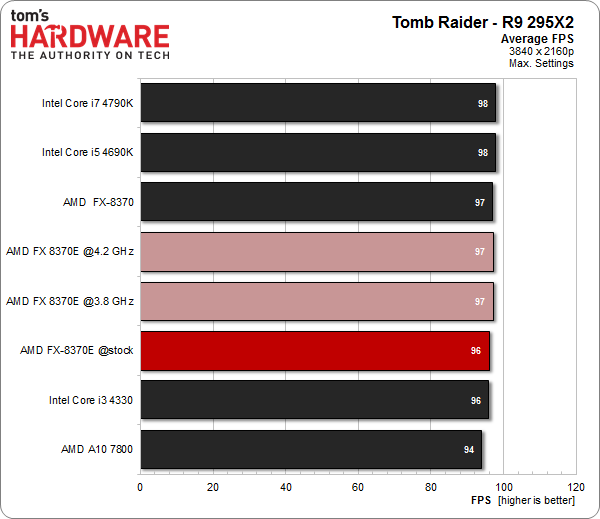

Gaming at 3840x2160 Resolution

It’s common knowledge that massive resolutions almost always lead to GPU bottlenecks. Consider it an exaggeration of what we just saw. Even at maxed-out settings, there’s almost no difference between the CPUs in spite of the relatively high frame rates. It basically doesn’t matter what CPU you pick because the graphics card creates the performance ceiling.
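A quick bit of arithmetic shows why the GPU load climbs so sharply. This is only a sketch: it uses pixel count as a rough proxy for per-frame rendering work, which is an assumption, since real GPU load does not scale exactly with resolution. The helper name `pixel_count` is hypothetical.

```python
def pixel_count(width: int, height: int) -> int:
    """Number of pixels the GPU has to fill for one frame."""
    return width * height


full_hd = pixel_count(1920, 1080)   # resolution used in the earlier tests
ultra_hd = pixel_count(3840, 2160)  # resolution used in this section
ratio = ultra_hd / full_hd

print(f"1920x1080: {full_hd:,} pixels")
print(f"3840x2160: {ultra_hd:,} pixels ({ratio:.0f}x as many)")
```

The CPU's per-frame work (game logic, draw-call submission) stays roughly the same, while the GPU has four times as many pixels to fill, so the graphics card reaches its limit long before the processor does.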

To put it nicely, the FX-8370E is a true middle-of-the-road CPU. Using it only makes sense as long as the graphics card you choose comes from a similar performance segment.

Depending on the game in question, AMD’s new processor has the potential to keep you happy when paired with a graphics card at around the AMD Radeon R9 270X/285 or Nvidia GeForce GTX 760 or 660 Ti level.

A higher- or even high-end graphics card doesn’t make sense, as pairing it with AMD's FX-8370E simply limits the card's potential.


Igor Wallossek wrote a wide variety of hardware articles for Tom's Hardware, with a strong focus on technical analysis and in-depth reviews. His contributions have spanned a broad spectrum of PC components, including GPUs, CPUs, workstations, and PC builds. His insightful articles provide readers with detailed knowledge to make informed decisions in the ever-evolving tech landscape.

-

MeteorsRaining: The price point is a deal breaker. It's a fairly good CPU for AMD builders, but can't give it the tag of budget builder; you get an i5 non-K at that price. It's moving into a higher (cost-wise) territory with a weak arsenal.

-

The_Doc: How to start a benchmark review? But of course, let's show how powerful AMD is in single core!

I think we all get it, Vishera isn't exactly wonderful in single-core operations, but:

A) I have yet to see any software which requires A LOT of single-core power. It's 2014; if something is still single-core, it probably doesn't need all that power or is old enough to make even Vishera good at it.

B) You are comparing a 2012 architecture to a 4790K. It's like comparing a Pentium 4 to a Pentium G3258.

-

husker: Article quote: "However, it's probable that AMD sent us a sample chosen specifically for this purpose. Plus, there is almost certainly variance from one -8370E to the next. And so it's hard to know if the FX-8370E is actually better."

If you presuppose that your sample is tainted, why bother to do the testing and the article in the first place? Perhaps this is a case where you should purchase the product off the shelf in order to better serve your readers.

-

1991ATServerTower: I would have liked to see a power consumption chart of the following CPUs, all clocked to 3.8GHz.

8150, 8320, 8230e, 8350, 8370e.

That would demonstrate the improvements of Vishera over Bulldozer, as well as any improvements offered by binning.

-

oxxfatelostxxo: "If you pre-suppose that your sample is tainted why bother to do the testing and the article in the first place. Perhaps this is a case where your should purchase the product of the shelf in order to better serve your readers."

1) Almost every vendor does this: CPUs, graphics, etc.

2) The chip they received is exactly what you get when you buy it off the shelf; however, every CPU/GPU etc. varies by a small amount. The vendors simply make sure that review sites get the top end of that group. In all honesty, we are probably talking 3% performance from the majority at most.

-

ShadyHamster: Any chance the older 8320/50 could be tested at the same voltages and clock speeds to better compare power usage?

My 8320 will happily run 3.5/3.6GHz @ 1.15V as long as Turbo Core is disabled.

-

m32: I've had a few 8350s that needed 1.41-1.45V for 4.5. These E models need less voltage compared to the originals when dealing with "moderate" overclocks.

I will probably get the 8320E for my office computer during Black Friday. $140 is the price right now, but I prefer $125 or less for an AMD CPU.

-

Chris Droste: For all we know, a nice, process-refined 8350 is the exact same chip under the hood; they just clocked it down and gave it a new name. Someone wake me when AMD starts to innovate desktop CPUs again.

-

hmp_goose: While it's nice to see a sweet spot staked out for the OC, and really nice to hear about how much smaller the heatsink can be, what I'd like to see is whether the E OCs cooler/with less wattage than the two non-Es. I like to think an 8350 is better than an 8320 if you care about power consumption at all, and want to see if the trend continues with the 8370 & 8370E…

-

RedJaron:

MeteorsRaining said: "The price point is a deal breaker. Its a fairly good CPU for AMD builders, but can't give it the tag of budget builder, you get i5 non-K in that price. Its moving into a higher (cost wise) territory with weak arsenal."

Precisely, which goes right along with what Igor said:

Yes 4.5 GHz and higher is possible, but at a certain point you're going to spend too much on a beefy motherboard and high-end cooler, negating the value of overclocking outright.

Far too many people forget the whole cost of OCing a chip. Sure, a 4.5 83XX can slightly beat a stock i5, but at what cost? The 6300 is a far more compelling CPU for tweakers. If you're lucky on a few sales, you can get the chip, cooler, and mboard for the same $200. And as pointed out here, unless you're pairing it with a top-shelf GPU, you won't see any gaming benefits with a pricier platform.

The_Doc said: "B) You are comparing a 2012 architecture to a 4790K, It's like comparing Pentium 4 to a Pentium G3258."

This is AMD's latest offering. The Haswell refresh is Intel's latest offering. Whatever the products' pedigrees, why shouldn't the two latest SKUs be compared?