The Dual Graphics Platform Battle, Part 1

NVIDIA nForce4 SLI X16

Introduced around a year ago, the nForce4 SLI can safely be called the most successful Socket 939 chipset today, despite the many minor problems that keep coming up on Internet forums. A regular version exists as well, the nForce4 Ultra, but the shrinking price difference between it and the SLI version, combined with the appeal of SLI, has made the Ultra less important over time. People choose SLI because it is what many enthusiasts name when asked which features are must-haves today.

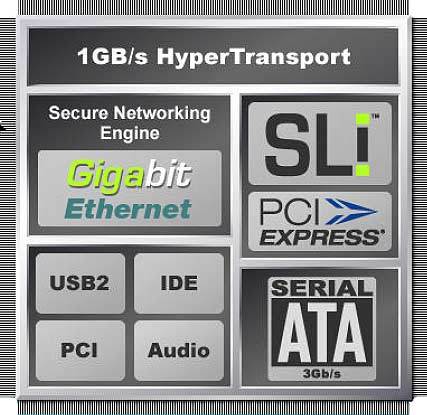

The latest version of the nForce4 SLI carries the suffix X16, which stands for its ability to drive two GeForce 6 or GeForce 7 series graphics cards with 16 PCI Express lanes each. As you can see in our benchmark results, this has no influence on common 3D benchmarks, though it is certainly nice to run a graphics card at its maximum bandwidth.
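To put the X16 suffix into numbers, here is a minimal sketch of the per-link bandwidth arithmetic. It assumes first-generation PCI Express signaling (2.5 GT/s per lane with 8b/10b encoding) and, for comparison, a two-card setup running at eight lanes per card; the constant and function names are ours, chosen for illustration only.

```python
# Assumption: PCI Express 1.x signaling, 2.5 GT/s per lane, 8b/10b encoding.
LANE_RATE_MT_S = 2500   # raw transfers per second per lane, in millions
PAYLOAD_BITS = 8        # 8b/10b: 8 payload bits ...
LINE_BITS = 10          # ... carried in every 10 bits on the wire


def lane_bandwidth_mb_s() -> int:
    """Usable bandwidth of one lane, per direction, in MB/s."""
    payload_mbit_s = LANE_RATE_MT_S * PAYLOAD_BITS // LINE_BITS
    return payload_mbit_s // 8  # bits to bytes


def link_bandwidth_mb_s(lanes: int) -> int:
    """Usable bandwidth of a link with the given lane count, per direction."""
    return lanes * lane_bandwidth_mb_s()


if __name__ == "__main__":
    for lanes in (8, 16):
        print(f"x{lanes}: {link_bandwidth_mb_s(lanes)} MB/s per direction")
```

Under these assumptions an x16 link offers twice the theoretical bandwidth of an x8 link, which puts the benchmark results in perspective: doubling the available link bandwidth does not help if the cards were not saturating the narrower link in the first place.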

Feature-wise, the nForce4 SLI supports everything that ATI's Radeon Xpress 200 Crossfire Edition offers, no matter which south bridge the ATI chipset is paired with. In addition, the nForce4 comes with an integrated Gigabit Ethernet controller, which includes a hardware-supported firewall capable of stateful packet inspection. NVIDIA's mass storage controller supports four SATA ports with SATA II features such as 300 MB/s of bandwidth per port and Native Command Queuing. The firm also offers its own overclocking software, called nTune, as well as the more comprehensive software and driver package overall.

The latest nForce4 SLI X16 has six spare PCI Express lanes rather than three; these could be used to run one x4 and two x1 PCIe slots. For the sake of completeness, we need to mention that ATI will not be able to provide an x4 PCIe slot once a Gigabit Ethernet chip is on the board, since such a chip really should not be connected via 32-bit PCI. However, very few cards actually require PCI Express x4.
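The lane budget described above can be checked with a few lines of code. This is a simplified sketch that only sums the slot widths against the spare lanes; it deliberately ignores how a chipset actually groups lanes into ports, and the function name is hypothetical.

```python
def fits_lane_budget(slot_widths: list[int], spare_lanes: int) -> bool:
    """Return True if the requested slots fit into the spare lanes.

    Simplification: only the total lane count is checked, not the way
    the chipset's PCIe controllers can be partitioned.
    """
    return sum(slot_widths) <= spare_lanes


if __name__ == "__main__":
    requested = [4, 1, 1]  # one x4 slot and two x1 slots
    for spare in (3, 6):
        verdict = "fits" if fits_lane_budget(requested, spare) else "does not fit"
        print(f"{spare} spare lanes: x4 + x1 + x1 {verdict}")
```

With three spare lanes, even a single x4 slot is out of reach, which is why the jump to six lanes matters for board layouts.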


Patrick Schmid was the editor-in-chief for Tom's Hardware from 2005 to 2006. He wrote numerous articles on a wide range of hardware topics, including storage, CPUs, and system builds.