Opinion: AMD, Intel, And Nvidia In The Next Ten Years

Introduction

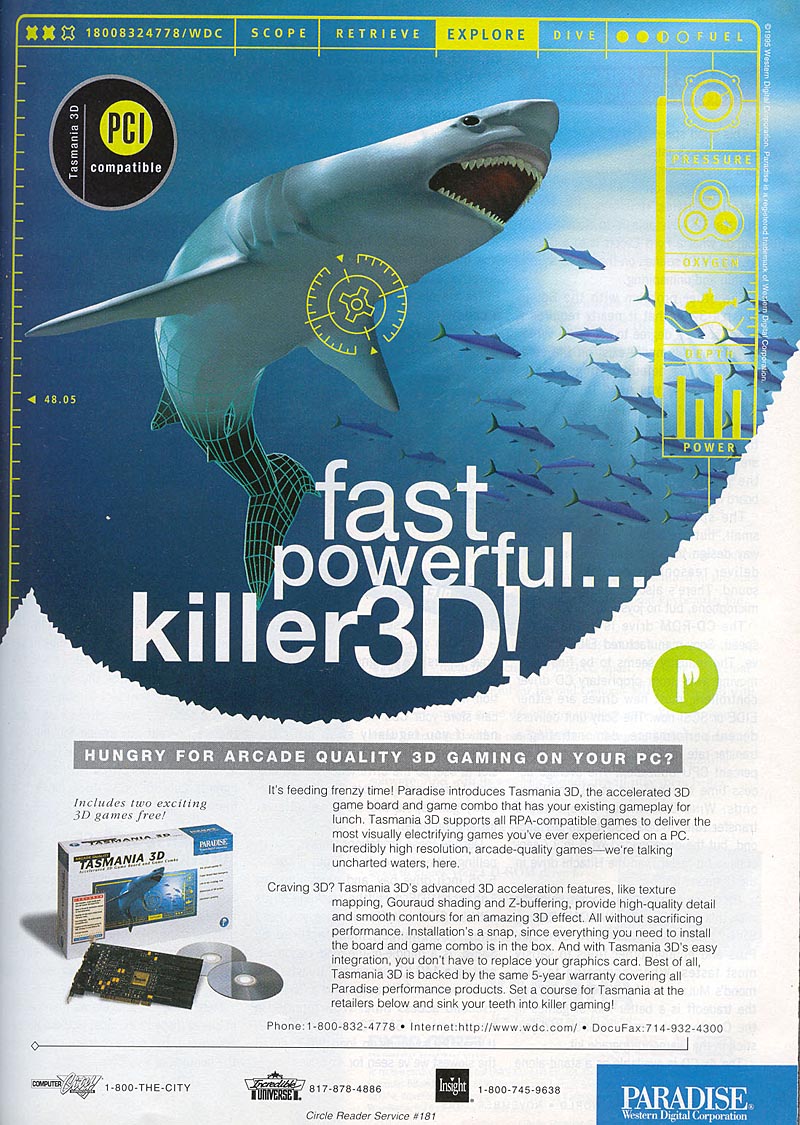

I've been a journalist/reviewer in the 3D graphics industry for over a decade. I can still remember walking through Fry's Electronics and seeing Western Digital's Paradise Tasmania 3D and actually getting excited about the Yamaha-powered graphics chip. Chris Angelini, the managing editor of Tom's Hardware US, and I go way back, with our first jobs in online journalism traced back to 3DGaming.com more than a decade ago.

Having been there from the beginning, I've seen the rise and fall of countless graphics manufacturers: S3, 3DLabs, Rendition, 3dfx, as well as board manufacturers like Orchid, STB, Hercules, the original Diamond, and Canopus. But as wild and crazy as the last decade was for visual computing, the next decade is going to be even more exciting, not only in what technology will offer to consumers, but in the upcoming arms race in visual computing.

A lot has been said about the impending death of the dedicated GPU. If you look at the history of dedicated upgrade products for consumer PC technology, they all eventually reach the point of diminishing returns and then integration. However, while it is inevitable that the dedicated GPU will eventually disappear, it’s not going to happen in the next decade.

Integration only happens once a technology has reached the point of diminishing returns on quality and performance. We can see evidence of this with sound cards, video processing, and even monitors.

What follows is a discussion on the future of 3D graphics. Is the GPU on its death bed? Will AMD, Intel, and Nvidia continue to be relevant? This is purely an opinion piece, but it is based on more than a decade of experience.

Disclosure: per FTC guidelines, I am required to disclose any potential conflicts of interest. I own no shares of any company discussed in this editorial. Additionally, although I have received free engineering samples from AMD, Intel, and Nvidia in the past for editorial purposes, I have not received any products from these companies in the last year.


anamaniac Alan Dang: "And games will look pretty sweet, too. At least, that’s the way I see it."

After several pages of technology mumbo jumbo jargon, that was a perfect closing statement. =)

Wicked article Alan. Sounds like you've had an interesting last decade indeed.

I'm hoping we all get to see another decade of constant change and improvement to technology as we know it.

Also interesting is that, although you almost seemed to be attacking every company, you still managed to remain neutral.

Everyone has benefits and flaws, nice to see you mentioned them both for everybody.

Here's to another 10 years of success everyone!

"Simply put, software development has not been moving as fast as hardware growth. While hardware manufacturers have to make faster and faster products to stay in business, software developers have to sell more and more games"

Hardware is moving so fast and game developers just can't keep pace with it.

Ikke_Niels What I miss in the article is the following (well it's partly told):

I have suspected for a long time that video cards are going to surpass CPUs.

You already see it at the moment: video cards get cheaper, while CPUs keep getting pricier for the relative performance.

In the past I had a problem when upgrading my video card: doing so pushed my CPU to its limit, so I could not use the full potential of the video card.

In my view we're at that point again: you buy a system, and if you upgrade your video card after a year or a year and a half, you're most likely pushing your CPU to its limits, at least in the high-end part of the market.

Of course, in the lower segments these problems are smaller, but still, it "might" happen sooner than we think, especially if the Nvidia design is as astonishing as they say and, at the same time, major CPU development slows down.


lashton One of the most interesting and informative articles from Tom's Hardware. What about another story about the smaller players, like Intel Atom and VLIW chips and so on?

JeanLuc Out of all three companies, Nvidia is the one facing the most threats. It may have a lead in the GPGPU arena, but that's rather a niche market compared to consumer entertainment, wouldn't you say? Nvidia is also facing problems at the low end of the market, with Intel now supplying integrated video on its CPUs, which makes low-end video cards practically redundant, and no doubt AMD will be supplying a similar product with Fusion at some point in the near future.

jontseng "This means that we haven’t reached the plateau in 'subjective experience' either. Newer and more powerful GPUs will continue to be produced as software titles with more complex graphics are created. Only when this plateau is reached will sales of dedicated graphics chips begin to decline."

I'm surprised that you've completely missed the console factor.

The reason why devs are not coding newer and more powerful games is nothing to do with budgetary constraints or lack thereof. It is because they are coding for an XBox360 / PS3 baseline hardware spec that is stuck somewhere in the GeForce 7800 era. Remember only 13% of COD:MW2 units were PC (and probably less as a % sales given PC ASPs are lower).

So your logic is flawed, or rather you have the wrong end of the stick. Because software titles with more complex graphics are not being created (because of the console baseline), newer and more powerful GPUs will not continue to be produced.

Or to put it in more practical terms, because the most graphically demanding title you can possibly get is now three years old (Crysis), then NVidia has been happy to churn out G92 respins based on a 2006 spec.

Until the next generation of consoles comes through there is zero commercial incentive for a developer to build a AAA title which exploits the 13% of the market that has PCs (or the even smaller bit of that which has a modern graphics card). Which means you don't get phat new GPUs, QED.

And the problem is the console cycle seems to be elongating...

J

Swindez95 I agree with jontseng above ^. I've already made a point of this a couple of times. We will not see an increase in graphics intensity until the next generation of consoles comes out, simply because consoles are where the majority of game sales are. And as stated above, developers are simply coding games and graphics for much older and less powerful hardware than the PC currently has available, because these last-generation consoles are still the most popular venue for consumers.